Healthcare and life sciences organizations hold some of the most sensitive data in existence — genomic sequences, clinical trial outcomes, real-world evidence, diagnostic imaging, and more — yet scientific collaboration often requires this data to move across organizational boundaries. A hospital must share patient information with a research institution while a pharmaceutical company needs real-world outcome data to validate a drug trial. And in every case, the question is the same — how do you share something so sensitive and still be confident that patient privacy is preserved, data integrity is maintained, and no unauthorized party can alter or exploit it once it crosses organizational boundaries?

Answering that question requires a fundamentally different approach — Zero Trust architecture, where every request, every workload, and every data movement is verified before it is permitted, regardless of where it originates. Central to making this work is Confidential Computing. If you are not already familiar with it, the blog post Protecting Sensitive Healthcare Workloads with Confidential Computing on OCI walks through how it works.

What Zero Trust Really Means

Zero Trust is built on the principle of “never trust, always verify.” Trust is not based on network location or infrastructure boundaries — every user, workload, service, and communication path must be continuously verified before access to applications or data is permitted.

Zero Trust spans all layers — from network segmentation and private connectivity to workload integrity verification and sensitive data protection. To learn more about Zero Trust security principles and foundational OCI implementation guidance, refer to the article What is Zero Trust Security? and the blog Accelerating Your Zero Trust Journey on OCI with Landing Zones.

This blog focuses specifically on how Zero Trust principles can be implemented for healthcare data sharing on OCI using Confidential Computing to enforce workload integrity and trust verification before any sensitive data is accessed or processed.

Use Case: Hospital Sharing Sensitive Data with a Research Organization

To see this in action, let us consider a real-world scenario — a hospital (provider) with valuable patient data and a research organization (consumer) working together on scientific innovation that has the potential to change patient outcomes, yet unable to move forward without a secure and trusted way to share the data.

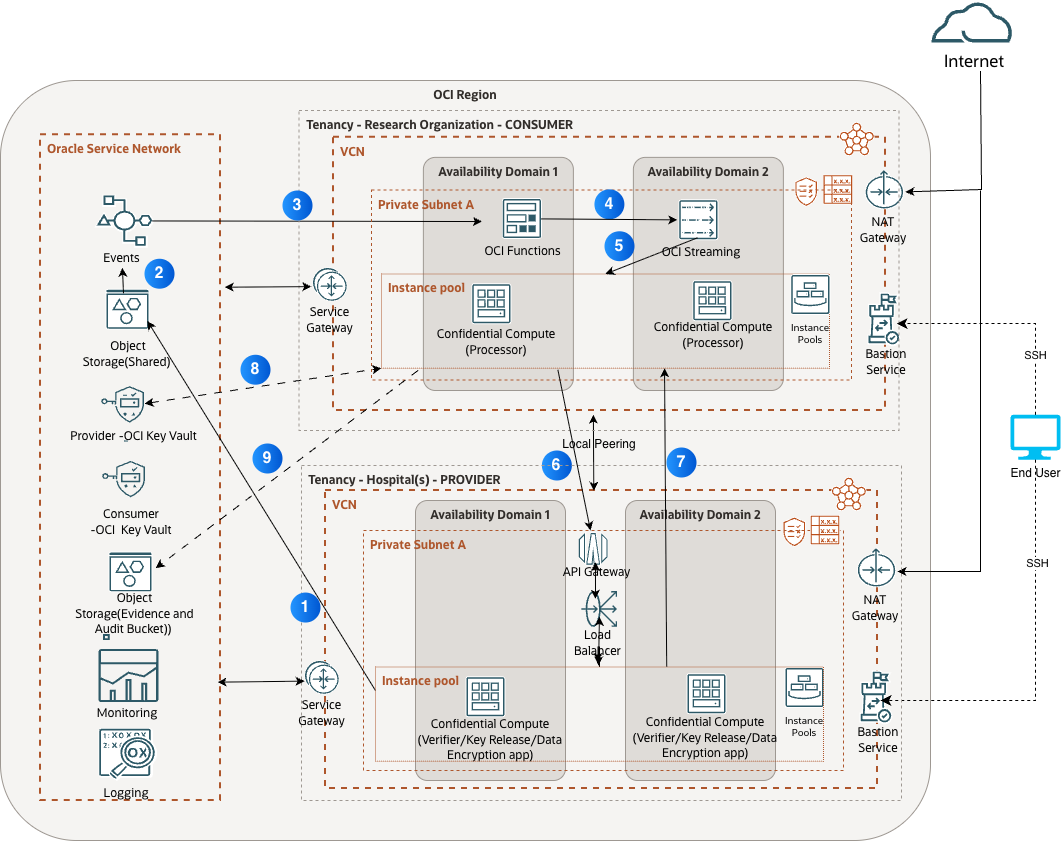

The architecture below depicts a Zero Trust pipeline on OCI in which a hospital system (the data provider) securely shares sensitive patient data with a research organization (the data consumer) without exposing raw data to either the research organization’s administrators or the underlying infrastructure. The entire pipeline runs inside private subnets across two OCI tenancies, uses Confidential Computing at every processing layer, and enforces signed attestation before any decryption key is released.

Architecture Walkthrough: Step by Step

Step 1: Encrypted Data Upload

The pipeline begins when the provider (hospital) encrypts sensitive patient data client-side using a data encryption key (DEK) managed in the provider’s OCI Vault and writes the encrypted payload to shared OCI Object Storage. The consumer (research organization) receives only the encrypted data, and the DEK stays under the provider’s control: it is released only in wrapped form to an attested TEE (Steps 6 and 7), so the provider retains cryptographic ownership even after the data has moved. OCI Object Storage provides highly durable storage through automatic redundancy across multiple Fault Domains and Availability Domains within a region. This keeps the encrypted data highly available for downstream processing without requiring customers to manually manage internal replication or hardware availability.

Step 2: Event-Driven Workflow

When the encrypted object lands in OCI Object Storage, OCI Events automatically emits a notification, fully decoupling the provider’s upload workflow from the consumer’s processing pipeline. The event payload contains no sensitive data, only a reference to the object location, so the trigger mechanism itself does not expose patient data.

It is worth noting that all calls from the VCNs to supported OCI services route through the Oracle Services Network via the OCI Service Gateway, ensuring that data stays within the OCI private network.

Step 3: Serverless Orchestration Using OCI Functions

The event invokes an OCI Function in a private subnet within the consumer’s tenancy. The function validates the event schema, checks OCI IAM authorization policies, and forwards the message to OCI Streaming. Because OCI Functions is serverless, there is no long-running host to attack: the function spins up, executes, and terminates. When upload volumes spike, OCI automatically runs additional function instances in parallel, with no pre-provisioning required. Refer to the reference architecture for configuration details.
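The schema validation described in this step can be sketched in a few lines of Python. This is a minimal illustration, not the reference implementation. It assumes the event follows the Object Storage "create object" shape emitted by OCI Events (the field names `eventType`, `data.resourceName`, and `data.additionalDetails` should be checked against the current OCI Events documentation). The function name `build_job_message` and the bucket name in `EXPECTED_BUCKET` are hypothetical. The IAM check and the publish call to OCI Streaming are deliberately left out.

```python
import json

# Hypothetical values for illustration; not taken from the architecture above.
EXPECTED_EVENT_TYPE = "com.oraclecloud.objectstorage.createobject"
EXPECTED_BUCKET = "shared-encrypted-data"


def build_job_message(raw_event: str) -> dict:
    """Validate an Object Storage event and return a reference-only job message.

    Raises ValueError if the event does not match the expected shape.
    """
    try:
        event = json.loads(raw_event)
    except json.JSONDecodeError as exc:
        raise ValueError(f"event is not valid JSON: {exc}") from exc
    if not isinstance(event, dict):
        raise ValueError("event must be a JSON object")

    if event.get("eventType") != EXPECTED_EVENT_TYPE:
        raise ValueError("unexpected eventType")

    data = event.get("data")
    if not isinstance(data, dict):
        raise ValueError("missing data section")
    details = data.get("additionalDetails")
    if not isinstance(details, dict):
        raise ValueError("missing additionalDetails section")

    fields = {
        "namespace": details.get("namespace"),
        "bucket": details.get("bucketName"),
        "object": data.get("resourceName"),
    }
    for name, value in fields.items():
        if not isinstance(value, str) or not value:
            raise ValueError(f"missing or invalid field: {name}")

    if fields["bucket"] != EXPECTED_BUCKET:
        raise ValueError("event came from an unexpected bucket")

    # The job message carries only a pointer to the encrypted object,
    # never the object content or any key material.
    return fields
```

Rejecting anything that does not match the expected event type and bucket keeps malformed or unrelated events out of the stream, and the resulting message stays reference-only, in line with Step 2.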

Step 4: Streaming via OCI Streaming

The OCI Function publishes a processing job message to OCI Streaming, OCI’s native managed streaming service. Messages are synchronously replicated across multiple Availability Domains (ADs) where available, and across multiple Fault Domains in single-AD regions, minimizing the risk of data loss at the infrastructure layer. Messages remain in the stream for the configured retention period, so a consumer that fails mid-job can re-read them, which improves the overall resiliency of the pipeline. From a Zero Trust standpoint, access to the stream is governed by OCI IAM policies tied to verified workload identity using Instance Principals: a compute instance must prove who it is before it can read from the stream, regardless of network location.

Step 5: Streaming Message Processing

Confidential Computing instances, deployed as instance pools across both Availability Domains, consume messages from the stream. These instances run inside hardware-enforced Trusted Execution Environments (TEEs) where memory is encrypted with a key held within the secure processor and is inaccessible to the hypervisor, OCI operators, or the consumer’s own administrators. They are the only environment in which the DEK is ever used in plaintext, so decryption and processing happen exclusively within the hardware-enforced boundary and data stays protected even while it is being processed. If one Availability Domain fails, the pool in the other Availability Domain continues processing without interruption.

Step 6: Consumer’s Environment Attestation and Verification

To access the provider’s encrypted data stored in OCI Object Storage, the consumer’s Confidential Computing environment must first engage the Verifier service deployed within the provider’s tenancy. The consumer’s TEE generates an attestation report from the underlying secure processor and sends it along with the verification request to an OCI API Gateway endpoint exposed within the provider’s tenancy. The API Gateway acts as the secure entry point, validating the request before forwarding it to the OCI Load Balancer, which distributes attestation requests across both Availability Domains, ensuring the Verifier service remains highly available.

The Verifier service confirms that the consumer’s compute environment is running verified hardware and approved, unmodified software before releasing the DEK, the same key the provider used to encrypt the data in Step 1. Communication between the VCNs occurs through Local VCN Peering over the OCI backbone without traversing the public internet. OCI IAM policies control the peering relationship, ensuring neither tenancy can independently expand the connection.
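The Verifier’s release decision can be illustrated with a small policy check. The sketch below is hypothetical and makes several assumptions that the architecture above does not spell out: the report’s hardware signature has already been validated by vendor tooling, "approved, unmodified software" is represented as an allowlist of launch measurements, and a nonce plus a maximum report age are used to reject replayed reports. The names `AttestationClaims` and `is_release_allowed` are invented for illustration.

```python
import hmac
import time
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class AttestationClaims:
    """Claims taken from a report whose signature was ALREADY verified.

    Signature and certificate-chain validation are out of scope here.
    """
    measurement: bytes  # launch digest of the consumer workload
    nonce: bytes        # challenge previously issued by the Verifier (assumption)
    issued_at: float    # Unix time at which the report was generated


def is_release_allowed(
    claims: AttestationClaims,
    expected_nonce: bytes,
    approved_measurements: Iterable[bytes],
    max_age_seconds: float = 300.0,
    now: Optional[float] = None,
) -> bool:
    """Return True only if the claims satisfy every release condition."""
    current = time.time() if now is None else now

    # Reject reports that do not answer the Verifier's own challenge.
    if not hmac.compare_digest(claims.nonce, expected_nonce):
        return False

    # Reject stale or future-dated reports.
    age = current - claims.issued_at
    if age < 0 or age > max_age_seconds:
        return False

    # The workload must match one of the approved launch measurements.
    return any(
        hmac.compare_digest(claims.measurement, approved)
        for approved in approved_measurements
    )
```

Only when a check like this succeeds would the Verifier proceed to retrieve and release the DEK. Any failure path returns `False` without touching key material.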

Step 7: Data Processing by the Consumer Within the TEE

Once attestation is validated, the Verifier retrieves the DEK from the provider’s OCI Vault and wraps it using the public key from the consumer’s attestation report. This technique is called key wrapping: one key is encrypted with another so that only the intended recipient can unlock it. Only the consumer’s verified TEE holds the corresponding private key, so no other environment, regardless of network location or administrative privilege, can unwrap the DEK.

Once the wrapped DEK is delivered to the consumer’s TEE, it is unwrapped using the TEE’s private key entirely within the hardware-enforced boundary. The workload then uses the DEK to decrypt the provider’s encrypted data before processing begins. From this point, the consumer’s workload processes the decrypted data entirely within this protected boundary, where memory remains encrypted and inaccessible to the hypervisor, OCI operators, and the consumer’s own administrators. At no point does raw patient data exist in a readable form outside the hardware-enforced boundary of the TEE.

Step 8: Consumer’s Encrypted Output

The consumer’s OCI Vault manages the encryption keys used for encrypting and decrypting intermediate and final processed results. Access is controlled through OCI IAM policies scoped to specific confidential compute instance identities, enforcing least-privilege access at the key management layer.

Step 9: Monitoring and Logging

Every operation (event invocation, function execution, attestation request, key release, and data access) is written to an OCI Object Storage Evidence and Audit Bucket alongside entries in OCI Logging and OCI Monitoring, creating a time-stamped evidence chain for regulatory review.
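To make the idea of a time-stamped evidence chain concrete, the sketch below builds audit records in which each entry embeds the SHA-256 digest of the previous one. Hash chaining is an assumption added here for illustration, one common way to make a log tamper-evident, and is not something the architecture above prescribes. The record fields and the helper names `make_audit_record` and `verify_chain` are hypothetical, and the upload to the audit bucket is omitted.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_audit_record(
    operation: str,
    details: dict,
    previous_digest: str,
    timestamp: Optional[datetime] = None,
) -> dict:
    """Create a time-stamped record linked to the previous record's digest."""
    ts = timestamp if timestamp is not None else datetime.now(timezone.utc)
    body = {
        "timestamp": ts.isoformat(),
        "operation": operation,
        "details": details,
        "previous_digest": previous_digest,
    }
    return {**body, "digest": _digest(body)}


def verify_chain(records: list) -> bool:
    """Check that every record is intact and linked to its predecessor."""
    previous = ""  # the first record is expected to use an empty link
    for record in records:
        body = {k: v for k, v in record.items() if k != "digest"}
        if body.get("previous_digest") != previous:
            return False
        if _digest(body) != record.get("digest"):
            return False
        previous = record["digest"]
    return True
```

With this layout, altering or removing an earlier record changes its digest and breaks every later link, which an auditor can detect by re-running `verify_chain` over the stored records.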

Supporting Services: Bastion Service and NAT Gateway

Beyond the core pipeline, two services keep the environment secure and maintainable without creating an inbound attack surface. OCI Bastion provides time-limited SSH access to private instances, with no public IP required and every session logged and auditable. The NAT Gateway allows outbound connections for updates without exposing instances to inbound internet traffic. Together they keep the pipeline serviceable while preserving Zero Trust at every access point.

Conclusion

Together, these controls create a multi-layered Zero Trust security model that protects sensitive healthcare workloads across every stage of the pipeline. Confidential Computing, signed attestation, private networking, audit logging, and least-privilege access controls work together to ensure sensitive data remains protected as it moves across organizational boundaries. The architecture assumes failure is inevitable and is designed for resilience at every layer, ensuring workload continuity even when individual components or Availability Domains fail.

Reference Links