Let us consider a scenario: a research organization (consumer of data) wants to train an AI model on genomic data held by partner hospitals (provider of data). The hospitals are willing to contribute insights — but not raw patient records — because the primary risk is protected health information (PHI) being leaked or misused once it leaves their controlled environment.

So the question becomes: how do you ensure that sensitive data such as PHI stays protected at rest, in transit, and in use once it leaves the hospital’s boundaries? How do you confirm that the environment processing it has not been tampered with and is running only approved code? And how do you guarantee that at no point, even during active processing, can any person, process, or system outside the trusted execution boundary access the sensitive information inside?

Healthcare and life sciences organizations manage highly sensitive data: electronic health records (EHRs), clinical trial data, medical imaging, and genomics. Encryption protects this data at rest and in transit, but a critical gap remains: data in use, which has to be decrypted in memory while it is being processed.

Confidential Computing closes this gap — protecting data in use.

Why Confidential Computing Matters

Confidential Computing protects data during processing by using Trusted Execution Environments (TEEs). These hardware-isolated environments keep data and code protected even from the hypervisor, the host operating system, and cloud administrators.

Memory inside a TEE is encrypted with a key that the secure processor itself manages and never exposes to any of those parties.

From Isolation to Verifiable Trust

Hardware isolation alone is not enough. Protection without proof creates a trust problem: how does a hospital know the workload requesting its patient data is running inside a genuine TEE and not an emulator, a tampered VM, or an environment with known vulnerabilities? Every participant in a data-sharing scenario needs verifiable evidence before releasing sensitive data.

This is where attestation establishes verifiable trust.

Attestation

Attestation is the mechanism by which the underlying secure processor generates a hardware-signed report describing exactly what is running inside it: firmware version, platform configuration, and a cryptographic measurement of the workload. Because the report is signed by a key rooted in the secure processor itself, it cannot be forged by software — not even by the cloud administrators.
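To make the idea of a signed measurement concrete, here is a minimal Python sketch. It is an illustration under loud assumptions, not how any secure processor is implemented: real hardware signs with an asymmetric key that chains to a vendor certificate, whereas this sketch uses an HMAC with a demo key (`HARDWARE_KEY`) as a stand-in. The function names, the report layout, and the firmware version string are all hypothetical.

```python
import hashlib
import hmac
import json

# Assumption: stand-in for the key held inside the secure processor.
# Real hardware uses an asymmetric signing key, not a shared secret.
HARDWARE_KEY = b"demo-only-hardware-key"


def measure(workload: bytes) -> str:
    """Return a SHA-384 digest standing in for the workload measurement."""
    return hashlib.sha384(workload).hexdigest()


def sign_report(workload: bytes, firmware_version: str) -> dict:
    """Build a report and sign its canonical JSON encoding."""
    body = {"measurement": measure(workload), "firmware_version": firmware_version}
    payload = json.dumps(body, sort_keys=True).encode()
    signature = hmac.new(HARDWARE_KEY, payload, hashlib.sha384).hexdigest()
    return {"body": body, "signature": signature}


def signature_is_valid(report: dict) -> bool:
    """Recompute the signature over the body and compare in constant time."""
    payload = json.dumps(report["body"], sort_keys=True).encode()
    expected = hmac.new(HARDWARE_KEY, payload, hashlib.sha384).hexdigest()
    return hmac.compare_digest(expected, report["signature"])


# "1.55" is a placeholder version string, not a real firmware release.
report = sign_report(b"approved training image", "1.55")

# Software that edits the report body cannot produce a matching signature.
tampered = {
    "body": dict(report["body"], firmware_version="0.0.1"),
    "signature": report["signature"],
}
```

In this sketch `signature_is_valid(report)` holds while `signature_is_valid(tampered)` does not, which mirrors the property described above: changing any reported value invalidates the signature unless the signer's key is available.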

A complete attestation answers three questions:

- Is the workload running in a genuine TEE on trusted hardware?

- Is the software stack the expected, untampered version?

- Does the environment satisfy the relying party’s security policy?
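The three questions can be read as three groups of checks that a verifier runs over the evidence. The sketch below is one hypothetical way to express them in Python. The `Evidence` and `Policy` fields, including the debug flag and the firmware version tuple, are assumptions chosen for illustration and do not correspond to a specific report format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    signature_valid: bool     # outcome of checking the hardware signature chain
    measurement: str          # digest of the launched workload
    firmware_version: tuple   # for example (1, 55)
    debug_enabled: bool       # hypothetical flag: TEE allows debug access


@dataclass(frozen=True)
class Policy:
    approved_measurements: frozenset
    min_firmware_version: tuple
    allow_debug: bool = False


def appraise(evidence: Evidence, policy: Policy) -> list:
    """Return the list of failed checks; an empty list means PASS."""
    failures = []
    # Question 1: genuine TEE on trusted hardware?
    if not evidence.signature_valid:
        failures.append("not a genuine TEE")
    # Question 2: expected, untampered software stack?
    if evidence.measurement not in policy.approved_measurements:
        failures.append("unexpected software stack")
    # Question 3: does the environment satisfy the relying party's policy?
    if evidence.firmware_version < policy.min_firmware_version:
        failures.append("firmware below policy minimum")
    if evidence.debug_enabled and not policy.allow_debug:
        failures.append("debug access enabled")
    return failures


policy = Policy(frozenset({"abc123"}), min_firmware_version=(1, 55))
good = Evidence(True, "abc123", (1, 55), False)
bad = Evidence(True, "zzz999", (1, 40), True)
```

Returning the failed checks rather than a bare boolean is a design choice of this sketch: it lets the verifier log why a FAIL verdict was issued.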

Attestation Architecture: Key Roles

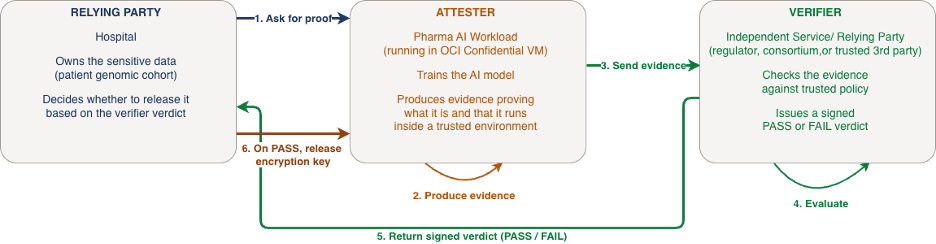

Every attestation flow involves three roles:

- Attester — the workload running inside the TEE that requests a hardware-signed report from the secure processor and presents it as evidence of its integrity.

- Verifier — the independent service that evaluates the attestation evidence against a defined security policy and issues a signed PASS or FAIL verdict.

- Relying Party — the organization that requires proof of integrity before releasing sensitive data or granting access to a protected resource.

These three roles can operate within the same platform (local attestation) or across organizational boundaries (remote attestation) depending on the use case.

End-to-End Attestation Flow in Action

Let us return to the opening scenario in more detail. A research organization wants to train an AI model on genomic data held by three partner hospitals. The hospitals do not share raw patient data directly. Instead, they allow it to be decrypted only inside a verified TEE. The workload runs in an OCI Confidential VM, and each hospital releases the decryption key only after attestation confirms the environment is genuine, untampered, and running only approved code.

Here is how the three roles interact, step by step:

- Relying party asks for proof. Each hospital (the relying party) tells the workload: “Before I share my genomic data with you, prove to me that you are who you claim to be and that you are running in a trusted environment.”

- Attester produces evidence. The workload running inside the OCI Confidential VM (the attester) requests a hardware-signed attestation report from the underlying secure processor. This report describes the workload and its configuration, and confirms that the workload resides inside a genuine, untampered TEE. No software layer, including the hypervisor or cloud administrators, can alter this evidence.

- Attester sends evidence to the verifier. The attestation report is submitted to an independent verification service (the verifier). The verifier can be hosted by a hospital, by the research organization, by a regulator, or jointly by the consortium. What matters is that the relying party trusts it.

- Verifier evaluates the evidence. The verifier checks the evidence against the relying party’s policy: Is the workload the approved one? Is the environment a genuine TEE? Does it meet the security requirements? The verifier then determines whether the workload satisfies the defined trust policy.

- Verifier sends the verdict. The verifier returns a signed PASS or FAIL verdict to the relying party.

- Relying party makes the trust decision. The hospital receives the verification result (PASS/FAIL). On PASS, it releases the decryption key for its genomic data to the workload. The data is decrypted only within the TEE, where it remains inaccessible to third parties. Training begins.
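The six steps above can be sketched as a small Python simulation. This is a simplified model, not an implementation of any OCI or vendor API. The verifier's signature is represented by an HMAC under a hypothetical `VERIFIER_KEY` shared with the hospital, the hardware signature on the evidence is left out entirely, and the nonce used to bind a verdict to one request is an assumption added for freshness that the flow above does not spell out.

```python
import hashlib
import hmac
import secrets

# Assumption: the hospital can check verdicts with this key. A real verifier
# would more likely sign with a private key and publish the public half.
VERIFIER_KEY = secrets.token_bytes(32)
APPROVED = {hashlib.sha384(b"approved training image").hexdigest()}


def attester_evidence(workload: bytes, nonce: str) -> dict:
    """Attester produces evidence bound to the relying party's nonce."""
    return {"measurement": hashlib.sha384(workload).hexdigest(), "nonce": nonce}


def verifier_verdict(evidence: dict) -> dict:
    """Verifier evaluates the evidence and signs a PASS or FAIL verdict."""
    result = "PASS" if evidence["measurement"] in APPROVED else "FAIL"
    message = f"{result}:{evidence['nonce']}".encode()
    tag = hmac.new(VERIFIER_KEY, message, hashlib.sha256).hexdigest()
    return {"result": result, "nonce": evidence["nonce"], "tag": tag}


def hospital_release_key(verdict: dict, nonce: str, data_key: bytes):
    """Relying party releases the key only for an authentic, fresh PASS."""
    message = f"{verdict['result']}:{verdict['nonce']}".encode()
    expected = hmac.new(VERIFIER_KEY, message, hashlib.sha256).hexdigest()
    authentic = hmac.compare_digest(expected, verdict["tag"])
    fresh = verdict["nonce"] == nonce
    if authentic and fresh and verdict["result"] == "PASS":
        return data_key
    return None


def run_flow(workload: bytes):
    nonce = secrets.token_hex(16)                  # relying party asks for proof
    evidence = attester_evidence(workload, nonce)  # attester produces evidence
    verdict = verifier_verdict(evidence)           # verifier evaluates and replies
    return hospital_release_key(verdict, nonce, b"genomic-data-key")
```

Calling `run_flow(b"approved training image")` returns the data key, while any other workload bytes return `None`, so the key release depends entirely on the verdict rather than on anything the workload asserts about itself.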

Implementing Confidential Computing on OCI

Oracle Cloud Infrastructure (OCI) enables Confidential Computing through secure VM instances backed by Trusted Execution Environments (TEEs).

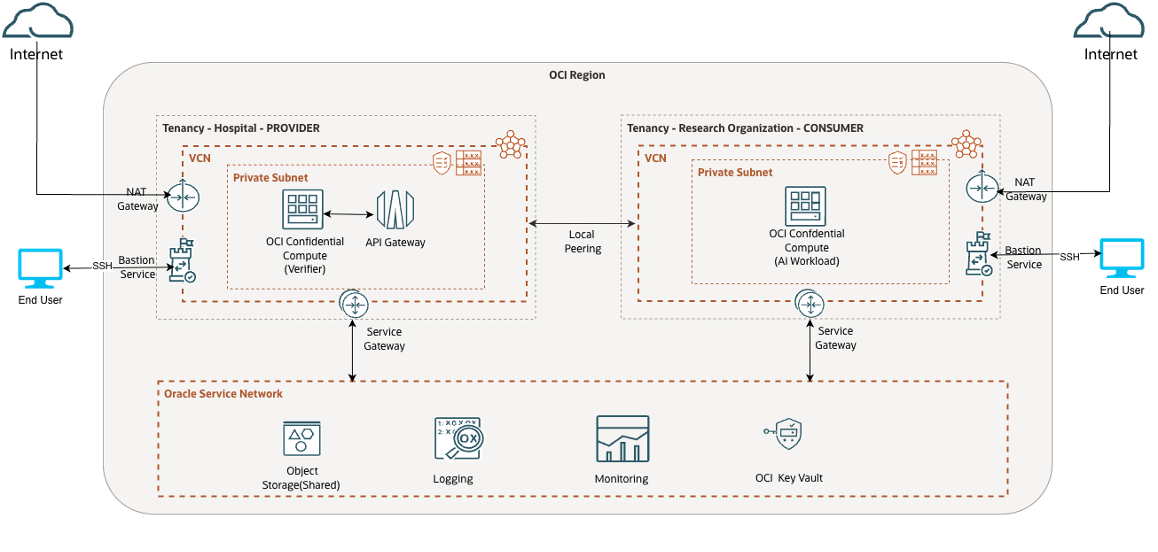

The reference architecture shows a secure OCI setup where a hospital (relying party and verifier) interacts with a research organization (attester) to validate a confidential workload before sharing sensitive data. Both the hospital and research organization deploy their resources within separate OCI tenancies inside the same OCI Region, each isolated inside a Virtual Cloud Network (VCN) with a Private Subnet, ensuring no compute instance is directly reachable from the public internet. Administrative access to either environment is gated exclusively through the OCI Bastion Service, which enforces time-limited, audited SSH sessions without requiring permanently exposed SSH access. The two VCNs are connected via Local VCN Peering, ensuring all attestation traffic flows over OCI’s private backbone rather than the public internet.

On the hospital side, an OCI Confidential Compute Instance runs the verification service inside a hardware-isolated TEE, ensuring that even the verification logic and key release decisions are protected from the hypervisor and cloud administrators. An API Gateway fronts this environment, receiving attestation evidence from the research organization’s workload and returning signed verdicts. OCI Vault manages the hospital’s decryption keys, releasing them only after a verified PASS verdict is received. OCI Logging captures an auditable record of key access, attestation events, and API calls, while OCI Monitoring provides real-time alerting on anomalous behavior.

On the research organization side, an OCI Confidential Compute Instance runs the AI training workload inside a hardware-isolated TEE, where memory remains encrypted by the secure processor and inaccessible to any external software layers — including the hypervisor and cloud administrators. OCI Vault manages the keys used to encrypt data written to OCI Object Storage, which durably holds encrypted genomic inputs, intermediate artifacts, and final model outputs.

Open-source tools for attestation and verification

Open-source toolsets are available for both the attester and verifier roles. The links below are good starting points; the ecosystem evolves quickly, so review current documentation before deploying the tools.

Verification service:

- Veraison – Project Veraison provides software components for building an attestation verification service.

Attestation tools:

- VirTEE – VirTEE is an open community dedicated to developing open source tools for the bring-up, attestation, and management of Trusted Execution Environments.

- SEV-SNP Platform Attestation Using VirTEE/SEV

Getting Started

Here is the core setup path to get your first confidential workload running on OCI:

- Provision Confidential Compute-enabled VMs in OCI

- Deploy an attestation agent (e.g., one built on VirTEE tooling) inside the VM

- Stand up a verifier service (e.g., Veraison)

- Define your expected configurations and security policies

- Test both success and failure paths (PASS/FAIL)
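For the last two steps, a table of test cases run against a written-down policy is one simple way to exercise both paths before any real data is involved. The Python sketch below assumes a hypothetical JSON policy layout and made-up measurement values. It is not the policy format of Veraison or any other verifier.

```python
import json

# Hypothetical reference values a hospital might define for its verifier.
POLICY_JSON = """
{
  "approved_measurements": ["aa11", "bb22"],
  "min_firmware": [1, 55],
  "allow_debug": false
}
"""
policy = json.loads(POLICY_JSON)


def verdict(evidence: dict, policy: dict) -> str:
    """Return PASS only if every policy condition holds."""
    ok = (
        evidence["measurement"] in policy["approved_measurements"]
        and evidence["firmware"] >= policy["min_firmware"]
        and (policy["allow_debug"] or not evidence["debug"])
    )
    return "PASS" if ok else "FAIL"


# Each case: (description, evidence, expected verdict).
CASES = [
    ("approved workload", {"measurement": "aa11", "firmware": [1, 55], "debug": False}, "PASS"),
    ("unknown workload", {"measurement": "ff00", "firmware": [1, 55], "debug": False}, "FAIL"),
    ("old firmware", {"measurement": "aa11", "firmware": [1, 54], "debug": False}, "FAIL"),
    ("debug enabled", {"measurement": "aa11", "firmware": [1, 56], "debug": True}, "FAIL"),
]

# Collect any case whose verdict differs from the expectation.
mismatches = [name for name, ev, expected in CASES if verdict(ev, policy) != expected]
```

An empty `mismatches` list means the policy behaves as intended on both the success path and each failure path. Adding a new failure case for every policy condition keeps the FAIL side from going untested.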