Introduction: Oracle Acceleron Multiplanar Networking

Large AI clusters are engineered around a simple constraint: synchronized jobs only move as fast as their slowest network path. When thousands of GPUs participate in collectives, a single congested link, failed path, or unlucky flow collision can stretch tail latency and waste expensive compute. Traditional datacenter networks tend to top out at around 60% fabric utilization, and sometimes significantly lower, because standard Equal-Cost Multi-Path (ECMP) routing pins each flow to a path without knowing the flow's size, which packs links unevenly.
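To make the uneven-packing problem concrete, here is a minimal Python sketch that compares hash-based flow pinning with per-packet spraying. It is an illustration only: the CRC32 hash, the 4096-byte packet size, and the function names are assumptions made for this example, not details of any real switch or of Oracle Acceleron.

```python
import zlib

def ecmp_loads(flows, n_paths):
    """Bytes per path when each flow is pinned to one path by hashing its 5-tuple.

    `flows` is a list of (five_tuple, size_in_bytes). CRC32 stands in for
    whatever hash a real switch uses (an assumption for this sketch).
    """
    loads = [0] * n_paths
    for five_tuple, size in flows:
        key = "|".join(map(str, five_tuple)).encode()
        loads[zlib.crc32(key) % n_paths] += size
    return loads

def spray_loads(flows, n_paths, mtu=4096):
    """Bytes per path when every packet is sent round-robin across all paths."""
    loads = [0] * n_paths
    nxt = 0
    for _, size in flows:
        remaining = size
        while remaining > 0:
            pkt = min(mtu, remaining)
            loads[nxt] += pkt
            nxt = (nxt + 1) % n_paths
            remaining -= pkt
    return loads

def utilization(loads):
    """Fraction of total capacity used if the transfer ends when the busiest path drains."""
    return sum(loads) / (len(loads) * max(loads))
```

With one large flow and several small ones, `ecmp_loads` places the large flow entirely on a single path no matter how the hash falls, so `utilization` stays low, while `spray_loads` keeps the per-path byte counts within one packet of each other.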

The Oracle Acceleron Multiplanar Network topology is a high-scale architecture that uses multiple parallel network planes to deliver predictable performance, fault isolation, and near-linear bandwidth scaling.

Oracle Acceleron Multiplanar Network shifts control over how traffic moves through the network. Instead of the network trying to guess the best way to balance and route traffic, the OCI network gives NICs full control. With path state moved from the routers into the packets, NICs can respond to congestion and link flaps and achieve full fabric utilization.

Two-tier Multiplanar Networking Architecture

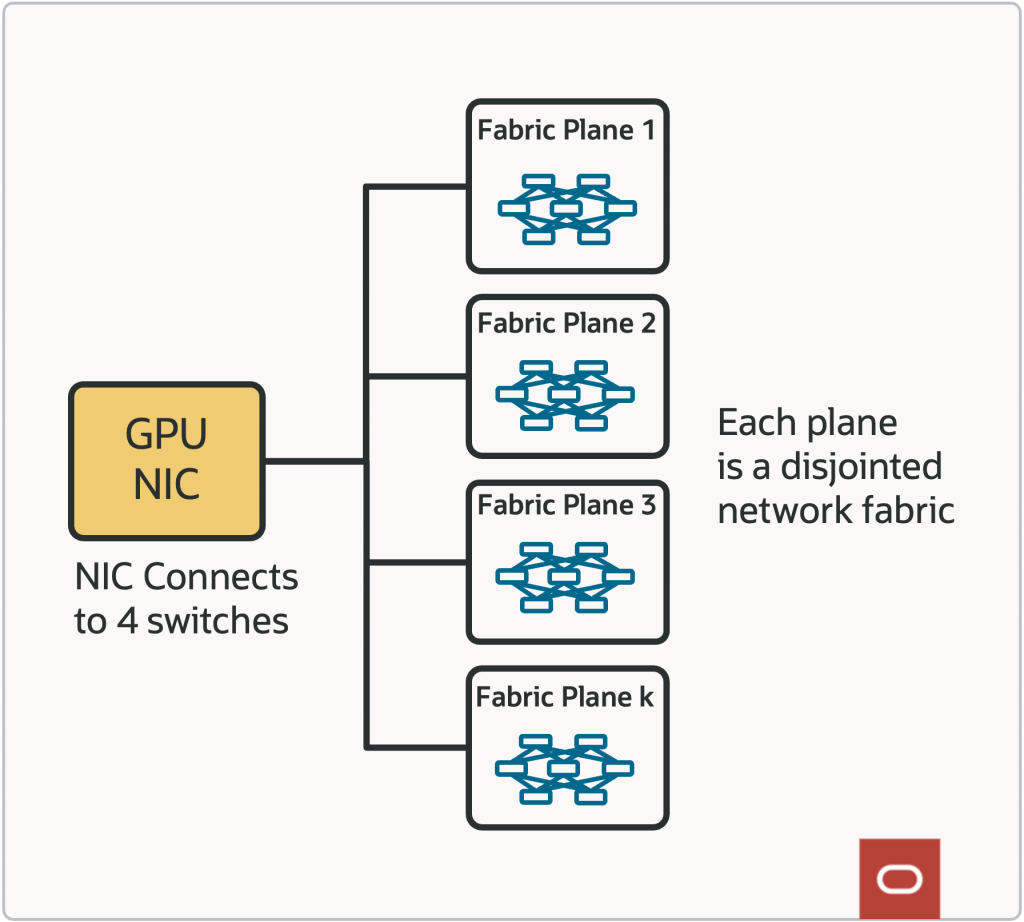

A multiplanar network consists of many independent planes, each with isolated control, and together they provide an order of magnitude more path diversity. A packet-routing function is needed at the edge because the host NIC must select the network plane for each flow or packet.

The Oracle Acceleron Multiplanar Networking Architecture establishes the following baseline:

- Multiple independent planes eliminate network-wide fate sharing.

- Each plane is an independent Clos fabric with its own data and control behavior.

- A NIC fans out into several planes, such as an 800G NIC using 4 x 200G links.

- If one plane has a hardware fault, software issue, or congestion event, traffic can be shifted away from the unhealthy plane onto the remaining ones.
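The fan-out and plane-shifting behavior in the list above can be sketched as a small weighting function. This is a simplified model, assuming a hypothetical `Plane` record and a boolean health flag. The source does not describe how plane health is actually represented or how traffic shares are computed.

```python
from dataclasses import dataclass

@dataclass
class Plane:
    name: str
    gbps: int            # link speed of the NIC port attached to this plane
    healthy: bool = True

def plane_weights(planes):
    """Share of traffic to send on each plane, proportional to healthy capacity.

    Unhealthy planes get weight 0.0, so their share moves to the remaining planes.
    """
    usable_gbps = sum(p.gbps for p in planes if p.healthy)
    if usable_gbps == 0:
        raise RuntimeError("no healthy plane available")
    return {p.name: (p.gbps / usable_gbps if p.healthy else 0.0) for p in planes}

# An 800G NIC fanned out as 4 x 200G links, one link per plane.
nic_planes = [Plane(f"plane{i}", 200) for i in range(4)]
```

With all four planes healthy, each carries a quarter of the traffic. Marking one plane unhealthy moves its share to the other three, and the NIC keeps 600G of usable capacity.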

Powerful Controls from the Edge of the Network

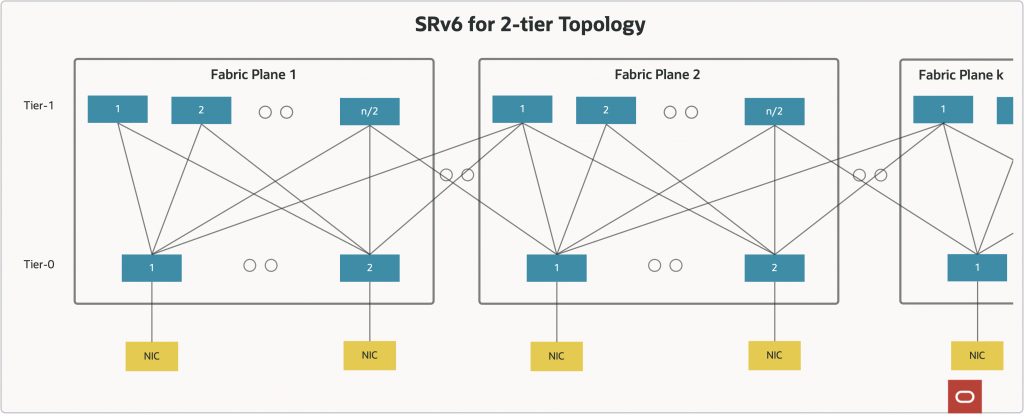

Traditional datacenter networks are built around hop-by-hop, destination-based routing. Oracle Acceleron instead uses source routing based on SRv6 (Segment Routing over IPv6), which lets NICs control the exact path that packets take through the datacenter network by encoding each hop of the path in an IPv6 address.
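As a rough illustration of how a path can be carried inside a single IPv6 address, the sketch below packs a list of hop identifiers into the 128 bits of a destination address. The layout (a 32-bit block prefix followed by up to six 16-bit hop IDs) and the `fcbb::/32` prefix are assumptions chosen for the example, loosely modeled on compressed SRv6 SIDs. The source does not specify the SID format that Oracle Acceleron uses.

```python
import ipaddress

BLOCK = 0xFCBB0000                   # hypothetical 32-bit locator block (fcbb::/32)
HOP_BITS = 16                        # assumed width of one hop identifier
MAX_HOPS = (128 - 32) // HOP_BITS    # six hop IDs fit after the block

def encode_path(hops):
    """Pack hop IDs, in forwarding order, into one IPv6 address."""
    if len(hops) > MAX_HOPS:
        raise ValueError(f"at most {MAX_HOPS} hops fit in one address")
    value = BLOCK << 96
    for i, hop in enumerate(hops):
        if not 0 < hop < (1 << HOP_BITS):
            raise ValueError("hop IDs must be non-zero 16-bit values")
        value |= hop << (96 - HOP_BITS * (i + 1))
    return ipaddress.IPv6Address(value)

def decode_path(addr):
    """Recover the hop IDs; a zero hop ID marks the end of the path."""
    value = int(ipaddress.IPv6Address(addr))
    hops = []
    for i in range(MAX_HOPS):
        hop = (value >> (96 - HOP_BITS * (i + 1))) & ((1 << HOP_BITS) - 1)
        if hop == 0:
            break
        hops.append(hop)
    return hops
```

Under this assumed layout, `encode_path([0x0101, 0x0202, 0x0303])` yields an address whose third, fourth, and fifth 16-bit groups name the three hops, and `decode_path` returns the same list.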

A centralized control plane computes N-diverse paths between every pair of NICs in the network and distributes those pre-computed segment lists to the hosts. The host NIC also monitors path health in-band and can fail over to an alternate segment list nearly instantly, without waiting for on-switch control-plane reconvergence. All the traffic engineering intelligence (packet spraying across multiple paths, per-tenant plane isolation, jitter-sensitive flow steering, QoS marking) lives in the host NIC and control plane. The result is higher utilization, lower jitter, faster failover, and fine-grained control over how traffic moves through the fabric, all without adding routing complexity to the core.
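The failover behavior described above can be sketched as a small host-side path selector. This is a toy model under stated assumptions: the `PathSet` class, the consecutive-loss threshold, and the round-robin policy are all hypothetical and stand in for whatever health signals and selection logic the NIC actually uses.

```python
class PathSet:
    """Pre-computed segment lists for one destination, with per-path health tracking."""

    def __init__(self, segment_lists, loss_threshold=3):
        self.paths = list(segment_lists)       # as distributed by the control plane
        self.loss_threshold = loss_threshold   # assumed: consecutive losses before a path is avoided
        self.misses = [0] * len(self.paths)
        self._next = 0

    def healthy(self, index):
        return self.misses[index] < self.loss_threshold

    def report(self, index, acked):
        """Record in-band feedback for a packet or probe sent on path `index`."""
        self.misses[index] = 0 if acked else self.misses[index] + 1

    def pick(self):
        """Return (index, segment_list) of the next healthy path, round-robin."""
        for _ in range(len(self.paths)):
            index = self._next
            self._next = (self._next + 1) % len(self.paths)
            if self.healthy(index):
                return index, self.paths[index]
        raise RuntimeError("no healthy path to destination")
```

Because the alternate segment lists are already on the host, skipping a failed path in this model is a local decision in `pick`, and no switch has to recompute routes first. A path returns to service as soon as `report` sees an acknowledgment on it, for example from a probe.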

Centralized control-plane routing and NIC-based congestion and error handling apply to both RDMA traffic in AI cluster networks and TCP/IP traffic.

With Oracle Acceleron, the control plane and the NIC software and firmware come with OCI AI Infrastructure services. Key customers can also design and implement their own control plane and NIC software and firmware.

Multipath Reliable Connection (MRC) in OCI

MRC (Multipath Reliable Connection) is the specification behind the control plane and NIC software framework used with the Oracle Acceleron Multiplanar network in the OCI AI Infrastructure for Stargate in Abilene, TX.

MRC extends the RDMA Reliable Connection model with multipath behavior. It achieves high throughput on a single queue pair (QP) even when the physical capacity is split across multiple ports and many network paths. It distributes traffic across the available paths using packet spraying, and relies on NIC support for out-of-order data placement to handle the resulting reordering.
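Out-of-order data placement can be illustrated with a receiver that writes each packet straight to its final offset instead of waiting for the packets ahead of it. The sketch below is a conceptual model only: the `Receiver` class and the `(offset, payload)` packet shape are assumptions for illustration and do not reflect the MRC wire format.

```python
import random

class Receiver:
    """Places each packet at its final offset, regardless of arrival order."""

    def __init__(self, length):
        self.buf = bytearray(length)
        self.seen = set()      # offsets already placed, so duplicates are ignored
        self.received = 0

    def place(self, offset, payload):
        if offset in self.seen:
            return             # duplicate, e.g. a retransmission on another path
        if offset < 0 or offset + len(payload) > len(self.buf):
            raise ValueError("packet falls outside the message buffer")
        self.buf[offset:offset + len(payload)] = payload
        self.seen.add(offset)
        self.received += len(payload)

    def complete(self):
        return self.received == len(self.buf)

def packetize(message, mtu):
    """Split a message into (offset, payload) packets of at most `mtu` bytes."""
    return [(off, message[off:off + mtu]) for off in range(0, len(message), mtu)]

def deliver_shuffled(message, mtu, seed=0):
    """Simulate spraying: packets arrive in arbitrary order, the message is still rebuilt."""
    packets = packetize(message, mtu)
    random.Random(seed).shuffle(packets)
    rx = Receiver(len(message))
    for offset, payload in packets:
        rx.place(offset, payload)
    return rx
```

Because every packet names its own destination offset, the receiver in this model needs no reorder buffer. It only needs to know when all bytes have landed.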

MRC uses SRv6-based static routing. By relying on simple static routing in the network and shifting intelligence to the endpoints, MRC achieves high throughput, resilience, and low tail latency at scale.

MRC was developed through collaboration among AMD, Broadcom, Intel, Microsoft, NVIDIA, and OpenAI. OCI partnered with OpenAI, NVIDIA, and Arista to deploy and scale MRC across OCI’s cluster network.

OpenAI’s MRC blog provides in-depth details on MRC.

Conclusion

Oracle’s two-tier multiplanar architecture provides the physical foundation for large AI clusters: low latency, high bisection bandwidth, and independent network planes. MRC turns that foundation into reliable transport behavior for synchronized workloads that need single-connection performance across many physical paths.

The outcome is a network architecture designed for real cluster conditions, where links fail, queues form, packets reorder, and workloads still need to make forward progress. Abilene supplies the fabric. MRC supplies the path-aware reliable transport that lets the fabric perform.

Key Takeaways

- Oracle Acceleron delivers pioneering, class-leading network performance with its multiplanar network.

- Our AI datacenter for Stargate in Abilene, TX uses a two-tier, no-oversubscription, multiplanar Clos fabric for large AI clusters.

- The Oracle Acceleron Multiplanar network’s performance and resilience are enabled by powerful controls implemented at the edge of the network.

- The Multipath Reliable Connection (MRC) implementation on top of the multiplanar supercluster provides the edge controls that deliver the necessary performance and resiliency.