Oracle Fusion Data Intelligence (FDI) is a family of prebuilt, cloud-native analytics applications for Oracle Fusion Cloud Applications that provide ready-to-use insights to help improve decision-making. It’s extensible and customizable, allowing customers to ingest data and expand the base semantic model with additional content.

Overview

This blog is part of the FDI Notifications series and focuses on writing event notifications to Oracle Autonomous AI Lakehouse.

You can use Oracle Cloud Infrastructure (OCI) Functions to capture pipeline statistics notifications and save them in Oracle Autonomous AI Lakehouse. This process involves configuring Event Services to detect notifications when pipeline events are triggered, deploying a function that processes the trigger data, and then storing the relevant statistics as rows in an Oracle Autonomous AI Lakehouse table for later analysis.

Note: This blog uses the logging of events related to Priority Dataset Refreshes as its example, and the artifacts are named accordingly. You can follow the same steps and adapt the artifact names to fit your own use cases.

Creating Oracle REST Data Services (ORDS) Enabled Table in Oracle Autonomous AI Lakehouse

- Follow the instructions here to enable REST access if the schema isn’t already REST-enabled; this process enables REST on the user schema.

- Create the table EVENT_NOTIFICATION in an ORDS-enabled user schema:

      CREATE TABLE event_notification (
        id             VARCHAR2(36),
        event_time     TIMESTAMP,
        event_type     VARCHAR2(200),
        resource_name  VARCHAR2(512),
        request_type   VARCHAR2(200),
        status         VARCHAR2(100),
        compartment_id VARCHAR2(128),
        source         VARCHAR2(200),
        CONSTRAINT event_notification_pk PRIMARY KEY (id, event_time)
      );

- REST-enable the table created in the ADMIN user schema:

      BEGIN
        ORDS.ENABLE_OBJECT(
          p_enabled      => TRUE,
          p_schema       => '<YOUR-REST-ENABLED-USER>',
          p_object       => 'EVENT_NOTIFICATION',
          p_object_type  => 'TABLE',
          p_object_alias => 'event_notification'
        );
      END;

Creating Application and Deploying Function

- Read the Deploy OCI Functions for Fusion Data Intelligence Event Notifications blog to set up an application and create a function.

- In the func.py file, delete the existing code and replace it with the new function code provided as an example. See Writing to AI Lakehouse Sample Code.

- Open the requirements.txt file using the command: nano requirements.txt

- Add requests as a dependency, since the code uses that library, by adding the following line. Save and close the file.

      requests

- Follow the rest of the steps in the blog to deploy the application.

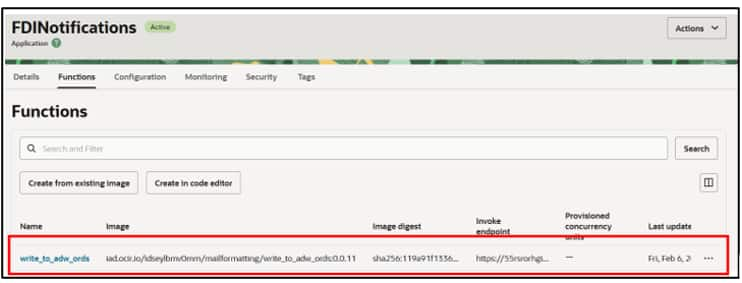

- As an example, if you have created an application called FDINotifcations and a function called write_to_adw_ords, on deployment you see the highlighted state in your OCI application artifact.

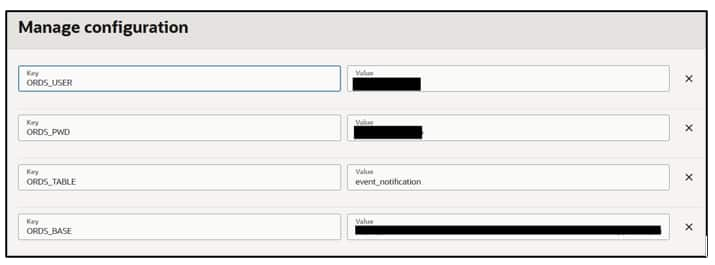
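The linked sample code remains the reference for func.py. As a rough illustration of the core step such a function performs, the sketch below maps an incoming event message to the columns of the EVENT_NOTIFICATION table. It assumes the message follows the general OCI Events envelope (`eventID`, `eventTime`, `eventType`, `source`, and a `data` object with `resourceName` and `compartmentId`). The `requestType` and `status` keys under `additionalDetails`, and the function name `event_to_row`, are hypothetical and should be checked against a real payload.

```python
import json


def event_to_row(message: str) -> dict:
    """Map a JSON event message to a dict keyed by EVENT_NOTIFICATION columns."""
    event = json.loads(message)
    data = event.get("data") or {}
    # ASSUMPTION: request type and status arrive under data.additionalDetails;
    # the key names used here are illustrative, not taken from the FDI docs.
    details = data.get("additionalDetails") or {}
    return {
        "id": event.get("eventID"),
        "event_time": event.get("eventTime"),
        "event_type": event.get("eventType"),
        "resource_name": data.get("resourceName"),
        "request_type": details.get("requestType"),
        "status": details.get("status"),
        "compartment_id": data.get("compartmentId"),
        "source": event.get("source"),
    }


if __name__ == "__main__":
    # Placeholder values only; a real event carries tenancy-specific data.
    sample = json.dumps({
        "eventID": "00000000-0000-0000-0000-000000000000",
        "eventTime": "2024-01-01T00:00:00Z",
        "eventType": "<your-event-type>",
        "source": "<your-source>",
        "data": {"resourceName": "<your-resource>", "compartmentId": "<ocid>"},
    })
    print(event_to_row(sample))
```

Missing keys map to None here. Because id and event_time form the table's primary key, a real function would probably want to reject or log any message that lacks them rather than attempt the insert.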

Configuring Environment Variables for the Function

- Set up configurations and environment variables that the script uses.

- Under Configurations, navigate to Manage Configurations to define the values of the function's environment variables.

- ORDS_TABLE: Enter event_notification.

- ORDS_BASE, ORDS_USER, ORDS_PWD: To find these values in your OCI tenancy, follow the steps in ORDS.pdf.
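To make the role of these four variables concrete, here is a minimal sketch of how a function might turn them into the arguments for an insert request. It is not the blog's sample code. It assumes that ORDS_BASE holds the ORDS URL up to and including the schema alias (for example `https://<host>/ords/<schema-alias>`) and that the table is reachable at its object alias beneath it; the helper name `build_post_kwargs` is hypothetical.

```python
import os


def build_post_kwargs(row: dict, env=os.environ) -> dict:
    """Return keyword arguments suitable for requests.post() against the ORDS table."""
    # ASSUMPTION: ORDS_BASE ends at the schema alias, e.g. https://<host>/ords/<schema-alias>
    base = env["ORDS_BASE"].rstrip("/")
    table = env["ORDS_TABLE"].strip("/")
    return {
        "url": f"{base}/{table}/",                   # collection endpoint of the table
        "json": row,                                 # row sent as a JSON body
        "auth": (env["ORDS_USER"], env["ORDS_PWD"]), # HTTP basic authentication
        "timeout": 30,
    }


if __name__ == "__main__":
    # Stand-in values so the sketch runs outside OCI; never print real credentials.
    demo_env = {
        "ORDS_BASE": "https://example.invalid/ords/myuser",
        "ORDS_TABLE": "event_notification",
        "ORDS_USER": "demo",
        "ORDS_PWD": "demo",
    }
    print(build_post_kwargs({"id": "1"}, demo_env)["url"])
```

Inside the function, the call would then look like `requests.post(**build_post_kwargs(row)).raise_for_status()`. Reading the values at call time rather than hard-coding them means the same deployed image can be pointed at a different schema or table by editing the configuration only.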

Configure Topics and Rules

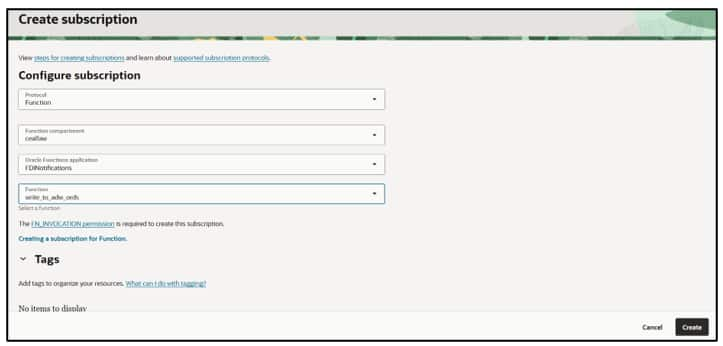

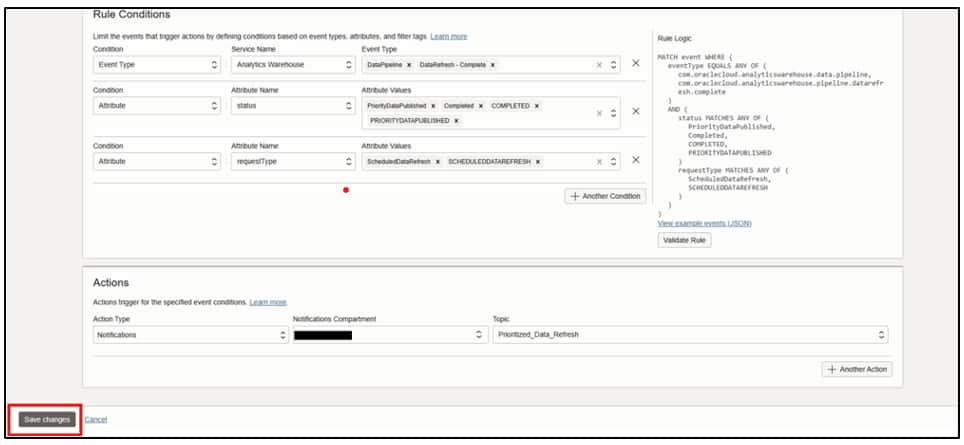

- Read Overview to set up Topics and Rules for Event Monitoring to configure the topics and rules for the events.

- To subscribe a function to your topic, select Function as the protocol, then choose the application and function containing your code. Click Create.

- As an example, provide the details below and configure the rule conditions and attributes to receive notifications upon completion of prioritized data refreshes.

For all available Event Types and Attributes, see Event Types, Attributes, and Attribute Values for Event Rules.

Writing Events to Tables

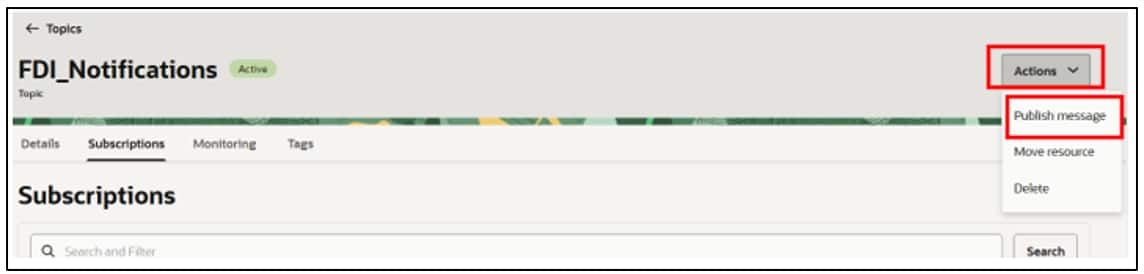

- To test the function without waiting for the actual pipeline trigger, use the Publish Message option associated with the topic you created.

- The message can be in any format, such as a JSON payload or even a single-line statement, depending on what your function requires as input. The title becomes the subject of the email you receive if your email address is subscribed to the topic.

- Click Publish and validate the results.
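If your function expects a JSON event, one option is to generate a test message with a short script and paste its output into the Publish Message dialog. The sketch below follows the general OCI Events envelope. Every value is a placeholder, and the `additionalDetails` keys are assumptions rather than the documented FDI payload, so adjust them to whatever your function actually reads.

```python
import json
import uuid
from datetime import datetime, timezone


def sample_message() -> str:
    """Build a JSON test message shaped like an OCI event (placeholder values)."""
    event = {
        "eventID": str(uuid.uuid4()),  # 36 characters, fits the id column
        "eventTime": datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
        "eventType": "<your-event-type>",
        "source": "<your-source>",
        "data": {
            "compartmentId": "<your-compartment-ocid>",
            "resourceName": "<your-resource-name>",
            # ASSUMPTION: hypothetical keys for the request type and status
            "additionalDetails": {
                "requestType": "<request-type>",
                "status": "<status>",
            },
        },
    }
    return json.dumps(event, indent=2)


if __name__ == "__main__":
    print(sample_message())
```

Generating a fresh eventID and eventTime on each run avoids primary-key collisions when you publish several test messages in a row.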

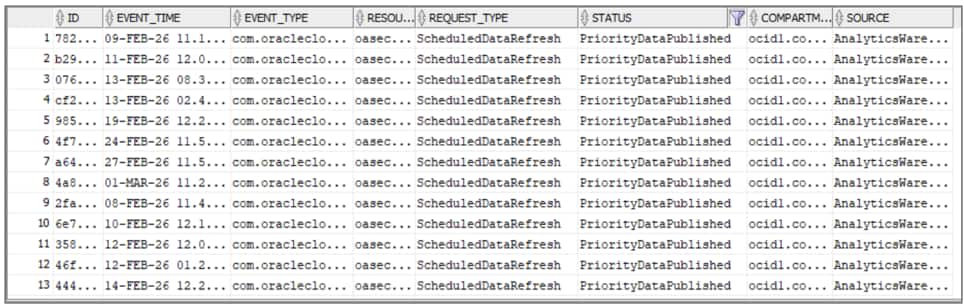

Navigate to the created table and review the data inserted.
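Because the table is REST-enabled, the inserted rows can also be read back over HTTP. The following sketch builds a GET URL that asks ORDS for the most recent events, using the `q` filter object with `$orderby` and the `limit` parameter. The base URL is a placeholder, and the helper name `recent_events_url` is hypothetical.

```python
import json
from urllib.parse import urlencode


def recent_events_url(base: str, table: str = "event_notification", limit: int = 25) -> str:
    """Build an ORDS query URL that returns the newest rows first."""
    # ORDS filter object: sort by event_time in descending order
    query = {"$orderby": {"event_time": "DESC"}}
    params = urlencode({
        "q": json.dumps(query, separators=(",", ":")),
        "limit": limit,
    })
    return f"{base.rstrip('/')}/{table}/?{params}"


if __name__ == "__main__":
    # ASSUMPTION: the base URL ends at the schema alias, as in the ORDS_BASE setting
    print(recent_events_url("https://example.invalid/ords/myuser"))
```

A GET on the resulting URL with the same ORDS credentials, for example with `requests.get(url, auth=(user, pwd))`, should return a JSON document whose `items` array holds the rows.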

Conclusion

By saving your event notifications to Oracle Autonomous AI Lakehouse, you can collect event data automatically and use it for reporting or analysis. For additional options to store these notifications for further processing and analysis, see:

- Store them as files in an Object Storage bucket. See Writing events to OCI Object Storage.

- Format the trigger payload as an easily readable email. See Formatting Oracle Fusion AI Data Platform Pipeline Email Notifications.

Call to Action

Now that you’ve read this post, try it yourself and let us know your results in the Oracle Analytics Community, where you can also ask questions and post ideas.