In traditional MySQL, analytics require data to be ingested into InnoDB tables and processed on a single primary instance using row-based storage engine and limited parallelism. Scaling analytical workloads typically involves replicas, ETL pipelines, or even external analytics system.

With MySQL HeatWave, Oracle’s fully managed service in the cloud, analytics execution is offloaded to a distributed, in-memory engine that uses columnar storage and parallel processing across multiple nodes running on the back of a MySQL instance. This enables MySQL to support large-scale analytical queries directly without data duplication, without separate analytical databases, and without changing application queries. When a query is executed, the MySQL optimizer automatically offloads eligible queries to HeatWave , which scan in-memory data in massively parallel processing, delivering low-latency analytics on large datasets using familiar MySQL SQL syntax.

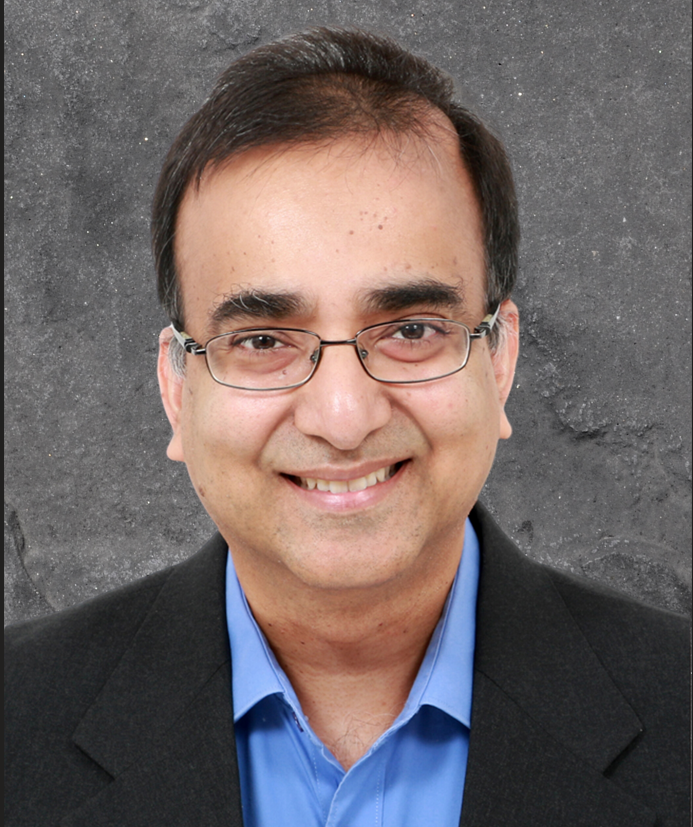

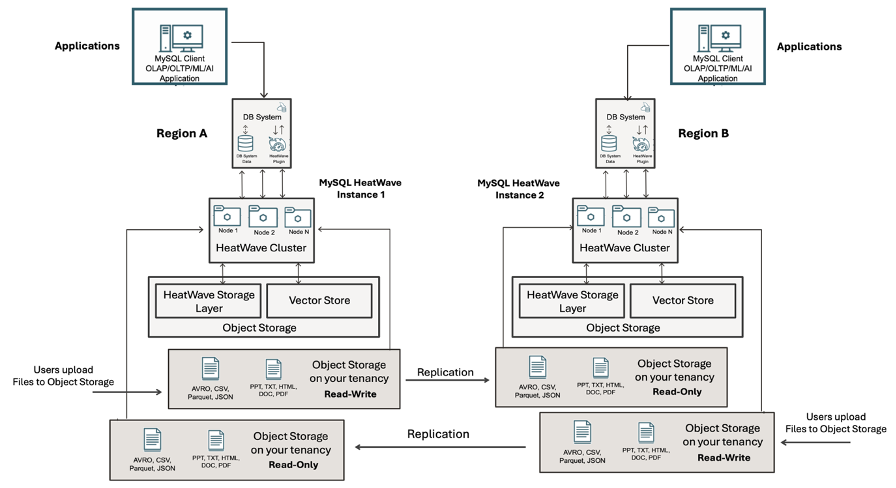

Fig-1. Architectural picture of MySQL HeatWave

Lakehouse feature allows developers to run standard SQL queries against files and semi-structured data stored in object storage in various formats, which are JSON, CSV, Avro, Parquet, and Delta Lake without building ETL pipelines or loading data into MySQL tables. External tables are defined in MySQL schema and behave like regular tables for querying data from OCI object storage buckets. Beginning with version 9.5.0, MySQL HeatWave Lakehouse supports reading Delta Lake tables and this integration allows users to conduct high-performance analytics on Delta Lake data within OCI object storage.

Eliminating Single Point of Failure in HeatWave Lakehouse

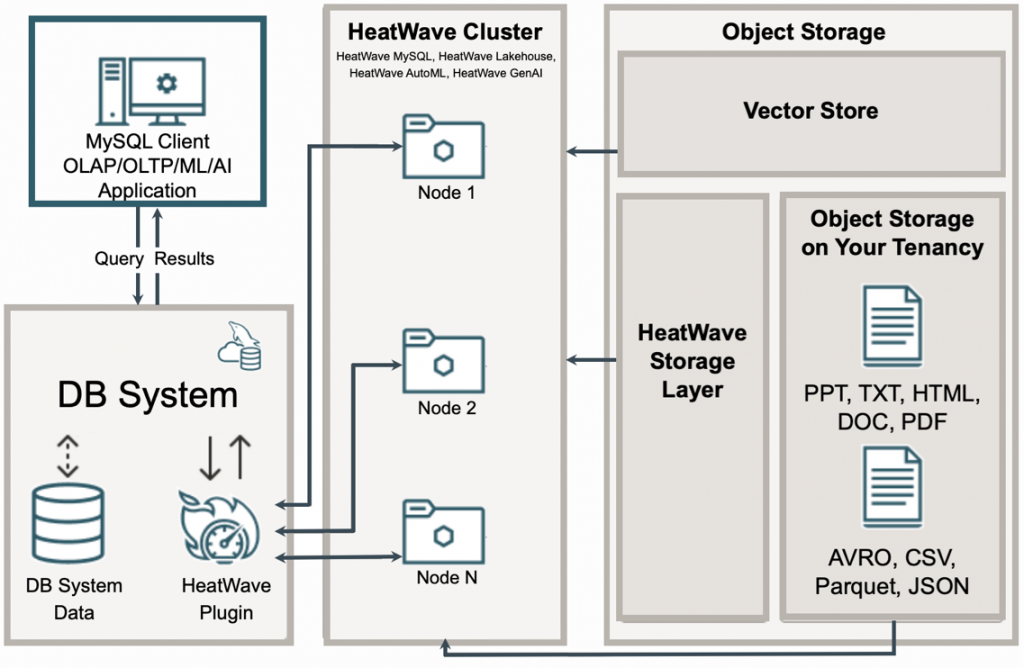

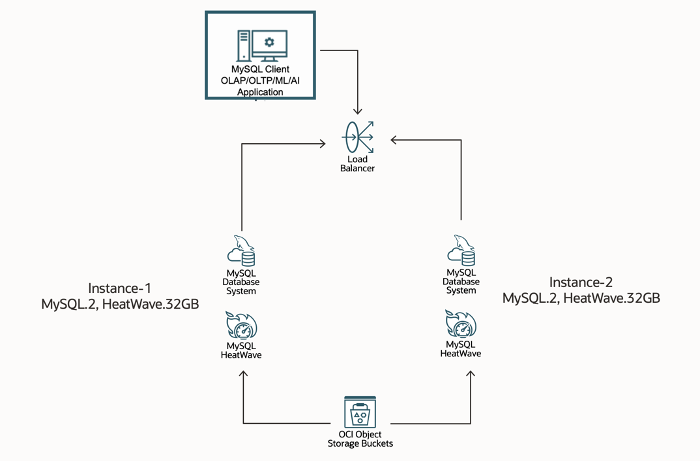

In production environments, high availability is a requirement rather than an option. MySQL HeatWave Lakehouse addresses this by removing single point of failure at the data layer using multiple MySQL HeatWave instances which concurrently access the same object storage buckets, with the data remaining external to any individual database instance. Object Storage buckets are regionally durable and independent of a specific Availability Domain, providing inherent resilience at the storage layer. This enables an architecture where multiple HeatWave instances share a common, highly available data source, and define the same external table DDL locally. Multiple HeatWave instances can query the same underlying data without replication. This decoupled model simplifies high-availability design and enables fault-tolerance while scaling analytics independently.

Fig-2. Multiple HeatWave instances share a common Object Storage Bucket

The diagram above illustrates a Load Balancer positioned in front of multiple MySQL HeatWave instances, exposing a single, consistent database endpoint through a private IP within the subnet. Client applications connect to this endpoint transparently without awareness of the underlaying instances. The Load Balancer distributes incoming connections across MySQL HeatWave instances using configurable routing policies such as round-robin, IP hash, or least-connections. This ensures balanced workload, seamless client connectivity, and predictable performance as instances scale or fail.

Multi-Region Read Scaling Architecture

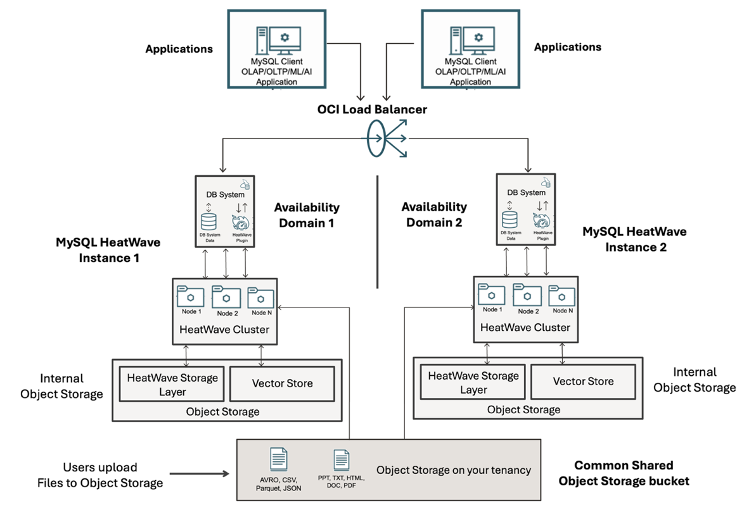

Beyond supporting cross-Availability Domain (AD) access, OCI Object Storage provides cross-region replication, allowing bucket contents to be replicated in near real-time across regions. You define replication policies at the bucket level, which ensure any new or updated objects referenced by MySQL HeatWave external tables are automatically synchronized to a target region. This setup enables HeatWave instances deployed in multi regions to access the replicated data locally, reducing latency and eliminating the need for custom data movement pipelines.

By leveraging this feature, architects can implement region-level disaster recovery and also achieve multi-region read scaling, enabling analytical workloads to scale globally without introducing database level replication complexity. Essentially, Object Storage replication allows HeatWave to maintain consistent, low-latency access to larger datasets across regions, forming a critical backbone for resilient, high performance Lakehouse architecture.

Fig-3. Cross region Object Storage replication accessed by local MySQL HeatWave instances

For details on setting up Object Storage Bucket cross-region replication with read-write bucket replication to read-only bucket on remote region, please refer to https://docs.oracle.com/en/learn/object-replication-for-dr/

If the primary region becomes unavailable, MySQL HeatWave Lakehouse in the remote region can continue to serve against the same logical dataset, sourced from the replicated object storage bucket, providing a geo-redundant, high performance MySQL Lakehouse analytics that can withstand failures while maintaining data consistency and service continuity.

Fig-4. Bi-directional Cross-Region Object Storage replication for Active/Active Sites

For robust disaster recovery with MySQL HeatWave Lakehouse, maintain two sets of Object Storage buckets, one in the primary region (e.g. us-ashburn-1) and one in the secondary region (e.g. us-chicago-1). This enables bidirectional replication: east-to-west and west-to-east. Since Object Storage replication is inherently read/write to read-only, each region’s bucket set serves as the read-only replica for the other region. MySQL HeatWave instances in each region reference their local replicated buckets using identical external table definitions, allowing seamless analytics in either region while providing a fully resilient Active/Active DR architecture.

Disaster recovery strategy for Object Storage is well explained in this blog and it can be fully adopted on both Active/Passive and Active/Active DR architecture:

Moving forward in this blog

The following steps walk through the deployment of a high availability of MySQL HeatWave Lakehouse architecture, consisting of shared object storage across two MySQL HeatWave instances and a Load Balancer configured to route traffic between them.

The Environment

For this technical blog, two identically configured MySQL HeatWave instances are provisioned to demonstrate the architecture. Both run MySQL HeatWave 9.5.0 on the MySQL.2 shape with 50 GB storage and a single node cluster HeatWave.32. Instance-1 (10.0.1.121) and instance-2 (10.0.1.209) listen on standard MySQL port 3306 for classic and 33060 for X protocol, and are fronted by a load balancer to provide a consistent database endpoint for accelerated analytical workloads.

Both instances use Resource Principals to access Object Storage securely without managing secrets or static credentials. Resource Principals authenticate via OCI-managed certificates, allowing HeatWave to call the APIs directly. Access is controlled through Dynamic Groups and IAM policies, which explicitly define permitted actions. To enable this, configure a dynamic group and an IAM policy that grants the HeatWave Lakehouse access to Object Storage buckets and objects within the working compartment.

Fig-5. High Level Architecture

Refer to the following online blog on how to configure Resource Principal for allowing MySQL HeatWave access to Object Storage bucket:

or the following documentation:

https://dev.mysql.com/doc/heatwave/en/mys-hw-lakehouse-loading-data-resource-principal.html

Accessing Object Storage Data using External Table

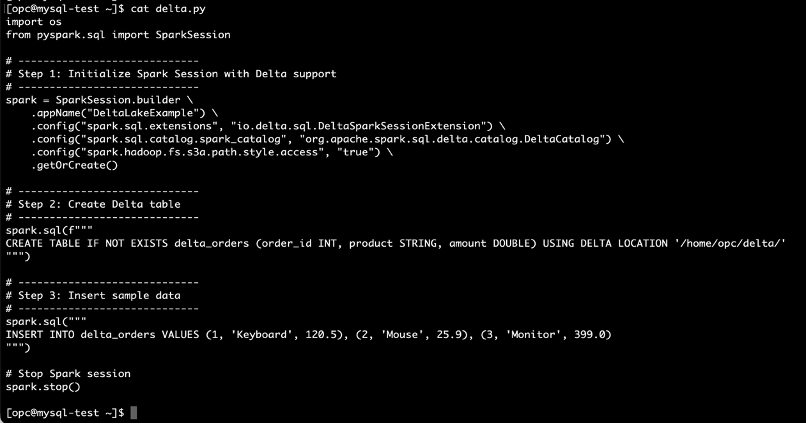

In this blog post, we demonstrate loading and reading Delta Lake from Object Storage where a Delta Lake is generated by a Python-based script. The script handles both the table creation and data insertion, storing the resulting Delta Lake files locally under /home/opc/delta/, then upload manually to Object Storage bucket.

Fig-7. Python script: delta.py

Simply run the script using the following command:

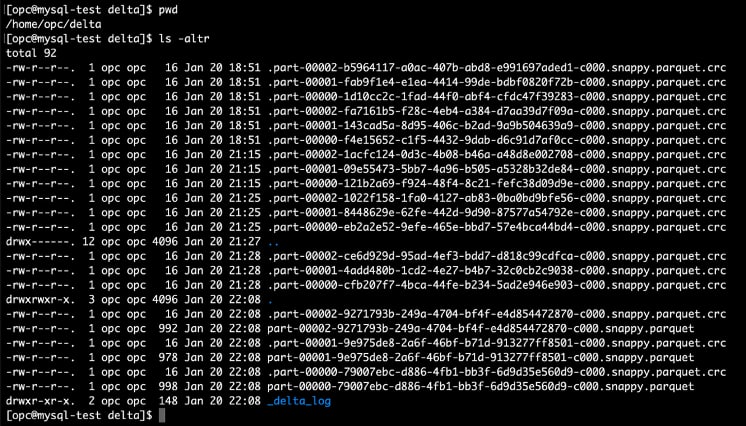

spark-submit --packages io.delta:delta-spark_2.12:3.1.0 delta.pyParquet files and log are created locally.

Fig-8. Delta Lake Files

Upload the files to the “delta” folder in the Object Storage bucket “demo”, and then create an external table on each MySQL HeatWave instance using the following DDL.

CREATE EXTERNAL table market_data.delta_orders (order_id INT, product longtext, amount DOUBLE)

FILE_FORMAT = (FORMAT delta) FILES = (URI 'oci://demo@mysqlpm/delta/');Perform initial table loading to read files and load data from Object Storage bucket on each instance.

ALTER TABLE market_data.delta_orders SECONDARY_LOAD;Login to instance-1 and query the external table:

mysql > select @@hostname, a.* from market_data.delta_orders a;

+------------------+----------+----------+--------+

| @@hostname | order_id | product | amount |

+------------------+----------+----------+--------+

| qpw9vqjsbpbxlicv | 2 | Mouse | 25.9 |

| qpw9vqjsbpbxlicv | 3 | Monitor | 399 |

| qpw9vqjsbpbxlicv | 1 | Keyboard | 120.5 |

+------------------+----------+----------+--------+Login to instance-2 and query the external table:

mysql > select @@hostname, a.* from market_data.delta_orders a;

+------------------+----------+----------+--------+

| @@hostname | order_id | product | amount |

+------------------+----------+----------+--------+

| dywysulxczwdhoa3 | 2 | Mouse | 25.9 |

| dywysulxczwdhoa3 | 3 | Monitor | 399 |

| dywysulxczwdhoa3 | 1 | Keyboard | 120.5 |

+------------------+----------+----------+--------+Both results are identical and the @@hostname expose the unique backend hostname of each instance to identify which instance processed the query. The same technique is also useful when connecting to both instances from Load Balancer, to determine which instance actually connecting.

As time goes by, files in Object Storage bucket may change with incoming new files, deletion of old files, or altering existing files. To maintain up-to-date insights, schedule incremental loads at synchronized intervals on all participating MySQL HeatWave instances to keep up with the data change on Object Storage bucket. Below is a sample code for the incremental load.

SET @input_list = '[{"db_name": "market_data", "tables": [{"table_name": "exchange_data"}]}]';

SET @options = JSON_OBJECT('mode', 'normal','refresh_external_tables', TRUE);

CALL sys.HEATWAVE_LOAD(CAST(@input_list AS JSON), @options);Conclusion:

MySQL HeatWave provides a cloud-native MySQL database-as-a-service designed for high performance analytics. It combines Lakehouse flexibility, allowing queries directly on Object Storage in addition to InnoDB tables, with in-memory SQL acceleration for speed. Its architecture supports high availability across Availability Domains and Regions, making it resilient and capable of multi-region read scaling, which makes it ideal for mission critical performance intensive analytics workload.

Always Free tier is available at no cost! Try MySQL HeatWave today and experience the performance.