High-concurrency applications often create a steady stream of short-lived connections. This pattern increases load on connection tracking, TLS handshakes, and load balancer control paths. If you size the load balancing layer for average throughput only, connection churn can still become the limiting factor.

This post analyzes how the Oracle Cloud Infrastructure (OCI) Flexible Load Balancer handles extreme connection churn. By stressing connections per second (CPS) and TLS handshakes, we evaluate its upper limits. The findings validate linear scalability, demonstrating that distributing traffic across multiple load balancers provides a proportional and predictable increase in total system throughput.

Why this analysis matters

Evaluating extreme connection rates helps you answer the following practical routing and scaling questions:

- How many new TLS connections a load balancer can handle per second at a given configuration.

- How latency changes as CPS increases.

- How reliably the system behaves when clients open and close connections continuously.

- How predictably you can scale when you add load balancers and distribute traffic.

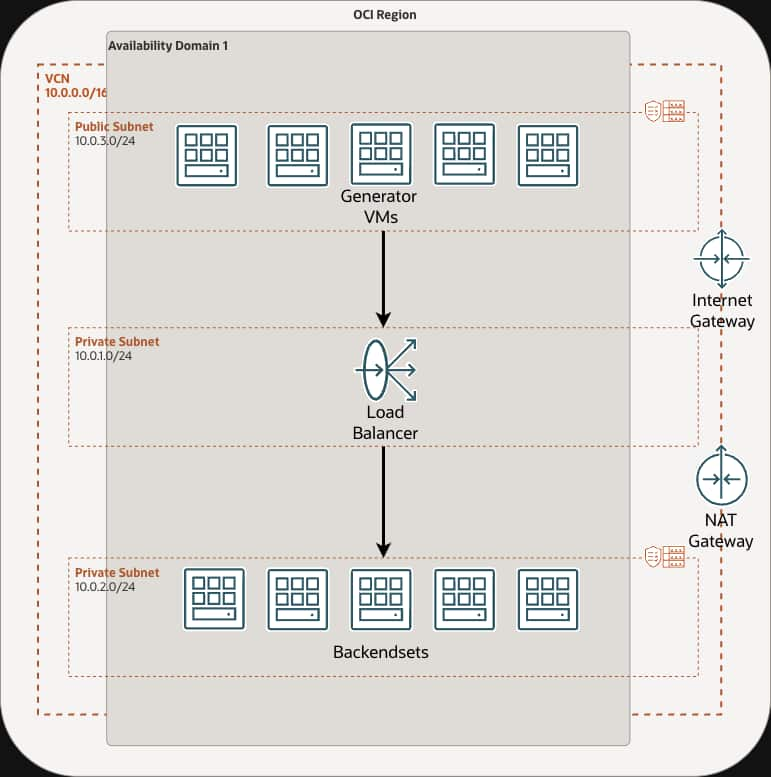

Test architecture

This test was run in the OCI San Jose region (US West), and the performance remains consistent across regions. It uses a dual-stack virtual cloud network (VCN). It includes runs that use IPv6 frontend VIPs routing to IPv4 backends, along with IPv4-to-IPv4 paths.

The following elements stay consistent across the stateless runs described in the test report:

- Load balancer shape: OCI Flexible Load Balancer with 8,000 Mbps minimum and 8,000 Mbps maximum bandwidth.

- Listener: TCP port 443 with TLS offload.

- TLS settings: TLS 1.2 and TLS 1.3 with cipher suite `oci-modern-ssl-cipher-suite-v1`, using an ECDSA P-256 certificate.

- Backend protocol: HTTP/1.1 on port 80.

- Client source preservation: Proxy Protocol v2 enabled between the load balancer and backends.

- Network security: Stateless network security group (NSG) rules only.

Why stateless security rules matter for high CPS

In high-CPS scenarios, clients repeatedly open and close connections. Stateful security rules require connection tracking in the networking fabric. Connection tracking can become a bottleneck or hit table limits when connection churn is high.

Stateless NSG rules avoid that tracking requirement. You still need to define explicit ingress and egress rules to support a symmetric return path. This approach removes a common scaling pressure point for connection-heavy traffic.
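To make the symmetric return path concrete, here is a minimal sketch of the rule pair a stateless listener needs. The `StatelessTcpRule` class and the `stateless_listener_rules` helper are hypothetical names used only for illustration; they model the idea and are not part of any OCI SDK. Requests arrive with destination port 443, so replies leave with source port 443, and without connection tracking that reply direction needs its own explicit rule.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class StatelessTcpRule:
    """Illustrative model of one stateless TCP rule (not an OCI SDK type)."""
    direction: str                          # "INGRESS" or "EGRESS"
    peer: str                               # source for ingress, destination for egress
    source_port: Optional[int] = None       # None means "any port"
    destination_port: Optional[int] = None  # None means "any port"
    stateless: bool = True


def stateless_listener_rules(peer: str, listener_port: int) -> list[StatelessTcpRule]:
    """Return the request rule and the matching reply rule for a listener."""
    return [
        # Client -> listener: packets target the listener port.
        StatelessTcpRule("INGRESS", peer, destination_port=listener_port),
        # Listener -> client: replies originate from the listener port and
        # go back to whatever ephemeral port the client picked.
        StatelessTcpRule("EGRESS", peer, source_port=listener_port),
    ]


for rule in stateless_listener_rules("nsg-generators", 443):
    print(rule)
```

The same pairing applies on the generator side and on the load-balancer-to-backend hop, with the directions mirrored.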

Why ECDSA certificates matter for extreme connection rates

Because this evaluation uses Connection: close, every request forces a new TCP connection and a full TLS handshake. During this process, cryptographic negotiation is typically the most CPU-intensive operation.

Using an Elliptic Curve Digital Signature Algorithm (ECDSA P-256) certificate instead of a traditional RSA certificate significantly reduces the compute overhead required for each handshake. This configuration prevents the load balancer from bottlenecking on cryptographic math, ensuring the evaluation accurately measures the connection-tracking and routing limits of the underlying infrastructure.

Methodology

The evaluation is designed to measure connection processing rather than long-lived throughput.

Load generation

- Tool: Distributed Locust.

- Request pattern: The test uses `Connection: close` so each request forces a full TCP connection plus a TLS handshake.

- Pre-run validation: The test runs an automated HTTPS sanity check before each tier. It confirms an HTTP 200 response.

- Soak period: Each tier includes a 5-minute hold after reaching the target user count.
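The pre-run validation step can be sketched with the Python standard library. This is an assumption about what such a check might look like, not the script used in the test report; the `sanity_check` name, its parameters, and the default timeout are hypothetical.

```python
import ssl
import urllib.error
import urllib.request


def sanity_check(url: str, timeout_s: float = 5.0, verify_tls: bool = True) -> bool:
    """Return True only if a single GET to `url` answers with HTTP 200."""
    context = ssl.create_default_context()
    if not verify_tls:
        # Test VIPs often present certificates that do not match the raw IP,
        # so verification is optional here (hypothetical choice).
        context.check_hostname = False
        context.verify_mode = ssl.CERT_NONE

    request = urllib.request.Request(url, headers={"Connection": "close"})
    try:
        with urllib.request.urlopen(request, timeout=timeout_s, context=context) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # Covers HTTP error statuses, refused connections, TLS failures, and timeouts.
        return False
```

A tier runner could call `sanity_check` once per VIP and skip the tier if any call returns `False`, so a misconfigured listener does not show up later as a wall of request failures.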

Host-level tuning

The generators and backends use VM.Standard.E5.Flex instances (16 OCPUs and 64 GB memory). The test applies kernel tuning to increase headroom for high concurrency. The following settings illustrate the tuning approach used in the report:

```
net.core.somaxconn=262144
net.ipv4.ip_local_port_range=1024 65535
net.ipv4.tcp_tw_reuse=1
fs.file-max=1000000
```

Test scenarios and outcomes

The test evaluates multiple tiers and topologies. The generator count, backend count, and load balancer count vary across runs. The Results section lists every stateless run; two of those datapoints, the 60,000 CPS tier on one load balancer and the 120,000 CPS tier on two load balancers, support the linear scaling conclusion.

By passing Connection: close on each request, this evaluation intentionally forces constant connection setups and TLS handshakes. Because the system must continuously establish new sessions, the observed requests per second (RPS) closely tracks the target CPS throughout these runs.

Results

The following table lists the complete stateless results from the test report.

| Generators | Generator config (OCPUs/GB) | Load balancers (LBaaS) | Backends | Backend config (OCPUs/GB) | Target CPS | Observed RPS | Avg time (ms) | Failures | Nines |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 16/64 | 0 | 1 | 16/64 | 10000 | 9889 | 21 | 0.0000% | 100.0000% |
| 1 | 16/64 | 1 | 1 | 16/64 | 10000 | 9954 | 9.72 | 0.0000% | 100.0000% |
| 1 | 16/64 | 1 | 1 | 16/64 | 12500 | 12176 | 23.36 | 0.0000% | 100.0000% |
| 1 | 16/64 | 1 | 2 | 16/64 | 12500 | 12200 | 24.33 | 0.0000% | 100.0000% |
| 1 | 16/64 | 1 | 2 | 16/64 | 15000 | 13577 | 86.25 | 0.0000% | 100.0000% |
| 2 | 16/64 | 1 | 1 | 16/64 | 20000 | 19702 | 11.91 | 0.0000% | 100.0000% |
| 2 | 16/64 | 1 | 1 | 16/64 | 25000 | 24781 | 29.29 | 0.0006% | 99.9994% |
| 2 | 16/64 | 1 | 2 | 16/64 | 25000 | 24153 | 22.11 | 0.0010% | 99.9990% |
| 2 | 16/64 | 1 | 2 | 16/64 | 30000 | 26286 | 79.36 | 0.0000% | 100.0000% |
| 3 | 16/64 | 1 | 1 | 16/64 | 20000 | 19900 | 5.83 | 0.0000% | 100.0000% |
| 3 | 16/64 | 1 | 1 | 16/64 | 25000 | 24832 | 8.32 | 0.0004% | 99.9996% |
| 5 | 16/64 | 1 | 5 | 16/64 | 50000 | 49500 | 10.26 | 0.0001% | 99.9999% |
| 5 | 16/64 | 1 | 5 | 16/64 | 60000 | 58694 | 17.1 | 0.0000% | 100.0000% |
| 5 | 16/64 | 1 | 10 | 16/64 | 50000 | 49430 | 9.61 | 0.0001% | 99.9999% |
| 5 | 16/64 | 1 | 10 | 16/64 | 60000 | 58711 | 17.77 | 0.0000% | 100.0000% |
| 10 | 16/64 | 1 | 6 | 16/64 | 50000 | 49960 | 5.85 | 0.0004% | 99.9996% |
| 10 | 16/64 | 1 | 6 | 16/64 | 60000 | 59881 | 5.47 | 0.0002% | 99.9998% |
| 10 | 16/64 | 2 | 10 | 16/64 | 120000 | 118940 | 18.92 | 0.0002% | 99.9998% |

How to read these columns:

- Failures are the request failure percentage reported by the test.

- Nines is the corresponding success percentage (for example, 99.9998 percent).
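As a quick way to work with these columns, the sketch below derives Nines from Failures and adds one more figure, the share of the target CPS that was actually observed as RPS. The `attainment` metric and both function names are our own illustration and do not appear in the test report; the input numbers are copied from three rows of the table.

```python
def nines(failure_pct: float) -> float:
    """Success percentage, the complement of the failure percentage."""
    return 100.0 - failure_pct


def attainment(target_cps: int, observed_rps: int) -> float:
    """Observed RPS as a percentage of the target CPS for a tier."""
    return 100.0 * observed_rps / target_cps


# (target CPS, observed RPS, failure %) taken from the results table.
rows = [
    (12500, 12176, 0.0000),    # 1 generator, 1 load balancer, 1 backend
    (15000, 13577, 0.0000),    # 1 generator, 1 load balancer, 2 backends
    (120000, 118940, 0.0002),  # 10 generators, 2 load balancers, 10 backends
]

for target, rps, failure_pct in rows:
    print(
        f"{target:>7} CPS target: "
        f"{attainment(target, rps):.1f}% attained, "
        f"{nines(failure_pct):.4f}% success"
    )
```

Read this way, the 15,000 CPS tier attains about 90.5 percent of its target with zero failures while its average time rises to 86.25 ms. That combination suggests some component in that topology, possibly the single generator, is saturating rather than dropping requests.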

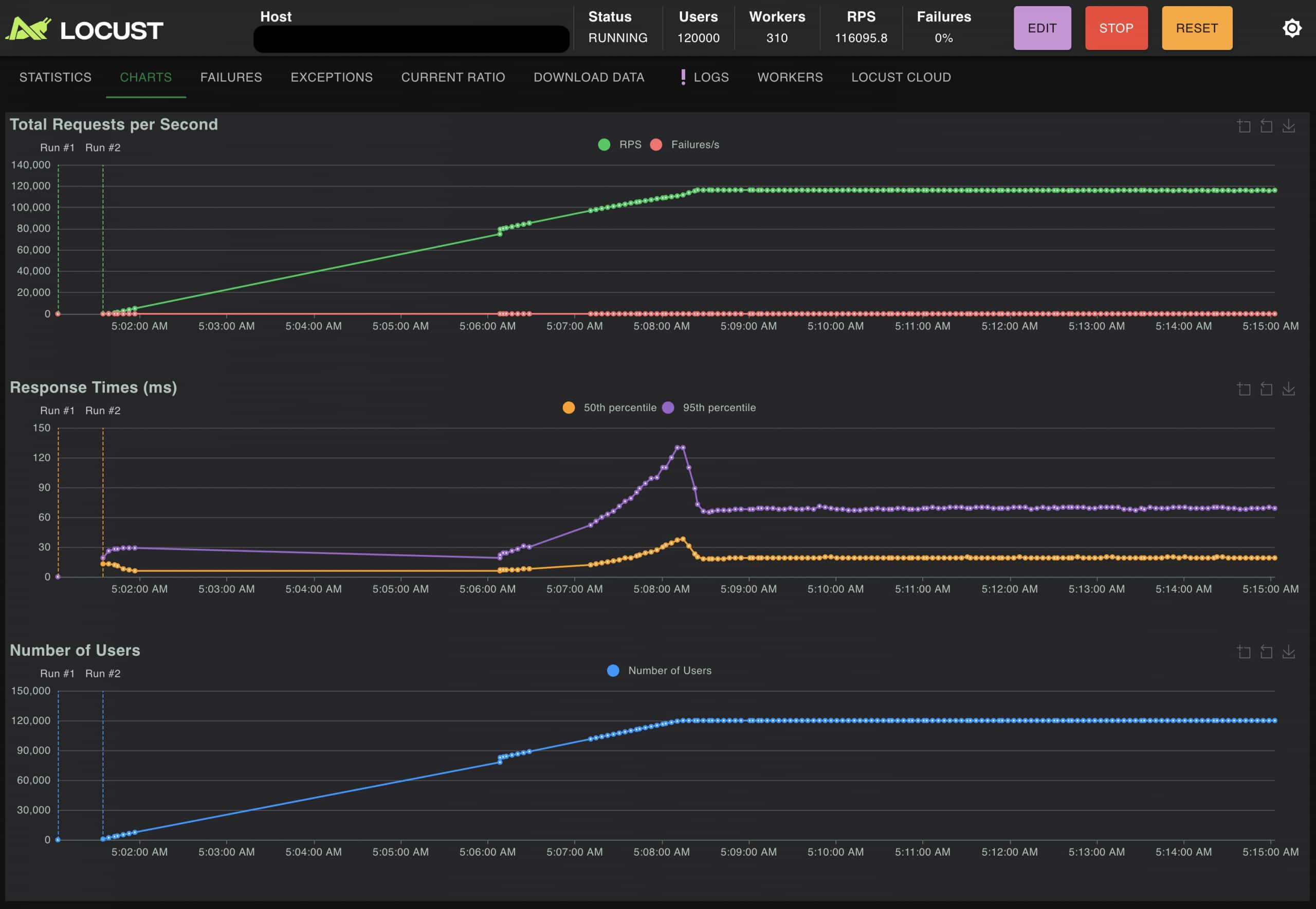

Linear scaling with multiple load balancers

The analysis validates a simple scaling strategy. In this comparison, we increased total CPS capacity by adding a second load balancer and distributing traffic across both VIPs.

A single-load-balancer configuration sustains the 60,000 CPS tier (58,694 observed RPS with 5 generators and 5 backends) with an average time of 17.1 ms. When the test doubles the load balancer count and grows the generator and backend fleets to 10 each, it sustains the 120,000 CPS tier (118,940 observed RPS) with an average time of 18.92 ms. This result shows near 2x scaling under the same stateless approach and TLS offload configuration.
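The near 2x claim can be checked directly from the observed RPS in the results table. The following is a small worked calculation; the function names are illustrative only.

```python
SINGLE_LB_RPS = 58694   # 5 generators, 1 load balancer, 5 backends, 60,000 CPS tier
DUAL_LB_RPS = 118940    # 10 generators, 2 load balancers, 10 backends, 120,000 CPS tier


def scaling_factor(base_rps: int, scaled_rps: int) -> float:
    """How many times more throughput the scaled-out topology delivered."""
    return scaled_rps / base_rps


def scaling_efficiency(base_rps: int, scaled_rps: int, lb_multiplier: int) -> float:
    """Delivered scaling divided by the ideal (linear) scaling."""
    return scaling_factor(base_rps, scaled_rps) / lb_multiplier


factor = scaling_factor(SINGLE_LB_RPS, DUAL_LB_RPS)
efficiency = scaling_efficiency(SINGLE_LB_RPS, DUAL_LB_RPS, lb_multiplier=2)
print(f"scaling factor: {factor:.2f}x")
print(f"efficiency vs. linear: {efficiency:.1%}")
```

The factor comes out slightly above 2 (about 2.03x). The generator fleet also doubled between the two runs, so this comparison measures the whole topology and not the load balancers in isolation.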

Key takeaways

- Use stateless NSG rules for CPS-heavy traffic patterns.

- Size the generator fleet so the evaluation measures the load balancer’s actual limits rather than the clients’ limits.

- Include soak periods at each tier to confirm steady-state behavior.

- Scale out with additional load balancers when you need more CPS. Fan-out provides predictable capacity growth.
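For capacity planning, the fan-out takeaway reduces to a ceiling division. The sketch below assumes a per-load-balancer figure of 60,000 CPS, which is the highest tier exercised on a single load balancer in this test and not a documented limit, and it assumes that scaling stays linear beyond the two load balancers that were actually measured. The `load_balancers_needed` helper and the `headroom_pct` parameter are hypothetical.

```python
def load_balancers_needed(
    target_cps: int,
    per_lb_cps: int = 60_000,  # assumption: highest single-LB tier tested here
    headroom_pct: int = 0,     # hypothetical safety margin, 0-99
) -> int:
    """Estimate how many load balancers a target CPS needs under linear scaling."""
    if target_cps <= 0 or per_lb_cps <= 0:
        raise ValueError("target_cps and per_lb_cps must be positive")
    if not 0 <= headroom_pct < 100:
        raise ValueError("headroom_pct must be between 0 and 99")

    # Capacity we are willing to plan against on each load balancer.
    usable_cps = per_lb_cps * (100 - headroom_pct) // 100
    # Integer ceiling division avoids floating-point rounding surprises.
    return -(-target_cps // usable_cps)


print(load_balancers_needed(120_000))                   # no headroom
print(load_balancers_needed(120_000, headroom_pct=20))  # plan at 80% of the tested tier
```

Treat the output as a starting point for your own load test and not as a guarantee; the per-load-balancer figure depends on the listener, certificate, and security rule choices described earlier.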

Appendix: configuration snippets

Locust file snippet

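This code snippet illustrates the strategy used to generate high connection churn. In CPS mode it adds a `Connection: close` header to every request, which forces short-lived connections and ensures every request triggers a fresh TCP and TLS handshake. When several targets are configured, each simulated user rotates through them in round-robin order.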

```python
import os
from itertools import cycle

from locust import HttpUser, task, constant

# Runtime settings, all read from environment variables.
VERIFY_TLS = os.environ.get("LOCUST_VERIFY_TLS", "false").lower() == "true"
CONNECT_TIMEOUT_S = float(os.environ.get("LOCUST_CONNECT_TIMEOUT_S", "8"))
READ_TIMEOUT_S = float(os.environ.get("LOCUST_READ_TIMEOUT_S", "15"))
WAIT_TIME_S = float(os.environ.get("LOCUST_WAIT_TIME_S", "1.0"))
MODE = os.environ.get("LOCUST_MODE", "cps").lower()
HEALTH_PATH = os.environ.get("LOCUST_HEALTH_PATH", "/healthz")
THROUGHPUT_PATH = os.environ.get("LOCUST_THROUGHPUT_PATH", "/payload_100k")

# Comma-separated list of base URLs (one per VIP) to rotate through.
RAW_TARGETS = os.environ.get("LOCUST_TARGETS", "")
TARGETS = [t.strip() for t in RAW_TARGETS.split(",") if t.strip()]
NAME_BY_VIP = os.environ.get("LOCUST_NAME_BY_VIP", "true").lower() == "true"  # default true for per-VIP labels


class CpsUser(HttpUser):
    host = os.environ.get("LOCUST_DEFAULT_HOST", "https://127.0.0.1")
    wait_time = constant(WAIT_TIME_S)

    def on_start(self):
        # Each simulated user gets its own round-robin iterator over the VIPs.
        self._targets = cycle(TARGETS) if TARGETS else None

    def _next_base(self):
        # Fall back to the default host when no explicit targets are set.
        if self._targets is not None:
            return next(self._targets)
        return self.host

    @task
    def do_request(self):
        path = HEALTH_PATH if MODE == "cps" else THROUGHPUT_PATH
        base = self._next_base()
        url = f"{base}{path}" if base else path

        # In CPS mode, ask for the connection to be closed after the response
        # so the next request pays for a new TCP connection and TLS handshake.
        headers = {"Connection": "close"} if MODE == "cps" else {}

        # Group statistics per VIP so each load balancer is reported separately.
        name_val = path
        if NAME_BY_VIP and base:
            vip = base.replace("https://", "").replace("http://", "")
            name_val = f"{vip}{path}"

        self.client.get(
            url,
            headers=headers,
            verify=VERIFY_TLS,
            timeout=(CONNECT_TIMEOUT_S, READ_TIMEOUT_S),
            name=name_val,
        )
```

Kernel tuning snippet (sysctl.conf)

```
# Backend
net.core.somaxconn=262144
net.core.netdev_max_backlog=500000
net.ipv4.tcp_max_syn_backlog=262144
net.ipv4.ip_local_port_range=1024 65535
net.ipv4.tcp_fin_timeout=15
net.ipv4.tcp_tw_reuse=1
net.core.rmem_max=33554432
net.core.wmem_max=33554432
net.ipv4.tcp_syncookies=1
net.ipv4.tcp_fastopen=3

# Additional safe CPS-focused tweaks
net.ipv4.tcp_abort_on_overflow=0
net.ipv4.tcp_synack_retries=2
net.ipv4.tcp_syn_retries=3
fs.file-max=1000000

# Generator
net.core.somaxconn=65535
net.core.netdev_max_backlog=250000
net.ipv4.tcp_max_syn_backlog=262144
net.ipv4.ip_local_port_range=1024 65535
net.ipv4.tcp_fin_timeout=15
net.ipv4.tcp_tw_reuse=1
fs.file-max=1000000
```

Terraform snippet (main.tf)

The following excerpt shows the Terraform configuration used to provision the environment, including the dual-stack VCN, stateless NSGs, and the TCP 443 listener with TLS offload and Proxy Protocol v2.

```hcl
terraform {
  required_providers {
    oci = {
      source  = "oracle/oci"
      version = ">= 7.0.0"
    }
  }
}

provider "oci" {
  config_file_profile = var.oci_profile
  region              = var.region
}

# VCN (dual-stack enabled)
resource "oci_core_vcn" "vcn" {
  cidr_block     = "10.0.0.0/16"
  compartment_id = var.compartment_id
  display_name   = "cps-combined-vcn"
  is_ipv6enabled = true
}

# NSG rules (stateless, with a symmetric return path).
# The NSGs, load balancers, backend sets, and certificates referenced below
# are defined elsewhere in the full configuration and are not part of this excerpt.
resource "oci_core_network_security_group_security_rule" "lb_ingress_443_from_generators" {
  network_security_group_id = oci_core_network_security_group.nsg_lb.id
  direction                 = "INGRESS"
  protocol                  = "6" # TCP
  source_type               = "NETWORK_SECURITY_GROUP"
  source                    = oci_core_network_security_group.nsg_generators.id
  stateless                 = true

  tcp_options {
    destination_port_range {
      min = 443
      max = 443
    }
  }
}

# TCP 443 listener with TLS offload and Proxy Protocol v2, one per load balancer
resource "oci_load_balancer_listener" "tcp443" {
  count                    = var.lb_count
  load_balancer_id         = element(oci_load_balancer_load_balancer.lb.*.id, count.index)
  name                     = "tcp-443"
  port                     = 443
  protocol                 = "TCP"
  default_backend_set_name = element(oci_load_balancer_backend_set.bs.*.name, count.index)

  ssl_configuration {
    certificate_name        = oci_load_balancer_certificate.lb_cert[count.index].certificate_name
    verify_peer_certificate = false
    protocols               = ["TLSv1.3", "TLSv1.2"]
    server_order_preference = "ENABLED"
    cipher_suite_name       = "oci-modern-ssl-cipher-suite-v1"
  }

  connection_configuration {
    idle_timeout_in_seconds            = 1200
    backend_tcp_proxy_protocol_version = 2
  }
}
```