Introduction

The conversation around cloud-native applications is shifting from simply running scalable microservices to enabling intelligent systems that can reason, automate, and adapt in real time. As organizations move beyond generative AI experimentation, the real challenge is no longer model capability but how to operationalize AI securely, cost-effectively, and at scale within existing architectures.

This is where the combination of Oracle Kubernetes Engine (OKE) and OCI Generative AI becomes compelling. Kubernetes provides the foundation for modern application deployment, and when paired with OCI’s enterprise-grade AI services, it enables organizations to embed intelligence directly into workloads while maintaining strong control over performance, security, and cost.

Frameworks like kagent illustrate the potential of running AI agents within Kubernetes. When integrated with OKE and OCI Generative AI, they let enterprises move beyond isolated use cases to build intelligent, responsive applications that automate workflows and deliver richer user experiences in a secure, scalable cloud environment.

Core Concepts

kagent is designed to simplify the development and deployment of AI agents within Kubernetes environments. It enables users to define agents that interact with large language models and external systems, all managed through Kubernetes-native constructs. This makes it easier to integrate AI capabilities into existing microservices architectures without introducing complex external dependencies.

OKE provides a managed Kubernetes service that ensures scalability, security, and seamless integration with the broader OCI ecosystem.

OCI Generative AI complements this by offering access to powerful large language models through secure APIs. These models can be invoked using REST endpoints or OpenAI-compatible interfaces, allowing developers to integrate AI capabilities using familiar patterns while maintaining enterprise-grade security and governance.
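To make the "familiar patterns" point concrete, here is a minimal sketch of what an OpenAI-style chat completion request looks like when built by hand. It only constructs the request and does not send it. The base URL, model name, and the use of a Bearer header are assumptions for illustration; the actual endpoint and model identifiers come from the OCI Generative AI documentation for your region.

```python
import json
import urllib.request

# Hypothetical placeholder: replace with the OpenAI-compatible base URL
# documented for your OCI region.
BASE_URL = "https://example.invalid/v1"


def build_chat_request(api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # Assumption: the API key is passed as a Bearer token,
            # following the usual OpenAI client convention.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # "example-model" is a made-up model name used only for this sketch.
    req = build_chat_request("demo-key", "example-model", "Why is my pod crashing?")
    print(req.get_method(), req.full_url)
    print(json.loads(req.data)["messages"][0]["content"])
```

Because the request shape follows the OpenAI convention, an existing OpenAI-compatible client can typically be pointed at a different base URL rather than rewritten.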

Architecture Overview

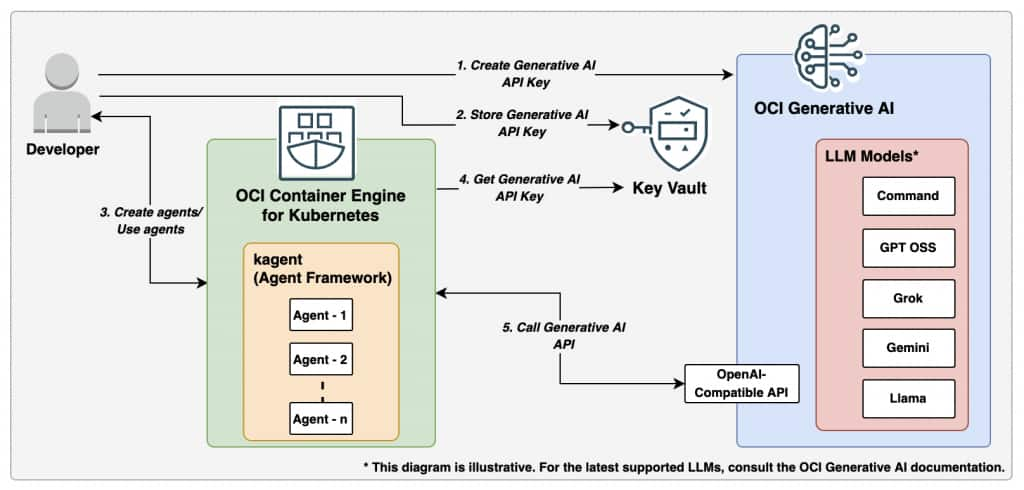

In this architecture, kagent runs as a set of services within an OKE cluster, where it manages agent execution and provides an interface for user interaction. Users begin by creating a Generative AI API key within OCI, which serves as the authentication mechanism for accessing OCI Generative AI services. This key is then securely stored in OCI Vault, ensuring that sensitive credentials are managed using enterprise-grade security controls rather than being embedded directly in application configurations.

When users create and interact with agents through the kagent UI, the system retrieves the required API key securely from OCI Vault. The OKE environment accesses the stored secret at runtime, enabling kagent components to authenticate requests without exposing the key. This approach ensures that credentials are centrally managed, auditable, and protected from unauthorized access.
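The article does not specify how the secret reaches the pod, so the following is only a sketch of one common pattern: the Vault-managed key is surfaced to the container as an environment variable or a mounted file, and the application reads it at startup. The variable name `GENAI_API_KEY` and the mount path are hypothetical.

```python
import os
import tempfile
from pathlib import Path

# Hypothetical names: neither the variable nor the path comes from kagent or OCI.
DEFAULT_ENV_VAR = "GENAI_API_KEY"
DEFAULT_SECRET_FILE = "/var/run/secrets/genai/api-key"


def load_api_key(env_var: str = DEFAULT_ENV_VAR,
                 secret_file: str = DEFAULT_SECRET_FILE) -> str:
    """Return the API key from the environment, falling back to a mounted file."""
    value = os.environ.get(env_var, "").strip()
    if value:
        return value
    path = Path(secret_file)
    if path.is_file():
        # Mounted secrets often end with a trailing newline; strip it.
        return path.read_text(encoding="utf-8").strip()
    raise RuntimeError(
        f"API key not found in ${env_var} or at {secret_file}"
    )


if __name__ == "__main__":
    # Simulate a mounted secret with a temporary file.
    with tempfile.TemporaryDirectory() as tmp:
        secret_path = Path(tmp) / "api-key"
        secret_path.write_text("demo-key\n", encoding="utf-8")
        key = load_api_key(env_var="UNSET_DEMO_VAR", secret_file=str(secret_path))
        print(len(key))
```

Keeping the lookup in one small function means the key never needs to appear in application configuration or source code, which is the property the architecture relies on.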

The diagram above illustrates how kagent deployed on OKE interacts with OCI Vault and OCI Generative AI to process user requests and return responses in real time.

Once the API key is retrieved, kagent sends requests to OCI Generative AI using the OpenAI-compatible API endpoint. The service processes the request using the configured model and returns a response, which is then relayed back through kagent to the user. Agent execution stays within the Kubernetes environment, while OCI services provide credential management and model inference, resulting in a scalable, secure, and maintainable architecture.
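As an illustration of the return path, the sketch below pulls the assistant text out of a response body in the standard OpenAI chat completion shape (`choices[0].message.content`). It assumes the OpenAI-compatible endpoint returns that shape; the sample payload is made up for the example and is not real service output.

```python
import json


def extract_reply(response_body: str) -> str:
    """Return the assistant message text from an OpenAI-style chat response."""
    data = json.loads(response_body)
    choices = data.get("choices") or []
    if not choices:
        raise ValueError("response contained no choices")
    return choices[0]["message"]["content"]


if __name__ == "__main__":
    # Made-up sample payload in the OpenAI chat completion format.
    sample = json.dumps({
        "choices": [
            {"index": 0,
             "message": {"role": "assistant", "content": "The image tag is invalid."}}
        ]
    })
    print(extract_reply(sample))
```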

Use Cases

Running kagent on OKE with OCI Generative AI enables a wide range of enterprise-grade AI applications:

- Intelligent Support Assistants

Automate customer or internal support by handling queries, resolving common issues, and guiding users through troubleshooting steps in real time.

- Workflow & DevOps Automation

Enable natural language–driven automation to trigger CI/CD pipelines, manage deployments, and execute routine operational tasks.

- Internal Knowledge Assistants

Provide context-aware access to enterprise documentation, wikis, and systems, helping teams retrieve accurate information instantly.

- Kubernetes Operations & Monitoring

Use AI agents to analyze logs, detect anomalies, and provide insights into cluster health, while assisting with scaling, debugging, and incident response.

- Incident Management & Root Cause Analysis

Accelerate resolution times by summarizing incidents, correlating logs and metrics, and suggesting possible root causes and remediation steps.

- Security & Compliance Assistance

Monitor configurations, flag potential security risks, and assist with compliance checks by analyzing policies and system activity.

- Data Exploration & Insights

Allow users to query data systems using natural language, generate summaries, and extract insights without needing complex queries.

- Multi-Agent Orchestration

Coordinate multiple AI agents to handle complex tasks across systems, enabling end-to-end automation of business and IT processes.

By combining OKE’s scalable Kubernetes platform with OCI’s secure and high-performance generative AI services, organizations can embed intelligence across their application landscape—driving efficiency, improving user experiences, and accelerating AI adoption at scale.

A quick demo:

A pod “demo-broken-backend” in namespace kagent has status “CrashLoopBackOff”

    kubectl get pods -n kagent
    NAME                                             READY   STATUS             RESTARTS   AGE
    argo-rollouts-conversion-agent-df5fccb46-tdwgm   1/1     Running            0          2d18h
    cilium-debug-agent-6fb5c8759c-h2l9n              1/1     Running            0          2d18h
    cilium-manager-agent-677bcc54b8-26jpk            1/1     Running            0          2d18h
    cilium-policy-agent-676d579c86-btk6l             1/1     Running            0          2d18h
    demo-broken-backend                              0/1     CrashLoopBackOff   0          4m39s
    helm-agent-64b577b589-gfs44                      1/1     Running            0          2d18h
    istio-agent-746f86bc66-txvtq                     1/1     Running            0          2d18h
    k8s-agent-5b67d4674-245mv                        1/1     Running            0          2d18h
    kagent-controller-67df5599cd-9dl2q               1/1     Running            0          2d18h

kagent provides a detailed analysis of the error and applies the fix.

The pod “demo-broken-backend” in namespace kagent has status “Running”

    kubectl get pods -n kagent
    NAME                                             READY   STATUS    RESTARTS   AGE
    argo-rollouts-conversion-agent-df5fccb46-tdwgm   1/1     Running   0          2d18h
    cilium-debug-agent-6fb5c8759c-h2l9n              1/1     Running   0          2d18h
    cilium-manager-agent-677bcc54b8-26jpk            1/1     Running   0          2d18h
    cilium-policy-agent-676d579c86-btk6l             1/1     Running   0          2d18h
    demo-broken-backend                              1/1     Running   0          24s
    helm-agent-64b577b589-gfs44                      1/1     Running   0          2d18h
    istio-agent-746f86bc66-txvtq                     1/1     Running   0          2d18h
    k8s-agent-5b67d4674-245mv                        1/1     Running   0          2d18h
    kagent-controller-67df5599cd-9dl2q               1/1     Running   0          2d18h
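For readers who want to see the signal the demo hinges on, here is a small sketch that scans a `kubectl get pods` listing and flags pods that are not fully ready or not in the `Running` state. This is not how kagent performs its analysis (the article does not describe that); it is only an illustration of the kind of check involved, using rows taken from the listing above.

```python
def find_unhealthy_pods(listing: str) -> list[tuple[str, str]]:
    """Return (name, status) for pods that are not Running or not fully ready."""
    unhealthy = []
    for line in listing.strip().splitlines():
        fields = line.split()
        # Skip the header row and anything too short to be a pod row.
        if len(fields) < 5 or fields[0] == "NAME":
            continue
        name, ready, status = fields[0], fields[1], fields[2]
        ready_count, _, total = ready.partition("/")
        # Note: this simple rule also flags Completed pods, which may be fine.
        if status != "Running" or ready_count != total:
            unhealthy.append((name, status))
    return unhealthy


SAMPLE = """
NAME                                 READY   STATUS             RESTARTS   AGE
cilium-debug-agent-6fb5c8759c-h2l9n  1/1     Running            0          2d18h
demo-broken-backend                  0/1     CrashLoopBackOff   0          4m39s
kagent-controller-67df5599cd-9dl2q   1/1     Running            0          2d18h
"""

if __name__ == "__main__":
    for pod, state in find_unhealthy_pods(SAMPLE):
        print(f"{pod}: {state}")  # prints "demo-broken-backend: CrashLoopBackOff"
```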

Key Benefits

- Run AI Natively on Kubernetes

Deploy AI-powered agents directly within OKE, leveraging built-in scalability, resilience, and managed operations for production-grade workloads.

- No Model Infrastructure Management

With OCI Generative AI, developers can access advanced language models without provisioning or maintaining underlying AI infrastructure—reducing operational overhead and accelerating development.

- Faster Development Cycles

Teams can focus on building intelligent application logic rather than managing AI platforms, enabling quicker iteration and time-to-market.

- Flexibility and Extensibility

Easily switch between models based on use case requirements, integrate external tools and workflows, and extend agent capabilities as applications evolve.

- Kubernetes-Native Deployment Simplicity

Using Helm and standard Kubernetes patterns simplifies installation, upgrades, and lifecycle management, making the solution developer-friendly and operationally consistent.

- Unified Access to Multiple Models

A single API key provides access to multiple models via OCI, eliminating the need to manage separate credentials across providers.

- Seamless Model Switching

Switch between models without modifying application code, enabling greater agility, experimentation, and optimization.

- Centralized Billing and Cost Visibility

Consolidated billing within OCI provides a unified view of usage and costs, simplifying financial management and governance.

- Enterprise-Grade Security

API keys are securely managed using OCI Vault, ensuring credentials are not exposed in application code.

- Strong Governance and Access Control

Integration with OCI IAM policies enables fine-grained access control and compliance with enterprise security standards.

- Production-Ready Operations

Supports ingress configuration, load balancing, autoscaling, and observability—ensuring deployments are scalable, reliable, and maintainable in real-world environments.
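The model-switching benefit listed above can be sketched in application terms as reading the model name from configuration rather than hard-coding it. This is one possible approach, not kagent's own mechanism; the variable name `GENAI_MODEL` and the model names are hypothetical.

```python
import os
from typing import Mapping

# Hypothetical default; real model identifiers come from OCI Generative AI.
DEFAULT_MODEL = "example-model-a"


def resolve_model(env: Mapping[str, str] = os.environ,
                  default: str = DEFAULT_MODEL) -> str:
    """Pick the model name from configuration, falling back to a default."""
    name = env.get("GENAI_MODEL", "").strip()
    return name or default


if __name__ == "__main__":
    print(resolve_model({}))                                  # example-model-a
    print(resolve_model({"GENAI_MODEL": "example-model-b"}))  # example-model-b
```

With this shape, changing models is a deployment-level setting change, so the application code stays untouched.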

Summary

Running kagent on OKE with OCI Generative AI provides a powerful and practical foundation for building intelligent cloud-native applications. By combining a Kubernetes-native agent framework with OCI’s advanced AI capabilities and secure key management through OCI Vault, developers can create scalable, secure, and responsive systems that integrate seamlessly with existing workloads. This solution demonstrates how organizations can operationalize AI within their Kubernetes environments while maintaining strong security practices and centralized control over credentials. As AI adoption continues to grow, integrating tools like kagent with OCI Generative AI offers a straightforward path to building next-generation applications that are both intelligent and cloud-native.
