Introduction

What happens when you take a supercomputing network fabric built for microsecond latency, low jitter, and RDMA semantics, and operate it as a cloud service? In that world, the network is not just “plumbing”; it becomes an integral part of the compute path. That’s the premise behind OCI SuperClusters. The challenge for us is making that performance model coexist with cloud realities: dynamically allocated bare metal, tenants with root access, and the non-negotiable isolation expectations of a multi-tenant platform. InfiniBand is a natural fit for such high-performance HPC and AI workloads, but it wasn’t designed around such an adversarial tenant threat model, and securing it in a multi-tenant environment while giving tenants bare-metal root access is notoriously difficult. When we decided to introduce InfiniBand to OCI SuperClusters for the NVIDIA GB200 GPU shape, we had to treat its security as a first-class design constraint, not an overlay. In this blog, we’ll walk through how we took InfiniBand’s primitives, pressure‑tested them under cloud assumptions, and turned them into repeatable, fleet‑scale controls: partitioning the fabric to enforce tenant boundaries, adding identity- and topology‑based defenses to reduce spoofing risk, and hardening the management plane to keep the fabric both fast and defensible.

OCI GPU Clusters: the “Three Networks”

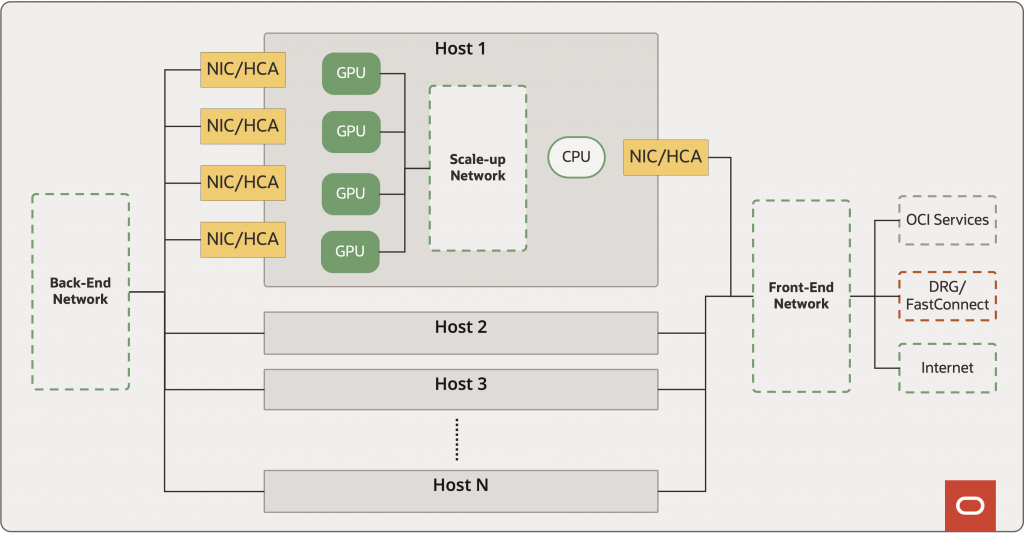

A useful way to reason about OCI’s GPU/HPC bare-metal systems is to separate three networks with different purposes and boundaries:

- Front-end (service) network: access to storage, other OCI services, and (when applicable) the Internet.

- Back-end (cluster) network: the high-performance interconnect between hosts—the fabric that is optimized to carry Remote Direct Memory Access (RDMA) traffic.

- Accelerator interconnect: an ultra-low-latency, high-bandwidth fabric with memory semantics (load/store) between accelerators, using technologies such as NVLink, UALink, or CXL (within a host or spanning a few hosts depending on the platform).

When we mention “SuperCluster” or “the network,” we are referring to the back-end cluster network that carries RDMA traffic. RDMA is a set of data transport semantics that lets one host move data directly into another host’s memory, including both CPU RAM (Random Access Memory) and GPU HBM (High Bandwidth Memory), with low CPU overhead and low latency. For GPU/HPC workloads, it’s a practical way to reduce host overhead, improve throughput, and keep tail latencies under control. RDMA data transfer is implemented in Host Channel Adapters (HCAs), also known as Network Interface Cards (NICs). RDMA therefore extends the security surface to these HCAs/NICs, and in a multi-tenant, bare-metal cloud, you should assume tenants can and will exercise those surfaces.

RoCE vs InfiniBand

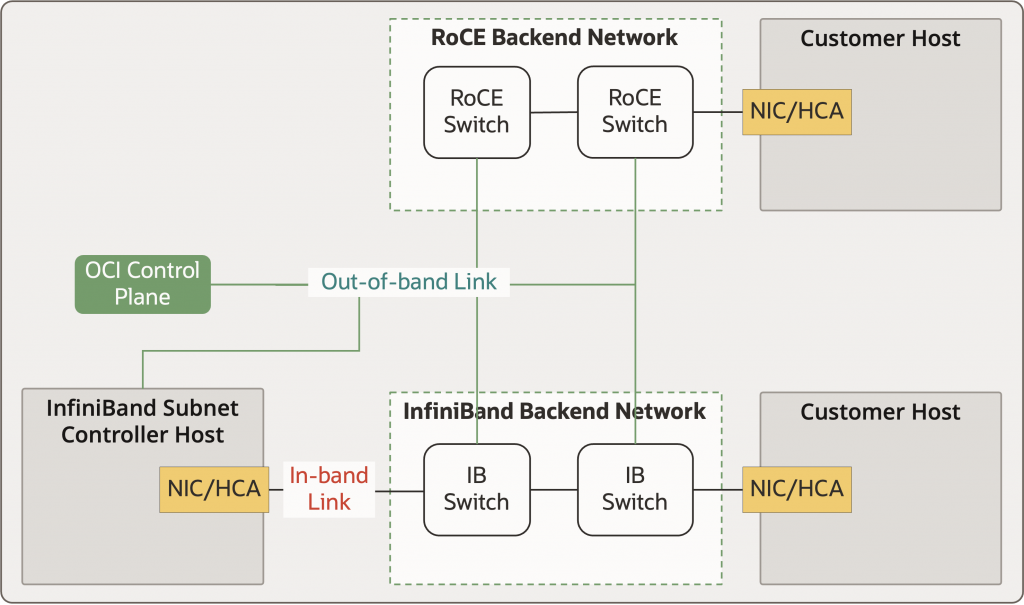

OCI’s first SuperClusters relied on RoCE (RDMA over Converged Ethernet). A big reason is pragmatic: Ethernet is deeply understood, operationally mature, and comes with a large body of security practice and tooling. RoCE keeps the RDMA programming model (verbs) while letting us stay in an Ethernet operating model. That matters at cloud scale, because the problem isn’t only high performance – considerations like tenant isolation and maintainability become critical.

On RoCE SuperClusters, the core isolation pattern is simple to state but strict in execution:

- A host has no access to the back-end fabric until it is admitted.

- Admission is authenticated using 802.1X, and the switch port is configured based on the cluster assignment made by the OCI control plane.

Two architectural details are important:

- The authentication/control infrastructure sits out-of-band from the back-end fabric.

- Once admitted, isolation is enforced in the network, not by trusting software running on the tenant host.
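The admission rule can be modeled as a small state machine. The sketch below is hypothetical and is not OCI's implementation. `BackendPort`, `admit`, and the two lookup tables are illustrative names. The 802.1X exchange is reduced to a single `eap_success` flag, and the port configuration is represented as a VLAN ID.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class BackendPort:
    """Hypothetical state of one switch port on the RoCE back-end fabric."""
    vlan: Optional[int] = None  # None = not admitted: the port carries no tenant traffic

    def forwards_traffic(self) -> bool:
        return self.vlan is not None


def admit(port: BackendPort, eap_success: bool, host_id: str,
          cluster_of_host: Dict[str, str], vlan_of_cluster: Dict[str, int]) -> bool:
    """Open the port only if 802.1X succeeded AND the control plane assigned the host.

    The port configuration comes from control-plane records, never from anything
    the host itself asserts.
    """
    cluster = cluster_of_host.get(host_id)
    if not eap_success or cluster is None:
        port.vlan = None  # stay (or fall back to) closed
        return False
    port.vlan = vlan_of_cluster[cluster]
    return True


if __name__ == "__main__":
    port = BackendPort()
    print(port.forwards_traffic())                            # False: closed by default
    print(admit(port, True, "h1", {"h1": "c1"}, {"c1": 100}))  # True
    print(port.vlan)                                          # 100
```

The design point is that the host only proves its identity. Which network segment it lands on is decided entirely by the out-of-band control plane.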

This model served us well as we scaled our RoCE SuperClusters to very large sizes.

InfiniBand: Novel Security Challenges

With our GB200 offering, OCI decided to add support for InfiniBand. InfiniBand is widely used in supercomputers because their workloads benefit from its performance characteristics (low latency of ~500 ns per switch) and its fabric behavior (hop-by-hop, credit-based flow control).

But InfiniBand is not just “faster Ethernet.” It was designed for high-performance environments that are often multi-user but not adversarial in nature, where the focus is performance and availability inside a single trusted administrative domain. That design center shows up immediately in the control plane: InfiniBand relies on a controller called the Subnet Manager (SM). The SM sits on the same fabric it manages, discovers topology, and programs a large portion of the configuration on switches and HCAs. InfiniBand does not use 802.1X-style port admission. In fact, per the InfiniBand specification, the SM must communicate with HCAs over the fabric as part of normal operation. Now add OCI’s key product constraint: bare-metal access. A tenant can run with root and aggressively exercise the HCA. That means customer hosts are not just traffic sources; they’re potential sources of fabric-management traffic, spoofing attempts, and denial-of-service pressure.

So, when OCI decided to introduce InfiniBand, we were working under a novel set of constraints that had not applied to our previously deployed clusters. Meeting them required a complete rethink of how we secure this infrastructure.

Ethernet security has an enormous body of documentation. InfiniBand – especially in the context of multi-tenant, bare-metal, cloud-scale – had far less detailed, operator-grade security material publicly available. Early on, we regularly found high-level overviews that didn’t answer the concrete questions you need to operate a defensible service. To build the necessary depth, we worked closely with our hardware vendor to understand undocumented nuances, joined the InfiniBand Trade Association (IBTA) to access specifications, and then iterated: specification study, lab validation, troubleshooting, and automation until the controls were something we could run at fleet scale.

The combination of a new threat profile and limited practical public guidance made this an exciting challenge. In a multi-tenant bare-metal supercluster, it was also a high-stakes one: getting it wrong means tenant boundaries can become porous, exposing OCI and our customers to security risks. Closing that gap required controls InfiniBand wasn’t built to provide out of the box, namely mechanisms that hold up under root-level tenants while keeping the fabric fast.

InfiniBand Partitions — Setting boundaries for Tenants

Isolation in InfiniBand hinges on partitions, which are roughly analogous to VLANs in RoCE networks. Every data packet that traverses the InfiniBand network carries a 16-bit identifier called the Partition Key (Pkey) in its header, which tells the network which partition that packet belongs to. Every InfiniBand network contains the default partition (identified by 0x7fff), and every HCA and switch in the network belongs to it. The SM (and any other IB service controllers) talks to all components in the fabric over the default partition. HCAs can also belong to additional partitions, over which they communicate with the other members.

You may have noticed that the default partition is 0x7fff and wondered what happened to the most significant bit, since the Pkey is a 16-bit identifier. The topmost bit encodes the type of membership in the partition, and the remaining 15 bits identify the partition itself. InfiniBand offers two types of membership in a partition: limited and full. The topmost bit is set to 1 for full members (so full membership in the default partition is 0xffff) and 0 for limited members (0x7fff). Limited members cannot communicate with other limited members. All other combinations (full <=> full and full <=> limited) are allowed. OCI operates its default partition in limited mode. Customer HCAs are limited members, so on the default partition they can communicate only with the SM and with nothing else.

| Source/Destination | Limited | Full |
|---|---|---|
| Limited | 🚫 | ✅ |
| Full | ✅ | ✅ |
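The membership rule in the table is compact enough to express directly. This is a minimal Python sketch of the rule, not OCI code. The tenant Pkey values (0x8001, 0x8002) are hypothetical, and in the real fabric this check is performed by HCA firmware and switch hardware.

```python
MEMBERSHIP_BIT = 0x8000  # top bit of a Pkey: 1 = full member, 0 = limited member
PARTITION_MASK = 0x7FFF  # low 15 bits identify the partition itself


def is_full_member(pkey: int) -> bool:
    return bool(pkey & MEMBERSHIP_BIT)


def can_communicate(a: int, b: int) -> bool:
    """Two ports may talk if they share a partition and at least one is a full member."""
    same_partition = (a & PARTITION_MASK) == (b & PARTITION_MASK)
    return same_partition and (is_full_member(a) or is_full_member(b))


DEFAULT_FULL = 0xFFFF     # full membership in the default partition (e.g. the SM)
DEFAULT_LIMITED = 0x7FFF  # limited membership in the default partition (customer HCAs)

if __name__ == "__main__":
    print(can_communicate(DEFAULT_LIMITED, DEFAULT_FULL))     # True: host <-> SM
    print(can_communicate(DEFAULT_LIMITED, DEFAULT_LIMITED))  # False: host <-> host
    print(can_communicate(0x8001, 0x8001))                    # True: same tenant partition
    print(can_communicate(0x8001, 0x8002))                    # False: different tenants
```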

You may wonder where these partitions are enforced. They are enforced at two locations, giving us defense in depth:

- Firmware on the HCA: The HCA firmware ensures that the customer host can access only the partitions configured by the subnet manager. Although customers have root access to the HCA, they cannot replace its firmware with custom firmware they control. OCI enables the “secure firmware” feature on the HCAs, which validates the signature of firmware binaries before installing them, so only signed binaries can be loaded.

- Switch connected to the HCA: Switches act as bouncers at a bar. They do not let in any packet whose Pkey deviates from the Pkey entries programmed for the HCA’s port. They perform these Pkey checks both when receiving a packet from a host and when forwarding a packet out of a port. They also send notifications (traps) to the subnet manager when packets are dropped due to mismatched Pkeys. Alerts on these notifications let OCI investigate and resolve any security issues.
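The switch-side “bouncer” can be sketched as a port that checks each packet’s Pkey on the way in and on the way out, and records a trap for every drop. This is a hypothetical model, and `SwitchPort` and its methods are illustrative names. It assumes the switch compares only the 15-bit partition number against the port’s table. How membership bits factor into switch-side checks depends on the hardware and is not modeled here.

```python
from dataclasses import dataclass, field

PARTITION_MASK = 0x7FFF  # low 15 bits of a Pkey identify the partition


@dataclass
class SwitchPort:
    """Hypothetical model of a switch port that faces a host HCA."""
    port_num: int
    allowed_pkeys: set  # Pkeys the SM programmed for the attached HCA
    traps: list = field(default_factory=list)  # (port, direction, pkey) reported to the SM

    def _allowed(self, pkey: int) -> bool:
        # Assumption: only the 15-bit partition number is compared here.
        return any((pkey & PARTITION_MASK) == (p & PARTITION_MASK) for p in self.allowed_pkeys)

    def _check(self, pkey: int, direction: str) -> bool:
        if self._allowed(pkey):
            return True
        self.traps.append((self.port_num, direction, pkey))  # drop and notify the SM
        return False

    def ingress(self, pkey: int) -> bool:
        """Packet arriving from the host."""
        return self._check(pkey, "ingress")

    def egress(self, pkey: int) -> bool:
        """Packet about to be sent out of this port toward the host."""
        return self._check(pkey, "egress")


if __name__ == "__main__":
    port = SwitchPort(port_num=7, allowed_pkeys={0x7FFF, 0x8001})
    print(port.ingress(0x8001))  # True: the tenant's own partition
    print(port.ingress(0x8002))  # False: host tries another tenant's partition
    print(port.egress(0x8003))   # False: foreign traffic never reaches the host
    print(port.traps)            # [(7, 'ingress', 32770), (7, 'egress', 32771)]
```

Because the check runs in both directions, a misbehaving host cannot inject traffic into another partition. Traffic from other partitions also never reaches it, even if something upstream were misrouted.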

Members of a partition are identified by their GUIDs, which are 64-bit hardware identifiers, and an HCA’s membership in a partition is mapped using its GUID. The OCI control plane allocates a unique Pkey when a compute cluster is created. When the control plane allocates a bare-metal instance to this compute cluster, it securely programs the GUIDs of that instance’s HCAs into the SM and associates them with the cluster’s Pkey. The SM therefore holds the configuration for all non-default partitions, each associated with the list of GUIDs that belong to it. Using this configuration, the SM programs the Pkey tables on the HCAs and their connected switches, allowing the magic of InfiniBand partitions to take effect. When the customer terminates an instance, the same workflow runs in reverse. The instance’s GUIDs are removed from the customer partition, and the terminated host can no longer talk to the rest of the cluster. The OCI control plane also periodically reconciles its own records against the SM’s configuration to ensure there are no deviations.
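The lifecycle above (allocate a Pkey per cluster, add GUIDs at launch, remove them at termination, reconcile against the SM) can be sketched as a small registry plus a diff. This is an illustrative model under stated assumptions, not an OCI or SM API. `PartitionRegistry`, `reconcile`, and the GUID strings are hypothetical. The sketch assumes partition numbers are handed out sequentially and stored in their full-membership form.

```python
DEFAULT_PARTITION = 0x7FFF
FULL_MEMBER = 0x8000  # tenant hosts are full members of their cluster's partition


class PartitionRegistry:
    """Hypothetical control-plane record of cluster partitions (not an OCI or SM API)."""

    def __init__(self) -> None:
        self._next = 0x0001
        self.cluster_pkey = {}  # cluster id -> Pkey (full-membership form)
        self.members = {}       # Pkey -> set of HCA GUIDs

    def create_cluster(self, cluster_id: str) -> int:
        if self._next >= DEFAULT_PARTITION:  # never hand out the default partition
            raise RuntimeError("partition numbers exhausted")
        pkey = FULL_MEMBER | self._next
        self._next += 1
        self.cluster_pkey[cluster_id] = pkey
        self.members[pkey] = set()
        return pkey

    def add_instance(self, cluster_id: str, hca_guids: set) -> None:
        self.members[self.cluster_pkey[cluster_id]] |= hca_guids

    def terminate_instance(self, cluster_id: str, hca_guids: set) -> None:
        self.members[self.cluster_pkey[cluster_id]] -= hca_guids


def reconcile(desired: dict, actual: dict) -> dict:
    """Per-Pkey GUIDs the SM must add or remove so that it matches the control plane."""
    drift = {}
    for pkey in desired.keys() | actual.keys():
        want, have = desired.get(pkey, set()), actual.get(pkey, set())
        if want != have:
            drift[pkey] = {"add": want - have, "remove": have - want}
    return drift


if __name__ == "__main__":
    reg = PartitionRegistry()
    pkey = reg.create_cluster("cluster-a")
    reg.add_instance("cluster-a", {"guid-1", "guid-2"})
    reg.terminate_instance("cluster-a", {"guid-2"})
    sm_view = {pkey: {"guid-1", "guid-2"}}  # the SM still lists the terminated host
    print(hex(pkey), reconcile(reg.members, sm_view))
```

Treating the control plane as the source of truth and the SM as the thing being corrected keeps the reconciliation one-directional. A stale GUID in the SM always shows up as something to remove.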

To summarize:

- Every instance is a full member of its customer partition and a limited member of the default partition.

- The Compute Cluster you launch in OCI lives in its own partition, so only your own hosts can talk to one another.

- Customers can’t tinker with partitions — the SM’s partition configuration lives in a secure box, updated only via OCI’s internal protected pipeline.

GUID Spoofing — Sneaking Past the Bouncer

Remember how we said that GUIDs are 64-bit hardware identifiers that determine whether an HCA is part of a partition? Unfortunately, as we dug deeper, we learned that, unlike MAC addresses, these GUIDs can be modified by a host with root access to the HCA, which is exactly the scenario that bare-metal access makes possible. Because GUIDs can be changed, crafty types might try to spoof a GUID and crash someone else’s party (or cluster, in this case). Even in the best case, the SM would detect the duplicate GUIDs and disable both HCAs, inconveniencing a customer who did nothing wrong. It’s like punishing the student whose report was copied by a sneaky classmate, simply because no one could tell whose work was the original.

So, how do we teach the SM which HCA is the original and which is spoofing its GUID? We use a feature of the SM called static topology specification (topospec for short). When topospec is enabled, the SM expects the operator to provide a complete topology of the InfiniBand fabric using the schema below. As the SM discovers the network, any links that do not match this intended topology are immediately disabled. If the GUID discovered at a specific switch port does not match the topology file, only that port is disabled, with no impact on the port that is expected to have that GUID.

Topology File Schema:

| Switch GUID | Port Number | Neighbor GUID | Neighbor Port Number | Neighbor Type | Link State |
|---|---|---|---|---|---|
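The check the SM performs with topospec can be sketched as a lookup keyed on (switch GUID, port). This is a simplified Python model, not the SM’s implementation. `TopoLink` and `ports_to_disable` are hypothetical names, and the neighbor-type labels are illustrative. The real topology file uses whatever format the SM expects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TopoLink:
    """One row of the intended topology (fields follow the schema above)."""
    switch_guid: int
    port: int
    neighbor_guid: int
    neighbor_port: int
    neighbor_type: str  # e.g. "CA" for an HCA, "SW" for a switch (labels are illustrative)
    link_state: str     # intended state, e.g. "Active"


def ports_to_disable(expected, discovered):
    """Return the (switch_guid, port) locations that deviate from the intended topology.

    `discovered` maps (switch_guid, port) -> neighbor GUID found during a fabric sweep.
    Only the offending port is returned. The port that legitimately owns a GUID is
    left alone even if a spoofer presents the same GUID elsewhere.
    """
    intended = {(link.switch_guid, link.port): link.neighbor_guid for link in expected}
    return sorted(loc for loc, guid in discovered.items() if intended.get(loc) != guid)


if __name__ == "__main__":
    spec = [TopoLink(0x1, 1, 0xA, 1, "CA", "Active"),
            TopoLink(0x1, 2, 0xB, 1, "CA", "Active")]
    seen = {(0x1, 1): 0xA,   # legitimate owner of GUID 0xA
            (0x1, 2): 0xA,   # host on port 2 is spoofing 0xA
            (0x1, 3): 0xC}   # device on a port the spec does not list
    print(ports_to_disable(spec, seen))  # [(1, 2), (1, 3)]
```

Keying on physical location rather than on the GUID itself is what breaks the symmetry. The SM no longer has to guess which of two identical GUIDs is genuine, because only one of them sits where the spec says it should.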

To be able to provide this detailed topology to the SM, we worked with our rack manufacturing teams to collect the GUIDs on all switches and hosts during rack integration testing in the factory. These GUIDs are then entered into OCI’s inventory management systems and made available to the OCI control plane in the region where the rack is installed. The OCI control plane uses this stored GUID information in the inventory systems and combines it with the known topology of the fabric to generate the topospec topology file. Every link in the network fabric is described and provided to the SM, locking down the complete physical layout of the InfiniBand fabric. The whole system is fully automated, all the way from provisioning to RMA. Take that, spoofers!
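Generating the file is essentially a join between the known cabling of the fabric and the GUIDs recorded at rack integration. The following is a minimal sketch under these assumptions: the cabling-plan tuple format is hypothetical, inventory is keyed by device name, and CSV stands in for whatever format the SM actually consumes.

```python
import csv
import io


def build_topology_file(cabling_plan, inventory, link_state="Active"):
    """Join the cabling plan with inventory GUIDs into topology rows (CSV text).

    cabling_plan: iterable of (switch_name, port, neighbor_name, neighbor_port, neighbor_type)
    inventory:    device name -> 64-bit GUID recorded during rack integration
    A device missing from inventory raises KeyError, so an unknown device can never
    be silently written into the intended topology.
    """
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["switch_guid", "port", "neighbor_guid",
                     "neighbor_port", "neighbor_type", "link_state"])
    for switch, port, neighbor, neighbor_port, neighbor_type in cabling_plan:
        writer.writerow([f"{inventory[switch]:#018x}", port,
                         f"{inventory[neighbor]:#018x}", neighbor_port,
                         neighbor_type, link_state])
    return out.getvalue()


if __name__ == "__main__":
    inventory = {"leaf-1": 0x1, "host-1": 0xA}
    plan = [("leaf-1", 1, "host-1", 1, "CA")]
    print(build_topology_file(plan, inventory), end="")
```

Failing closed on a missing inventory entry matters here. If a device was never recorded in the factory, the safe outcome is a generation error, not a topology file that quietly admits it.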

Taken together, OCI prevented GUID spoofing through:

- Every HCA and switch’s GUID is pre-recorded in our inventory system.

- The Subnet Manager expects a static topology of the network. If something crops up in the wrong spot, that link gets shut down faster than you can say “security breach.”

- The OCI control plane delivers a curated topology file of the InfiniBand network to the SM securely, with periodic automated updates and verification.

Rogue Subnet Managers? Not a Chance

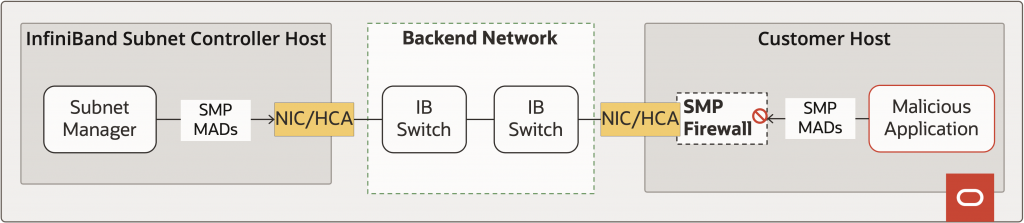

We’re paranoid, and for the cloud, that’s a good thing! The SM talks to switches and HCAs using Subnet Management Packets (SMPs), a class of Management Datagrams (MADs), often called SMP MADs. Since every customer bare-metal instance has access to the default partition, any host could theoretically generate SMP MADs to reprogram or control parts of the fabric. How do we protect against such attacks?

OCI protects its InfiniBand fabric using MAD keys (the management key, or M_Key, in the InfiniBand specification). MAD keys are 64-bit keys that the subnet manager programs on all devices. Once the SM has programmed a MAD key on a device, the device will not respond to any write request that does not contain that key. This effectively makes the key an authentication mechanism for the SM. Most industry implementations of InfiniBand use a single MAD key for the entire subnet. OCI goes further. We configured the SM to set a unique MAD key for each device in the subnet, meaning every switch and every HCA on the hosts, and to generate and rotate these keys daily. Any unauthorized access attempt is reported to the SM for investigation by our security teams. The InfiniBand standard also allows a graceful handover between subnet managers. We shut out imposters by operating the OCI SM at the highest priority and configuring it with an SM key that must match for a handover to happen.
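Per-device keys with rotation can be modeled in a few lines. This is a toy Python sketch under stated assumptions. `ManagedDevice` and `SubnetManager` are hypothetical classes, and a key of 0 stands for “no key programmed.” Real M_Key behavior, including protection levels, lease periods, and how read (Get) requests are treated, is richer than this model.

```python
import secrets


class ManagedDevice:
    """Hypothetical switch or HCA that rejects management writes without its key."""

    def __init__(self, guid: int) -> None:
        self.guid = guid
        self._key = 0          # 0 = no key programmed yet
        self.config = {}
        self.violations = 0    # a real device would send a trap to the SM instead

    def _authorized(self, key: int) -> bool:
        if self._key and key != self._key:
            self.violations += 1
            return False
        return True

    def set_attr(self, key: int, name: str, value) -> bool:
        if not self._authorized(key):
            return False
        self.config[name] = value
        return True

    def set_key(self, current: int, new: int) -> bool:
        # Changing the key is itself a write, so the current key must be presented.
        if not self._authorized(current):
            return False
        self._key = new
        return True


class SubnetManager:
    def __init__(self) -> None:
        self.keys = {}  # device GUID -> current key; every device gets its own

    def rotate(self, devices) -> None:
        """Assign each device a fresh, independent 64-bit key (e.g. run daily)."""
        for dev in devices:
            new_key = secrets.randbits(64) or 1  # avoid 0, which means "no key" here
            if dev.set_key(self.keys.get(dev.guid, 0), new_key):
                self.keys[dev.guid] = new_key


if __name__ == "__main__":
    sw, hca = ManagedDevice(0x1), ManagedDevice(0xA)
    sm = SubnetManager()
    sm.rotate([sw, hca])
    print(hca.set_attr(0, "lid", 7))               # False: rogue write without the key
    print(hca.set_attr(sm.keys[0xA], "lid", 7))    # True: the SM's write succeeds
```

Unique per-device keys limit the blast radius. A key recovered from one device does not unlock any other, and daily rotation bounds how long a leaked key stays useful.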

MAD keys and SM keys sound like a good level of protection from rogue subnet managers. But what happens if the SM were temporarily absent? How do we protect the fabric in such cases? As you may have realized by now, at OCI, we believe in defense in depth, and we like to make sure our customers are protected from malicious behavior in all scenarios. To handle these cases, we enabled a feature on the customer HCAs called the SMP firewall. When the SMP firewall is enabled, the firmware on the HCA blocks the generation of SMP MADs from the customer’s host kernel by placing the Subnet Manager Interface (SMI) on the HCA under the exclusive control of the firmware. This blocks any application on the customer hosts from acting as an SM by sending SMP MADs to update/derive information about the SM/HCAs/switches. Can a malicious actor with root access to the bare metal just shut this feature off? No! The SMP firewall configuration is set and locked down during host provisioning using a secure private key. Once the instance is handed to the customer, the SMP firewall is set and cannot be modified.
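The effect of the SMP firewall can be sketched as a filter on the management class of host-originated MADs plus a one-way lock. These are the assumptions. `HcaSmpFirewall` and its methods are hypothetical. The boolean `signed_by_provisioning` stands in for the private-key-protected provisioning step described above. The class codes are the standard InfiniBand values for LID-routed and directed-route subnet management and for Subnet Administration.

```python
SUBN_LID_ROUTED = 0x01      # Subnet Management class, LID-routed SMPs
SUBN_DIRECTED_ROUTE = 0x81  # Subnet Management class, directed-route SMPs
SUBN_ADM = 0x03             # Subnet Administration class (a GMP, not an SMP)
SMP_CLASSES = {SUBN_LID_ROUTED, SUBN_DIRECTED_ROUTE}


class HcaSmpFirewall:
    """Hypothetical model: firmware owns the SMI, so the host cannot emit SMPs."""

    def __init__(self) -> None:
        self.enabled = False
        self.locked = False

    def configure(self, enabled: bool, signed_by_provisioning: bool) -> bool:
        # Only the provisioning flow may change the setting, and only before lock().
        if self.locked or not signed_by_provisioning:
            return False
        self.enabled = enabled
        return True

    def lock(self) -> None:
        """Called at the end of provisioning, before the host is handed to the tenant."""
        self.locked = True

    def permits_from_host(self, mgmt_class: int) -> bool:
        return not (self.enabled and mgmt_class in SMP_CLASSES)


if __name__ == "__main__":
    fw = HcaSmpFirewall()
    fw.configure(True, signed_by_provisioning=True)
    fw.lock()
    print(fw.permits_from_host(SUBN_DIRECTED_ROUTE))          # False: SMPs are blocked
    print(fw.permits_from_host(SUBN_ADM))                     # True: SA queries still flow
    print(fw.configure(False, signed_by_provisioning=True))   # False: locked
```

Note that SA traffic still passes the firewall. That is why the SM needs the separate protections described in the next section.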

In summary, we prevent imposter SMs as follows:

- Access to IB components is “authenticated” using MAD Keys. These MAD keys are generated and rotated per device on the fabric on a daily cadence.

- All customer hosts have SMP firewall configured to block any rogue SMs from accessing the fabric.

Fort Knox For the Subnet Manager

The subnet manager is exposed to MAD packets sent from customer hosts. As discussed before, the SMP firewall blocks subnet management MADs originating from customer hosts. But other MAD packets (called GMPs, or General Services Management Packets) that handle specific functions like Subnet Administration can still originate from customer hosts. An obvious attack vector is a Denial of Service (DoS) attack on the SM using these MADs. To prevent this, these MADs are throttled by MAD limiters configured on the SM’s HCA, which limit the global MAD rate as well as the per-node rate and burst size.
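A two-level limiter of the kind described can be sketched with token buckets. There is one shared global bucket plus one bucket per source node, each with its own rate and burst size. Token buckets are our illustrative choice, not a statement about how the MAD limiter on the SM’s HCA is implemented. `MadLimiter`, its parameters, and the injectable clock are hypothetical.

```python
import time


class TokenBucket:
    """Allows `burst` back-to-back events, refilled at `rate` tokens per second."""

    def __init__(self, rate: float, burst: float, now: float) -> None:
        self.rate = rate
        self.burst = burst
        self.tokens = burst
        self.last = now

    def allow(self, now: float) -> bool:
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class MadLimiter:
    """Hypothetical two-level limiter: a per-node bucket and one global bucket."""

    def __init__(self, global_rate, global_burst, node_rate, node_burst, clock=time.monotonic):
        self.clock = clock
        self.global_bucket = TokenBucket(global_rate, global_burst, clock())
        self.node_rate, self.node_burst = node_rate, node_burst
        self.nodes = {}  # source node (e.g. GUID or LID) -> TokenBucket

    def admit(self, node) -> bool:
        now = self.clock()
        bucket = self.nodes.get(node)
        if bucket is None:
            bucket = self.nodes[node] = TokenBucket(self.node_rate, self.node_burst, now)
        # Per-node check first, so a single noisy host is throttled before it can
        # drain the shared global budget. (A MAD refused by the global bucket still
        # costs its sender a per-node token; acceptable for a sketch.)
        return bucket.allow(now) and self.global_bucket.allow(now)
```

A usage example with a fake clock: with `node_rate=1, node_burst=2`, a third back-to-back MAD from the same node is refused while another node is still served, and one second later the first node is allowed again.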

The subnet manager offers a function called Subnet Administration (SA) that returns non-algorithmic information about the subnet (such as multicast, path record, and service information) in response to SA Management Datagrams (MADs). OCI requires sensitive SA requests, such as GUID requests, to contain the SA Key. As with the MAD keys, SA keys are configured on the SM and rotated periodically. Any request received with the wrong SA Key triggers an alert for security review.
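The SA-side policy, a strict allowlist plus a key requirement for sensitive queries, can be sketched as follows. Treat it as a hypothetical illustration. The record names are standard SA attribute names, but which ones OCI allows or gates behind the SA Key is assumed here, not taken from the text. `handle_sa_query` is an illustrative function, and keys are assumed to be 64-bit integers. The constant-time comparison via `hmac.compare_digest` is a defensive design choice.

```python
import hmac

ALLOWED_QUERIES = {"PathRecord", "MCMemberRecord", "ServiceRecord", "GUIDInfoRecord"}
KEY_REQUIRED = {"GUIDInfoRecord"}  # sensitive queries, e.g. GUID lookups


def handle_sa_query(attribute: str, presented_key: int, sa_key: int, alerts: list) -> bool:
    """Return True if the SA should answer; record an alert on a bad key."""
    if attribute not in ALLOWED_QUERIES:
        return False  # outside the strict allowlist
    if attribute in KEY_REQUIRED:
        ok = hmac.compare_digest(presented_key.to_bytes(8, "big"), sa_key.to_bytes(8, "big"))
        if not ok:
            alerts.append(("bad SA key", attribute))  # surfaced for security review
            return False
    return True


if __name__ == "__main__":
    alerts = []
    print(handle_sa_query("PathRecord", 0, 0x1234, alerts))      # True: no key needed
    print(handle_sa_query("GUIDInfoRecord", 0, 0x1234, alerts))  # False: wrong key
    print(alerts)                                                # [('bad SA key', 'GUIDInfoRecord')]
```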

Briefly, the protections in place for the SM include:

- All MAD requests are rate-limited on multiple levels to prevent DoS attacks.

- Only a strict set of legitimate SA requests are allowed to avoid information leak/abuse.

Conclusion

InfiniBand delivers the performance profile that modern AI training and tightly coupled HPC workloads demand—but in a multi-tenant, bare-metal cloud, performance is only half the job. The fabric must remain stable and isolated even when you assume tenants have root, can exercise the HCA aggressively, and will eventually hit every edge case the spec allows.

What made securing InfiniBand in OCI SuperClusters challenging is that the usual “trusted cluster” assumptions do not apply. We had to take InfiniBand’s native mechanisms and make them enforceable at cloud scale: partitions as hard tenant boundaries and a constrained default partition, defense-in-depth enforcement in both switch silicon and HCA firmware, topology-aware controls that negate GUID spoofing as an attacker strategy, and a management plane that’s protected against both impersonation and pressure (per-device keys, rotation, firewalls, and rate limiting).

The broader lesson is that building cloud supercomputers is not about importing on-prem designs—it’s about re-deriving them under a stricter threat model, then automating the result so it stays correct as fleets grow, hosts churn, and hardware gets replaced. That’s the approach OCI took here: treat the fabric as part of the security boundary, assume adversarial behavior, and engineer controls that preserve the low-latency “magic” without relaxing our strict multi-tenant guarantees.