Building a Modern Video Delivery Architecture with a New Protocol

Digital video delivery has relied on HTTP-based protocols since streaming began gaining momentum nearly two decades ago, and HTTP delivery remains highly effective for VOD and buffered playback. However, large-scale live events introduced thundering-herd patterns that HTTP delivery was not originally designed to handle efficiently. As real-time experiences have become central to internet media strategy, our conversations at OCI with content providers and ISVs consistently highlight the same core issue: premier live content is difficult to deliver predictably at peak concurrency. This led us to explore Media over QUIC (MoQ) for high-volume live workloads. While MoQ proved promising, it soon became clear that transport alone would not solve live delivery at scale. What we learned is that a complete solution requires a vertically integrated architecture, with MoQ operating as an enabling mechanism.

This post kicks off a short series documenting our journey developing a multi-tenant video delivery service as we progress from beta toward general availability. It is a preview of our current direction, and specific implementation details will continue to take shape as the platform matures. Along the way, we’ll share what we set out to build, what worked, what didn’t, and the practical lessons uncovered while operating inside real media workflows rather than lab environments. The goal is not to present MoQ as a silver bullet, but to offer a transparent look at how a new transport behaves when paired with production infrastructure, partner integrations, and capacity constraints.

What We Are Building

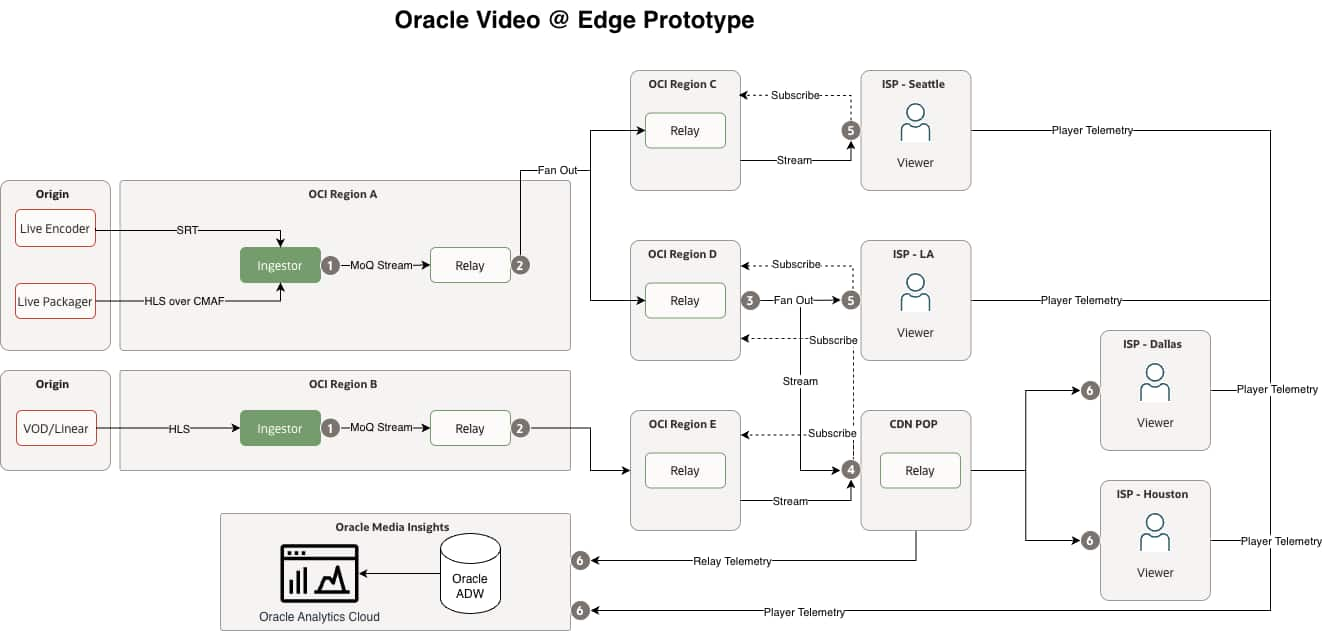

Oracle Video @ Edge (OVE) is a media delivery service designed for large-scale live and premium streaming workloads. It is a purpose-built layer that interoperates with CDNs, ISPs, and existing media stacks to complement, rather than replace, the current delivery ecosystem. The architecture unifies ingestion, transport, playback, and telemetry into a single operational fabric that can absorb burst demand while maintaining predictable performance and deep visibility (see Diagram 1). At a high level, the platform includes:

- Multi-protocol ingest that normalizes delivery feeds into MoQ object streams

- Cloud-native relay fleet for regional fanout and autoscaling

- Adaptive playback logic with bitrate-aware track switching under last-mile variability

- Flexible delivery paths with direct relay access and CDN federation models

- Player interoperability across commercial and open implementations

- Integrated telemetry for routing and performance optimization

From Protocol Exploration to Platform Capability

Development began with an open-source MoQ relay aligned with the evolving draft semantics, which we used to validate object delivery and subscription behavior in realistic settings. Advancing toward a production-grade cloud primitive required hardening connection lifecycle management, integrating regional failover, enabling autoscaling across OCI’s global footprint, and embedding telemetry from the outset. With proximity-aware relay placement and networking optimizations for high-throughput UDP workloads, MoQ evolved from a protocol experiment into an infrastructure-aligned transport layer focused on sustained concurrency and consistent performance at scale.
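A common way for a relay fleet to absorb a thundering herd is subscription coalescing: if each relay holds a single upstream subscription per track and replicates objects to its local viewers, origin load grows with the number of relays rather than the number of viewers. The sketch below illustrates that idea in memory only. It assumes a simplified model in which objects are addressed by track name, group ID, and object ID; the `Origin` and `Relay` classes and their methods are hypothetical, not OVE's implementation or the API of any MoQ library, and QUIC sessions, priorities, caching, and failover are omitted.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Hypothetical delivery callback: (group_id, object_id, payload) -> None
Deliver = Callable[[int, int, bytes], None]


class Origin:
    """Stand-in upstream publisher that counts the subscriptions it must serve."""

    def __init__(self) -> None:
        self.upstream_subscriptions = 0
        self._listeners: Dict[str, List[Deliver]] = defaultdict(list)

    def subscribe(self, track: str, deliver: Deliver) -> None:
        self.upstream_subscriptions += 1
        self._listeners[track].append(deliver)

    def publish(self, track: str, group_id: int, object_id: int, payload: bytes) -> None:
        for deliver in self._listeners[track]:
            deliver(group_id, object_id, payload)


class Relay:
    """Coalesces local viewers into one upstream subscription per track."""

    def __init__(self, upstream: Origin) -> None:
        self._upstream = upstream
        self._viewers: Dict[str, List[Deliver]] = defaultdict(list)

    def subscribe(self, track: str, deliver: Deliver) -> None:
        is_first_viewer = track not in self._viewers
        self._viewers[track].append(deliver)
        if is_first_viewer:
            # Only the first local viewer of a track opens an upstream subscription.
            self._upstream.subscribe(
                track, lambda g, o, p, t=track: self._fan_out(t, g, o, p)
            )

    def _fan_out(self, track: str, group_id: int, object_id: int, payload: bytes) -> None:
        # Replicate each upstream object to every local viewer of the track.
        for deliver in self._viewers[track]:
            deliver(group_id, object_id, payload)


# Usage: 3,000 viewers spread over three relays, all watching one track.
origin = Origin()
relays = [Relay(origin) for _ in range(3)]
received: List[tuple] = []
for viewer in range(3000):
    relays[viewer % 3].subscribe(
        "live/event/video-1080p", lambda g, o, p: received.append((g, o))
    )
origin.publish("live/event/video-1080p", 0, 0, b"keyframe")
print(origin.upstream_subscriptions, len(received))  # 3 3000
```

The origin serves three subscriptions while every one of the 3,000 viewers still receives the object; adding a mid-tier of relays would apply the same principle recursively.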

Designing for Live Playback in Constrained Conditions

Playback testing quickly showed that latency improvements do not automatically translate into a better viewer experience. Open player integrations exposed dropped frames during burst delivery, sensitivity to QUIC pacing, and buffering models unsuited to rapid object streams. We therefore iterated on relay pacing, buffering behavior, and client logic as a single system, implementing adaptive bitrate switching and track selection based on last-mile conditions. The core lesson was clear: emerging transports stabilize only when the full delivery chain is tuned together.
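Bitrate-aware track switching can be illustrated with a common throughput-based heuristic: smooth throughput samples with an exponentially weighted moving average (EWMA), then pick the highest-bitrate track that fits within a safety margin, becoming more conservative when the buffer runs low. This is a minimal sketch under those assumptions; the `Track`, `ThroughputEstimator`, and `select_track` names, the bitrate ladder, the 0.8 safety factor, and the 1-second low-buffer threshold are illustrative, not the logic or tuning used in OVE.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Track:
    name: str
    bitrate_kbps: int


class ThroughputEstimator:
    """EWMA of measured delivery throughput, in kbps."""

    def __init__(self, alpha: float = 0.3) -> None:
        self.alpha = alpha  # weight given to the newest sample
        self.estimate_kbps: Optional[float] = None

    def add_sample(self, bytes_received: int, seconds: float) -> float:
        sample_kbps = bytes_received * 8 / 1000 / seconds
        if self.estimate_kbps is None:
            self.estimate_kbps = sample_kbps
        else:
            self.estimate_kbps = (
                self.alpha * sample_kbps + (1 - self.alpha) * self.estimate_kbps
            )
        return self.estimate_kbps


def select_track(
    tracks: Sequence[Track],
    estimate_kbps: float,
    buffer_s: float,
    safety: float = 0.8,
    low_buffer_s: float = 1.0,
) -> Track:
    """Return the highest-bitrate track that fits the throughput budget."""
    ladder = sorted(tracks, key=lambda t: t.bitrate_kbps)
    budget = estimate_kbps * safety
    if buffer_s < low_buffer_s:
        # Buffer nearly drained: halve the budget to reduce the risk of a stall.
        budget *= 0.5
    chosen = ladder[0]  # fall back to the lowest rung if nothing fits
    for track in ladder:
        if track.bitrate_kbps <= budget:
            chosen = track
    return chosen


# Usage with an illustrative three-rung ladder.
renditions = [Track("video-360p", 800), Track("video-720p", 3000), Track("video-1080p", 6000)]
estimator = ThroughputEstimator()
fast = estimator.add_sample(1_000_000, 1.0)  # 8000 kbps sample
print(select_track(renditions, fast, buffer_s=4.0).name)  # video-1080p
slower = estimator.add_sample(250_000, 1.0)  # 2000 kbps sample pulls the EWMA down
print(select_track(renditions, slower, buffer_s=4.0).name)  # video-720p
print(select_track(renditions, slower, buffer_s=0.5).name)  # video-360p
```

Real players often add hysteresis so that a single noisy sample does not trigger rapid up/down switching, and weigh the cost of a switch against the current buffer level.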

New Protocol Adoption Without Disruption

A new delivery architecture must coexist with established media pipelines. Because most platforms output HLS over CMAF, SRT, or similar formats, forcing a replacement would increase operational risk. Instead, OVE ingestion accepts these traditional feeds and converts them into deterministic object streams suitable for MoQ distribution while preserving timestamps, GOP boundaries, and playback integrity. In the other direction, we implemented edge conversion of MoQ streams back into CMAF-compliant segments with dynamically generated HLS manifests at egress points. This allows OVE to sit between existing origin and playback systems without an architectural overhaul as MoQ adoption grows.
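The ingest and egress conversions can be sketched together. Assuming, as is common in MoQ designs, that each MoQ group carries one GOP, ingest opens a new group at every keyframe and keeps the original timestamps; egress then treats each completed group as one CMAF segment and derives the HLS `#EXTINF` durations from those timestamps. This is a simplified illustration: the `Frame` and `Group` types and the `init.mp4` and `segment-N.m4s` URIs are hypothetical, and no actual CMAF muxing is performed.

```python
import math
from dataclasses import dataclass, field
from typing import List


@dataclass
class Frame:
    pts: float      # presentation timestamp in seconds, preserved from ingest
    keyframe: bool


@dataclass
class Group:
    group_id: int
    frames: List[Frame] = field(default_factory=list)


def group_by_gop(frames: List[Frame]) -> List[Group]:
    """Ingest side: open a new group at every keyframe so each group decodes on its own."""
    groups: List[Group] = []
    for frame in frames:
        if frame.keyframe:
            groups.append(Group(group_id=len(groups)))
        elif not groups:
            continue  # drop leading frames that have no keyframe to decode from
        groups[-1].frames.append(frame)
    return groups


def hls_media_playlist(groups: List[Group]) -> str:
    """Egress side: list each completed group as one CMAF segment."""
    # A group is complete once the next one starts; its duration is the gap
    # between the first timestamps of the two groups.
    durations = [nxt.frames[0].pts - cur.frames[0].pts for cur, nxt in zip(groups, groups[1:])]
    target = math.ceil(max(durations)) if durations else 1
    first_seq = groups[0].group_id if groups else 0
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:7",
        f"#EXT-X-TARGETDURATION:{target}",
        f"#EXT-X-MEDIA-SEQUENCE:{first_seq}",
        '#EXT-X-MAP:URI="init.mp4"',
    ]
    for group, duration in zip(groups, durations):
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(f"segment-{group.group_id}.m4s")
    return "\n".join(lines) + "\n"


# Usage: 6 seconds of 30 fps video with a keyframe every 2 seconds.
frames = [Frame(pts=i / 30, keyframe=(i % 60 == 0)) for i in range(180)]
groups = group_by_gop(frames)
playlist = hls_media_playlist(groups)
print(len(groups))  # 3
print(playlist)
```

The final group is left out of the playlist because its duration is not known until the next keyframe arrives; a live packager would append it on the next manifest refresh. `#EXT-X-TARGETDURATION` uses the ceiling of the longest segment, which keeps every rounded `#EXTINF` value at or below the target duration, as HLS requires.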

Observability as a Core Differentiator

Because MoQ introduces new delivery dynamics, deep visibility is essential rather than optional, so we embedded observability as a core architectural layer. A high-throughput telemetry pipeline correlates infrastructure behavior with user experience in near real time, informed by player-side signals such as startup time, rebuffering, and effective bitrate. The approach is designed to complement existing analytics ecosystems rather than replace them, aligning with emerging and established standards such as Common Media Client Data (CMCD) and Common Media Server Data (CMSD) to ensure interoperability with third-party QoE platforms. Telemetry and analytics center on:

- Real-time player event ingestion at large scale

- QoE metrics including time to first frame (TTFF), rebuffer rate, and effective bitrate

- Relay and network performance correlation

- Data-driven routing and congestion mitigation
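To make the QoE metrics concrete, the sketch below derives TTFF, a rebuffer ratio, and a time-weighted effective bitrate from a stream of player events. The event kinds and the `PlayerEvent` type are hypothetical (they are not CMCD keys), and the metric definitions are one common convention rather than OVE's: rebuffer ratio as stalled time divided by post-startup session time, and effective bitrate as the rendition bitrate averaged over time actually spent playing. Analytics platforms differ in these definitions.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class PlayerEvent:
    kind: str           # "play", "first_frame", "bitrate", "stall_start", "stall_end", "end"
    t_ms: int           # client timestamp in milliseconds
    value: float = 0.0  # rendition bitrate in kbps, used only by "bitrate" events


def qoe_summary(events: List[PlayerEvent]) -> Dict[str, float]:
    """Compute TTFF, rebuffer ratio, and time-weighted effective bitrate."""
    play_t: Optional[int] = None
    first_frame_t: Optional[int] = None
    bitrate_kbps = 0.0
    state = "startup"   # startup -> playing <-> stalled -> ended
    last_t = 0
    played_ms = stalled_ms = 0
    kbps_ms = 0.0       # integral of bitrate over playing time

    for e in sorted(events, key=lambda ev: ev.t_ms):
        elapsed = e.t_ms - last_t
        # Attribute the interval since the previous event to the current state.
        if state == "playing":
            played_ms += elapsed
            kbps_ms += bitrate_kbps * elapsed
        elif state == "stalled":
            stalled_ms += elapsed
        last_t = e.t_ms

        if e.kind == "play":
            play_t = e.t_ms
        elif e.kind == "first_frame":
            first_frame_t = e.t_ms
            state = "playing"
        elif e.kind == "bitrate":
            bitrate_kbps = e.value
        elif e.kind == "stall_start" and state == "playing":
            state = "stalled"
        elif e.kind == "stall_end" and state == "stalled":
            state = "playing"
        elif e.kind == "end":
            state = "ended"

    session_ms = played_ms + stalled_ms
    ttff = first_frame_t - play_t if play_t is not None and first_frame_t is not None else float("nan")
    return {
        "ttff_ms": ttff,
        "rebuffer_ratio": stalled_ms / session_ms if session_ms else 0.0,
        "effective_bitrate_kbps": kbps_ms / played_ms if played_ms else 0.0,
    }


# Usage: one session with an upswitch and a single 2-second stall.
events = [
    PlayerEvent("play", 0),
    PlayerEvent("bitrate", 0, 3000),
    PlayerEvent("first_frame", 800),
    PlayerEvent("bitrate", 2800, 6000),
    PlayerEvent("stall_start", 6800),
    PlayerEvent("stall_end", 8800),
    PlayerEvent("end", 10800),
]
summary = qoe_summary(events)
print(summary)  # {'ttff_ms': 800, 'rebuffer_ratio': 0.2, 'effective_bitrate_kbps': 5250.0}
```

Here the 800 ms startup counts only toward TTFF, the 2-second stall yields a rebuffer ratio of 0.2 over a 10-second post-startup session, and the 5,250 kbps effective bitrate reflects 2 seconds at 3,000 kbps and 6 seconds at 6,000 kbps.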

Ecosystem Integration and Interoperability

Protocol adoption is ultimately an ecosystem effort, so we prioritized interoperability and standards alignment from the start. Federation testing validated cross-infrastructure relay behavior, while collaboration with player vendors, CDNs, and open implementations accelerated device and regional coverage. Because OCI already operates within a broad media ISV ecosystem spanning encoding, packaging, analytics, and delivery, MoQ is positioned as a supplementary transport layer that integrates into existing workflows rather than displacing established partners. The intent is to supercharge large-scale live delivery while preserving compatibility with current origins, CDNs, and analytics providers. Interoperability focus areas include:

- Distribution with external relay and CDN infrastructures

- Playback validation across a broad range of clients and devices

- Compatibility with origin and analytics stacks

- Incremental partner onboarding paths

What OVE Signals for the Industry

This work reflects an evolution toward purpose-built architectures for large-scale live streaming within the existing delivery ecosystem. The emphasis is moving beyond raw latency to predictability, visibility, and operational cohesion, where specialized live delivery can work alongside CDNs and analytics platforms to reduce cost, complexity, and fragility during peak events. Key implications include:

- Tightly coupled, purpose-built media architectures

- Telemetry-driven routing and optimization

- Incremental modernization without losing device reach

- Burst-resilient infrastructure designed for peak event concurrency

What Comes Next

As we move from beta toward general availability, our focus shifts to scale hardening, ecosystem reach, and operational determinism under real-world conditions. This includes expanding interoperability, refining multi-tenant capacity modeling, and continuing to iterate on playback, relay behavior, and telemetry pipelines as a unified system. Near-term priorities:

- ISV integrations for streamlined ingest into MoQ namespaces

- CDN and ISP interconnect with expanded relay reach and resilience

- Player ecosystem growth and broader commercial support

- Capacity modeling with multi-tenant isolation and predictable scaling

- Monetization with dynamic ad delivery

- Content protection with digital rights management