Introduction: The Shift to Governed Execution

Enterprise AI governance is undergoing a fundamental transition. As AI systems evolve from passive text generators into stateful, tool-using agents that can mutate records, access enterprise systems, and operate across multi-step workflows, the core governance question changes. It is no longer mainly “Was the model response safe?” but “Is the next specific action authorized under the current policy, identity, approval state, data boundaries, and budget constraints?” Model-response safety, alignment, and answer quality still matter, but for agentic systems they are no longer sufficient on their own. Governance must also operate through a broader runtime control architecture. That shift sits at the center of the OCI AI Governance Framework and marks a change in the enterprise trust boundary itself.

This is not a minor extension of Responsible AI practice. It is a change in the unit of control. Enterprise GenAI systems are no longer limited to generating text; they can call APIs, read and update records, persist memory, delegate work, connect to external tools through protocols such as the Model Context Protocol (MCP), and continue across multiple turns or background workflows. In that environment, governance cannot remain a combination of static review, one-time evaluation, and output filtering. It must become a runtime control system that decides whether a specific action may execute now and emits evidence showing what happened, under which controls, and why.

The OCI framework addresses that shift with a governed execution model for enterprise LLMs, tools, and agents. It is intended for systems that operate beyond single-turn response generation, including tool-using agents, RAG applications, multi-turn workflows, MCP-connected systems, and deployments that require governance across build, admission, runtime, evidence, and day-2 operations. Its core artifacts make intended use, least privilege, delegated authority, memory posture, evaluation gates, and audit evidence enforceable at runtime. The design goal is explicit: approvals must bind to execution, runtime must be registry-gated, and every material decision must be replayable.

1. Why Agentic AI Changes the Unit of Governance

The governance assumptions that supported early generative AI deployments are no longer sufficient. In a static prompt-response system, the unit of control is the model response, so governance focuses on model selection, dataset review, evaluations, and content safety. Even with retrieval-augmented generation, risk is still treated mainly as a property of the generated answer.

Agentic systems change that picture. They do not just generate text; they retain session state, use long-term memory, call APIs, update records, send communications, and delegate work across multiple autonomous steps. Once a system can act on enterprise state, governance can no longer focus only on the final answer. It must account for how the system moves from intent to action.

The relevant unit of governance is therefore the governed action trajectory. That trajectory captures the full execution path: proposed actions, identity and delegated authority, resource consumption such as budgets, state transitions, and side effects on enterprise data or records. Failures often emerge within that path rather than in a single response. That is why the OCI framework makes structured action representation an invariant: any output capable of causing a side effect must be represented as a schema-valid object so runtime enforcement can evaluate it before execution.
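To make the structured-action invariant concrete, the following is a minimal sketch of validating a raw agent output before it reaches runtime enforcement. The names `TOOL_SCHEMAS`, `ProposedAction`, and `validate_action`, along with the two example tools, are hypothetical and are not taken from the OCI framework; a real deployment would more likely use a full schema language.

```python
from dataclasses import dataclass
from typing import Any

# Hypothetical schema registry: tool name -> required argument names and types.
TOOL_SCHEMAS: dict[str, dict[str, type]] = {
    "update_record": {"record_id": str, "fields": dict},
    "send_email": {"to": str, "body": str},
}


@dataclass(frozen=True)
class ProposedAction:
    """A side-effecting action in a form that enforcement can evaluate."""
    tool: str
    args: dict[str, Any]
    acting_principal: str


def validate_action(raw: dict[str, Any]) -> ProposedAction:
    """Return a ProposedAction, or raise ValueError if raw is not schema-valid."""
    tool = raw.get("tool")
    if not isinstance(tool, str) or tool not in TOOL_SCHEMAS:
        raise ValueError(f"unknown tool: {tool!r}")
    args = raw.get("args")
    if not isinstance(args, dict):
        raise ValueError("args must be an object")
    schema = TOOL_SCHEMAS[tool]
    if set(args) != set(schema):
        raise ValueError(f"args for {tool} must be exactly {sorted(schema)}")
    for name, expected_type in schema.items():
        if not isinstance(args[name], expected_type):
            raise ValueError(f"{name} must be of type {expected_type.__name__}")
    principal = raw.get("acting_principal")
    if not isinstance(principal, str) or not principal:
        raise ValueError("acting_principal is required")
    return ProposedAction(tool=tool, args=args, acting_principal=principal)
```

Anything that fails validation never becomes a `ProposedAction`, so in this sketch free-form model output cannot reach a tool without first passing through a form the policy layer can inspect.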

This is also why traditional AI governance is no longer sufficient on its own. Model-centric governance programs are good at defining intended use, running evaluations, and establishing guardrails, but they cannot answer the critical runtime question: may this system take the next specific action now? In practice, that requires real-time verification of tool permissions, destination approvals, delegated authority, approval validity, budget limits, and whether retrieved or tool-returned content is being trusted when policy says it must not be.

At that point, the deeper issue becomes controllability. Enterprises may agree on the need for human control, but without a runtime mechanism that mediates execution, they still lack a reliable way to ensure those constraints hold across multi-step behavior. That is the backdrop for the OCI threat model, which treats unauthorized tool use, unsafe data egress, denial-of-wallet loops, excessive autonomy, supply-chain compromise, state and memory poisoning, cross-session leakage, confused deputy behavior, and overbroad delegated authorization as primary risks rather than edge cases.

As governance shifts from response quality to action control, two previously implicit surfaces become critical: authority and state. The framework binds initiating, acting, and downstream principals to a specific approval context so high-impact actions cannot execute without clear authority. It also defines memory and state classes, retention and deletion rules, and isolation boundaries, because session state, long-term memory, and operational state can outlive the original request and shape later behavior. This leads to a necessary lifecycle distinction: promotion-time governance decides whether an agent is eligible to run within an approved control envelope, while runtime governance decides whether the next specific action is allowed at that moment.
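One way to picture an approval that binds to execution is to tie it to a digest of the exact action and principals it covers. The sketch below is illustrative only: the framework text does not specify a binding mechanism, and `action_digest`, `Approval`, and `approval_covers` are hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Any


def action_digest(tool: str, args: dict[str, Any],
                  initiating: str, acting: str) -> str:
    """SHA-256 over a canonical JSON form of the action and its principals."""
    payload = json.dumps(
        {"tool": tool, "args": args, "initiating": initiating, "acting": acting},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Approval:
    approver: str
    digest: str        # digest of the action that was actually approved
    expires_at: float  # epoch seconds; an assumption of this sketch


def approval_covers(approval: Approval, tool: str, args: dict[str, Any],
                    initiating: str, acting: str, now: float) -> bool:
    """True only if the approval is unexpired and matches this exact action."""
    if now >= approval.expires_at:
        return False
    return approval.digest == action_digest(tool, args, initiating, acting)
```

Because the digest covers the arguments and both principals, an approval granted for one record update cannot be replayed for a different record or by a different acting identity.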

2. The Four-Layer Control Architecture

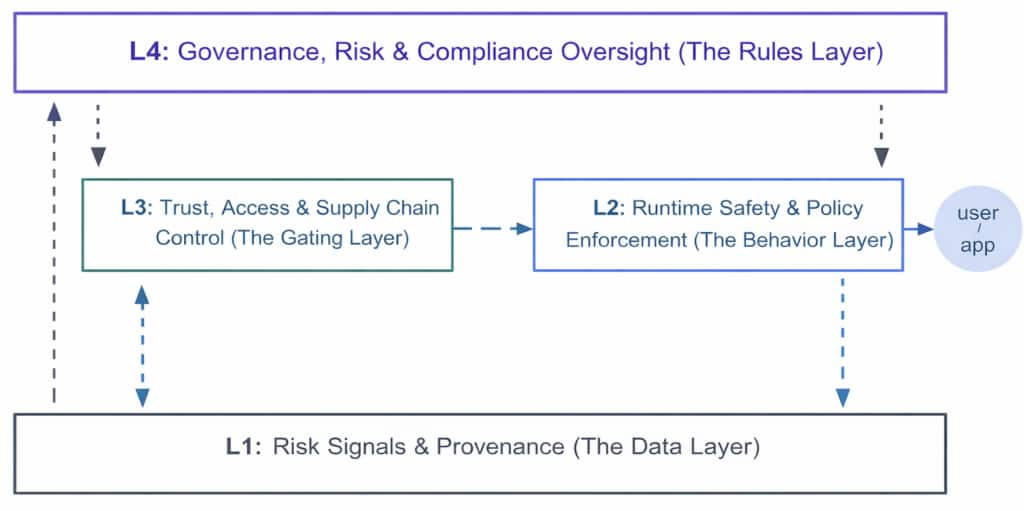

The framework transforms governance from a static policy into a non-bypassable mediation layer. This architecture (Figure 1) is organized into four linked control layers, each answering a critical question: what is allowed (L4), what is admissible (L3), what may execute now (L2), and what proves what happened (L1). Figure 1 reinforces that structure by placing oversight at the top, gating and runtime behavior in the operational middle, and evidence as the foundational layer feeding back into governance.

L4 — Rules layer (Governance, Risk, and Compliance Oversight) defines risk appetite, risk tiers, promotion gates, approval workflows, safe degradation patterns, and policy lifecycle controls.

L3 — Gating layer (Trust, Access, and Supply Chain Control) determines which models, tools, connectors, corpora, regions, and identities are eligible to be used at all. Least-privilege boundaries, registry gating, and artifact controls ensure runtime does not operate over unapproved assets.

L2 — Behavior layer (Runtime Safety and Policy Enforcement) decides whether the next action may execute. The Agent Runtime Controller evaluates schema-valid proposed actions against the active policy pack, identity and approval bindings, and current budget state before tools run or data is accessed.

L1 — Evidence layer (Risk Signals and Provenance) captures structured traces, evaluation results, provenance hashes, tool identities, tamper-resistant audit trails, and Structured Decision Records. It provides the evidence required for incident reconstruction, drift detection, and high-fidelity auditing.
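As an illustration of what a tamper-evident audit trail could look like at the evidence layer, the sketch below hash-chains decision records so that any later modification is detectable. Hash chaining is a common general technique and is an assumption here; the framework text does not state how its audit trails are protected. The record fields and the function names `append_record` and `verify_chain` are hypothetical.

```python
import hashlib
import json
from typing import Any

GENESIS_HASH = "0" * 64  # placeholder predecessor for the first entry


def _entry_hash(prev_hash: str, record: dict[str, Any]) -> str:
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_record(chain: list[dict[str, Any]], record: dict[str, Any]) -> dict[str, Any]:
    """Append a decision record linked to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    }
    chain.append(entry)
    return entry


def verify_chain(chain: list[dict[str, Any]]) -> bool:
    """Recompute every link; False if any record or link was altered."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this makes tampering detectable rather than impossible; in practice the latest hash would also need to be anchored somewhere the writer cannot modify.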

The architecture is not only top-down; it is also a feedback loop. L1 feeds L4 by turning runtime behavior into attributable governance signals. Traces, drift signals, approval records, budget events, and decision logs flow back into oversight, where they inform policy review, threshold tuning, re-evaluation, and changes to risk posture. Evidence is therefore not just an audit artifact after the fact; it is an operating principle that keeps the governance system adaptive and decision-grade over time.

3. From Policy to Governed Execution

The framework becomes most concrete across three control moments: design time, promotion time, and runtime. At design time, teams define intended use, risk tier, tool and data scope, delegated identity posture, and memory rules. At promotion time, the framework requires eval suites, policy simulation, evidence packaging, and sign-off on the approved posture. Those stages establish the approved control envelope. Runtime is where that envelope is enforced.

At runtime, the Agent Runtime Controller evaluates each structured proposed action against the active policy pack, identity and approval bindings, and current budget state before tools run or data is accessed. It then returns enforceable outcomes such as ALLOW, ALLOW_WITH_REDACTION, REQUIRE_REVIEW, or DENY. Governance is no longer merely advisory; it becomes an execution-time decision function.
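The decision step described above can be sketched as a pure function over policy, approval state, and budget. The four outcome names come from the text; everything else (`PolicyPack`, `ActionContext`, `decide`, the field names, and the order in which checks are applied) is an assumption made for illustration, not the Agent Runtime Controller's actual interface.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "ALLOW"
    ALLOW_WITH_REDACTION = "ALLOW_WITH_REDACTION"
    REQUIRE_REVIEW = "REQUIRE_REVIEW"
    DENY = "DENY"


@dataclass(frozen=True)
class PolicyPack:
    allowed_tools: frozenset[str]  # tools admitted for this agent
    review_tools: frozenset[str]   # tools that need a valid approval
    redact_tools: frozenset[str]   # tools whose payload must be redacted


@dataclass(frozen=True)
class ActionContext:
    tool: str
    cost: float
    budget_remaining: float
    has_valid_approval: bool


def decide(policy: PolicyPack, ctx: ActionContext) -> Decision:
    """Evaluate one proposed action before any tool runs."""
    if ctx.tool not in policy.allowed_tools:
        return Decision.DENY            # tool is not eligible at all
    if ctx.cost > ctx.budget_remaining:
        return Decision.DENY            # would exceed the current budget
    if ctx.tool in policy.review_tools and not ctx.has_valid_approval:
        return Decision.REQUIRE_REVIEW  # high-impact action lacks approval
    if ctx.tool in policy.redact_tools:
        return Decision.ALLOW_WITH_REDACTION
    return Decision.ALLOW
```

Keeping the function free of side effects is a deliberate choice in this sketch: the same inputs always yield the same decision, which is what makes a recorded decision replayable later.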

That runtime decision only matters if applications can consume it consistently. The Governance Envelope turns policy into an operational contract: it packages the decision on a proposed action, together with that action's context, into a machine-consumable result, so the application can consistently proceed, block, redact, or escalate. Evals and observability surround that contract. Evals serve as promotion gates and continuous-assurance mechanisms, while observability records what the system actually did under which identities, artifacts, and runtime conditions. In effect, the framework turns governance from periodic inspection into governed execution at the point of action.
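On the application side, consuming such an envelope might look like the sketch below. The envelope's fields are not specified in the text, so `GovernanceEnvelope`, its attributes, the `enforce` function, and the `[REDACTED]` marker are all hypothetical; only the four decision values are taken from the framework description.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class GovernanceEnvelope:
    decision: str  # ALLOW, ALLOW_WITH_REDACTION, REQUIRE_REVIEW, or DENY
    tool: str
    args: dict[str, Any]
    redact_fields: tuple[str, ...] = ()
    reason: str = ""


def enforce(envelope: GovernanceEnvelope,
            execute: Callable[[str, dict[str, Any]], Any]) -> dict[str, Any]:
    """Proceed, redact, escalate, or block according to the envelope."""
    if envelope.decision == "ALLOW":
        return {"status": "executed",
                "result": execute(envelope.tool, envelope.args)}
    if envelope.decision == "ALLOW_WITH_REDACTION":
        safe_args = {
            key: "[REDACTED]" if key in envelope.redact_fields else value
            for key, value in envelope.args.items()
        }
        return {"status": "executed_redacted",
                "result": execute(envelope.tool, safe_args)}
    if envelope.decision == "REQUIRE_REVIEW":
        return {"status": "escalated", "reason": envelope.reason}
    # DENY and any unrecognized decision value fail closed.
    return {"status": "blocked", "reason": envelope.reason}
```

The final branch treats unknown decision values the same as DENY, so a malformed or newer envelope in this sketch cannot cause a tool call by accident.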

The broader implication is architectural. Enterprise AI platforms are evolving into governed execution layers. A governed execution layer is not just a place to host models; it is the runtime control plane that enforces tool eligibility, policy decisions, identity and approval binding, budget controls, structured remediation, and evidence capture for each material agent action. OCI can provide telemetry, registry gating, policy enforcement hooks, and evidence interfaces, but customers and service owners still define risk appetite, approve policy packs, accept residual risk, and operate incident response. Runtime governance is therefore not just a software capability. It is the operating model for controlled autonomy.

Conclusion: Why Runtime Governance Matters

The enterprise control point is shifting away from the model endpoint and toward the governed runtime. In agentic systems, the core governance problem is no longer just whether a model can answer safely. It is whether the system can take the next action safely and lawfully: call an approved tool, operate under the correct identity, stay within budget, respect data and residency boundaries, satisfy approval requirements, and emit evidence that supports replay and audit.

The OCI AI Governance Framework addresses that shift by turning governance into an execution architecture. Policy defines what is allowed. Admissibility controls determine which tools, models, connectors, and identities may be used at all. Runtime enforcement decides whether a specific action may execute now. Evals and observability provide the signals and evidence needed to verify what happened and refine controls over time. The Governance Envelope makes those decisions operational by turning them into a machine-consumable execution contract that applications can enforce consistently at the point of action.

The era of simply exposing more powerful models is ending. Durable advantage will belong to those who make autonomous behavior governable at the point of action: action by action, under policy, under authority, and with evidence.