April 20th, 2026

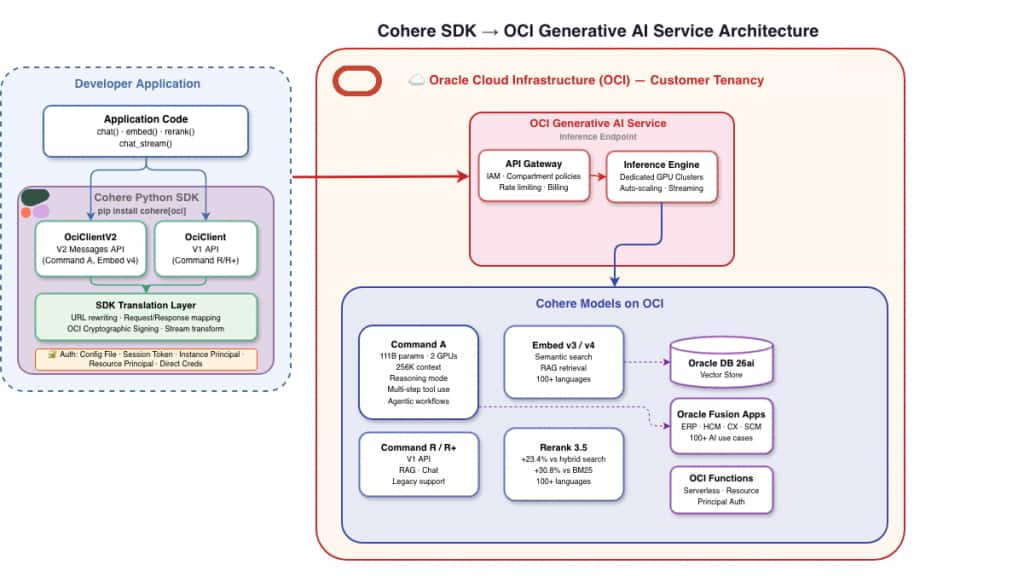

Developers building AI applications on OCI Generative AI can now use Cohere models (Command A, Command R, Embed, and Rerank) through the same Cohere Python SDK they use with Cohere’s hosted API, with native support generally available today.

Why this matters now

Oracle has embedded Cohere’s Command, Embed, and Rerank models across more than 100 generative AI use cases in Oracle Fusion Cloud Applications — powering AI features in ERP, Supply Chain, HCM, and Customer Experience for 14,000+ enterprise customers.

But for developers building their own applications on OCI Generative AI, using Cohere models meant learning a separate API with different request formats, different authentication, and a different streaming protocol. Code written for Cohere’s API couldn’t move to OCI Generative AI without significant rework. That gap between “AI that Oracle built for you” and “AI you build yourself on OCI” was unnecessarily wide.

Native SDK support closes it. Run pip install cohere[oci], replace cohere.ClientV2(...) with cohere.OciClientV2(...), and the same Cohere application code runs against OCI Generative AI, with full OCI authentication, compartment-level governance, and inference that never leaves your tenancy.

The friction this removes is real. Deloitte’s 2026 State of AI survey of 3,200+ enterprise leaders found that while 74% of organizations expect AI to drive revenue growth, only 20% are achieving it today. The gap isn’t model quality — it’s operational: integration overhead, fragmented tooling, and the engineering tax of maintaining separate stacks for every provider.

One SDK, any environment

pip install cohere[oci] gives developers an OciClient and OciClientV2 that behave identically to their Cohere-hosted counterparts. Switching from Cohere’s hosted API to OCI Generative AI means replacing ClientV2 with OciClientV2 — the SDK handles URL rewriting, request and response format translation, OCI cryptographic request signing, and streaming event transformation behind the scenes.

Here’s what that means in practice:

Data residency and compliance. Inference stays within the customer’s OCI tenancy. OCI Generative AI is FedRAMP High and DISA IL5 authorized, HITRUST certified, and offers sovereign cloud regions for the most sensitive workloads.

Zero credential management. The SDK supports OCI’s full authentication stack, from instance principals on OCI Compute to resource principals on OCI Functions, so workloads running on OCI have no API keys to manage or rotate.

Unified billing and governance. AI consumption appears on the same OCI bill as compute, storage, and database, governed by the same compartment-level IAM policies.

Command A and the V2 API on OCI Generative AI

Command A is a 111-billion-parameter model that delivers up to 150% higher throughput than its predecessor, Command R+, while requiring only two GPUs. It supports a 256,000-token context window and includes native multi-step tool use, making it a strong foundation for agentic enterprise workflows.

Command A and its variants are accessed through OciClientV2, which implements Cohere’s V2 API — the current generation interface that uses the OpenAI-style messages format, supports structured tool definitions, and aligns with how most developers already build chat and agentic applications.
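As a rough illustration of the V2 messages format and a structured tool definition, the sketch below builds the keyword arguments for a chat call as plain Python data, without touching the network. The tool name `get_invoice_total`, its parameters, and the helper `build_chat_request` are hypothetical. The `tools` layout follows the function-style JSON Schema shape used by Cohere’s V2 API, and passing the result to `OciClientV2.chat` assumes the OCI client accepts the same arguments as `ClientV2`.

```python
import json


def build_chat_request(question: str) -> dict:
    """Build keyword arguments for a V2-style chat call (no network access)."""
    # Hypothetical tool, described with a JSON Schema for its parameters.
    invoice_tool = {
        "type": "function",
        "function": {
            "name": "get_invoice_total",
            "description": "Look up the total amount of an invoice by its ID.",
            "parameters": {
                "type": "object",
                "properties": {
                    "invoice_id": {
                        "type": "string",
                        "description": "Invoice identifier.",
                    },
                },
                "required": ["invoice_id"],
            },
        },
    }
    return {
        "model": "command-a-03-2025",
        "messages": [
            {"role": "system", "content": "You are a procurement assistant."},
            {"role": "user", "content": question},
        ],
        "tools": [invoice_tool],
    }


request = build_chat_request("What is the total on invoice INV-1042?")
print(json.dumps(request, indent=2))
# With a configured client, the call would look like: client.chat(**request)
```

Keeping the request as plain data makes it easy to log, diff, or unit-test before it is sent to either the hosted API or OCI Generative AI.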

Command A Vision extends Command A with multimodal capabilities — from document understanding and invoice processing to visual inspection workflows.

Command A Reasoning surfaces chain-of-thought output through its thinking parameter. It’s available through both on-demand inference and dedicated AI clusters, the latter offering uncapped output tokens, full 256K context, and isolated compute for regulated environments.

The V1 API remains available through OciClient for existing applications using the Command R family (command-r-plus, command-r-08-2024, command-r-16k). New applications should use OciClientV2.

Embed v4 and Rerank 3.5

Embed v4 is Cohere’s latest embedding model, now available on OCI Generative AI alongside the full Embed v3 family (English, multilingual, light, and image variants). It delivers improved retrieval quality for semantic search and RAG pipelines while maintaining compatibility with existing vector stores.

Based on Cohere’s internal testing, Rerank 3.5 outperforms hybrid search by 23.4% and BM25 by 30.8% on financial data, and it supports 100+ languages. It slots into existing retrieval pipelines as a second-stage ranker, improving precision without changing the underlying search setup.

Together, Embed and Rerank give OCI Generative AI customers a complete retrieval stack — embed, search, and re-rank — all within their tenancy’s compliance boundary.
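To make the embed, search, and re-rank flow concrete, here is a minimal sketch of the two retrieval stages using toy vectors and plain Python. The vectors, the `rerank_fn` callback, and the `keyword_overlap` scorer are stand-ins invented for illustration. In a real pipeline the vectors would come from an Embed model and the second-stage scores from a Rerank model.

```python
import math
from typing import Callable, List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero norm)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec: Sequence[float], doc_vecs: List[Sequence[float]], top_k: int) -> List[int]:
    """First stage: indices of the top_k documents by cosine similarity."""
    order = sorted(
        range(len(doc_vecs)),
        key=lambda i: cosine(query_vec, doc_vecs[i]),
        reverse=True,
    )
    return order[:top_k]


def rerank(query: str, docs: List[str], candidates: List[int],
           rerank_fn: Callable[[str, str], float], top_n: int) -> List[int]:
    """Second stage: reorder first-stage candidates with a finer-grained scorer."""
    order = sorted(candidates, key=lambda i: rerank_fn(query, docs[i]), reverse=True)
    return order[:top_n]


def keyword_overlap(query: str, doc: str) -> float:
    """Stand-in for a rerank model: count shared lowercase words."""
    return float(len(set(query.lower().split()) & set(doc.lower().split())))


docs = [
    "Quarterly revenue grew on strong cloud demand",
    "Liquidity risk increased due to rising interest rates",
    "The company opened a new office",
]
doc_vecs = [[0.9, 0.1, 0.0], [0.2, 0.9, 0.1], [0.1, 0.2, 0.9]]  # toy embeddings
query = "interest rates risk"
query_vec = [0.3, 0.8, 0.1]  # toy query embedding

candidates = search(query_vec, doc_vecs, top_k=2)
best = rerank(query, docs, candidates, keyword_overlap, top_n=1)
print(docs[best[0]])
```

The first stage is cheap and recall-oriented, while the second stage only scores the short candidate list, which is why a reranker can be added without changing the underlying search setup.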

What enterprises can build

Grounded financial analysis. Embed 10-K filings with Cohere Embed, store vectors in Oracle Database 26ai, and generate citation-backed analysis with Command A — all within a single OCI compartment.

Custom Fusion extensions. Build extensions using the same Cohere models that power the 100+ built-in Fusion AI features — deeper purchase order analysis in ERP, candidate summarization in HCM, or service request triage in CX.

Event-driven serverless AI. With resource principal authentication, OCI Functions can call Cohere models with zero credentials in the deployment — event-driven AI that scales to zero and inherits the compartment’s security posture.

See it in action

Using Cohere on OCI Generative AI takes just a few lines. The following example shows chat, embeddings, and streaming — the same code patterns you’d use with cohere.ClientV2, pointed at OCI Generative AI:

    import cohere

    # Create an OCI client (uses ~/.oci/config by default)
    client = cohere.OciClientV2(
        oci_region="us-chicago-1",
        oci_compartment_id="ocid1.compartment.oc1...",
    )

    # Chat with Command A (V2 messages format)
    response = client.chat(
        model="command-a-03-2025",
        messages=[
            {"role": "system", "content": "You are a financial analyst."},
            {"role": "user", "content": "Summarize the key risks in this filing."},
        ],
        max_tokens=400,
    )
    print(response.message.content[0].text)

    # Embeddings for semantic search and RAG
    response = client.embed(
        model="embed-english-v3.0",
        texts=["Oracle Cloud Infrastructure", "Generative AI service"],
        input_type="search_document",
    )
    for i, embedding in enumerate(response.embeddings.float_):
        print(f"Text {i}: {len(embedding)} dimensions")

    # Streaming responses in real time
    for event in client.chat_stream(
        model="command-a-03-2025",
        messages=[{"role": "user", "content": "Explain RAG in three sentences."}],
        max_tokens=400,
    ):
        # Only some stream events carry text; skip the rest.
        if (
            hasattr(event, "delta") and event.delta
            and hasattr(event.delta, "message") and event.delta.message
            and hasattr(event.delta.message, "content") and event.delta.message.content
            and hasattr(event.delta.message.content, "text")
            and event.delta.message.content.text
        ):
            print(event.delta.message.content.text, end="")

The SDK supports five authentication methods (config file profiles, session tokens, direct credentials, instance principals, and resource principals), covering every deployment scenario from local development to serverless production.
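The nested attribute checks in the streaming loop above can also be folded into a small helper. The sketch below is one way to do that. `iter_text` and `fake_event` are hypothetical helpers (not part of the SDK), and the fake events built with `SimpleNamespace` only mimic the `event.delta.message.content.text` shape used in the example so that the snippet runs without a network call.

```python
from types import SimpleNamespace
from typing import Iterable, Iterator


def iter_text(events: Iterable[object]) -> Iterator[str]:
    """Yield text fragments from stream events, skipping events without text."""
    for event in events:
        node = event
        # Walk event.delta.message.content.text, stopping at any missing link.
        for attr in ("delta", "message", "content", "text"):
            node = getattr(node, attr, None)
            if node is None:
                break
        if isinstance(node, str) and node:
            yield node


def fake_event(text: str) -> SimpleNamespace:
    """Build a stand-in event with the nested shape used in the example."""
    content = SimpleNamespace(text=text)
    message = SimpleNamespace(content=content)
    return SimpleNamespace(delta=SimpleNamespace(message=message))


events = [
    SimpleNamespace(type="message-start"),  # no delta attribute at all
    fake_event("Retrieval-augmented "),
    SimpleNamespace(delta=None),            # delta present but empty
    fake_event("generation."),
]
print("".join(iter_text(events)))
```

With a configured client, the same helper could wrap the stream directly, for example `for text in iter_text(client.chat_stream(...)): print(text, end="")`.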

Get started

pip install cohere[oci]

Resources:

- Cohere Python SDK

- OCI Generative AI Documentation

- Cohere API Documentation

- Oracle + Cohere: 100+ AI Use Cases

- Cohere Python SDK Documentation

What’s next

Rerank support for dedicated endpoints, async client support, and tighter integration with Oracle Database 26ai’s vector search are all on the roadmap. Try it today and share your feedback on GitHub.
