Enterprises don’t just want agents that summarize—they want teams of agents that can coordinate plans, hand off tasks, and safely take action across systems. In multi-agent settings, a single missing dependency, stale runbook, or unclear ownership chain can cascade into repeated errors at machine speed.

SKS exists to make organizational knowledge observable, governed, and decision‑ready, so every agent is grounded in the same up‑to‑date evidence base rather than improvising from isolated snippets.

In practice, SKS lets an agent (for example, one configuring a VCN peering policy) receive canonical guidance, active work, code context, and named experts in one governed briefing, so the agent can recommend actions with the rigor humans expect from trusted experts.

What SKS Adds Beyond Similarity Retrieval

Many systems described as “RAG” primarily optimize for returning the most similar passages. Similarity retrieval is useful, but in real operations, the most decision-critical inputs often aren’t text-similar to the question:

- the incident ticket that is currently open,

- the PR that introduced a regression,

- the design doc that defines invariants and constraints,

- the on-call owner who can approve a risky action,

- the dependency chain that determines blast radius.

SKS is designed for the complete, coherent retrieval that action requires. It standardizes and governs the additional signals agents need (provenance, freshness, relationship expansion, and accountable ownership) so recommendations can be defended with traceable evidence.

Concretely, SKS provides:

- Coherent briefings, not fragmented snippets. A single governed briefing fuses canonical documents, related work items, and expert contacts, supplying surrounding evidence up front.

- Accountability signals. Tiered provenance, release metadata, and explicit ownership tags distinguish canonical policy from emerging insight.

- Freshness by design. Continuous scouting with change detection triggers re-indexing only for artifacts that changed, so guidance keeps pace with fast-moving incidents and updates.

- Relationship intelligence. Validated graph connections (e.g., design lineage, dependency links, implementing code, ownership) bring in decision-critical artifacts that similarity-only retrieval often misses—while filtering noise.

Example: From “a runbook snippet” to an Actionable Response

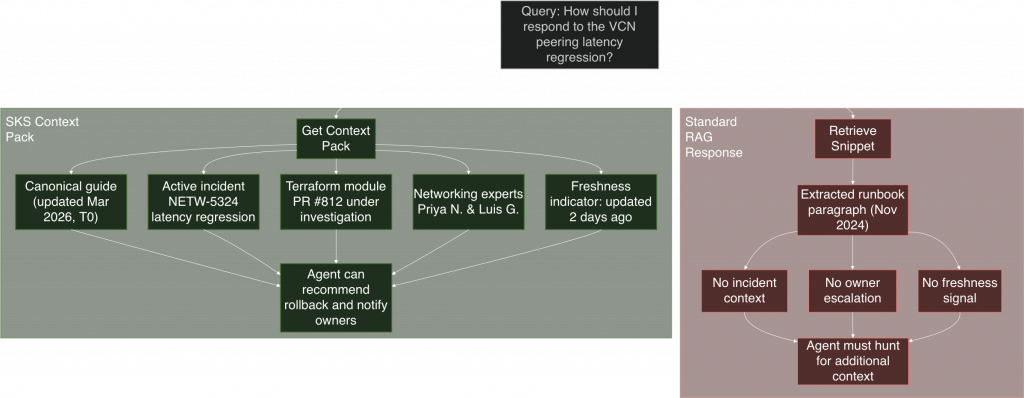

Consider the query: “How should I respond to the VCN peering latency regression?”

A similarity-focused pipeline might surface a 2024 runbook and stop there, leaving engineers unaware of an open latency incident, the suspected Terraform module, or who owns the fix.

SKS (see the diagram above) instead assembles a Context Pack in which enrichment and governed relationship edges connect the question to:

- the updated canonical guide,

- the active incident ticket,

- the recent commits / module under investigation,

- the named networking experts to escalate to.

That briefing supports an explainable recommendation (e.g., a safe rollback) with clear ownership and escalation paths.

Context Packs: Scientific Briefings for Agents

A Context Pack is SKS’s fundamental deliverable: a structured, decision-ready briefing designed for action (not just reading) and optimized for explainability, so an agent can cite what evidence drove a recommendation and who can validate it.

Each pack aims to answer three questions simultaneously:

- What should I do?

- Why is this guidance credible?

- Who can validate it (ownership / escalation)?

A Context Pack typically includes:

- Curated evidence tiers. Canonical policy, team playbooks, and exploratory work are separated by trust tier, so agents know when they are citing governed guidance versus emerging insight.

- Active work surface. Open issues, pending approvals, and recent commits show whether the topic is stable or in flux, signaling when escalation is safer than automation.

- Expert map. Named maintainers, reviewers, and recent contributors provide escalation paths and accountability.

- Narrative context. Relationship templates explain how artifacts influence one another (“This PR resolves the incident tracked in…”) so agents can defend their recommendations.

- Freshness and coverage indicators. Recency stamps, update spans, and gap indicators act as confidence signals for agent decisions.

By packaging these elements together, Context Packs give agents the situational awareness that human operators assemble manually today.
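
To make the facets above concrete, here is a minimal sketch of how a Context Pack might be represented in code. The source does not publish a schema, so every class, field, and tier name below is an assumption chosen for illustration, and the `should_escalate` rule is a hypothetical heuristic rather than SKS's actual policy.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class TrustTier(Enum):
    # Hypothetical tier names mirroring the evidence tiers described above.
    CANONICAL = "canonical"
    TEAM_PLAYBOOK = "team_playbook"
    EXPLORATORY = "exploratory"


@dataclass
class Evidence:
    title: str
    tier: TrustTier
    last_updated: date  # recency stamp used as a freshness indicator


@dataclass
class WorkItem:
    ref: str       # ticket, PR, or approval reference (illustrative)
    is_open: bool  # open items suggest the topic is still in flux


@dataclass
class ContextPack:
    query: str
    evidence: list[Evidence] = field(default_factory=list)
    active_work: list[WorkItem] = field(default_factory=list)
    experts: list[str] = field(default_factory=list)    # expert map
    narrative: list[str] = field(default_factory=list)  # relationship explanations
    gaps: list[str] = field(default_factory=list)       # coverage gap indicators

    def should_escalate(self) -> bool:
        """Assumed rule: escalate if work is open or no canonical evidence exists."""
        has_open_work = any(item.is_open for item in self.active_work)
        has_canonical = any(e.tier is TrustTier.CANONICAL for e in self.evidence)
        return has_open_work or not has_canonical
```

Keeping the trust tier on each evidence item, rather than on the pack as a whole, lets a consuming agent state which tier a given citation came from. A real escalation decision would likely weigh freshness and gap indicators as well.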

How SKS Builds Context Packs

SKS uses a disciplined, measurable pipeline to transform organizational information into governed briefings. A key design principle is separating what happens offline (building the evidence base) from what happens at run-time (answering a query).

Offline: Continuously Build and Govern the Evidence Base

- Evidence curation (Scouting). Continuous observation brings in documents, tickets, code, and conversation trails (e.g., Confluence/KB pages, design docs, incident systems, Git repos/PRs, runbooks, postmortems, and team discussion threads). Normalization keeps metadata consistent. Change detectors ensure that only artifacts that changed are reprocessed.

- Feature enrichment. Automated annotators extract entities, temporal signals, ownership, and structural cues. Enrichments are treated as experimental features and promoted only when offline evaluation shows measurable lift in precision or freshness.

- Relationship intelligence. Enriched artifacts populate a governed knowledge graph capturing authorship, design lineage, dependency chains, and ownership. Tier-aware scoring and graph audits maintain calibrated trust.
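
The change-detection step in the scouting stage can be illustrated with content fingerprints. This is a sketch under stated assumptions: the source does not say how SKS detects changes, so hashing normalized text with SHA-256 is a stand-in technique, and the function names and the in-memory `seen` store are hypothetical.

```python
import hashlib


def content_fingerprint(text: str) -> str:
    """Stable fingerprint of an artifact's normalized text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def detect_changes(seen: dict[str, str], snapshot: dict[str, str]) -> list[str]:
    """Return ids of artifacts that are new or whose content changed.

    `seen` maps artifact id -> last fingerprint and is updated in place,
    so a second pass over an unchanged snapshot returns an empty list.
    """
    changed = []
    for artifact_id, text in snapshot.items():
        fingerprint = content_fingerprint(text)
        if seen.get(artifact_id) != fingerprint:
            changed.append(artifact_id)
            seen[artifact_id] = fingerprint
    return changed
```

Only the ids returned by `detect_changes` would be sent on to enrichment and re-indexing; everything else is skipped. A production system would also need to handle deletions and persist the fingerprint store, which this sketch omits.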

Run-time: Retrieve, Expand and Assemble the Context Pack

- Seed retrieval. Hybrid search provides high-precision starting points.

- Context expansion. Graph expansion introduces surrounding context via validated edges (e.g., depends on, implements, resolves, owned by).

- Reranking. Models trained with human/editorial feedback choose the best evidence blend for the prompt; ablation studies quantify the incremental value of each stage.

- Briefing assembly. Evidence is organized into Context Pack facets with narrative templates, expert callouts, and freshness indicators. Feedback and telemetry refine the assembly heuristics.

This end-to-end discipline keeps Context Packs reproducible, auditable, and tuned for decision-making.
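
The context-expansion step can be sketched as a bounded breadth-first traversal that follows only validated edge types. The edge names come from the examples above; the graph representation, the `max_hops` parameter, and the function name are assumptions for illustration. Seed retrieval and the learned reranker are out of scope for this sketch.

```python
from collections import deque

# Edge types named in the text, treated here as an allow-list (an assumption).
VALIDATED_EDGES = {"depends_on", "implements", "resolves", "owned_by"}


def expand_context(graph, seeds, max_hops=1):
    """Breadth-first expansion from seed ids along validated edges only.

    `graph` maps a node id to a list of (edge_type, neighbor_id) pairs.
    Returns a dict of node id -> hop distance from the nearest seed.
    """
    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if distance[node] >= max_hops:
            continue  # hop budget exhausted for this branch
        for edge_type, neighbor in graph.get(node, []):
            if edge_type in VALIDATED_EDGES and neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    return distance
```

Given a seed runbook with a `resolves` edge to an incident and an unvalidated `mentions` edge to a chat thread, a one-hop expansion would return the runbook and the incident but not the chat thread. Capping hops keeps the pack focused, and the returned distances could serve as one input feature to a downstream reranker.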

Scientific Provenance and Impact

Running SKS with disciplined instrumentation lets us measure impact instead of guessing. Every change to enrichment rules, graph weights, or assembly thresholds is paired with targeted evaluation (human editorial reviews, task-level regression checks, and freshness spot tests) so we know whether Context Packs improve decision quality before rolling them out broadly.

Current scale highlights the breadth of the evidence base SKS governs: over a million Confluence pages and twelve thousand documentation topics are harmonized into the corpus. That ingestion yields more than seventeen million graph nodes and forty-six million relationships, giving agents a dense map of experts, dependencies, and design lineage to operate responsibly.

FAQ: Why not just use MCP servers or native connectors?

Tool calling and MCP-style integrations can help agents act on sources, but they don’t by themselves solve the enterprise retrieval problem: assembling complete, governed, decision-ready context across many systems with consistent provenance, freshness signals, relationship expansion, and quality gates.

SKS focuses on building and continuously governing that evidence base and retrieval stack, so tool-using agents don’t have to rely on ad hoc queries to each system’s native search, whose ranking, metadata, and access patterns are often insufficient for high-stakes decisions.

Closing Thoughts

SKS exists because enterprise decision-making requires more than “relevant text.” When an agent recommends or executes a production change, it must justify not only what to do, but also why that action is credible right now and who is accountable for validating it. Those justifications depend on signals that similarity retrieval often misses, such as open incident state, recent code changes, policy exceptions, ownership, and dependency-driven blast radius.

By producing Context Packs that combine tiered provenance, freshness indicators, active work context, relationship-backed expansion, and an expert map, SKS retrieves not just more context but the right governed context with explicit cues for when automation is safe versus when escalation is required. This becomes even more important in multi-agent workflows, where incomplete or stale shared context can cause fast, repeated errors across teams, while a consistent, auditable evidence base helps agents converge on explainable decisions. Finally, SKS is designed to improve measurably over time through instrumentation and acceptance gates, so enrichment features, graph relationships, and assembly heuristics evolve based on demonstrated gains in coverage, freshness, and decision quality.