Introduction

Let me share something I’ve learned building enterprise AI systems: the future isn’t about creating one massive, all-knowing agent. It’s about orchestrating specialized agents that work together seamlessly—like a well-coordinated team rather than a single superhero trying to do everything.

Think about your organization. You’ve got HR systems, finance platforms, IT tools, and countless other services. Each has its own logic, its own data, its own quirks. The traditional approach? Build custom integrations for every possible combination. It’s exhausting, fragile, and doesn’t scale.

Enter the Agent2Agent (A2A) protocol and the Model Context Protocol (MCP), two open standards: A2A for agents talking to other agents, and MCP for agents reaching tools and data. With A2A and MCP, we can build agent ecosystems where new capabilities simply plug in, no rewiring required. Deploy a finance agent on Tuesday morning, and by Tuesday afternoon your employees can ask budget questions through the same chatbot they use for everything else.

In this article, I’ll walk you through an architecture we’ve built that demonstrates this approach. It’s working in the real world today, and honestly, it’s kind of magical how extensible it is.

Solution Overview

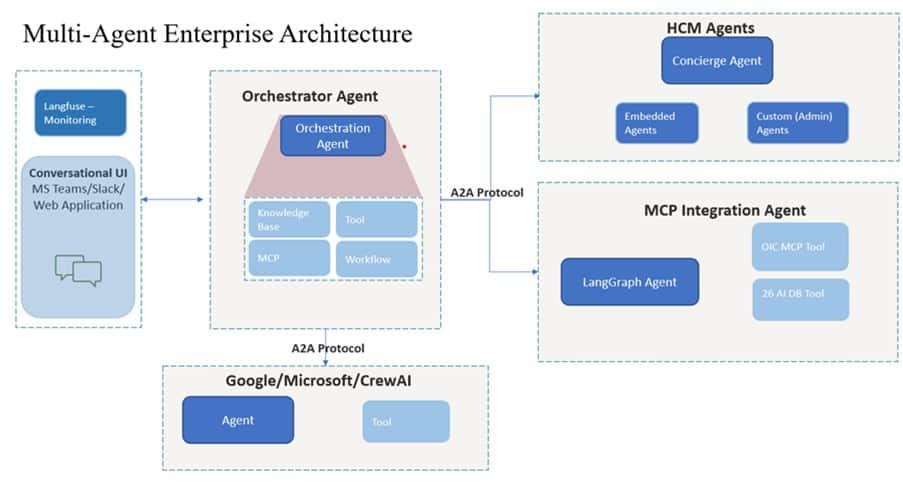

Here’s the setup: we’ve built a platform with four specialized agents, all talking to each other via the A2A protocol. Let me introduce you to the team:

1. The Orchestrator Agent — Your Smart Router

This is the front door. When an employee asks a question through Teams or Slack, the Orchestrator figures out what to do. But here’s the clever part: it doesn’t just route requests—it has its own built-in superpowers.

Need to search the internet for current inflation rates? The Orchestrator handles it directly—no need to wake up another agent for that.

Uploaded a receipt image that needs text extraction? The Orchestrator’s OCR tool pulls out the data on the spot.

For domain-specific stuff like leave balances or payroll? That’s when it calls in the specialists.

2. The Oracle HCM Agent — HR Command Centre

This agent owns everything HR-related. Inside, it runs three sub-agents, like a manager delegating to their team:

- Timesheet Agent — “Submit 8 hours for today” goes straight here.

- Leave Tracker Agent — Handles vacation requests, balance queries, all that.

- Employee Hierarchy Agent — “Who’s my skip-level manager?” Boom, answered.

3. The MCP Integration Agent — The Bridge Builder

Not everything needs to be a full-blown intelligent agent. Sometimes you just need tools. That’s where MCP comes in. This agent connects to:

- Oracle Integration Cloud (OIC) — Talks to Microsoft Graph API for emails and calendar stuff.

- Company Database — Searches policies, FAQs, announcements

These aren’t agents—they’re tools. But through MCP, they plug into our agent network like first-class citizens.

4. The Framework-Based Agent — The Wildcard

Want to use CrewAI for complex approval workflows? Google’s Agent Development Kit for research tasks? Microsoft’s AutoGen for collaborative scenarios? Build whatever you want in whatever framework makes sense, wrap it with an A2A interface, and boom—it’s part of the ecosystem.
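To make "wrap it with an A2A interface" a bit more concrete, here is a minimal sketch of what such a wrapper could look like. It assumes the framework agent can be reduced to a function that takes text and returns text. The `make_a2a_handler` helper and the `approval_workflow` stub are hypothetical names for illustration, and the request shape is only loosely modelled on A2A's JSON-RPC 2.0 messages, so check the specification for the exact fields before relying on it.

```python
from typing import Any, Callable, Dict


def make_a2a_handler(
    run_agent: Callable[[str], str],
) -> Callable[[Dict[str, Any]], Dict[str, Any]]:
    """Wrap any text-in/text-out agent behind a JSON-RPC style handler."""

    def handle(request: Dict[str, Any]) -> Dict[str, Any]:
        request_id = request.get("id")
        # Only one method is supported in this sketch.
        if request.get("method") != "message/send":
            return {
                "jsonrpc": "2.0",
                "id": request_id,
                # -32601 is the standard JSON-RPC "Method not found" code.
                "error": {"code": -32601, "message": "Method not found"},
            }
        parts = request["params"]["message"]["parts"]
        text = " ".join(p["text"] for p in parts if "text" in p)
        reply = run_agent(text)  # the framework-specific work happens here
        return {
            "jsonrpc": "2.0",
            "id": request_id,
            "result": {"role": "agent", "parts": [{"kind": "text", "text": reply}]},
        }

    return handle


def approval_workflow(text: str) -> str:
    """Hypothetical stand-in for a CrewAI, ADK, or AutoGen agent."""
    return f"Approval workflow started for: {text}"


handler = make_a2a_handler(approval_workflow)
```

The point of the design is that the Orchestrator only ever sees the handler. Whatever framework sits behind `run_agent` can be swapped without the rest of the network noticing.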

The magic sauce here is that the chatbot doesn’t know or care about any of these details. It just talks to the Orchestrator. Everything else happens behind the scenes through A2A protocol conversations between agents.

Technical Architecture/Design

How Agents Discover Each Other

Here’s where it gets interesting. Every agent publishes something called an Agent Card—think of it as a business card that says, “Here’s what I can do, here’s how to reach me, and here’s how to authenticate.”

The Orchestrator maintains a registry that checks for new Agent Cards every few minutes. When a new agent shows up, the Orchestrator learns about it automatically. No configuration files to update, no code to deploy, no downtime required.
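Here is a rough sketch of how a polling registry like this might work. It is not our production code: the `AgentCard` fields are a simplified subset chosen for illustration, the URLs are made up, and the card fetcher is injected so the example runs without a network. In a real deployment the fetcher would issue an HTTP GET against each agent's well-known card path.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class AgentCard:
    """Simplified Agent Card; field names are illustrative assumptions."""
    name: str
    url: str
    description: str = ""
    skills: List[str] = field(default_factory=list)
    auth_scheme: str = "bearer"


class AgentRegistry:
    """Keeps the latest card per agent URL and reports newly seen agents."""

    def __init__(self, fetch_card: Callable[[str], dict]):
        self._fetch_card = fetch_card
        self._cards: Dict[str, AgentCard] = {}

    def refresh(self, candidate_urls: List[str]) -> List[AgentCard]:
        """Poll each URL once and return the cards not seen before."""
        new_cards = []
        for url in candidate_urls:
            try:
                raw = self._fetch_card(url)
                card = AgentCard(
                    name=raw["name"],
                    url=raw["url"],
                    description=raw.get("description", ""),
                    skills=list(raw.get("skills", [])),
                    auth_scheme=raw.get("auth_scheme", "bearer"),
                )
            except Exception:
                # An unreachable or malformed agent must not block the others.
                continue
            if url not in self._cards:
                new_cards.append(card)
            self._cards[url] = card
        return new_cards

    def find_by_skill(self, skill: str) -> List[AgentCard]:
        return [c for c in self._cards.values() if skill in c.skills]


# Hypothetical in-memory "network" used in place of real HTTP calls.
FAKE_NETWORK = {
    "https://hcm.example.internal": {
        "name": "Oracle HCM Agent",
        "url": "https://hcm.example.internal",
        "description": "Timesheets, leave, and reporting lines",
        "skills": ["leave_balance", "timesheet"],
    },
}


def fake_fetch(url: str) -> dict:
    return FAKE_NETWORK[url]  # raises KeyError for unknown agents
```

Calling `refresh` on a timer (every few minutes, in our case) is all the "registration" a new agent needs: once its card is reachable, the next poll picks it up.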

Request Routing

LLM-powered intent detection matches requests to agents. For “Show leave balance and schedule a meeting,” the Orchestrator fires parallel A2A calls to Oracle HCM (leave data) and MCP Integration (calendar).
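The fan-out pattern can be sketched in a few lines of `asyncio`. To keep the example runnable, a keyword table stands in for the LLM intent classifier, and the two agent clients are stubs that sleep briefly instead of making a real A2A call. All names and return values below are assumptions for illustration.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List

# Keyword table standing in for the LLM intent classifier (assumption).
INTENT_KEYWORDS: Dict[str, List[str]] = {
    "oracle_hcm": ["leave", "timesheet", "manager"],
    "mcp_integration": ["meeting", "calendar", "email", "policy"],
}


def detect_intents(query: str) -> List[str]:
    """Return every agent whose keywords appear in the query."""
    text = query.lower()
    return [
        agent
        for agent, words in INTENT_KEYWORDS.items()
        if any(word in text for word in words)
    ]


async def call_hcm(query: str) -> str:
    await asyncio.sleep(0.01)  # placeholder for an A2A round trip
    return "leave balance: 12 days"


async def call_mcp(query: str) -> str:
    await asyncio.sleep(0.01)  # placeholder for an A2A round trip
    return "meeting scheduled"


AGENT_CLIENTS: Dict[str, Callable[[str], Awaitable[str]]] = {
    "oracle_hcm": call_hcm,
    "mcp_integration": call_mcp,
}


async def route(query: str) -> Dict[str, str]:
    """Call every matching agent concurrently and collect the answers."""
    agents = detect_intents(query)
    results = await asyncio.gather(*(AGENT_CLIENTS[a](query) for a in agents))
    return dict(zip(agents, results))
```

Because the calls run concurrently, the total wait is roughly the slowest agent's latency rather than the sum of both.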

MCP Integration

Databases and APIs expose tools via MCP without becoming agents. The MCP Integration Agent bridges these to the A2A ecosystem.
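As a sketch of the bridging idea, the snippet below describes a tool with a name, a description, and an input schema (the same three pieces an MCP tool definition carries), then exposes it as a skill and dispatches calls to it. It deliberately avoids any real MCP SDK: `Tool`, `ToolBridge`, and `search_policies` are hypothetical names, and the policy list is made-up sample data.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class Tool:
    name: str
    description: str
    input_schema: Dict[str, Any]  # JSON Schema describing the arguments
    handler: Callable[..., Any]


def search_policies(query: str) -> List[str]:
    """Stand-in for the company database search tool."""
    policies = ["Remote work policy", "Travel expense policy", "Leave policy"]
    return [p for p in policies if query.lower() in p.lower()]


class ToolBridge:
    """Presents plain tools to the agent network as callable skills."""

    def __init__(self, tools: List[Tool]):
        self._tools = {t.name: t for t in tools}

    def advertised_skills(self) -> List[Dict[str, str]]:
        """What the bridging agent would list on its Agent Card."""
        return [
            {"id": t.name, "description": t.description}
            for t in self._tools.values()
        ]

    def invoke(self, tool_name: str, arguments: Dict[str, Any]) -> Any:
        tool = self._tools.get(tool_name)
        if tool is None:
            raise KeyError(f"unknown tool: {tool_name}")
        # Minimal validation: only checks that required arguments are present.
        required = tool.input_schema.get("required", [])
        missing = [key for key in required if key not in arguments]
        if missing:
            raise ValueError(f"missing arguments: {missing}")
        return tool.handler(**arguments)


bridge = ToolBridge([
    Tool(
        name="search_policies",
        description="Search company policies by keyword",
        input_schema={
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
        handler=search_policies,
    )
])
```

The tool itself stays a plain function with a schema. Only the bridge needs to know anything about agents.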

What We’re Doing Today

Employees use this for everything:

- “Show my leave balance and book time with my manager” — multi-agent query handled seamlessly.

- “Extract the data from this invoice” — OCR tool in the Orchestrator handles it instantly.

- “Submit my timesheet for this week” — Straight to the Timesheet sub-agent.

What’s Coming Next

We’re adding:

- Compliance agents that monitor requests and flag policy violations before execution

- Proactive agents that notify teams about approaching deadlines

- Procurement agents for vendor management

The beautiful part? We add these by registering new agents. The Orchestrator discovers them. Employees can use them. No rewiring required.

Benefits

- Infinite Extensibility — Add domains via agent registration, not code.

- Protocol-Driven Interoperability — Oracle, CrewAI, custom agents coexist via A2A.

- Framework Flexibility — Mix LangChain, Google ADK, AutoGen as needed.

- Zero-Downtime Expansion — Deploy agents during business hours, live in 5 minutes.

- Isolated Failures — Agent crashes don’t cascade across the system.
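Failure isolation is easier to believe with an example. One way to get it, sketched below under the assumption that agents are called through `asyncio`, is to give every outbound call its own timeout and error handling so that a crash or a hang turns into a status value instead of an exception that takes down the whole request. The three stub agents and the `safe_call` helper are hypothetical.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List


async def safe_call(
    name: str,
    call: Callable[[], Awaitable[str]],
    timeout_s: float = 0.05,
) -> Dict[str, str]:
    """Run one agent call; convert crashes and hangs into a status record."""
    try:
        result = await asyncio.wait_for(call(), timeout=timeout_s)
        return {"agent": name, "status": "ok", "result": result}
    except asyncio.TimeoutError:
        return {"agent": name, "status": "timeout", "result": ""}
    except Exception as exc:
        return {"agent": name, "status": "error", "result": str(exc)}


async def healthy_agent() -> str:
    return "12 days of leave remaining"


async def crashing_agent() -> str:
    raise RuntimeError("database connection lost")


async def slow_agent() -> str:
    await asyncio.sleep(1.0)  # far longer than the timeout
    return "too late"


async def fan_out() -> List[Dict[str, str]]:
    """Call three agents concurrently; one bad agent cannot sink the rest."""
    return await asyncio.gather(
        safe_call("oracle_hcm", healthy_agent),
        safe_call("mcp_integration", crashing_agent),
        safe_call("framework_agent", slow_agent),
    )
```

With this shape, the Orchestrator can still return the healthy agent's answer and tell the employee which part of the request could not be completed.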

Monitoring & Evaluation

Agent performance visibility is critical. We use Langfuse for LLM observability across the entire agent network. Every agent interaction—from the Orchestrator’s intent detection to Oracle HCM’s sub-agent routing to MCP tool invocations—is traced end-to-end.

Key metrics tracked: agent response latency, routing accuracy, A2A call success rates, and token consumption per agent. Langfuse dashboards surface bottlenecks quickly. If the MCP Integration Agent suddenly spikes in latency, we see it in real time. We also track LLM prompt performance: which intent classification prompts yield the highest accuracy, where hallucinations occur, and which tool descriptions lead to routing errors.

For evaluation, we can use Langfuse’s dataset feature to power regression testing. Before deploying prompt changes or new agents, we can run them against historical queries to confirm that routing accuracy doesn’t degrade. Production traces can also become training data for fine-tuning intent classifiers. This closed feedback loop should improve system reliability over time.
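The regression check itself is simple enough to sketch without any observability tooling. The example below replays a small set of labelled historical queries through two routers and only lets the candidate ship if it is at least as accurate as the baseline. The dataset, both routers, and the function names are invented for illustration. In practice the labelled queries would come from stored traces rather than a hard-coded list.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical labelled history: (query, agent that should handle it).
HISTORICAL_QUERIES: List[Tuple[str, str]] = [
    ("Show my leave balance", "oracle_hcm"),
    ("Submit my timesheet for this week", "oracle_hcm"),
    ("Book time with my manager", "mcp_integration"),
    ("Extract the data from this invoice", "orchestrator"),
]


def baseline_router(query: str) -> str:
    text = query.lower()
    if "leave" in text or "timesheet" in text:
        return "oracle_hcm"
    if "book" in text or "meeting" in text:
        return "mcp_integration"
    return "orchestrator"


def candidate_router(query: str) -> str:
    """A changed router that wrongly sends 'manager' queries to HCM."""
    text = query.lower()
    if "leave" in text or "timesheet" in text or "manager" in text:
        return "oracle_hcm"
    if "book" in text or "meeting" in text:
        return "mcp_integration"
    return "orchestrator"


def routing_accuracy(
    router: Callable[[str], str],
    dataset: List[Tuple[str, str]],
) -> Tuple[float, List[Dict[str, str]]]:
    """Return the fraction routed correctly plus the misrouted cases."""
    failures = []
    for query, expected in dataset:
        actual = router(query)
        if actual != expected:
            failures.append({"query": query, "expected": expected, "actual": actual})
    correct = len(dataset) - len(failures)
    accuracy = correct / len(dataset) if dataset else 0.0
    return accuracy, failures


def safe_to_deploy(candidate: Callable[[str], str]) -> bool:
    """Gate: the candidate must not be less accurate than the baseline."""
    baseline_acc, _ = routing_accuracy(baseline_router, HISTORICAL_QUERIES)
    candidate_acc, _ = routing_accuracy(candidate, HISTORICAL_QUERIES)
    return candidate_acc >= baseline_acc
```

The list of failures matters as much as the score: it tells you which queries a prompt change broke, which is usually the fastest route to fixing it.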

Conclusion

Here’s what we’ve learned from running this in production: building AI systems isn’t about creating the perfect monolithic agent. It’s about creating ecosystems where specialized agents can plug in, discover each other, and collaborate.

- Adding new domains can now take 2 weeks instead of 3–6 months.

- Routine employee queries can be handled end to end, without a human in the loop.

- One conversational interface spans HR, IT, finance, and operations.

- The system is built to absorb change: new frameworks and vendors slot right in.

The real win isn’t any single metric. It’s the fact that when we need to add capabilities—and we will, because business needs never stop evolving—we can do it without touching the core system. That’s the promise of composable architectures.

We’ve gone from “How do we integrate this?” to “Just register the agent.” That shift in thinking is how enterprises will scale AI from pilots to platforms.

Build for discovery, not integration. Let your agents find each other. The rest takes care of itself.

Future Enhancements

We started by assessing several agent orchestration approaches and building prototypes to test key design choices. As OCI capabilities in this area expand, we intend to integrate with OCI Enterprise AI where appropriate, using the Agent Spec protocol to maintain compatibility and portability.