As organizations accelerate the adoption of AI-driven applications, a new class of database workload has emerged. These workloads combine transactional consistency, real-time analytics, and high-throughput vector similarity search, and they demand not only performance but also predictable, linear scalability.

Oracle AI Database with Oracle Real Application Clusters (RAC) transparently scales these new AI-native application workloads with its unique shared-everything architecture. By using RAC, organizations can scale AI inference, similarity search, and other AI-native workloads without changing or re-architecting the application.

Scaling AI Workloads Without Complexity

Oracle RAC’s active-active architecture delivers a decisive advantage for CPU- and memory-intensive AI database workloads. By running Oracle AI Database with RAC, organizations can achieve high throughput and predictable response times for real-time AI workloads.

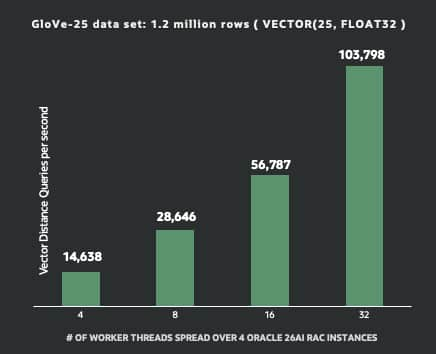

As shown in the graph, RAC enables linear scalability for AI-driven vector similarity searches, achieving more than 100,000 vector distance queries per second on the GloVe workload across four nodes and helping organizations meet the demanding needs of production systems.

The GloVe workload used here contained pre-trained, 25-dimensional word vectors generated using the GloVe (Global Vectors for Word Representation) algorithm.

Oracle RAC also enhances availability and operational resilience for these always-on AI services by eliminating single points of failure and enabling transparent failover across nodes. Because compute and memory resources scale out with each additional RAC node, teams can grow capacity incrementally while maintaining consistent performance as vector indexes and embedding volumes expand.

A Unified Platform for AI and Transactional Workloads

Vector similarity search introduces a fundamentally different workload profile compared to traditional indexed access. Instead of simple lookups, these queries involve high-dimensional computations, nearest-neighbor search, and large in-memory working sets, all of which must execute efficiently under high concurrency.
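To make the shape of this workload concrete, the following minimal Python sketch runs an exact (brute-force) nearest-neighbor search over 25-dimensional vectors. It is only an illustration of the computation involved, not how Oracle AI Database executes vector queries. The vectors are synthetic random values standing in for real embeddings, and the names `cosine_distance`, `nearest_neighbors`, and `item42` are hypothetical.

```python
import heapq
import math
import random


def cosine_distance(a, b):
    """Return 1 - cosine similarity for two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def nearest_neighbors(query, vectors, k):
    """Exact k-nearest-neighbor search: score every vector, keep the k closest."""
    scored = ((cosine_distance(query, vec), key) for key, vec in vectors.items())
    return heapq.nsmallest(k, scored)


# Assumption: synthetic 25-dimensional vectors, not actual GloVe data.
rng = random.Random(0)
DIM = 25
vectors = {
    f"item{i}": [rng.gauss(0.0, 1.0) for _ in range(DIM)] for i in range(1000)
}

# Query with a stored vector so the closest match is the vector itself.
query = vectors["item42"]
top3 = nearest_neighbors(query, vectors, k=3)
for distance, key in top3:
    print(f"{key}: {distance:.4f}")
```

Every query in this sketch touches every stored vector, so cost grows with both the number of vectors and their dimensionality. That is why this class of workload is CPU- and memory-intensive, and why approximate indexes are commonly used to avoid scanning the full set.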

Oracle AI Database handles this natively. With optimized vector indexing such as HNSW, efficient distance calculations, and full SQL integration, vector search becomes part of the database engine itself, allowing semantic search, relational filtering, and transactional consistency to operate in a single execution path. SQL integration eliminates the need for separate vector databases and the complexity that comes with them. Data no longer needs to be duplicated or synchronized across systems, queries do not incur cross-system latency, and governance remains consistent. AI, relational, graph, document, and transactional workloads can run together on the same data, in real time.
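As a rough illustration of what combining relational filtering with similarity ranking means, the Python sketch below applies an attribute predicate and a distance ranking to the same in-memory rows in one pass. It is a conceptual model only: the row layout, the field names (`category`, `in_stock`, `embedding`), and the `filtered_search` helper are hypothetical and do not represent Oracle SQL syntax or the database's execution plan.

```python
import math

# Hypothetical rows: relational attributes stored next to a small embedding.
ROWS = [
    {"id": 1, "category": "book", "in_stock": True, "embedding": [0.9, 0.1, 0.0]},
    {"id": 2, "category": "book", "in_stock": False, "embedding": [1.0, 0.0, 0.0]},
    {"id": 3, "category": "toy", "in_stock": True, "embedding": [1.0, 0.0, 0.0]},
    {"id": 4, "category": "book", "in_stock": True, "embedding": [0.0, 1.0, 0.0]},
]


def filtered_search(rows, query, predicate, k):
    """Keep rows matching the predicate, then rank them by Euclidean distance."""
    candidates = [row for row in rows if predicate(row)]
    candidates.sort(key=lambda row: math.dist(row["embedding"], query))
    return [row["id"] for row in candidates[:k]]


# Find the two in-stock books closest to the query vector.
result = filtered_search(
    ROWS,
    query=[1.0, 0.0, 0.0],
    predicate=lambda row: row["category"] == "book" and row["in_stock"],
    k=2,
)
print(result)
```

Rows 2 and 3 hold the vectors closest to the query, but the predicate excludes them. When the attributes and embeddings live in separate systems, producing the same answer requires moving identifiers or vectors between them, which is the duplication and cross-system latency described above.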

Oracle AI Database with RAC extends this unified approach by scaling these workloads transparently across active-active instances. As demand grows, capacity can be added without redesigning the architecture. Performance stays consistent, and data, management, and security remain in one place rather than being fragmented and duplicated across systems.

Enterprise-Grade, Real-Time AI at Scale – Built for Continuous Growth

Enterprise AI systems must deliver fast, consistent results under load while remaining continuously available. This becomes increasingly critical as both data volumes and query rates grow. Oracle AI Database with RAC addresses this by distributing AI workloads across all active nodes, delivering high throughput and low latency under heavily concurrent access. At the same time, built-in high availability provides uninterrupted access for mission-critical applications.

Because AI processing happens directly where the data resides, organizations can avoid the delays introduced by data movement or cross-system calls. This allows AI to operate in real time, directly on transactional data, rather than through separate pipelines that may be delayed and become inconsistent. As data growth accelerates, this architecture provides a clear path forward: scale out incrementally, keep a single logical database, and support evolving AI workloads without introducing new systems or operational overhead.

Conclusion

Oracle RAC has long been the foundation for scalable and highly available database systems. With Oracle AI Database, it becomes a natural platform for high-throughput enterprise AI. Organizations can reduce architectural complexity and meet the demands of real-time AI workloads by integrating vector search, SQL-native AI processing, and transactional data within a single system and scaling it transparently across RAC.

The result is a unified platform that delivers fast, consistent results, reduces data movement, and scales with growing demand, enabling AI to move from isolated use cases to a core part of enterprise systems.

Learn More

- Run AI vector search without interruption: Leverage Oracle RAC’s built-in high availability to keep your AI queries and similarity searches online during planned maintenance and unplanned outages.

- Scale vector workloads linearly: Expand vector search and indexing across Oracle RAC nodes to handle growing data volumes and high-concurrency workloads.