Many thanks to our NVIDIA co-authors, Ikroop Dhillon, Director of Developer Relations, and Manas Singh, Technical Product Manager.

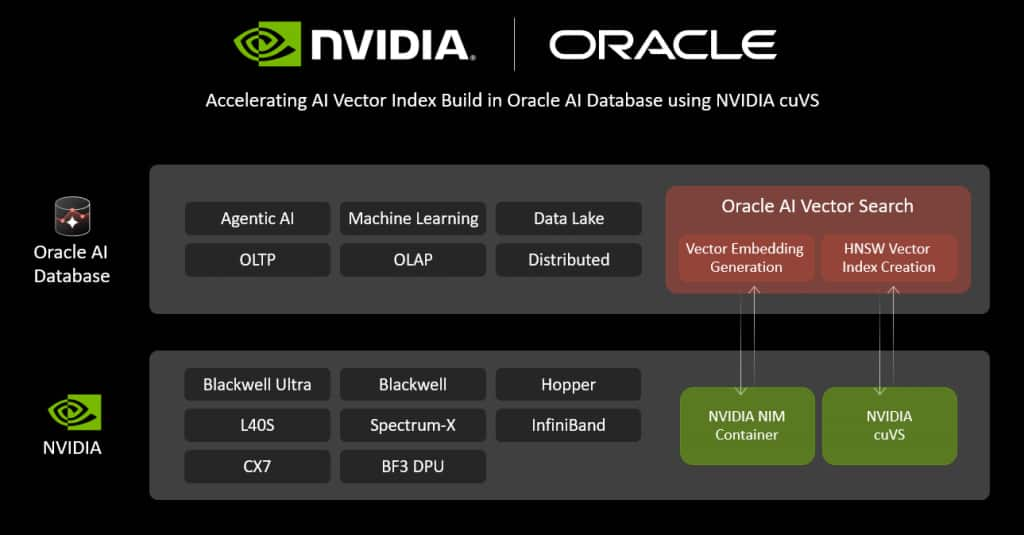

At NVIDIA GTC 2026, Oracle is sharing two advances designed to help organizations scale AI with better performance and cost efficiency using their own enterprise data. First, we are announcing the general availability (GA) of GPU-accelerated vector index generation in Oracle AI Database 26ai using NVIDIA hardware and NVIDIA cuVS. Second, we are showcasing an implementation for cost-efficient enterprise AI agents that integrates Open Agent Specification and the NVIDIA NeMo Agent Toolkit with Oracle AI Database, demonstrating how multi-step agents can dynamically select the right model for each step to balance quality, latency, and cost.

“Oracle AI Database brings AI capabilities directly to your enterprise data. With the general availability of GPU-accelerated vector index generation in Oracle AI Database 26ai using NVIDIA hardware and cuVS, we are greatly speeding up a highly compute-intensive part of the vector search pipeline. Customers can now build and refresh vector indexes much more quickly while keeping their applications simple and their data secure,” said Tirthankar Lahiri, Senior Vice President of Mission-Critical Data and AI Engines, Oracle Database.

“Fast, scalable vector indexing is essential for semantic search, RAG, and agentic AI. By combining NVIDIA GPUs and cuVS with Oracle AI Database vector search capabilities, customers can improve indexing throughput and efficiency so developers can upload their rapidly growing unstructured data faster into their AI applications for fresh and accurate responses,” said Ian Buck, VP / General Manager, Hyperscale and HPC, NVIDIA.

Enterprise AI is moving quickly from early experiments to production systems that must meet real requirements for latency, cost, security, and operational simplicity. The work we are highlighting at GTC reflects a shared focus: combining database-grade reliability with GPU acceleration and AI software to help customers build and run AI workloads at enterprise scale.

Accelerating Oracle AI Database Vector Index generation using NVIDIA cuVS

Vector indexes are essential for accelerating approximate nearest neighbor (ANN) search over enterprise embeddings. As vector datasets grow and indexes must be periodically refreshed as new content arrives, index creation and maintenance can become one of the most compute-intensive stages in an AI vector search workflow.
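To make the cost of index creation concrete, here is a minimal sketch of a partition-based ANN index in plain Python. It is a toy illustration only, not the algorithm used by Oracle AI Database or cuVS. The function names, the hand-picked centroids, and the sample vectors are all assumptions made for the example. The build step compares every vector against every centroid, which is the kind of dense, repetitive arithmetic that grows with the dataset and has to be repeated when the index is refreshed.

```python
import math

def cosine_distance(a, b):
    """Cosine distance between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm

def build_index(vectors, centroids):
    """Assign each vector to its nearest centroid (the expensive build step)."""
    partitions = {i: [] for i in range(len(centroids))}
    for vec_id, vec in enumerate(vectors):
        nearest = min(range(len(centroids)),
                      key=lambda c: cosine_distance(vec, centroids[c]))
        partitions[nearest].append(vec_id)
    return partitions

def ann_search(query, vectors, centroids, partitions, k=1, n_probe=1):
    """Approximate search: scan only the n_probe closest partitions."""
    ranked = sorted(range(len(centroids)),
                    key=lambda c: cosine_distance(query, centroids[c]))
    candidates = [vid for c in ranked[:n_probe] for vid in partitions[c]]
    return sorted(candidates,
                  key=lambda vid: cosine_distance(query, vectors[vid]))[:k]

def exact_search(query, vectors, k=1):
    """Exact search: scan every vector (the baseline an index avoids)."""
    return sorted(range(len(vectors)),
                  key=lambda vid: cosine_distance(query, vectors[vid]))[:k]

# Hypothetical 2-D embeddings and hand-picked centroids. A real build would
# learn the centroids (or a graph) from the data, which adds further cost.
vectors = [(1.0, 0.1), (0.9, 0.2), (0.1, 1.0), (0.2, 0.9)]
centroids = [(1.0, 0.0), (0.0, 1.0)]
partitions = build_index(vectors, centroids)

query = (0.95, 0.05)
approx = ann_search(query, vectors, centroids, partitions)
exact = exact_search(query, vectors)
```

In this small example the approximate search scans only one of the two partitions and still returns the same nearest neighbor as the exact scan. With realistic data, probing fewer partitions trades some recall for speed.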

Building on our work shown at Oracle AI World 2025, Oracle has continued to collaborate with NVIDIA to strengthen the efficiency and robustness of GPU acceleration for vector workloads. Today, we are announcing that GPU-accelerated vector index generation is generally available in Oracle AI Database 26ai using NVIDIA hardware and cuVS.

Customers are using Oracle AI Database’s AI Vector Search capabilities with NVIDIA hardware across a range of real-world workloads.

Biofy, a health-tech company using AI to rapidly identify bacterial infections, predict antibiotic resistance, and recommend targeted treatments in hours instead of days, encodes bacterial DNA as vectors and uses Oracle AI Vector Search for advanced sequencing workflows, where faster index builds and refresh cycles help teams move from data ingestion to actionable results more quickly.

Sofya, an AI healthcare company providing real-time medical transcription and structured clinical documentation with over one million clinical encounters processed, is using Oracle AI Vector Search on NVIDIA hardware to support high-performance, cost-efficient real-time speech-to-text, reducing physicians’ daily documentation burden by enabling real-time transcription and structured clinical notes during consultations. Beyond transcription, the platform structures biomedical-grade patient data and applies a clinical reasoning layer aligned with evidence-based guidelines to support more consistent documentation and improved clinical workflows.

Oracle AI Vector Search is highly optimized to leverage modern CPU technology, and GPU acceleration complements that foundation by offloading the most compute-dense portions of the workflow. This helps accelerate vector index generation while preserving the database’s core strengths for mission-critical transactions and analytics.

Optimized AI agents with Open Agent Specification, Oracle AI Database, and NVIDIA NeMo Agent Toolkit

As AI agents mature, they are increasingly composed of multiple steps including reasoning, validation, tool invocation, retrieval, and response generation, where each step has different quality, latency, and cost requirements.

A common challenge is that many implementations assign a single model to every step, which can drive up operating costs (overusing larger models) or degrade quality (overusing smaller models). A more systematic approach connects agent design to model-aware optimization.

At GTC, we are showcasing a reference implementation that brings together:

- Open Agent Specification (Agent Spec): A structured framework for defining multi-step agents with explicit reasoning, validation, and tool-invocation stages.

- Oracle AI Database: The trusted enterprise data layer where sensitive business data, including vectors, can reside securely and be accessed through structured SQL, APIs, and SDKs.

- NVIDIA NeMo Agent Toolkit: Profiling and optimization capabilities that evaluate which foundation model best fits each step.

Together, this enables hybrid model allocation: selecting different models for different steps to achieve strong outcomes while controlling token usage and operating costs.
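As a rough illustration of hybrid model allocation, the following Python sketch picks, for each agent step, the cheapest model whose measured quality meets that step's requirement. It does not use the Agent Spec or NeMo Agent Toolkit APIs. The model names, costs, quality scores, and thresholds are hypothetical placeholders standing in for the measurements a profiler would produce.

```python
# Hypothetical per-model profile: relative cost per call and a quality
# score in [0, 1] for each agent step. All numbers are invented.
MODELS = {
    "small": {"cost": 1.0,
              "quality": {"retrieval": 0.92, "reasoning": 0.70, "validation": 0.90}},
    "large": {"cost": 5.0,
              "quality": {"retrieval": 0.95, "reasoning": 0.93, "validation": 0.96}},
}

# Hypothetical minimum acceptable quality for each step.
REQUIRED_QUALITY = {"retrieval": 0.90, "reasoning": 0.90, "validation": 0.85}

def allocate(models, required):
    """For each step, choose the cheapest model that meets its threshold."""
    plan = {}
    for step, threshold in required.items():
        eligible = [name for name, profile in models.items()
                    if profile["quality"][step] >= threshold]
        if not eligible:
            raise ValueError(f"no model meets the quality bar for step {step!r}")
        plan[step] = min(eligible, key=lambda name: models[name]["cost"])
    return plan

def total_cost(models, plan):
    """Sum the relative cost of one call per step under the given plan."""
    return sum(models[model]["cost"] for model in plan.values())

plan = allocate(MODELS, REQUIRED_QUALITY)
hybrid_cost = total_cost(MODELS, plan)
single_model_cost = total_cost(MODELS, {step: "large" for step in REQUIRED_QUALITY})
```

With these invented numbers, only the reasoning step needs the larger model, so the hybrid plan costs 7.0 units per run compared with 15.0 when the larger model handles every step.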

Demo: “AI Investigator” agent

To illustrate the approach, we have implemented a representative AI Investigator agent (defined using the Open Agent Spec format) for a Financial Crime Compliance workflow:

- Customer transaction, account, and relationship data, together with risk indicators and historical records, reside in Oracle AI Database.

- The agent interacts with the database via structured SQL generation, APIs, or SDKs.

- Each step can be executed using different Nemotron-3 models (for example, Nano for lower-cost steps or Super for more in-depth reasoning).

Using the NeMo profiler and optimizer, our demo then evaluates the agent workflow and identifies step-by-step model allocations that can deliver high-quality outcomes while also significantly reducing token consumption and operating costs. Finally, with NeMo Agent Studio, we visualize optimization trajectories and cost-quality trade-offs to make those decisions concrete and operational.
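One way to reason about cost-quality trade-offs is to keep only the allocations that are not dominated, meaning no other allocation is at least as cheap and at least as good while being strictly better on one of the two. The Python sketch below shows that filter on invented data. The candidate names, costs, and quality scores are hypothetical, and the code is not drawn from the NeMo tooling.

```python
def pareto_front(candidates):
    """Return names of candidates that no other candidate dominates.

    Each candidate is (name, cost, quality). Lower cost and higher
    quality are better.
    """
    front = []
    for name, cost, quality in candidates:
        dominated = any(
            (c <= cost and q >= quality) and (c < cost or q > quality)
            for _, c, q in candidates
        )
        if not dominated:
            front.append(name)
    return front

# Hypothetical allocations with invented relative cost and quality.
candidates = [
    ("all-small", 3.0, 0.78),
    ("hybrid-a", 7.0, 0.91),
    ("hybrid-b", 9.0, 0.90),
    ("all-large", 15.0, 0.94),
]

front = pareto_front(candidates)
```

Here `hybrid-b` drops out because `hybrid-a` is both cheaper and higher quality. The remaining three allocations represent genuine trade-offs that a team would choose between based on its quality bar and budget.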

This reference implementation highlights a practical path toward production-grade agentic AI, helping customers answer not only “can an agent work?” but also “can it work well, predictably, and cost-efficiently using their own data?”

See It In Action

At NVIDIA GTC 2026, we will share more on both the GA capability and the reference implementation, including how these advances support common enterprise patterns such as semantic search, RAG, and multi-step agent workflows grounded in governed data.

- Session: Accelerate Oracle AI Database Embedding and Vector Index Creation With NVIDIA, Wednesday, March 18, 3pm PT (session link)

- Theater Talk: Expo Hall, Monday 3:15pm, Wednesday 12:15pm, and Thursday 12:15pm PT

- Demos: Visit the Oracle booth to see all the capabilities live and talk with our engineers.

Learn more

- NVIDIA Blog: NVIDIA for Modern Enterprise Data Processing

- Oracle AI Vector Search User’s Guide

- NVIDIA cuVS

- NVIDIA NeMo Agent Toolkit

- Try Oracle AI Database 26ai

- Open Agent Specification

Ikroop Dhillon is Director of Developer Relations for enterprise database and cloud at NVIDIA. Leading a specialized team of technical strategists, she drives partnerships that accelerate the development of cutting-edge, GPU-accelerated applications. By leveraging the full NVIDIA AI stack, she empowers enterprise partners to transform their industries with breakthrough AI innovations. Dhillon brings a robust background in product management and business development, with a proven track record of launching successful hardware and software products at leading full-stack companies including Oracle, Sun Microsystems, and Intel. Known for bridging the gap between deep technical innovation and market strategy, she builds scalable solutions for evolving enterprise needs. Dhillon holds a B.S. in Computer Science from the University of Washington and is an alumna of the Stanford Graduate School of Business, having completed the flagship Stanford Executive Program.

Manas Singh is the technical product manager for Accelerated Databases & Vector Search at NVIDIA. He has worked as an ML engineer at companies including Amazon and PwC, building deep-learning-based customer support chatbots and training and fine-tuning multilingual LLMs. He studied Mechanical and Computer Science Engineering at the Indian Institute of Technology (IIT) Bombay and holds an MBA from UC Berkeley.