Introduction

In the fast pace of today’s enterprise landscape, decisive action depends on immediate, trustworthy insights. Imagine if your executives could “talk” to your core databases as naturally as they talk to their teams by simply asking,

“Who are my top three customers with margin drop this quarter?” or

“Which region saw revenue grow but profitability fall?” and instantly receiving clear, actionable answers—visually presented, fully verified, and delivered without the need for dashboards, SQL, or traditional reporting cycles. This is the new reality made possible with Oracle’s suite of products transforming decision making:

- Executives get on-demand, trusted analytics straight from the source.

- Business teams are empowered to focus on strategy, not manual data pulls.

- IT reduces time spent mapping KPIs or fielding routine report requests.

What if your enterprise database could answer your questions as intelligently and promptly as your team members do? An agentic Natural Language to SQL (NL2SQL) solution powered by Oracle can do exactly that.

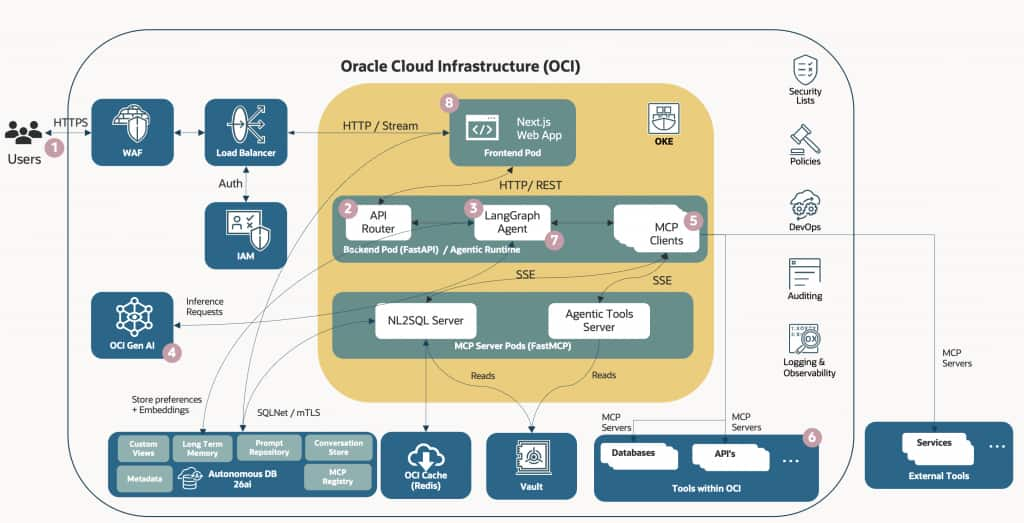

Here are the core OCI services and their roles that help achieve this outcome:

Oracle 26ai Database: Provides semantic vector search for schema mapping, glossary context, and secure, governed data access for accurate NL2SQL translation.

Oracle Gen AI Services: Utilizes advanced language models to convert natural language into precise, governed SQL, integrating business metadata and context in real time.

Oracle Container Engine for Kubernetes (OCI OKE): plays an important infrastructure and orchestration role in an MCP-based agentic AI solution. It is not the agent framework itself but the platform where agents, tools, and MCP servers run and scale.

The production architecture is built on a containerized microservices model orchestrated via Oracle Kubernetes Engine (OKE):

- Agentic Tools MCP Server: A FastMCP server running within the cluster. It provides standard agentic tools such as calculating math expressions, getting the current time etc.

- Frontend: A Next.js application running as a Kubernetes Deployment. It provides the user interface for chatting, displaying artifacts (markdown, code, charts), and a settings dashboard.

- Backend API: A FastAPI Python server running as a Kubernetes Deployment. It manages persistence (Autonomous DB), handles the LangGraph agent execution loop, streams LLM completions, and acts as the orchestrator for all internal MCP client connections.

- NL2SQL MCP Server: A FastMCP server operating over Server-Sent Events (SSE) within the cluster. It connects directly to the Oracle Database to perform schema searches, data sampling, secure read-only SQL execution, and data insight generation.

These integrated Oracle products expose database capabilities as MCP tools while enforcing enterprise security and role-based access control, delivering a conversational layer over your core databases (e.g., EBS, custom apps) and other MCP servers. Here’s how:

- Standardized, pluggable tools (MCP): Database functions (schema lookup, SQL execution, glossary, validation etc.) are exposed via MCP, enabling plug-and-play composition, faster integration, and easy failover.

- Security passthrough and RBAC (MCP): MCP tools inherit OCI identity, roles, RLS/VPD, and masking policies, supporting consistent enforcement across channels.

- Data Complexity Abstracted: Complex schemas and siloed data are made accessible through a semantic layer, enabling users to interact using natural business terms.

- Business Language Embedded: Your unique glossary such as “client,” “customer,” and “account” truly map to the corresponding data, automatically.

- Governed SQL via Gen AI: Generative AI translates natural language queries into accurate, governed SQL in real time.

- Secure, Scalable Infrastructure: All running securely on OCI—with visual insights delivered through dynamic interfaces, not static dashboards.

With capabilities standardized as MCP tools and security enforced end to end, the differentiator is how these tools coordinate per request. An agentic architecture turns intent into a governed, multi-step plan that adapts in real time.

Why Agentic Orchestration Matters:

- Stepwise planning: Breaks complex questions into sub-tasks (entity resolution, KPI logic, date filters, joins).

- Dynamic tool use: Chooses the right tool at each step (vector retrieve, glossary lookup, SQL generator, verifier, visualizer).

- Resilience/ fallbacks: Detects errors from tool outputs, routes around tool/model failures; re-tries with alternate paths.

- Iterative refinement: Compares candidate SQLs, evaluates metrics/row counts, and selects the best-performing query under governance constraints.

- SQL Auto fixing: SQL Auto Fix MCP is an agent tool that automatically detects, analyzes, and corrects SQL errors using LLM reasoning before executing the query.

- Context continuity: Maintains conversation/session state to support follow-ups like “show top 3” or “drill into APAC.”

Now, business users and executives begin with what they truly care about—critical business questions—and instantly receive verified, understandable answers, tailored in the language and visuals they need.

“Consult your organization’s privacy, security, and data governance policies before connecting to an MCP or exposing data outside your enterprise. Enterprise solutions should undergo appropriate privacy, security, and compliance review during presales, with additional audit and governance controls applied during implementation and deployment.”

Architecture: NL2SQL– Agentic – MCP and 26ai

How The Solution Works ?

1. User Input: The user submits a question via the Next.js chat interface.

2. API Orchestration: The frontend sends a POST (with message history) to the FastAPI backend.

3. Agent Loop Initiation: The backend initiates a LangGraph execution loop

4. LLM Reasoning: The OCI Generative AI model receives the conversation history and the list of available tools. It decides whether to answer directly or invoke a tool.

5. Tool Execution: If the LLM invokes a tool (e.g., “query the database for sales”), the backend routes that execution to the appropriate MCP server container.

6. Data Processing: The MCP server executes the action (e.g., runs Oracle SQL), formats the result, and returns it to the backend.

7. Final Response: The LangGraph loop feeds the tool result back to the LLM, which synthesizes the final natural language answer.

8. Streaming output: The final answer is streamed back to the Next.js frontend token-by-token alongside execution metadata (showing the user exactly what SQL was run).

Supremo Electronics: Executive Insights

To illustrate, let’s look at a fictional example of a global manufacturer called Supremo Electronics. Executives need real-time answers to sales, margin, and regional performance—using natural language.

The business glossary uses “deal,” “client,” and “profit drop” instead of technical database column names like doc_type, cust_id, or margin_deviation. The Supremo sales schema spans hundreds of tables, many custom. Executives often combine metrics across EBS, legacy, and new fusion modules.

The CEO, Jane, wants to know:

“Which customer accounts increased revenue but decreased profitability in APAC this quarter?”

With an Oracle powered NL2SQL solution, Jane can simply ask. Here’s how the NL2SQL architecture, supercharged with Oracle Gen AI & vector search in 26ai, makes it happen.

How Oracle 26ai Vector Store Empowers NL2SQL: 6 Critical Ways

1. Schema Discovery & Clarification

The Challenge: Supremo’s schema is vast and changes often. Business users say “customer” or “region” but don’t know DB objects or join logic.

Vector Solution: Every table, column, and relation is embedded as a vector in the Oracle 26ai store. When Jane asks her question, the system converts “customer accounts” to a vector and semantically matches it to relevant tables/fields (cust_account, customer_id, sales_region)—even as schemas evolve.

Result: No more guessing table names or relying on outdated data models. The solution “finds” the right data, dynamically.

2. Enriching Prompts with Business Glossary and Metadata

The Challenge: Terms like “profit drop,” “deal,” or “client” don’t always match schemas. Business context is often missing from traditional NL2SQL.

Vector Solution: Business glossary definitions and metadata (KPIs, synonyms, field descriptions) are embedded in the vector store. When Jane asks the question, the engine fetches the nearest glossary entries:

“Profit drop” = negative margin deviation

“Client” = mapped to customer_account

These are woven into the LLM prompt, so the AI understands and generates SQL grounded in Supremo’s context—not generic logic.

3. Dynamic Retrieval of K-shot Examples

The Challenge: Even with good base prompts, accuracy jumps when LLMs see relevant question/SQL pairs as examples—especially for complex, industry-specific queries.

Vector Solution: Thousands of prior NL/SQL query pairs are stored with embeddings. When Jane asks her question, the LLM fetches the most relevant k-shot examples dynamically—e.g., “Show margin trends by customer” and “List APAC accounts by profit deviation.”

Result: The AI is primed with contextual examples every time, allowing higher likelihood of accuracy even for novel query types.

4. Better Disambiguation and Reduced Hallucination

The Challenge: LLMs may invent fields or misunderstand business intent (“sales region” might mean different joins in different schemas).

Vector Solution: With prompt enrichment (see above) plus schema and glossary context, the LLM uses real definitions, field lists, and entity relationships—fetched semantically via vector search.

Result: The risk of hallucination is reduced. Jane gets actionable, well-formatted SQL every time, even when her wording is ambiguous.

5. Personalization and Contextual Awareness

The Challenge: Jane (the CEO) and Sam (the regional VP) have different intent—even if their questions sound similar.

Vector Solution: Each user’s context and query history are stored as vectors. When Sam asks, the system personalizes prompts with his department lingo and metrics (e.g., “net new clients in APAC”), retrieving past queries similar to his style.

Result: Answers match not just the database, but the user’s role and perspective. Adoption soars.

6. Cross-Domain Query Enablement

The Challenge: Supremo runs multiple overlapping systems: EBS, Fusion, and custom sales apps. Key business entities are called different things in different places.

Vector Solution: Semantic vectors link similar fields/entities across all data sources. When Jane asks about “APAC profit drop,” the engine resolves this across the legacy and modern tables, merging results, and mapping business logic seamlessly.Result: The CEO gets a unified answer—even if the data spans domains and changes terminology. Integration is natural and extensible.

How Oracle Gen AI Services Empower Agentic NL2SQL: 5 Key Advantages

1. Model Flexibility for Every Task

Choose from a suite of advanced language models (e.g., Grok, Cohere, Llama) optimized for specific tasks—like generating SQL queries, explaining analytic results in business terms, or recommending insightful visualizations.

2. On-Demand, Pay-Per-Use Efficiency

Invoke Gen AI services only when needed, allowing enterprises to benefit from elastic “pay as you go” scalability—no need to provision or manage dedicated AI infrastructure.

3. Low Latency and Cost Predictability with Dedicated AI Cluster

For high throughput use cases, Oracle Dedicated AI Cluster (DAC) option delivers predictable performance and cost, letting organizations run AI queries at scale with minimal latency.

4. Continuous Improvement with Feedback Loops

Seamlessly integrate user feedback into prompt tuning and model selection, so outputs become more accurate and relevant as the system learns from real business interactions.

5. Regulatory Compliance and Data Safety Oracle Gen AI services are delivered within the secure boundaries of OCI, providing enterprise-grade governance, privacy, and compliance support for sensitive business data.

NL2SQL Agentic Solution: Oracle 26ai + MCP Server Configuration

NL2SQL Agentic Solution is powered by a custom MCP (Model Context Protocol) server with tools specially designed for NL2SQL, enabling seamless integration with enterprise data. By embedding MCP tools, the platform allows agents to securely access and interact with business data, execute queries, and perform automated actions. Common MCP tools that can be integrated include Schema Explorer, Metadata Lookup, Business Glossary lookup, Data Profiling Tool, Execute Query, Data Insights etc. This setup enables users to ask natural language questions while the underlying MCP-enabled agent orchestrates database interactions and retrieves accurate insights from Oracle 26ai.

Executive-Ready Response to Jane’s Key Business Question

Here’s an example of an executive-ready answer Jane received when she posed the question, “what is the net profit by entity in fy25?”

Response

The Insight

Based on the data analysis:

Description: The dataset aggregates annual net profit for various entities in FY25, showing total net profit summed across months for each entity. Entities include codes like AEN, ALS, and others, with net profits ranging from 122,880,000 to 460,800,000

Patterns:

High-Performing Entities: Entities like DII, GMS , and QSS show the highest net profits at 460,800,000 each, indicating strong financial performance in these sectors.

Mid-Tier Entities: AEN and LGL follow with profits around 435,540,000 and 368,640,000, suggesting consistent but slightly lower performance.

Lower Profits: Entities such as EES, MPP, and RRE consistently report the lowest profits at 122,880,000, potentially indicating operational challenges or smaller scale.

Clustering: There is noticeable clustering in profit levels—three entities at the top (460M), a few in the mid-range (150M-400M), and several at the bottom (around 120M), which may reflect industry or operational differences.

Anomalies:

Uniform Low Profits: Multiple entities (EES, MPP, RRE) share identical low profits of 122,880,000, which could indicate data duplication, standardized reporting, or shared economic factors—worth verifying for accuracy.

No Negative Values: All profits are positive and relatively high, with no losses reported, which is unusual for a diverse set of entities and may suggest incomplete data or a focus on profitable operations only.

Potential Outlier: AEN’s profit (435M) is close to the top tier but not matching exactly, potentially an outlier if expected to align with similar entities. This analysis highlights top performers (DII, GMS, QSS) driving overall profitability, while lower-tier entities may need strategic improvements. If the query aims to understand “why” certain entities underperform, further breakdown by monthly trends or cost factors could provide deeper insights.

Conclusion

With Oracle-powered NL2SQL Agent, executives ask in plain language and receive trusted, actionable insights—fast. Vector search and Gen AI services ground results in your schema and glossary, while MCP and LangGraph provide governed, self-correcting orchestration at enterprise scale. Let’s make your data talk back—clearly, intelligently, and in the language that drives your business forward.

Watch this space: next, we’ll cover an agentic RAG architecture and how MCP + A2A connect your data and tool ecosystem—delivering faster, more accurate answers through parallel collaboration.