How Kubernetes supports enterprise AI digital assistants

Organizations transitioning from initial AI testing to production environments require platforms that can reliably operate multimodal workloads. Oracle Cloud Infrastructure provides the compute power, networking, and lifecycle automation needed to support conversational AI applications at scale. Using Kubernetes as the orchestration layer facilitates consistent deployment, rolling updates, and modular service separation, which can help improve performance and operational reliability.

Why GPU acceleration can help improve performance for AI assistants on OCI

Digital assistants built with large language models, advanced speech features, and real-time rendering engines benefit from GPU acceleration. Oracle Cloud Infrastructure offers NVIDIA A10 GPU shapes optimized for inference, delivering strong performance for avatar rendering, natural language processing, and multimodal workloads. Automation tools such as Terraform and Ansible provide consistent provisioning across development and production cycles.

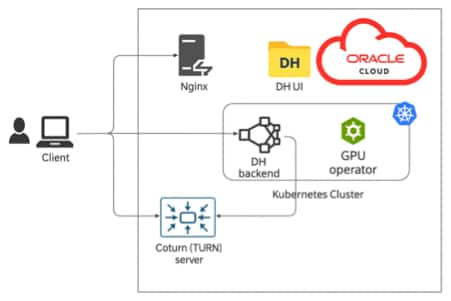

How the architecture enables modular and scalable digital assistant capabilities

The solution follows a microservices architecture, so each component (such as the avatar renderer, dialogue manager, or speech service) can scale independently. Kubernetes provides container orchestration, workload isolation, and automated failover. Red Reply extended NVIDIA’s original implementation by adapting the reference code for OCI, adding deployment automation, and enabling integration with additional AI features based on customer requirements.
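
As a rough illustration of independent scaling, the following Python sketch builds one Kubernetes Deployment manifest per component, each with its own replica count and a rolling-update strategy. The component names, replica counts, namespace, and image paths are hypothetical placeholders chosen for this example; they are not taken from the Red Reply or NVIDIA implementation.

```python
import json

# Hypothetical components and replica counts, chosen only for illustration.
COMPONENTS = {
    "avatar-renderer": 2,
    "dialogue-manager": 3,
    "speech-service": 2,
}


def build_deployment(name: str, replicas: int, namespace: str = "assistant") -> dict:
    """Return an apps/v1 Deployment manifest for a single microservice."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "namespace": namespace, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            # Replace pods gradually so the service stays available during updates.
            "strategy": {
                "type": "RollingUpdate",
                "rollingUpdate": {"maxUnavailable": 0, "maxSurge": 1},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            # Placeholder image reference (assumption); use a real registry path.
                            "image": f"registry.example.com/assistant/{name}:1.0.0",
                        }
                    ]
                },
            },
        },
    }


# One manifest per component, so each can be scaled or updated on its own.
manifests = [build_deployment(name, count) for name, count in COMPONENTS.items()]

if __name__ == "__main__":
    # kubectl accepts JSON as well as YAML, so this output can be piped to `kubectl apply -f -`.
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": manifests}, indent=2))
```

Because each component has its own Deployment, changing the replica count or image of the dialogue manager, for example, does not touch the avatar renderer or the speech service.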

How GPU orchestration works inside the Kubernetes cluster on OCI

Kubernetes schedules GPU workloads automatically: pods that declare a GPU requirement are placed on GPU nodes, so GPU capacity is allocated only when a workload needs it. This approach keeps resource consumption predictable and supports demanding workloads such as 3D avatar rendering or latency-sensitive conversational pipelines. Namespace-level GPU allocation limits how many GPUs each team or environment can claim, which helps with governance and budget control.
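
The two mechanisms described above can be sketched in Python as plain manifest builders. This is a minimal sketch that assumes the cluster exposes GPUs through the NVIDIA device plugin under the `nvidia.com/gpu` resource name; the article does not state how the GPUs are exposed. The helper names, namespace, image, and quota size are hypothetical.

```python
import json


def gpu_pod(name: str, namespace: str, image: str, gpus: int = 1) -> dict:
    """Return a Pod manifest that asks the scheduler for whole GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Extended resources such as GPUs are declared under limits;
                    # Kubernetes uses the same value as the request.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ]
        },
    }


def gpu_quota(namespace: str, max_gpus: int) -> dict:
    """Return a ResourceQuota capping the total GPUs requested in a namespace."""
    return {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": "gpu-quota", "namespace": namespace},
        # Quotas on extended resources use the `requests.` prefix.
        "spec": {"hard": {"requests.nvidia.com/gpu": str(max_gpus)}},
    }


if __name__ == "__main__":
    # Hypothetical values: one renderer pod with a single GPU, and a four-GPU cap.
    pod = gpu_pod("avatar-renderer", "assistant", "registry.example.com/assistant/avatar-renderer:1.0.0")
    quota = gpu_quota("assistant", max_gpus=4)
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": [quota, pod]}, indent=2))
```

With a quota like this in place, a pod whose GPU request would push the namespace over its cap is rejected at admission time, which is what makes per-team GPU budgets enforceable.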

What is included in the technology stack

- Oracle Cloud Infrastructure

- Kubernetes

- Terraform and Ansible

- Blender for avatar modeling

- NVIDIA A10 GPUs

- Oracle Speech Services

How this architecture supports future expansion

As enterprise AI workloads continue to grow, this architecture can expand into multi-region deployments, integrate additional speech and avatar features, and adopt advanced GPU autoscaling strategies. The modular nature of the solution enables rapid innovation without major architectural redesigns.
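
To make the autoscaling idea more concrete, the sketch below reproduces the scaling rule documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. Applying it to GPU utilization is an assumption made for this example; the article does not say which autoscaler or metric a future version would use, and the function name and default bounds are hypothetical.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Compute a replica count with the Horizontal Pod Autoscaler formula.

    desired = ceil(current_replicas * current_metric / target_metric),
    skipped when the ratio is within the tolerance of 1.0, then clamped
    to the [min_replicas, max_replicas] range.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")

    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: keep the current size to avoid flapping.
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)

    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Hypothetical readings: average GPU utilization (%) against a 60% target.
    print(desired_replicas(2, current_metric=90, target_metric=60))   # scale out
    print(desired_replicas(4, current_metric=30, target_metric=60))   # scale in
    print(desired_replicas(3, current_metric=62, target_metric=60))   # within tolerance
```

In a real cluster the GPU metric would have to be collected and exposed to the autoscaler first; the function above only shows the arithmetic behind the scaling decision.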

What this means for organizations adopting AI on OCI

A Kubernetes-based digital assistant running on Oracle Cloud Infrastructure provides a scalable foundation for enterprise-grade conversational AI. With GPU acceleration, automated orchestration, and modular microservices, teams can build intelligent assistants that support real-world business operations.

Next steps: What you can do today

- Explore the Oracle Kubernetes Engine (OKE) documentation.

- Review GPU compute shapes available on Oracle Cloud Infrastructure.

- Experiment with the Oracle Cloud Free Tier.

- Contact Oracle or Red Reply for guidance on designing or deploying your own cloud-native digital assistant.

David Kelly

Managing Director – Red Reply GmbH

David is a Cloud Expert and Partner at Reply. His focus is AI and Sovereign Infrastructure for a wide range of customer use cases.

Rocco Leone

Project Manager – Red Reply GmbH

Rocco is a Network and Cloud DevOps specialist with extensive experience in designing and implementing resilient, scalable infrastructures. He is a Certified OCI Architect skilled in Oracle Cloud Infrastructure and private cloud networks.