Introduction: Why Secure Data Sharing Matters

Data sharing sits at the heart of modern AI and analytics. Teams need access to data across domains to build models, generate insights, and deliver business outcomes faster. But as organizations scale, sharing data becomes less about access and more about control.

The challenge is not simply making data available; it is making it available securely, consistently, and without losing governance. Traditional approaches often rely on copying data between systems or granting broad access to entire datasets. This leads to duplication, inconsistent policies, and limited visibility into who is using what. At scale, these issues compound, and what starts as a simple data exchange quickly turns into a governance problem.

Secure data sharing requires a different approach, one where access is intentional, governed, and auditable by design. It should allow teams to collaborate freely while ensuring that data remains protected, compliant, and trustworthy.

The Problem with Traditional Data Sharing Models

Traditional data sharing models were not designed for the scale and complexity of modern data platforms. As a result, they often introduce more problems than they solve.

One of the most common issues is data duplication. Teams frequently copy data across systems to make it accessible, creating multiple versions of the same dataset. Over time, this leads to inconsistencies, increased storage costs, and confusion around which version is the source of truth.

Access is also often granted too broadly. Instead of sharing only what is needed, entire datasets or schemas are exposed, increasing the risk of overexposure, especially when sensitive or regulated data is involved.

Permissions further complicate the picture. Different tools and environments enforce access in different ways, leading to inconsistent policies that are difficult to manage and audit. What starts as a simple access request can quickly turn into a web of manual permissions and exceptions.

Finally, there is limited visibility into how shared data is used. Without strong auditability, organizations lack transparency: they cannot easily answer who accessed what data, when, and for what purpose.

These challenges highlight a fundamental limitation: traditional approaches rely on moving or copying data to share it. In contrast, modern approaches like Delta Sharing, used in AI Data Platform Workbench, enable secure, real-time access to data without duplication, while preserving governance and control.

A New Model: Governed Data Sharing in AI Data Platform Workbench

Oracle AI Data Platform (AIDP) Workbench introduces a different approach, one where data sharing is built into the platform’s governance model, not layered on afterward.

At the core of this model is Delta Sharing, which allows teams to share data directly from the source without copying or moving it. Instead of creating multiple versions of the same dataset, AI Data Platform Workbench enables secure, real-time access to governed data, ensuring that all consumers work from a single, consistent source of truth.

This means organizations no longer have to choose between speed and control. Teams can collaborate across domains and with external partners while maintaining:

- A single source of truth

- Consistent access policies

- Full visibility into data usage

In AI Data Platform Workbench, data sharing is not a workaround; it is a native capability, designed to scale securely as data adoption grows.
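Delta Sharing is an open protocol. In its open-source form, a recipient typically receives a small JSON profile file containing the sharing server's endpoint and a bearer token, and then addresses a table as `<profile-path>#<share>.<schema>.<table>`. Whether AI Data Platform Workbench hands external recipients a profile in exactly this form is an assumption here and should be checked against the product documentation. The Python sketch below only writes such a profile and builds the table address, so it runs without network access; the endpoint, token, and share, schema, and table names are placeholders.

```python
import json
import os
import tempfile

# Shape of a profile file as described by the open Delta Sharing protocol.
# Endpoint and token are placeholders; real values come from the data provider.
PROFILE = {
    "shareCredentialsVersion": 1,
    "endpoint": "https://sharing.example.com/delta-sharing/",  # hypothetical
    "bearerToken": "<token-issued-to-recipient>",               # placeholder
}


def write_profile(profile: dict, directory: str) -> str:
    """Write the profile as JSON and return the file path."""
    path = os.path.join(directory, "recipient.share")
    with open(path, "w", encoding="utf-8") as f:
        json.dump(profile, f, indent=2)
    return path


def table_url(profile_path: str, share: str, schema: str, table: str) -> str:
    """Build '<profile>#<share>.<schema>.<table>', the table address used by
    the open-source Delta Sharing connectors. Dots and '#' in name parts are
    rejected only to keep this sketch's address unambiguous."""
    for part in (share, schema, table):
        if not part or "." in part or "#" in part:
            raise ValueError(f"invalid name component: {part!r}")
    return f"{profile_path}#{share}.{schema}.{table}"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        path = write_profile(PROFILE, tmp)
        # Hypothetical share, schema, and table names.
        url = table_url(path, "sales_share", "curated", "orders")
        print(url)
        # With the `delta-sharing` package installed and a real profile, the
        # recipient could read the provider's current data through the sharing
        # server, with no export step on the provider's side:
        #   import delta_sharing
        #   df = delta_sharing.load_as_pandas(url)
```

The design point is that the profile carries a credential, not data: every query still goes through the provider's sharing server.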

Sharing Data as Governed Assets, Not Files

In many environments, sharing data still means sending files: exports, extracts, or copies of datasets passed between teams. While simple, this approach breaks governance. Once data leaves its source, control is lost, versions drift, and accountability becomes unclear.

AI Data Platform Workbench takes a different approach. Data is not shared as files, but as governed assets that remain within the platform. Instead of duplicating data, teams grant access to specific, well-defined datasets that continue to live in their original location.

This model is built around structured data layers, such as catalogs, schemas, and tables, that define how data is organized and accessed. When a dataset is shared, what is actually shared is controlled access to that object, not a physical copy of the data itself.

The key benefit is that this preserves a single source of truth: because consumers read the data where it lives, there are no diverging copies of the same dataset to reconcile.

In practice, this means a team can expose a curated dataset for broader use without exposing upstream raw data or internal structures. Consumers get exactly what they need, and providers retain full control over how that data is accessed and used.

By treating data as a governed asset rather than a transferable file, AIDP enables sharing that is both precise and durable, even as usage scales across teams.
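To make the "reference, not copy" idea concrete, here is a toy Python model. `Catalog`, `Share`, and `read_shared` are hypothetical names for illustration, not AIDP APIs, and an in-memory dict stands in for governed storage. The share records only fully qualified object names, so every read resolves against the source: an update made by the provider is visible to the consumer without re-exporting anything, and the raw table stays unreachable because it was never added to the share.

```python
from dataclasses import dataclass, field


@dataclass
class Catalog:
    """Toy stand-in for governed storage: 'catalog.schema.table' -> rows."""
    tables: dict[str, list[dict]] = field(default_factory=dict)


@dataclass
class Share:
    """Holds references (fully qualified names) to catalog objects, never rows."""
    name: str
    objects: set[str] = field(default_factory=set)

    def add(self, catalog: Catalog, fqn: str) -> None:
        if fqn not in catalog.tables:
            raise KeyError(f"no such table: {fqn}")
        self.objects.add(fqn)


def read_shared(catalog: Catalog, share: Share, fqn: str) -> list[dict]:
    """Resolve a shared reference against the source at read time."""
    if fqn not in share.objects:
        raise PermissionError(f"{fqn} is not part of share {share.name}")
    # Return a shallow copy of the rows as they exist right now.
    return list(catalog.tables[fqn])


if __name__ == "__main__":
    # Hypothetical tables: a curated dataset and the raw data behind it.
    catalog = Catalog({
        "sales.curated.orders": [{"order_id": 1, "region": "EMEA"}],
        "sales.raw.orders": [{"order_id": 1, "customer_email": "a@example.com"}],
    })
    share = Share("partner_share")
    share.add(catalog, "sales.curated.orders")  # only the curated table is shared

    # The provider updates the source; no re-export is needed.
    catalog.tables["sales.curated.orders"].append({"order_id": 2, "region": "APAC"})
    print(read_shared(catalog, share, "sales.curated.orders"))
```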

Controlling Access: Who Gets What and Why

Secure data sharing is not just about what is shared; it is equally about who receives access and under what conditions.

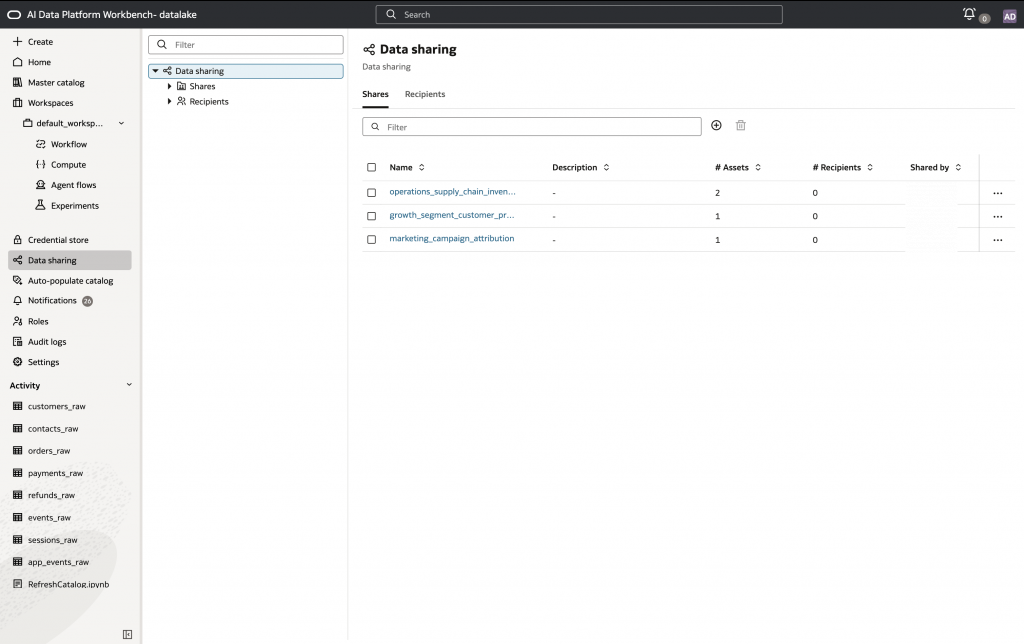

In AI Data Platform Workbench, access is controlled through a combination of explicit recipient management and permission-based governance. Data providers define recipients (internal users, groups, or external consumers) and grant them access to specific datasets. This ensures that access is always intentional and scoped, rather than implicit or inherited.
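A minimal sketch of explicit recipient management, assuming a simple model in which each grant names one recipient and one fully qualified object. `RecipientKind`, `Recipient`, and `AccessPolicy` are hypothetical, not AIDP APIs. Access is denied unless an exact grant exists; a real platform may also support schema-level or inherited grants, which this sketch deliberately leaves out.

```python
from dataclasses import dataclass, field
from enum import Enum


class RecipientKind(Enum):
    USER = "user"
    GROUP = "group"
    EXTERNAL = "external"


@dataclass(frozen=True)
class Recipient:
    name: str
    kind: RecipientKind


@dataclass
class AccessPolicy:
    """Explicit, object-level grants. Anything not granted is denied."""
    grants: dict[Recipient, set[str]] = field(default_factory=dict)

    def grant(self, recipient: Recipient, fqn: str) -> None:
        self.grants.setdefault(recipient, set()).add(fqn)

    def can_read(self, recipient: Recipient, fqn: str) -> bool:
        # No wildcards or inheritance: the exact object must have been granted.
        return fqn in self.grants.get(recipient, set())


if __name__ == "__main__":
    # Hypothetical recipients and object names.
    partner = Recipient("acme_analytics", RecipientKind.EXTERNAL)
    finance = Recipient("finance_team", RecipientKind.GROUP)

    policy = AccessPolicy()
    policy.grant(partner, "sales.curated.orders_summary")
    policy.grant(finance, "sales.curated.orders")

    print(policy.can_read(partner, "sales.curated.orders_summary"))  # True
    print(policy.can_read(partner, "sales.curated.orders"))          # False
    print(policy.can_read(finance, "sales.raw.orders"))              # False
```

Defaulting to deny means a missing grant fails closed, so access can only ever be as broad as the grants a provider wrote down.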

Permissions are enforced at the level of the shared data objects. Instead of granting broad access to entire environments, providers can control access to specific tables or views, aligning permissions with the needs of each recipient. This allows organizations to follow a least-privilege model, where users receive only the access required for their role.

This approach also creates clear accountability. Because access is granted explicitly to defined recipients, it becomes easier to understand who has access to which data and why. There is no ambiguity introduced by indirect sharing or uncontrolled propagation.

By combining recipient management with fine-grained permissions, AI Data Platform Workbench ensures that data sharing remains controlled, auditable, and aligned with governance policies, even as it scales across teams and domains.
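Because every grant is an explicit record, the accountability questions in this section (who has access to an object, and why) reduce to simple lookups. The sketch below assumes each grant stores who approved it and a stated reason; the `Grant` fields and both helper functions are hypothetical illustrations, not a description of how AIDP stores grants.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """One explicit grant; `granted_by` and `reason` are hypothetical fields."""
    recipient: str
    object_fqn: str
    granted_by: str
    reason: str


def who_can_read(grants: list[Grant], fqn: str) -> list[tuple[str, str]]:
    """Who has access to this object, and why?"""
    return sorted((g.recipient, g.reason) for g in grants if g.object_fqn == fqn)


def objects_for(grants: list[Grant], recipient: str) -> list[str]:
    """Which objects has this recipient been granted?"""
    return sorted({g.object_fqn for g in grants if g.recipient == recipient})


if __name__ == "__main__":
    # Hypothetical grant records.
    grants = [
        Grant("acme_analytics", "sales.curated.orders_summary", "sales_data_owner",
              "partner demand forecasting"),
        Grant("finance_team", "sales.curated.orders_summary", "sales_data_owner",
              "revenue reporting"),
        Grant("finance_team", "sales.curated.orders", "sales_data_owner",
              "invoice reconciliation"),
    ]
    for recipient, reason in who_can_read(grants, "sales.curated.orders_summary"):
        print(f"{recipient}: {reason}")
    print(objects_for(grants, "finance_team"))
```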

Governance That Stays Intact During Sharing

A common failure point in data sharing is that governance weakens once data is shared. Policies that were enforced at the source do not always carry forward, especially when data is copied or moved into new environments.

In AI Data Platform Workbench, this gap is avoided by design. Because data sharing is implemented without duplicating data, access continues to be governed at the source. Access to shared objects, such as tables or views, is controlled through defined permissions, ensuring that recipients can only access the data that has been explicitly shared with them.

This means that sharing does not introduce a separate or parallel access model. Instead, access remains centrally managed, and any updates to permissions on shared data are reflected in how that data can be accessed by recipients.

As a result, governance remains centralized and enforceable. Organizations retain control over how data is accessed, even as it is shared across teams or domains. This ensures that security, compliance, and policy enforcement are not compromised as collaboration increases.
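A practical consequence of governing at the source is that a permission change applies on the very next read. The toy `SourceGovernedStore` class below (hypothetical, with an in-memory dict as the source) re-checks grants on every call, and contrasts that with a file extract taken earlier, which the revocation cannot reach. It is a sketch of the principle, not of AIDP's enforcement mechanism.

```python
class SourceGovernedStore:
    """Toy model: grants are checked at the source on every read."""

    def __init__(self, tables: dict[str, list[dict]]) -> None:
        self._tables = tables
        self._grants: set[tuple[str, str]] = set()

    def grant(self, recipient: str, fqn: str) -> None:
        self._grants.add((recipient, fqn))

    def revoke(self, recipient: str, fqn: str) -> None:
        self._grants.discard((recipient, fqn))

    def read(self, recipient: str, fqn: str) -> list[dict]:
        # The check runs at read time, so the current policy always applies.
        if (recipient, fqn) not in self._grants:
            raise PermissionError(f"{recipient} has no access to {fqn}")
        return list(self._tables[fqn])


if __name__ == "__main__":
    # Hypothetical table and recipient.
    tables = {"hr.curated.headcount": [{"dept": "eng", "count": 12}]}
    store = SourceGovernedStore(tables)
    store.grant("partner", "hr.curated.headcount")
    print(store.read("partner", "hr.curated.headcount"))

    # Traditional route for comparison: an extract handed over as a file.
    exported_file = list(tables["hr.curated.headcount"])

    store.revoke("partner", "hr.curated.headcount")
    try:
        store.read("partner", "hr.curated.headcount")
    except PermissionError as err:
        print("shared access revoked:", err)
    # The earlier extract is untouched by the revocation.
    print("extract still circulating:", exported_file)
```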

Discoverability Without Risk

Secure data sharing is only effective if users can find and understand the data available to them. At the same time, discovery must not come at the cost of overexposing sensitive or restricted data.

In AI Data Platform Workbench, the Master catalog explorer provides a structured way to browse and discover data assets. Users can navigate catalogs, schemas, and tables through a unified interface, but only within the scope of what they are authorized to access.

This ensures that discovery is permission-aware. Users do not see all available data; they see only the assets they have access to, along with the structure and context needed to use them effectively.

As a result, teams can explore shared datasets and understand what is available without requiring broad access or manual coordination. At the same time, data owners retain control over visibility, ensuring that sensitive data is not exposed unintentionally.

This balance allows organizations to scale data sharing while maintaining both usability and governance.
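Permission-aware discovery can be sketched as filtering the catalog tree by what the user can read before displaying it. The function below is a hypothetical illustration, assuming object names of the form `catalog.schema.table` and a precomputed set of readable tables. It also assumes that catalogs and schemas with no readable tables are hidden entirely, which may differ from how the Master catalog explorer actually behaves.

```python
from collections import defaultdict


def visible_tree(all_tables: list[str], readable: set[str]) -> dict[str, dict[str, list[str]]]:
    """Build a catalog -> schema -> tables view containing only readable tables.

    Catalogs and schemas with no readable tables are omitted entirely,
    so their names are not revealed either.
    """
    tree = defaultdict(lambda: defaultdict(list))
    for fqn in sorted(all_tables):
        if fqn not in readable:
            continue
        catalog, schema, table = fqn.split(".")  # expects 'catalog.schema.table'
        tree[catalog][schema].append(table)
    return {catalog: dict(schemas) for catalog, schemas in tree.items()}


if __name__ == "__main__":
    # Hypothetical object names.
    everything = ["sales.curated.orders", "sales.raw.orders", "hr.restricted.salaries"]
    print(visible_tree(everything, readable={"sales.curated.orders"}))
    # {'sales': {'curated': ['orders']}}
```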

Putting It Together: Secure Collaboration Across Teams

Secure data sharing in AI Data Platform Workbench is not a single feature; it is the result of multiple capabilities working together as a unified operating model.

Data is shared directly from the source using Delta Sharing, eliminating duplication and ensuring a single version of truth. Access is granted explicitly to recipients, with permissions defining exactly what data can be accessed. These controls are applied at the level of governed data objects, ensuring that sharing remains precise and scoped.

At the same time, governance stays centralized. Access is managed consistently, and data remains controlled at the source even as it is shared across teams. This ensures that security and compliance are maintained without introducing additional complexity.

Finally, discovery is built into the experience. Through the Master catalog explorer, users can find and understand the data available to them without exposing assets they are not authorized to access.

Together, these capabilities create a model where teams can collaborate freely while data remains secure, governed, and trustworthy. Instead of trading off access against control, organizations can achieve both, enabling scalable, cross-team collaboration without compromising governance.

Conclusion: From Data Access to Data Trust

As organizations scale AI and analytics, the challenge is no longer just enabling access to data; it is ensuring that access is secure, governed, and reliable.

Oracle AI Data Platform Workbench enables this shift by embedding secure data sharing into its core operating model. Data can be shared without duplication, access is explicitly controlled, and governance remains intact from source to consumption. At the same time, users can discover and use data confidently within the boundaries defined for them.

The impact is clear. Teams can collaborate faster because they no longer depend on manual data movement or ad hoc access requests. Governance becomes stronger because policies are applied consistently and centrally. And AI initiatives scale more effectively because they are built on trusted, well-governed data.

This is the difference between simply providing access to data and building a foundation of data trust where collaboration, control, and confidence come together to support enterprise-scale innovation.

For more information

Check out the following resources: