A managed path to cited enterprise answers with OCI Generative AI and Oracle AI Database 26ai

Enterprise knowledge is rarely missing. It is usually scattered across contracts, policies, support tickets, engineering documents, regulatory submissions, and shared drives. The result is familiar: employees know the answer exists somewhere, but they still spend time searching, checking, and proving where the answer came from.

That is where the Oracle AI Accelerator Pack for the Enterprise Knowledge Agent, PaaS edition, creates value. It helps customers deploy a managed, citation-aware knowledge agent on Oracle Cloud Infrastructure (OCI). Users ask questions in plain language and receive grounded answers with source links back to the original content.

How this creates business value

The business value is straightforward: faster answers, less manual lookup, and better trust in AI-assisted work. For many enterprises, the first use case is not replacing a process. It is removing the slow search step that sits inside many processes.

Examples include:

- A support agent asking a product-policy question before replying to a customer.

- A compliance analyst checking the latest approved position on a regulatory topic.

- A procurement reviewer extracting key terms from a long contract.

- An operations lead comparing a new incident with past postmortems.

In each case, the goal is not only to generate an answer. The goal is to generate an answer the team can inspect, cite, and use.

Why a managed OCI approach matters

Many teams can build a proof of concept with a chatbot and a few documents. Production is different. Customers need identity, data controls, source grounding, model selection, vector search, evaluation, and a way to operate the application without becoming an AI platform team.

The PaaS edition is designed for that path. It uses OCI Generative AI for generation, Oracle AI Database 26ai for vector search, and a packaged application workflow for chat, document upload, information extraction, and source-linked responses. Customers can start with on-demand OCI Generative AI for pilots and shared workloads, then move production use cases that need isolated capacity or predictable throughput to a Dedicated AI Cluster.

That progression from on-demand to dedicated capacity gives customers flexibility without forcing an early infrastructure decision.
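The retrieval and source-linking flow described above can be illustrated with a minimal, in-memory sketch. It is not the pack's implementation: the `Chunk` type, the toy three-dimensional embeddings, the file paths, and the `retrieve` and `build_prompt` helpers are all hypothetical, and a plain Python list stands in for vector search in Oracle AI Database 26ai. The point is only to show how each retrieved passage keeps its source so the answer can cite it.

```python
from dataclasses import dataclass
import math


@dataclass
class Chunk:
    text: str
    source: str             # link or path back to the original document
    embedding: list[float]  # toy vector; a real system would use an embedding model


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_embedding: list[float], chunks: list[Chunk], k: int = 2) -> list[Chunk]:
    """Return the k chunks most similar to the query (stand-in for vector search)."""
    ranked = sorted(chunks, key=lambda c: cosine(query_embedding, c.embedding), reverse=True)
    return ranked[:k]


def build_prompt(question: str, hits: list[Chunk]) -> tuple[str, list[str]]:
    """Number each passage so the generated answer can cite it, and keep the source list."""
    context = "\n".join(f"[{i}] {c.text}" for i, c in enumerate(hits, start=1))
    sources = [f"[{i}] {c.source}" for i, c in enumerate(hits, start=1)]
    prompt = (
        "Answer using only the numbered context and cite the numbers you used.\n"
        f"{context}\nQuestion: {question}"
    )
    return prompt, sources


# Hypothetical corpus with hand-written embeddings, for illustration only.
chunks = [
    Chunk("Refunds are allowed within 30 days.", "policies/refunds.pdf", [1.0, 0.0, 0.0]),
    Chunk("Incidents are reviewed weekly.", "ops/postmortems.md", [0.0, 1.0, 0.0]),
    Chunk("Refund requests need a receipt.", "policies/receipts.pdf", [0.9, 0.1, 0.0]),
]

hits = retrieve([1.0, 0.0, 0.0], chunks, k=2)
prompt, sources = build_prompt("What is the refund window?", hits)
print(prompt)
print(sources)
```

In a deployment, the prompt would be sent to the selected chat model and the `sources` list would be rendered next to the answer as links.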

How it performs for customer-facing latency

The question that matters to customers is practical: can users get cited answers quickly enough for day-to-day work?

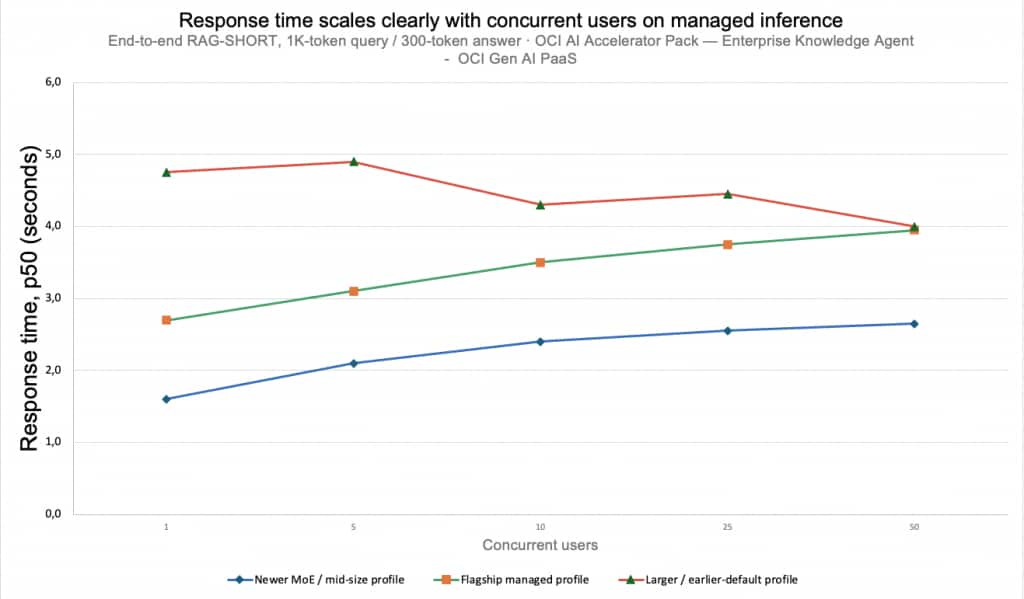

Figure 1: p50 end-to-end response time versus concurrent users for RAG-Short on OCI GenAI PaaS

The chart shows median (p50) end-to-end response time across representative managed-model profiles. On the recommended model configuration, a typical 1K-token user question with a 300-token answer returns in about 1.6 seconds at single-user load and stays under 2.5 seconds through 10 concurrent users.

The key customer lesson is that model selection matters. OCI Generative AI offers multiple model families for chat, embedding, and reranking, including Cohere, Google Gemini, and Meta Llama models, with availability varying by region and service mode. The pack keeps model selection configurable so customers can balance latency, quality, cost, and regional availability without changing the application workflow.

For customers, the takeaway is simple: the pack is designed for interactive business workflows, not only offline document processing.

The numbers above were measured with OCI Generative AI in on-demand mode, the consumption mode used for pilots and shared workloads. A Dedicated AI Cluster (DAC) provides isolated capacity for production workloads that need predictable throughput or committed capacity. Customers should benchmark DAC performance against their own corpus, prompts, concurrency, and service targets before setting production latency commitments.
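One way to approach that kind of benchmark is a small closed-loop harness that sends a fixed prompt set at several concurrency levels and reports p50 and p95 latency. The sketch below is an assumption-laden illustration, not the pack's evaluation tooling: `ask` is a hypothetical callable that would wrap a call to the deployed agent, and `fake_ask` simply sleeps so the example runs without any service.

```python
import math
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def measure_latencies(ask: Callable[[str], str], prompts: list[str], concurrency: int) -> list[float]:
    """Send every prompt through `ask` using `concurrency` worker threads; return seconds per call."""
    def timed(prompt: str) -> float:
        start = time.perf_counter()
        ask(prompt)
        return time.perf_counter() - start

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(timed, prompts))


def summarize(latencies: list[float]) -> dict[str, float]:
    """Median plus a nearest-rank 95th percentile."""
    ordered = sorted(latencies)
    p95_index = max(0, math.ceil(0.95 * len(ordered)) - 1)
    return {"p50": statistics.median(ordered), "p95": ordered[p95_index]}


def fake_ask(prompt: str) -> str:
    """Hypothetical stand-in for the agent endpoint; replace with a real client call."""
    time.sleep(0.01)
    return "stub answer"


prompts = ["What is the refund window?"] * 20  # use real pilot questions in practice
for users in (1, 5, 10):
    stats = summarize(measure_latencies(fake_ask, prompts, users))
    print(f"{users:>2} users  p50={stats['p50']:.3f}s  p95={stats['p95']:.3f}s")
```

Running the same prompt set against on-demand and dedicated endpoints, with the customer's own corpus loaded, gives a like-for-like comparison before any latency target is committed.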

What customers can start with

The strongest pilots are narrow enough to measure and important enough to matter. Good starting points include:

| Workflow | Customer outcome |
| --- | --- |
| Support knowledge assistant | Reduce time spent searching policy and product documents |
| Compliance Q&A | Give analysts cited answers with source traceability |
| Contract extraction | Pull terms, obligations, and dates from long documents |
| Incident knowledge search | Compare new incidents with historical postmortems |

The common pattern is repeatable: choose one corpus, one business team, and a set of questions that are answered manually today.
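A pilot scoped this way can be scored with a simple check: for each question the team answers manually today, does the agent cite the document a human reviewer would have cited? The sketch below assumes a hypothetical `answer_fn` that returns an answer and a list of cited sources; `PilotCase`, `stub_agent`, and the file paths are illustrative names, not part of the pack.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class PilotCase:
    question: str
    expected_source: str  # document a human reviewer would cite for this question


AnswerFn = Callable[[str], tuple[str, list[str]]]


def citation_hit_rate(cases: list[PilotCase], answer_fn: AnswerFn) -> float:
    """Fraction of cases whose returned citations include the expected source."""
    if not cases:
        return 0.0
    hits = 0
    for case in cases:
        _answer, citations = answer_fn(case.question)
        if case.expected_source in citations:
            hits += 1
    return hits / len(cases)


def stub_agent(question: str) -> tuple[str, list[str]]:
    """Hypothetical agent used only to make the example runnable."""
    if "refund" in question.lower():
        return "Refunds are allowed within 30 days.", ["policies/refunds.pdf"]
    return "No grounded answer found.", []


cases = [
    PilotCase("What is the refund window?", "policies/refunds.pdf"),
    PilotCase("Who approves vendor contracts?", "procurement/approvals.pdf"),
]
rate = citation_hit_rate(cases, stub_agent)
print(f"citation hit rate: {rate:.0%}")
```

Citation hit rate is only one possible measure; answer correctness still needs review by the business team that owns the corpus.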

How Oracle helps simplify the path

Customers do not need another reference architecture that leaves the hard work to the implementation team. They need a shorter path from “this could work” to “users are getting cited answers in production.”

The Oracle AI Accelerator Pack brings together the application workflow, OCI Generative AI, Oracle AI Database 26ai, document ingestion, and an evaluation harness. That lets teams spend more time validating business answers and less time assembling infrastructure pieces.

This is where Oracle adds practical value: validate the deployment pattern, simplify adoption, and help customers move from experiments to repeatable enterprise AI services on OCI.

What comes next

For customers building knowledge agents, the next step is to connect the agent more deeply with the systems where work happens: case management, ticketing, procurement, compliance review, and service operations. Over time, the opportunity is to make grounded enterprise Q&A feel less like a custom AI project and more like a standard operating capability.

Conclusion and next steps

The strongest customer story is not a benchmark number by itself. It is that enterprise knowledge work can become faster and more defensible when answers are grounded in source documents and delivered in seconds.

Read more on the Oracle AI Accelerator Packs page, or reach out to your Oracle account team or the OCI AI Centre of Excellence to plan a working session.

References

- Oracle AI Accelerator Packs: https://www.oracle.com/artificial-intelligence/ai-accelerator-packs

- OCI Generative AI: https://docs.oracle.com/en-us/iaas/Content/generative-ai/home.htm

- OCI Generative AI pretrained foundational models: https://docs.oracle.com/iaas/Content/generative-ai/pretrained-models.htm

- OCI Generative AI inference modes: https://docs.oracle.com/en-us/iaas/Content/generative-ai/modes.htm

- Oracle AI Database 26ai vector search: https://docs.oracle.com/en/database/oracle/oracle-database/23/vecse