Research universities manage a growing compliance workload across IRB protocols, conflict-of-interest disclosures, export controls, research security, and sponsor administration. In many cases, the guidance already exists. The harder problem is helping researchers and administrators find the right policy, from the right source, at the right moment.

Consider a principal investigator preparing an NIH renewal. She recently accepted a small equity stake in a startup and now needs to know whether that interest triggers a disclosure before submission, whether any related management steps are required, and whether the change affects an active IRB protocol. The answer may exist across institutional conflict-of-interest policy, IRB procedures, sponsor guidance, and office-specific workflows. Finding it quickly enough to be useful is often the real challenge.

That access problem creates real operational drag. Compliance and research administration teams are being asked to support more activity without a matching increase in staff, while researchers still need timely, usable guidance. The issue is not simply the volume of policy content. It is the fragmentation of that content across handbooks, web pages, PDFs, sponsor notices, and office-specific procedures.

This is a practical higher education use case for retrieval-augmented generation. With OCI Generative AI Agents, institutions can build a citation-grounded assistant that helps users find answers faster while keeping institutional policy and approved source material at the center of every response.

The compliance questions researchers actually ask

A modern university supports investigators across multiple overlapping compliance domains. Human subjects research may involve IRB review and, for some federally supported cooperative studies, reliance on a single IRB. NIH-funded work can require disclosure and management of significant financial interests under institutional conflict-of-interest processes. International collaborations may raise export control and sanctions questions under the EAR, the ITAR, or OFAC-administered sanctions programs. Research security expectations also continue to evolve through federal guidance and agency implementation.

Researchers usually are not asking for abstract policy summaries. They are asking practical, time-sensitive questions such as whether a new financial interest needs review before an NIH submission, what changes when a new study site is added to a federally supported protocol, or whether an international collaboration requires export control review.

In many institutions, the answers already exist in approved materials. A conversational assistant grounded in those materials can reduce time spent searching, improve consistency for routine questions, and give newer staff a stronger starting point when teams change.

A university-ready architecture on OCI

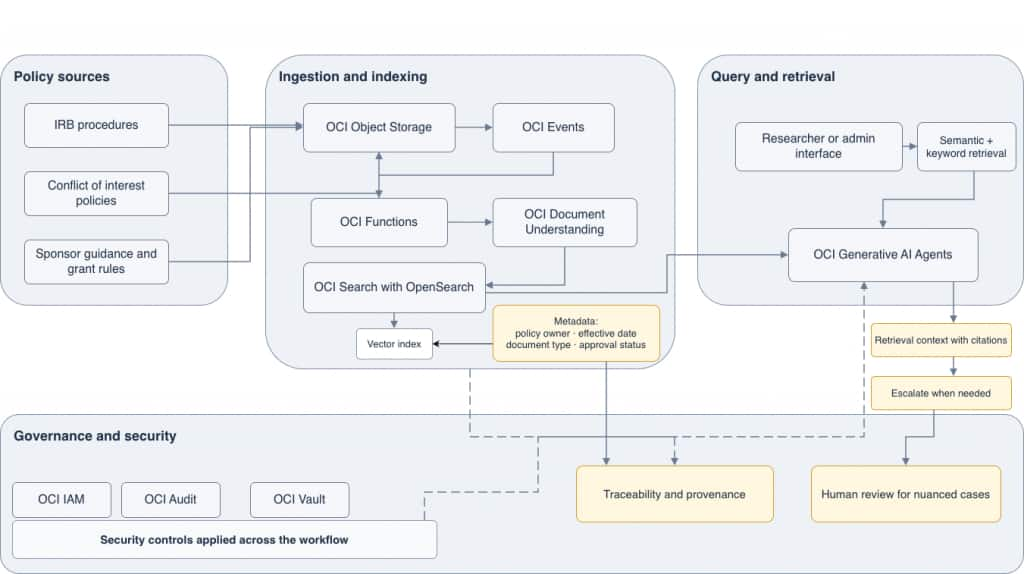

In this use case, the pattern is straightforward. Policy documents and procedures can be stored in OCI Object Storage. As new or updated documents arrive, OCI Events can trigger OCI Functions to extract the content and prepare it for retrieval. That content can then be indexed in OCI Search with OpenSearch, where institutions can combine semantic retrieval with document metadata such as policy owner, document type, effective date, sponsoring office, and approval status.
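As a rough illustration of the ingestion step, the sketch below turns an Object Storage event into a document ready for indexing. It is a minimal sketch, not the article's implementation: the event shape follows the general layout of OCI Events payloads for Object Storage, while the function names, the metadata key names, and the choice to reject documents with missing metadata are all assumptions made for this example. Text extraction and embedding are left out.

```python
from typing import Optional

# Event types assumed to signal a new or changed policy document.
OBJECT_EVENTS = {
    "com.oraclecloud.objectstorage.createobject",
    "com.oraclecloud.objectstorage.updateobject",
}

# Metadata fields named in the article; the key names are hypothetical.
REQUIRED_METADATA = (
    "policy_owner",
    "document_type",
    "effective_date",
    "sponsoring_office",
    "approval_status",
)


def parse_object_event(event: dict) -> Optional[dict]:
    """Return the bucket and object name for a relevant event, else None."""
    if event.get("eventType") not in OBJECT_EVENTS:
        return None
    data = event.get("data", {})
    details = data.get("additionalDetails", {})
    return {
        "namespace": details.get("namespace"),
        "bucket": details.get("bucketName"),
        "object_name": data.get("resourceName"),
    }


def build_index_document(ref: dict, text: str, metadata: dict) -> dict:
    """Combine extracted text with governance metadata for indexing."""
    missing = [key for key in REQUIRED_METADATA if key not in metadata]
    if missing:
        # Refuse to index a policy whose provenance is incomplete.
        raise ValueError(f"missing metadata fields: {missing}")
    return {
        "id": f"{ref['bucket']}/{ref['object_name']}",
        "text": text,
        **{key: metadata[key] for key in REQUIRED_METADATA},
    }
```

Rejecting documents with incomplete metadata at ingestion time is one possible design choice. It keeps the governance fields reliable for later filtering, at the cost of requiring document owners to supply them up front.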

This matters for more than search quality. Vector-based retrieval can help the assistant find policy language by conceptual meaning as well as exact terms, while metadata gives the institution a clearer governance layer for provenance, filtering, and review.
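To make the combination of semantic retrieval and metadata concrete, here is a minimal sketch of a search request body that pairs an OpenSearch k-NN clause with metadata filters. It assumes an index whose `embedding` field is mapped as a `knn_vector` and whose `approval_status` and `effective_date` fields hold the metadata described above. Those field names, the function name, and the "approved and already in effect" rule are assumptions for this example.

```python
import json


def build_policy_query(query_vector, k=5, as_of="2025-01-01"):
    """Build a search body: nearest neighbors, limited to approved policy in effect."""
    return {
        "size": k,
        "query": {
            "bool": {
                # Semantic match against the (assumed) embedding field.
                "must": [
                    {"knn": {"embedding": {"vector": list(query_vector), "k": k}}}
                ],
                # Governance filters applied alongside the vector match.
                "filter": [
                    {"term": {"approval_status": "approved"}},
                    {"range": {"effective_date": {"lte": as_of}}},
                ],
            }
        },
    }


if __name__ == "__main__":
    # A three-dimensional vector is used only to keep the example readable.
    print(json.dumps(build_policy_query([0.1, 0.2, 0.3], k=3), indent=2))
```

With a boolean filter of this kind, the filter is applied to the nearest-neighbor candidates, so a query can return fewer than `k` results when many candidates are unapproved or not yet in effect. Whether that trade-off is acceptable depends on how the index is configured and how much draft content it holds.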

When a researcher submits a question, OCI Generative AI Agents can use a RAG tool to retrieve relevant passages and generate a response grounded in institutional source material. For research compliance, citations matter as much as usability. Users need to see where an answer came from so they can validate it and follow up with the right office.
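The idea of citation grounding can be illustrated without any service calls. The sketch below shows one way retrieved passages might be numbered, placed in a prompt, and listed as citations for the user. In the architecture described here, the managed RAG tool handles retrieval and grounding itself, so this is only a conceptual sketch; the `Passage` type, the function names, and the prompt wording are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Passage:
    doc_title: str
    section: str
    source_url: str
    text: str


def build_grounded_prompt(question: str, passages: List[Passage]) -> str:
    """Number each passage so the model can cite it as [n]."""
    numbered = [
        f"[{i}] {p.doc_title}, {p.section}\n{p.text}"
        for i, p in enumerate(passages, start=1)
    ]
    context = "\n\n".join(numbered)
    return (
        "Answer using only the numbered sources below and cite them as [n]. "
        "If the sources do not answer the question, say so.\n\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


def citation_list(passages: List[Passage]) -> List[str]:
    """Render the same numbering as a list the user can follow up on."""
    return [
        f"[{i}] {p.doc_title}, {p.section} ({p.source_url})"
        for i, p in enumerate(passages, start=1)
    ]
```

Keeping the numbering identical in the prompt and in the citation list is what lets a user trace a bracketed reference in the answer back to a specific document and section.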

Why metadata and retrieval matter for governance

For universities, adoption is not judged only by response quality. Governance, traceability, and operational control matter from the beginning. OCI services let institutions keep policy content and orchestration inside the institution’s OCI tenancy, with access governed through OCI Identity and Access Management and activity logged through OCI Audit.

Indexing source content in OpenSearch with metadata can also strengthen traceability because responses can be tied back to the right document set, filtered by institutional context, and reviewed with clearer visibility into ownership, recency, and approval status.
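One way to picture that traceability is a small record that ties each response to the metadata of the sources it used and raises flags for reviewers. This is a sketch under stated assumptions: the `SourceRef` type, the `review_flags` function, and the 365-day review threshold are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class SourceRef:
    doc_id: str
    policy_owner: str
    effective_date: date
    approval_status: str


def review_flags(sources: List[SourceRef], today: date, max_age_days: int = 365) -> List[str]:
    """List reasons a reviewer might want to look at a response's sources."""
    flags = []
    for src in sources:
        if src.approval_status != "approved":
            flags.append(f"{src.doc_id}: not approved ({src.approval_status})")
        # Age threshold is an arbitrary illustration, not a policy rule.
        if (today - src.effective_date).days > max_age_days:
            flags.append(f"{src.doc_id}: effective date older than {max_age_days} days")
    return flags
```

A record like this could be stored alongside each response so that reviewers can see which office owns a cited document and whether it was current and approved when the answer was generated.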

Just as important, the assistant should be positioned as assistive rather than determinative. It can help users find relevant policy, summarize approved guidance, and route routine questions more efficiently. It should not replace institutional review, legal interpretation, export determinations, IRB oversight, or sponsor-specific compliance judgment.

How the use case can expand over time

Universities that start with question answering can build on the same foundation. A source-grounded assistant improves support when users ask questions, and it can also support a more proactive workflow.

Federal guidance changes over time, and institutions often discover meaningful updates only after staff manually review new notices, agency pages, or regulatory materials. With OCI Generative AI Agents, institutions can extend the architecture by using API endpoint calling tools to retrieve public agency guidance and compare that material with indexed internal policy using RAG-based retrieval. When the system identifies a possible mismatch, a function calling tool can route a summary to the appropriate compliance team for review.

That workflow does not make compliance determinations. It gives staff an earlier signal that something may need attention. For lean teams, that can be a practical way to move from purely reactive support toward earlier policy review.
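The flag-and-route step can be sketched in a few lines. The example below uses a simple lexical similarity score from Python's standard library as a stand-in for the retrieval and model-based comparison described above, so it should be read as an illustration of the control flow rather than of the comparison itself. The `PolicyPassage` type, the function names, the ticket fields, and the 0.6 threshold are hypothetical.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class PolicyPassage:
    doc_id: str
    owner_office: str
    text: str


def similarity(a: str, b: str) -> float:
    """Crude lexical similarity in [0, 1]; a placeholder for a real comparison."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def flag_possible_mismatch(
    agency_text: str, passage: PolicyPassage, threshold: float = 0.6
) -> Optional[dict]:
    """Return a review request when the texts diverge, else None."""
    score = similarity(agency_text, passage.text)
    if score >= threshold:
        return None
    # The request only asks for human review; it makes no determination.
    return {
        "route_to": passage.owner_office,
        "doc_id": passage.doc_id,
        "similarity": round(score, 2),
        "summary": "Agency guidance may differ from internal policy; review requested.",
    }
```

The function returns a request for review rather than a verdict, which mirrors the assistive role described above: the owning office decides whether the policy actually needs to change.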

Why research compliance is a strong first use case

Research compliance is a strong first use case because the demand is persistent, the burden is visible, and the source material already exists. A well-scoped assistant can help institutions improve access to policy, support consistency, and reduce administrative friction in high-stakes workflows.

With OCI Generative AI Agents, universities can start with a targeted use case for trusted policy access and expand over time into adjacent workflows such as policy monitoring, routing, and staff support.

Start exploring

If your institution is working through fragmented policy access, staff turnover, or reactive compliance workflows, this is a practical architecture to evaluate. Start by identifying one high-friction compliance domain, curating approved source documents, and testing a narrowly scoped assistant with clear governance boundaries.

To learn more, explore these Oracle resources:

- OCI Generative AI Agents overview and getting started

- Knowledge bases and RAG tools in OCI Generative AI Agents

- API endpoint calling tools in OCI Generative AI Agents

- Function calling tools in OCI Generative AI Agents

- OCI Search with OpenSearch

- OCI Document Understanding

- OCI Identity and Access Management

- OCI Free Tier

- Oracle Higher Education solutions