We are pleased to announce the certification of Oracle WebLogic Server on Kubernetes! As part of this certification, we are releasing a sample on GitHub that creates Oracle WebLogic Server 12.2.1.3, 12.2.1.4, and 14.1.1.0 domain images running on Kubernetes. We are also publishing a series of blogs that describe in detail the WebLogic Server configuration and feature support, as well as best practices. A video of a WebLogic Server domain running in Kubernetes can be seen at WebLogic Server on Kubernetes Video.

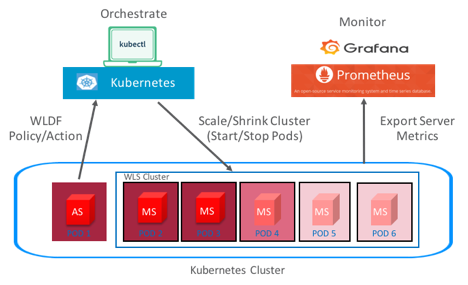

Kubernetes is an open-source system for automating deployment, scaling, and management of containerized applications. It supports a range of container tools, including Docker. Oracle WebLogic Server configurations running in Docker containers can be deployed and orchestrated on Kubernetes platforms.

For additional information about Docker certification with Oracle WebLogic Server, see My Oracle Support Doc ID 2017945.1. For information about support for running WebLogic Server domains on Kubernetes platforms other than Oracle Linux, or with a network fabric other than Flannel, see My Oracle Support Doc ID 2349228.1. For the most current information on supported configurations, see the Oracle Fusion Middleware Supported System Configurations page on Oracle Technology Network.

This certification enables users to create clustered and nonclustered Oracle WebLogic Server domain configurations, including both development and production modes, running on Kubernetes clusters. This certification includes support for the following:

- Running one or more WebLogic domains in a Kubernetes cluster

- Single or multiple node Kubernetes clusters

- WebLogic managed servers in clustered and nonclustered configurations

- WebLogic Server Configured and Dynamic clusters

- Unicast WebLogic Server cluster messaging protocol

- Load balancing HTTP requests using Træfik or Voyager as Ingress controllers on Kubernetes clusters, or using Apache

- HTTP session replication

- JDBC communication with external database systems

- JMS

- JTA

- JDBC store and file store using persistent volumes

- Inter-domain communication (JMS, Transactions, EJBs, and so on)

- Auto scaling of a WebLogic cluster

- Integration with Prometheus monitoring using the WebLogic Monitoring Exporter

- RMI communication from outside and inside the Kubernetes cluster

- Upgrading applications

- Patching WebLogic domains

- Service migration of singleton services

- Database leasing

In this certification of WebLogic Server on Kubernetes, the following configurations and features are not supported:

- WebLogic domains spanning Kubernetes clusters

- Whole server migration

- Use of Node Manager for WebLogic Server lifecycle management (start/stop)

- Consensus leasing

- Multicast WebLogic Server cluster messaging protocol

- Multitenancy

- Production redeployment

- Flannel with portmap

We have released a sample on GitHub (https://github.com/oracle/docker-images/tree/master/OracleWebLogic/samples/wls-k8s-domain) that shows how to create and run a WebLogic Server domain on Kubernetes. The README.md in the sample provides all the steps. This sample extends the certified Oracle WebLogic Server 12.2.1.3 developer install image by creating a sample domain and cluster that runs on Kubernetes. The WebLogic domain consists of an Administration Server and several managed servers running in a WebLogic cluster. All WebLogic Server instances share the same domain home, which is mapped to an external volume. The persistent volumes must have the correct read/write permissions so that all WebLogic Server instances have access to the files in the domain home. Check out the best practices in the blog WebLogic Server on Kubernetes Data Volume Usage, which explains the WebLogic Server services and files that are typically configured to leverage shared storage, and provides full end-to-end samples that show mounting shared storage for a WebLogic domain that is orchestrated by Kubernetes.
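To make the shared domain home idea concrete, here is a minimal Python sketch that builds the Kubernetes objects involved: a PersistentVolumeClaim and the matching pod volume and container mount entries. It is not taken from the sample; the claim name `wls-domain-pvc`, the mount path `/u01/domains`, and the `10Gi` size are hypothetical placeholders, and the `ReadWriteMany` access mode assumes a storage backend that supports it.

```python
import json


def shared_domain_pvc(name: str, storage: str = "10Gi") -> dict:
    """Build a PersistentVolumeClaim manifest for a shared domain home."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # ReadWriteMany allows pods on different nodes to mount the
            # volume read/write; the storage backend must support it.
            "accessModes": ["ReadWriteMany"],
            "resources": {"requests": {"storage": storage}},
        },
    }


def domain_home_mount(claim_name: str, mount_path: str) -> tuple[dict, dict]:
    """Return the pod-level volume and container-level volumeMount entries."""
    volume = {
        "name": "domain-home",
        "persistentVolumeClaim": {"claimName": claim_name},
    }
    mount = {"name": "domain-home", "mountPath": mount_path}
    return volume, mount


if __name__ == "__main__":
    # Hypothetical names and paths, for illustration only.
    pvc = shared_domain_pvc("wls-domain-pvc")
    volume, mount = domain_home_mount("wls-domain-pvc", "/u01/domains")
    print(json.dumps({"pvc": pvc, "volume": volume, "volumeMount": mount}, indent=2))
```

Every server pod would reference the same claim, so the Administration Server and the managed servers all see one copy of the domain files. The README.md in the sample remains the authoritative source for the actual manifests.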

After you have this domain up and running you can deploy JMS and JDBC resources. The blog Run a WebLogic JMS Sample on Kubernetes provides a step-by-step guide to configure and run a sample WebLogic JMS application in a Kubernetes cluster. This blog also describes how to deploy WebLogic JMS and JDBC resources, deploy an application, and then run the application.

This application is based on a sample application named ‘Classic API – Using Distributed Destination’ that is included in the WebLogic Server sample applications. The application implements a scenario in which employees submit their names when they are at work, and a supervisor monitors employee arrival time. Employees choose whether to send their check-in messages to a distributed queue or a distributed topic. These destinations are configured on a cluster with two active managed servers. Two message-driven beans (MDBs), corresponding to these two destinations, are deployed to handle the check-in messages and store them in a database. A supervisor can then scan all of the check-in messages by querying the database.

The follow-up blog, Run Standalone WebLogic JMS Clients on Kubernetes, expands on the previous blog and demonstrates running standalone JMS clients that communicate with each other through WebLogic JMS services, with database-based message persistence.

WebLogic Server and Kubernetes each provide a rich set of features to support application deployment. As part of the process of certifying WebLogic Server on Kubernetes, we have identified a set of best practices for deploying Java EE applications on WebLogic Server instances that run in Kubernetes and Docker environments. The blog Best Practices for Application Deployment on WebLogic Server Running on Kubernetes describes these best practices. They include the general recommendations described in Deploying Applications to Oracle WebLogic Server, and also include the application deployment features provided in Kubernetes.

One of the most important tasks in providing optimal performance and security of any software system is to make sure that the latest software updates are installed, tested, and rolled out promptly and efficiently with minimal disruption to system availability. Oracle provides different types of patches for WebLogic Server, such as Patch Set Updates and One-Off patches. The patches you install, and the way in which you install them, depend on your specific needs and environment.

In Kubernetes, Docker, and on-premises environments, we use the same OPatch tool to patch WebLogic Server. However, with Kubernetes orchestrating the cluster, we can leverage the update strategy options in the StatefulSet controller to roll out the patch from an updated WebLogic Server image. The blog Patching WebLogic Server in a Kubernetes Environment explains how.
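As a rough illustration of the StatefulSet update strategy, the Python sketch below builds a patch body that switches a StatefulSet to the `RollingUpdate` strategy and points a container at a patched image. The container name and image tag are hypothetical, and the blog referenced above may use a different procedure; this only shows the shape of the relevant `spec.updateStrategy` and `spec.template` fields.

```python
import json


def rolling_patch(container_name: str, new_image: str, partition: int = 0) -> dict:
    """Build a patch body that rolls a StatefulSet to a new image."""
    return {
        "spec": {
            "updateStrategy": {
                "type": "RollingUpdate",
                # Only pods with an ordinal >= partition are updated;
                # 0 means every pod is eventually replaced.
                "rollingUpdate": {"partition": partition},
            },
            "template": {
                "spec": {
                    # In a strategic merge patch, containers are matched by name.
                    "containers": [{"name": container_name, "image": new_image}]
                }
            },
        }
    }


if __name__ == "__main__":
    # Hypothetical container name and image tag, for illustration only.
    patch = rolling_patch("managed-server", "example/weblogic:12.2.1.3-patched")
    print(json.dumps(patch))
```

The printed JSON could be passed to `kubectl patch statefulset <name> --patch '<json>'`. Setting `partition` to a value above 0 allows a staged rollout in which only the higher-ordinal pods pick up the patched image first.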

And, of course, a very important aspect of certification is security. We have identified best practices for securing Docker and Kubernetes environments when running WebLogic Server, explained in the blog Security Best Practices for WebLogic Server Running in Docker and Kubernetes.

These best practices are in addition to the general WebLogic Server recommendations documented in Securing a Production Environment for Oracle WebLogic Server 12c.

In the area of monitoring and diagnostics, we have developed a new open source tool, the WebLogic Monitoring Exporter. WebLogic Server generates a rich set of metrics and runtime state information that provides detailed performance and diagnostic data about the servers, clusters, applications, and other resources that are running in a WebLogic domain. This tool leverages WebLogic’s monitoring and diagnostics capabilities when running in Docker/Kubernetes environments.

The blog Announcing The New Open Source WebLogic Monitoring Exporter on GitHub describes how to build the exporter from a Dockerfile and source code in the GitHub project https://github.com/oracle/weblogic-monitoring-exporter. The exporter is implemented as a web application that is deployed to the WebLogic Server managed servers in the WebLogic cluster that will be monitored. For detailed information about the design and implementation of the exporter, see Exporting Metrics from WebLogic Server.

After the exporter has been deployed to the running managed servers in the cluster and is gathering metrics and statistics, the data is ready to be collected and displayed via Prometheus and Grafana. The blog entry Using Prometheus and Grafana to Monitor WebLogic Server on Kubernetes steps you through collecting metrics in Prometheus and displaying them in Grafana dashboards.
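Prometheus collects metrics by scraping a plain-text exposition format. The Python sketch below parses a small sample of that format to show what the scraped data looks like. The metric name `wls_sample_open_sessions` and its labels are invented for illustration and are not actual exporter output, and the parser is deliberately simplified (it does not handle every escaping rule of the format).

```python
import re

# metric_name{optional labels} value [optional timestamp]
_SAMPLE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(.*)\})?\s+(\S+)')
_LABEL = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def parse_metrics(text: str) -> list[tuple[str, dict, float]]:
    """Parse Prometheus text-format samples into (name, labels, value)."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines and "# HELP" / "# TYPE" comment lines.
        if not line or line.startswith("#"):
            continue
        match = _SAMPLE.match(line)
        if match is None:
            continue
        name, raw_labels, value = match.groups()
        labels = dict(_LABEL.findall(raw_labels or ""))
        samples.append((name, labels, float(value)))
    return samples


# Hypothetical scrape output, for illustration only.
SAMPLE_TEXT = """\
# HELP wls_sample_open_sessions Hypothetical metric name for illustration.
# TYPE wls_sample_open_sessions gauge
wls_sample_open_sessions{server="managed-server-1",app="checkin"} 7
wls_sample_open_sessions{server="managed-server-2",app="checkin"} 5
"""

if __name__ == "__main__":
    for name, labels, value in parse_metrics(SAMPLE_TEXT):
        print(name, labels["server"], value)
```

Because each managed server reports its own samples, labels such as the server name are what let Grafana dashboards break a metric down per server or aggregate it across the cluster.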

Elasticity (scaling up or scaling down) of a WebLogic Server cluster provides increased reliability of customer applications as well as optimization of resource usage. The WebLogic Server cluster can be automatically scaled by increasing (or decreasing) the number of pods based on resource metrics provided by the WebLogic Diagnostic Framework (WLDF). When the WebLogic cluster scales up or down, WebLogic Server capabilities like HTTP session replication and service migration of singleton services are leveraged to provide the highest possible availability. Refer to the blog entry Automatic Scaling of WebLogic Clusters on Kubernetes for an illustration of automatic scaling of a WebLogic Server cluster in a Kubernetes cloud environment.
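The scaling decision itself can be pictured with a small Python sketch. This is a simplification under stated assumptions, not the WLDF mechanism: in the setup described above, the policy evaluation happens inside WLDF, whereas here a single metric value is compared against two hypothetical thresholds and the resulting replica count is clamped to a configured range.

```python
def desired_replicas(
    current: int,
    metric: float,
    scale_up_at: float,
    scale_down_at: float,
    min_replicas: int,
    max_replicas: int,
) -> int:
    """Return the managed server count after one scaling decision."""
    if metric > scale_up_at:
        target = current + 1   # load is high: add one managed server pod
    elif metric < scale_down_at:
        target = current - 1   # load is low: remove one managed server pod
    else:
        target = current       # within the band: leave the cluster as is
    # Never leave the configured cluster size range.
    return max(min_replicas, min(max_replicas, target))


if __name__ == "__main__":
    # Hypothetical thresholds: scale up above 80, scale down below 20.
    print(desired_replicas(2, 95.0, 80.0, 20.0, 1, 4))
```

Keeping a gap between the scale-up and scale-down thresholds avoids the cluster flapping between two sizes when the metric hovers near a single cutoff.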

In addition to certifying WebLogic Server on Kubernetes, the WebLogic Server team has developed a WebLogic Kubernetes Operator. A Kubernetes Operator is “an application specific controller that extends the Kubernetes API to create, configure, and manage instances of complex applications”. Please refer to the WebLogic Kubernetes Operator documentation for Quick Start guides, samples, and information about how the operator can manage the life cycle of the WebLogic domain.

The certification of WebLogic Server on Kubernetes encompasses all the various WebLogic configurations and capabilities described in this blog. Our intent is to enable you to run WebLogic Server in Kubernetes, to run WebLogic Server in the Kubernetes-based Oracle Container Engine that Oracle intends to release shortly, and to enable integration of WebLogic Server applications with applications developed on our Kubernetes-based Container Native Application Development Platform. We hope this information is helpful to customers seeking to deploy WebLogic Server on Kubernetes, and we look forward to your feedback.