Zero copy networking has long been a goal of Linux networking, and over the years many techniques have been developed in the mainline Linux kernel to achieve it. This blog post highlights recent enhancements to zero copy networking [1]. All of these enhancements are included in UEK6.

Zero Copy Send

Zero copy send avoids copying on transmit by pinning down the send buffers. It introduces a new socket option, SO_ZEROCOPY, and a new send flag, MSG_ZEROCOPY.

To use this feature, the SO_ZEROCOPY socket option must be set on the socket and the send calls must pass the MSG_ZEROCOPY flag.

setsockopt(fd, SOL_SOCKET, SO_ZEROCOPY, &one, sizeof(one));

send(fd, buf, sizeof(buf), MSG_ZEROCOPY);
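The two calls above can be wrapped into small helpers. The following is a minimal sketch, not a definitive implementation: the function names `enable_zerocopy` and `send_zerocopy` are hypothetical, and the fallback `#define` values are the ones used by the generic Linux UAPI headers (a few architectures use different numbers), provided only in case the installed libc headers predate the feature.

```c
#include <errno.h>
#include <stddef.h>
#include <sys/socket.h>
#include <sys/types.h>

/* Fallbacks for older libc headers (asm-generic values; assumption). */
#ifndef SO_ZEROCOPY
#define SO_ZEROCOPY 60
#endif
#ifndef MSG_ZEROCOPY
#define MSG_ZEROCOPY 0x4000000
#endif

/* Opt the socket in to zero copy sends. Returns 0 on success, -1 on error. */
int enable_zerocopy(int fd)
{
    int one = 1;

    return setsockopt(fd, SOL_SOCKET, SO_ZEROCOPY, &one, sizeof(one));
}

/*
 * Queue buf for transmission with MSG_ZEROCOPY.
 * The caller must leave buf untouched until the kernel reports
 * completion on the socket error queue.
 * Returns the number of bytes queued, or -1 with errno set.
 */
ssize_t send_zerocopy(int fd, const void *buf, size_t len)
{
    return send(fd, buf, len, MSG_ZEROCOPY);
}
```

A caller would typically invoke `enable_zerocopy()` once after creating the socket and then use `send_zerocopy()` for large writes only.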

Once the kernel has transmitted the buffer, it notifies the application via the socket error queue (read with MSG_ERRQUEUE) that the buffer is available for reuse. Each successful send is identified by a 32-bit sequentially increasing number, so the application can correlate sends and buffers. A notification may contain a range that covers several sends. Zero copy and non zero copy sends can be intermixed.

recvmsg(fd, &msg, MSG_ERRQUEUE);

Due to the overhead associated with pinning down pages, MSG_ZEROCOPY is generally only effective at writes larger than 10 KB.

MSG_ZEROCOPY is supported for TCP, UDP, RAW, and RDS TCP sockets.

For various reasons, zero copy may not always be possible, in which case the kernel falls back to sending a copy of the data.

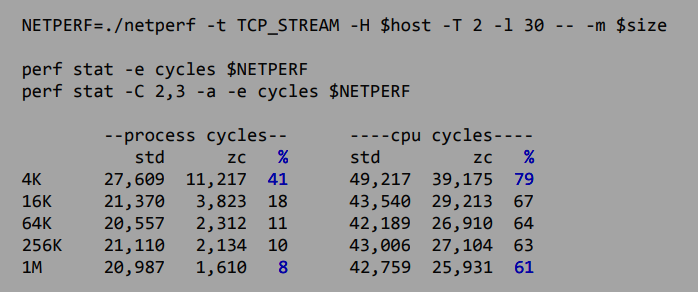

Performance

Process cycle measurements were made across all CPUs in the system.

CPU cycle measurements were made only on the CPUs on which the application ran.

Cycles measured are UNHALTED_CORE_CYCLES.

TCP Zero Copy Receive

Zero copy receive is a much harder problem to solve, as it requires the data buffer to be page aligned before it can be mmap()ed. The presence of protocol headers and a small MTU size inhibit this alignment.

The new zero copy receive API introduces mmap() for TCP sockets. An mmap() call on a TCP socket carves out address space where an incoming data buffer can be mapped, provided the data buffer is page aligned and urgent data is not present. Note that it does not require the size to be a multiple of the page size.

To use this feature, the application issues an mmap() call on the TCP socket to obtain an address range for mapping data buffers. When data becomes available for reading, the application issues a getsockopt() call with the TCP_ZEROCOPY_RECEIVE option to pass the address information to the kernel. The following structure is used to pass the mapping information: address contains the address where the mapping begins, and length contains the length of the mapped space.

    struct tcp_zerocopy_receive {
        __u64 address;        /* Input: address of the mmap()-carved space */
        __u32 length;         /* Input/Output: length of address space; amount of data mapped */
        __u32 recv_skip_hint; /* Output: bytes to read via recvmsg() before reading the mmap region */
    };

If the getsockopt() call is successful, the kernel has mapped the data buffer at the address passed in. On return, length contains the length of the mapped data. In certain circumstances it may not be possible to map all of the data, in which case recv_skip_hint contains the length of data to be read via regular recv calls before reading the mapped data. If no data can be mapped, getsockopt() returns with an error and regular recv calls have to be used.

Once the data has been consumed, the mmap address space can be freed for reuse via another TCP_ZEROCOPY_RECEIVE call. The kernel will reuse the address space for mapping the next data buffer. munmap() can be used to unmap and release the address space. Note that reuse does not require another mmap() call.
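The receive flow described above might look roughly like the following sketch. It assumes kernel headers recent enough to provide `struct tcp_zerocopy_receive` and `TCP_ZEROCOPY_RECEIVE` in `<linux/tcp.h>`; the helper names `zc_reserve` and `zc_receive` are hypothetical, and error handling is reduced to the minimum.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>
#include <sys/mman.h>
#include <sys/socket.h>
#include <netinet/in.h>
#include <linux/tcp.h>  /* struct tcp_zerocopy_receive, TCP_ZEROCOPY_RECEIVE */

/*
 * Reserve len bytes of address space on the TCP socket fd.
 * Returns the start of the range, or NULL on failure.
 */
void *zc_reserve(int fd, size_t len)
{
    void *addr = mmap(NULL, len, PROT_READ, MAP_SHARED, fd, 0);

    return addr == MAP_FAILED ? NULL : addr;
}

/*
 * Ask the kernel to map received data into [addr, addr + len).
 * Returns the number of bytes mapped, or -1 on error, in which case the
 * caller falls back to regular recv(). On success, *skip_hint holds the
 * number of bytes that must first be read with regular recv calls.
 */
long zc_receive(int fd, void *addr, size_t len, uint32_t *skip_hint)
{
    struct tcp_zerocopy_receive zc;
    socklen_t zc_len = sizeof(zc);

    memset(&zc, 0, sizeof(zc));
    zc.address = (uint64_t)(uintptr_t)addr;
    zc.length = (uint32_t)len;

    if (getsockopt(fd, IPPROTO_TCP, TCP_ZEROCOPY_RECEIVE, &zc, &zc_len) == -1)
        return -1;

    *skip_hint = zc.recv_skip_hint;
    return (long)zc.length;
}
```

An application would call `zc_reserve()` once, then call `zc_receive()` with the same range each time the socket becomes readable; each call also releases the pages mapped by the previous one.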

This API shows performance gains only when conditions pertaining to buffer alignment are met. So this is not a general purpose API.

On a setup using header-splitting-capable NICs and an MTU of 61512 bytes, data processing went from 129µs/MB to just 45µs/MB.

AF_XDP, Zero Copy

AF_XDP sockets have been enhanced to support zero copy send and receive by using Rx and Tx rings in user space.

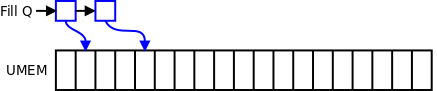

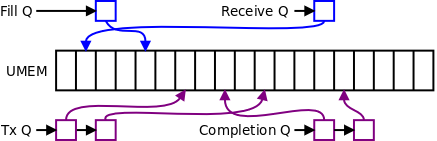

A large chunk of memory called UMEM is allocated. This memory is registered with the socket via the XDP_UMEM_REG setsockopt() option. The block of memory is further divided into smaller chunks called descriptors, which are addressed by their index within the UMEM block. Descriptors are used as send and receive buffers. UMEM can be shared between different sockets and processes.
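A minimal sketch of UMEM registration follows, assuming `struct xdp_umem_reg` and `XDP_UMEM_REG` from `<linux/if_xdp.h>`. The chunk size, chunk count, and the names `umem_chunk_offset` and `umem_register` are illustrative assumptions. The mainline header expresses ring entries as byte offsets into the UMEM, so the sketch includes a helper that converts a chunk index into such an offset.

```c
#define _GNU_SOURCE
#include <stddef.h>
#include <stdint.h>
#include <string.h>
#include <sys/mman.h>
#include <sys/socket.h>
#include <linux/if_xdp.h>  /* struct xdp_umem_reg, XDP_UMEM_REG */

#ifndef SOL_XDP
#define SOL_XDP 283        /* value from the kernel's socket.h */
#endif

#define CHUNK_SIZE 2048u   /* illustrative chunk size (assumption) */
#define NUM_CHUNKS 4096u   /* illustrative chunk count (assumption) */

/* Byte offset of chunk `index` from the start of the UMEM. */
uint64_t umem_chunk_offset(uint32_t index)
{
    return (uint64_t)index * CHUNK_SIZE;
}

/*
 * Allocate a page-aligned UMEM area and register it with the AF_XDP
 * socket xsk_fd. Returns the area, or NULL on failure.
 */
void *umem_register(int xsk_fd)
{
    size_t len = (size_t)NUM_CHUNKS * CHUNK_SIZE;
    struct xdp_umem_reg reg;
    void *area;

    area = mmap(NULL, len, PROT_READ | PROT_WRITE,
                MAP_PRIVATE | MAP_ANONYMOUS, -1, 0);
    if (area == MAP_FAILED)
        return NULL;

    memset(&reg, 0, sizeof(reg));
    reg.addr = (uint64_t)(uintptr_t)area;
    reg.len = len;
    reg.chunk_size = CHUNK_SIZE;
    reg.headroom = 0;

    if (setsockopt(xsk_fd, SOL_XDP, XDP_UMEM_REG, &reg, sizeof(reg)) == -1) {
        munmap(area, len);  /* do not leak the area on failure */
        return NULL;
    }
    return area;
}
```

The socket itself would be created beforehand with `socket(AF_XDP, SOCK_RAW, 0)`, which requires a kernel built with AF_XDP support and sufficient privileges.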

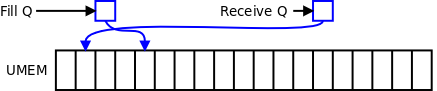

To use the chunks as Rx buffers, two circular queues, the ‘fill queue’ and the ‘receive queue’, are created. The ‘fill queue’ is used to pass the kernel the index of the descriptor within UMEM into which data should be placed; the ‘receive queue’ is updated by the kernel with the indexes that have been used.

The ‘fill queue’ is allocated via the setsockopt XDP_UMEM_FILL_RING and is mmaped in user space.

The ‘receive queue’ is allocated via the setsockopt XDP_RX_RING and is also mmaped in user space. Once the kernel has placed data in one of the descriptors whose index was provided in the fill queue, it puts that descriptor’s index in this queue to indicate that it has been used and that data is available to be read.

A similar mechanism is used for Tx. A transmission queue ‘Tx Q’ is created via the setsockopt XDP_TX_RING, and the UMEM indexes of packets to be transmitted are placed in this queue. A ‘Completion Q’ is created via the setsockopt XDP_UMEM_COMPLETION_RING. The kernel adds the UMEM indexes of buffers that have been transmitted to the Completion Q.
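The queue setup can be sketched as follows. The mainline `<linux/if_xdp.h>` header names the four options `XDP_UMEM_FILL_RING`, `XDP_UMEM_COMPLETION_RING`, `XDP_RX_RING` and `XDP_TX_RING`, and each takes the number of entries as its value. The helper names `xsk_create_rings` and `xsk_map_fill_ring` are hypothetical, and only the fill queue is mapped here; the other three would be mapped the same way with their own page offsets.

```c
#include <stddef.h>
#include <stdint.h>
#include <sys/mman.h>
#include <sys/socket.h>
#include <linux/if_xdp.h>

#ifndef SOL_XDP
#define SOL_XDP 283  /* value from the kernel's socket.h */
#endif

/*
 * Ask the kernel to allocate all four queues with `entries` slots each
 * (the kernel expects a power of two). Returns 0 on success, -1 on error.
 */
int xsk_create_rings(int xsk_fd, int entries)
{
    static const int opts[] = {
        XDP_UMEM_FILL_RING, XDP_UMEM_COMPLETION_RING,
        XDP_RX_RING, XDP_TX_RING,
    };
    size_t i;

    for (i = 0; i < sizeof(opts) / sizeof(opts[0]); i++) {
        if (setsockopt(xsk_fd, SOL_XDP, opts[i],
                       &entries, sizeof(entries)) == -1)
            return -1;
    }
    return 0;
}

/*
 * Map the fill queue into user space. On success returns the base of the
 * mapping and fills *off with the producer, consumer and descriptor
 * offsets reported by the kernel. Returns NULL on failure.
 */
void *xsk_map_fill_ring(int xsk_fd, int entries, struct xdp_mmap_offsets *off)
{
    socklen_t optlen = sizeof(*off);
    void *ring;

    if (getsockopt(xsk_fd, SOL_XDP, XDP_MMAP_OFFSETS, off, &optlen) == -1)
        return NULL;

    /* Each fill queue entry is a 64-bit UMEM address. */
    ring = mmap(NULL, off->fr.desc + (size_t)entries * sizeof(uint64_t),
                PROT_READ | PROT_WRITE, MAP_SHARED,
                xsk_fd, XDP_UMEM_PGOFF_FILL_RING);
    return ring == MAP_FAILED ? NULL : ring;
}
```

In practice most applications leave this bookkeeping to the AF_XDP helpers shipped with libbpf rather than issuing the calls directly.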

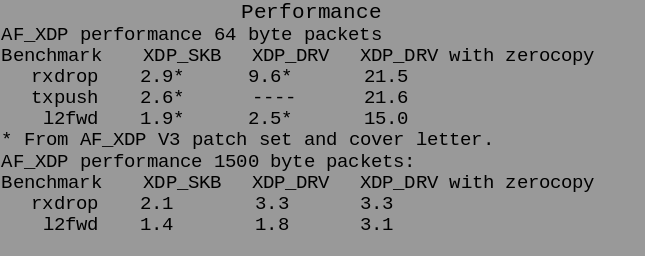

Performance

Performance improved from 15 Mpps to 39 Mpps for Rx and from 25 Mpps to 68 Mpps for Tx. This enhancement makes XDP performance on par with DPDK.

Use of this feature requires that the application create its own packets by inserting all the necessary headers, and that it also perform protocol processing. It also requires the use of libbpf. Similar requirements exist when using DPDK.

Conclusion

UEK6 delivers continued network performance enhancements and new technology to build faster networking products.

References:

The material presented has been borrowed from several sources; some are listed below.