Originally published March 26, 2020

High-performance database deployments rely on effective management of system resources. Only Oracle Exadata provides fully integrated management of system resources including Oracle databases, server compute resources, and I/O.

In this article, we will explore how resources can be managed across Container Databases and across non-Container databases.

Demo Video

This demo video shows how to manage resources BETWEEN Container Databases and non-Container databases running on a single Exadata Quarter Rack. The demo shows how Resource Manager controls system resources across databases including CPU and I/O.

The full demo can be viewed here: https://youtu.be/sPxaHvG0_yA

Across Databases & Within Databases

The two major use cases for Oracle Resource Manager are managing resource allocations across databases and within databases. Across databases, Resource Manager ensures that each database receives its allotted resources and prevents the “noisy neighbor” problem. Within a single database, Resource Manager uses Consumer Groups to prevent users or jobs from consuming too many resources.

Virtual Machines & Resource Manager on Exadata

While Oracle Exadata has commonly been deployed on-premises in a Bare Metal configuration, the use of Virtual Machines on Exadata has become much more common. All Exadata machines in the Oracle Public Cloud and Cloud at Customer are deployed using virtualization. Oracle Database Resource Manager (DBRM) is completely independent of the server configuration and manages resources within either Bare Metal database servers or Virtual Machines on Exadata. Likewise, Exadata I/O Resource Manager (IORM) is independent of the database server configuration, but it is tightly integrated with DBRM to control the I/O resources associated with each database.

Full Stack Resource Management on Exadata

The full stack of resources can be managed with a single set of resource controls on Exadata using the combination of Oracle Database Resource Manager (DBRM) and Exadata I/O Resource Manager (IORM). These tools are fully integrated with each other to provide a single set of controls, as we will see in this article and the accompanying video. The resources that need to be managed are:

- CPU

- Memory

- Processes

- Storage Space

- I/O

CPU is managed by Oracle Database Resource Manager (DBRM) for Container Databases, Pluggable Databases, and conventional non-Pluggable Databases. Our previous demo showed how to manage CPU between Pluggable Databases within a single Container Database using Shares and Limits. This demo shows how to manage CPU between Container Databases and non-Pluggable Databases using the Instance Caging feature of Resource Manager.

Memory for a database (PDB, CDB, or non-PDB) is controlled using the database instance-level settings for the System Global Area (SGA) and Program Global Area (PGA). The memory within a Bare Metal server or Virtual Machine is a finite resource that should never be over-subscribed.

Processes on a Bare Metal server or Virtual Machine are another finite resource that must be managed for systems to perform properly. The number of processes used by a database is controlled through initialization parameters for sessions and parallel query processes, such as PROCESSES, SESSIONS, and PARALLEL_MAX_SERVERS.
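Because memory and processes are finite on each server or VM, a simple budget check can catch over-subscription before another database is added. The sketch below is a minimal illustration. It assumes you already know each instance's SGA size, PGA limit (for example from SGA_TARGET and PGA_AGGREGATE_LIMIT), and PROCESSES setting. The `InstanceBudget` class, the `check_host` function, and all numbers are hypothetical. `host_memory_gb` is assumed to already exclude the memory reserved for the operating system.

```python
from dataclasses import dataclass


@dataclass
class InstanceBudget:
    """Hypothetical per-instance settings on one Bare Metal server or VM."""
    name: str
    sga_gb: float   # e.g. SGA_TARGET, in GB
    pga_gb: float   # e.g. PGA_AGGREGATE_LIMIT, in GB
    processes: int  # e.g. the PROCESSES initialization parameter


def check_host(instances, host_memory_gb, host_process_limit):
    """Return a list of over-subscription problems (empty list means OK).

    ASSUMPTION: host_memory_gb is the memory left for databases after the
    OS reserve, and host_process_limit is a site-chosen ceiling.
    """
    problems = []
    memory = sum(i.sga_gb + i.pga_gb for i in instances)
    procs = sum(i.processes for i in instances)
    if memory > host_memory_gb:
        problems.append(f"memory over-subscribed: {memory} GB > {host_memory_gb} GB")
    if procs > host_process_limit:
        problems.append(f"processes over-subscribed: {procs} > {host_process_limit}")
    return problems


# Hypothetical example: two instances sharing one VM.
vm = [InstanceBudget("db1", 64, 32, 1000), InstanceBudget("db2", 48, 24, 800)]
print(check_host(vm, host_memory_gb=180, host_process_limit=4000))  # prints []
```

Summing the configured limits, rather than current usage, errs on the safe side. That matches the advice that these resources should never be over-subscribed.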

Storage space (disk and/or Flash) is controlled through Oracle Automatic Storage Management (ASM) disk group configurations on Exadata. Storage space is allocated through ASM to Bare Metal Clusters or Virtual Machine Clusters.

I/O resources are managed on Exadata using IORM (I/O Resource Manager). I/O resources include IOPS (Input/Output Operations Per Second), I/O bandwidth, and FlashCache space allocated to each database. Exadata also provides automatic prioritization of critical I/O, solving a common source of performance issues seen on non-Exadata platforms.

Resource Shapes on Exadata

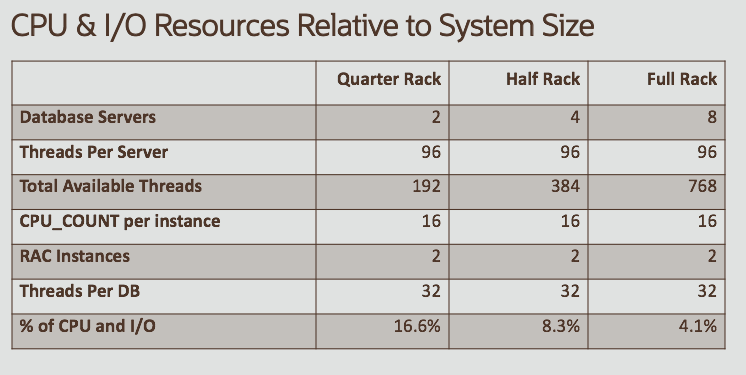

For databases consolidated on a single Exadata system, Oracle recommends establishing standardized resource shapes consisting of common ratios for CPU, Memory, Processes and I/O, then assigning those resource shapes to each database. The use of Resource Shapes simplifies system administration and capacity planning. Using this approach, CPU resource settings are controlled using DBRM, and the CPU ratios are then applied to I/O resources by setting the IORM Objective to “auto”. Thus, a single setting controls both CPU and I/O resources. CPU and I/O resources within a resource shape are relative to the overall size of the system. For example, a database with a given resource need (16 cores in each of 2 RAC instances) is 16.6% of the resources on a 1/4 rack, while the same database would be 8.3% of resources on a half rack, etc.

Using Resource Shapes with IORM Objective “AUTO” means customers only need to manage the CPU resources, and I/O is simply a function of CPU resources as shown in the table above. For more information on using Resource Shapes on Exadata, please refer to the Maximum Availability Architecture (MAA) white paper on Database Consolidation here: https://www.oracle.com/technetwork/database/availability/maa-consolidation-5648225.pdf
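The quarter rack versus half rack comparison in this section is plain proportion arithmetic, sketched below. The capacity totals are assumptions. They were chosen only so that the example of 16 CPUs in each of 2 RAC instances comes out at about one sixth of a 1/4 rack. Substitute the real CPU totals for your Exadata model and configuration. The function name is hypothetical.

```python
# ASSUMPTION: illustrative capacity totals, chosen so that 16 per instance
# x 2 RAC instances is about 1/6 of a 1/4 rack. Use your system's real totals.
QUARTER_RACK_CPUS = 192
HALF_RACK_CPUS = 2 * QUARTER_RACK_CPUS  # assumed: twice the database servers


def shape_share(cpus_per_instance, instances, system_cpus):
    """Fraction of a system's CPU that one resource shape represents.

    With the IORM objective set to "auto", the article describes the
    database's I/O share following this same CPU ratio.
    """
    return cpus_per_instance * instances / system_cpus


for label, total in [("1/4 rack", QUARTER_RACK_CPUS), ("1/2 rack", HALF_RACK_CPUS)]:
    print(f"{label}: {shape_share(16, 2, total):.1%} of CPU (and, per the article, of I/O)")
```

The half rack is modelled with twice the capacity, so the same shape comes out at half the percentage. The script rounds one sixth to 16.7%.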

Demo Workload

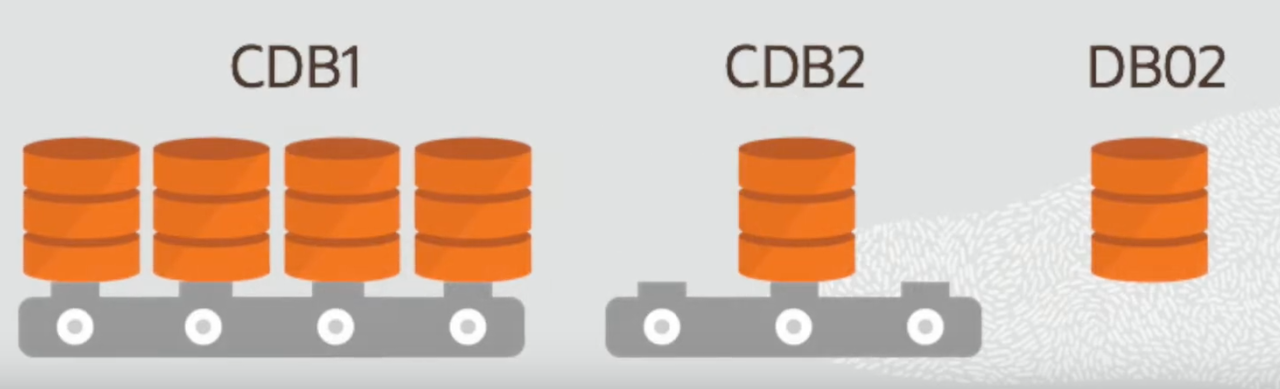

As with our previous Resource Manager demo, this second demo also uses Swingbench (from Dominic Giles) to drive the workloads on the demo system. This demo uses 6 databases in total, including 4 databases from the previous demo, 1 Container Database with a single Pluggable Database, as well as 1 non-Pluggable database. The purpose of this demo is to show how resources are managed across Containers or Databases rather than simply within a Container.

Swingbench can be downloaded from Dominic’s website here: http://dominicgiles.com/swingbench.html

Instance Caging

When Resource Manager is not enabled, the CPU_COUNT setting for a database (CDB, PDB, or non-PDB) simply reflects the number of CPU hyperthreads on the host database server. In a Real Application Clusters (RAC) environment, it reflects the hyperthreads on each instance's server. However, when Resource Manager is enabled, CPU_COUNT can be used to restrict the number of CPU hyperthreads each instance can use. This feature is known as Instance Caging.
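The rule just described can be written down as a small model. This is a sketch of the behaviour described in this section, not Oracle code. `usable_threads` returns how many hyperthreads an instance may use with and without Resource Manager. `is_partitioned` checks whether the cages on one host add up to no more than its hyperthreads, so that no two instances overlap. The function names and the 96-thread host are hypothetical.

```python
def usable_threads(host_threads, cpu_count, resource_manager_enabled):
    """Hyperthreads one instance may use under the Instance Caging rule.

    Without an active resource plan, CPU_COUNT is not enforced as a cage,
    so the instance can use every hyperthread on its host.
    """
    if not resource_manager_enabled:
        return host_threads
    return min(cpu_count, host_threads)


def is_partitioned(cpu_counts, host_threads):
    """True if the instance cages on one host do not overlap."""
    return sum(cpu_counts) <= host_threads


# Hypothetical host with 96 hyperthreads and three caged instances.
print(usable_threads(96, 32, resource_manager_enabled=False))  # 96
print(usable_threads(96, 32, resource_manager_enabled=True))   # 32
print(is_partitioned([32, 32, 16], 96))                        # True
```

If `is_partitioned` returns False, the cages over-provision the host. Each instance is still capped, but together the instances can contend for CPU.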

Default Plan & Default CDB Plan

Instance Caging is enabled by setting CPU_COUNT and activating a resource plan. Set RESOURCE_MANAGER_PLAN to DEFAULT_PLAN for non-Pluggable (non-Container) databases, or to DEFAULT_CDB_PLAN for Container databases. Each Pluggable Database within a Container can then use either Shares & Limits, as seen in the previous video, or its own CPU_COUNT setting. Either approach gives each Pluggable Database access to a defined amount of CPU resources.
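The hypothetical helper below sketches the two settings involved. It builds the ALTER SYSTEM statements a DBA might run to cage a database: CPU_COUNT plus RESOURCE_MANAGER_PLAN set to DEFAULT_CDB_PLAN or DEFAULT_PLAN. It only generates text and does not connect to a database. SCOPE=BOTH and SID='*' (apply to all RAC instances) are assumptions to adapt to your environment.

```python
def caging_statements(is_cdb: bool, cpu_count: int) -> list[str]:
    """Build the SQL to enable Instance Caging for one database (text only)."""
    if cpu_count < 1:
        raise ValueError("cpu_count must be a positive number of hyperthreads")
    plan = "DEFAULT_CDB_PLAN" if is_cdb else "DEFAULT_PLAN"
    return [
        # Cap the number of hyperthreads each instance may use.
        f"ALTER SYSTEM SET CPU_COUNT = {cpu_count} SCOPE=BOTH SID='*';",
        # A resource plan must be active for the cap to be enforced.
        f"ALTER SYSTEM SET RESOURCE_MANAGER_PLAN = '{plan}' SCOPE=BOTH SID='*';",
    ]


for stmt in caging_statements(is_cdb=True, cpu_count=16):
    print(stmt)
```

Both parameters are dynamic, which is why caging changes take effect without restarting the instance.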

Preventing The “Noisy Neighbor” Problem

In any consolidation environment, one misbehaving database (or users within a database) can impact performance in other databases on the same system. Oracle Resource Manager prevents this “noisy neighbor” problem by restricting the resources one database can consume. CPU resources can also be allocated at the system level using Virtual Machines. However, Oracle Resource Manager is more flexible, simpler to manage, and completely dynamic, with changes taking effect immediately. Oracle recommends using Virtual Machines for isolation rather than for resource management. Excessive use of Virtual Machines (such as 1 database per VM) dramatically increases the administrative burden. It also makes much less efficient use of computing resources, which leads to higher operating costs.

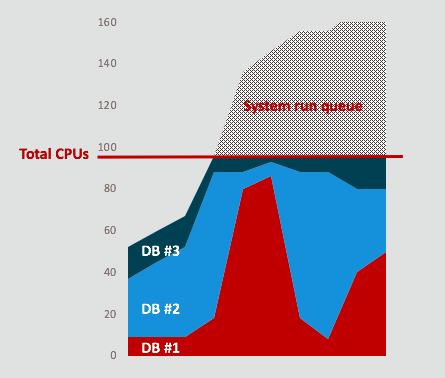

The graph above illustrates what might happen when 3 databases share the same server without Resource Manager. Performance issues in DB #1 (shown in RED) are impacting the other 2 databases, because DB #1 consumes more CPU than it is supposed to. Resource Manager prevents this by limiting the amount of CPU each database can consume.
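To make the graph's scenario concrete, the sketch below compares the CPU received by three databases with and without caging. It is a deliberately simplified model with two stated assumptions. Without Resource Manager, an over-subscribed host is assumed to share CPU in proportion to demand. With caging, each database gets the smaller of its demand and its cap. Database names, demands, and caps are hypothetical.

```python
def without_rm(demands, host_threads):
    """CPU each database gets with no caging (simplified model)."""
    total = sum(demands.values())
    if total <= host_threads:
        return dict(demands)  # no contention: everyone gets what they ask for
    # ASSUMPTION: under contention the OS shares CPU in proportion to demand.
    return {db: host_threads * d / total for db, d in demands.items()}


def with_caging(demands, caps):
    """CPU each database gets when Instance Caging limits it to its cap."""
    return {db: min(d, caps[db]) for db, d in demands.items()}


demands = {"DB1": 80, "DB2": 24, "DB3": 24}  # DB1 is the noisy neighbor
caps = {"DB1": 32, "DB2": 32, "DB3": 32}
print(without_rm(demands, host_threads=96))  # DB2 and DB3 squeezed to 18.0 each
print(with_caging(demands, caps))            # DB2 and DB3 get their full 24
```

In the uncapped case, DB1's excess demand is paid for by DB2 and DB3. With caging, only DB1 is held back.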

Resource Manager “Causing Problems” or “Doing Nothing”

Resource Manager watches resource usage (i.e. “doing nothing”) and takes action to resolve resource conflicts (i.e. “causing problems”) when a conflict occurs. One common complaint is that Resource Manager causes performance “problems”, but this is simply a misunderstanding. Resource Manager LIMITS the amount of CPU a database can consume. If the database tries to exceed that amount, Resource Manager throttles it, and the throttled sessions wait on the following wait event:

- resmgr:cpu quantum

Here “resmgr” stands for Resource Manager, and a CPU quantum is a slice of CPU time. When this wait event appears, Resource Manager is limiting the amount of CPU that the database can use. At the other extreme, we often hear users complain that Resource Manager is “doing nothing”. In that case, Resource Manager is simply watching for resource conflicts. It takes no action as long as resource consumption does not conflict with the rules it has been asked to enforce.
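One way to tell “causing problems” apart from “doing nothing” is to measure how often sampled sessions wait on the throttle event. The sketch below assumes you have already pulled a list of event names from Active Session History samples, where the event is empty for sessions on CPU. The sample data, function names, and the zero threshold are hypothetical and only illustrative.

```python
THROTTLE_EVENT = "resmgr:cpu quantum"


def throttle_ratio(sampled_events):
    """Fraction of samples waiting on the Resource Manager CPU throttle.

    sampled_events: one event name per sample; None means the session was on CPU.
    """
    events = list(sampled_events)
    if not events:
        return 0.0
    return sum(1 for e in events if e == THROTTLE_EVENT) / len(events)


def describe(ratio):
    """Translate the ratio into the two situations discussed above."""
    if ratio == 0:
        return "no throttling: Resource Manager is only watching"
    return f"{ratio:.0%} of samples throttled: database is at its CPU limit"


samples = [None, None, THROTTLE_EVENT, "db file sequential read", THROTTLE_EVENT]
print(describe(throttle_ratio(samples)))
```

A non-zero ratio means the limit is doing its job. Whether that limit is the right size is a separate, capacity-planning question.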

Noisy I/O Neighbors

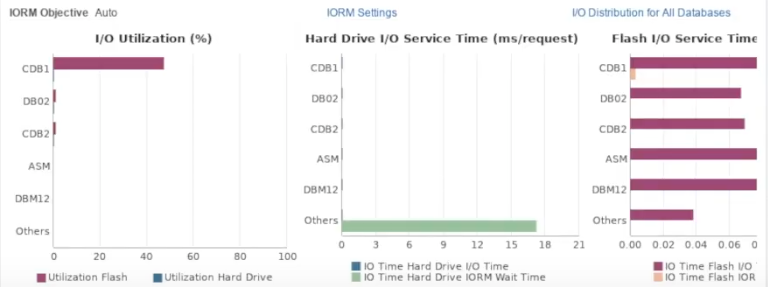

While we often talk about Noisy Neighbors in terms of CPU, the same issue also applies to the I/O layer on any system where multiple databases share storage resources such as Flash or Disk. As seen in the demo video, the Exadata Plug-In for Oracle Enterprise Manager (OEM) shows that the IORM Objective is set to “Auto”. Each database receives a percentage of I/O resources equal to its percentage of CPU resources.
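The outcome shown in the demo can be modelled in a few lines. This sketch reproduces the reported result, with I/O percentage equal to CPU percentage under the IORM objective “auto”. It is not IORM's internal algorithm. It assumes each database's CPU allocation is expressed as its CPU_COUNT, and the database names and values are hypothetical. When the cages partition all of a system's CPU, these fractions are also each database's share of the whole system.

```python
def io_share_from_cpu(cpu_counts):
    """Each database's I/O share, modelled as its share of allocated CPU."""
    total = sum(cpu_counts.values())
    return {db: count / total for db, count in cpu_counts.items()}


# Hypothetical databases caged at CPU_COUNT 32, 16 and 16.
print(io_share_from_cpu({"CDB1": 32, "CDB2": 16, "NONCDB1": 16}))
```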

Using the single CPU_COUNT setting, Database Administrators can easily control both the CPU and I/O resources a database is allowed to consume. Within a Container Database, shares and limits can also control the amount of CPU allocated to each Pluggable Database. This also allows idle CPU and I/O resources to be shared within the Container Database. As we have seen in this demo and the previous demo, changes to CPU resource allocations are entirely dynamic and take effect immediately.
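For the Pluggable Database case, shares and limits can be reasoned about with simple arithmetic. Under full contention, each PDB receives CPU in proportion to its shares. It can never exceed its utilization limit, even when the rest of the Container is idle. The sketch assumes directives shaped like the `shares` and `utilization_limit` arguments of `DBMS_RESOURCE_MANAGER.CREATE_CDB_PLAN_DIRECTIVE`. The PDB names, the values, and the function itself are hypothetical.

```python
def pdb_cpu_bounds(directives, cdb_cpus):
    """Return {pdb: (cpu_under_contention, ceiling)} in CPU hyperthreads.

    directives: {pdb: (shares, utilization_limit_percent)}
    Simplification: CPU a PDB cannot use because of its limit is not
    redistributed to the other PDBs in this model.
    """
    total_shares = sum(shares for shares, _ in directives.values())
    bounds = {}
    for pdb, (shares, limit_pct) in directives.items():
        ceiling = cdb_cpus * limit_pct / 100
        share = cdb_cpus * shares / total_shares
        # A PDB never gets more than its limit, even under contention.
        bounds[pdb] = (min(share, ceiling), ceiling)
    return bounds


# Hypothetical CDB caged at CPU_COUNT = 32 with two PDBs.
print(pdb_cpu_bounds({"PDB1": (3, 100), "PDB2": (1, 50)}, cdb_cpus=32))
```

Here PDB2 receives 8 hyperthreads when everything is busy but can use idle CPU up to 16. That ability to absorb idle capacity is what distinguishes shares and limits from a fixed per-PDB CPU_COUNT.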

See Also…