Originally published September 17, 2019

Oracle Exadata Database Machine X8M has just been announced at Oracle OpenWorld by Larry Ellison (stay tuned for the link), and is available now. Building on the Exadata X8 state-of-the-art hardware and software, the Exadata X8M family adopts two new cutting-edge technologies:

- RDMA over Converged Ethernet (RoCE) network fabric, enabling 100 Gb/sec RDMA, and

- Persistent Memory, adding a new shared storage acceleration tier

Exadata X8M delivers record-breaking performance, attaining 16 Million OLTP read IOPS (8K I/Os) and OLTP I/O latency under 19 microseconds. It’s not just the new components: Exadata’s ability to evolve its architecture to incorporate new technologies, integrated with co-designed software enhancements, multiplies the value of individual component technologies. In Exadata X8M, data movement is accelerated by faster RDMA (via RoCE) and a new persistent memory tier, using database-aware protocols and software.

RDMA over Converged Ethernet (RoCE) Network Fabric

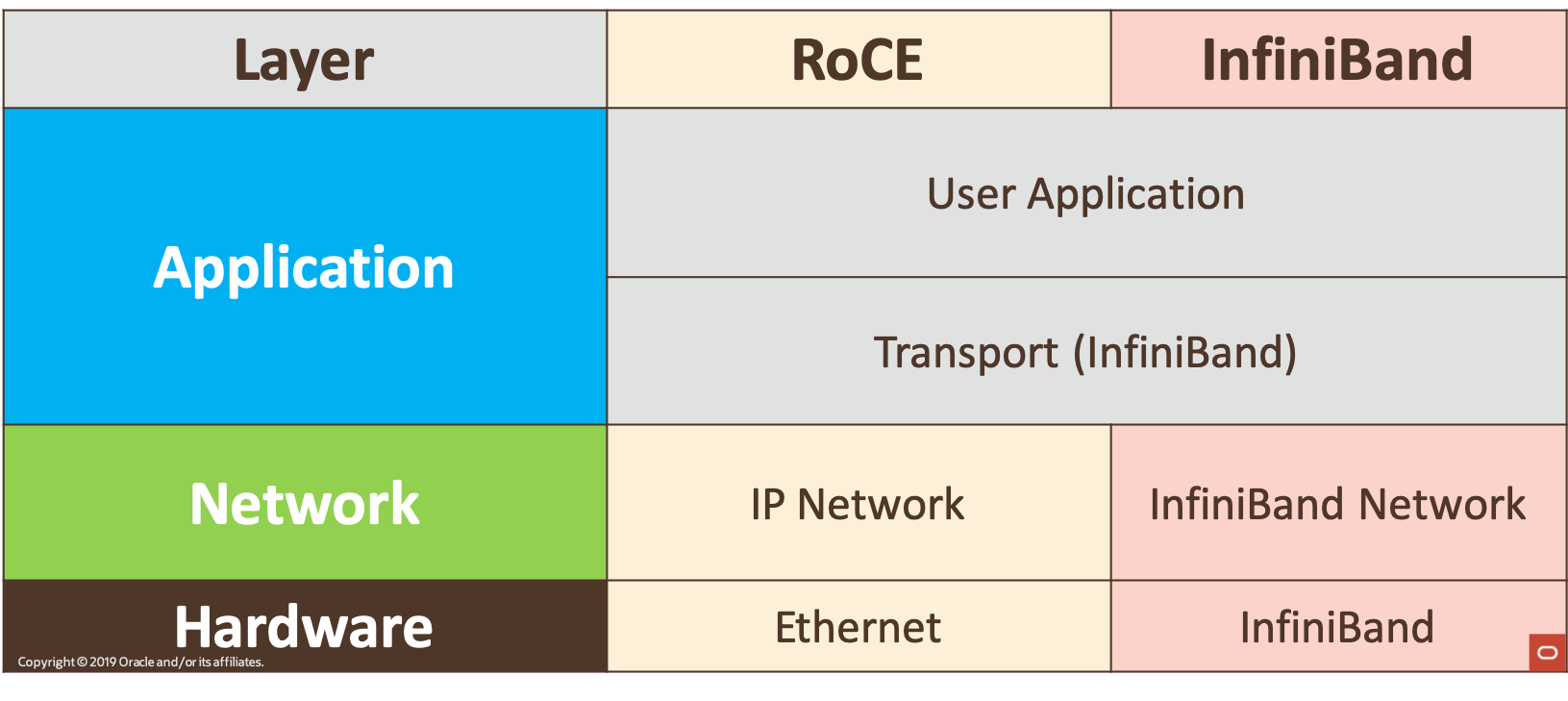

Remote Direct Memory Access (RDMA) is the ability of one computer to access data on a remote computer without OS or CPU involvement: the network card directly reads and writes memory with no extra copying or buffering, resulting in very low latency. RDMA was introduced to Exadata with InfiniBand and is a foundational part of Exadata’s high-performance architecture. RDMA enables several unique Exadata features, such as Direct-to-Wire Protocol and Smart Fusion Block Transfer.

RDMA over Converged Ethernet (RoCE) is a set of protocols defined by an open consortium, developed as open source, and maintained in upstream Linux. RoCE’s protocols enable InfiniBand RDMA software to run on top of Ethernet. This allows the same software to be used at the upper levels of the network protocol stack, while the InfiniBand packets are transported across Ethernet as UDP over IP at the lower level. As the API infrastructure is shared between InfiniBand and Ethernet, all existing InfiniBand RDMA benefits are also available on RoCE, including over a decade’s worth of performance engineering on Exadata.

Fig.1 Exadata X8M Shared Network Heritage

Exadata RoCE Network Fabric provides transparent prioritization of traffic by type, ensuring the best performance for critical messages requiring the lowest latency. Low-latency messages, such as cluster heartbeats, transaction commits and cache fusion, are not slowed by higher-throughput messages (such as backups, reporting or batch messages).
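To make the idea of prioritization by traffic type concrete, here is a minimal sketch of a link that always serves latency-sensitive messages before bulk ones. It is an illustration only, not how the Exadata fabric is implemented: the `PrioritizedLink` class, the `PRIORITY` table and its values are all hypothetical names chosen for this example.

```python
import heapq
from itertools import count

# Hypothetical priority classes: a lower number is served first.
PRIORITY = {
    "heartbeat": 0, "commit": 0, "cache_fusion": 0,   # latency-sensitive
    "backup": 1, "reporting": 1, "batch": 1,          # throughput-oriented
}

class PrioritizedLink:
    """Toy model of a link that drains messages in priority order."""

    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker keeps FIFO order within a class

    def send(self, kind, payload):
        heapq.heappush(self._heap, (PRIORITY[kind], next(self._seq), kind, payload))

    def drain(self):
        while self._heap:
            _, _, kind, payload = heapq.heappop(self._heap)
            yield kind, payload

link = PrioritizedLink()
link.send("backup", "block-1")
link.send("commit", "txn-42")
link.send("backup", "block-2")
link.send("heartbeat", "node-1")
order = [kind for kind, _ in link.drain()]
print(order)
```

In this toy run the commit and heartbeat messages leave the queue before either backup block, even though a backup block was queued first.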

Exadata RoCE Network Fabric also optimizes communications by ensuring packets are delivered on the first try without costly retransmissions. Exadata RoCE avoids packet drops by using RoCE protocols to manage the traffic flow, asking the sender to slow down when the receiver’s buffer is full.
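The flow-control behavior described above can be sketched as a small simulation: a sender checks whether the receiver has buffer space before each packet and waits instead of sending into a full buffer, so nothing is dropped or retransmitted. This is a simplified model under assumed parameters, not the actual RoCE mechanism; `Receiver`, `transmit`, `burst` and `drain_per_tick` are hypothetical names for this example.

```python
from collections import deque

class Receiver:
    """Toy receiver with a bounded buffer."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.buffer = deque()

    def has_room(self):
        return len(self.buffer) < self.capacity

    def accept(self, packet):
        self.buffer.append(packet)

    def consume(self, n):
        # The receiver processes up to n buffered packets per tick.
        for _ in range(min(n, len(self.buffer))):
            self.buffer.popleft()

def transmit(packets, receiver, burst=2, drain_per_tick=1):
    """Send all packets; return (delivered, pauses). Nothing is dropped."""
    pending = deque(packets)
    delivered = 0
    pauses = 0
    while pending:
        for _ in range(burst):
            if not pending:
                break
            if not receiver.has_room():
                pauses += 1  # receiver is full: sender slows down and waits
                break
            receiver.accept(pending.popleft())
            delivered += 1
        receiver.consume(drain_per_tick)
    return delivered, pauses

delivered, pauses = transmit(range(6), Receiver(capacity=2))
print(delivered, pauses)
```

Every packet is delivered exactly once; the cost of a fast sender is a few pauses rather than drops followed by retransmissions.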

Through smart Exadata System Software 19.3.0, Exadata X8M also practically eliminates database stalls due to failure by immediately detecting server failures. Server failure detection normally requires a long timeout to avoid false server evictions from the cluster, because it is hard to distinguish between a server failure and a slow response to the heartbeat due to a busy CPU. Exadata X8M Instant Failure Detection is not affected by OS or CPU response times, as it uses hardware-based RDMA to quickly confirm server response. Four RDMA reads are sent to the suspect server across all combinations of source/target ports. If all four reads fail, the server is evicted from the cluster. If any port responds, the hardware is available, even if the software is slow.

Fig.2 Exadata X8M Instant Failure Detection
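The eviction rule described above (four reads, one per source/target port combination, evict only if all fail) can be sketched as follows. This is a minimal illustration, not Exadata code: `rdma_read` is a hypothetical callable supplied by the caller that returns `True` when a read on a given port pair succeeds, and the port numbering is assumed.

```python
from itertools import product

def should_evict(rdma_read, source_ports=(0, 1), target_ports=(0, 1)):
    """Return True only if every source/target port combination fails.

    rdma_read(src, dst) is a hypothetical probe returning True on success.
    """
    # Two source ports x two target ports gives the four reads.
    results = [rdma_read(src, dst) for src, dst in product(source_ports, target_ports)]
    return not any(results)

# A server whose hardware is down: no path answers.
dead = should_evict(lambda src, dst: False)

# A busy server with one working path: hardware is alive, so no eviction.
busy = should_evict(lambda src, dst: (src, dst) == (1, 0))

print(dead, busy)
```

Because the decision depends only on whether the hardware answers, a server that is merely slow in software is not evicted.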

Persistent Memory Acceleration

Persistent memory is a new silicon technology, adding a distinct storage tier of performance, capacity and price between DRAM and Flash. For the Exadata X8M release, 1.5 TB of persistent memory is added to High Capacity and Extreme Flash Storage Servers. Persistent memory enables reads at memory speed, and ensures writes survive any power failures that may occur.

In combination with the new RoCE 100Gb/s Network Fabric, smart Exadata System Software is able to fully leverage the benefits of persistent memory on remote storage servers via specialized data and commit accelerators.

Persistent Memory Data Accelerator

Exadata X8M Storage Servers transparently incorporate persistent memory in front of flash memory. This lets Oracle Database use RDMA instead of I/O to read remote persistent memory. By bypassing the network and I/O software stacks, interrupts and context switches, latency is reduced from 250 microseconds to less than 19 microseconds, an improvement of over 10x. Adding persistent memory to the storage tier makes the aggregate performance across all storage servers dynamically available to any database on any server. Also, because only the hottest data is moved into persistent memory, persistent memory capacity is better utilized. For fault tolerance, Exadata’s smart software mirrors this cached data across the storage servers’ persistent memory tiers, protecting data from hardware failures.

Fig.3 Persistent Memory Data Accelerator
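As a quick check of the "over 10x" figure, the two latencies quoted above can be compared directly. The variable names are only for this example, and since the RDMA figure is stated as "less than 19 microseconds", the result is a lower bound.

```python
io_latency_us = 250    # read latency via the conventional I/O path, from the text
rdma_latency_us = 19   # upper bound on read latency via RDMA, from the text

speedup = io_latency_us / rdma_latency_us
print(f"at least {speedup:.1f}x lower latency")
```

The ratio comes out at roughly 13x, which is consistent with the "over 10x" claim.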

Persistent Memory Commit Accelerator

Consistent low latency for redo log writes is critical for the performance of OLTP databases. Transaction commits can be completed only when redo logs are persisted, that is, permanently written to storage. With the persistent memory commit accelerator, Oracle Database 19c is able to place the redo log record directly in persistent memory on multiple storage servers using RDMA. Because the database uses RDMA to write the redo logs, redo log writes are up to 8x faster. Because the redo log is persisted on multiple storage servers, it is also resilient to the failure of a storage server.

The persistent memory log on the storage server is not the database’s entire redo log; it contains only the recently written records. Therefore, hundreds of databases can share a pool of buffers, enabling consolidation with consistent performance.

Fig.4 Persistent Memory Commit Accelerator
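The two ideas above, a commit that completes only once the record sits on multiple storage servers, and a small shared buffer that holds only recent records, can be sketched together. This is a loose illustration rather than the actual PMEMLog design: `PmemLogBuffer`, `rdma_write`, `commit`, the slot count and the number of copies are all hypothetical, and the sketch assumes older records have already been written to the regular redo log before their slots are reused.

```python
from collections import deque

class PmemLogBuffer:
    """Hypothetical stand-in for one storage server's persistent memory log."""

    def __init__(self, slots):
        # Fixed number of slots; the oldest record is displaced when full.
        # Assumption: displaced records are already in the regular redo log.
        self.records = deque(maxlen=slots)

    def rdma_write(self, db_name, record):
        self.records.append((db_name, record))
        return True  # acknowledge that the record is persisted

def commit(db_name, record, servers, copies=2):
    """Complete the commit only after `copies` servers hold the record."""
    acks = sum(1 for server in servers[:copies] if server.rdma_write(db_name, record))
    return acks == copies

servers = [PmemLogBuffer(slots=4) for _ in range(3)]

# Two databases share the same small pool of buffers.
results = [
    commit(db, f"redo-{i}", servers)
    for i in range(3)
    for db in ("sales", "hr")
]
print(all(results), len(servers[0].records), len(servers[1].records))
```

Six records were committed, but each server keeps only the four most recent, which is why a small pool can be shared by many databases.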

Security and management are automated. PMEMCache and PMEMLog (the software layers above the persistent memory hardware) are configured automatically on installation. Persistent memory is only accessible to databases using database access controls, so no OS or local access is available, ensuring end-to-end security of data. Hardware monitoring and fault management are performed via ILOM and include the persistent memory hardware modules. When storage servers are decommissioned or reinstalled, a secure erase is automatically run on the underlying persistent memory modules. This makes the addition of persistent memory in Exadata X8M effectively transparent.

Summary

Recall that Exadata X8 was released in April 2019 and hit 6.57 Million 8K Read IOPS during benchmarking, breaking 6 Million IOPS within a single rack for the first time. This was a huge milestone, and confirmed that, year over year, Exadata continues to improve the performance, cost-effectiveness, security and availability of the highest performing, most strategic platform for Oracle Database.

Exadata X8M continues this great tradition of seamlessly integrating new technologies, leveraging unique co-designed hardware and database-aware software, to further increase the advantages of the flagship platform for running Oracle Database. While each new technology is cutting edge, over a decade of engineering excellence and thousands of engineer-years of experience ensure that your data is safe and secure, that performance is consistent at the highest rate, and that management stays easy, all for the same price and without requiring any application changes.

Exadata X8M significantly benefits OLTP, Analytics and mixed workloads. For Analytics and mixed workloads, the combination of persistent memory and RDMA frees up CPU cycles on the storage servers, leaving more processing power for smart scan operations, and the 100 Gb/sec RoCE network gives higher net throughput for operations that need it. For OLTP, the near-instant retrieval of cached data from storage, the near-instant write of commit records and the extreme increase in IOPS make Exadata X8M the best platform to run Oracle Database.

For more information on Exadata X8M, see oracle.com/exadata.

Footnote

By the way, the (1) on the first image reads: “These are real-world end-to-end performance figures measured running SQL workloads with standard 8K database I/O sizes inside a single rack Exadata system, unlike storage vendor performance figures, which are based on small I/O sizes and low-level I/O tools and are therefore many times higher than can be achieved from realistic SQL workloads. Exadata’s performance on real database workloads is orders of magnitude faster than traditional storage array architectures, and is much faster than current all-flash storage arrays, whose architecture bottlenecks on flash throughput.”