Originally published May 19, 2021

An incredible amount of engineering work has gone into the latest generation of Exadata, focused on maintaining and extending our lead in database performance by combining Intel Optane Persistent Memory (PMEM) with Remote Direct Memory Access (RDMA). In this article, we concentrate on advancements in the 3 primary areas of database performance and scalability that are critical to transactional applications:

- Reading of Individual Database Blocks from Storage

- Writing to the Database Transaction Log

- Transfer of Database Blocks Across the Cluster Interconnect

These latency-sensitive operations deep within the Oracle Database have been greatly improved once again, putting Exadata yet another generation ahead of the competition in both on-premises and Cloud deployments.

Transaction Processing

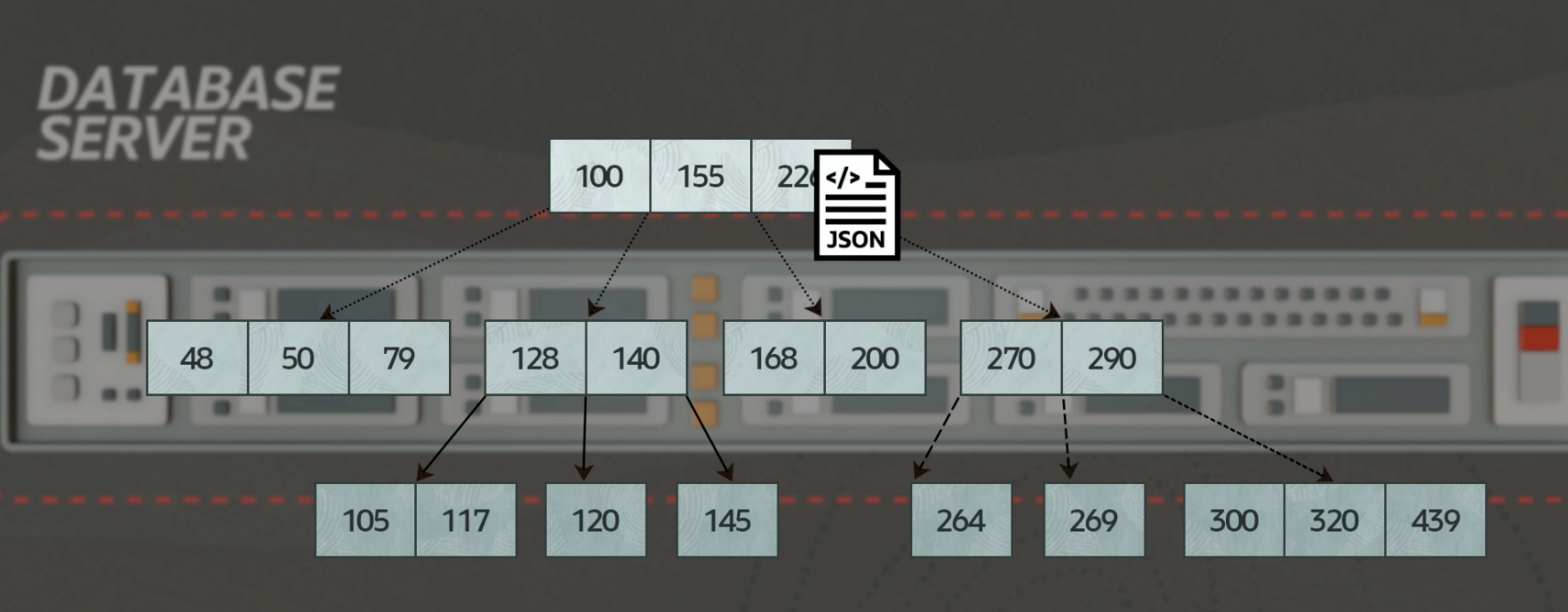

The example illustrated above shows what happens in one of the simplest and most common database transactions: inserting a web shopping cart into a database. The application in this example models its data using JSON (JavaScript Object Notation), so all of the complexity of the data is encapsulated in a single object that simply needs to be inserted into the database. This application design minimizes back-and-forth interactions between the application and the database, and Exadata technology accelerates it even further. The same fundamental behavior occurs with JSON objects and with relational tables, because indexes work essentially the same way in either case. Inserting the JSON object includes updating the index, and some of the index blocks need to be read from storage. Finally, the transaction must be committed to the database and made durable, as we will show.
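To make the insert-plus-index-maintenance step concrete, here is a minimal in-memory sketch in Python. Everything in it is hypothetical: `CartTable`, the `customer_id` field, and the sorted Python list standing in for an index are illustrative assumptions, not how Oracle Database stores JSON or implements its B-tree indexes. It only shows that storing the cart as one document is a single operation, while every insert still has to maintain the index.

```python
import bisect
import json

class CartTable:
    """Hypothetical toy table: JSON documents plus one sorted secondary index."""
    def __init__(self):
        self.rows = {}        # rowid -> JSON text (the whole cart as one object)
        self.index = []       # sorted list of (customer_id, rowid) entries
        self.next_rowid = 1

    def insert(self, cart: dict) -> int:
        rowid = self.next_rowid
        self.next_rowid += 1
        self.rows[rowid] = json.dumps(cart)                   # one object, one insert
        bisect.insort(self.index, (cart["customer_id"], rowid))  # index maintenance
        return rowid

    def find_by_customer(self, customer_id: int) -> list:
        # Rowids start at 1, so (customer_id, 0) sorts before every matching entry.
        start = bisect.bisect_left(self.index, (customer_id, 0))
        results = []
        for cid, rowid in self.index[start:]:
            if cid != customer_id:
                break
            results.append(json.loads(self.rows[rowid]))
        return results

cart = {"customer_id": 42, "items": [{"sku": "A-100", "qty": 2}, {"sku": "B-7", "qty": 1}]}
table = CartTable()
table.insert(cart)
print(table.find_by_customer(42))
```

A real index lives in database blocks, which is why, as the paragraph above notes, inserting a document can require reading index blocks from storage.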

Latency is Key

Input/Output Operations Per Second (IOPS) is an important measure of overall system-wide performance, but latency (response time) is critical to the performance of each individual transaction. Exadata delivers millions of IOPS, dramatically more total throughput than competing platforms. For example, the smallest Exadata X8M available in the Oracle Cloud delivers 11.5 times more IOPS than Oracle Database running on the latest AWS RDS instances (3,000,000 IOPS vs. 260,000 IOPS for AWS RDS). Exadata Cloud Service also scales to many times this size simply by adding more Database Servers and Storage Servers. Even at this scale, the latency of individual database operations, including single block reads and redo writes during commit processing, remains critical to transaction performance.
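The relationship between throughput and latency can be checked with a little arithmetic. The sketch below reproduces the 11.5x ratio from the figures quoted above and applies Little's law (in-flight requests = throughput × latency) to show that IOPS and latency are separate quantities. Pairing 3,000,000 IOPS with the 19µsec read latency cited later in this article is purely an illustrative assumption; the article does not state that both figures were measured together.

```python
def iops_ratio(system_a_iops: float, system_b_iops: float) -> float:
    """How many times more IOPS system A delivers than system B."""
    return system_a_iops / system_b_iops

def in_flight_ios(iops: float, latency_us: float) -> float:
    """Little's law: average concurrent I/Os = arrival rate x time in system."""
    return iops * latency_us / 1_000_000  # microseconds -> seconds

ratio = iops_ratio(3_000_000, 260_000)
print(f"{ratio:.1f}x more IOPS")       # 11.5x more IOPS
print(in_flight_ios(3_000_000, 19))    # 57.0 (hypothetical pairing of the two figures)
```

High IOPS comes from many I/Os being in flight at once; a single transaction whose reads depend on each other still waits the full latency of each one.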

Single Block Reads (<19µsec)

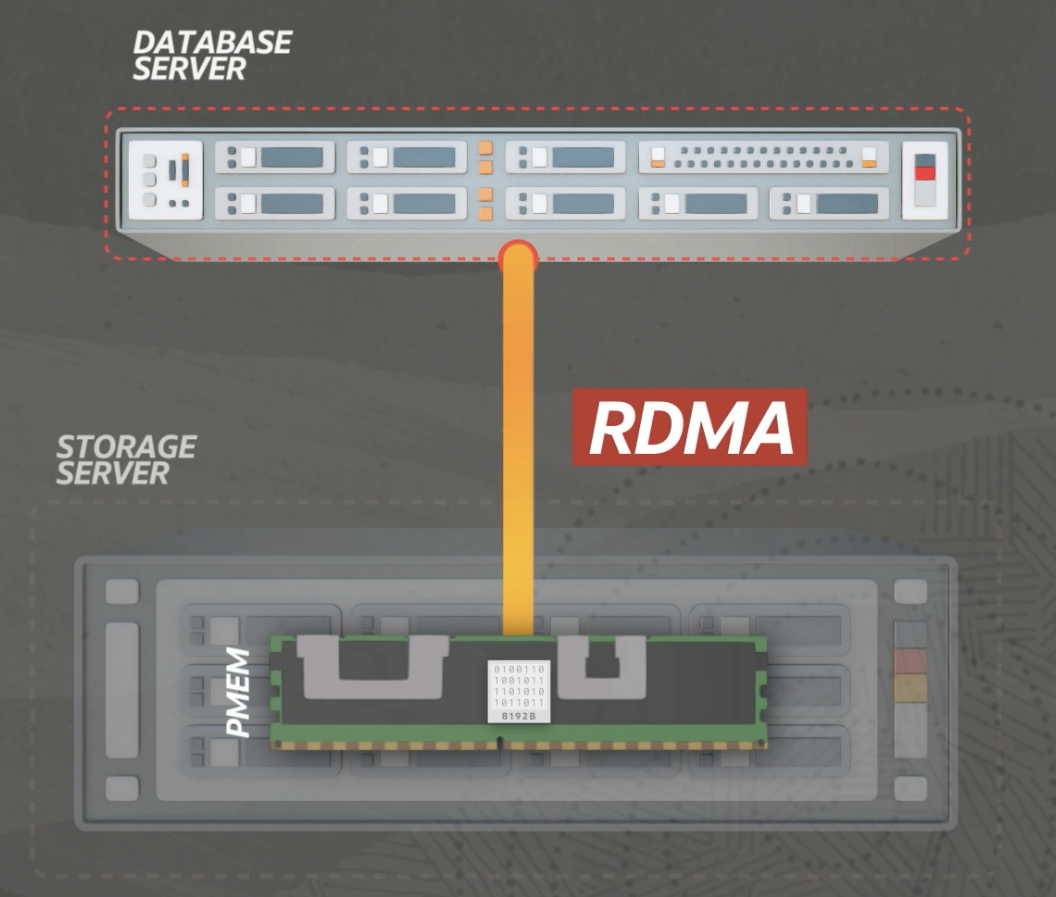

The first time-critical operation to consider is the single block read. As the transaction example above shows, databases are often too large to fit into memory, so some blocks of data must be read from storage. Even a small amount of I/O can hurt transaction performance if those I/O operations are not fast enough. The graphic above shows the high-speed connection between Exadata Database Servers and Storage Servers, which enables the software to use Remote Direct Memory Access (RDMA).
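A simple response-time model shows why even a few reads matter. In the sketch below, a transaction's time is its CPU time plus its dependent single block reads, issued one after another. The 100µsec of CPU time, the five reads, and the 250µsec comparison latency are arbitrary placeholders chosen for illustration, not measurements of Exadata or of any other platform; only the 19µsec figure comes from this article.

```python
def transaction_time_us(cpu_us: float, serial_reads: int, read_latency_us: float) -> float:
    """Response time when each read must finish before the next one starts."""
    return cpu_us + serial_reads * read_latency_us

def io_share(cpu_us: float, serial_reads: int, read_latency_us: float) -> float:
    """Fraction of the response time spent waiting on storage."""
    total = transaction_time_us(cpu_us, serial_reads, read_latency_us)
    return serial_reads * read_latency_us / total

# Hypothetical transaction: 100 usec of CPU and 5 index-block reads.
fast = transaction_time_us(100, 5, 19)    # 195 usec
slow = transaction_time_us(100, 5, 250)   # 1350 usec (placeholder latency)
print(fast, slow, round(io_share(100, 5, 250), 2))
```

With the placeholder numbers, storage waits dominate the slower case even though the transaction issues only five reads.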

Oracle Database on Exadata reads single blocks of data from storage in less than 19µsec. This can be easily measured via Automatic Workload Repository (AWR) in the “cell single block physical read” metric. The equivalent AWR metric is “db file sequential read” on non-Exadata systems. There is simply no other solution available that provides this level of performance along with data integrity and fault resilience.
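Besides reading the AWR report, you can compute the same kind of average yourself from two snapshots of the cumulative wait statistics in the `V$SYSTEM_EVENT` view (columns `EVENT`, `TOTAL_WAITS`, `TIME_WAITED_MICRO`), much as AWR reports the difference between two snapshots. The sketch below keeps the query as a string and does the arithmetic on plain dictionaries, so it runs without a database; fetching the rows (for example with the python-oracledb driver) is left out, and the sample numbers are invented. The `LIKE` pattern is an assumption meant to also catch any suffixed variants of the event name that a given release may report.

```python
# Cumulative wait statistics since instance startup; run it twice and diff.
WAIT_EVENT_SQL = """
SELECT event, total_waits, time_waited_micro
  FROM v$system_event
 WHERE event LIKE 'cell single block physical read%'
    OR event IN ('db file sequential read', 'log file sync', 'log file parallel write')
"""

def snapshot(rows):
    """Turn (event, total_waits, time_waited_micro) rows into a dict."""
    return {event: (waits, micros) for event, waits, micros in rows}

def average_wait_us(begin: dict, end: dict) -> dict:
    """Average wait per event over the interval between two snapshots."""
    averages = {}
    for event, (end_waits, end_micros) in end.items():
        begin_waits, begin_micros = begin.get(event, (0, 0))
        waits = end_waits - begin_waits
        if waits > 0:  # skip events with no activity in the interval
            averages[event] = (end_micros - begin_micros) / waits
    return averages

# Invented sample rows standing in for two query results.
t0 = snapshot([("cell single block physical read", 1_000, 18_000)])
t1 = snapshot([("cell single block physical read", 3_000, 54_000)])
print(average_wait_us(t0, t1))  # {'cell single block physical read': 18.0}
```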

Intel Optane Persistent Memory (PMEM) is used as a caching layer in Exadata, specifically targeted at caching blocks that are the subject of single block reads, as well as caching writes to the database transaction log. Caching data in Persistent Memory allows the Oracle Database to access it much faster than any storage technology that uses a request/response processing model. The Oracle Database software does not “request” a block from storage; instead, the database reads the contents of PMEM directly using RDMA and retrieves the blocks it needs. The Exadata Storage Server software is responsible for identifying the “hot” blocks of data and placing them into PMEM.
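The division of labor described above, where the storage software decides what is hot and the database reads cached blocks without issuing a request, can be modeled in a few lines. This is a toy sketch under stated assumptions: the class and function names are hypothetical, "hot" simply means accessed at least `hot_threshold` times through the request path, eviction is oldest-promoted-first, and a Python dictionary stands in for RDMA-readable PMEM. Exadata's actual caching policies are not described in this article and are not reproduced here.

```python
from collections import Counter, OrderedDict

class StorageServer:
    """Hypothetical storage server that promotes hot blocks into a PMEM cache."""
    def __init__(self, disk: dict, pmem_capacity: int, hot_threshold: int):
        self.disk = disk
        self.pmem = OrderedDict()        # stand-in for RDMA-readable PMEM
        self.capacity = pmem_capacity
        self.threshold = hot_threshold
        self.access_counts = Counter()

    def serve_request(self, block_id):
        """Request/response path: the server's software runs and tracks heat."""
        self.access_counts[block_id] += 1
        data = self.disk[block_id]
        if self.access_counts[block_id] >= self.threshold:
            self._promote(block_id, data)
        return data

    def _promote(self, block_id, data):
        self.pmem[block_id] = data
        self.pmem.move_to_end(block_id)
        if len(self.pmem) > self.capacity:
            self.pmem.popitem(last=False)  # evict the oldest promoted block

def db_read(server: StorageServer, block_id):
    """Database side: read PMEM directly if the block is cached, else send a request."""
    if block_id in server.pmem:
        # Direct reads bypass the server's code, so they do not update access_counts.
        return server.pmem[block_id], "pmem-direct"
    return server.serve_request(block_id), "request"

disk = {1: "block-1", 2: "block-2"}
server = StorageServer(disk, pmem_capacity=1, hot_threshold=2)
for _ in range(3):
    print(db_read(server, 1))
```

The first two reads go through the request path; the second one makes block 1 hot, so the third is served directly from the simulated PMEM.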

Accelerating Commits with Persistent Memory (~25µsec)

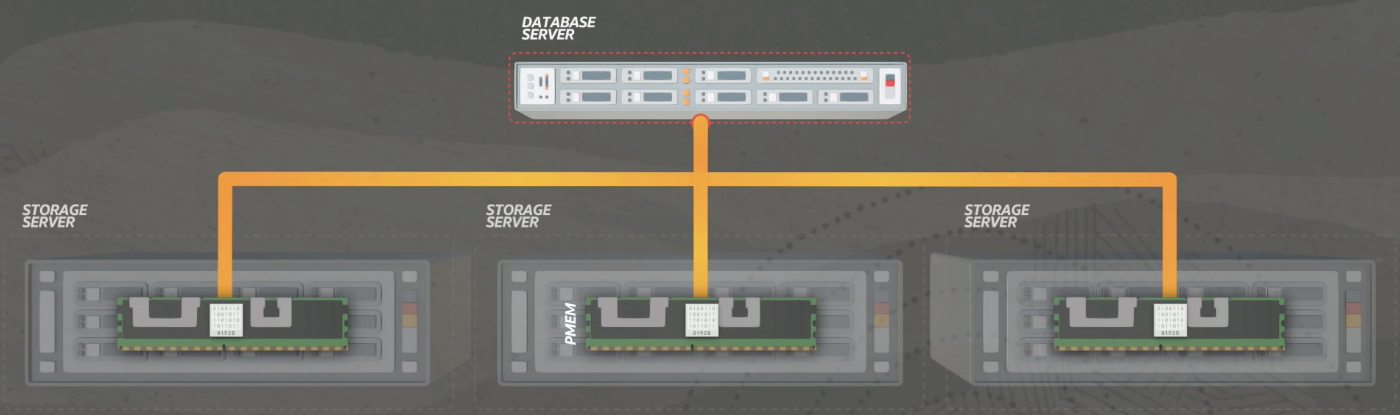

The second time-critical operation for transaction processing that Exadata addresses is writing to the database transaction log, specifically during COMMIT processing. Writes to the transaction log are always critical, but especially when they contain commit markers. What matters is not only the object being written (redo blocks) but also the context in which the write happens (during a commit). Oracle has built numerous optimizations into the database to accelerate the transaction log, and the latest innovation is the use of Persistent Memory to accelerate commit processing. As shown above, the transaction log (redo blocks) is written to 3 Storage Servers in unison.

The latency of writes to the database transaction log varies with the amount of data being written, but these writes generally complete in about 25µsec. The critical redo log write operations can be seen in the AWR metrics “log file sync” and “log file parallel write” in any Oracle Enterprise Edition Database. Transaction processing inside an Oracle Database occurs primarily in memory, but the transaction log is persisted to storage for durability, and in a HIGH redundancy configuration (standard in Oracle Cloud) Exadata persists these writes to 3 Storage Servers simultaneously. This operation has only become more critical as processing volumes have increased, and Exadata delivers innovations such as the Persistent Memory Commit Accelerator to keep pace with modern transaction processing applications.
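Writing the redo "in unison" matters because parallel mirror writes cost roughly the slowest single write rather than the sum of all three. The sketch below assumes, for illustration, that a commit is acknowledged only after all three mirrors confirm the write; `RedoMirror` and the per-mirror latencies are hypothetical, and latency is returned as a number rather than measured so the example stays deterministic.

```python
from concurrent.futures import ThreadPoolExecutor

class RedoMirror:
    """Hypothetical stand-in for one Storage Server's persistent redo area."""
    def __init__(self, name: str, write_latency_us: float):
        self.name = name
        self.write_latency_us = write_latency_us
        self.log = []

    def write(self, redo: bytes) -> float:
        self.log.append(redo)          # simulated durable write
        return self.write_latency_us   # simulated latency; nothing actually sleeps

def commit(mirrors, redo: bytes):
    """Send the redo to every mirror at once and wait for all acknowledgments."""
    with ThreadPoolExecutor(max_workers=len(mirrors)) as pool:
        latencies = list(pool.map(lambda m: m.write(redo), mirrors))
    parallel_us = max(latencies)   # issued together: wait for the slowest mirror
    serial_us = sum(latencies)     # what one-after-another writes would cost
    return parallel_us, serial_us

mirrors = [RedoMirror("cell1", 22), RedoMirror("cell2", 25), RedoMirror("cell3", 24)]
print(commit(mirrors, b"commit marker for txn 1001"))  # (25, 71)
```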

Remote Direct Memory Access for Cluster Interconnect (~10µsec)

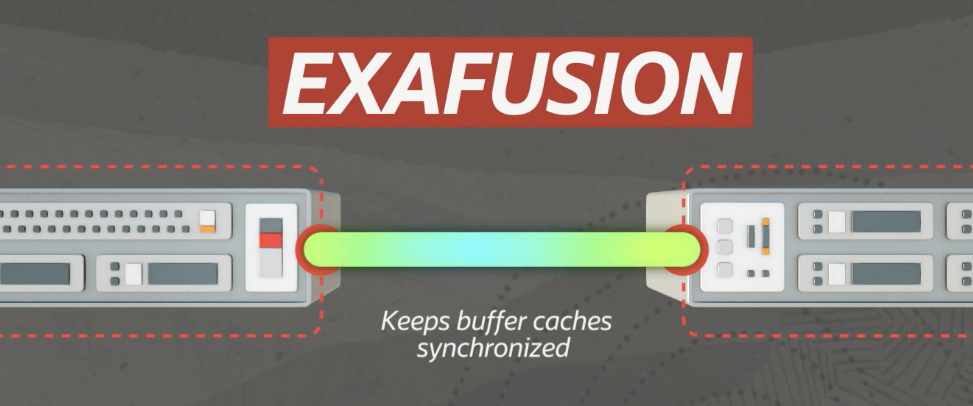

The third time-critical operation to consider is the transfer of blocks across the cluster interconnect. Remote Direct Memory Access (RDMA) is the technology that allows a process on one computer to directly access the memory of another computer; Exadata uses it for the single block reads and transaction log writes discussed above. RDMA is also the basis for the fastest cluster interconnect available and is the foundation of the Exafusion technology that keeps Oracle Database buffer caches synchronized on Exadata.
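The core idea behind Cache Fusion, shipping a block from another instance's buffer cache over the interconnect instead of reading it from storage, can be sketched as below. This is a deliberately simplified, hypothetical model: real Cache Fusion is coordinated by the Global Cache Service with resource mastering, lock modes, and consistent-read copies, none of which appear here, and the requester does not scan its peers the way this loop does.

```python
class Instance:
    """Hypothetical database instance with a private buffer cache."""
    def __init__(self, name: str):
        self.name = name
        self.buffer_cache = {}

def get_block(requester: Instance, peers, storage: dict, block_id: int):
    """Return (data, source): local cache, a peer's cache, or storage."""
    if block_id in requester.buffer_cache:
        return requester.buffer_cache[block_id], "local"
    for peer in peers:
        if block_id in peer.buffer_cache:
            data = peer.buffer_cache[block_id]   # block shipped over the interconnect
            requester.buffer_cache[block_id] = data
            return data, f"interconnect:{peer.name}"
    data = storage[block_id]                     # slowest path: read from storage
    requester.buffer_cache[block_id] = data
    return data, "storage"

node1, node2 = Instance("node1"), Instance("node2")
storage = {7: "index leaf block"}
print(get_block(node1, [node2], storage, 7))  # ('index leaf block', 'storage')
print(get_block(node2, [node1], storage, 7))  # ('index leaf block', 'interconnect:node1')
print(get_block(node2, [node1], storage, 7))  # ('index leaf block', 'local')
```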

The Oracle Cache Fusion protocol is known as Exafusion on the Exadata platform because of enhancements that provide even greater performance. Oracle Database on Exadata uses RDMA to access data in other nodes in approximately 10µsec, providing greater performance and scalability than other platforms. Traditional networking technology used in non-Oracle clouds sees latencies in the range of 500 to 1,000µsec, 50 to 100 times slower.
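The 50 to 100 times figure follows directly from the quoted latencies, and the gap compounds when a transaction needs several remote blocks. The block count of 20 below is an arbitrary illustration, and the transfers are assumed to happen one after another.

```python
RDMA_US = 10                    # ~10 usec per block on Exadata (from the text)
TRADITIONAL_US = (500, 1_000)   # 500 to 1,000 usec range (from the text)

def slowdown(fast_us: float, slow_us: float) -> float:
    """How many times slower the slow path is than the fast one."""
    return slow_us / fast_us

def serial_transfer_us(blocks: int, per_block_us: float) -> float:
    """Total time when remote block transfers happen one after another."""
    return blocks * per_block_us

print([slowdown(RDMA_US, t) for t in TRADITIONAL_US])          # [50.0, 100.0]
blocks = 20  # hypothetical number of remote block transfers in one transaction
print(serial_transfer_us(blocks, RDMA_US))                      # 200
print([serial_transfer_us(blocks, t) for t in TRADITIONAL_US])  # [10000, 20000]
```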

Further Reading

This article covers the end-to-end processing in Exadata and unique innovations that deliver the highest levels of performance possible for transaction processing. This builds upon the same technology covered in our article on Persistent Memory Magic in Exadata.