The Oracle Cloud Infrastructure (OCI) ecosystem is open and offers several standardised approaches to integrating services, which makes life much simpler for developers and cloud architects.

The OCI Service Broker for Kubernetes used to be the de facto way to make Oracle Container Engine for Kubernetes (OKE) interact with OCI. Oracle now recommends the OCI Service Operator for Kubernetes (OSOK) instead, to interact with Oracle Cloud Infrastructure services using the Kubernetes API and Kubernetes tooling. The documentation is the place to start, but before that, get a coffee or a cup of tea, spend 10 minutes reading this article, and you’ll be good to go.


Well, more or less. This blog is just a complement to the GitHub project, which really contains everything you need to know. What I offer you here is just a head start 🙂.

In this post, I’ll connect Oracle Container Engine with the OCI Streaming service in a secure way. The OCI Streaming service is a real-time, serverless, Apache Kafka-compatible event streaming platform for developers and data scientists.

OSOK is based on the Operator Framework, an open-source toolkit used to manage Operators in an automated and scalable way.

A fundamental concept is that of the Operator.

And what is an operator?

To explain what an operator is, we need to start with what a Custom Resource means in Kubernetes.

The documentation is straightforward: a custom resource is simply an extension of the Kubernetes API that is not necessarily available by default, i.e., it’s a resource that isn’t built into the K8s core. To define the new resource type, we create a Custom Resource Definition (CRD) object, which is what actually creates a new custom resource with the name and schema that you specify. Once the CRD exists, you can manage the lifecycle of its objects with kubectl, just as you would any built-in Kubernetes resource such as volumes or pods.
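To make the idea of a CRD more concrete, here is a minimal sketch in Python that builds a CRD manifest and prints it as JSON (kubectl accepts JSON manifests as well as YAML). The group example.com, the kind Widget and the size field are hypothetical names chosen for illustration only; they have nothing to do with OSOK.

```python
import json


def build_crd(group: str, kind: str, plural: str) -> dict:
    """Return a minimal CustomResourceDefinition manifest as a dict."""
    return {
        "apiVersion": "apiextensions.k8s.io/v1",
        "kind": "CustomResourceDefinition",
        # Kubernetes requires the CRD name to be <plural>.<group>.
        "metadata": {"name": f"{plural}.{group}"},
        "spec": {
            "group": group,
            "scope": "Namespaced",
            "names": {"kind": kind, "plural": plural, "singular": kind.lower()},
            "versions": [
                {
                    "name": "v1",
                    "served": True,
                    "storage": True,
                    # The schema describes what a valid object looks like.
                    "schema": {
                        "openAPIV3Schema": {
                            "type": "object",
                            "properties": {
                                "spec": {
                                    "type": "object",
                                    # "size" is a hypothetical field.
                                    "properties": {"size": {"type": "integer"}},
                                }
                            },
                        }
                    },
                }
            ],
        },
    }


# Hypothetical example values, not part of OSOK.
crd = build_crd("example.com", "Widget", "widgets")
print(json.dumps(crd, indent=2))
```

Saving the output to a file and applying it with kubectl apply -f would register the new resource type, after which kubectl get widgets should work like any other resource query.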

Without a controller, a resource will not do anything. Each resource, whether custom or built in, has a specific controller responsible for managing and monitoring the status of each of its objects.

The controller is basically code running in a control loop, listening for specific events that affect the type of resource it is looking after.

The job of the controller is to continuously watch for changes or events associated with the resource and react to them, moving the actual state towards the desired state.
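The control loop idea can be sketched in a few lines of Python. This is a toy illustration, not how OSOK or any real controller is implemented: FakeCluster is a hypothetical in-memory stand-in for the real world, and each call to reconcile plays the role of one event being handled.

```python
from dataclasses import dataclass, field


@dataclass
class FakeCluster:
    """Hypothetical stand-in for the real system a controller manages."""
    running: dict = field(default_factory=dict)  # name -> replica count


def reconcile(desired: dict, cluster: FakeCluster) -> list:
    """Compare desired and observed state, converge them, return the actions taken."""
    actions = []
    for name, replicas in desired.items():
        observed = cluster.running.get(name)
        if observed is None:
            cluster.running[name] = replicas
            actions.append(f"create {name} x{replicas}")
        elif observed != replicas:
            cluster.running[name] = replicas
            actions.append(f"scale {name} {observed}->{replicas}")
    # Anything running that is no longer desired gets removed.
    for name in list(cluster.running):
        if name not in desired:
            del cluster.running[name]
            actions.append(f"delete {name}")
    return actions


cluster = FakeCluster()
first = reconcile({"web": 2}, cluster)   # object created
second = reconcile({"web": 3}, cluster)  # spec changed
third = reconcile({"web": 3}, cluster)   # already converged, nothing to do
fourth = reconcile({}, cluster)          # object deleted
print(first, second, third, fourth)
```

The important property is that reconcile is idempotent: running it again when nothing has changed produces no actions, which is what lets a controller safely re-run on every event.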

However, custom resources and custom controllers are separate entities, and we would need to manage each one separately if we wanted to use them. This is where the Operator Framework makes its appearance: it lets us package and manage Custom Resources and Custom Controllers together, including deploying them and managing their whole lifecycle.

https://sdk.operatorframework.io/

What can I do with Operator SDK?

The Operator SDK provides the tools to build, test, and package Operators. Initially, the SDK facilitates the marriage of an application’s business logic (for example, how to scale, upgrade, or backup) with the Kubernetes API to execute those operations. Over time, the SDK can allow engineers to make applications smarter and have the user experience of cloud services. Leading practices and code patterns that are shared across Operators are included in the SDK to help prevent reinventing the wheel.

The Operator SDK is a framework that uses the controller-runtime library to make writing operators easier by providing:

- High level APIs and abstractions to write the operational logic more intuitively

- Tools for scaffolding and code generation to bootstrap a new project fast

- Extensions to cover common Operator use cases

The other important concept is the Operator Lifecycle Manager (OLM), which “provides rich mechanisms to keep Kubernetes native applications up to date automatically”.

Let’s start

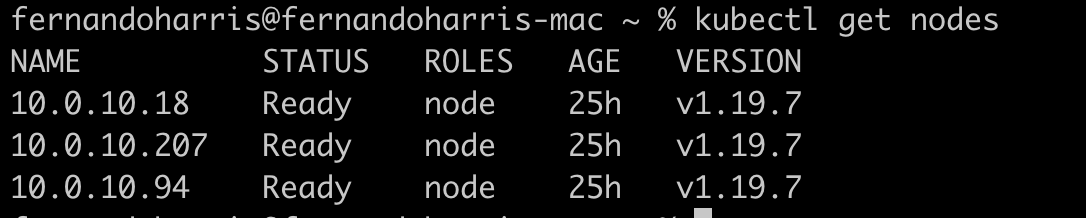

Make sure you have an OKE cluster and proper access to it. You can check this by running a kubectl command against the Kubernetes API, for example: kubectl get nodes

Don’t confuse this with the OCI Service Broker:

Instead of the OCI Service Broker for Kubernetes, Oracle now recommends you use the OCI Service Operator for Kubernetes to interact with Oracle Cloud Infrastructure services using the Kubernetes API and Kubernetes tooling.

Again, the detailed installation instructions are in the OCI Service Operator for Kubernetes documentation in the GitHub repository. In summary, there are 3 sequential steps to follow:

- Install OCI Service Operator for Kubernetes.

- Secure OCI Service Operator for Kubernetes.

- Provision and bind to the required Oracle Cloud Infrastructure services.

We’ll follow the documentation on GitHub and do this step by step.

Part 1: Install OCI Service Operator for Kubernetes.

1. Install the Operator SDK.

In my case, I’m using a Mac, so I install from Homebrew (macOS):

$ brew install operator-sdk

2. Install the Operator Lifecycle Manager (OLM)

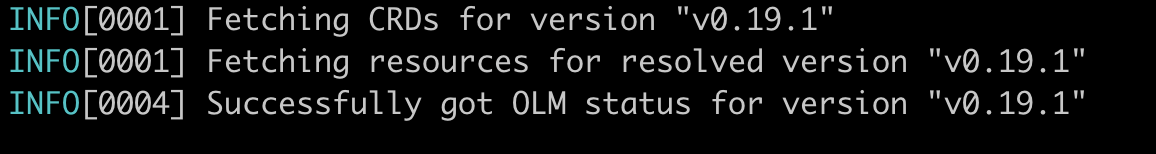

$ operator-sdk olm install

$ operator-sdk olm status

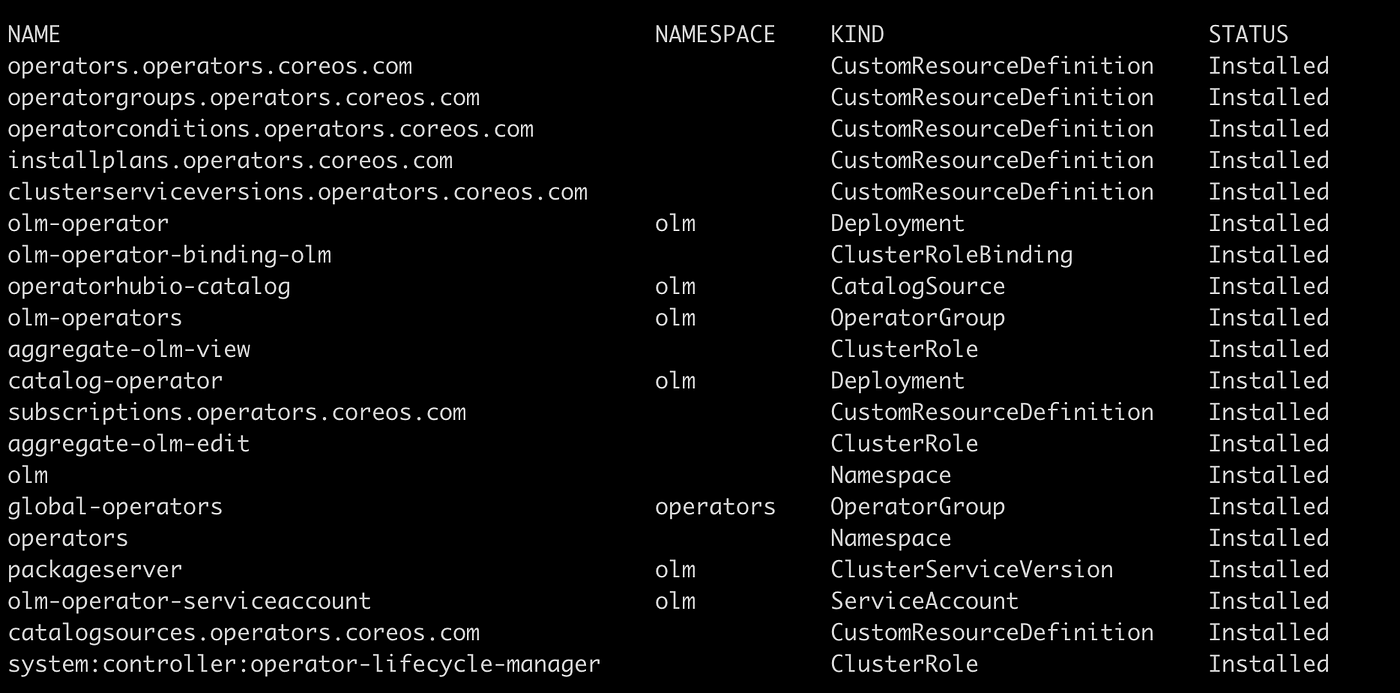

This will install a bunch of new resources in your cluster, including a couple of new namespaces: olm and operators.

Now, let’s deploy the OCI Service Operator for Kubernetes.

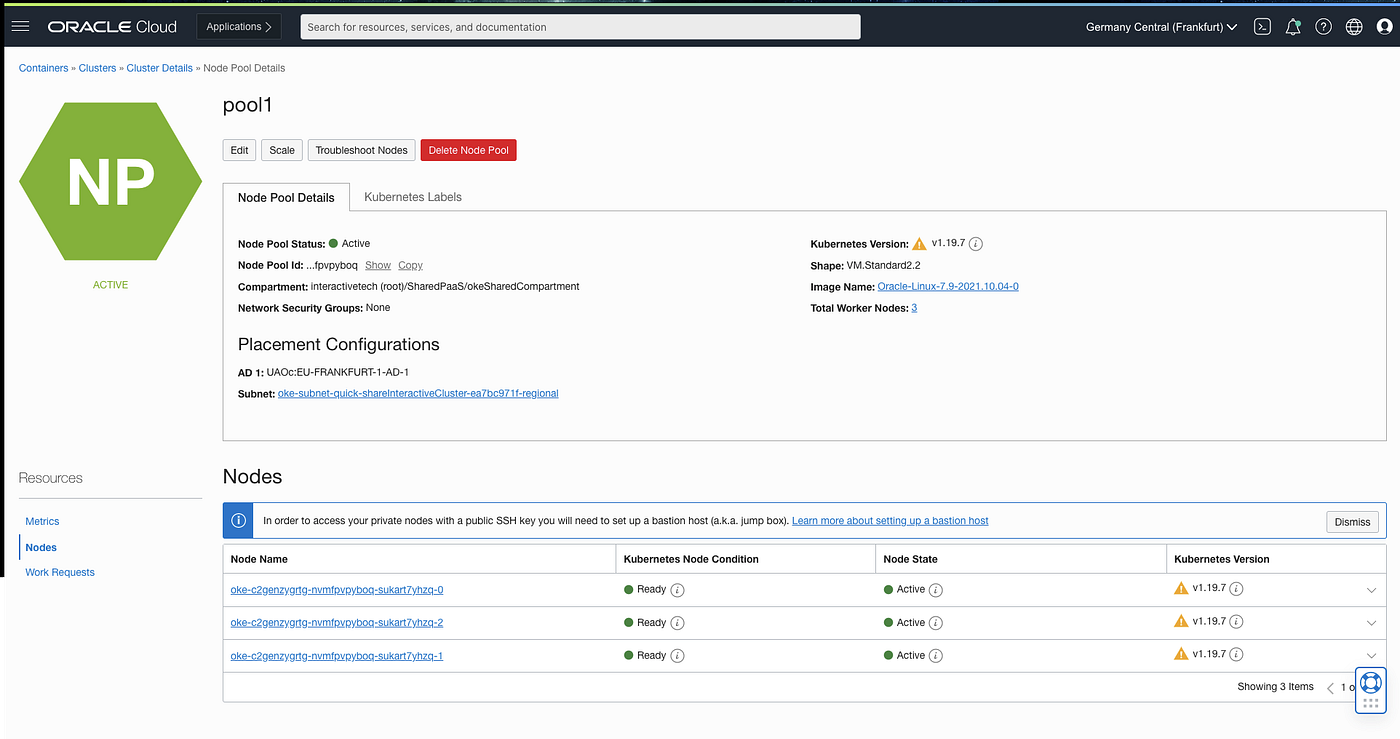

First things first, let’s get the OCIDs of the instances running in the node pool of the K8s cluster. You can find them by opening your cluster details in the OCI Console and clicking Nodes under Resources.

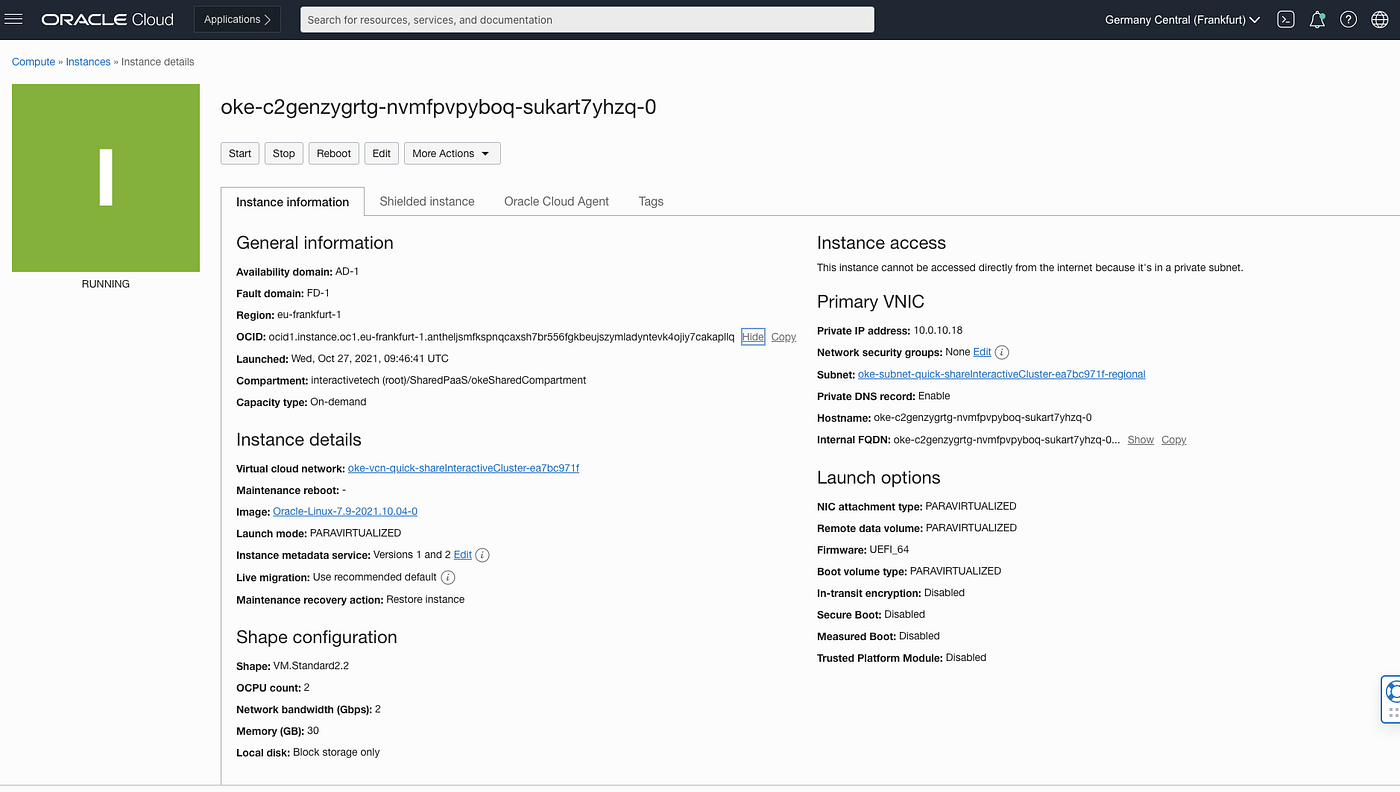

Click each of the instances and copy its OCID to your notepad:

As mentioned, repeat this last step for the other 2 instances running in the node pool. You should end up with something like this:

Worker node 1:

ocid1.instance.oc1.eu-frankfurt-1.antheljsmfkspnqcffjxhmiej2xrrwnjkj2r6wkesxwzg3ucij4v373ckaja

Worker node 2:

ocid1.instance.oc1.eu-frankfurt-1.antheljsmfkspnqcirlw72wfoczulmjfgr5kls3imqloep2nrxgkakebtboq

Worker node 3:

ocid1.instance.oc1.eu-frankfurt-1.antheljsmfkspnqcaxsh7br556fgkbeujszymladyntevk4ojiy7cakapllq

We are doing this because we need a way to let the nodes in the Kubernetes cluster interact with other OCI services without going through the typical authentication process with the OCI client or SDK. In other words, we want proper service-to-service interaction without the trouble of managing OCI credentials ourselves.

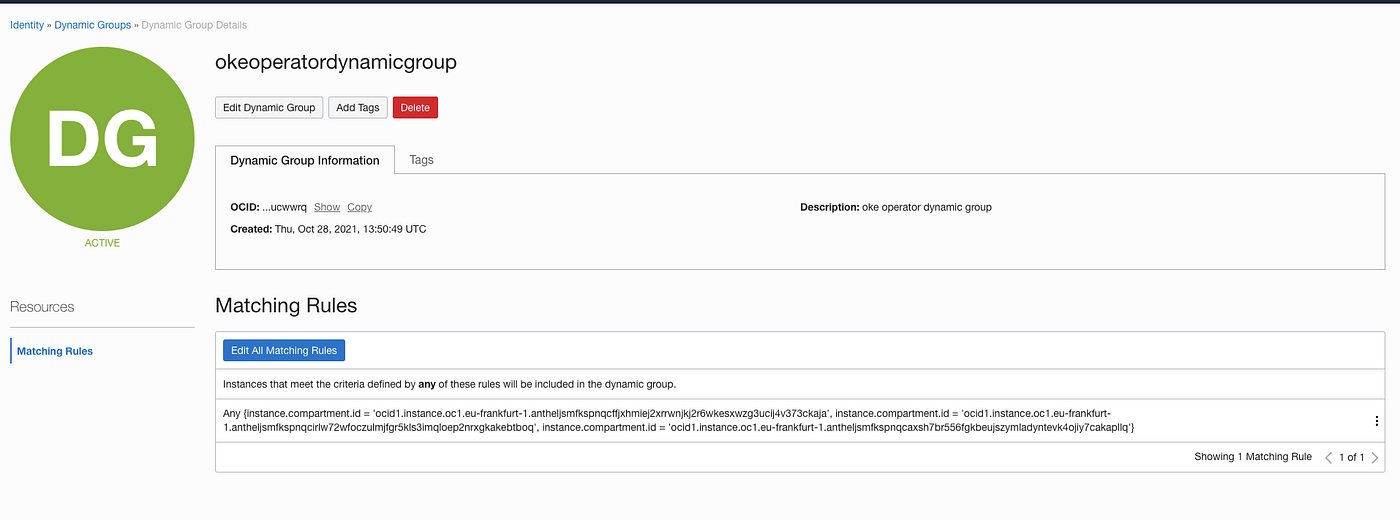

The standard way to do this in OCI is with Instance Principals and Dynamic Groups. According to the documentation, dynamic groups allow you to group Oracle Cloud Infrastructure compute instances as “principal” actors (similar to user groups). You can then create policies to permit instances to make API calls against Oracle Cloud Infrastructure services. When you create a dynamic group, rather than adding members explicitly to the group, you instead define a set of matching rules to define the group members. For example, a rule could specify that all instances in a particular compartment are members of the dynamic group. The members can change dynamically as instances are launched and terminated in that compartment.

To create a dynamic group using the Console:

- Open the navigation menu and click Identity & Security. Under Identity, click Dynamic Groups.

- Click Create Dynamic Group.

- Enter the following:

  - Name: A unique name for the group. The name must be unique across all groups in your tenancy (dynamic groups and user groups). You can’t change this later. Avoid entering confidential information.

  - Description: A friendly description.

- Enter the Matching Rules. Resources that meet the rule criteria are members of the group.

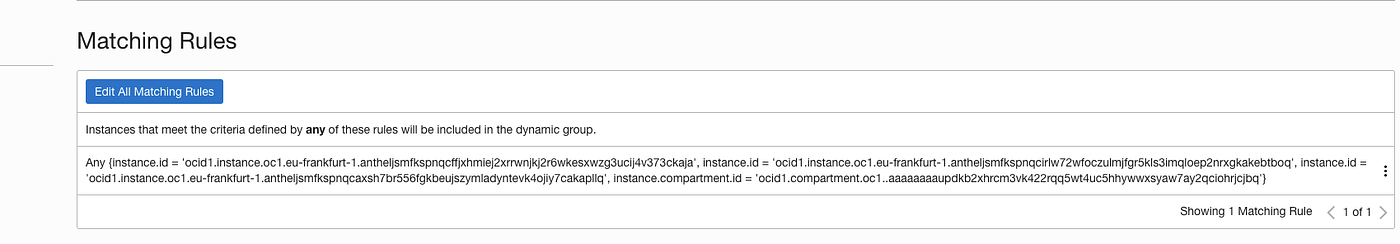

The rule matches the OCIDs of the Kubernetes worker instances, which you collected above, or the compartment where the worker instances are running:

You should be able to build something like this:

Any {instance.id = 'ocid1.instance.oc1.eu-frankfurt-1.antheljsmfkspnqcffjxhmiej2xrrwnjkj2r6wkesxwzg3ucij4v373ckaja', instance.id = 'ocid1.instance.oc1.eu-frankfurt-1.antheljsmfkspnqcirlw72wfoczulmjfgr5kls3imqloep2nrxgkakebtboq', instance.id = 'ocid1.instance.oc1.eu-frankfurt-1.antheljsmfkspnqcaxsh7br556fgkbeujszymladyntevk4ojiy7cakapllq', instance.compartment.id = 'ocid1.compartment.oc1..aaaaaaaaupdkb2xhrcm3vk422rqq5wt4uc5hhywwxsyaw7ay2qciohrjcjbq'}
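Typing a rule like this by hand is error-prone, especially with many nodes. The small Python helper below is one way to assemble it from a list of OCIDs; build_matching_rule is a hypothetical name and the OCIDs in the example are shortened placeholders, not real ones. Note that it emits plain straight quotes, which is what you want when pasting the result into the Console.

```python
def build_matching_rule(instance_ocids, compartment_ocid=None):
    """Build an 'Any {...}' dynamic group matching rule from instance OCIDs."""
    conditions = [f"instance.id = '{ocid}'" for ocid in instance_ocids]
    if compartment_ocid:
        conditions.append(f"instance.compartment.id = '{compartment_ocid}'")
    if not conditions:
        raise ValueError("at least one instance or compartment OCID is required")
    # 'Any' means an instance joins the group if it matches any one condition.
    return "Any {" + ", ".join(conditions) + "}"


# Placeholder OCIDs for illustration; use the ones you copied from the Console.
rule = build_matching_rule(
    ["ocid1.instance.oc1..exampleA", "ocid1.instance.oc1..exampleB"],
    compartment_ocid="ocid1.compartment.oc1..exampleC",
)
print(rule)
```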

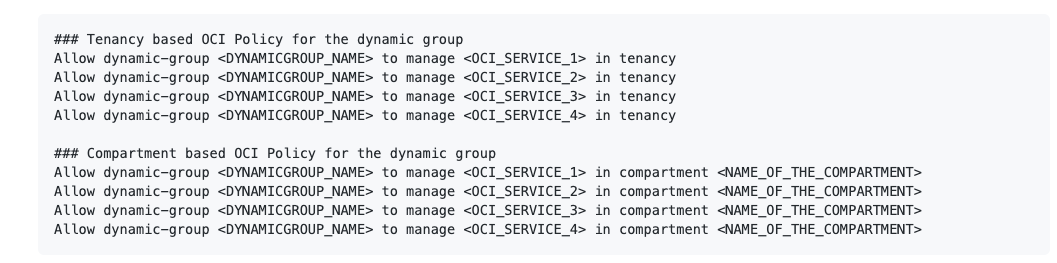

Next, to give the dynamic group the permissions you need, you will need to write a policy. The policy must be tenancy wide or in the compartment for the dynamic group created above:

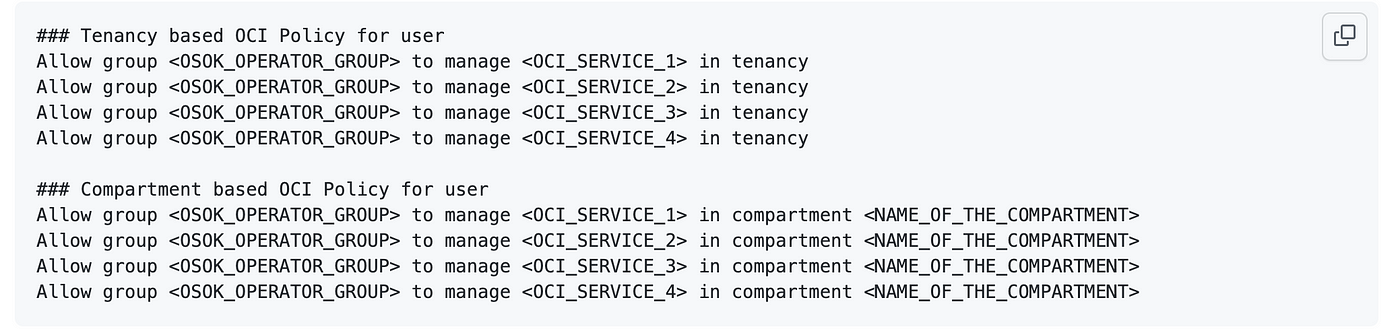

From the documentation on GitHub we can get a couple of examples:

The references in the example to <OCI_SERVICE_1>, <OCI_SERVICE_2>, etc. represent OCI services such as “autonomous-database-family” or “instance_family”. Keep in mind that, at the time of writing, 3 OCI services are supported by the Operator: Autonomous Database, MySQL PaaS and OCI Streaming.

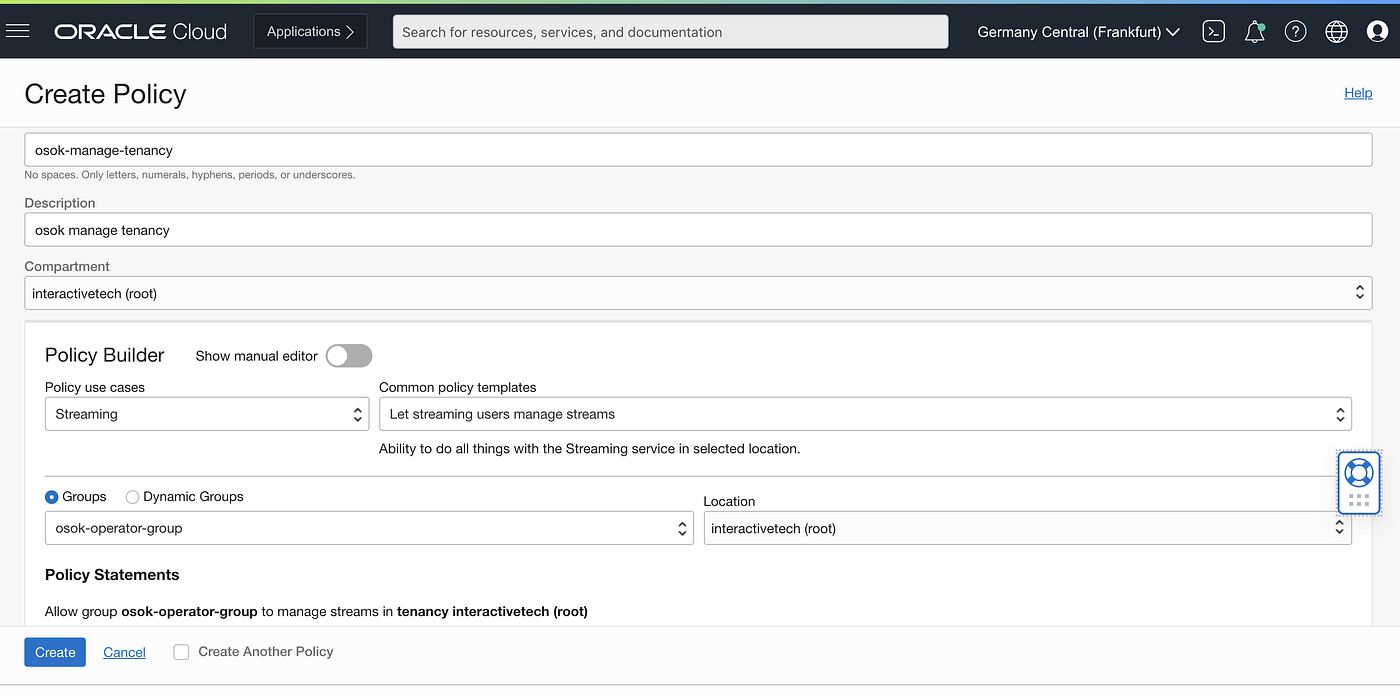

In our example we will work with OCI Streaming.

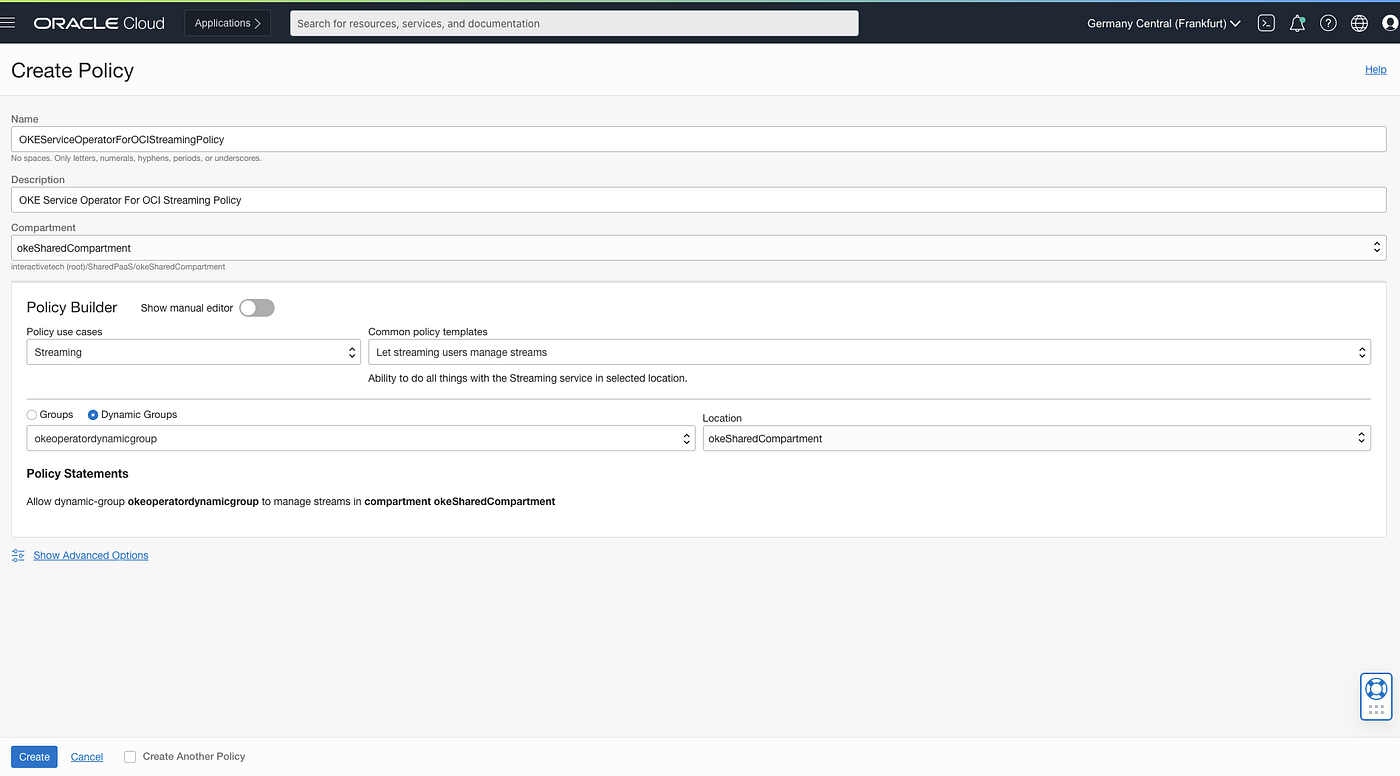

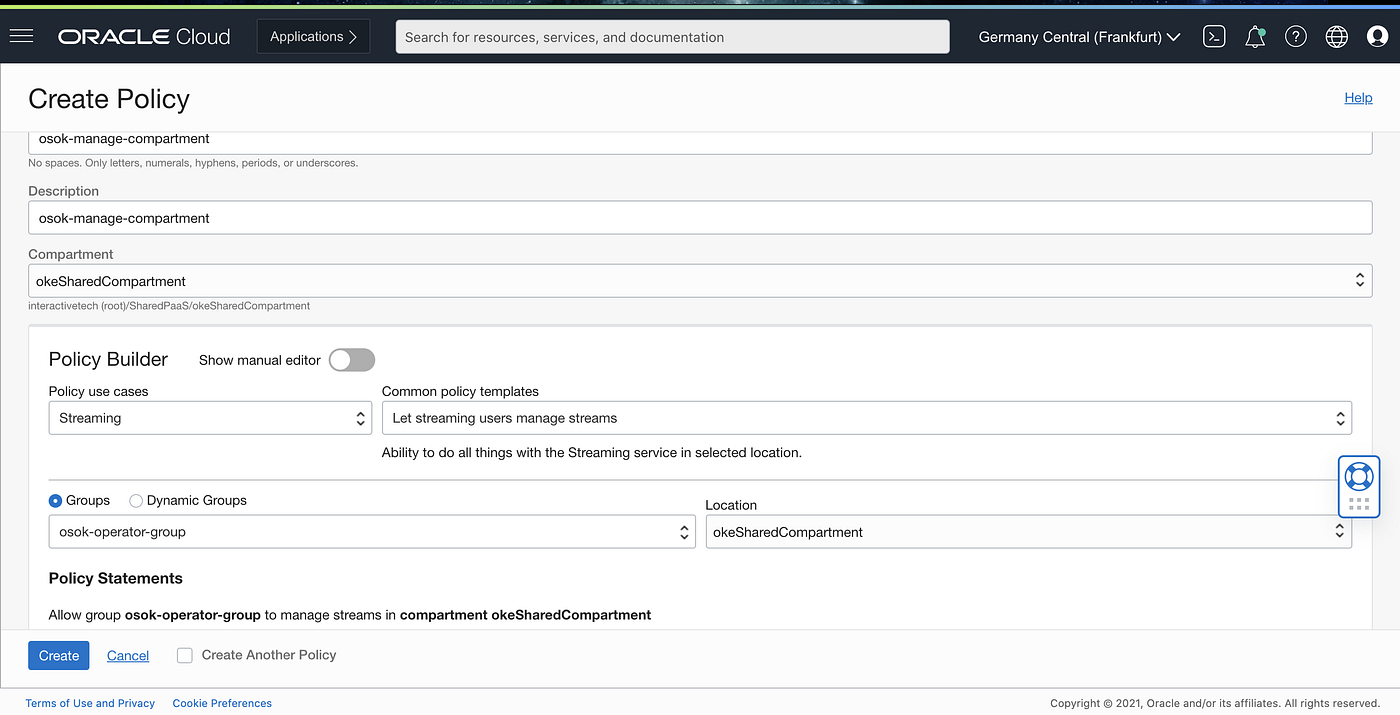

The OCI Policy Builder is a very intuitive tool for building these policies. Take a look at the link below for more information about policies for accessing services in OCI:

Policy Statements

Allow dynamic-group okeoperatordynamicgroup to manage streams in compartment okeSharedCompartment
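Since the same statement is needed at compartment level and at tenancy level, a tiny Python helper can generate both and avoid typos. This is only a sketch: policy_statement is a hypothetical function, and it covers just the simple "Allow ... to ... in ..." shape used in this article, not the full OCI policy syntax.

```python
def policy_statement(subject_type, subject, verb, resource, compartment=None):
    """Build a simple OCI policy statement scoped to a compartment or the tenancy."""
    if subject_type not in ("group", "dynamic-group"):
        raise ValueError("subject_type must be 'group' or 'dynamic-group'")
    if verb not in ("inspect", "read", "use", "manage"):
        raise ValueError("verb must be one of inspect, read, use, manage")
    # Without a compartment name, the statement applies to the whole tenancy.
    location = f"compartment {compartment}" if compartment else "tenancy"
    return f"Allow {subject_type} {subject} to {verb} {resource} in {location}"


compartment_policy = policy_statement(
    "dynamic-group", "okeoperatordynamicgroup", "manage", "streams",
    compartment="okeSharedCompartment",
)
tenancy_policy = policy_statement(
    "dynamic-group", "okeoperatordynamicgroup", "manage", "streams",
)
print(compartment_policy)
print(tenancy_policy)
```

The same helper works for user groups by passing "group" as the subject type.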

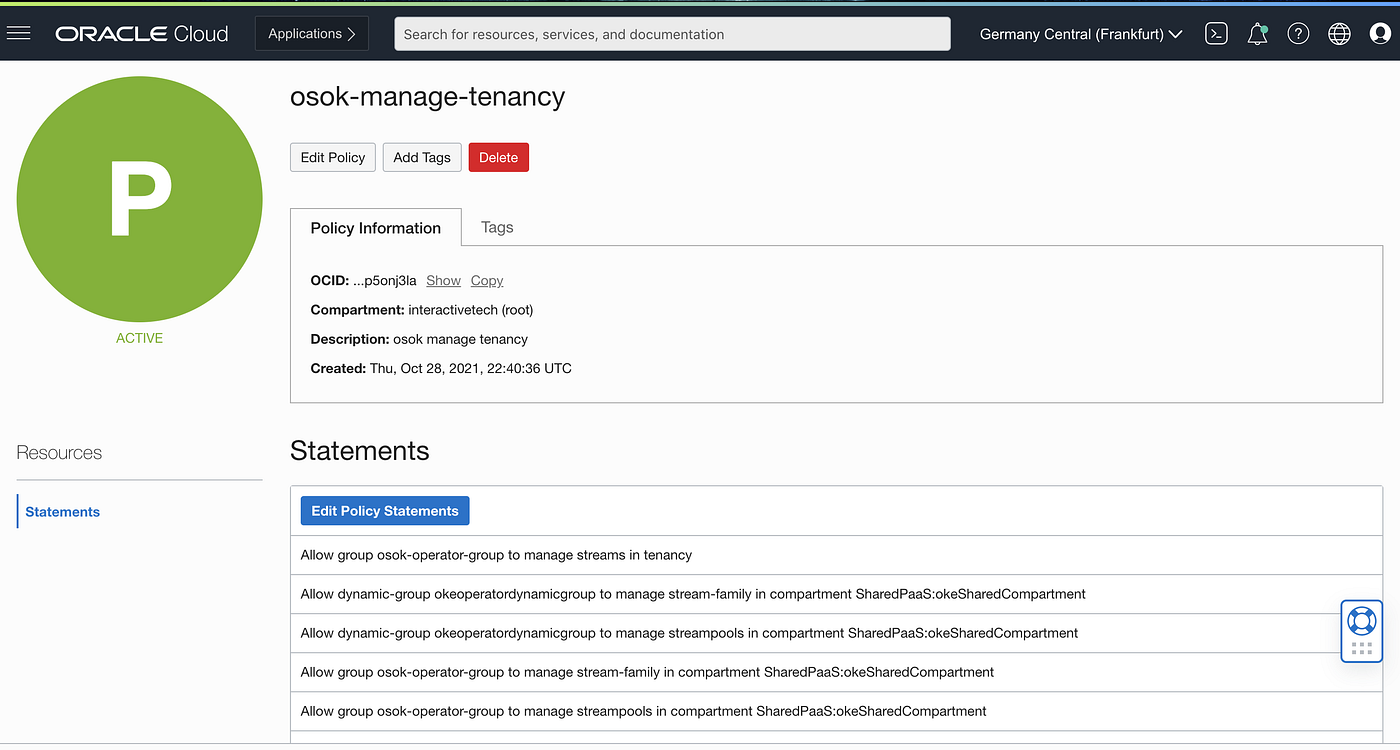

Don’t forget to create a similar policy at the tenancy level as well:

And that’s it. Let’s now move to the second part and secure the OCI Service Operator.

Part 2: Secure OCI Service Operator for Kubernetes

To enable user principals, the OCI Service Operator for Kubernetes requires OCI user credentials to provision and manage OCI services and resources in the tenancy.

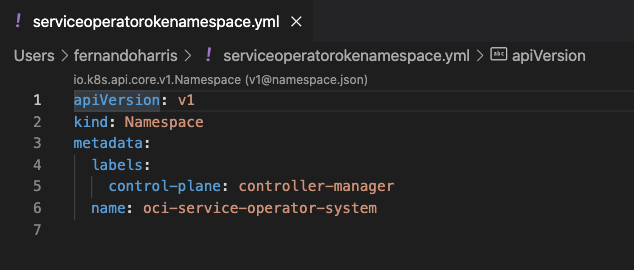

OSOK will be deployed in the oci-service-operator-system namespace. To enable user principals, we need to create this namespace before the deployment.

Create a yaml file using the example below:
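As a rough sketch of what such a namespace manifest might look like, the Python snippet below builds it and prints it as JSON, which kubectl accepts just like YAML. The namespace name comes from the text above; the control-plane label is my recollection of the OSOK example and should be treated as an assumption to verify against the GitHub documentation.

```python
import json


def namespace_manifest(name="oci-service-operator-system"):
    """Return a Namespace manifest for the OSOK deployment as a dict."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            # Assumption: label recalled from the OSOK docs, verify before use.
            "labels": {"control-plane": "controller-manager"},
        },
    }


manifest = namespace_manifest()
print(json.dumps(manifest, indent=2))
```

Redirecting the output to a file gives you something you can pass to kubectl apply -f.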

In my case:

$ kubectl apply -f serviceoperatorokenamespace.yml

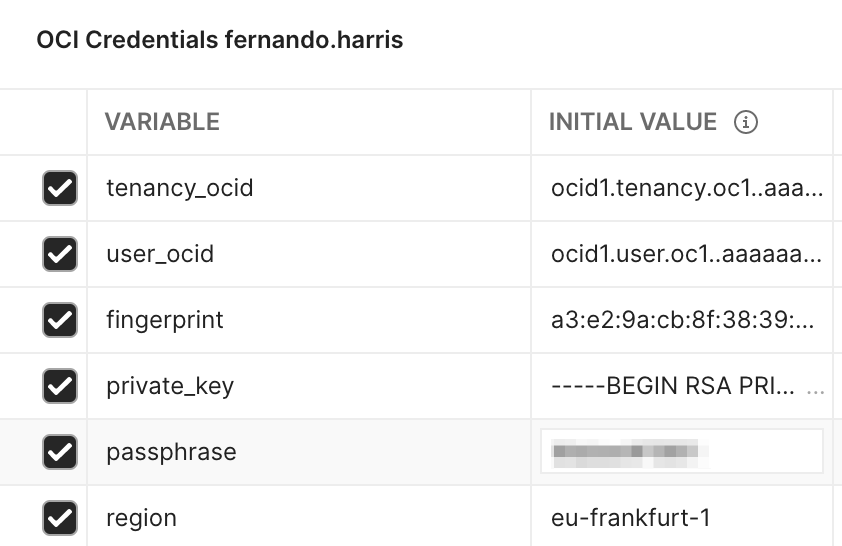

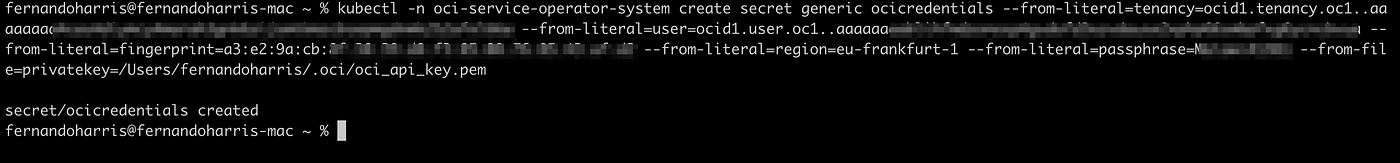

The user is required to create a Kubernetes secret as detailed below.

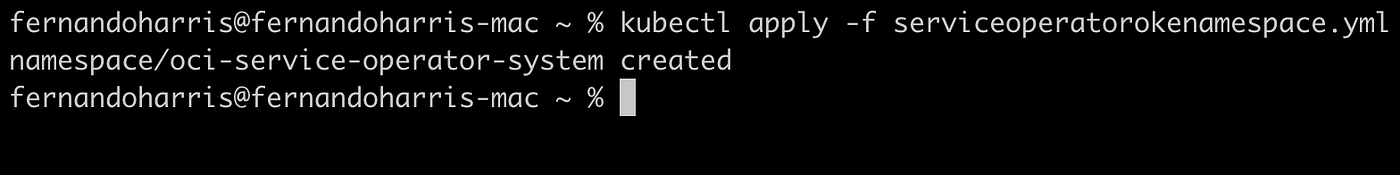

For that, get the OCI user credentials needed to create the Kubernetes secret. I’m going to use my own OCI user, but ideally a dedicated user should be used for the User Principal:

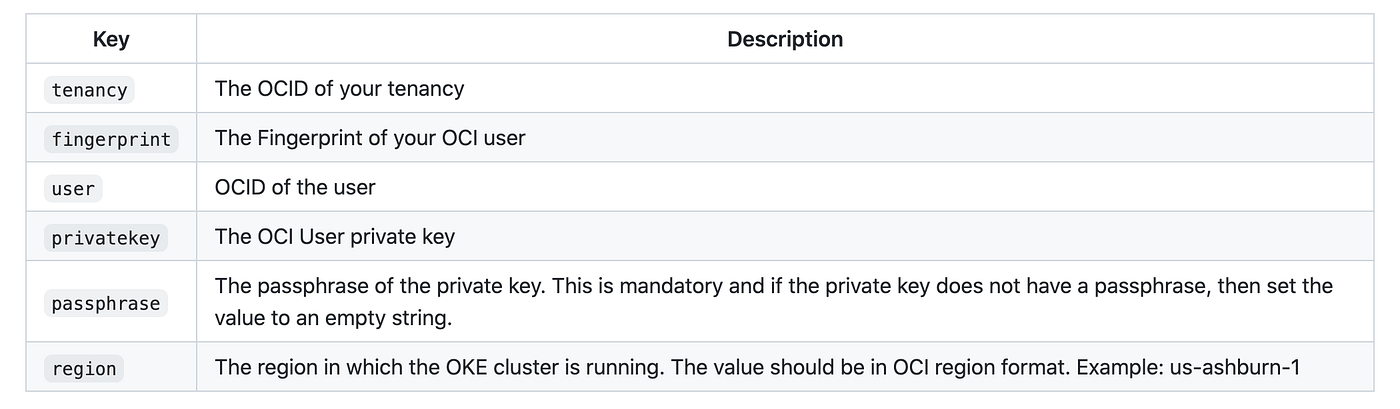

The name of the secret will be passed in the osokConfig config map, which is created as part of the OSOK deployment. By default, the name of the user credential secret is ocicredentials. The secret should also be created in the oci-service-operator-system namespace.
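The sketch below assembles the kubectl command that would create this secret, so you can review it before running it. The kubectl flags (--from-literal, --from-file) are standard; the key names inside the secret (tenancy, user, fingerprint, region, passphrase, privatekey) are my recollection of the OSOK documentation and are an assumption to check against the GitHub repository. All values shown are placeholders.

```python
import shlex


def create_secret_command(tenancy, user, fingerprint, region, passphrase,
                          private_key_path, name="ocicredentials",
                          namespace="oci-service-operator-system"):
    """Return the kubectl argv that creates the OSOK user credential secret."""
    return [
        "kubectl", "-n", namespace, "create", "secret", "generic", name,
        # Key names below are assumed from the OSOK docs; verify before use.
        f"--from-literal=tenancy={tenancy}",
        f"--from-literal=user={user}",
        f"--from-literal=fingerprint={fingerprint}",
        f"--from-literal=region={region}",
        f"--from-literal=passphrase={passphrase}",
        f"--from-file=privatekey={private_key_path}",
    ]


# Placeholder values for illustration only.
cmd = create_secret_command(
    tenancy="ocid1.tenancy.oc1..example",
    user="ocid1.user.oc1..example",
    fingerprint="aa:bb:cc",
    region="eu-frankfurt-1",
    passphrase="",
    private_key_path="/path/to/oci_api_key.pem",
)
# shlex.join quotes each argument so the line can be pasted into a shell.
print(shlex.join(cmd))
```

Printing the command rather than executing it keeps the helper harmless; avoid leaving real passphrases in your shell history when you do run it.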

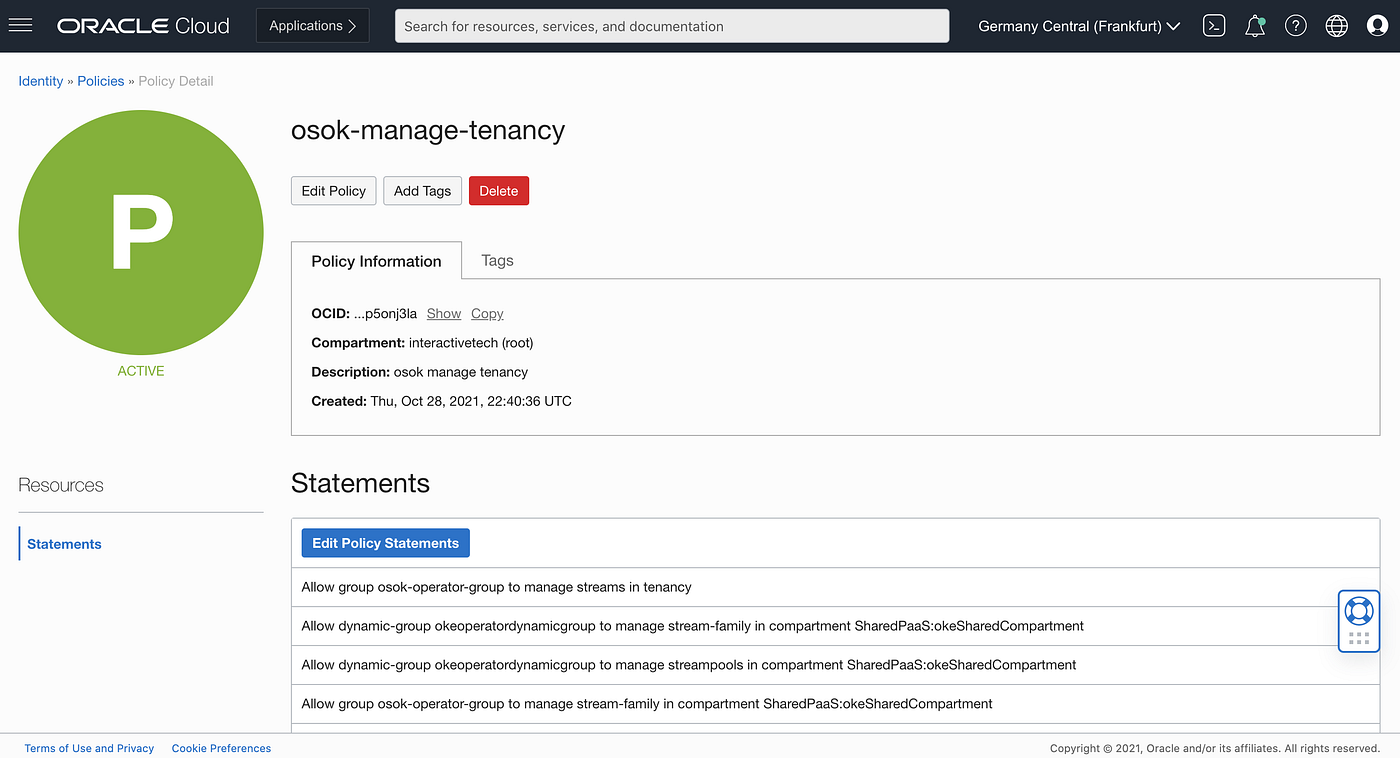

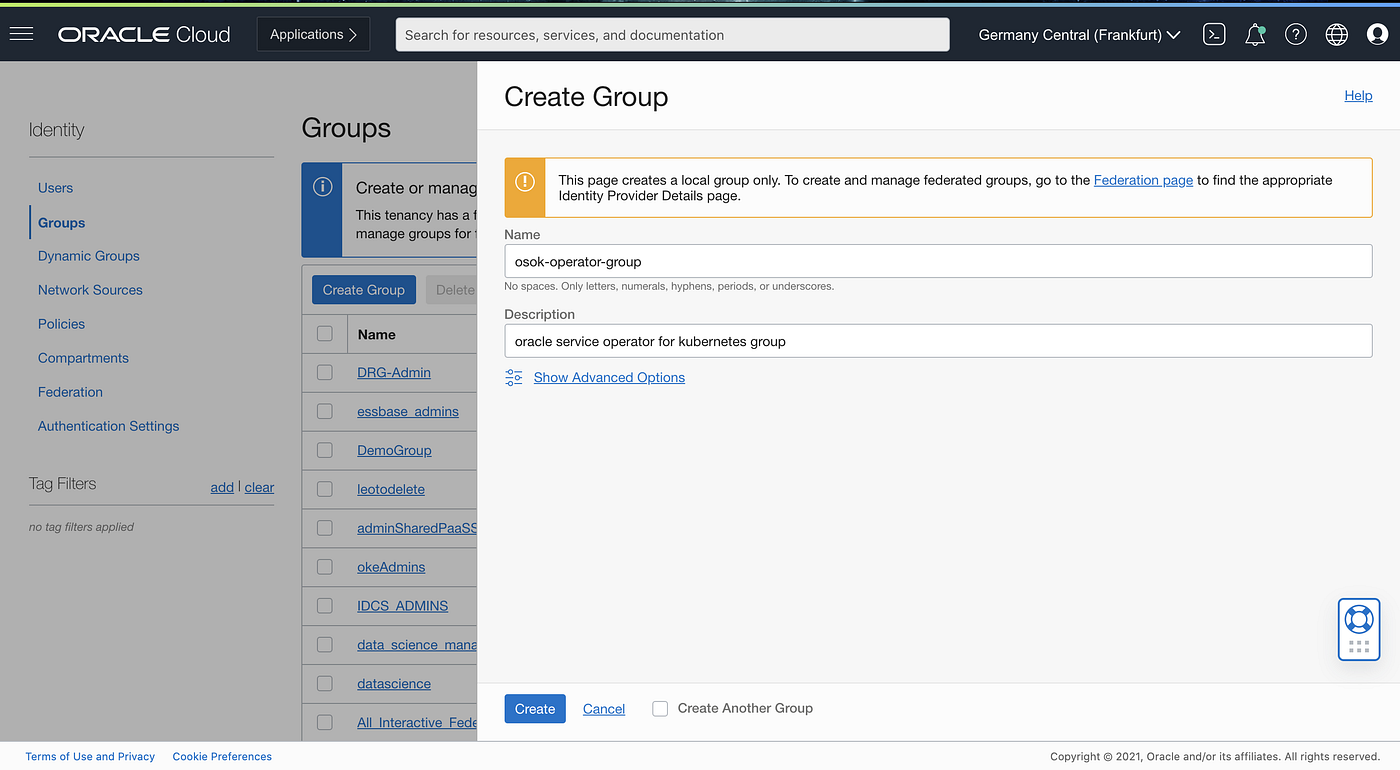

The OCI user owning the above credentials should be added to an IAM group, osok-operator-group. An OCI policy, either tenancy-wide or scoped to a compartment, should also be created to let that group manage the OCI services.

Tenancy-based OCI policy for the user:

Allow group <osok_operator_group> to manage <oci_service_1> in tenancy

Compartment-based OCI policy for the user:

Allow group <osok_operator_group> to manage <oci_service_1> in compartment <name_of_the_compartment>

Allow group osok-operator-group to manage streams in tenancy interactivetech (root)

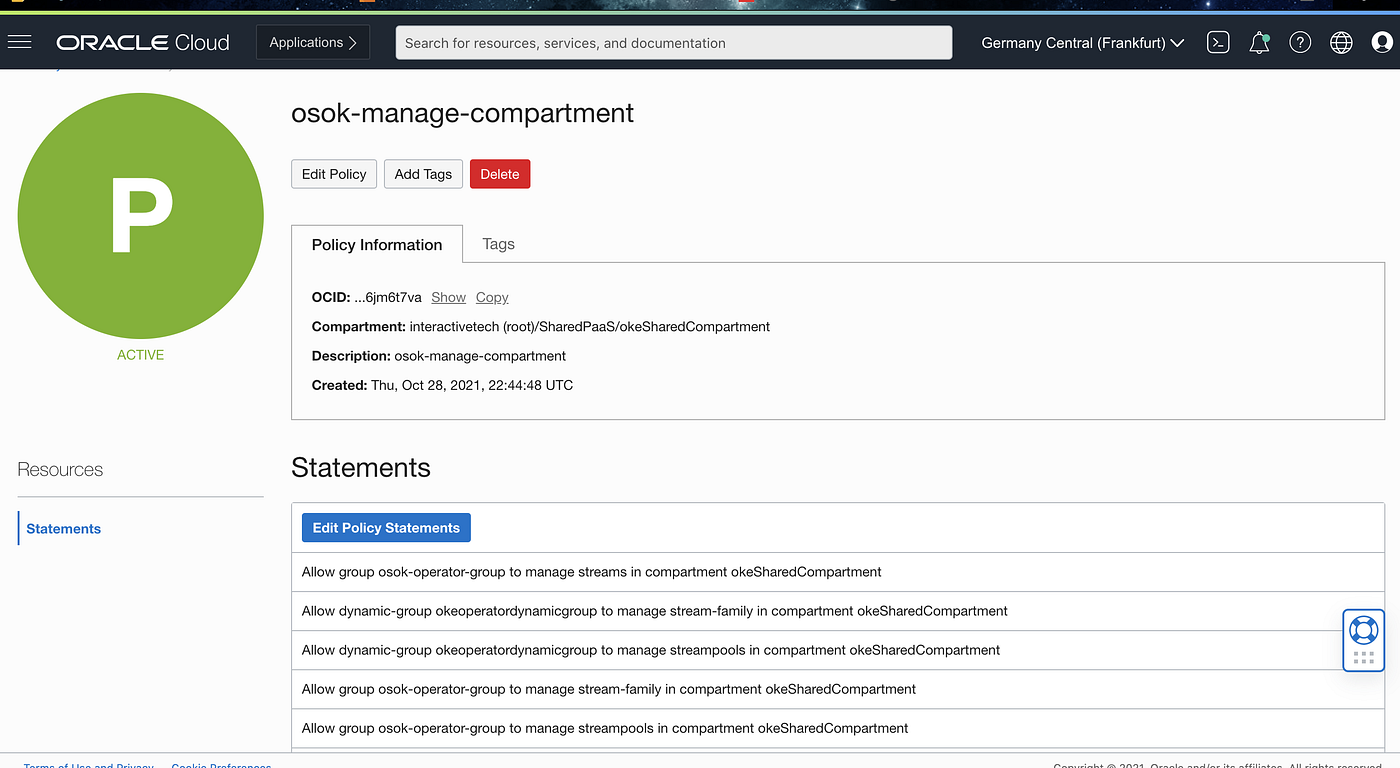

You should end up with a set of policies like the ones in the print screens below:

Policy summary at tenancy level:

Policy summary at compartment level:

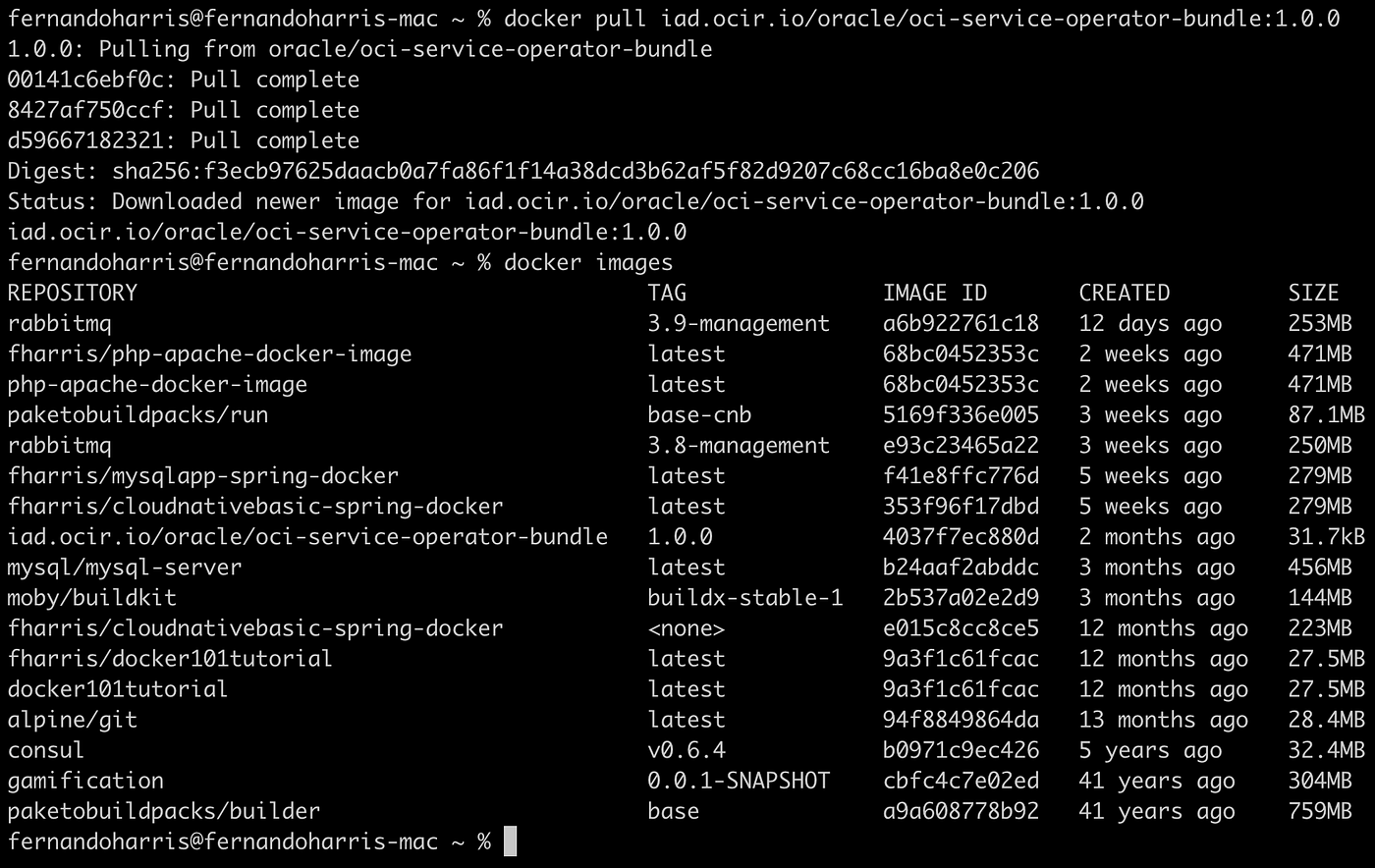

Deploy OSOK on K8s

With everything in place on the OCI side, we can get back to the Operator and Kubernetes.

The OCI Service Operator for Kubernetes is packaged as an Operator Lifecycle Manager (OLM) bundle to make it easy to install in Kubernetes clusters. The bundle can be downloaded as a Docker image using the command below. You’re probably very familiar with this process, but make sure your Docker engine is running 🏃 …I always forget… 😉

$ docker pull iad.ocir.io/oracle/oci-service-operator-bundle:1.0.0

The OSOK OLM bundle contains all the required Kubernetes resources, such as CRDs, RBAC rules, ConfigMaps and Deployments, that will install OSOK in your Kubernetes cluster.

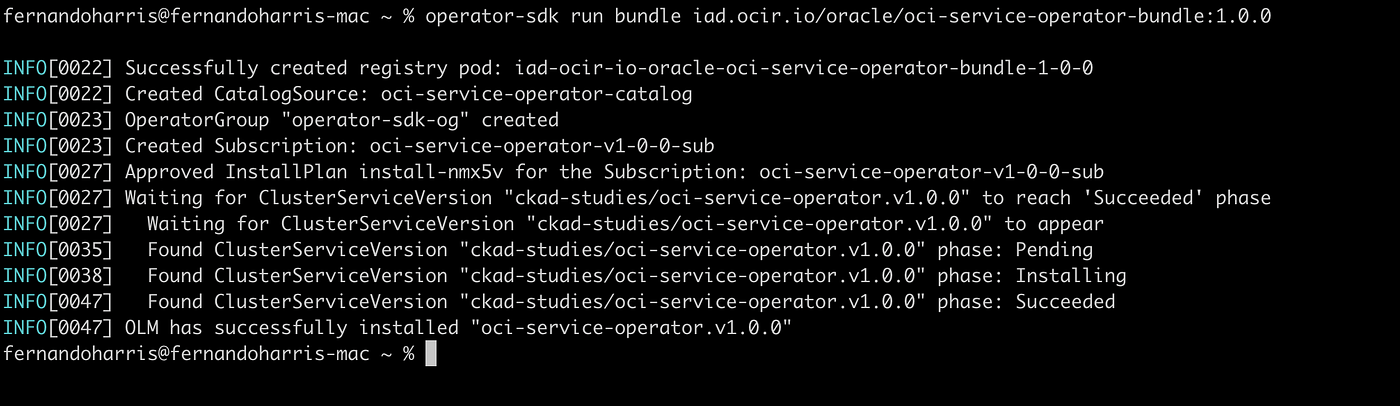

Run the command below to start installing the resources in the cluster:

$ operator-sdk run bundle iad.ocir.io/oracle/oci-service-operator-bundle:1.0.0

Part 3: Provision and bind to the required OCI services.

The 3rd and final step is where you actually work with the OCI services. Basically, you will:

- Provide service provision request parameters.

- Provide service binding request parameters.

- Provide service binding response credentials.

Take a quick look at the examples in the documentation. They are not very detailed, but they are simple enough to understand how bindings are created with OCI services such as Autonomous Database, MySQL PaaS and OCI Streaming.

Oracle Streaming Service and OSOK

The documentation also has more detailed information on how to implement the samples for the OCI Streaming service.

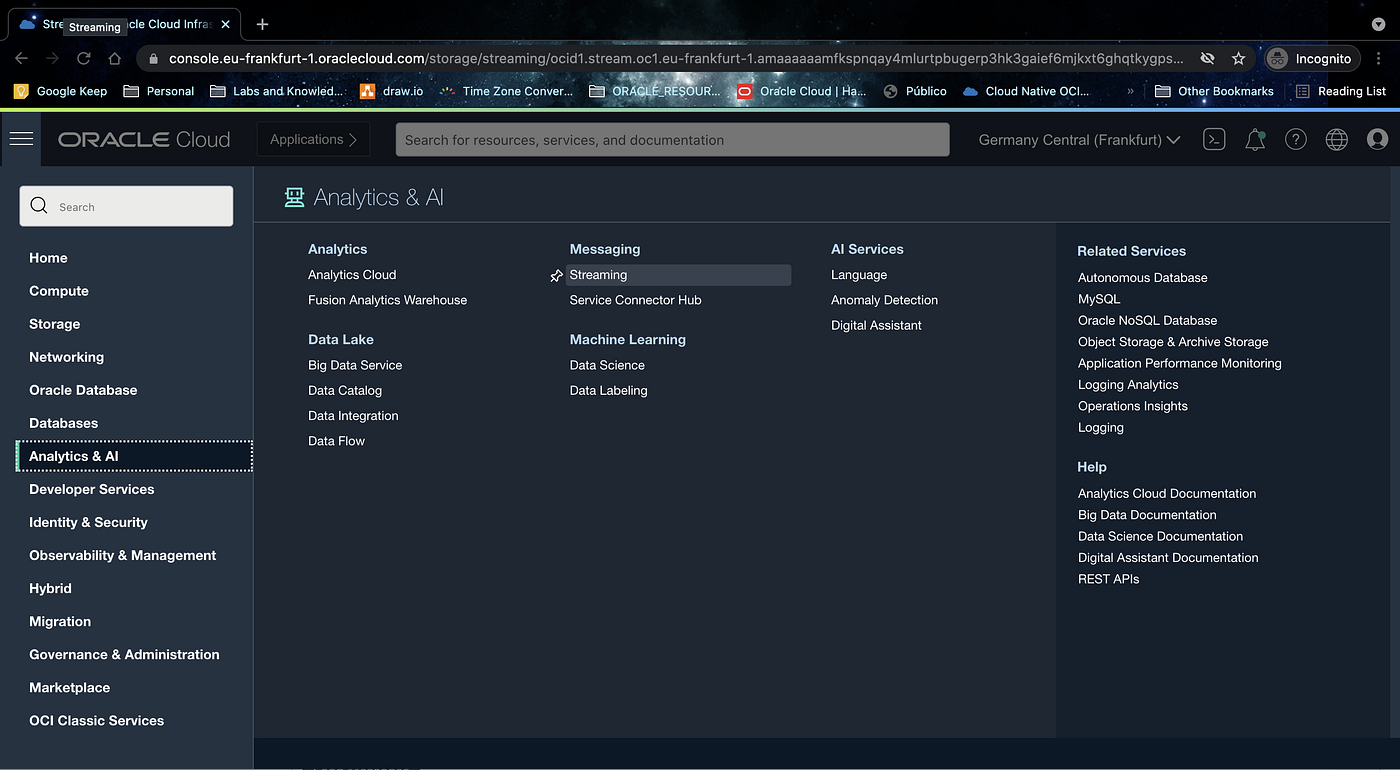

Let’s start by creating a binding with an existing stream. In OCI, go to Analytics & AI and then Streaming:

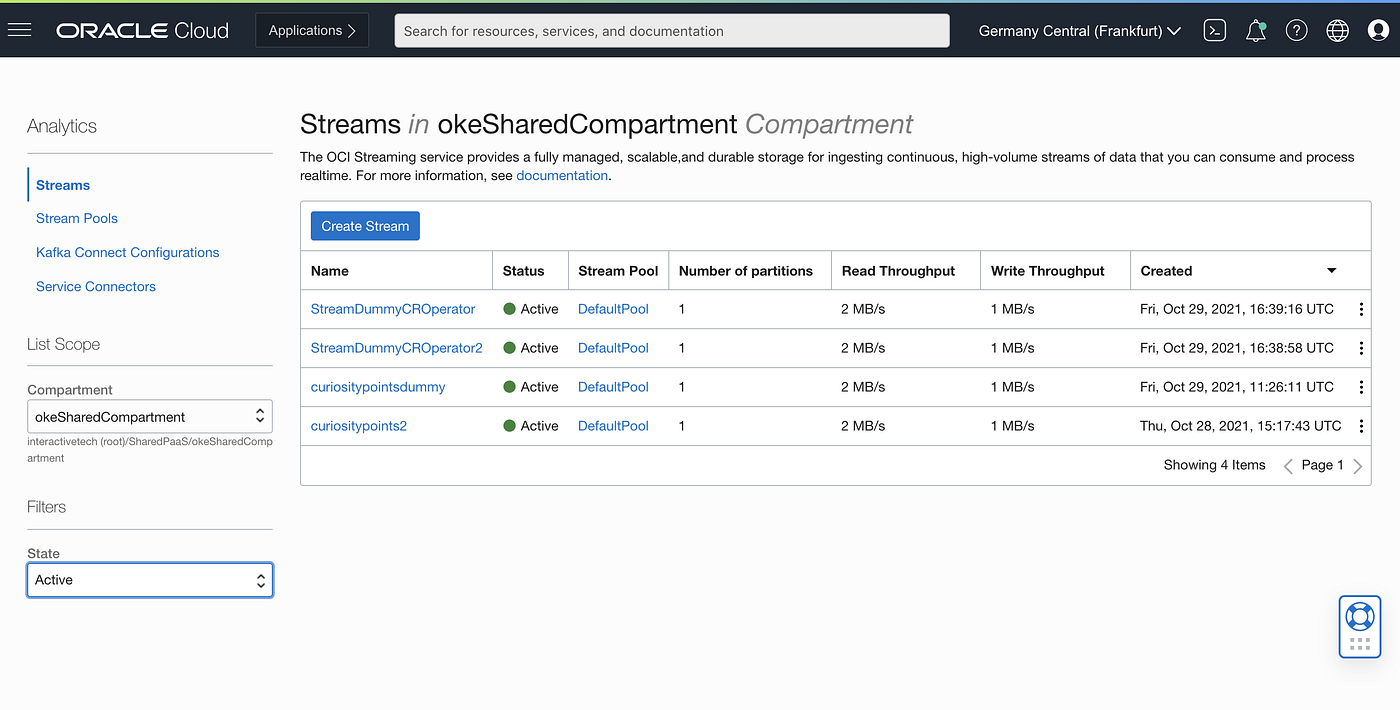

See the list of active Streams in the compartment:

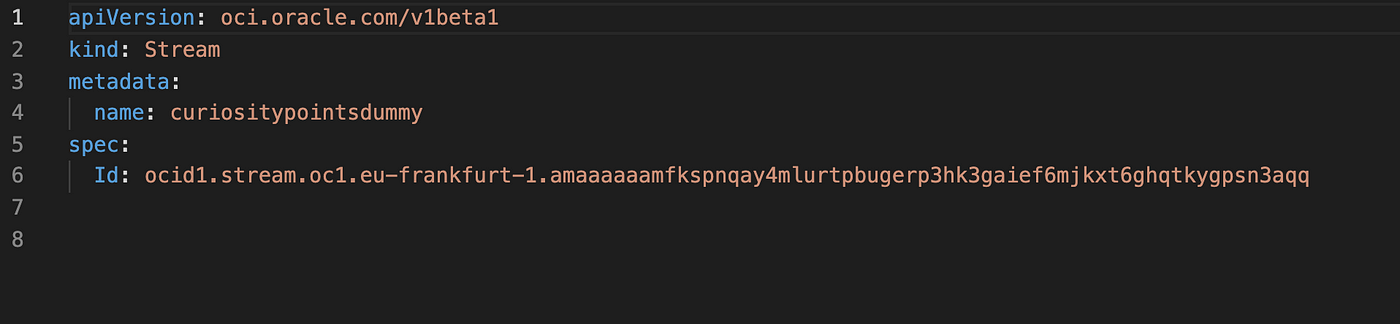

We want to bind to the curiositypointsdummy stream. To do that, we need the stream OCID. Then prepare a YAML manifest to send to your K8s cluster. I’ve named it CR_OSOK_BindExistingStream.yaml and its content is below:
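As a rough sketch of what such a binding manifest might contain, the Python snippet below builds one and prints it as JSON, which kubectl applies just like YAML. The apiVersion oci.oracle.com/v1beta1, the kind Stream and the spec.id field are my recollection of the OSOK Streams documentation and should be treated as assumptions; the stream OCID is a placeholder.

```python
import json


def bind_stream_manifest(cr_name, stream_ocid):
    """Return a Stream custom resource that binds to an existing stream."""
    if not stream_ocid.startswith("ocid1."):
        raise ValueError("stream_ocid does not look like an OCID")
    return {
        # apiVersion, kind and spec.id are assumed from the OSOK docs.
        "apiVersion": "oci.oracle.com/v1beta1",
        "kind": "Stream",
        "metadata": {"name": cr_name},
        # Referencing an existing OCID binds instead of creating a new stream.
        "spec": {"id": stream_ocid},
    }


# Placeholder OCID; use the one shown in the OCI Console for your stream.
binding = bind_stream_manifest("curiositypointsdummy", "ocid1.stream.oc1..example")
print(json.dumps(binding, indent=2))
```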

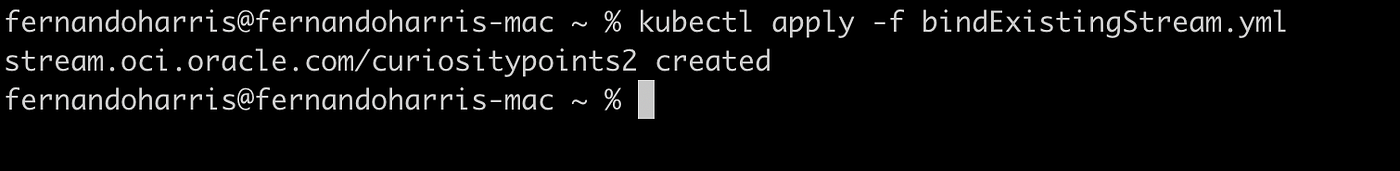

Run:

$ kubectl apply -f CR_OSOK_BindExistingStream.yaml

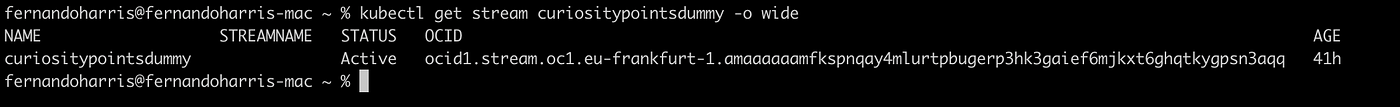

Then run the following to get the details for the binding:

$ kubectl get stream curiositypointsdummy -o wide

Let’s now create a new stream:

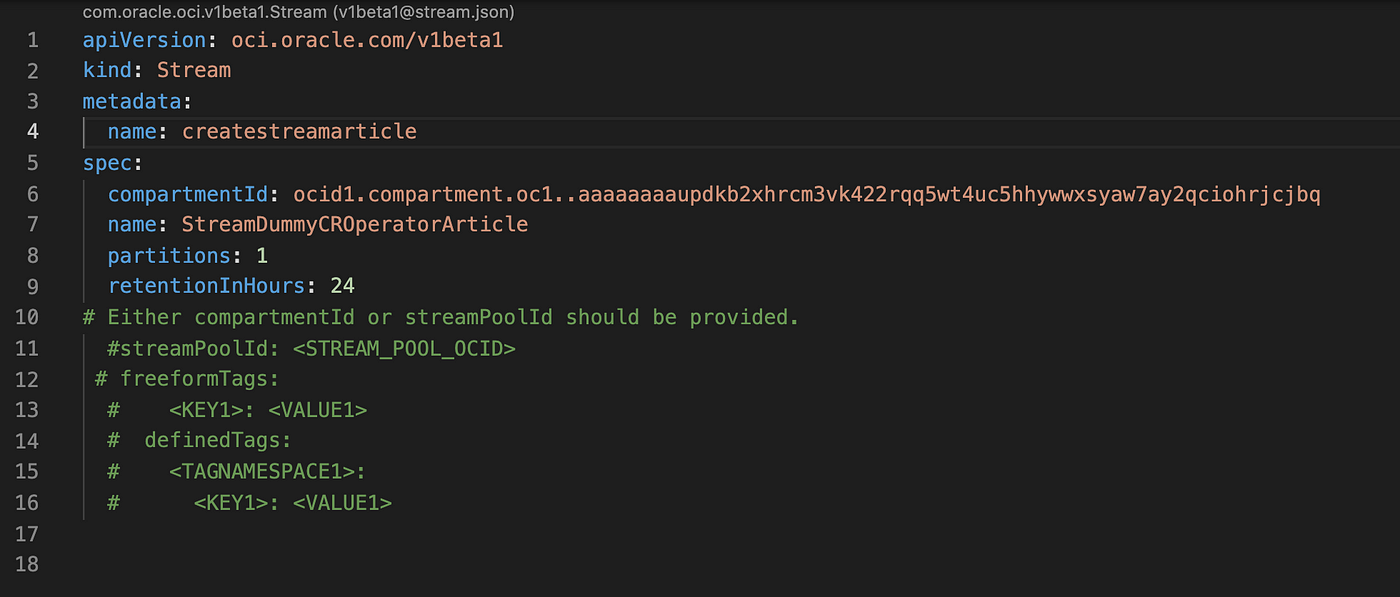

Prepare a yaml template like the example below:
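For creating a new stream, the manifest needs more than an OCID. The Python sketch below builds one and checks a couple of values up front. The spec field names (compartmentId, name, partitions, retentionInHours, streamPoolId) are my recollection of the OSOK Streams documentation and are assumptions to verify; the OCIDs are placeholders. The retention check reflects OCI Streaming's accepted range of 24 to 168 hours.

```python
import json


def create_stream_manifest(cr_name, stream_name, compartment_ocid,
                           stream_pool_ocid, partitions=1, retention_hours=24):
    """Return a Stream custom resource asking OSOK to create a new stream."""
    if partitions < 1:
        raise ValueError("partitions must be at least 1")
    if not 24 <= retention_hours <= 168:
        raise ValueError("retention_hours must be between 24 and 168")
    return {
        # apiVersion, kind and spec field names are assumed from the OSOK docs.
        "apiVersion": "oci.oracle.com/v1beta1",
        "kind": "Stream",
        "metadata": {"name": cr_name},
        "spec": {
            "compartmentId": compartment_ocid,
            "name": stream_name,
            "partitions": partitions,
            "retentionInHours": retention_hours,
            "streamPoolId": stream_pool_ocid,
        },
    }


# Placeholder OCIDs; the names match the ones used in this article.
new_stream = create_stream_manifest(
    cr_name="createstreamarticle",
    stream_name="StreamDummyCROperatorArticle",
    compartment_ocid="ocid1.compartment.oc1..example",
    stream_pool_ocid="ocid1.streampool.oc1..example",
)
print(json.dumps(new_stream, indent=2))
```

Keeping the partition count low in experiments is worthwhile, since partitions count against a service limit in the tenancy.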

In my case I named it CR_OSOK_CreateStream.yaml. Run:

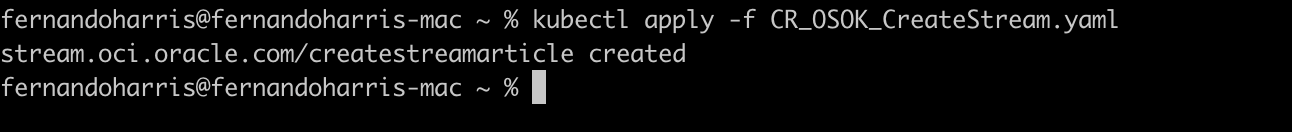

$ kubectl apply -f CR_OSOK_CreateStream.yaml

Then run:

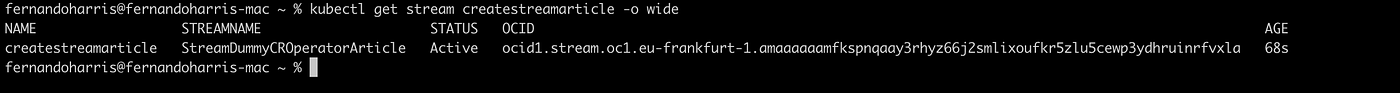

$ kubectl get stream createstreamarticle -o wide

You’ll see you now have a new stream created and active with its OCID.

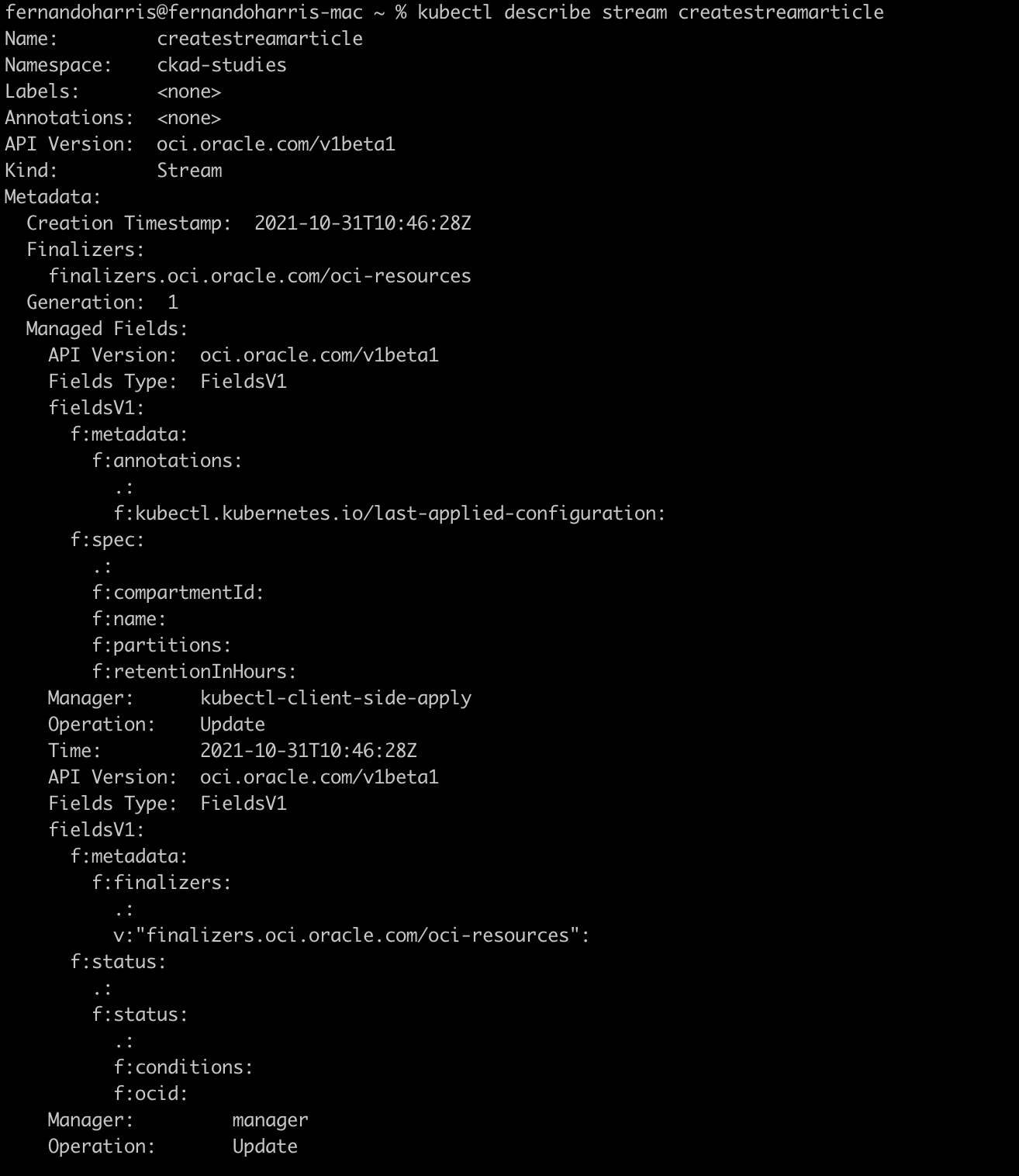

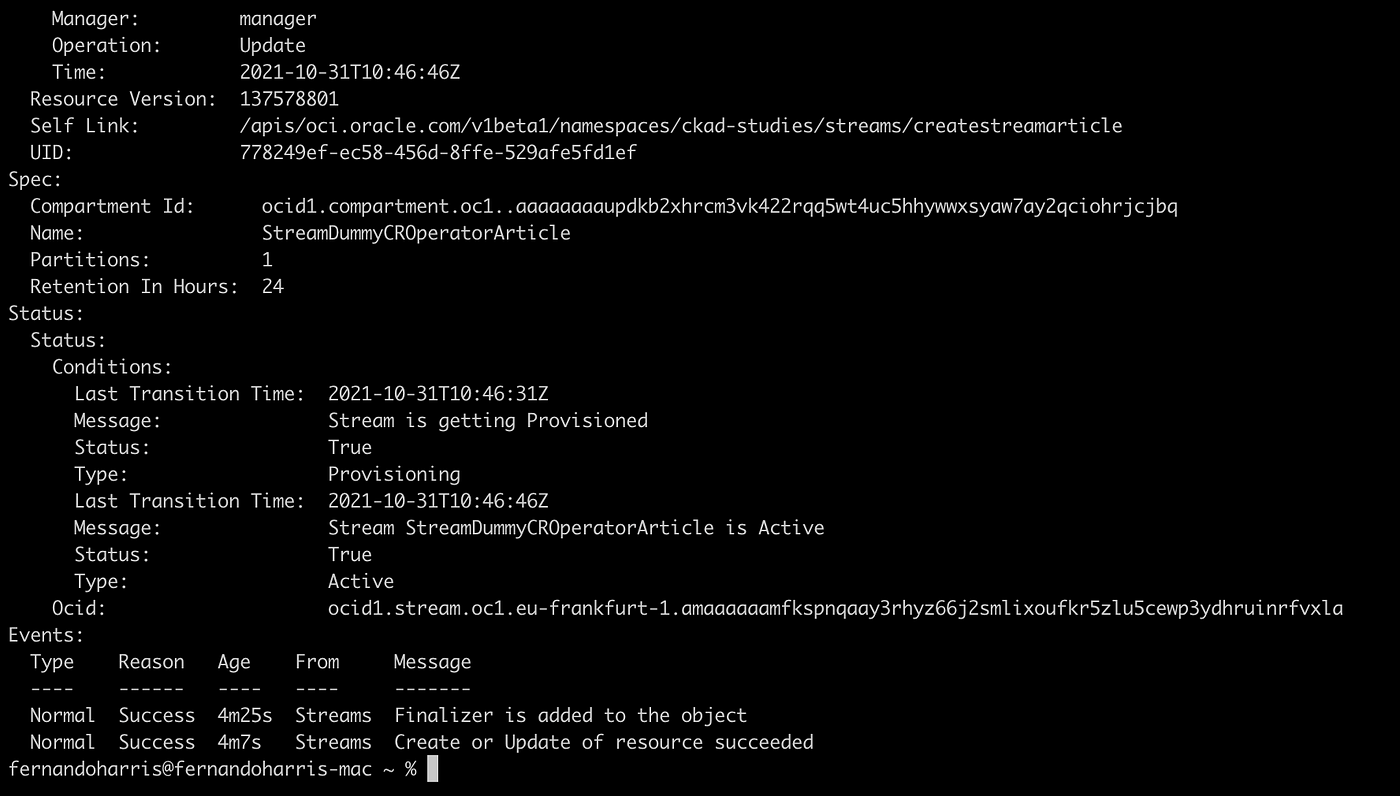

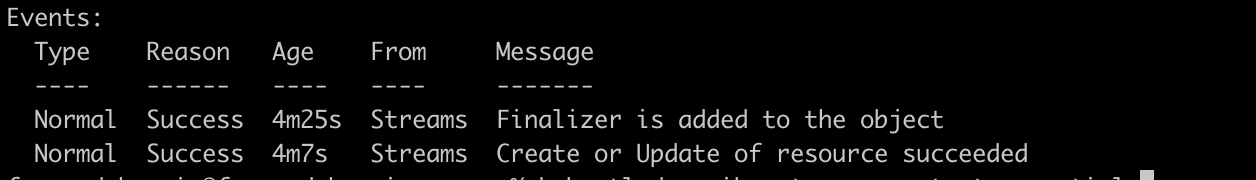

To get more details about this resource run:

$ kubectl describe stream createstreamarticle

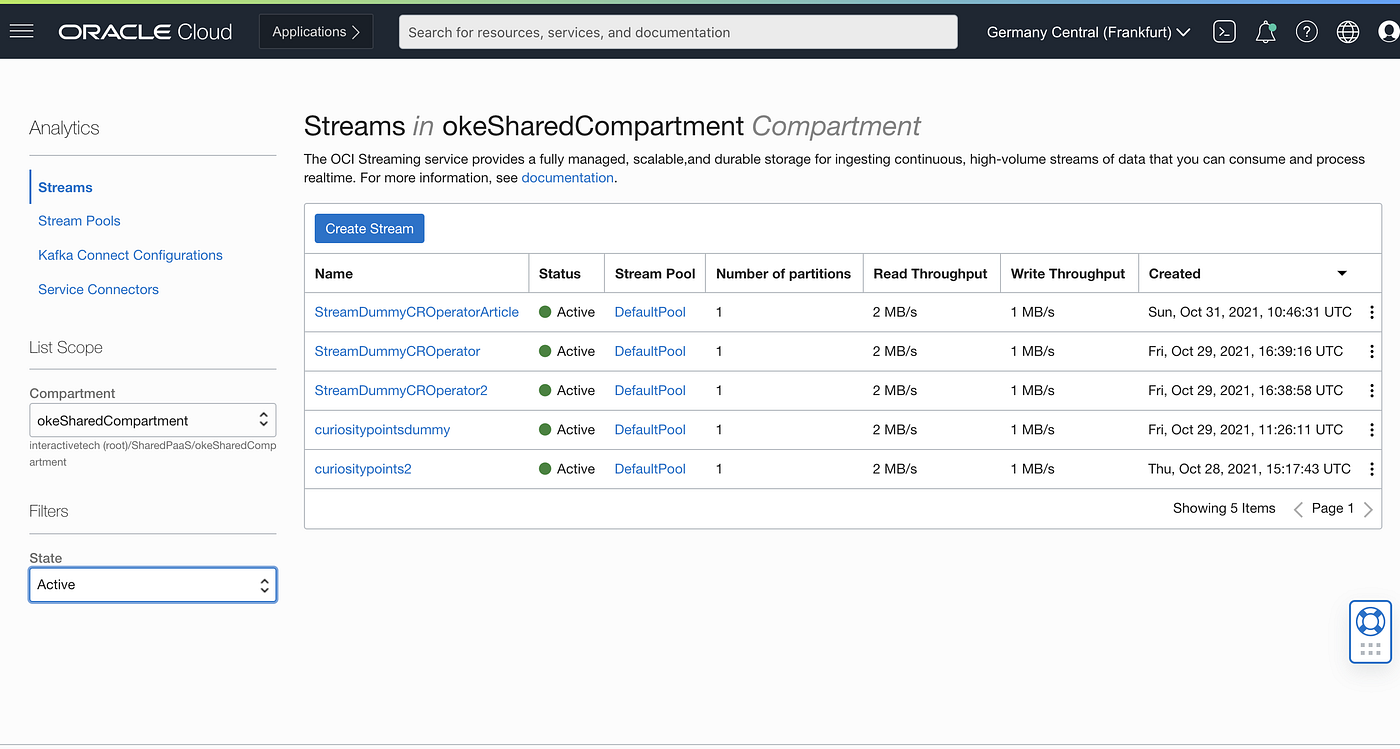

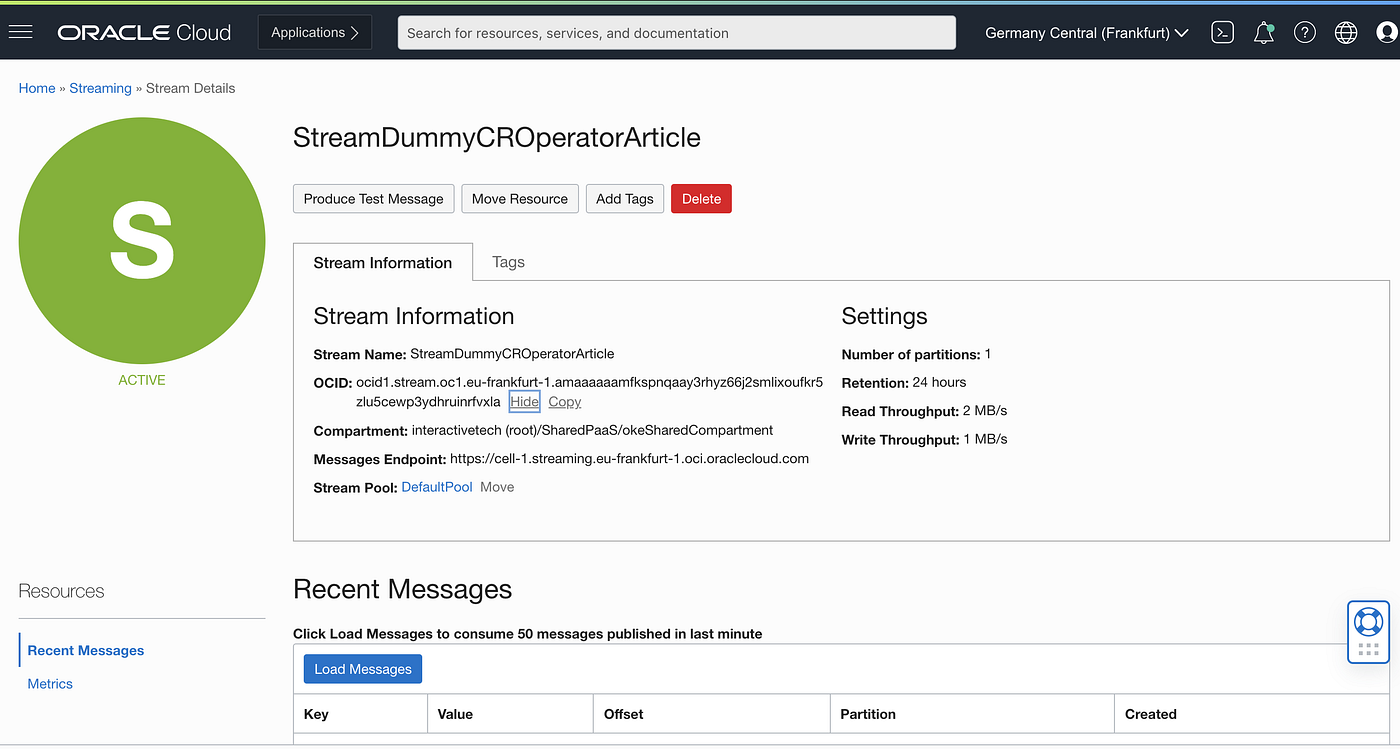

Let’s validate in the OCI Console:

As expected, a new stream called StreamDummyCROperatorArticle is now created.

Some final notes and recommendations from my experience:

Check your permissions, policies, groups and dynamic groups. If you get a 404 error, it’s highly probable that you did something wrong in one of these steps. The kubectl describe command is essential for troubleshooting this type of event.

When you run kubectl describe and the list of events doesn’t seem to be updating, it may be because OCI is not allowing new streams to be created; maybe your partition limit was hit. In my case, after deleting a stream with many partitions, I immediately started seeing the new streams being created. This won’t happen if you are only binding to an existing stream.

In the OCI Streaming link you’ll find more policies to create in OCI. The print screens I shared above, as a summary of the needed policies, already reflect that.