Image credit: ServeTheHome

Oracle’s Raspberry Pi Supercomputer, the largest Raspberry Pi cluster known to exist, was named one of the Top 10 Raspberry Pi Projects of 2019 by Tom’s Hardware.

Here is its story.

The birth of an idea

In 2018, a small group of enthusiastic presales people from Oracle Switzerland presented their 12-node Raspberry Pi cluster at the HackZurich hackathon. The cluster was stacked in a 3×4 node configuration, mimicking an Oracle Cloud data center with 3 availability domains. It ran a fully operational Kubernetes cluster and could demo node and availability domain failovers. The cluster turned out to be quite the people magnet, thanks to its flashy LEDs, its cute look, and the insatiable curiosity of “what is it doing?” The foundation for the Raspberry Pi Supercomputer had been laid.

Image credits: Oracle Switzerland

Going BIG

Coming back from the hackathon, we had this idea in our heads of a portable demo station that we could take to conferences to showcase what cloud technology is all about. Not only would we be able to showcase simple failover and keep-up-and-running scenarios, but with something like Kubernetes or Oracle’s Fn project on top, we could build many self-contained demos, put them into a catalog on the internet, and then show live at the conference whatever the attendees wanted to see, whenever they wanted to see it. The catalog would also allow us to add demos throughout the year from anywhere in the world, without needing physical access to the cluster. And, by going with such a self-contained software stack, any other hardware that uses the same stack, Raspberry Pi cluster or not, would be able to leverage the very same demos as well. It was clear that a lot of possibilities were opening up by doing this, but the next question was how big we should go.

At first, we were thinking about building a modular cluster of 128 nodes. Modular in the sense that we would create 8 individual units of 16-node clusters that we could then rack together to bigger clusters (8×16, 4×32, 2×64, 1×128). This would provide us with several benefits, such as having multiple clusters that can travel the world and be at different conferences at the same time. In case we needed more processing power, or a bigger event was happening where we wanted to show off a bigger cluster, we could just easily stack them up to the size we wanted. For marquee events, such as Oracle Code One, we would just put all of them together into one big cluster.
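The rack-up options mentioned above (8×16, 4×32, 2×64, 1×128) are simply the even ways of grouping eight 16-node units. As a minimal sketch in Python, and assuming nothing about the actual tooling (the function name `rack_configurations` is made up for illustration), they can be enumerated like this:

```python
UNIT_COUNT = 8   # modular units planned at first (from the text)
UNIT_SIZE = 16   # Raspberry Pi nodes per unit (from the text)


def rack_configurations(unit_count, unit_size):
    """Return (clusters, nodes_per_cluster) pairs that use all units evenly.

    Hypothetical helper for illustration only; not part of the project.
    """
    configs = []
    # Walk from many small clusters down to one big cluster.
    for clusters in range(unit_count, 0, -1):
        if unit_count % clusters == 0:
            units_per_cluster = unit_count // clusters
            configs.append((clusters, units_per_cluster * unit_size))
    return configs


configs = rack_configurations(UNIT_COUNT, UNIT_SIZE)
for clusters, nodes in configs:
    print(f"{clusters} x {nodes}")
```

Every configuration uses all 128 nodes; only the number of independent clusters changes.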

As said, at first we were thinking about 128 nodes, but that obviously changed. Along the way, we asked ourselves who else had done something like this before and, more importantly, what the biggest cluster out there was. So we took a step back, did some research, and soon found out that 128 nodes were nothing special anymore. There was, for example, The Beast v2, a 144-node Raspberry Pi cluster that was designed as a demo rig for resin.io, now balena.io. We also found The Bolzano Raspberry Pi Cloud Cluster Experiment, a 300-node Raspberry Pi cluster. The largest Raspberry Pi cluster that we could find was built by the Los Alamos National Laboratory’s High-Performance Computing Division: a whopping 750 nodes. With that, we had a clear goal: whatever we did, it had to go beyond 750 nodes. And with us being geeks, we knew that the next logical bigger number was 1,024, and so we set off to build a 1024-node Raspberry Pi cluster.

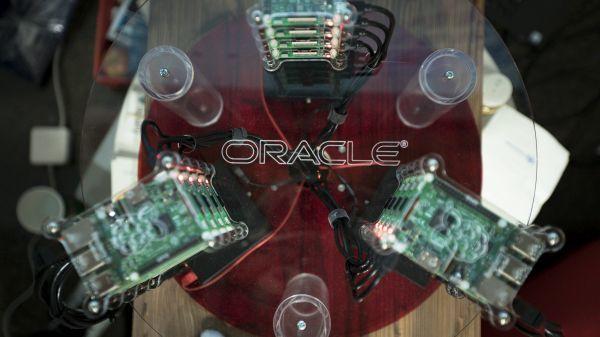

The construction of the Supercomputer

As we started the construction of a 1024-node, modular cluster, our chief engineer Chris Bensen soon realized that our plans did not necessarily work for the deadline we had in mind: Oracle Code One 2019. Building a modular cluster added significant additional work and time to the project, potentially too much to complete in time for Code One 2019. Given that we didn’t need the modular aspect of the cluster for the conference as we wanted to have one big cluster for Code One anyway, we put the idea on pause and decided to go for one single Blue Box. Inside you would find:

- 5 x 2-meter-high racks

- 1 Supermicro 1U Xeon server

- 25 USB power supplies

- 26 network switches

- 1,050 custom 3D-printed Raspberry Pi holders

- 1,050 Raspberry Pis

Wait, 1,050 Raspberry Pis? Yes! As it turned out, 1,024 Raspberry Pis were a great idea, but while putting the rack together we found that we still had space inside it. So what do you do? Waste the space or fill up the rack with additional Raspberry Pis? Well, we decided on the latter, and so our 1024-node Raspberry Pi cluster became a 1050-node Raspberry Pi cluster.

Next, we started looking at the components needed. 1,050 Raspberry Pis do add up quite a bit. Any operation that you have to perform, whether it’s plugging in a network cable or putting in a screw, you will have to do at least 1,050 times. Add 1,050 SD cards to the mix and you will spend quite some quality time flashing these cards, not to mention plugging them into each Raspberry Pi and potentially installing additional software onto them. So instead of buying 1,050 SD cards, we decided to network boot all of the nodes from one central server (the Supermicro 1U Xeon server). Network booting allowed us to install and configure the software only once, and if any changes were needed, they would have to be made only once as well. Being Oracle, it was natural for us to boot Oracle Linux for ARM instead of the default Raspbian.

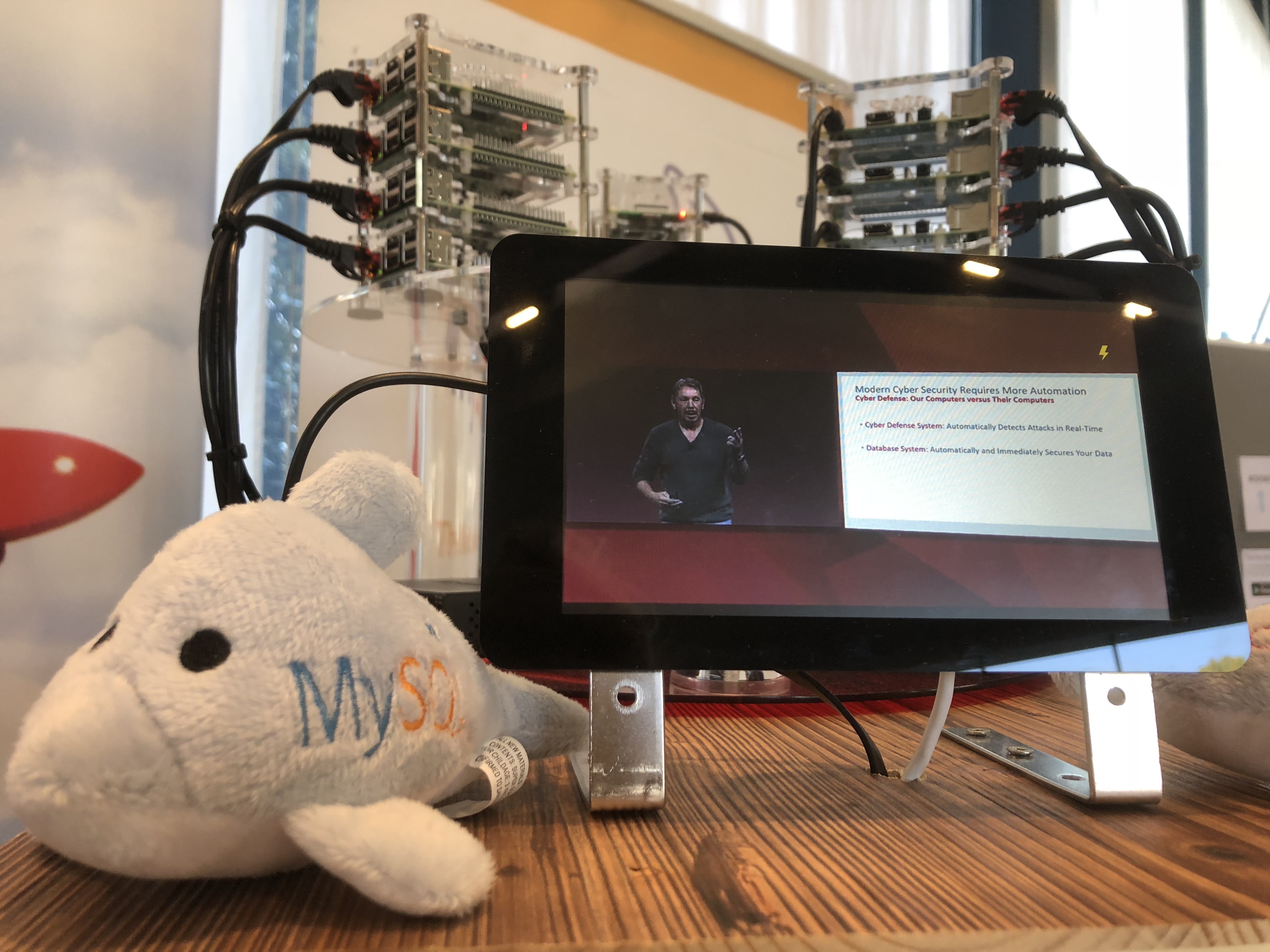

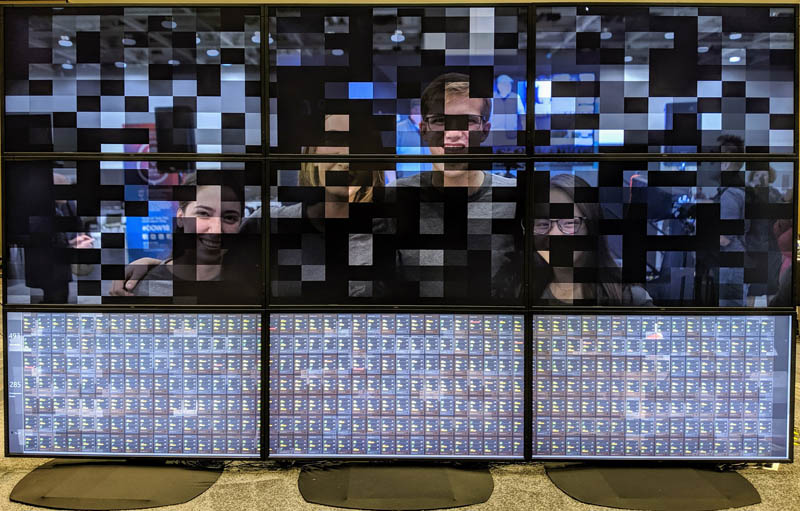

At Oracle Code One 2019

We successfully managed to build and showcase the cluster at Oracle Code One 2019. We decided on an engaging demo built with Java that showed not only the cluster utilization on a big screen but also an obfuscated picture of the conference. Attendees could send messages to the cluster to free up that image. Once a message was received, a random node would go ahead and free up a part of the picture in real time. The challenge was to send as many messages as fast as possible to get the entire picture cleared up. This way attendees could not only get excited about the hardware engineering at work here but also interact with the cluster in a fun way. The demo also encouraged attendees to use a crowd-sourcing approach and to work together, which indirectly helped them to network with their fellow attendees. Once the picture was all clear, we would put up a new one and start all over again. It certainly got people’s attention, not only from the attendees at Code One but also on social media and from folks like ServeTheHome and Tom’s Hardware.
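To make the mechanics of the demo concrete, here is a minimal sketch of the idea in Python. It is not the actual Java demo: the class `ObscuredPicture`, the tile model, and the rule that each message clears exactly one still-hidden tile are all assumptions made for illustration.

```python
import random


class ObscuredPicture:
    """Hypothetical model: a picture split into tiles, all hidden at first."""

    def __init__(self, tile_count, node_count, seed=None):
        self.hidden = set(range(tile_count))  # tiles still obfuscated
        self.node_count = node_count          # nodes in the cluster
        self.rng = random.Random(seed)

    def handle_message(self):
        """Process one attendee message.

        A randomly chosen node reveals one randomly chosen hidden tile
        (assumed behavior). Returns (node, tile), or None if the picture
        is already fully cleared.
        """
        if not self.hidden:
            return None
        node = self.rng.randrange(self.node_count)
        tile = self.rng.choice(sorted(self.hidden))
        self.hidden.remove(tile)
        return node, tile

    @property
    def cleared(self):
        return not self.hidden


# Simulate attendees sending messages until the picture is clear.
picture = ObscuredPicture(tile_count=100, node_count=1050, seed=1)
messages = 0
while not picture.cleared:
    picture.handle_message()
    messages += 1
print(f"Picture cleared after {messages} messages")
```

Under these assumptions the number of messages needed equals the number of tiles, so a finer tiling makes the crowd work longer before a new picture goes up.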

We thank you all!

Image credit: ServeTheHome

Beyond Oracle Code One 2019

After Oracle Code One 2019 was over, we went back to the list of ideas. There were still many outstanding items that we had thought about but hadn’t gotten around to yet. But which one to tackle first? Well, having one big cluster was cool, but we really, really liked the idea of having smaller clusters that could travel the world at the same time. So, for the next goal, we decided to go for mini clusters. And what’s the best way of getting anything done? By setting a deadline, which for this project was OpenWorld London 2020, which took place just two weeks ago.

And behold, we give you the 84-node Raspberry Pi mini-cluster:

This time we decided to help the Search for Extraterrestrial Intelligence (SETI) with our cluster via the SETI@home project. Once again, our cluster was a big success and got attention from around the world, despite being smaller than its big brother.

The road ahead

So what lies ahead? There are still many ideas on the board that will keep us busy for the foreseeable future. One of our goals is still to make these Raspberry Pi clusters fully cloud-native and run technologies such as Kubernetes and Fn Project on them. We also still like the idea of having a demo catalog that can be used by any cluster anywhere in the world, at whichever event it is currently present. And, of course, we haven’t given up on the idea of making these clusters modular either. But in the meantime, we also got some new ideas. One of them is to have the (big) cluster become the heart of an IoT-enabled Oracle Code One experience and let it capture and process many different sensor readings from the event in real time with the help of Oracle Cloud.

Which one of these ideas we will tackle next has yet to be decided. But one thing is already clear: the road still lies ahead, and we are looking forward to it…