Welcome back to the Maven series. Now that we have covered the basics, we’re ready to continue the journey. As it happens, dependency resolution is one of Apache Maven’s greatest assets, but it is also a source of frustration when things do not go as expected. We’ll circle back to dependency resolution a couple of times as this series progresses; today we’ll do so by looking at optional dependencies.

The Apache Maven site has a page on Optional Dependencies and Dependency Exclusions. This resource explains the reasons for adding <optional> to a dependency declaration in a producer project (say, a library or framework) and the impact it has on a consumer project. Depending on which side you look from (producer or consumer), the use of this tag will have an effect that may not be the one you want or expect. Let’s take a closer look at an example.

Let’s assume you are the author of a library (let’s call it producer) that requires a couple of dependencies at compile time to create its own artifact. It also uses a handful of dependencies that enable additional behavior when they are present in a consumer project; however, it still needs some classes from these dependencies to compile. Thus you must declare all of these dependencies in the compile scope. But wait a second, we just said some of those dependencies are not needed all the time; they are “optional”, depending on the use cases of the consuming projects. If we leave those “optional” dependencies as they are, they will always be pulled in and added to the consumer’s classpath when the consumer resolves its dependencies. What do we do now? As the guide mentioned earlier explains, one alternative (the preferred one) is to split the producer project into different modules, placing the additional dependencies in separate modules where they are declared as regular, required dependencies. If you cannot break the producer project into submodules, then the alternative is to apply <optional> to the dependencies that enable additional behavior, as shown in the following POM file:

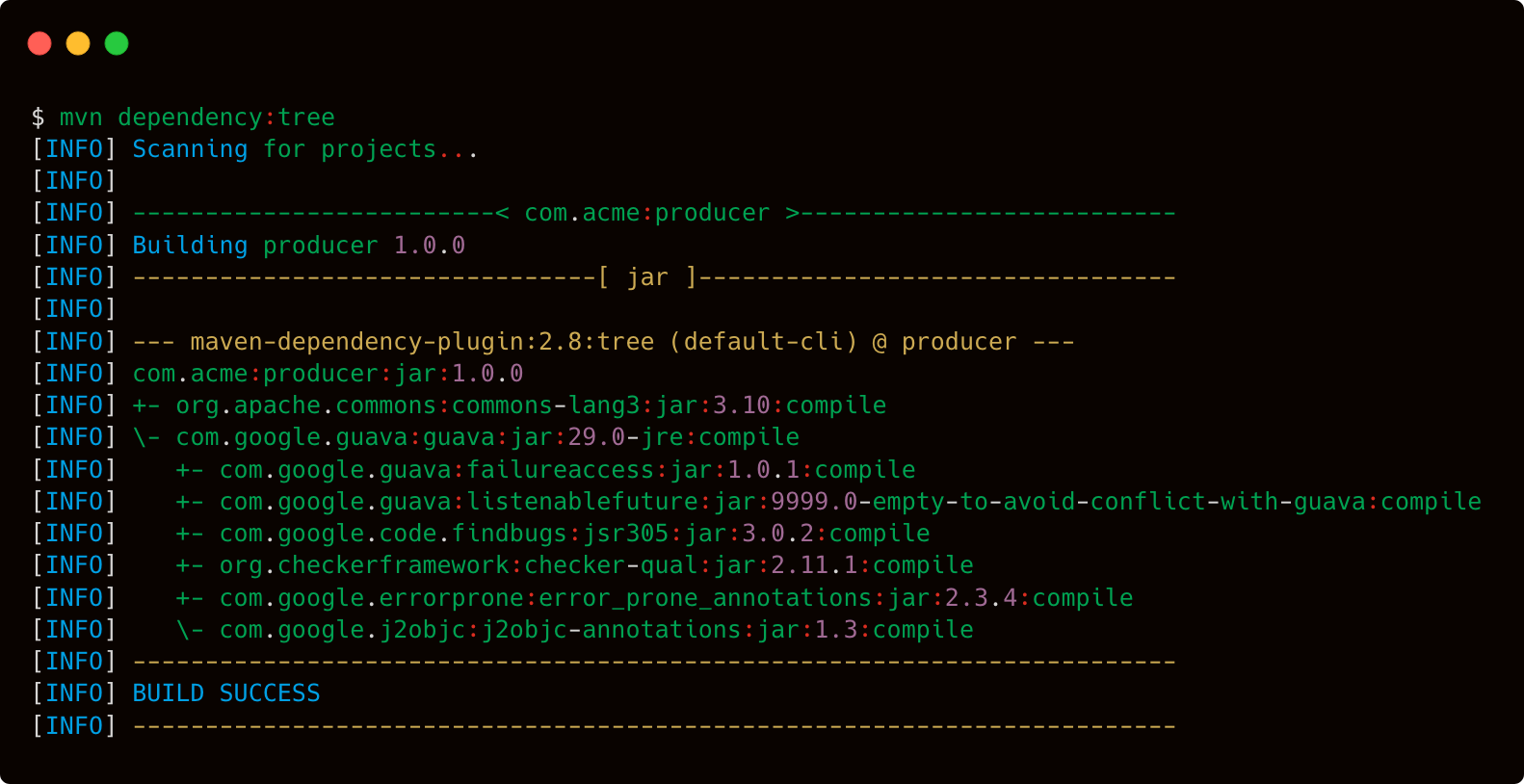
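In case the screenshot is hard to read, here is a sketch of the dependencies section it describes. The coordinates for commons-lang3 and guava are the real ones, but the version numbers are illustrative picks of ours and may not match the screenshot; the rest of the POM is omitted.

```xml
<!-- Sketch of producer's dependencies section (fragment of pom.xml).
     Versions are illustrative placeholders, not necessarily the ones
     used in the screenshot. -->
<dependencies>
  <!-- Required: compile scope (the default), passed on to consumers -->
  <dependency>
    <groupId>org.apache.commons</groupId>
    <artifactId>commons-lang3</artifactId>
    <version>3.10</version>
  </dependency>
  <!-- Still compile scope, so producer can build against it, but marked
       optional: it is not added to the dependency graph of projects
       that depend on producer -->
  <dependency>
    <groupId>com.google.guava</groupId>
    <artifactId>guava</artifactId>
    <version>29.0-jre</version>
    <optional>true</optional>
  </dependency>
</dependencies>
```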

The previous POM declares that it needs two dependencies to build its own artifact: commons-lang3 and guava. However, only commons-lang3 is a strict dependency, while guava is an additional one; thus it’s marked with <optional>. If we were to resolve the dependency graph for the producer project, both commons-lang3 and guava would show up, because an optional dependency is still resolved for the project that declares it.

So far, so good. Let’s turn to a consumer project, aptly named consumer, whose POM looks like the following one:

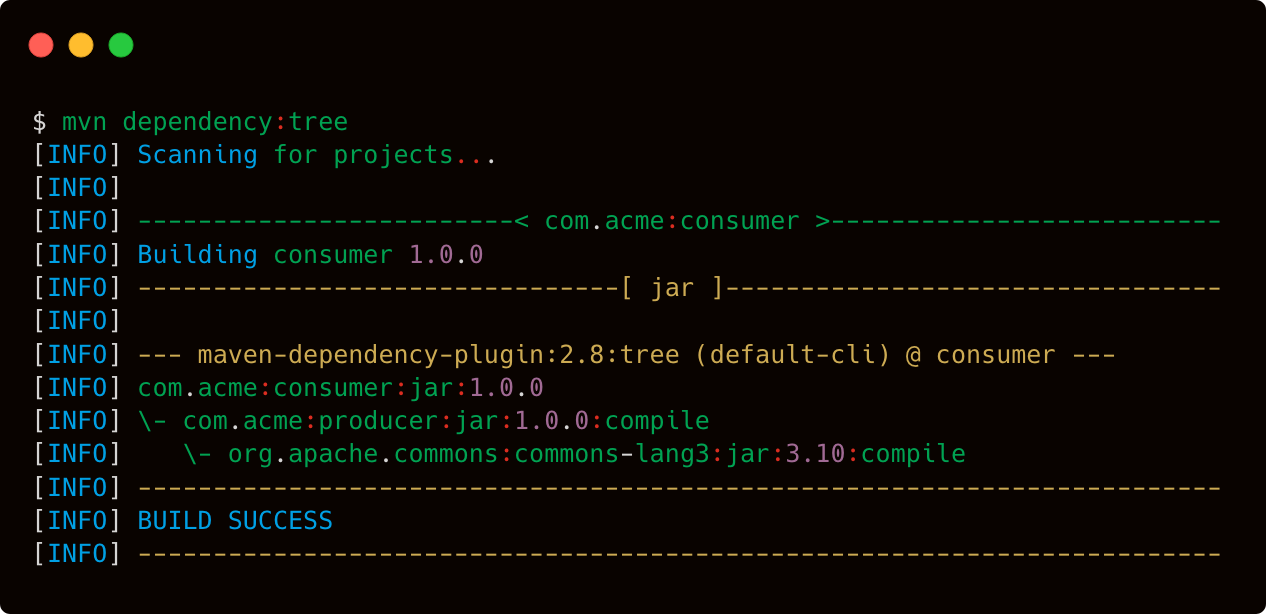

Note that we only define a dependency on producer. We expect this dependency to bring in every other dependency it requires as part of its transitive closure; if we did things right, resolving the dependency graph for consumer should list producer and, transitively, commons-lang3.

As you can appreciate, guava is nowhere to be found in consumer’s dependency graph: it works! The use case we just fulfilled has producer declare the minimum set of dependencies it requires to perform its main duties, and consumer is happy with that; it’s not even bothered by additional dependencies that aren’t needed for the main use case. Great. Let’s turn this up a notch, shall we?

Suppose that consumer now has to cover the use case where guava is on its classpath, thus activating additional behavior provided by producer. How would it be done? Well, consumer would have to add an explicit dependency on guava to its POM. But how would the author of consumer know this? If the only artifact they have to look at is producer’s POM, then they can get a hint, as guava is marked as <optional>. The better solution, of course, would be to have proper documentation in place that lets them discover the additional use cases, the requirements to make those use cases work, and any additional configuration that might be needed. In the end, being able to see the full POM for producer doesn’t give developers much information on the additional use cases; they will have to turn to docs, blogs, screencasts, and the like. That is, the POM as it stands is not enough. It’s by sheer coincidence that consumers can see the hint of additional dependencies at all. In all fairness, those additional dependencies should probably have been added to the provided scope instead and left unmarked with <optional>. I say sheer coincidence because, as of Maven 3.6.3, the published POM (the one read by consumers) for an artifact is identical to the POM used to build said artifact. This is likely to change in a future version of Maven, as the development team has hinted at a feature known as “consumer POM”, where only the minimum instructions required by consumers will be published.
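For reference, here is a sketch of what opting in could look like on consumer’s side. Here com.example is a hypothetical groupId for producer, and the version numbers are illustrative placeholders; the only point is that guava now appears as a direct, non-optional dependency of consumer.

```xml
<!-- Sketch of consumer's dependencies after opting in to producer's
     additional behavior (fragment of pom.xml).
     com.example is a hypothetical groupId; versions are placeholders. -->
<dependencies>
  <dependency>
    <groupId>com.example</groupId>
    <artifactId>producer</artifactId>
    <version>1.0.0</version>
  </dependency>
  <!-- Declared explicitly: producer's optional guava dependency is not
       inherited transitively, so consumer has to add it itself -->
  <dependency>
    <groupId>com.google.guava</groupId>
    <artifactId>guava</artifactId>
    <version>29.0-jre</version>
  </dependency>
</dependencies>
```

Whichever guava version consumer picks should be one producer is known to work with, which is exactly the kind of detail the POM alone doesn’t convey. The same explicit declaration would also be needed under the provided-scope alternative, since provided dependencies are not transitive either.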

Now, given the small set of dependencies we’ve seen in this trivial example, one may be inclined to think “using <optional> is not so bad; look, the POM is self-documenting!” Well, yes, kind of. What happens, then, when more dependencies are added, and a good number of them happen to fall into the “optional” set? Does the use of <optional> scale? What if some dependencies must be moved to the runtime scope instead? I suppose that as long as the premise stated in the guide holds true (the project can’t be broken into submodules), there’s no other option, or is there? One possibility would be to make use of BOM files (which we’ll discuss later); however, be warned in advance that this option does not do away with proper documentation and, more importantly, sample dependency configuration.
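We’ll look at BOMs properly later, but here is a rough sketch of the idea. It assumes producer’s authors publish a BOM (hypothetically named producer-bom, under the placeholder groupId com.example) that pins compatible versions of producer and its optional dependencies; consumer imports it and can then declare guava without a version. The BOM only settles versions: consumer still has to know that it should add guava at all.

```xml
<!-- Hypothetical sketch (fragment of consumer's pom.xml): assumes a BOM
     named producer-bom exists; com.example is a placeholder groupId. -->
<dependencyManagement>
  <dependencies>
    <!-- Importing a BOM only manages versions; it adds no dependencies -->
    <dependency>
      <groupId>com.example</groupId>
      <artifactId>producer-bom</artifactId>
      <version>1.0.0</version>
      <type>pom</type>
      <scope>import</scope>
    </dependency>
  </dependencies>
</dependencyManagement>

<dependencies>
  <!-- Version supplied by the imported BOM -->
  <dependency>
    <groupId>com.example</groupId>
    <artifactId>producer</artifactId>
  </dependency>
  <!-- Still an explicit opt-in; only the version comes from the BOM -->
  <dependency>
    <groupId>com.google.guava</groupId>
    <artifactId>guava</artifactId>
  </dependency>
</dependencies>
```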

The bottom line is that, as library or framework authors, we make choices that impact our consumers based on how we build our own artifacts. Some of these choices may be strict, others laxer. The use of <optional> has faded since the days of Maven 2.x (waves to 2005!), but there may be times when there’s no other recourse.

Credits:

Photo by Jiří