Oracle introduced Autonomous Data warehouse over a year ago, and one of the most common questions that customers ask me is how they can move their data/schema’s to ADW (Autonomous Data Warehouse) with minimal efforts. My answer to that is to use datapump, also known as expdp/impdp. ADW doesn’t support traditional import and export, so you have to use datapump. Oracle suggests using schema and parallel as a parameter while using datapump. Use the parallel depending upon the number of OCPU that you have for your ADW instance. Oracle also suggests to exclude index, cluster, indextype, materialized_view, materialized_view_log, materialized_zonemap, db_link. This is done in order to save space and speed up the data load process. At present you can only use data_pump_dir as an option for directory. This is the default directory created in ADW. Also you don’t have to worry about the performance of the database since ADW uses technologies like storage indexes, Machine learning, etc to achieve the optimal performance. You can use the file stored on Oracle Object Storage, Amazon S3 storage and Azure Blob Storage as your dumpfile location. I will be using Oracle Object storage in this article.

We will be using the steps below to load data:

1) Export the schema of your current database using expdp

2) Upload the .dmp file to object storage

3) Create Authentication Token

4) Login into ADW using SQLPLUS

5) Create credentials in Autonomous Data Warehouse to connect to Object Storage

6) Run import using Datapump

7) Verify the import

Step 1 : Export the schema of your current database using expdp

Use the expdp on your current database to run export. Copy that dump file put it in a location from where you can upload it to object storage.

Step 2 : Upload the .dmp file to object storage.

In order to upload the .dmp file on object storage log in into your cloud console and click object storage:

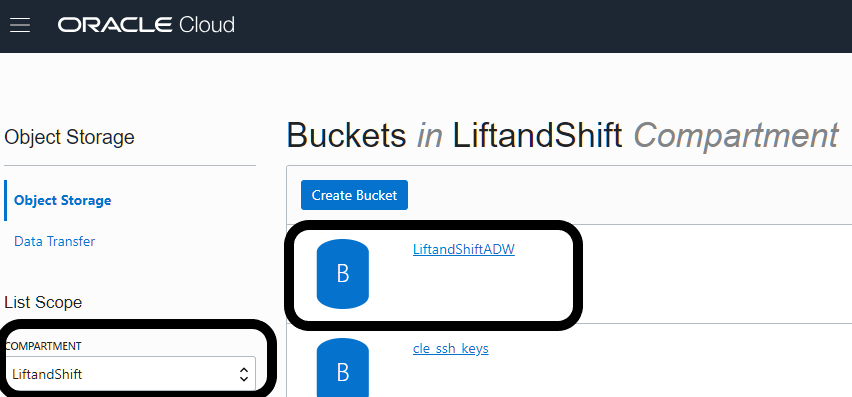

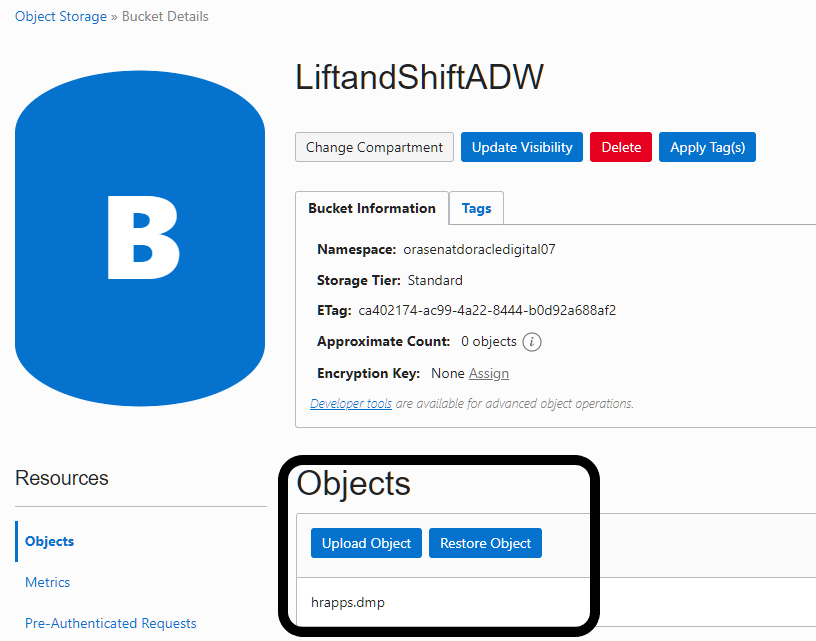

Once in object storage, select the compartment that you want to use and create a bucket. I am going to use compartment “Liftandshift” and create bucket “LiftandShiftADW”.

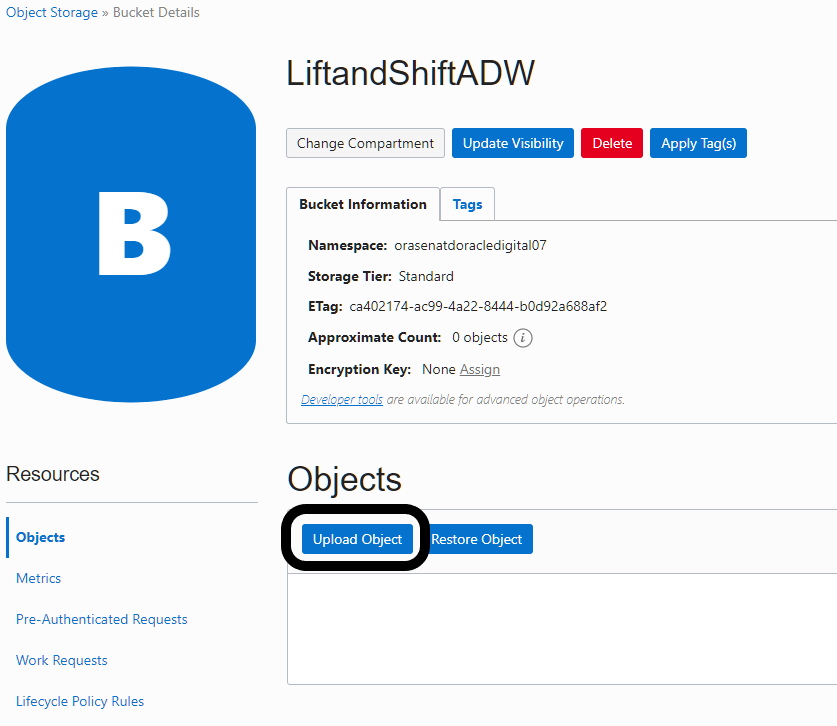

Next click on the bucket and click upload to upload the .dmp file.

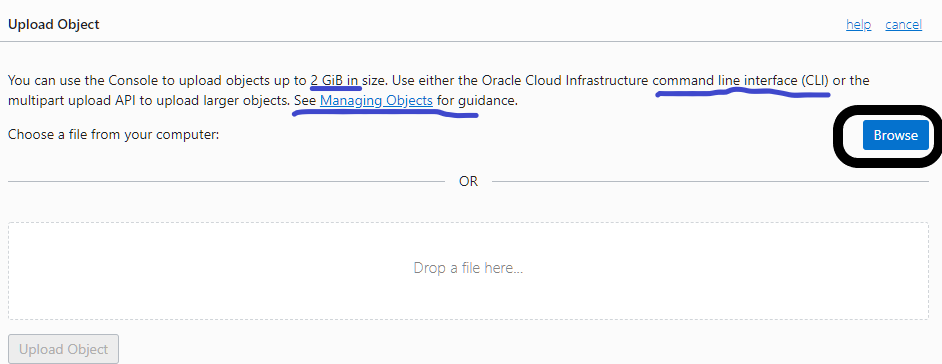

At this point either you can use CLI (Command line Interface) or GUI (Graphic User interface) to upload the .dmp file. If your .dmp file is larger that 2Gib then you have to use CLI. I am going to use GUI since i have a small schema for the demonstration purpose.

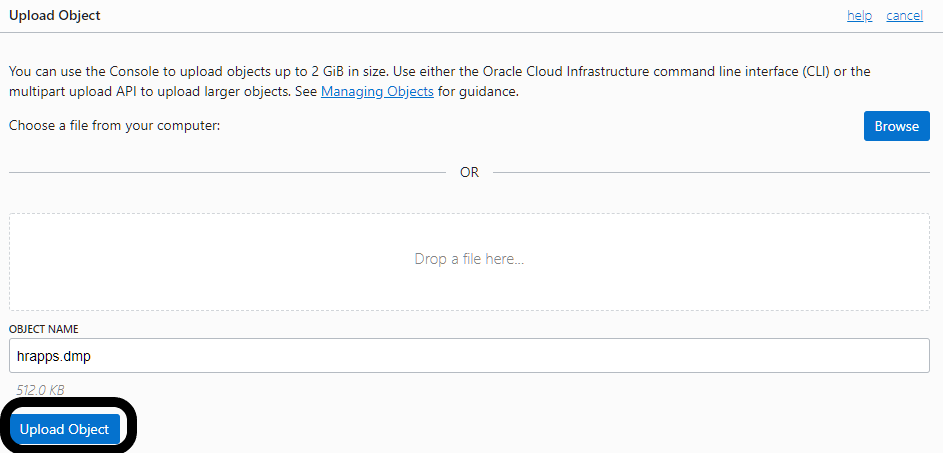

Select the .dmp file that you want to upload to object storage and then click upload object.

Once you’re done, your .dmp file will show up under objects in your Bucket Details Section

Step 3 : Create Authentication Token

Authentication Token will help us access Object Storage from Autonomous DB.

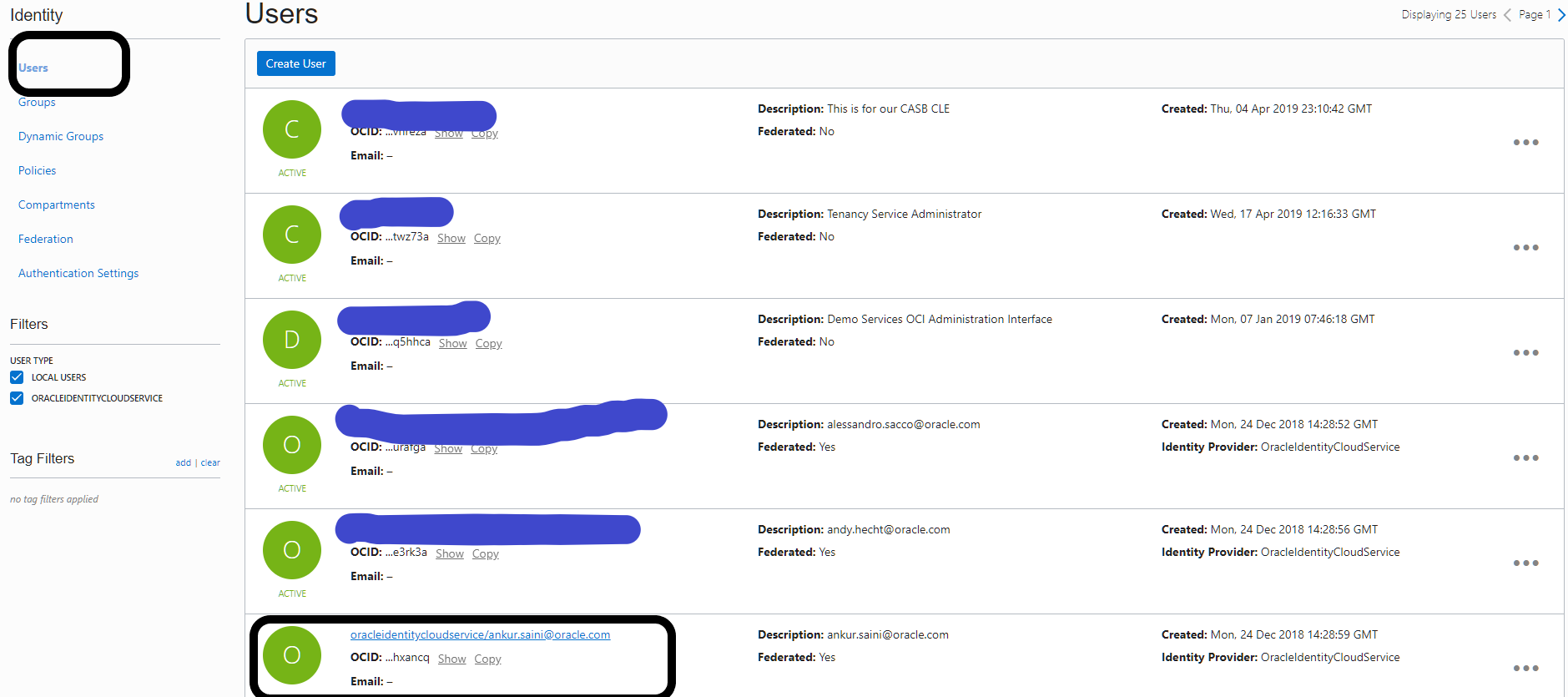

Under Governance and Administration Section, Click on Identity tab and go to users

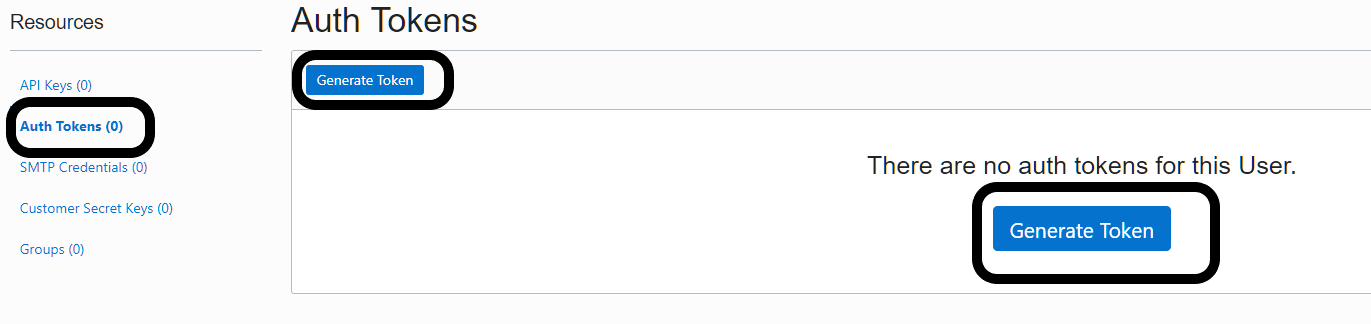

Click on authorized user id and then click on Auth Token under resources on the left side to generate the Auth token.

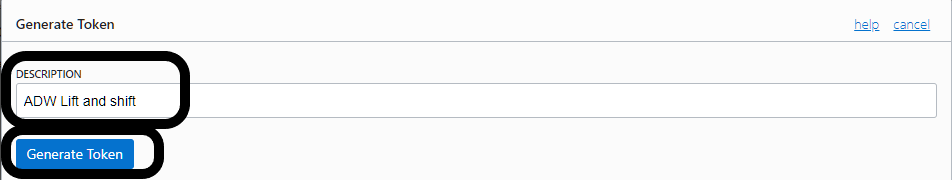

Click Generate Token, give it a description, and then click Generate token again and it will create the token for you. Remember to save the token. Once the token is created and saved, you won’t be able to retrieve it again.

You can click on the copy button and copy the token to a notepad. Remember to save the token because you will not be able to see the token again. Once done, you can hit the close button on the screen.

Step 4 : Login into ADW using SQLPLUS

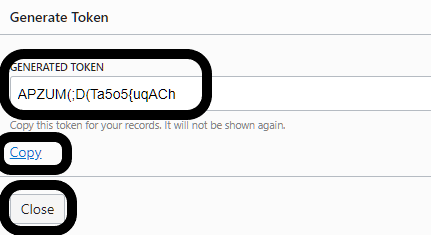

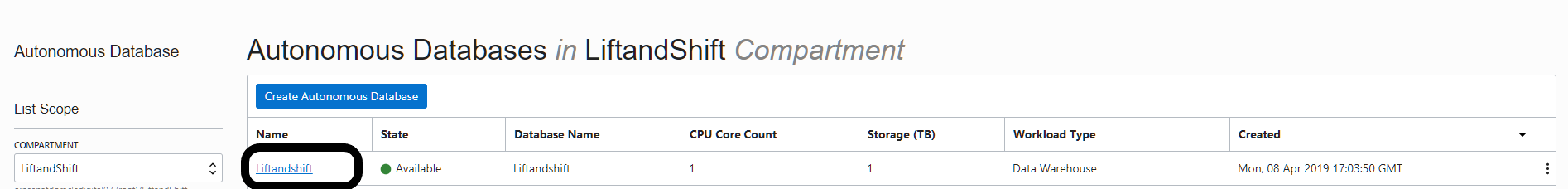

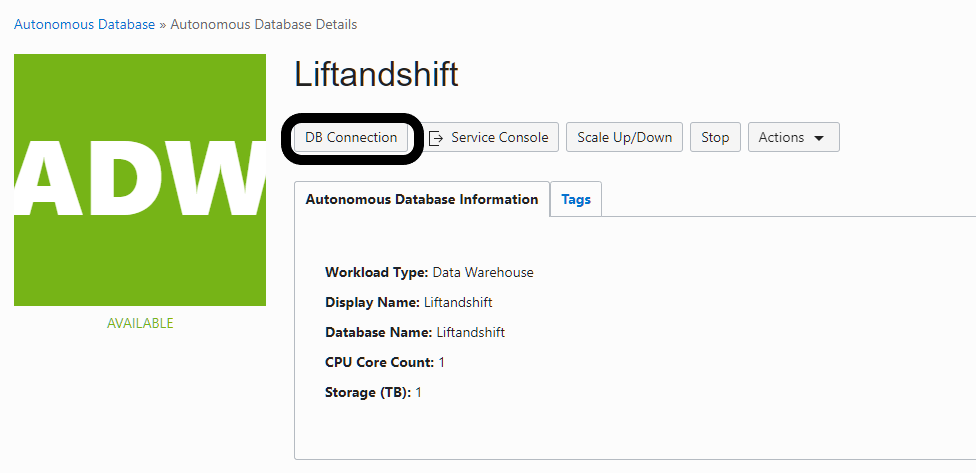

Go to ADW homepage and click on the ADW database you have created.

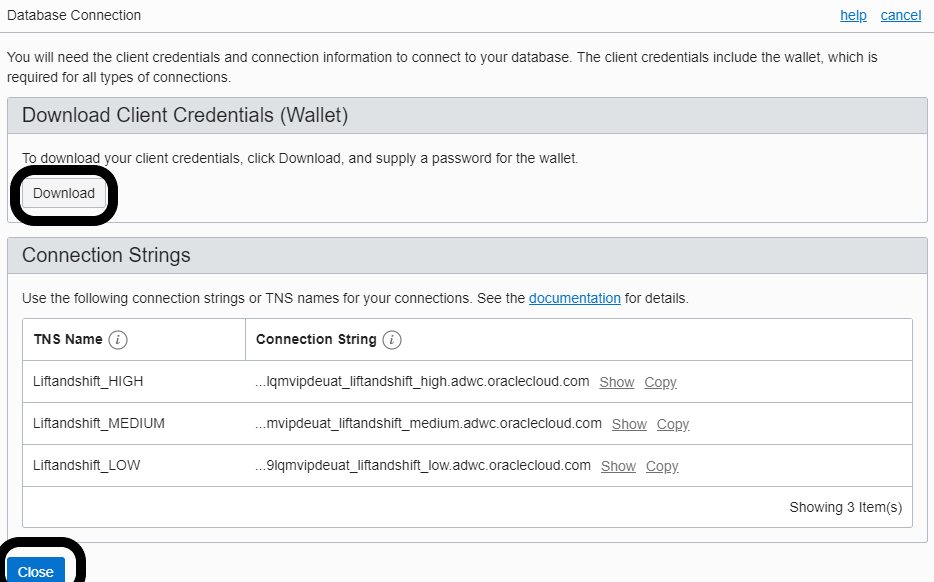

Once in the database page click on DB Connection.

Click on the Download button to download the wallet. Once the zip file is downloaded, hit the close button.

Download the latest version of instant-client from Oracle website : https://www.oracle.com/technetwork/database/database-technologies/instant-client/downloads/index.html

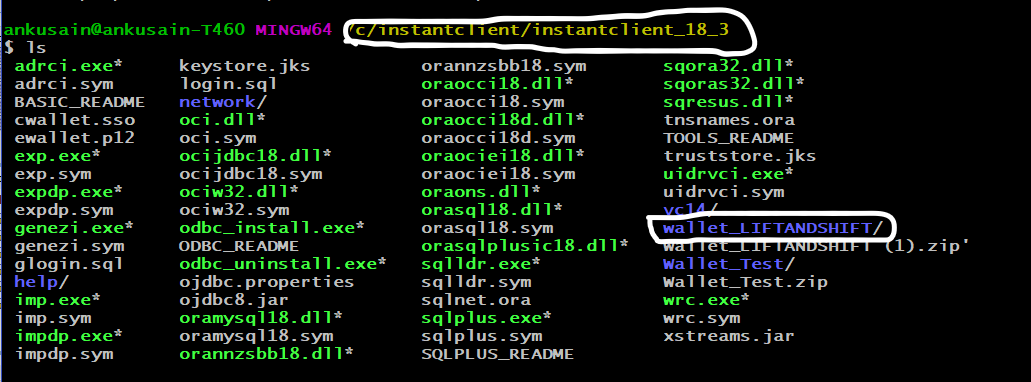

Unzip all the files in one location. I used the location “C:\instantclient\instantclient_18_3” on my system. Once unzipped you will be able to use sqlplus.exe and impdp.exe at that location. Also move the compressed wallet file to that location and unzip the file.

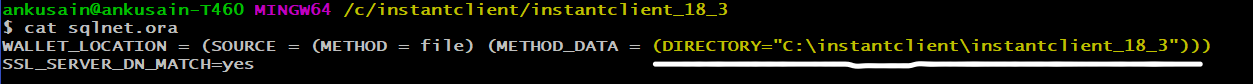

Next update the entries in the sqlnet.ora file and point it to the location of your wallet. I have changed mine to “C:\instantclient\instantclient_18_3” as shown below.

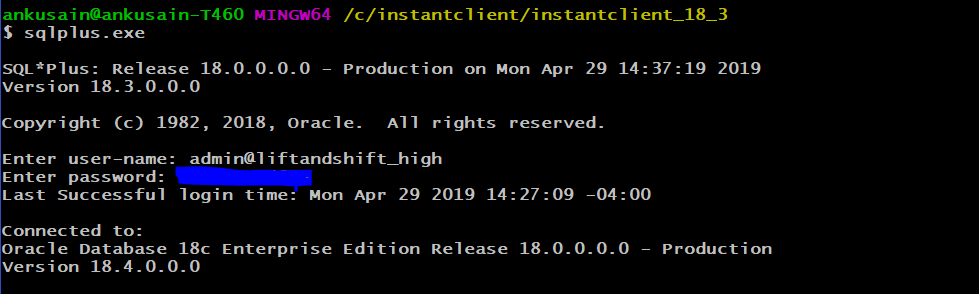

Test the connectivity using sqlplus.exe and make sure you are able to connect using the user-id admin.

Step 5: Create credentials in Autonomous Data Warehouse to connect to Object Storage

Use the below script to create credentials in ADW, and use the Authentication token created earlier as the password.

BEGIN

DBMS_CLOUD.CREATE_CREDENTIAL(

credential_name => ‘DEF_CRED_NAME’,

username => ‘oracleidentitycloudservice/ankur.saini@oracle.com’,

password => ‘<password>’ <———— (Use Authentication token Value here instead of the password)

);

END;

/

Step 6 : Run import using Datapump

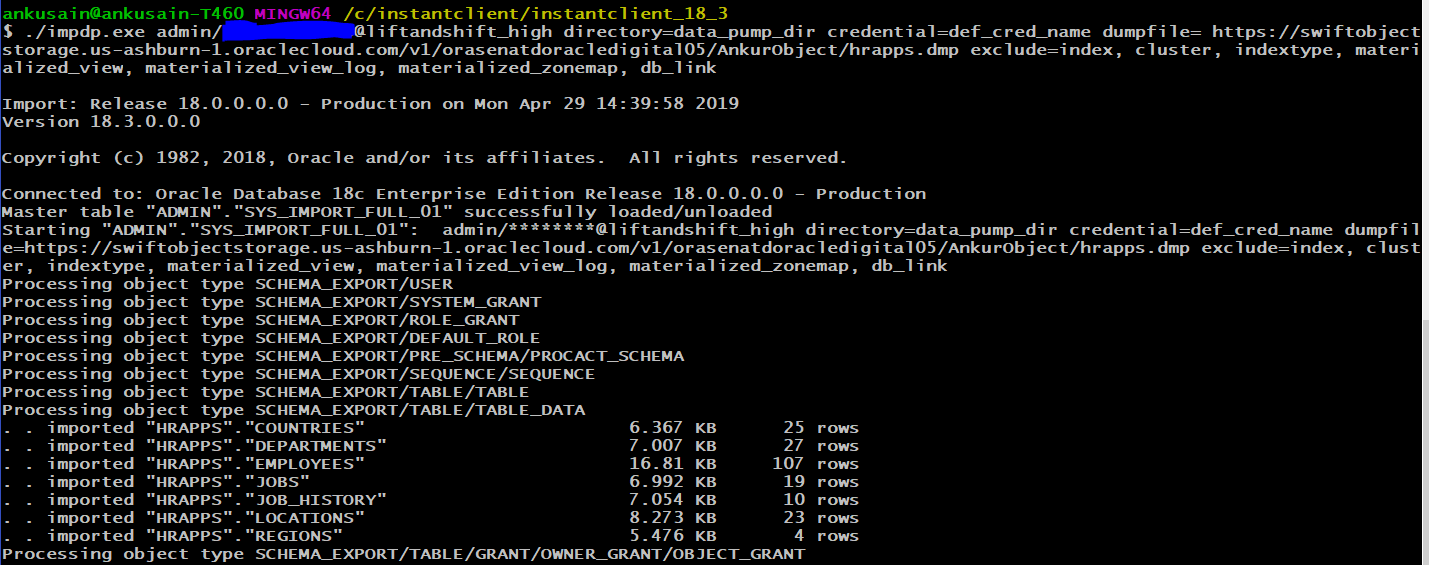

Since my ADW instance is built using 1 OCPU, I won’t be using parallel as an option. I used the script below to run the import:

./impdp.exe admin/<Password>@liftandshift_high directory=data_pump_dir credential=def_cred_name dumpfile= https://swiftobjectstorage.us-ashburn-1.oraclecloud.com/v1/orasenatdoracledigital05/AnkurObject/hrapps.dmp exclude=index, cluster, indextype, materialized_view, materialized_view_log, materialized_zonemap, db_link

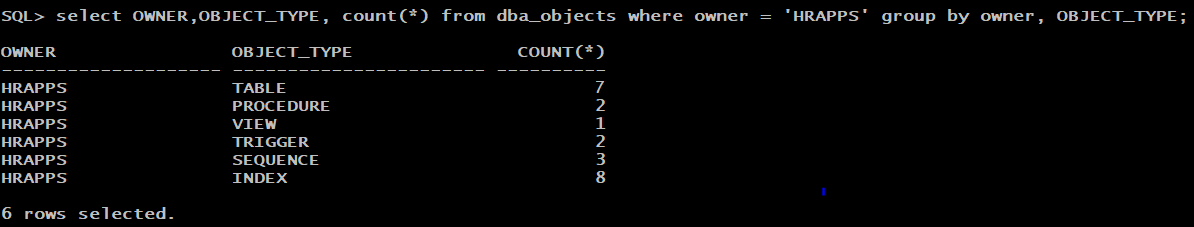

Step 7: Verify the import

Login into the database using sqlplus or sqldeveloper and verify the import.

You can see how easy it is to move the data to ADW, and that there is not a huge learning curve. Now you can be more productive and focus on your business.

Reference: