Oracle Database 19c Running @Memory Speed

Introduction

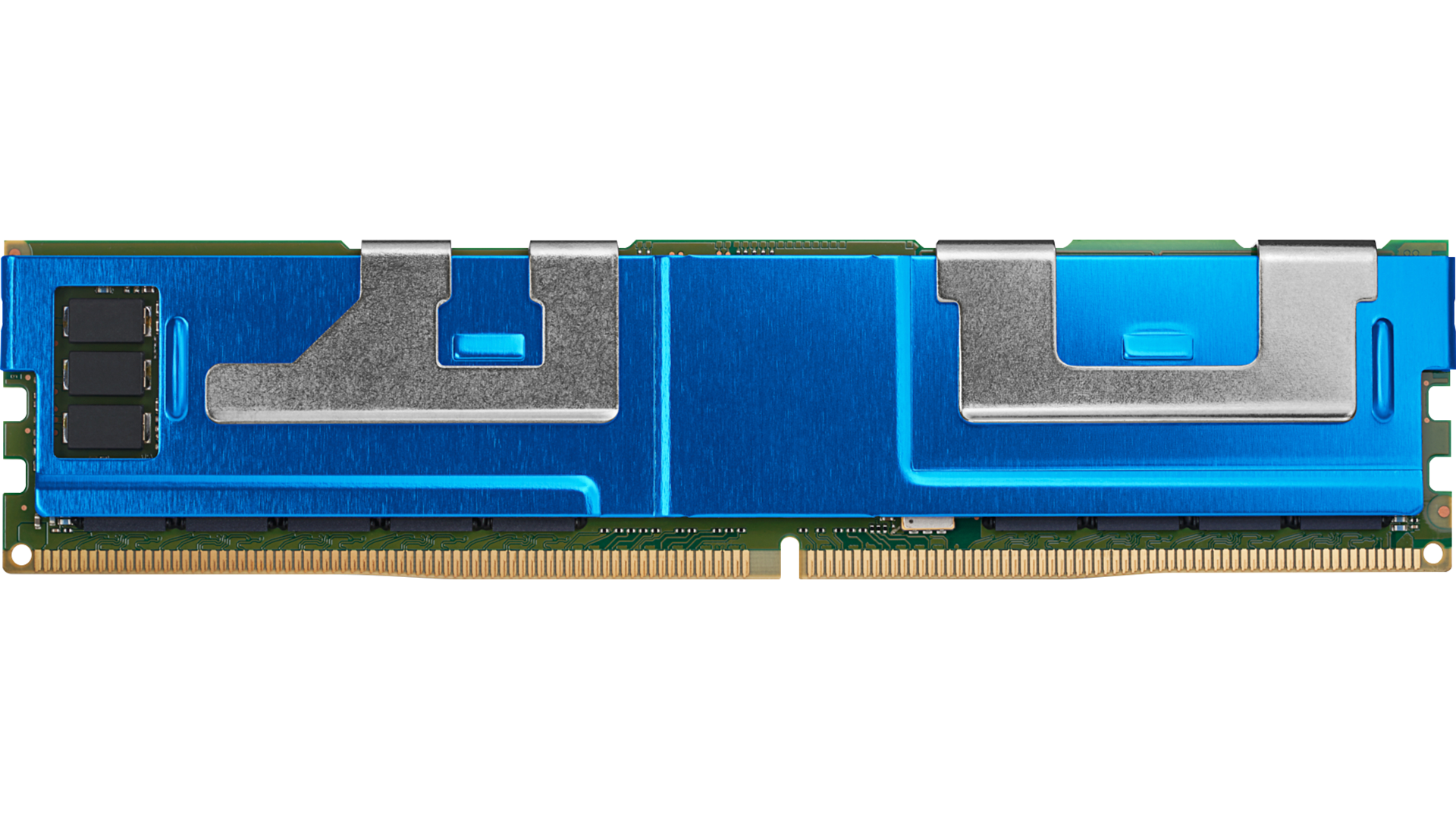

Intel Optane Persistent Memory is a new memory technology and Oracle has harnessed to deliver the highest possible database performance today. This technical advancement is a fusion of memory with storage capabilities.

Oracle Database has been able to use Persistent Memory since it was first introduced using what is known as Memory Mode, which enables much larger memory configurations than conventional Dynamic Random-Access Memory (DRAM). Starting with Oracle Database version 19.12, Oracle Database can also use what is known as AppDirect Mode. Persistent Memory in AppDirect mode (which we address in this blog) allows, PMEM to be used as a persistent data store for data and related files.

Oracle Database can now reside on Persistent Memory and take advantage of the byte addressable capabilities of PMEM with much lower latency than even the fastest Flash storage can provide. To further improve performance, data sitting on PMEM device is accessed directly by the Oracle Database eliminating the overhead of copying data into the database buffer cache. In this blog, we will go through the process of setting up PMEM devices and creating file systems on top of them. Later we will clone an active source Oracle Database onto these file systems. Oracle Database can also be created from scratch (using CREATE DATABASE command) once the file system is set up. In this blog, we will see how we can use the standard RMAN ACTIVE DUPLICATE command to bring an active database from another server.

What is Persistent Memory

Persistent Memory is a solid-state high-performance byte-addressable memory (like DRAM) that resides on memory bus, giving DRAM-like access to data and DRAM-like speed in the range of nanoseconds. DRAM memory is ephemeral, meaning the contents disappear when a server is rebooted. Storage has traditionally been a distinctly separate tier in the form of disk or Flash drives. For any computer application to work, the application data stored in disk or Flash must be loaded in memory, introducing additional latency in the process. Persistent Memory (in AppDirect Mode) operates both like memory and storage. It is a storage device that sits on the memory bus and is byte-addressable like memory, but also has the ability to persist data across server reboots.

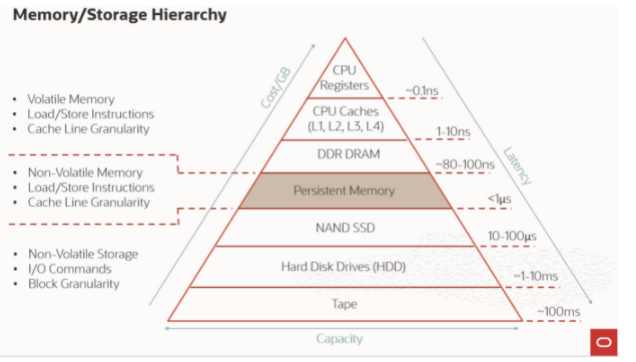

With advancements in infrastructure technology (compute, storage, memory, networking etc.), and fast database systems, there has always been a struggle to optimize database workloads and improve performance. Bringing data from storage into memory is a performance bottleneck. PMEM devices reside directly on the memory bus, which reduces IO latency. In the later part of the blog, we will show how to set up an existing Oracle database on a Persistent Memory store. First, let us talk about the Memory/Storage hierarchy.

Memory Storage Hierarchy

In the below diagram, you can see where Persistent Memory sits in the memory and storage hierarchy and the latency involved in bringing data to the compute.

How does Oracle utilize Persistent Memory?

Persistent Memory with Oracle Databases fits into the system architecture in 2 different ways. Persistent Memory can reside within servers or within storage. Oracle supports both of these systems architectures as follows:

Server-Side Persistent Memory

- Oracle Memory Speed (OMS) file system (Oracle version 19.12+). Also available in 21c.

- Directly Mapped Buffer Cache (Oracle version 21.3+).

Storage-Side Persistent Memory

- Exadata uses PMEM in the storage tier accessed using RDMA (Remote Direct Memory Access)

In this blog, we will focus on Oracle Database 19c (19.12 and later) using the OMS (Oracle Memory Speed) file system. The Directly Mapped Buffer Cache feature of Oracle 21c will be covered in a later blog post. Oracle Memory Speed (OMS) file system fully utilizes the potential of persistent memory to reduce storage latencies. So, what is OMS? Let’s find out.

Introduction to OMS (Oracle Memory Speed)

As we have seen in the Memory/Storage hierarchy above, Persistent Memory is a new tier in the hierarchy that fits between DRAM and Flash Storage. PMEM performance is close to the performance of volatile memory (DRAM). However, unlike DRAM, the data residing on PMEM devices is not lost (persists) across system restarts or power failures. PMEM devices are usually deployed with a DAX (Direct Access Filesystem). Since PMEM devices are byte addressable unlike storage devices (HDD, SSD) that are block addressable, there are cases where data stored in DAX enable filesystems may become corrupt in certain scenarios. Oracle Memory Speed (OMS) file system is designed to avoid these corruptions known as “fractured blocks” or “torn writes” while still delivering the full performance potential of Persistent Memory

Avoiding Fractured Blocks (aka torn writes) and Data loss with Persistent Memory

Persistent Memory, like DRAM, operates byte-by-byte (hence byte addressable) rather than in blocks as with conventional disk or Flash storage. With PMEM, data is persisted 8-bytes at a time rather than in terms of 4K O/S pages or storage sectors. If an Oracle Database writes a block of data (8192 bytes = 8K), the data would be persisted into PMEM in 1024 chunks (1024 chunks of 8 bytes each). Any disruption (power failure for example) during the time of this write, can leave data “fractured” or “torn” with inconsistent data in some of those 1024 chunks (old and new data). OMS eliminates this “fractured” block problem by doing an out of place copy into DRAM and the metadata is updated to point to the new block only after the entire block is written.

With a firm understanding of Persistent Memory and how it works, let’s get into details of how to configure Oracle Database with Persistent Memory. We start with system configuration and basic installation, then move to create a backing file to store Oracle data files, redo logs, temporary files etc.

Requirements and guidelines for Oracle Memory Speed:

Requirements:

-

- An Intel Cascade Lake or Ice Lake based server or later.

- Persistent Memory devices (PMEM) in each socket capable of memory interleaving.

- Oracle Linux with Huge-Page support.

- A DAX capable (DAX) file system such as XFS.

- Oracle version 19.11 with patch – 33192793 (DBRU 19.13)

-bash-4.2$ cd $ORACLE_HOME/OPatch

-bash-4.2$ ./opatch lspatches

33192793;Database Release Update : 19.13.0.0.211019 (33192793)

Guidelines:

OMS file system is only supported for a single instance Oracle database deployment and is not supported with Oracle Real Application Clusters (RAC). Each OMS file store also supports a single Oracle Database. This means, you can have Oracle datafiles from one database on one OMS file store. To deploy multiple databases on a server (or Virtual Machine), we can create multiple OMS file stores on the server.

Let us start setting up:

- Setup hardware and Operating System Components. In our setup, we have two OMS file systems configured with 12 PMEM (128 GB each) devices. 12×128 = 1536GiB which is 1.5 TiB.

- The following command lists some of the XFS file systems created using PMEM devices

df -hT

/dev/pmem0p1 xfs 1.5T 1.4T 57G 97% /mnt/pmem12

/dev/pmem1p1 xfs 1.5T 1.4T 57G 97% /mnt/pmem13

- Configure PMEM devices and set up OMS file store:

# ipmctl show -memoryresources

Capacity=3029.4 GiB

MemoryCapacity=0.0 GiB

AppDirectCapacity=3024.0 GiB

UnconfiguredCapacity=0.0 GiB

InaccessibleCapacity=5.4 GiB

ReservedCapacity=0.0 GiBsources

- Verify if regions are configured in AppDirect mode

# ipmctl show -region

SocketID | ISetID | PersistentMemoryType | Capacity | FreeCapacity | HealthState

====================================================================================

0x0000 | 0x97407f48df982ccc | AppDirect | 1512.0 GiB | 0.0 GiB | Healthy

0x0001 | 0xfb907f48d59a2ccc | AppDirect | 1512.0 GiB | 0.0 GiB | Healthy

Here, there are two PMEM regions; one per socket. Each PMEM region is 1512 GiB and supports AppDirect mode.

- Check for namespaces

In the below example, two namespaces namespace0.0 and namespace1.0 are now available for NUMA nodes 0 and 1 respectively. the block device path is /dev/pmem0 and /dev/pmem1

# ndctl list -u

[

{

“dev”:”namespace1.0″,

“mode”:”fsdax”,

“map”:”dev”,

“size”:”1488.37 GiB (1598.13 GB)”,

“uuid”:”f8ec094d-5a69-4f87-9198-fb9117f2ea8e”,

“sector_size”:512,

“align”:2097152,

“blockdev”:”pmem1″

},

{

“dev”:”namespace0.0″,

“mode”:”fsdax”,

“map”:”dev”,

“size”:”1488.37 GiB (1598.13 GB)”,

“uuid”:”8924aa35-701f-40cc-ba08-654aa5729611″,

“sector_size”:512,

“align”:2097152,

“blockdev”:”pmem0″

}

]

- Format the device by specifying the stripe unit size (su) as 2 MiB and stripe width size (sw) as 1. Repeat the step for both the file system.

# mkfs.xfs -f -d su=2m,sw=1 /dev/pmem0

…

…

data = bsize=4096 blocks=777240064, imaxpct=5

= sunit=512 swidth=512 blks

naming =version 2 bsize=4096 ascii-ci=0 ftype=1

log =internal log bsize=4096 blocks=379511, version=2

= sectsz=4096 sunit=1 blks, lazy-count=1

realtime =none

In this example, the data section of the output has bsize=4096 (=4K blocks). So, the data block size for this file system is 4096 bytes. The sunit=512 and the swidth=512 blks. This implies that the stripe unit is 512*4096 bytes = 2 MB and the stripe width is 512*4096 bytes = 2 MB. A single stripe of this file system is composed of a single stripe unit (512 blocks / 512 blocks per unit).

A minimum contiguous allocation of 2 MiB or contiguous allocations in exact multiples of 2 MiB is enforced for data to enable the address space to be represented with HugePages mappings and to bypass the page cache. (Huge Pages in Linux are 2MiB in size)

Huge Pages – Typically for a large Oracle database we configure Hugepages to map SGA memory. This gives a substantial benefit in virtual memory management by reducing page faults (standard page size is 4K, HugePage size is 2MiB) to 512x less.

Similarly, with PMEM devices, we create a hugepage aware filesystem (DAX) that has mmap mapping to be 2MiB and block allocation is 2MiB as well. Partitions created on top of PMEM namespace should be 2MiB aligned. By default, fdisk creates partitions that are 1MiB (1024 sector) aligned. A filesystem built on top of this partition won’t be able to provide DAX with 2MiB aligned block allocations. We need to have our partition begin at 2MiB aligned boundary.

Now we mount the device using the dax option

# mount -o dax /dev/pmem0 /mnt/pmem0

# mount -o dax /dev/pmem1 /mnt/pmem1

Verify if the filesystem is mounted with the dax option

# mount | grep dax

/dev/pmem0p1 on /mnt/pmem12 type xfs (rw,relatime,attr2,dax,inode64,rtdev=/dev/pmem0p2,noquota)

/dev/pmem1p1 on /mnt/pmem13 type xfs (rw,relatime,attr2,dax,inode64,rtdev=/dev/pmem1p2,noquota)

- Now we are ready to create a uber file for Oracle Memory Speed (OMS) file system

An uber file is like a volume in a traditional kernel-based file system. The uber file stores all the metadata and data for OMS.

-

- Use fallocate to create uber files in the DAX-mounted file systems.

# fallocate -l 1400G /mnt/pmem12/omsuberfile.tst19oms

# fallocate -l 1400G /mnt/pmem13/omsuberfile.tst19oms

# chown oracle:dba /mnt/pmem12/omsuberfile.tst19oms

# chown oracle:dba /mnt/pmem13/omsuberfile.tst19oms

# chmod 644 /mnt/pmem12/omsuberfile.tst19oms

# chmod 644 /mnt/pmem13/omsuberfile.tst19oms

In this example above, fallocate creates two 1400 GiB files in each of the DAX-enabled file systems. Ensure that the size of the file is an exact multiple of 2MiB. This aligns the file extents on 2 MiB boundaries and enables access through HugePages virtual memory mappings.

Also note down the string “tst19oms” at the end of the uber file. This means that this uber file can be used by only one database instance “tst19oms”. The suffix tst19oms is tied to an Oracle SID (unique name for Oracle database instance).

- Creating and mounting OMS file systems. Here we will create two directories and make them read only.

$ mkdir /home/oracle/product/oradata/TST19OMS

$ chmod -wx /home/oracle/product/oradata/TST19OMS

$ mkdir /home/oracle/product/redo/TST19OMS

$ chmod -wx /home/oracle/product/redo/TST19OMS

- Link Oracle binaries for OMS use

cd $ORACLE_HOME/rdbms/lib

make -f ins_rdbms.mk oms_on

cd $ORACLE_HOME/bin

chmod +x oms_daemon

chmod +x omsfscmds

- Setting up the OMS daemon:

(IMPORTANT: ORACLE_HOME, ORACLE_BASE and ORACLE_SID must be setup in the shell before attempting daemon startup)

$ cd $ORACLE_HOME/bin

$ ./oms_daemon

OMS daemon process with the name (oms_tst19oms – oms followed by SID_NAME) will be created with trace file located in $ORACLE_BASE/diag/oms/

$ ps -ef | grep oms

oracle 10303 1 0 Feb16 ? 00:00:00 oms_tst19oms

- Once you are in OMS command prompt, we can mount the OMS file systems using directories created in step 8 above.

OMS> mkfs /mnt/pmem12/omsuberfile.tst19oms

OMS:mkfs:No blocksize specified, using 4K

OMS:mkfs: Device /mnt/pmem12/omsuberfile.tst19oms formatted with blocksize 4096

OMS> mount /mnt/pmem12/omsuberfile.tst19oms /home/oracle/product/oradata/TST19OMS

OMS:mount: Mounted /mnt/pmem12/omsuberfile.tst19oms at /home/oracle/product/oradata/TST19OMS

OMS> mkfs /mnt/pmem13/omsuberfile.tst19oms

OMS:mkfs:No blocksize specified, using 4K

OMS:mkfs: Device /mnt/pmem13/omsuberfile.tst19oms formatted with blocksize 4096

OMS> mount /mnt/pmem13/omsuberfile.tst19oms /home/oracle/product/redo/TST19OMS

OMS:mount: Mounted /mnt/pmem13/omsuberfile.tst19oms at /home/oracle/product/redo/TST19OMS

Let us now validate the mount status

OMS> lsmount

fsindex : 0

Mountpt : /home/oracle/product/oradata/TST19OMS

Deviceid: /mnt/pmem12/omsuberfile.tst19oms

fsindex : 1

Mountpt : /home/oracle/product/redo/TST19OMS

Deviceid: /mnt/pmem13/omsuberfile.tst19oms

- Create an init file for the instance and startup the target instance in NOMOUNT mode

Sample initialization file (inittst19oms.ora). From the init file, we can see that we are creating control files on a PMEM device. I am also using db_file_name_convert and log_file_name_convert parameters to convert directory paths for the files that we will be bringing from a source active database. The destination strings are on PMEM device.

*.audit_file_dest=’/home/oracle/product/admin/tst19oms/adump’

*.audit_trail=’db’

*.compatible=’19.0.0′

*.control_files=’/home/oracle/product/oradata/TST19OMS/control1.ctl’,’/home/oracle/product/oradata/TST19OMS/control2.ctl’

*.db_block_size=8192

*.db_file_name_convert=’/u01/app/oracle/oradata/TST19DB/TST19DB/datafile’, ‘/home/oracle/product/oradata/TST19OMS’, ‘/u01/app/oracle/oradata/TST19DB/TST19DB/D82AA715ADA54DD1E053A15F1F0A8DE5/datafile’, ‘/home/oracle/product/oradata/TST19OMS’

*.log_file_name_convert=’/u01/app/oracle/oradata/onlinelog/TST19DB/TST19DB/onlinelog’, ‘/home/oracle/product/fast_recovery_area/TST19OMS/onlinelog’,’/u01/app/oracle/oradata/fast_recovery_area/TST19DB/TST19DB/onlinelog’, ‘/home/oracle/product/fast_recovery_area/TST19OMS/onlinelog’

*.db_name=’tst19oms’

*.db_unique_name=’tst19oms’

*.db_recovery_file_dest=’/home/oracle/product/fast_recovery_area’

*.db_recovery_file_dest_size=20g

*.diagnostic_dest=’/home/oracle/product’

*.dispatchers='(PROTOCOL=TCP) (SERVICE=tst1dbXDB)’

*.enable_pluggable_database=true

*.local_listener='(DESCRIPTION=(ADDRESS=(PROTOCOL=TCP)(HOST=<host_name>)(PORT=1522)))’

*.log_archive_format=’%t_%s_%r.dbf’

*.nls_language=’AMERICAN’

*.nls_territory=’AMERICA’

*.open_cursors=300

*.pga_aggregate_target=1072m

*.processes=320

*.remote_login_passwordfile=’EXCLUSIVE’

*.sga_target=3214m

*.undo_tablespace=’UNDOTBS1′

- Startup the instance in NOMOUNT mode

$ cd $ORACLE_HOME/dbs

sqlplus / as sysdba

startup nomount pfile=’inittst19oms.ora’

when the instance is started, you should watch out for these messages in alert log to confirm Oracle Memory Speed (OMS) is used

2021-12-09T12:01:40.402435-08:00

Oracle instance running with ODM: OMS (Oracle memory speed) ODM Library, Version: 2.0 without DataGuard.

- Make sure you can connect to the source database, and to the PMEM DB (that we are going to create) using the same SYS password. You may want to create static listener for the PMEM DB so that you can connect to it using SYS in nomount mode.

- We will use RMAN ACTIVE DUPLICATE command to clone the source database to the PMEM DB.

We have a script that we will use to run for the DUPLICATE command. tst19db is the source database running on a different host. Tst19oms is the target database that will be a PMEM database.

connect target sys/<password>@tst19db;

connect auxiliary sys/<password>@tst19oms;

RUN {

ALLOCATE CHANNEL d1 TYPE DISK;

ALLOCATE CHANNEL d2 TYPE DISK;

ALLOCATE CHANNEL d3 TYPE DISK;

ALLOCATE CHANNEL d4 TYPE DISK;

ALLOCATE AUXILIARY CHANNEL aux1 TYPE DISK;

ALLOCATE AUXILIARY CHANNEL aux2 TYPE DISK;

ALLOCATE AUXILIARY CHANNEL aux3 TYPE DISK;

ALLOCATE AUXILIARY CHANNEL aux4 TYPE DISK;

DUPLICATE TARGET DATABASE TO TST19OMS

FROM ACTIVE DATABASE

NOFILENAMECHECK;

RELEASE CHANNEL d1;

RELEASE CHANNEL d2;

RELEASE CHANNEL d3;

RELEASE CHANNEL d4;

RELEASE CHANNEL aux1;

RELEASE CHANNEL aux2;

RELEASE CHANNEL aux3;

RELEASE CHANNEL aux4;

}

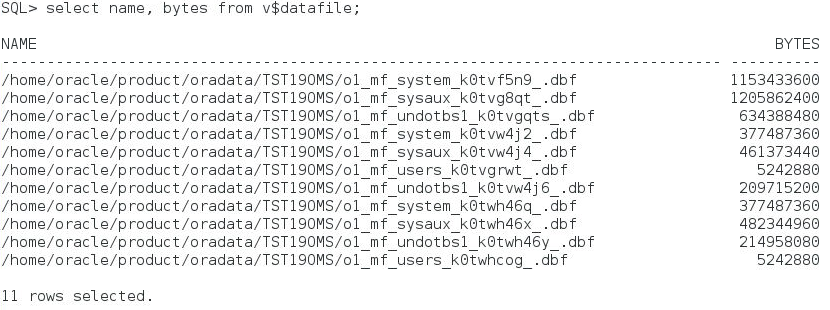

- Once the database is cloned, let us validate the data files and their destination file system.

To validate datafiles are located on the right mount point, query the name and size of the datafiles.

Resources and Further Reading

Deploying Oracle Memory Speed File System

Persistent Memory Primer – By Chris Craft

Conclusion:

In this blog, we have seen how we can clone an Oracle database running on a different server to a PMEM enabled file system. From the memory-storage hierarchy diagram earlier in the blog, we can see that PMEM devices are about ~3000x faster than Flash SSDs. We have significantly reduced the IO latency when our database run on an PMEM device. With OMS, the files are accessed in similar fashion to a traditional database where data is copied to buffer cache in DRAM (memory) and written back to OMS on PMEM. You may wonder about the capability of key features of Oracle database – Scalability and Availability. PMEM databases can scale horizontally using Oracle database Sharding capability. Availability of databases can be achieved by using local Data Guard or remote site Data Guard set up. In the next blog, we will explore the Directly Mapped Buffer Cache feature in Oracle database 21.3 and later releases, where Oracle Database accesses data directly from PMEM file store, bypassing the I/O layer entirely. Persistent Memory storage and buffer cache become one in Oracle21c. Stay tuned….