The commonly missed first steps of tuning a SQL statement

By Connor McDonald

Fire up your favorite search engine, enter “SQL tuning” as the search term, and you are likely to detect a common theme in the results. They typically relate to modification of the database structure, such as adding or removing indexes; modification of the execution environment, such as gathering or modifying optimizer statistics; or more-substantial modifications such as rewriting the SQL or changing the physical design of the database. What is often missing in those same search engine results is a warning that any such modifications carry an inherent risk to the entire database environment in which the SQL statement is running. The larger the modification, the higher the risk.

As Oracle Database has evolved, the number of tuning tools has grown and the level of sophistication of each of these tools has also increased, giving developers and DBAs a plethora of options to explore. One consequence is that it is easy to dive straight into utilizing these tuning tools without stepping back and asking a few key questions about the SQL statement first:

- What is the business functional requirement being served by the SQL?

- Is the SQL correct?

- Can this SQL ever run fast enough to meet the requirements?

Even without diving into low-level tools, it is easy to forget these questions. I frequently visit clients to assist with performance tuning issues, and upon my arrival, often the first thing presented to me is a single SQL statement, with no context or explanation surrounding it, and this plea: “This is the problem! Please solve it.” It may seem counterintuitive, but the first step of SQL tuning is to forget about the SQL.

The Business Requirement

No organization I’m aware of has ever had a business model of “Let’s make sure SQL runs fast,” unless that business was a SQL tuning consultancy! SQL statements, the applications that run them, and the IT departments that build and support those applications exist to meet a suite of business functional requirements. Those requirements may be created by the business as part of its desire to thrive commercially, or they may be imposed on the business by regulatory bodies. In either case, satisfying business requirements must be kept uppermost in a developer’s mindset when it comes to tuning SQL, because it drives the very decisions made by developers in creating the database design and the SQL that runs on it. Ignoring the underlying business requirements is a catalyst for poorly performing SQL.

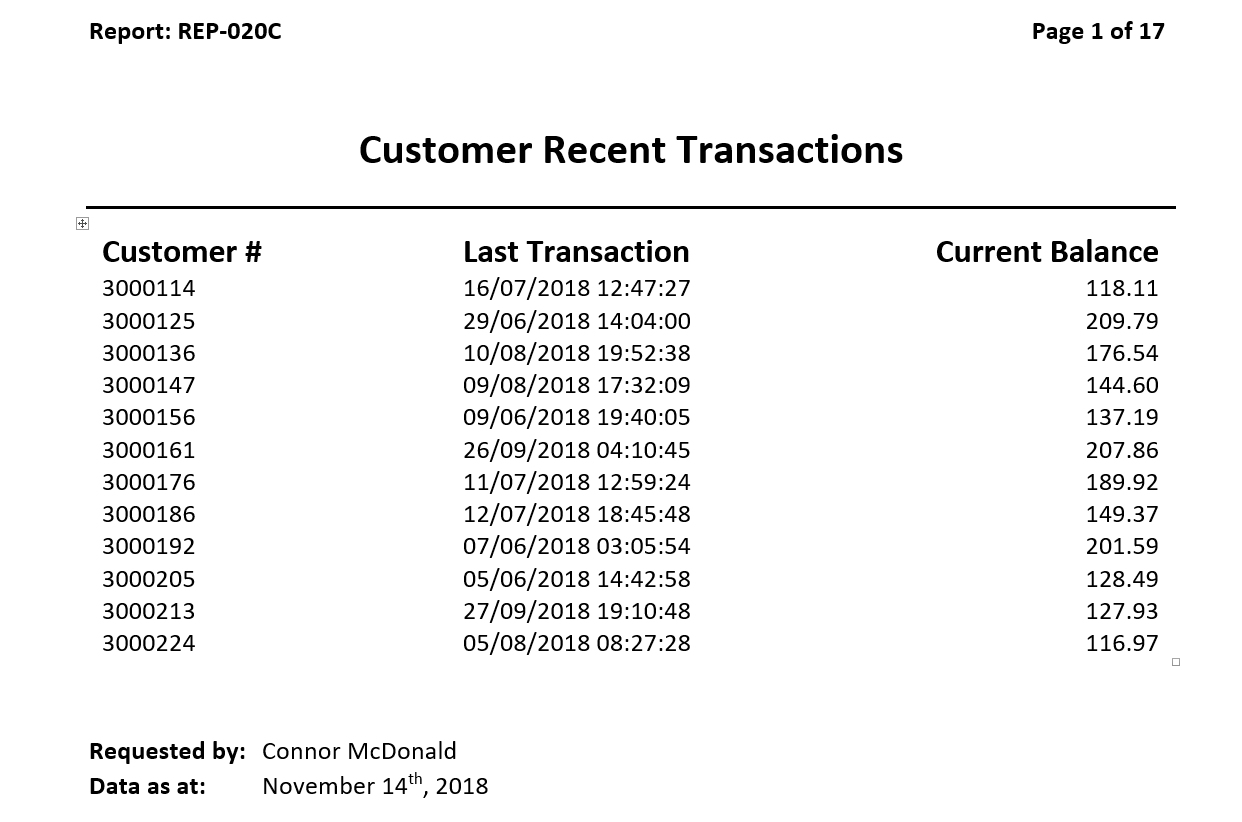

I’ll demonstrate that with a real example of my experience with a client that requested some SQL tuning assistance. For the sake of anonymity, the descriptions and the SQL code are obfuscated, but other than that, this is a reasonably accurate depiction of the events that transpired. The client had a typical online retail presence, where customers could create an account with the business to get access to discounts, promotional offers, and the like. Each customer (and hence each customer account) would buy goods from the business, and each purchase would be termed a customer “transaction.” The business had a report that showed the last time each customer had completed a transaction, similar to what you see in Figure 1. As customer transaction volume grew over time, the report had started to run more slowly, which is what prompted the business to request my assistance.

Figure 1: Customer transaction report

The SQL statement to produce the data for the report was a simple aggregation query, as shown in Listing 1.

Listing 1: Customer transaction query

SQL> select CUSTOMER_NUM, 2 max(TRANS_TIMESTAMP) LAST_TS 3 from CUSTOMER_TRANSACTIONS 4 group by CUSTOMER_NUM 5 order by 1;

This returns one row per customer, which is then joined back to the CUSTOMERS table to get the current account balance in dollars.

I made the mistake (which in part motivated this article) of focusing solely on how to improve the performance of the SQL statement, rather than stepping back and investigating the business requirement.

First I tackled the problem by using the query shown in Listing 2, colloquially referred to as the KIWI (kill it with iron) approach, where more server resources are thrown at the query via parallelism.

Listing 2: More hands making less work

SQL> select /*+ PARALLEL(t) */ CUSTOMER_NUM,

2 max(TRANS_TIMESTAMP) LAST_TS

3 from CUSTOMER_TRANSACTIONS t

4 group by CUSTOMER_NUM

This improved the performance of the report, but to the detriment of other functions of the application, which subsequently struggled to obtain sufficient I/O resources from the server, because it was being hammered by parallel I/O slaves. Also, the response time of the report became less predictable, because it was dependent on the number of concurrent executions of the report and how many parallel slaves were available to a given report request. Variability of response time is often more frustrating to application users than slow but consistent performance; hence, parallelism was dismissed as a permanent solution.

The next alternative was to make the transaction table “thinner.” This is a common technique in which the fields contained in either the SELECT clause or the predicates are added to an index so that only the index, rather than the full table, needs to be scanned. The index becomes a “thinner” version of the table. Listing 3 shows this strategy and the resultant query execution plan.

Listing 3: Using an index as a thin table

SQL> create index CUSTOMER_TRANS_IX

2 on CUSTOMER_TRANSACTIONS( CUSTOMER_NUM, TRANS_TIMESTAMP )

SQL> select CUSTOMER_NUM,

2 max(TRANS_TIMESTAMP) LAST_TS

3 from CUSTOMER_TRANSACTIONS

4 group by CUSTOMER_NUM

5 order by 1;

——————————————————————————————————————————————————

| Id | Operation | Name |

——————————————————————————————————————————————————

| 0 | SELECT STATEMENT | |

| 1 | INDEX FAST FULL SCAN | CUSTOMER_TRANS_IX |

——————————————————————————————————————————————————

This decreased the report response time, but I stressed to the client that this was only a temporary solution, because as the transaction volume continued to increase, even an index being used as a thin version of the transaction table would ultimately mean that the performance problems would recur.

Other solutions (partitioning, compression, and materialized views) were considered as well, the details of which I’ll omit for brevity’s sake, but in each case, the performance benefits also came with side effects that were undesirable for this client. This is not to dismiss any of these or the above techniques out of hand. All of the solutions are potentially valid in other use cases, but were just not for this particular client.

I convinced the development team to ask the business users what the motivation for this report was. After all, a report that shows the “most recent” of any high-volume activity is always out of date the moment it has been run. So I was curious to discover what value the report gave the business. The response was that the report was used to identify those customers who had not visited the website recently, so that they could be offered incentives via promotional offers to return to the site and become active again.

Understanding the business requirement made the solution to tuning the SQL trivial. All it took was a look at the columns in the CUSTOMERS table, as shown in Listing 4.

Listing 4: CUSTOMERS table

SQL> desc CUSTOMERS

Name Null? Type

—————————————————————————————————— ———————— ——————————————

CUSTOMER_NU NOT NULL NUMBER(8)

CURRENT_BALANCE NOT NULL NUMBER(14,2)

...

...

...

LAST_LOGIN_TIME NOT NULL TIMESTAMP(6)

There was no need to query the large CUSTOMER_TRANSACTIONS table at all! The last visit to the website for each customer was already being captured in metadata on the CUSTOMERS table. The LAST_LOGIN_TIME had been implemented as part of a password expiry mechanism but could now also be used for recent-activity checks.

This is a key point of “tuning” SQL. Without consulting with the business users and getting their requirements, there is no way the original SQL could have been recast to avoid querying the CUSTOMER_TRANSACTIONS table. Before you tune a SQL statement, you must understand the business requirement that led to it.

SQL Correctness

Once the business functional requirement has been confirmed, you can turn your attention to the SQL statement. Just because a SQL statement returns without error, or even if the statement returns the correct results, that does not constitute a guarantee that the SQL statement is actually correct. Often the cause of poorly performing SQL is malformed construction, which can easily slip through testing cycles if the query results are still plausible (especially with small test datasets). Hence, when I’m asked to tune a SQL statement, I will spend a few minutes before tackling any performance-related avenues making sure that the SQL statement does not have any obvious syntax errors. Here are some of the common errors I see that typically cause SQL statements to be misdiagnosed as performance problems.

Order of operations. My children are just completing primary school, and in their mathematics classes, they use the acronym BIMDAS. Many (many!) years ago, when I was a similar age, it was called BODMAS, but the premise was the same. The acronym is a simple way of remembering the order of mathematical operations (BIMDAS = brackets first, then indices, then multiplication/division, and finally addition/subtraction), which is why “2 times 3 plus 5” evaluates to 11, not 16.

The same rules apply to the processing order of logical operations within the predicates of a SQL statement, and failing to observe the correct ordering can lead to performance issues. Business requirements are often given in language within which there is a natural or implicit ordering of operations, which can lead to errors when transposed to SQL code. For example, the requirement

could yield the SQL query shown in Listing 5.

Listing 5: Business requirement translated to SQL query

SQL> select REGION, max(SALE_AMOUNT) 2 from EMP e, 3 SALES_TRANSACTIONS s 4 where e.JOB = 'SALESMAN' 5 and s.EMPNO = e.EMPNO 6 and s.LOCATION = 'CA' 7 and s.TAX_LEVY > 10 8 or s.GOVT_SUBSIDY > 0 9 group by REGION;

But this is probably an incorrect translation, due to the ordering of operations implied, but not explicitly stated by, the business requirement. It is most likely (and worth confirming with the business stakeholders) that the requirement when phrased with more precision was

- “First, identify the set of transactions for sales consultants from regions in California.

- Then, with that set of data, filter where the tax levy is more than 10% or the government subsidy is nonzero.

- Then, with that reduced set of data, find the highest transaction amount per region.”

The SQL in Listing 5 does not fulfill this requirement and, moreover, is likely to have performance issues, because the final predicate of OR GOVT_SUBSIDY > 0 becomes a standalone predicate that is not associated with the other conditions or even the joins. Because the query performs an aggregation on the REGION column, such errors can easily slip through testing phases unnoticed, since even though vastly more rows will be processed by the query, the aggregation will reduce the set down to a small list of regions. Recalling the BIMDAS lessons of my children, the query should be corrected as shown in Listing 6.

Listing 6: Corrected SQL query

SQL> select REGION, max(SALE_AMOUNT) 2 from EMP e, 3 SALES_TRANSACTIONS s 4 where e.JOB = 'SALESMAN' 5 and s.EMPNO = e.EMPNO 6 and s.LOCATION = 'CA' 7 and ( s.TAX_LEVY > 10 8 or s.GOVT_SUBSIDY > 0 ) 9 group by REGION;

Missing join predicates. Developers who are still coming to grips with relational databases can make the mistake of thinking that joins are operations used solely to collect additional attributes for a result set, rather than being data filters. For example, when the requirement is to show a department name along with employee details, a join is required to collect the additional detail, as shown in Listing 7.

Listing 7: Simple join

SQL> select e.*, d.DNAME

2 from EMP e,

3 DEPT d

4 where e.DEPTNO = d.DEPTNO;

A common coding mistake happens when each table in the SQL query has its own set of predicates. Because developers assume that all of the required filtering is handled by these predicates, the crucial join condition is forgotten, as in the example in Listing 8.

Listing 8: Missing join predicate due to presence of other clauses

SQL> select d.DNAME, max(e.SAL) 2 from EMP e, 3 DEPT d 4 where e.JOB = 'SALESMAN' 5 and d.LOC = 'NORTH' 6 group by d.DNAME;

As already mentioned, aggregations can mask the incorrectness of the SQL statement. It is only when the execution plan is examined that a Cartesian join, which can be a predictor of a future performance problem for large volumes of data, is detected (see Listing 9).

Listing 9: Missing join predicate leading to Cartesian cross-product

——————————————————————————————————————

| Id | Operation | Name |

——————————————————————————————————————

| 0 | SELECT STATEMENT | |

| 1 | HASH GROUP BY | |

| 2 | MERGE JOIN CARTESIAN | |

|* 3 | TABLE ACCESS FULL | DEPT |

| 4 | BUFFER SORT | |

|* 5 | TABLE ACCESS FULL | EMP |

——————————————————————————————————————

Even without GROUP BY aggregations, a common but erroneous approach I see to “fixing” SQL statements that have missing join conditions or an incorrect order of operations is adding the DISTINCT keyword. Listing 10 starts with the query in Listing 8 but just lists employee details without the GROUP BY.

Listing 10: Employee details still with the missing join predicates

SQL> select e.*

2 from EMP e,

3 DEPT d

4 where e.JOB = 'SALESMAN'

5 and d.LOC = 'NORTH';

EMPNO ENAME JOB MGR HIREDATE

————————— ————————— ———————— ————————— —————————

7499 ALLEN SALESMAN 7698 20-FEB-81

7499 ALLEN SALESMAN 7698 20-FEB-81

7499 ALLEN SALESMAN 7698 20-FEB-81

7499 ALLEN SALESMAN 7698 20-FEB-81

7521 WARD SALESMAN 7698 22-FEB-81

7521 WARD SALESMAN 7698 22-FEB-81

7521 WARD SALESMAN 7698 22-FEB-81

7521 WARD SALESMAN 7698 22-FEB-81

The duplicated results are typically a clear enough indication to developers that the SQL is not correct, but rather than add the missing join predicates, they might add a DISTINCT keyword to “fix” the SQL, as in Listing 11.

Listing 11: Incorrect SQL with a false appearance of correctness

SQL> select DISTINCT e.*

2 from EMP e,

3 DEPT d

4 where e.JOB = 'SALESMAN'

5 and d.LOC = 'BOSTON';

EMPNO ENAME JOB MGR HIREDATE

————————— ————————— ————————— ————————— —————————

7654 MARTIN SALESMAN 7698 28-SEP-81

7521 WARD SALESMAN 7698 22-FEB-81

7499 ALLEN SALESMAN 7698 20-FEB-81

7844 TURNER SALESMAN 7698 08-SEP-81

Using DISTINCT does not correct the SQL query, because the missing join predicate means that the results are still incorrect. Moreover, DISTINCT can be the cause of a perceived performance issue, because reducing a set of rows down to a distinct set of values is a resource-intensive operation. I remember that in the very first SQL tuning course I attended, in the early 1990s, the instructor gave us a simple maxim: “Yes, the DISTINCT keyword has valid uses, but in general, Mr. Distinct is not our friend.” Whenever I see the DISTINCT keyword in a SQL statement, I take some time to double-check that it is not masking some other error in the SQL query text.

Lack of aliasing. Aliasing tables in SQL statements and fully qualifying the columns referenced with those aliases represent more than just a maintenance convenience for the next developer who comes along to alter the SQL code. These steps also protect against silent errors in the SQL text that can cause performance problems. Consider the example in Listing 12, which identifies all sales conducted in regions that offered a “Black Friday” promotion.

Listing 12: Promotions on Black Friday

SQL> select * 2 from sales 3 where region in 4 ( select region 5 from promoted_locations 6 where campaign = 'BLACK FRIDAY');

The SQL query looks plausible given the stated requirement and runs without error. However, it will most probably run poorly, because it will potentially return every single row in the SALES table. This is not discernible from the query text until I look at the column definitions for the PROMOTED_LOCATIONS table, as shown in Listing 13:

Listing 13: Columns for PROMOTED_LOCATIONS

SQL> desc PROMOTED_LOCATIONS

Name Null? Type

——————————————————————— ———————— —————————————

ID NOT NULL NUMBER

CAMPAIGN NOT NULL VARCHAR2(128)

PROMOTED_REGION NOT NULL VARCHAR2(24)

Note that there is no column called REGION and yet the SQL statement in Listing 12—with its references to the REGION column—runs without error. This is because the columns were not fully qualified in the SQL statement, so it becomes logically equivalent to the text in Listing 14:

Listing 14: Promotions on Black Friday

SQL> select sales.* 2 from sales 3 where sales.region in 4 ( select sales.region 5 from promoted_locations 6 where promoted_locations.campaign = 'BLACK FRIDAY');

In the absence of a REGION column in the PROMOTED_LOCATIONS table in the subquery, the reference to the column will then resolve to that of the outer SALES table. On the assumption that there is at least one row in PROMOTED_LOCATIONS, the subquery becomes an “always true” result and thus all rows from the SALES table are returned, most likely with performance problems associated with processing a large volume of rows. For this reason, I advocate to developers that they always fully qualify all expressions in a SQL statement with appropriate aliasing. In the example above, full aliasing would have immediately informed the developer of the wrong-column-name error in the SQL text, as shown in Listing 15.

Listing 15: Aliased SQL query

SQL> select s.* 2 from sales s 3 where s.region in 4 ( select p.region 5 from promoted_locations p 6 where p campaign = 'BLACK FRIDAY'); ERROR at line 4: ORA-00904: "REGION": invalid identifier

The Laws of Physics

Once business requirements are validated and SQL statements have been confirmed as being syntactically and functionally correct, there’s one more step to take before you start digging into the lower-level performance tuning steps: Analyze the amount of work the SQL statement must do with the current database design. For example, if the business requirement is

—unless that artifact is stored in some way by the existing database design or there are appropriate database structures (such as indexing) in place to satisfy that query—a SQL statement to handle that requirement will need to scan every transaction in the company’s history. Business stakeholders can be unaware of the resource cost of requirements, especially when the requirement can be very simply phrased, as in the example above.

Sometimes a SQL “tuning” exercise is simply the task of explaining to business stakeholders what work the database will be undertaking to satisfy the business requirement. That can then lead to a decision about whether to modify the requirement or take action to modify the database structures to better align with that requirement.

Summary

For IT professionals, there is always the temptation of “Let’s just jump into the code,” no matter what language the code is written in, and SQL code is no exception. And when it comes to the tuning of SQL, that temptation is even greater, considering that Oracle Database offers such a plethora of SQL tuning facilities. Throughout the various versions of Oracle Database, technologies such as SQL profiles, stored outlines, SQL plan baselines, tuning advisors, and optimizer hints all provide enormous opportunities for developers to improve SQL code performance.

But these technologies work on the underlying assumption that the SQL statement is correct in terms of its construction and that it is meeting the intended business requirement. It is important for developers and DBAs to validate this correctness before diving deep into the various tuning techniques at their disposal.

In my next article, I’ll explore how to proceed with SQL tuning once the prerequisite steps of requirements validation and correctness testing have been completed.

Next Steps

LEARN more about SQL tuning.

DOWNLOAD Oracle Database 18c.

READ “A Higher-Level Perspective on SQL Tuning, Part 2.”

Illustration by Wes Rowell