Last month, we released the Oracle Cloud Infrastructure API Gateway service.

This service, along with Oracle Apiary, lets cloud native developers design APIs and configure policy enforcement quickly to get their projects up and running. The API implementation can range from Oracle Functions to applications running on Container Engine for Kubernetes and many more services running on Oracle Cloud Infrastructure.

A key tenet of cloud native is infrastructure as code (IaC), meaning that the configuration of your infrastructure is maintained in files that can be edited and version controlled. This approach eliminates manual configurations and reduces human error. By using a tool like Terraform, your runbooks become blueprints that can be version controlled the same way that your application code is. Terraform is useful for defining a configuration, identifying the deltas between your system and the configuration, and performing the necessary updates.

For example, I previously used it to set up Oracle Cloud Infrastructure for running Oracle SOA Cloud Service. It helped me eliminate user error and ensure that all the necessary dependencies were in place. In that case, however, after the setup of the infrastructure, other legacy management systems assumed the ongoing management lifecycle. But using Terraform to manage the complete lifecycle, from setup to ongoing changes, is ideal.

In this post, I use Terraform to begin the journey of managing the API development lifecycle. The API Gateway service is designed with the cloud native developer in mind, and like all Oracle Cloud Infrastructure services, it’s included in the OCI Terraform provider.

Getting Started

To give myself a project, I created a simple application called fieldService that manages service tickets. It’s a RESTful service that application developers can use when they build their field service applications.

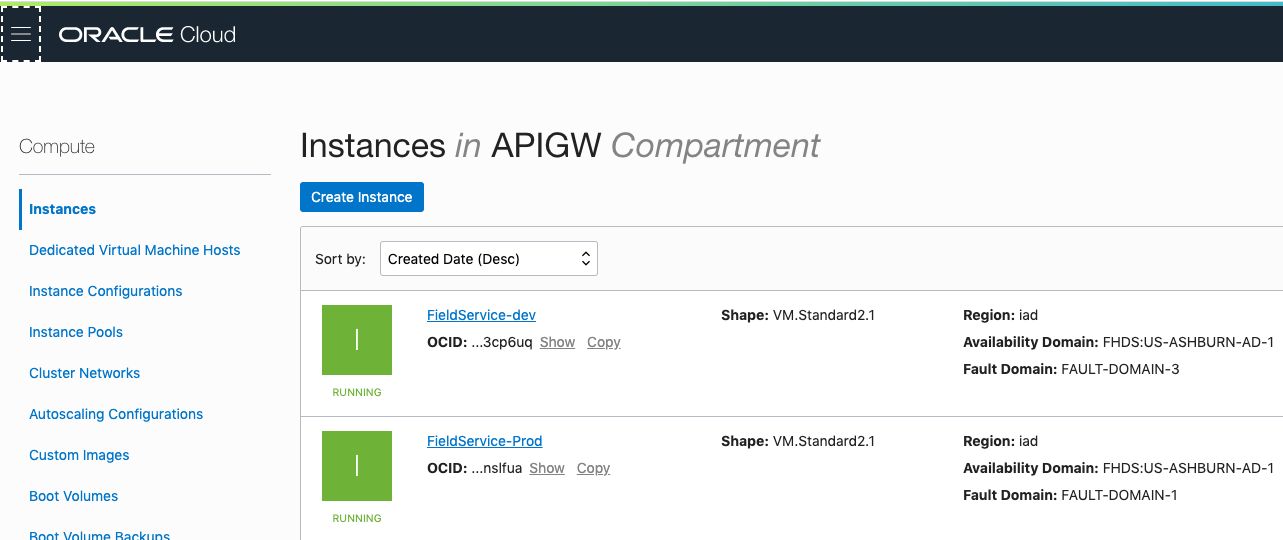

To start, I set up two simple Compute instances as development and production instances. If I were developing this app for real-world production, I would use a Kubernetes cluster on Oracle Cloud Infrastructure. But for now, my application is running in Docker containers on regular Compute VMs.

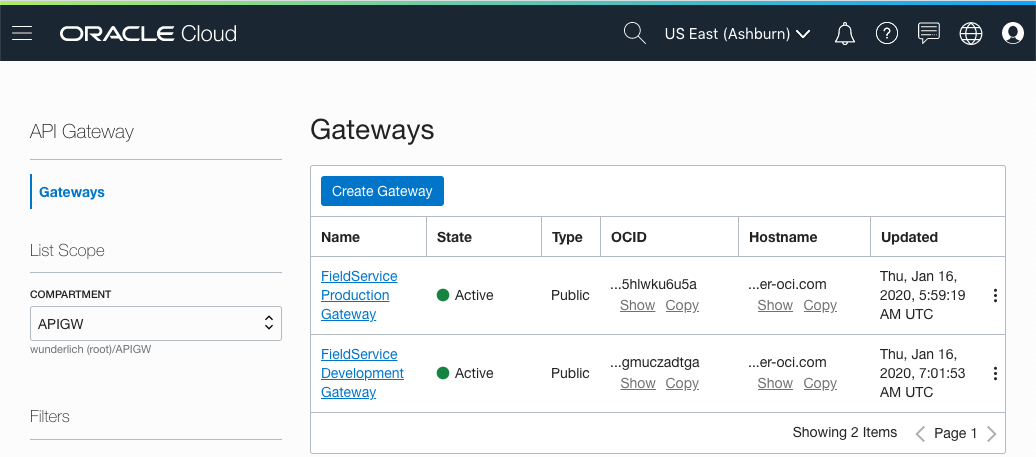

With API Gateway, I can easily provision a gateway with my project to develop my API, and then clean up everything with a terraform destroy command. This lets me quickly develop my APIs without needing an operations person to provision the gateway beforehand.

Using a well-defined pipeline management system, the API configurations and the project code can go through testing and ultimately to production onto gateways that are often controlled by the operations teams. In this scenario, I’m setting up a development gateway and a production gateway, and I’ll show how token replacement can be used to create the API policy configuration and deploy it in multiple contexts.

Designing the API

Great APIs begin with a well-formed design. So, my project will include an API design specification, documentation, and the ability to prototype the API before I invest in any of the implementation.

To do this, I use Oracle Apiary to create my API specification.

By using Oracle Apiary, I can design my API and rapidly prototype it to ensure that it meets the needs of my users. The documentation is automatically generated, which will be helpful for my users when my API is available. Oracle Apiary also connects to GitHub, which makes it even easier for me to manage my API design with my implementation and deployment configuration.

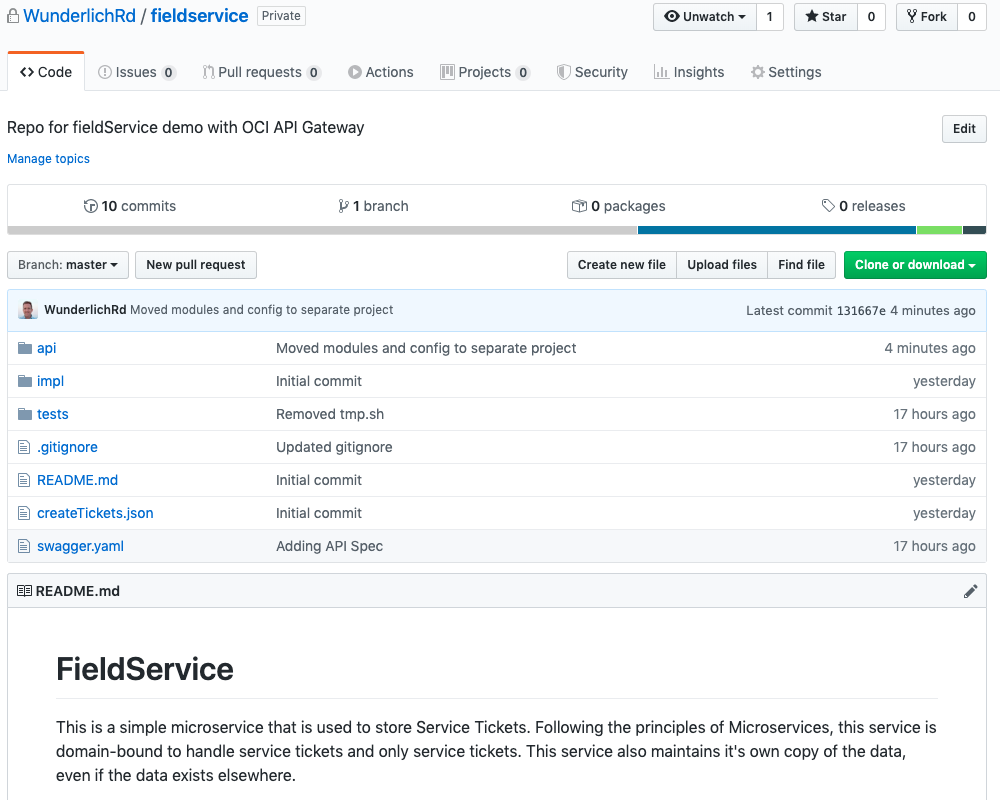

I’ve structured my project as follows:

- api: The Terraform files that define the API deployment specification

- impl: The implementation of my API, which is a set of microservices running in Docker containers

- tests: Testing for the API

I also have a README.md file that describes my project, a sample payload to populate some test data, and a Swagger file that Oracle Apiary created.

Not shown here are the configuration and the gateway module definition. The configuration depends on my tenancy, compartment, and so on. I maintain it in a separate project, and my local copy targets my development environment. The administrator of the production gateway could then have a configuration for the production tenancy, which would be used in the build pipeline.
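As a rough illustration of what such an environment-specific configuration might contain, the sketch below declares the OCI provider and the values it depends on. The file name and variable names are assumptions for illustration, not the contents of the actual configuration project. The provider arguments shown are the standard API-key authentication settings of the OCI Terraform provider.

```hcl
# config/provider.tf (hypothetical file name)

variable "tenancy_ocid" {
  description = "OCID of the tenancy that owns the gateway"
  type        = string
}

variable "user_ocid" {
  description = "OCID of the user whose API key signs the requests"
  type        = string
}

variable "fingerprint" {
  description = "Fingerprint of the API signing key"
  type        = string
}

variable "private_key_path" {
  description = "Local path to the API signing private key"
  type        = string
}

variable "region" {
  description = "OCI region to deploy into"
  type        = string
}

provider "oci" {
  tenancy_ocid     = var.tenancy_ocid
  user_ocid        = var.user_ocid
  fingerprint      = var.fingerprint
  private_key_path = var.private_key_path
  region           = var.region
}
```

A developer and a production administrator could each supply their own values for these variables without touching the API project itself.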

The gateway module is also in a separate project. As a developer, I use it as a template for creating my local gateway, but I wouldn’t have access to the project that defines it to make a change against other gateways. Gateway configurations are often managed by production operations teams. Of course, I can make updates in the gateway project and submit pull requests to be reviewed and rolled into the gateway configurations as needed.

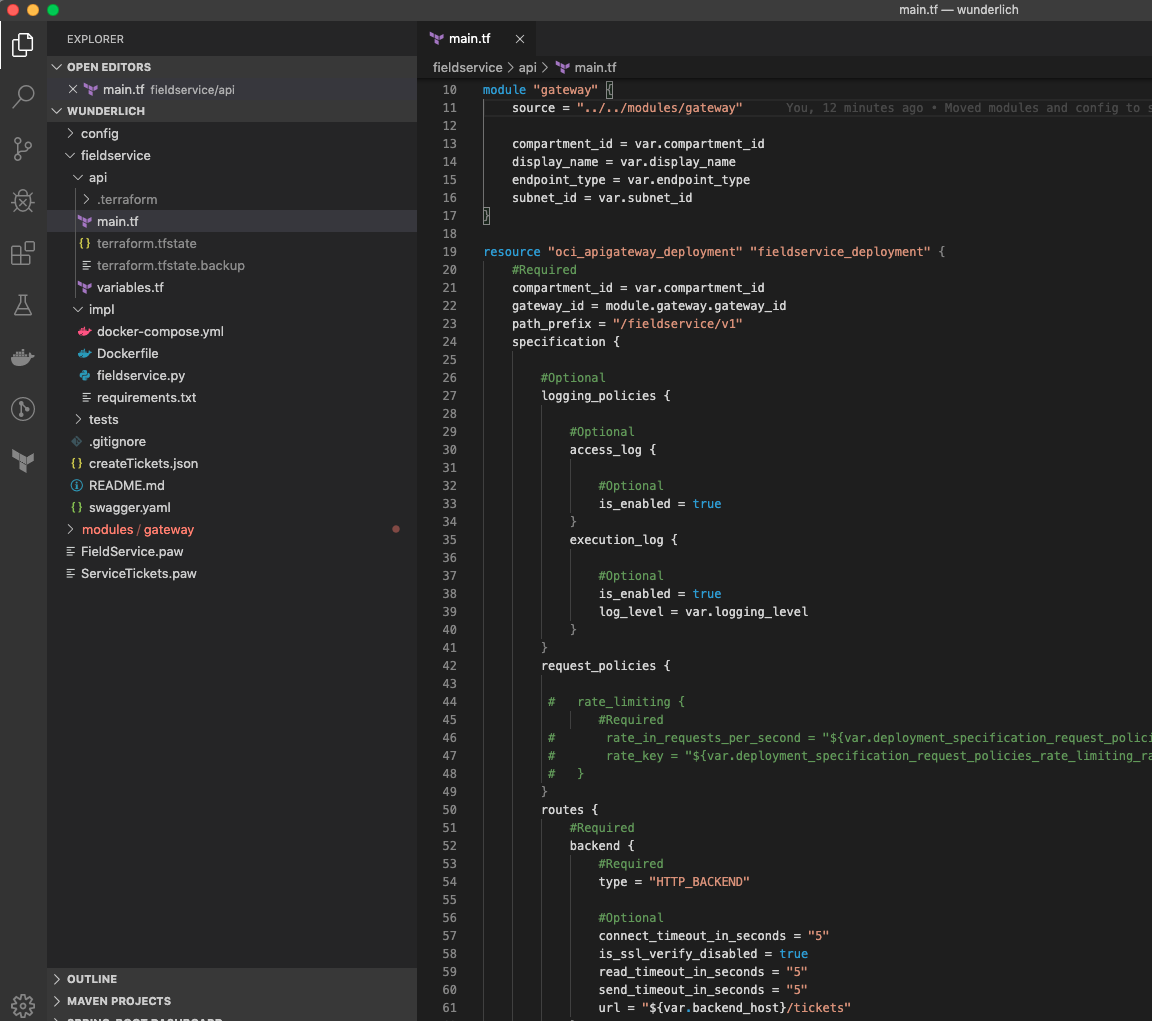

Details of the API Deployment Specification

Terraform allows many approaches. In this case, I define my environment-specific variables in the config directory and use Terraform interpolation within my gateway and API Terraform files. This approach makes my API deployment specification flexible enough to be deployed in different environments without loading it up with routing decisions at runtime.
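To make the idea concrete, here is a minimal sketch of how environment-specific values could be declared as input variables. The names `backend_url` and `path_prefix` and the default value are assumptions chosen for illustration, not the variables used in this project.

```hcl
# variables.tf (hypothetical): declared once, filled in per environment

variable "backend_url" {
  description = "Base URL of the fieldService implementation for this environment"
  type        = string
}

variable "path_prefix" {
  description = "Path prefix under which the API is exposed on the gateway"
  type        = string
  default     = "/fieldservice" # assumed value for illustration
}
```

Each environment could then supply its own `backend_url`, for example through a `.tfvars` file passed with `terraform apply -var-file=...`, while the API Terraform files stay identical across environments.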

Let’s explore this further in the API specification. First, note that much of the Terraform file is commented out. I’m beginning with a simple API, essentially a proxy pattern. Over time, I’ll build up my API, using the features of the API Gateway service to protect my service implementation with the proper interfaces.

Note the following elements in the deployment specification:

- gateway_id, in the fieldservice_deployment resource

- url, under routes, also in the fieldservice_deployment resource

The gateway_id is derived from the gateway module. Because I am a developer, my Terraform configuration can stand up a gateway and create my deployments for me to work on my project. When I’m finished, destroying my deployment also cleans up my gateway. If I were the operator of the production gateway, I might want to use a modified gateway module that will always return the existing gateway, so I can manage my gateway lifecycle separately from all of the API deployments.
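A developer-owned gateway module along these lines could look roughly like the following sketch. It uses the `oci_apigateway_gateway` resource from the OCI Terraform provider. The variable names, display name, endpoint type, and output name are assumptions for illustration.

```hcl
# gateway/main.tf (hypothetical module layout)

variable "compartment_ocid" {
  description = "Compartment in which to create the gateway"
  type        = string
}

variable "subnet_ocid" {
  description = "Subnet the gateway is attached to"
  type        = string
}

# Creating the gateway here ties its lifecycle to this configuration,
# so a destroy removes the gateway along with the deployments.
resource "oci_apigateway_gateway" "dev" {
  compartment_id = var.compartment_ocid
  endpoint_type  = "PUBLIC" # assumed; "PRIVATE" is the other option
  subnet_id      = var.subnet_ocid
  display_name   = "fieldservice-dev-gateway" # illustrative name
}

# Exposed so that API deployments can reference the gateway.
output "gateway_id" {
  value = oci_apigateway_gateway.dev.id
}
```

An operator's variant of the module would keep the same `gateway_id` output but return the OCID of an existing gateway instead of creating one.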

The url shows part of the power of using Terraform, where interpolation makes the API deployment specification dynamic. In my deployment environment, I’m maintaining the backend URL, likely to a test instance, but it could also be a service that is created and bootstrapped as part of my Terraform configuration. When I deploy the API, the URL will be properly defined for my testing environment. When I’ve finished my work and checked in my code, the automated pipeline that my operations team maintains would respond to the check-in and promote the API to the next phase. That process would have the appropriate variable to substitute for the backend to be used in that phase.
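Putting the two elements together, a simple proxy-style deployment might be sketched as follows. The resource name `fieldservice_deployment` comes from this post. The module path, variable names, route path, and display values are assumptions for illustration, and the gateway module is assumed to expose a `gateway_id` output.

```hcl
variable "compartment_ocid" {
  type = string
}

variable "subnet_ocid" {
  type = string
}

variable "backend_url" {
  description = "Base URL of the backend for the current environment"
  type        = string
}

module "gateway" {
  source           = "../gateway" # hypothetical path to the gateway module
  compartment_ocid = var.compartment_ocid
  subnet_ocid      = var.subnet_ocid
}

resource "oci_apigateway_deployment" "fieldservice_deployment" {
  compartment_id = var.compartment_ocid
  gateway_id     = module.gateway.gateway_id # derived from the gateway module
  path_prefix    = "/fieldservice"           # assumed prefix
  display_name   = "fieldservice"

  specification {
    routes {
      path    = "/tickets" # assumed route
      methods = ["GET"]

      backend {
        type = "HTTP_BACKEND"
        # Interpolation resolves the backend per environment at deploy time.
        url = "${var.backend_url}/tickets"
      }
    }
  }
}
```

Because the backend is resolved when the configuration is applied, the same file can be promoted from development to production with only the variable values changing.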

Updating the Deployment

Part of the power of Terraform is that it maintains state. When the configuration changes, Terraform compares it against the recorded state and makes only the changes needed to bring the two in line. I can issue a terraform plan command to see the changes that will be made before they are made.

To see this in action, I’ll make a change to the API deployment. My initial deployment only allows a GET action; I can only query or display tickets.

But I want to be able to create service tickets, too, so I add POST as an available method. (Of course, in a real-world scenario, I would also be adding security!)
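Assuming a single /tickets route with an HTTP backend, the change amounts to one extra entry in the route’s methods list. In this sketch, the variable names, route path, and display values are illustrative assumptions. Only the `fieldservice_deployment` resource name is taken from this post.

```hcl
variable "compartment_ocid" {
  type = string
}

variable "gateway_id" {
  description = "OCID of the gateway that hosts the deployment"
  type        = string
}

variable "backend_url" {
  type = string
}

resource "oci_apigateway_deployment" "fieldservice_deployment" {
  compartment_id = var.compartment_ocid
  gateway_id     = var.gateway_id
  path_prefix    = "/fieldservice" # assumed prefix
  display_name   = "fieldservice"

  specification {
    routes {
      path = "/tickets" # assumed route
      # Previously ["GET"]; POST is added so that tickets can be created.
      methods = ["GET", "POST"]

      backend {
        type = "HTTP_BACKEND"
        url  = "${var.backend_url}/tickets"
      }
    }
  }
}
```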

Now, I can run terraform plan to see what modifications would be made.

The actual output is more extensive, but here I can clearly see what part will change and what the change will be. Now I can run terraform apply to update my deployment. When I’m satisfied with my changes, I can then push my updated configuration to my source control repository.

Conclusion

Using Terraform with Oracle Cloud Infrastructure opens up opportunities for development teams to effectively manage their infrastructure and build systems that are easier to set up and maintain because all the configuration resides with the code. Services like API Gateway and your API deployments can be managed with Terraform in an API development lifecycle that will help your teams better manage and support the critical services that run the business.