It’s been just over a year since we announced the Oracle Cloud Infrastructure Container Engine for Kubernetes (OKE) and Registry (OCIR) offerings, foundational pieces in Oracle’s cloud for developing, deploying, and running container-native applications. With KubeCon upon us, it’s a good time to consider not only how Oracle’s cloud offerings have evolved, but also the broader challenges facing Kubernetes and managed Kubernetes offerings as they target deeper adoption by enterprise developer and DevOps teams.

A good starting point is to review the challenges people face in adopting containers and Kubernetes, and how those challenges have evolved over the last year or so. The Cloud Native Computing Foundation (CNCF) survey measures these challenges, and the following graphic shows the results of four consecutive surveys:

Source: CNCF Survey

One notable takeaway from these results is that the purely “technology-related” challenges, such as networking and storage, have dropped fairly dramatically. This drop could be attributed to continued standardization in the CNCF, through efforts like the Container Network Interface (CNI) and the Container Storage Interface (CSI), and to the rise of managed Kubernetes offerings, where storage and networking are pre-integrated for users and should “just work.”

At the same time, the “non-technical” challenges remain prevalent: things like “complexity,” “cultural changes,” and “lack of training” (although some of these are new to the latest survey). Let’s look at these through the lens of enterprise customers and users.

Enterprise Needs and Culture

Oracle Cloud Infrastructure Container Engine for Kubernetes has been built with the needs of enterprise developers in mind. We have the luxury of building on a Gen 2 cloud, which lets us marry the best in managed open source with leading-edge compute, network, and storage cloud technologies to provide a secure, compliant, and high-performance offering to our customers.

With highly available clusters (including the control plane) spread across multiple availability domains, node pools of bare metal or VM instances, and native integration with Identity and Access Management and Kubernetes RBAC, Container Engine for Kubernetes is ready for the most demanding enterprise workloads. Even better, customers get these capabilities while paying only for the underlying IaaS resources (compute, storage, network) they consume.

(As an aside, running Kubernetes clusters on bare metal makes a big difference for our customers; see this comparison.)

A good example of the cultural side is the separation of concerns common in enterprise IT teams, such as between the network team and the development team. With Container Engine for Kubernetes, compartments can give the network team control over the shared network resources (VCNs, subnets, and so on) while letting developers control their own clusters and cluster nodes.

Tackling Complexity

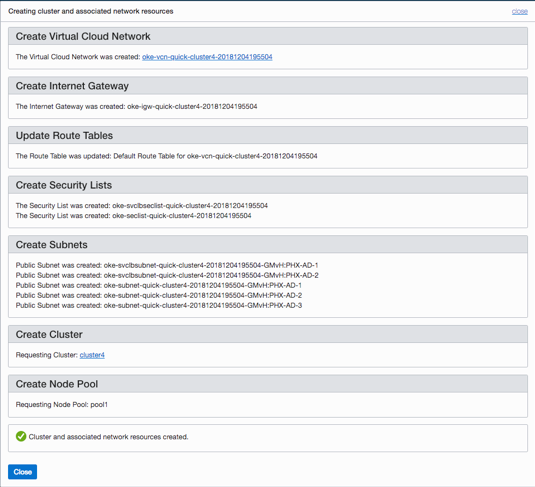

Complexity is what gave rise to managed Kubernetes offerings in the first place. Most customers we speak to want to use the technology to build their applications faster and grow their business, but they don’t want to keep maintaining the Kubernetes infrastructure itself. In addition to making it easy to upgrade to new Kubernetes versions, Container Engine for Kubernetes installs common tooling into clusters by default (the Kubernetes dashboard, Tiller, and Helm). Users can create a new cluster in a couple of clicks with the “quick cluster” capability, which applies a sensible set of defaults (see the following screenshot). Container Engine for Kubernetes worker nodes also use the standard Docker runtime that’s familiar to so many developers. On the registry side, users can curb container image sprawl by setting global retention policies (or policies targeting specific repositories), for example, to remove images that haven’t been pulled or tagged within a certain time frame.

Container Engine for Kubernetes “Quick Cluster”
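To make the retention idea concrete, here is a minimal Python sketch of the selection logic such a policy might apply. It is not the OCIR API: the `Image` record, its `last_pulled` and `last_tagged` fields, and the `images_to_expire` function are hypothetical, and it assumes that an image with no recorded pull or tag activity counts as stale.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable, List, Optional, Set


@dataclass
class Image:
    """Hypothetical registry record; field names are illustrative, not OCIR's."""
    repository: str
    digest: str
    last_pulled: Optional[datetime]  # None if the image was never pulled
    last_tagged: Optional[datetime]  # None if no tag event was recorded


def images_to_expire(
    images: Iterable[Image],
    now: datetime,
    untouched_for: timedelta,
    repositories: Optional[Set[str]] = None,
) -> List[Image]:
    """Return images neither pulled nor tagged within `untouched_for` of `now`.

    If `repositories` is given, only images in those repositories are
    considered (a repository-targeted policy); otherwise the policy is global.
    """
    cutoff = now - untouched_for
    expired: List[Image] = []
    for image in images:
        if repositories is not None and image.repository not in repositories:
            continue
        # Most recent pull or tag event; None if neither was ever recorded.
        last_activity = max(
            (t for t in (image.last_pulled, image.last_tagged) if t is not None),
            default=None,
        )
        # Assumption: an image with no recorded activity is treated as stale.
        if last_activity is None or last_activity < cutoff:
            expired.append(image)
    return expired


if __name__ == "__main__":
    now = datetime(2018, 12, 10, tzinfo=timezone.utc)
    images = [
        Image("app/web", "sha256:aaa", now - timedelta(days=3), now - timedelta(days=90)),
        Image("app/web", "sha256:bbb", now - timedelta(days=45), now - timedelta(days=60)),
        Image("tools/ci", "sha256:ccc", None, now - timedelta(days=100)),
    ]
    # Global 30-day policy: prints ['sha256:bbb', 'sha256:ccc']
    print([i.digest for i in images_to_expire(images, now, timedelta(days=30))])
    # Policy targeted at app/web only: prints ['sha256:bbb']
    print([i.digest for i in images_to_expire(images, now, timedelta(days=30), {"app/web"})])
```

Passing `repositories=None` models a global policy, while a set of repository names models a targeted one; the same cutoff rule applies in both cases.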

As more developers and DevOps teams adopt infrastructure as code, they want to apply the same practices to their Kubernetes clusters. In addition to full API and CLI support, Container Engine for Kubernetes works with the Oracle Cloud Infrastructure Terraform provider and Ansible modules, so DevOps teams can use the open tooling they already know to provision, use, and scale their clusters, including as part of their CI/CD pipelines.

Openness Is Key

Finally, a major part of tackling “complexity” and “lack of training” is being able to draw on the wealth of resources available in the open source community. To that end, it’s critical that any managed Kubernetes offering conforms to upstream open source Kubernetes, so that customers can be assured of portability and avoid lock-in. As a platinum member of the CNCF, Oracle participates in the Certified Kubernetes Conformance Program, and we ensure that every new minor release on Container Engine for Kubernetes is conformant.

Beyond conformance, it’s also important to understand the (open) tooling and solutions that customers typically deploy. For example, the mutating admission webhook controller (MutatingAdmissionWebhook) must be enabled in the managed control plane to support advanced features like automatic sidecar injection for Istio deployments.
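For readers unfamiliar with why sidecar injection depends on this admission controller, the following is a minimal Python sketch of the webhook side: it receives an AdmissionReview for a Pod and answers with a JSON Patch that appends a sidecar container. It assumes the `admission.k8s.io/v1` AdmissionReview request/response shape; the `SIDECAR` spec and the `mutate` function are hypothetical, and Istio’s real injector does considerably more (init containers, volumes, opt-in labels). Serving it over HTTPS and registering it through a MutatingWebhookConfiguration are omitted.

```python
import base64
import json

# Hypothetical sidecar spec. A real injector such as Istio's builds this
# (plus init containers and volumes) from its own configuration.
SIDECAR = {"name": "proxy-sidecar", "image": "example.com/proxy:1.0"}


def mutate(review: dict) -> dict:
    """Answer an AdmissionReview, patching Pods to add SIDECAR if missing.

    Assumes the admission.k8s.io/v1 AdmissionReview request/response shape.
    """
    request = review["request"]
    response = {"uid": request["uid"], "allowed": True}  # uid must be echoed back

    is_pod = request.get("kind", {}).get("kind") == "Pod"
    containers = request.get("object", {}).get("spec", {}).get("containers", [])
    already_injected = any(c.get("name") == SIDECAR["name"] for c in containers)

    if is_pod and not already_injected:
        # JSON Patch (RFC 6902): a path ending in "-" appends to the array.
        patch = [{"op": "add", "path": "/spec/containers/-", "value": SIDECAR}]
        response["patchType"] = "JSONPatch"
        # The API server expects the patch as base64-encoded JSON.
        response["patch"] = base64.b64encode(json.dumps(patch).encode()).decode()

    return {
        "apiVersion": review.get("apiVersion", "admission.k8s.io/v1"),
        "kind": "AdmissionReview",
        "response": response,
    }
```

Skipping the patch when the sidecar is already present keeps the webhook idempotent, which matters because the API server may invoke a mutating webhook more than once for the same object.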

Proven solutions and best practices are also key to handling complexity. Many are available in the community, including ones from Oracle covering monitoring (Prometheus), logging (EFK), Istio, Confluent, Couchbase, and Hazelcast. Others come from partners such as Twistlock and Bitnami.

As Kubernetes grows and its charter expands toward being the de facto operating system of the cloud, tackling complexity will remain an ongoing challenge for the community. In this blog, we’ve looked at how Container Engine for Kubernetes can help address it.

This week we’ll be at KubeCon 2018. If you’re there too, please stop by our booth (P4) and the nearby Oracle lounge!