Oil and gas industry professionals locate oil and gas under the earth and bring them to the surface in the most cost-effective manner possible. Drilling wells is expensive: a single well can cost more than $500 million USD. To make the best possible decisions about where to drill, industry professionals use simulation techniques to improve the accuracy of their forecasts. Advanced reservoir simulation plays a pivotal role in this decision-making by estimating an oilfield's oil and gas production before any wells are drilled.

Oracle Cloud Infrastructure has enabled the best performance for oil and gas reservoir simulations, going further than any other public cloud vendor to make high-performance computing (HPC) viable in the cloud. Reservoir simulations use linear solvers to solve mass balance equations that account for the flow of oil, gas, and water across locations in the model. These equations are complex and require significant compute power for a large field-scale model, beyond the capability of a single server. Instead, a cluster of servers is necessary to handle significant quantities of data exchanged across a large, tightly coupled model. Completing large-scale simulations effectively depends on the speed of the interconnect network that handles the continuous broadcast of flow quantity data between these servers. Oracle’s low-latency remote direct memory access (RDMA) interconnect for bare metal HPC servers is ideal for this type of work.
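To make that communication pattern concrete, here is a minimal sketch. It is not how OPM Flow or any production simulator is implemented: it assumes a toy one-dimensional steady-state pressure problem solved by plain Jacobi iteration, and every name in it (`jacobi_single`, `jacobi_partitioned`, the pressure values) is illustrative. The point is only that once the grid is split across servers, each partition needs its neighbors' edge values on every sweep.

```python
def jacobi_single(n_cells, p_left, p_right, sweeps):
    """Jacobi sweeps for a 1D steady-state pressure profile on one 'server'."""
    p = [0.0] * n_cells
    for _ in range(sweeps):
        padded = [p_left] + p + [p_right]
        p = [0.5 * (padded[i - 1] + padded[i + 1]) for i in range(1, n_cells + 1)]
    return p


def jacobi_partitioned(n_cells, n_parts, p_left, p_right, sweeps):
    """Same iteration with the cells split across n_parts hypothetical servers."""
    size = n_cells // n_parts  # assumption: n_cells is divisible by n_parts
    parts = [[0.0] * size for _ in range(n_parts)]
    exchanged = 0  # count of halo values passed between partitions
    for _ in range(sweeps):
        # Halo exchange: each partition receives its neighbors' edge cells.
        halos = []
        for k in range(n_parts):
            left = p_left if k == 0 else parts[k - 1][-1]
            right = p_right if k == n_parts - 1 else parts[k + 1][0]
            halos.append((left, right))
            exchanged += (k > 0) + (k < n_parts - 1)
        # Local update: each partition touches only its own cells plus halos.
        new_parts = []
        for k, (left, right) in enumerate(halos):
            padded = [left] + parts[k] + [right]
            new_parts.append(
                [0.5 * (padded[i - 1] + padded[i + 1]) for i in range(1, size + 1)]
            )
        parts = new_parts
    flat = [value for part in parts for value in part]
    return flat, exchanged


single = jacobi_single(12, 300.0, 100.0, 50)
multi, exchanged = jacobi_partitioned(12, 4, 300.0, 100.0, 50)
print("identical results:", multi == single)
print("halo values exchanged:", exchanged)
```

In this toy, the partitioned run reproduces the single-server result but requires a halo exchange on every sweep. In a three-dimensional model the halos are whole faces of cells rather than single values, so the cost of each exchange depends heavily on the interconnect.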

Testing an RDMA-Enabled HPC Environment for Reservoir Simulation

To demonstrate how well our HPC infrastructure supports oil and gas reservoir simulation, we used a transparent and repeatable methodology. With both commercial and open source reservoir simulators available, we chose the open source simulator Open Porous Media (OPM) Flow. For the benchmarking tests, we used the Norne reservoir model, which includes data from a Norwegian oil field and is part of the OPM Flow datasets. We also modified the Society of Petroleum Engineers (SPE) Comparative Solution Project SPE5 model to develop an 11.025-million-cell reservoir model. This model contained one oil producer well and one water injector well, and it had roughly 100 times more total grid cells than the Norne model.
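The "roughly 100 times" figure can be sanity-checked with a few lines of arithmetic. The sketch below assumes the Norne grid is 46 × 112 × 22 cells, which is the dimension commonly cited for the public Norne deck and is not stated in this article; the 11.025 million figure comes from the text above.

```python
# Assumption: grid dimensions of the public Norne deck (not given in this article).
NORNE_DIMS = (46, 112, 22)
# From the text: total cells in the modified SPE5 model.
MODIFIED_SPE5_CELLS = 11_025_000


def total_cells(dims):
    """Total number of grid cells, counting active and inactive cells alike."""
    nx, ny, nz = dims
    return nx * ny * nz


norne_cells = total_cells(NORNE_DIMS)
ratio = MODIFIED_SPE5_CELLS / norne_cells
print(f"Norne total cells: {norne_cells:,}")  # prints "Norne total cells: 113,344"
print(f"Size ratio: {ratio:.1f}x")            # prints "Size ratio: 97.3x"
```

Under that assumption, the modified SPE5 model is about 97 times larger by total cell count, which is consistent with the rounded figure above.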

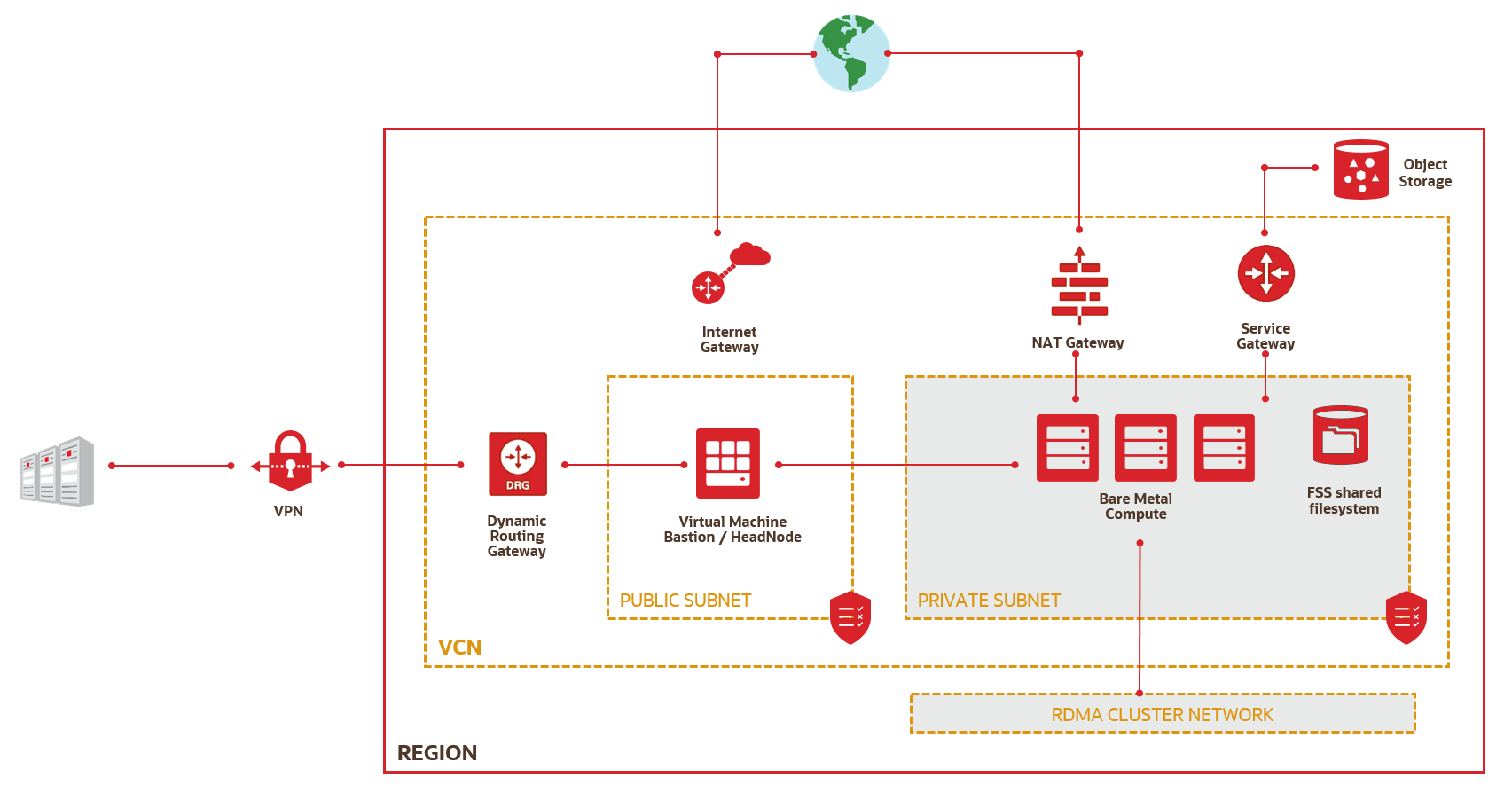

We performed simulations using OPM Flow on Oracle Cloud Infrastructure bare metal HPC instances with RDMA cluster networking. Figure 1 shows the architecture of an RDMA-enabled HPC environment on Oracle Cloud Infrastructure.

Figure 1: Architecture of an RDMA-Enabled HPC Environment on Oracle Cloud Infrastructure

Bare metal BM.HPC2.36 shapes feature 36 Intel Xeon Gold 6154 cores with a clock speed of 3.7 GHz, a 6.4 TB local NVMe drive, and 384 GB of memory. For tightly coupled reservoir simulation systems, ultra-low-latency cluster networking is crucial. The HPC shapes give users the world’s first public cloud bare metal RDMA network, enabled by a Mellanox 100 Gbps network card. Oracle Cloud Infrastructure uses RDMA over Converged Ethernet (RoCEv2), which delivers 1.5-microsecond single-hop latency, unparalleled among public clouds. A single RDMA cluster on Oracle Cloud can scale up to 20,000 cores. The bare metal compute and networking approach uses no hypervisor or server agents and allows no resource oversubscription. This combination eliminates OS and network jitter, delivering consistent, predictable high performance.
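To see why latency matters so much for tightly coupled workloads, consider a back-of-the-envelope estimate. The sketch below uses a simplified latency-plus-bandwidth model with the 1.5-microsecond latency and 100 Gbps bandwidth quoted above. It ignores protocol overhead, congestion, and multi-hop routes, and the message sizes are arbitrary examples rather than values measured from a simulator.

```python
LATENCY_S = 1.5e-6      # single-hop latency quoted in the text
BANDWIDTH_BPS = 100e9   # 100 Gbps network card quoted in the text


def transfer_time(message_bytes, latency_s=LATENCY_S, bandwidth_bps=BANDWIDTH_BPS):
    """Estimate one-way message time as latency + size / bandwidth."""
    return latency_s + (message_bytes * 8) / bandwidth_bps


def latency_share(message_bytes, latency_s=LATENCY_S, bandwidth_bps=BANDWIDTH_BPS):
    """Fraction of the estimated transfer time that is pure latency."""
    return latency_s / transfer_time(message_bytes, latency_s, bandwidth_bps)


# Arbitrary example message sizes in bytes (illustrative only).
for size in (64, 8_192, 1_048_576):
    t_us = transfer_time(size) * 1e6
    print(f"{size:>9} B: {t_us:8.2f} us, latency share {latency_share(size):.0%}")
```

Under this model, small messages are almost entirely latency: a 64-byte message spends more than 99% of its transfer time waiting on the wire rather than moving payload. Workloads that exchange many small messages per iteration therefore benefit more from lower latency than from extra bandwidth.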

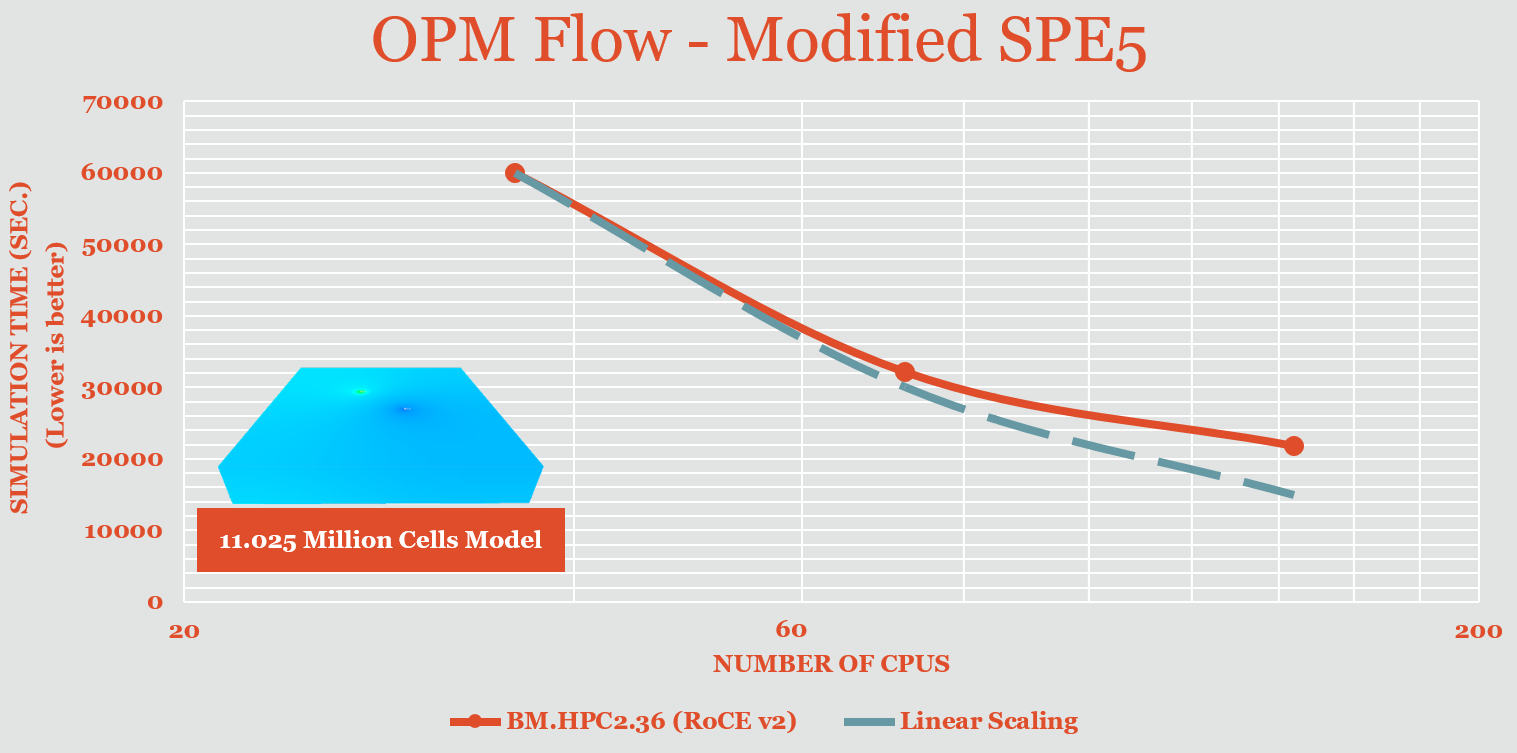

Linear Scaling for Reservoir Simulations

The benchmarking simulations of the modified SPE5 model demonstrate strong scaling for reservoir simulation on HPC clusters. Linear scaling means that performance increases in proportion to the number of processors: a workload running on N processors completes N times faster than it does on a single processor. Figure 2 shows the scaling of a model with 11.025 million cells, along with the theoretical linear scaling shown by the gray dotted line. The HPC infrastructure can achieve close to linear scaling for reservoir simulations using simulation models with tens of millions of grid cells. The bare metal compute and cluster network offered by Oracle Cloud make this result possible.

Figure 2: Scaling of OPM Flow Reservoir Simulations with a Modified SPE5 Model on Oracle Cloud Infrastructure Bare Metal HPC Clusters
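The definition of linear scaling translates directly into two numbers that are easy to compute from wall-clock times: speedup and parallel efficiency. The sketch below shows the calculation. The run times in it are hypothetical placeholders, not measurements from this benchmark, and the function names are illustrative.

```python
def speedup(baseline_time, parallel_time):
    """How many times faster the parallel run is than the baseline run."""
    return baseline_time / parallel_time


def parallel_efficiency(baseline_time, parallel_time, baseline_units, parallel_units):
    """Achieved speedup divided by the ideal (linear) speedup; 1.0 is linear."""
    ideal = parallel_units / baseline_units
    return speedup(baseline_time, parallel_time) / ideal


# HYPOTHETICAL wall-clock times in seconds, keyed by node count.
# These are placeholders for illustration, NOT results from this article.
runs = {1: 1000.0, 2: 520.0, 4: 270.0, 8: 145.0}

for nodes, seconds in runs.items():
    s = speedup(runs[1], seconds)
    e = parallel_efficiency(runs[1], seconds, 1, nodes)
    print(f"{nodes} node(s): speedup {s:.2f}x, efficiency {e:.0%}")
```

An efficiency of 100% corresponds to the theoretical linear scaling line. Values below that show how much is lost to communication and other overhead as the same fixed-size model is spread over more processors.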

Performance Compared with Other Clouds

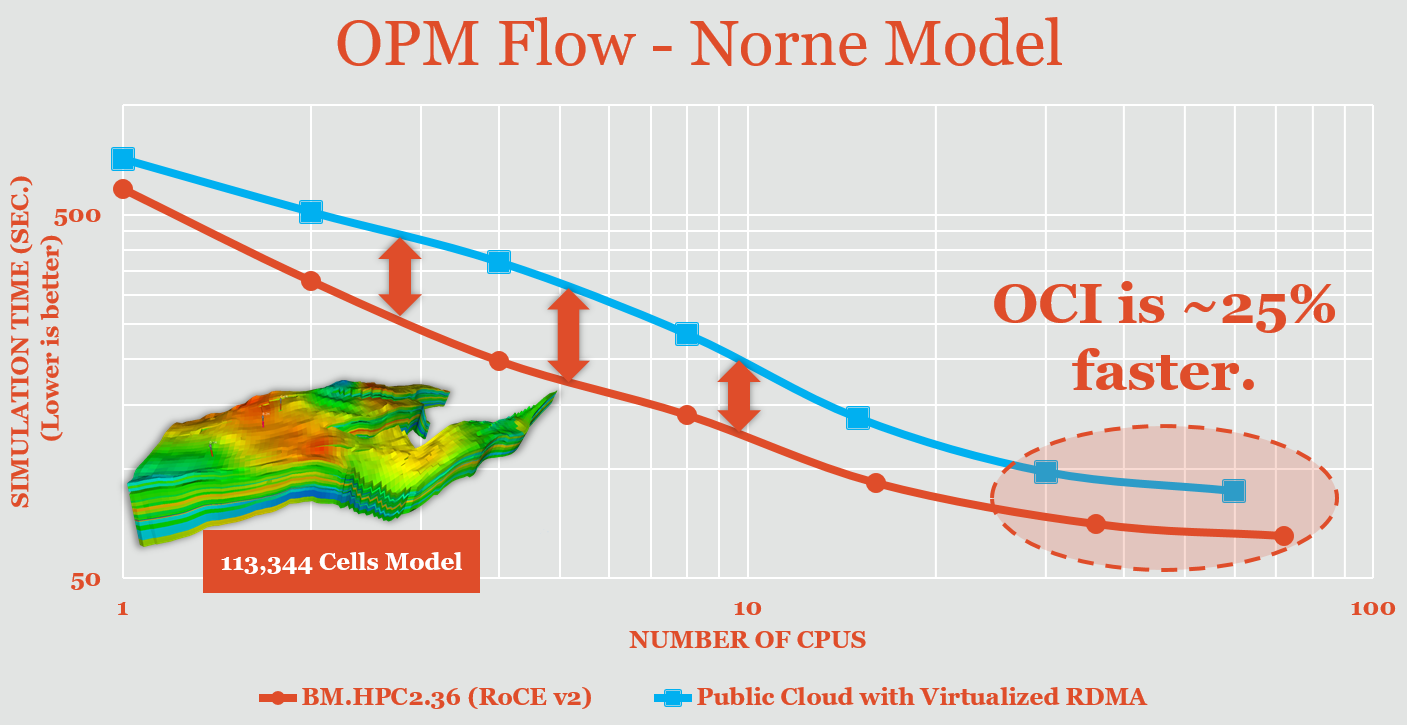

Next, we compared reservoir simulation performance on Oracle Cloud Infrastructure with performance on another public cloud. Figure 3 compares the Norne model benchmarks run on the bare metal HPC cluster with the publicly available benchmarks from a public cloud that uses virtualized RDMA.

Figure 3: Comparison of Norne Model Performance Between Oracle Cloud Infrastructure (Bottom, Red Line) and Another Public Cloud with Virtualized RDMA (Top, Blue Line)

Oracle Cloud Infrastructure performs better across the entire benchmarking range and is almost 25% faster than the public cloud with virtualized RDMA for simulations run on multiple cluster nodes. These benchmarks demonstrate a significant advantage offered by Oracle Cloud Infrastructure’s bare metal compute and RDMA cluster networks for reservoir simulation workloads.

We strongly believe that Oracle offers oil and gas industry customers an advantage in processing key HPC workloads. Try it yourself—sign up for a free trial of Oracle Cloud Infrastructure or learn more about Oracle Cloud Infrastructure’s HPC solutions. For more examples of how Oracle Cloud Infrastructure outperforms the competition, follow #LetsProveIt on Twitter.