We’re proud to announce our validation of the Quobyte file system on Oracle Cloud Infrastructure (OCI). After automating the client and server deployment on OCI, we tested it across a range of use cases, from large sequential IO to small files. We found that Quobyte is ideal for traditional high-performance computing (HPC) workloads with large sequential IO and parallel reads and writes. It also performs well on more demanding small-file workloads, such as fluid dynamics or electronic design automation (EDA).

Why Quobyte?

This file system is a great fit for EDA workloads for a few key reasons. Running simulations requires concurrent, lockless file access. Quobyte’s policy-based data management helps optimize performance for small-file and metadata-heavy workloads. Finally, because Quobyte has no central storage bottleneck, manufacturers and researchers can keep scaling performance by adding hardware.

Quobyte is a parallel, distributed, shared-nothing POSIX file system. It supports both parallel file sharing and network file system workloads on a single storage system, with a range of interfaces to facilitate data sharing. It includes native drivers for MPI-IO, Linux, Windows, HDFS, S3, and TensorFlow.

Policy-based data placement lets you combine the performance of local NVMe solid-state drives (SSDs) with the cost efficiency of persistent block volume storage. You can store the same volume on both backends or tier data transparently between them. This flexibility lets you balance cost, performance, and data durability.
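Quobyte’s placement policies are configured inside Quobyte itself, and the sketch below is not its policy syntax or API. It is a minimal Python model of the kind of decision a tiering policy expresses, assuming a hypothetical rule based on file size and last access time. The names `choose_tier` and `FileInfo`, the tier labels, and both thresholds are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical labels for the two backends: local NVMe SSDs and OCI block volumes.
NVME = "local-nvme"
BLOCK = "block-volume"


@dataclass
class FileInfo:
    path: str
    size_bytes: int
    last_access: datetime


def choose_tier(f: FileInfo, now: datetime,
                small_file_limit: int = 1 << 20,
                cold_after: timedelta = timedelta(days=30)) -> str:
    """Return the backend for one file under an illustrative placement rule.

    Small files, and large files read within `cold_after`, stay on NVMe for
    low latency; large files idle for longer go to the block volumes.
    """
    if f.size_bytes <= small_file_limit:
        return NVME
    if now - f.last_access >= cold_after:
        return BLOCK
    return NVME


now = datetime(2020, 6, 1)
print(choose_tier(FileInfo("/eda/cell.lib", 4096, now), now))  # local-nvme
print(choose_tier(FileInfo("/sim/run1.dat", 50 * 2**30,
                           now - timedelta(days=90)), now))   # block-volume
```

Keeping small files on NVMe in this model reflects the text’s point that small-file and metadata performance benefits from the faster backend, while large, cold data is where block volume cost efficiency pays off.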

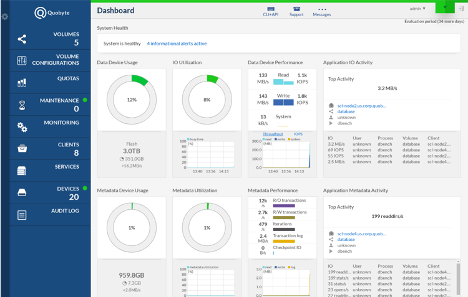

Quobyte is simple to operate. We’ve built an auto-deploy template, which we cover later in this post, but the simplicity doesn’t end there. Use the web interface or CLI to manage clusters, view live performance metrics, or migrate data with a click.

Quobyte delivers scalable performance without storage bottlenecks. Adding storage servers increases bandwidth, capacity, and IOPS. Growing storage is also easy: attach more persistent block volumes to existing storage servers, or add new storage servers.
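The two growth paths described above can be expressed as a toy model. This Python sketch assumes bandwidth and capacity add up linearly per server and ignores replication overhead and metadata load. The per-server values (25 Gbps of client-facing bandwidth and 51.2 TB, matching eight 6.4-TB drives) are assumptions for illustration, not measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageServer:
    # Illustrative per-server figures; substitute your own measurements.
    bandwidth_gbps: float  # client-facing network bandwidth
    capacity_tb: float     # raw capacity of attached devices


def cluster_totals(servers):
    """Aggregate bandwidth and raw capacity under a linear scale-out model."""
    return (sum(s.bandwidth_gbps for s in servers),
            sum(s.capacity_tb for s in servers))


def add_block_volumes(server, count, size_tb):
    """Grow an existing server's capacity; its network bandwidth is unchanged."""
    return StorageServer(server.bandwidth_gbps,
                         server.capacity_tb + count * size_tb)


node = StorageServer(bandwidth_gbps=25.0, capacity_tb=51.2)
print(cluster_totals([node] * 6))  # six servers
print(cluster_totals([node] * 7))  # one more server: more bandwidth and capacity
print(cluster_totals([add_block_volumes(node, 4, 1.0)] * 6))  # more capacity only
```

In this simplified model, adding block volumes raises capacity without touching network bandwidth, while adding a server raises both, which is why scaling out is the path to more throughput.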

Quobyte for Oracle Cloud Infrastructure

OCI and Quobyte are a great match because both are built for the high-end compute market. “We’re very excited to work with Oracle Cloud. Their approach to bare metal and fast networking is the perfect fit for the scale-out workloads Quobyte is designed for. Quobyte and Oracle Cloud offer the reliability enterprises need to run HPC and scale-out applications,” said Bjorn Kolbeck, co-founder and CEO of Quobyte. Quobyte is a cloud native solution: storage scales out for maximum performance, and you can deploy new clusters or add resources in minutes.

Quobyte also benefits from our non-oversubscribed, high-performance network, which delivers maximum network throughput and lower latency. Because both OCI and Quobyte are designed for demanding scale-out workloads, they’re a natural fit.

Quobyte enables OCI customers to build a high-performance file server with both local NVMe SSDs and network-attached block volumes for low latency, high IOPS, and high IO throughput. It’s as easy as deploying Quobyte with our automated Terraform template, oci-quobyte.

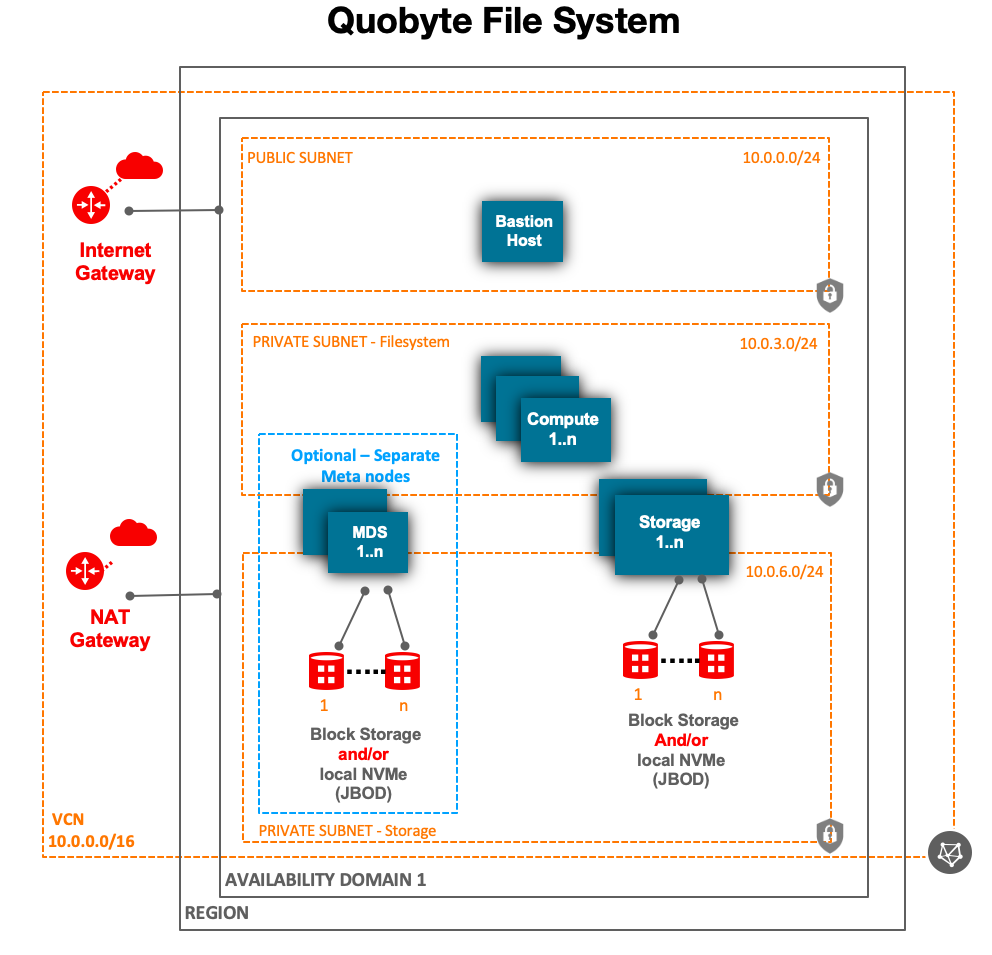

High-level architecture

Our Terraform template starts by provisioning the required OCI resources, such as compute, storage, and networking. The template then deploys and configures the Quobyte file system.

Quobyte clusters have three core services: registry, metadata, and data. The template deploys all three on the same node. For metadata-intensive workloads such as EDA, Quobyte also lets you provision separate metadata nodes.
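To make the two layouts concrete, here is a small Python sketch that assigns the three services to nodes. It is not part of the oci-quobyte template; the function name `plan_roles`, the node names, and the choice to keep the registry on the dedicated metadata nodes are assumptions made for the example.

```python
SERVICES = ("registry", "metadata", "data")


def plan_roles(nodes, metadata_nodes=0):
    """Map each node name to the set of Quobyte services it would run.

    metadata_nodes=0 models the default layout: all three services
    co-located on each node. A positive value dedicates the first
    `metadata_nodes` hosts to registry and metadata (a hypothetical split
    for metadata-intensive work such as EDA) and leaves the rest for data.
    """
    if metadata_nodes < 0 or metadata_nodes >= len(nodes):
        raise ValueError("need at least one data node")
    if metadata_nodes == 0:
        return {n: set(SERVICES) for n in nodes}
    return {n: ({"registry", "metadata"} if i < metadata_nodes else {"data"})
            for i, n in enumerate(nodes)}


for node, roles in plan_roles(["qb-1", "qb-2", "qb-3", "qb-4"],
                              metadata_nodes=1).items():
    print(node, sorted(roles))
```

Separating metadata onto its own hosts keeps metadata operations from competing with bulk data IO for the same CPUs and disks, which is the motivation the text gives for EDA.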

Choose between bare metal instances and virtual machines (VMs), in either Standard or DenseIO Compute shapes. We recommend bare metal for optimal throughput, because these instances come with two physical 25-Gbps network interface cards (NICs). You can use one NIC for all traffic to block volume storage and the other for incoming traffic to the data and metadata nodes.

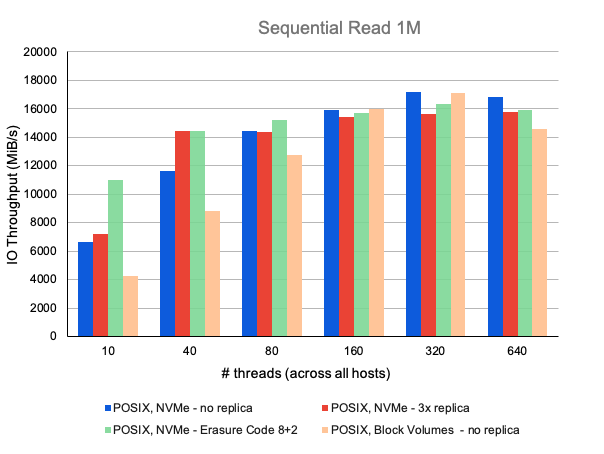

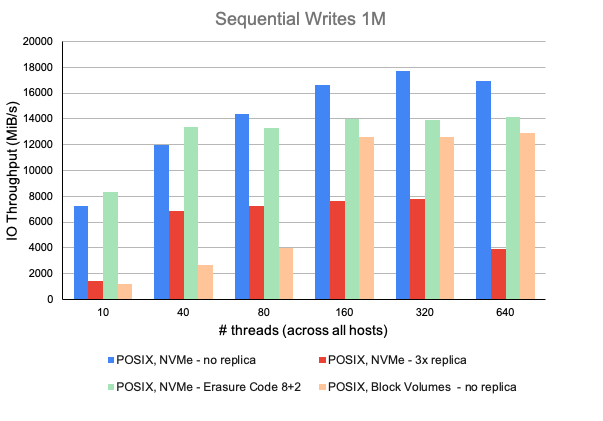

Benchmarking

Our testbed consisted of six Quobyte storage nodes and 10 client nodes. Each storage node had two 25-Gbps network connections, one for cluster communication and one for client access. Each client node had one 25-Gbps network connection. Storage nodes used the BM.DenseIO2.52 shape, and client nodes used BM.Standard2.52. Each storage node had eight 6.4-TB Intel NVMe SSDs and 1-TB block volumes in the Balanced performance tier. All machines ran Oracle Linux Server 7.7 with Linux kernel 4.14.35, Java 11, and Quobyte 2.25.1.1.
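To put benchmark numbers for this testbed in context, it helps to know the ceiling that the client-facing network alone imposes. This is a back-of-the-envelope Python sketch, assuming throughput is limited only by NIC line rate; it ignores protocol overhead, replication traffic on the cluster NIC, and device limits. The function names are hypothetical.

```python
def gbps_to_gbytes(gbps):
    """Convert gigabits per second to gigabytes per second (decimal units)."""
    return gbps / 8


def network_ceiling_gbps(storage_nodes, storage_client_nic_gbps,
                         client_nodes, client_nic_gbps):
    """Upper bound on aggregate client throughput set by the network alone."""
    server_side = storage_nodes * storage_client_nic_gbps
    client_side = client_nodes * client_nic_gbps
    return min(server_side, client_side)


# Testbed: six storage nodes, each with one 25-Gbps NIC for client access,
# and ten client nodes with one 25-Gbps NIC each.
ceiling = network_ceiling_gbps(6, 25, 10, 25)
print(ceiling, gbps_to_gbytes(ceiling))  # 150 18.75
```

With six storage nodes serving ten clients, the storage side (150 Gbps, about 18.75 GB/s) is the tighter bound, so in this simplified model adding storage nodes raises the ceiling while adding clients does not.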

We ran tests with the standard Quobyte OCI deployment and no extra tuning. On the clients, we enabled unaligned IO for parallel workloads. We also disabled xattrs and set default permissions for maximum metadata performance.
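The IOR parameters behind these results aren’t listed here. For readers who want to run a similar test, this Python sketch assembles a typical IOR command line. The flags (`-a`, `-b`, `-t`, `-w`, `-r`, `-e`, `-C`, `-F`, `-o`) are standard IOR options and `--hostfile` is Open MPI syntax; the function name, process count, sizes, hostfile, and mount path are hypothetical placeholders, not the settings we used.

```python
import shlex


def ior_command(num_procs, test_file, hostfile=None, block_size="4g",
                transfer_size="1m", file_per_process=True, api="POSIX"):
    """Build an mpirun command line for a sequential IOR write-then-read test."""
    cmd = ["mpirun", "-np", str(num_procs)]
    if hostfile:
        cmd += ["--hostfile", hostfile]  # spread ranks across client nodes
    cmd += ["ior",
            "-a", api,            # I/O interface, for example POSIX or MPIIO
            "-b", block_size,     # contiguous bytes written per process
            "-t", transfer_size,  # size of each individual I/O call
            "-w", "-r",           # run the write phase, then the read phase
            "-e",                 # fsync when closing after writes
            "-C",                 # read back another rank's data to defeat client caching
            "-o", test_file]
    if file_per_process:
        cmd.append("-F")          # one file per process instead of one shared file
    return cmd


print(shlex.join(ior_command(160, "/quobyte/bench/ior.dat",
                             hostfile="clients.txt")))
```

Dropping `-F` switches the run to a single shared file, the pattern where the unaligned IO setting mentioned above matters for parallel writers.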

The following charts show our IOR performance benchmark results.

Management GUI

Administrators can configure Quobyte through an easy-to-manage web GUI or directly from the command line. Deploy machines, set permissions, provision quotas, set automatic data tiers, and more.

Conclusion

We’re excited to partner with Quobyte because we recognize the unique value this solution brings to the cloud. Believe it or not, we covered only a few of Quobyte’s key features. This file system enables everything from policy-based data placement to file striping to IP- and X.509-based access controls. To learn more, see the Quobyte documentation.
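File striping is what allows many clients to read and write one large file in parallel. As a generic illustration of round-robin striping, not Quobyte’s internal layout, this Python sketch maps a byte offset in a file to the stripe that stores it. The function name `locate` and the parameters `stripe_width` and `block_size` are assumptions.

```python
def locate(offset, stripe_width, block_size):
    """Map a byte offset in a file to (stripe index, offset within that stripe).

    Round-robin striping: consecutive blocks of `block_size` bytes go to
    stripes 0, 1, ..., stripe_width - 1, then wrap around to stripe 0.
    """
    if offset < 0 or stripe_width < 1 or block_size < 1:
        raise ValueError("invalid striping parameters")
    block = offset // block_size      # which block of the file holds the offset
    stripe = block % stripe_width     # round-robin choice of stripe
    row = block // stripe_width       # blocks already on this stripe before it
    return stripe, row * block_size + offset % block_size


MiB = 1 << 20
print(locate(5 * MiB + 10, stripe_width=4, block_size=MiB))  # (1, 1048586)
```

Because consecutive blocks land on different stripes, and stripes can live on different storage servers, a large sequential read or write is spread across several servers at once.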

Quobyte lets you work like a hyperscaler, without forcing you to choose between performance and reliability. Get started today by setting up Quobyte on Oracle Cloud Infrastructure.