This post is the second in a four-part series on autoscaling a load-balanced web application. You can find links to the entire series at the end of this post.

In Part 1, we set up the scenario of TheSmithStore, a fictional online retailer that needs its web application to handle variable amounts of customer traffic. TheSmithStore has decided to harness the scalability of Oracle Cloud Infrastructure to keep its web application available, even during unexpected traffic spikes.

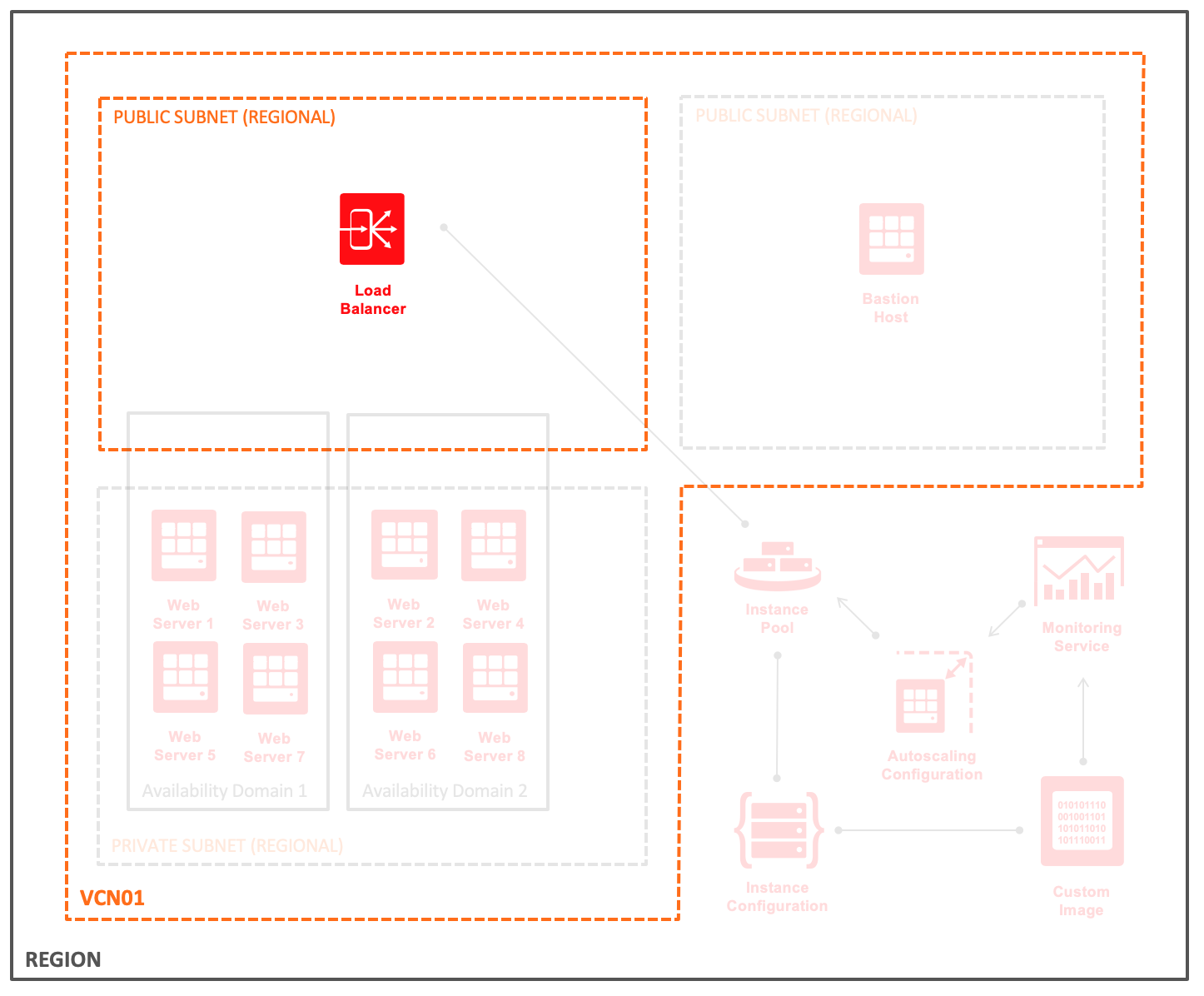

The first component of TheSmithStore configuration is the Load Balancing service. The diagram above was introduced in Part 1; here it highlights the load balancer components. Before we look at the details of TheSmithStore load balancer, let’s review the Oracle Cloud Infrastructure Load Balancing service.

Load Balancing Service Overview

The essential features of any load balancer are distributing load across backend resources, determining the health of backend resources, and being fault-tolerant. The Oracle Cloud Infrastructure Load Balancing service includes all of these features and more.

The core of the Load Balancing service is the load balancer itself. A load balancer can have a public or a private IP address, and it is provisioned with the bandwidth that you need. Every public load balancer is regional in scope and consists of a primary and a standby load balancer. Where these redundant load balancers are placed depends on whether you use a regional subnet (recommended) or two availability domain–specific subnets.

Each load balancer has a backend and a frontend. The backend of the load balancer distributes incoming client requests to a set of servers for processing. The frontend of the load balancer processes incoming client connections.

The backend of a load balancer can distribute traffic by using one of three policies: weighted round-robin, IP hash, or least connections. Each backend server is a member of a backend set. A load balancer can have multiple backend sets, each with its own health check and the option to use SSL to encrypt traffic between the load balancer and the backend servers. The instance pool connects to the load balancer through a backend set (see Part 4 of the series).
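To make the three policies concrete, the following toy Python sketch shows how each one might choose a backend server. It is not the Load Balancing service's implementation: the `Backend` class, the example addresses, and the use of SHA-256 for IP hashing are assumptions made for illustration.

```python
import hashlib
import itertools
from dataclasses import dataclass


@dataclass
class Backend:
    # Hypothetical model of a backend server, for illustration only.
    address: str                 # "ip:port" of the backend server
    weight: int = 1              # relative share used by weighted round-robin
    active_connections: int = 0  # open connections, used by least connections


def weighted_round_robin(backends):
    """Cycle through backends, repeating each one `weight` times per cycle."""
    expanded = [b for b in backends for _ in range(b.weight)]
    return itertools.cycle(expanded)


def ip_hash(backends, client_ip):
    """Map a client IP to a fixed backend, so the same client keeps the same server."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return backends[int.from_bytes(digest[:4], "big") % len(backends)]


def least_connections(backends):
    """Pick the backend that is currently serving the fewest connections."""
    return min(backends, key=lambda b: b.active_connections)
```

This simple weighted round-robin sends consecutive requests to a heavier backend back to back; real implementations usually interleave picks more evenly, but the share of traffic each backend receives is the same.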

The frontend consists primarily of a listener that is configured to handle HTTP, HTTPS, or TCP traffic. The frontend can also be associated with virtual hostnames and can route incoming requests to a particular backend set based on a ruleset. Additionally, each listener can have its own set of SSL certificates, private keys, and certificate authority (CA) certificates.
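As a rough illustration of hostname-based routing, the sketch below models a listener that picks a backend set from the request's `Host` header and falls back to a default backend set. The `Listener` class and the hostnames and backend set names are hypothetical; this is not the Load Balancing API schema.

```python
from dataclasses import dataclass, field


@dataclass
class Listener:
    # Hypothetical model of a frontend listener; not the OCI API schema.
    protocol: str
    port: int
    default_backend_set: str
    # Maps a lowercase virtual hostname to the backend set that serves it.
    hostname_routes: dict = field(default_factory=dict)

    def route(self, host_header: str) -> str:
        """Choose a backend set name from the request's Host header."""
        host = host_header.split(":", 1)[0].lower()  # drop any ":port" suffix
        return self.hostname_routes.get(host, self.default_backend_set)
```

For example, with `hostname_routes={"api.example.com": "api-servers"}`, a request for `api.example.com:443` goes to `api-servers`, and a request for any other host goes to the default backend set.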

For more information about the Load Balancing service, including topics like session persistence and HTTP “X-” headers, see the service documentation.

Improved Workflow for Creating Load Balancers

In June, the Oracle Cloud Infrastructure Console was updated with a new workflow for creating load balancers. The workflow reduces deploying a load balancer to three steps:

- You specify details about the core load balancer, the backend, and the frontend.

- After you complete the workflow, the load balancer is provisioned, typically in less than one minute.

- After the load balancer is provisioned, you configure any virtual hostnames, path routes, and HTTP header rulesets.
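If you script this workflow instead of using the Console, the second step becomes a wait for provisioning to finish. The sketch below polls a state-reporting function until the load balancer is ready. `get_state` is a hypothetical stand-in that you would back with your own API call, and the state names `ACTIVE` and `FAILED` are assumed to match the Load Balancing lifecycle states.

```python
import time


def wait_until_active(get_state, timeout_s=300.0, interval_s=5.0, sleep=time.sleep):
    """Poll a load balancer's lifecycle state until it reports ACTIVE.

    `get_state` (hypothetical) returns the current lifecycle state string.
    Returns the number of seconds spent waiting between polls.
    """
    waited = 0.0
    while True:
        state = get_state()
        if state == "ACTIVE":
            return waited
        if state == "FAILED":
            raise RuntimeError("load balancer provisioning failed")
        if waited >= timeout_s:
            raise TimeoutError(f"still {state} after {waited:.0f}s")
        sleep(interval_s)  # injectable so tests can skip real sleeping
        waited += interval_s
```

Because provisioning typically takes less than a minute, a five-minute timeout leaves generous headroom.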

Now let’s walk through the new workflow to set up the load balancer for TheSmithStore.

Configure the Load Balancer for TheSmithStore

We configure the load balancer for TheSmithStore to be in a regional public subnet and to respond to traffic by using the HTTPS protocol.

The Load Balancers menu is under the Networking section of the Oracle Cloud Infrastructure Console main menu. On the Load Balancers page, click Create Load Balancer to start the creation workflow.


On the Add Details page of the workflow, you specify the load balancer type (public or private), its bandwidth, and its network details.

TheSmithStore uses a small, public load balancer, placed in a regional subnet.
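If you automate this step instead of using the Console, the same choices become fields in the create request. The sketch below builds those core fields as a Python dictionary. The camelCase field names are assumed to follow the Load Balancing API's `CreateLoadBalancerDetails`, and treating the Console's small option as the `100Mbps` shape is also an assumption; verify both against the API reference before use.

```python
def add_details(compartment_id, subnet_ids, shape_name="100Mbps",
                is_private=False, display_name="thesmithstore-lb"):
    """Build the core fields of a create-load-balancer request body.

    Field names and the default shape name are assumptions; the display
    name is a hypothetical example.
    """
    # A regional subnet needs one subnet ID; AD-specific placement needs two.
    if len(subnet_ids) not in (1, 2):
        raise ValueError("use one regional subnet or two AD-specific subnets")
    return {
        "compartmentId": compartment_id,
        "displayName": display_name,
        "shapeName": shape_name,
        "isPrivate": is_private,
        "subnetIds": list(subnet_ids),
    }
```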


On the Choose Backends page of the workflow, specify the load balancing policy, backend servers, and health checks.

TheSmithStore uses the weighted round-robin policy. The instance pool created in Part 4 of this series attaches to this load balancer's backend set. The default health check is sufficient to determine the health of TheSmithStore backend servers.

Note: Because the backend set name is a required reference for setting up the instance pool in Part 4, we specify thesmithstore-webservers as the name in the Advanced Options.
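The backend choices can be sketched the same way. This hypothetical helper returns a `backendSets` entry keyed by the backend set name from the note above. The policy strings and `healthChecker` field names are assumptions based on the Load Balancing API, and the health check values are illustrative rather than the Console's defaults.

```python
def backend_set(name="thesmithstore-webservers", policy="ROUND_ROBIN",
                hc_port=80, hc_path="/"):
    """Build a backendSets entry keyed by the backend set name.

    The backend list starts empty: in Part 4, the instance pool is expected
    to add its instances to this set. Health check values are illustrative.
    """
    allowed = {"ROUND_ROBIN", "IP_HASH", "LEAST_CONNECTIONS"}
    if policy not in allowed:
        raise ValueError(f"policy must be one of {sorted(allowed)}")
    return {
        name: {
            "policy": policy,
            "backends": [],
            "healthChecker": {
                "protocol": "HTTP",
                "port": hc_port,
                "urlPath": hc_path,
                "returnCode": 200,
            },
        }
    }
```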


On the Configure Listener page of the workflow, specify details related to the frontend, or listener, of the load balancer.

For TheSmithStore, HTTPS is the protocol for client connections. A private key, certificate, and CA certificate are required when using HTTPS.
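The listener and its certificate can also be expressed as request fragments. In this sketch, the listener name, certificate name, and port 443 are assumptions, and modeling HTTPS as protocol `HTTP` plus an `sslConfiguration` block is our understanding of the Load Balancing API rather than something this post confirms; check the API reference before relying on this shape.

```python
def https_listener(backend_set_name, cert_name, public_cert, private_key,
                   ca_cert, listener_name="thesmithstore-https"):
    """Build listener and certificate entries for an HTTPS frontend.

    All names and the request shape are assumptions for illustration.
    """
    # This post lists all three PEM inputs as required when using HTTPS.
    for label, pem in (("certificate", public_cert),
                       ("private key", private_key),
                       ("CA certificate", ca_cert)):
        if not pem or "-----BEGIN" not in pem:
            raise ValueError(f"{label} must be a PEM-encoded string")
    certificates = {
        cert_name: {
            "certificateName": cert_name,
            "publicCertificate": public_cert,
            "privateKey": private_key,
            "caCertificate": ca_cert,
        }
    }
    listeners = {
        listener_name: {
            "defaultBackendSetName": backend_set_name,
            "port": 443,  # assumed standard HTTPS port
            "protocol": "HTTP",
            "sslConfiguration": {"certificateName": cert_name},
        }
    }
    return listeners, certificates
```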


After you create the load balancer, copy the load balancer OCID from the details page. In addition to the backend set name, the load balancer OCID is required when you set up the instance pool in Part 4.
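Because a mistyped or wrong OCID only surfaces when you set up the instance pool, a quick sanity check on the copied value can help. This sketch assumes the documented OCID layout `ocid1.<resource type>.<realm>.[region][.future use].<unique id>` and that load balancer OCIDs use the resource type `loadbalancer`; the function name is hypothetical.

```python
def check_load_balancer_ocid(ocid: str) -> str:
    """Sanity-check a copied load balancer OCID and return it trimmed.

    Assumes the layout ocid1.<resource type>.<realm>.[region][.future use].<unique id>.
    """
    ocid = ocid.strip()  # copied values often carry stray whitespace
    parts = ocid.split(".")
    if len(parts) < 5 or parts[0] != "ocid1":
        raise ValueError("not an OCID")
    if parts[1] != "loadbalancer":
        raise ValueError(f"expected a load balancer OCID, got a {parts[1]} OCID")
    if not parts[-1]:
        raise ValueError("OCID is missing its unique ID")
    return ocid
```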

Note: The public IP address of the load balancer is displayed only after provisioning is complete. The address is assigned automatically from the Oracle Cloud Infrastructure public IP pool.

And we’re done. A highly available load balancer for TheSmithStore is ready to be used with the instance pool created in Part 4.

Wrapping Up

The load balancer is the public endpoint that web requests from TheSmithStore customers reach first. Until backend servers are attached to the load balancer, clients receive a 502 Bad Gateway error, and the overall health of the load balancer is Unknown. In Part 4 of this series, the backend set is attached to an instance pool that is managed by an autoscaling configuration. Before that, Part 3 covers a few things to consider when using Compute autoscaling.
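The 502 behavior can be pictured with a toy model: when no backend is available, the load balancer answers the request itself instead of forwarding it. The function and its arguments are hypothetical and only illustrate the idea; they do not describe the service's internals.

```python
from typing import Callable, Optional, Sequence, Tuple


def route_or_502(
    healthy_backends: Sequence[str],
    pick: Callable[[Sequence[str]], str],
) -> Tuple[Optional[int], Optional[str]]:
    """Toy model of the load balancer's decision for a single request.

    Returns (502, None) when there is nothing to forward to; otherwise
    returns (None, backend), where None means the chosen backend's own
    response status is passed back to the client.
    """
    if not healthy_backends:
        # No backend servers attached (or none healthy): 502 Bad Gateway.
        return 502, None
    # Otherwise delegate the choice to a load-balancing policy function.
    return None, pick(healthy_backends)
```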

As always, you can try all these features for yourself with a free trial. If you don’t already have an Oracle Cloud account, go to https://www.oracle.com/cloud/free/ to sign up today.

Blog Series Links

Part 1: Autoscaling a Load Balanced Web Application

Part 2: You are here.

Part 3: Designing Images for Compute Autoscaling

Part 4: Using Compute Autoscaling with the Load Balancing Service