Additional contributors: Leo Leung and Akshai Parthasarathy, OCI Product Marketing

The following blog is intended to outline our general product direction. It’s intended for information purposes only and may not be incorporated into any contract. It isn’t a commitment to deliver any material, code, or functionality, and shouldn’t be relied on in making purchasing decisions. The development, release, timing, and pricing of any features or functionality described for Oracle’s products can change and remains at the sole discretion of Oracle Corporation.

Core cloud infrastructure is the foundation for your cloud estate. Like the engine of your car, its purpose is to get the job done, consistently and quietly. But just as automobile engines have evolved from gasoline-powered to hybrid and electric motors that do the same thing more efficiently, your core cloud infrastructure can, and should, continue to evolve and improve.

OCI has been evaluated by industry analysts as providing required IaaS and PaaS capabilities for cloud workloads. We are continuing to innovate, and today at Oracle CloudWorld we announced new compute, storage, and networking capabilities that can simplify how you use core cloud infrastructure. They range from compute instances and block volumes that can dynamically adjust to meet demand to high-performance NVIDIA GPU instances that can dramatically simplify AI training and graphics workloads. These capabilities are intended to provide flexibility, ease of use, security, and high performance.

Flexible infrastructure

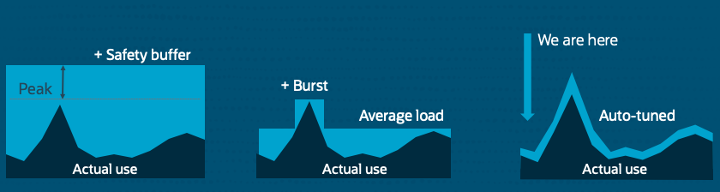

According to Omdia, cloud providers package resources into fixed sizes, such as a standard compute instance with 2 CPUs and 8 GB of memory. This packaging places the burden on customers to choose the right-sized package for each workload. In addition, if you use only half the resources in a package, you pay for capacity that sits idle, which is wasteful and inefficient from a cost perspective. Furthermore, 451 Research indicates that there are over 3 million cloud compute SKUs in the market today. While other vendors might offer over 500 compute SKUs, OCI has taken a simpler approach.

OCI already provides features including compute instance pools, burstable compute instances, preemptible instances, and flexible compute and load balancers. This set of products covers the same breadth of capabilities as other cloud providers, with fewer, more customizable options than most. For example, if you require a VM with 9 CPUs and 27 GB of memory, you can deploy exactly that with flexible compute. We also simplified storage by offering a single, high-performance block volume option, which includes a performance SLA and up to 300k IOPS per volume.
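To make the sizing argument concrete, here is a minimal Python sketch that compares the 9 CPU / 27 GB workload from the example against a fixed-size catalog. The catalog, the `Shape` type, and the helper names are hypothetical and chosen only for illustration; they are not any provider's real SKU list or API.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass(frozen=True)
class Shape:
    cpus: int
    memory_gb: int


# Hypothetical fixed-size packages (illustration only, not a real SKU list).
FIXED_CATALOG = [Shape(2, 8), Shape(4, 16), Shape(8, 32), Shape(16, 64)]


def smallest_fixed_fit(need: Shape, catalog: Sequence[Shape]) -> Optional[Shape]:
    """Return the smallest package that covers both CPU and memory, if any."""
    candidates = [
        s for s in catalog
        if s.cpus >= need.cpus and s.memory_gb >= need.memory_gb
    ]
    return min(candidates, key=lambda s: (s.cpus, s.memory_gb), default=None)


def unused_fraction(need: Shape, provisioned: Shape) -> Tuple[float, float]:
    """Fraction of provisioned CPU and memory that the workload does not use."""
    return (
        1 - need.cpus / provisioned.cpus,
        1 - need.memory_gb / provisioned.memory_gb,
    )


need = Shape(cpus=9, memory_gb=27)
fixed = smallest_fixed_fit(need, FIXED_CATALOG)  # forced up to the next package
flexible = need                                  # flexible sizing matches the need

print("fixed package:", fixed, "unused:", unused_fraction(need, fixed))
print("flexible shape:", flexible, "unused:", unused_fraction(need, flexible))
```

Under these assumed package sizes, the workload has to step up to the 16 CPU / 64 GB package, leaving a large share of both resources idle, while a shape sized exactly to the requirement leaves none.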

OCI has taken things a step further with the ability to autotune resources based on workload requirements, reducing both waste and cost. We’re announcing the following features that put OCI at the leading edge of dynamically scaling core infrastructure:

- Unlimited mode for burstable VMs: A feature that will enable continuous burst, which is essential for running enterprise workloads cost-effectively. With Unlimited mode, you pay for a fraction of the CPU that you’re using with the ability to continuously burst. This feature is planned to launch next year. For more details, contact your account team.

- Block Volume autotuning: Block storage that dynamically scales performance within bounds that you configure to meet workload demands. This feature recently launched and is available today.

Both capabilities help improve efficiency and reduce costs because you’re only using what your workload needs. You pay only for actual usage, not for capacity provisioned to meet peak demand.
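The idea of scaling performance "within bounds that you configure" can be pictured as a simple clamp. The following Python sketch is a toy model under that assumption, not OCI's actual autotuning algorithm; the function name, the IOPS figures, and the bounds are hypothetical.

```python
def autotuned_performance(demand: float, lower_bound: float, upper_bound: float) -> float:
    """Toy model: follow workload demand, but never leave the configured bounds."""
    if lower_bound > upper_bound:
        raise ValueError("lower_bound must not exceed upper_bound")
    return max(lower_bound, min(demand, upper_bound))


# Hypothetical bounds and demand samples, expressed in IOPS.
LOWER, UPPER = 10_000, 100_000
for demand in (5_000, 40_000, 120_000):
    print(demand, "->", autotuned_performance(demand, LOWER, UPPER))
```

In this model, low demand settles at the configured floor, typical demand is served as requested, and demand above the ceiling is capped, so the volume is never provisioned beyond the limit you set.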

Easy-to-use services

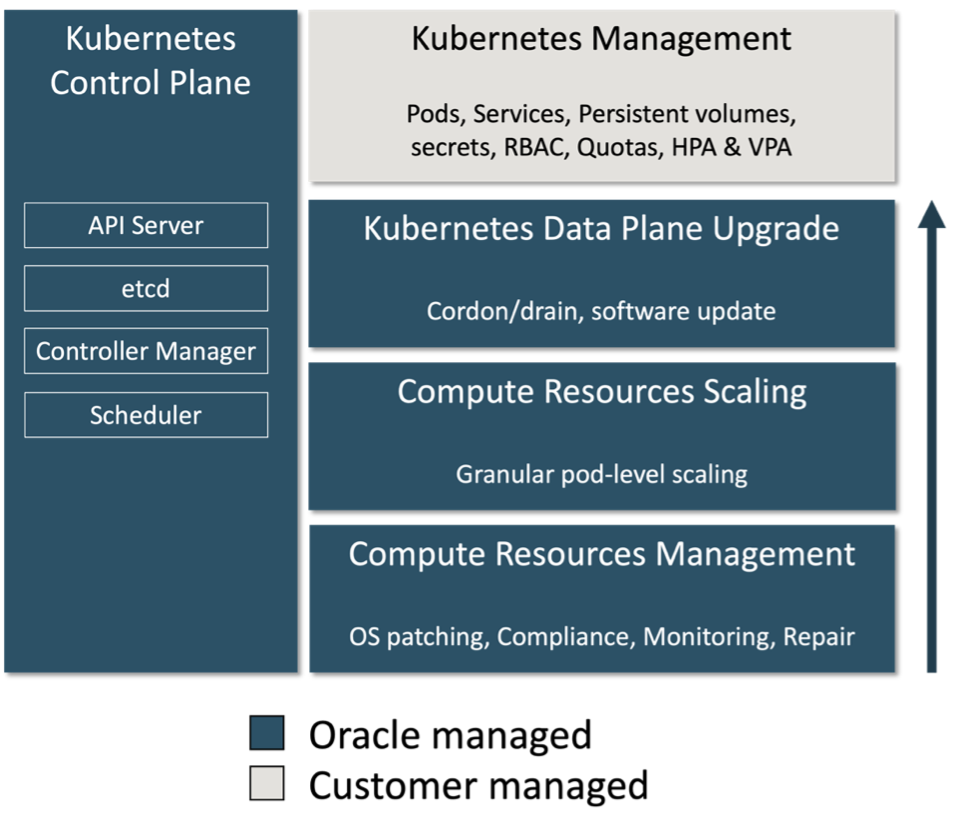

According to a recent Cloud Native Computing Foundation (CNCF) survey, complexity in Kubernetes operations is a major pain point. Kubernetes is also going “under the hood,” much as Linux did, with 90 percent of organizations using cloud-managed services.

While OCI already offers a managed Kubernetes service, we wanted to simplify running container-based applications in the cloud even more with a complete serverless experience. Serverless solutions can enable customers to run applications without having to operate any servers, helping to reduce the complexities and overhead of managing, scaling, upgrading, and troubleshooting infrastructure. We’re announcing the following capabilities that make Kubernetes and containers simpler on OCI:

- Oracle Container Engine for Kubernetes (OKE) support for Virtual Nodes: Customers can create Kubernetes clusters that use Virtual Nodes for a complete serverless experience. With Virtual Nodes, OCI manages the full lifecycle and infrastructure operations of the Kubernetes worker nodes, enabling enterprises to run reliably at scale without the operational burden. This feature is expected to be available soon.

- OCI Container Instances: A new serverless compute service suitable for customers who want to run containers in the cloud for use cases that don’t require container orchestration like Kubernetes. Customers will be able to instantly launch containers with a single CLI command or guided GUI wizard without having to manage infrastructure. Customers are charged only for the CPU and memory resources allocated to their instances at the same rate as regular OCI Compute. With a simple experience, seamless operations, and no extra charge for the serverless experience, Container Instances are intended to offer the best value for running containers in the cloud. This service is expected to be available soon.

OCI continues to simplify other aspects of core cloud infrastructure through services such as Cloud Advisor, which identifies both underutilized resources and performance bottlenecks. OCI also provides visibility across networks with features for topology visualization, troubleshooting and diagnostics, network operations planning, and traffic monitoring. Flow logs provide network traffic metadata to detect security anomalies or gain traffic insights.

We’re announcing Network Command Center, a single pane of glass that provides complete visibility into network topology, performance, and security policy. Customers can use Network Command Center to troubleshoot their network configuration, plan workload placement based on performance and security needs, and monitor traffic for compliance and security analysis. Network Command Center brings all the tools that a network operator needs into a unified, easy-to-use interface.

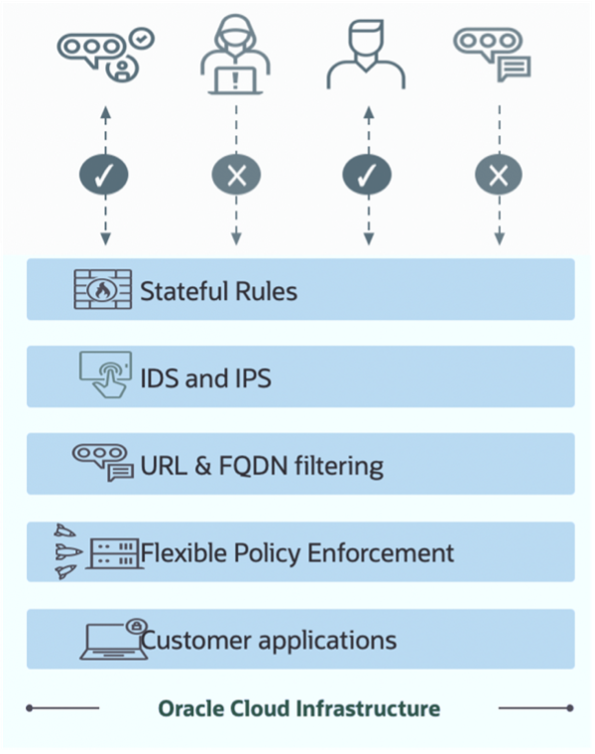

Built-in security

OCI offers simple, prescriptive, and integrated security built into the OCI platform so customers can easily secure their cloud infrastructure, data, and applications. Our encryption protects data both at rest and in transit. In addition, OCI provides easy-to-use identity and access management (IAM) policies that help you manage access and entitlements across a wide range of cloud and on-premises applications. OCI IAM’s zero-trust strategy positions identity as the security control mechanism for expanding IT landscapes.

Further, Oracle Cloud Guard simplifies the detection of security misconfigurations and insecure activities across tenants, as well as risky activities performed by operators and end users. Many security capabilities, including Oracle Cloud Guard, security zones, and vulnerability scanning, are included with a paid OCI tenancy, so you don’t have to choose between security and cost. To help our customers secure their infrastructure, data, and applications, we’re announcing the following services:

- OCI Confidential Computing: Advanced security to protect your data in use by encrypting it in memory with enhanced virtualization using AMD Secure Encrypted Virtualization (SEV). This feature will be ideal for workloads that need extra security, and customers will be able to enable it at the time of instance creation. Confidential Computing is expected to be available by 2023.

- OCI Network Firewall: A cloud native, managed, next-generation firewall service built using Palo Alto Networks technology. This turnkey firewall-as-a-service has built-in high availability and provides advanced firewall features without the need to configure and manage more security infrastructure. With the service, you can easily apply granular security rules to detect and help protect against malware, spyware, command-and-control (C2) attacks, and vulnerability exploits on inbound and outbound (north-south) and lateral (east-west) traffic flows to your application and network workloads. This service recently launched and is available today.

High performance

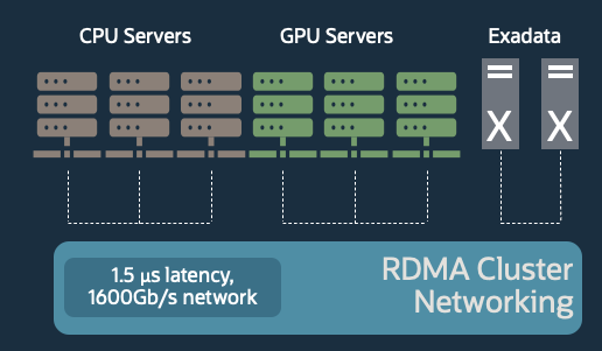

OCI provides readily available capabilities for high-performance CPU and GPU infrastructure. We offer nonblocking networks designed to match on-premises data centers. RDMA cluster networking with 1.5 microsecond latency enables you to scale your workloads on up to 512 GPUs and 20,000 HPC CPUs.

The demand for AI training and inferencing semiconductors is expected to grow to $65B by 2025. With AI training in the cloud using GPU clusters, engineers have more access to vast amounts of processing capability to improve their model accuracy. We’re now announcing availability of the following GPUs on OCI:

- OCI GM4 instances: Bare metal Oracle Cloud Infrastructure GM4 instances powered by NVIDIA A100 80GB Tensor Core GPUs support high-speed, low-latency clusters of up to 512 GPUs with a 1,600 Gbps RDMA network and eight 2×100 Gbps NVIDIA ConnectX SmartNICs. These instances are available on request. Contact your Oracle sales representative.

- OCI GU1 instances with NVIDIA RTX Virtual Workstation: Bare metal and virtual machine Oracle Cloud Infrastructure GU1 instances powered by NVIDIA A10 Tensor Core GPUs with the NVIDIA RTX Virtual Workstation software benefit from accelerated graphics for video processing, gaming, media streaming, virtual desktop and other graphics-intensive applications, as well as deep learning inferencing. The bare metal instances are available today and virtual machines are expected to be available soon.

OCI has always had a relentless focus on performance. While we excel at compute, storage, and network performance, we wanted to go a step further to improve performance at the edge. So, we’re announcing OCI Content Delivery Network, a globally distributed cloud native service that uses hundreds of edge networks to deliver the lowest possible latency for internet-facing workloads. This service is expected to be available in 2023. If you’re interested in joining the Limited Availability program, sign up today.

Get access to OCI’s latest features

OCI is simplifying cloud operations with compute, networking, and storage services that are flexible, easy to use, secure, and high-performance. To learn more about these announcements, use the following resources:

- Try the generally available services through your existing OCI account or sign up for the free tier

- Use the OCI Limited Availability sign-up form to request access to services in Limited Availability (LA)

- Reach out to Oracle Sales for more information