With quantum computing offerings from numerous companies, do we really need a simulator? Yes! Current quantum computers, known as noisy intermediate-scale quantum (NISQ) devices, are noisy, so most researchers don’t use them for algorithm development, especially for proof-of-concept validation. You might develop several hypotheses, each with a set of experiments. Instead of running all of them on an expensive quantum computer, it makes sense to run them on a simulator first and select the candidates worth testing further on a quantum computer. Experiments on NISQ devices can also run into problems because of the upper bound on fidelity inherent to this hardware. That’s another reason to use simulators: they help determine whether a problem is hardware or software related.

We tested our simulator, based on the NVIDIA cuQuantum Appliance, on algorithms of up to 36 qubits. The environment is ideal for algorithm development because it isn’t affected by any external noise.
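To see why 36 qubits is a demanding target, consider the memory a full state vector needs: one complex amplitude for each of the 2^n basis states. The following sketch assumes 8 bytes per amplitude in single precision (complex64) and 16 bytes in double precision (complex128). Which precision a given simulator uses is an assumption to check in its documentation, and the helper names are hypothetical.

```python
# Rough memory estimate for a full state vector. Assumption: each amplitude is a
# fixed-size complex number (8 bytes for complex64, 16 bytes for complex128).

BYTES_PER_AMPLITUDE = {"complex64": 8, "complex128": 16}


def state_vector_bytes(num_qubits: int, dtype: str = "complex64") -> int:
    """Return the bytes needed to hold all 2**num_qubits amplitudes."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE[dtype]


def to_gib(num_bytes: int) -> float:
    """Convert bytes to gibibytes (2**30 bytes)."""
    return num_bytes / 2 ** 30


if __name__ == "__main__":
    for n in (30, 33, 36):
        for dtype in ("complex64", "complex128"):
            gib = to_gib(state_vector_bytes(n, dtype))
            print(f"{n} qubits, {dtype}: {gib:,.0f} GiB")
```

Under these assumptions, 36 qubits need 512 GiB in single precision and 1,024 GiB in double precision. That is far more than a single A100 provides, which is why multi-GPU state vector simulation matters at this scale.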

NVIDIA cuQuantum Appliance

The NVIDIA cuQuantum Appliance is a high-performance, multi-GPU solution for quantum circuit simulation. It contains the NVIDIA cuStateVec and cuTensorNet libraries, which optimize state vector and tensor network simulation, respectively. The cuTensorNet functionality is also accessible from Python for tensor network operations.
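State vector simulation, the approach cuStateVec optimizes, stores all 2^n amplitudes and updates them gate by gate. The following pure-Python sketch illustrates the idea by preparing a three-qubit Greenberger–Horne–Zeilinger (GHZ) state. It is a hypothetical teaching aid, not the cuStateVec or cuQuantum Python API. The function names are our own, and qubit q is assumed to map to bit q of the basis-state index.

```python
import math

# Minimal pure-Python state-vector simulator (illustration only, NOT the
# cuStateVec API). Qubit q corresponds to bit q of the basis-state index.


def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 matrix `gate` to qubit `target`, updating `state` in place."""
    stride = 1 << target
    for i in range(len(state)):
        if (i & stride) == 0:  # visit each (target=0, target=1) pair once
            j = i | stride
            a0, a1 = state[i], state[j]
            state[i] = gate[0][0] * a0 + gate[0][1] * a1
            state[j] = gate[1][0] * a0 + gate[1][1] * a1


def apply_cnot(state, control, target):
    """Flip `target` on every basis state whose `control` bit is 1."""
    c, t = 1 << control, 1 << target
    for i in range(len(state)):
        if (i & c) and not (i & t):  # swap each affected pair exactly once
            j = i | t
            state[i], state[j] = state[j], state[i]


def ghz_state(num_qubits):
    """Prepare (|00...0> + |11...1>) / sqrt(2) with H followed by a CNOT chain."""
    state = [0j] * (1 << num_qubits)
    state[0] = 1 + 0j  # start in |00...0>
    h = 1 / math.sqrt(2)
    hadamard = [[h, h], [h, -h]]
    apply_single_qubit_gate(state, hadamard, 0)
    for q in range(num_qubits - 1):
        apply_cnot(state, q, q + 1)
    return state


if __name__ == "__main__":
    for index, amp in enumerate(ghz_state(3)):
        if abs(amp) > 1e-12:
            print(f"|{index:03b}>: {amp.real:.4f}")
```

Running it prints only |000> and |111>, each with amplitude 0.7071. Every gate sweeps over the whole amplitude array, so the work per gate grows as 2^n; that per-gate sweep is what GPU libraries parallelize.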

NVIDIA cuQuantum Appliance on the Oracle Cloud Infrastructure (OCI) Marketplace is available to OCI customers for free. An automation stack deploys the appliance directly in the customer’s tenancy on instances with NVIDIA P100, V100, A10, and A100 GPUs, supporting all shapes, including virtual machine (VM) and bare metal. With this approach, OCI customers can use these simulators to validate hypotheses across numerous nested experiments and narrow them down to the candidate experiments that need to be run on a quantum computer.

Our benchmark tests

Cirq is Google’s open-source quantum computing library and a standard interface to Google’s quantum computing systems. Cirq includes a CPU-based quantum circuit simulator.

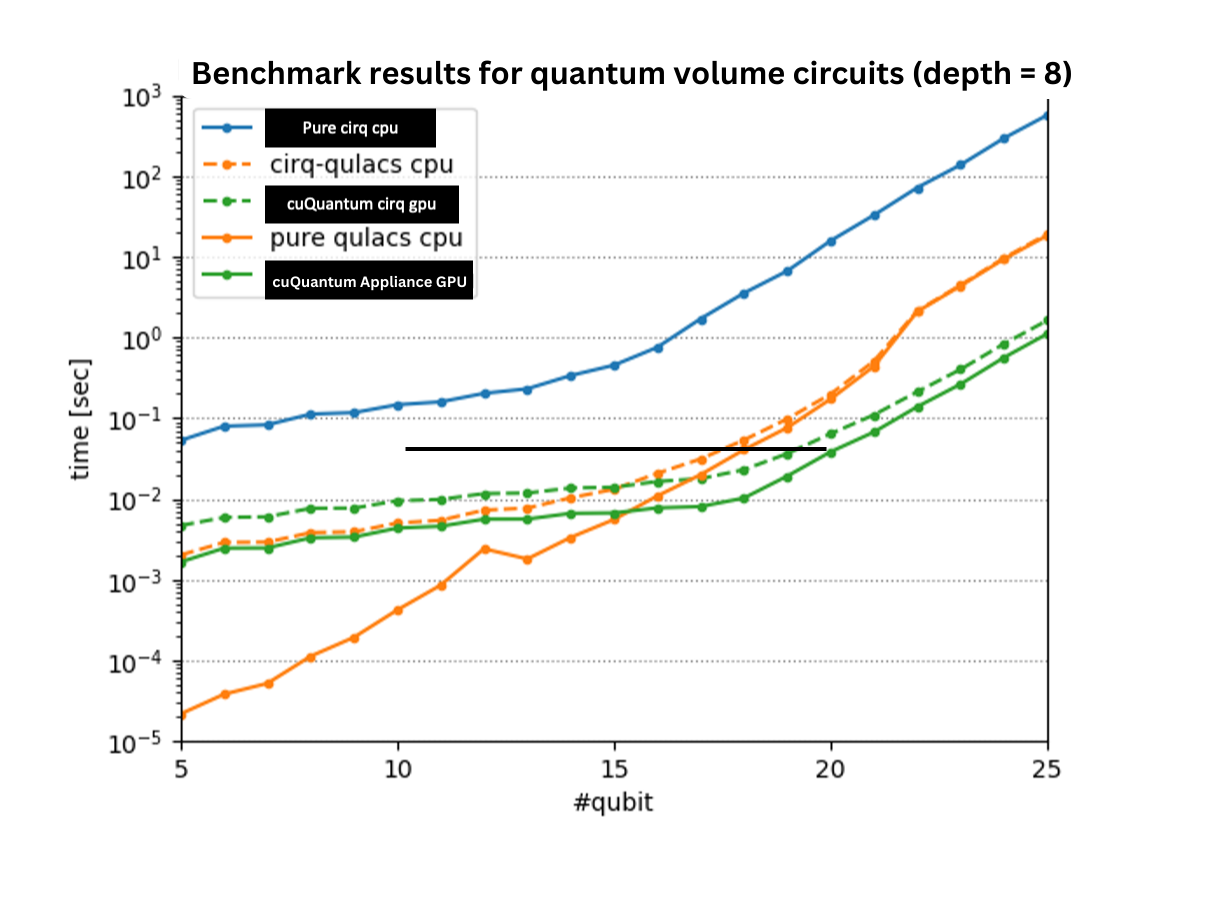

NVIDIA has created cuQuantum with extensions to the Cirq frontend for GPU support. We also used the open-source Qulacs simulator in our benchmarks. Our benchmark results indicate that simulation on NVIDIA A100 GPUs is almost 1,000 times faster than CPU-based simulation.

The graph compares the run time of the quantum volume circuit on an AMD Milan CPU (OCI E4 shape) and an NVIDIA A100 GPU.
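Quantum volume model circuits are square: a width-n circuit has n layers, and each layer applies a random permutation to the qubits and then a random two-qubit unitary to each adjacent pair in that permutation. The sketch below generates only that layout. It assumes the benchmark followed this standard construction, omits the random SU(4) matrices, and uses a hypothetical helper name.

```python
import random

# Layout of a quantum volume model circuit (illustrative sketch). Depth equals
# width; each layer shuffles the qubits and pairs neighbours in the shuffle.
# In the full benchmark each pair gets a random SU(4) gate, omitted here.


def quantum_volume_layout(num_qubits, seed=None):
    """Return a list of layers, each a list of (qubit_a, qubit_b) pairs."""
    rng = random.Random(seed)
    layers = []
    for _ in range(num_qubits):  # depth == width
        perm = list(range(num_qubits))
        rng.shuffle(perm)
        # With an odd width, the last qubit in the permutation sits idle.
        pairs = [(perm[2 * k], perm[2 * k + 1]) for k in range(num_qubits // 2)]
        layers.append(pairs)
    return layers


if __name__ == "__main__":
    for depth, pairs in enumerate(quantum_volume_layout(6, seed=7)):
        print(f"layer {depth}: {pairs}")
```

The number of two-qubit gates grows roughly as n^2/2 while the state vector grows as 2^n, so these circuits quickly become a demanding workload for simulators.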

How to deploy the Virtual Appliance Image

The image contains NVIDIA’s cuStateVec and cuTensorNet libraries, which optimize state vector and tensor network simulation, respectively. cuQuantum Python is also installed, offering not only Python bindings for both libraries but also a high-level, Pythonic interface for cuTensorNet. Using the cuStateVec library, NVIDIA provides a multi-GPU-optimized Google Cirq frontend through qsim, Google’s state vector simulator for quantum circuits. The cuQuantum Appliance is released under the NVIDIA cuQuantum SDK license.

The image, which includes the NVIDIA drivers that cuQuantum requires, can be deployed in two ways.

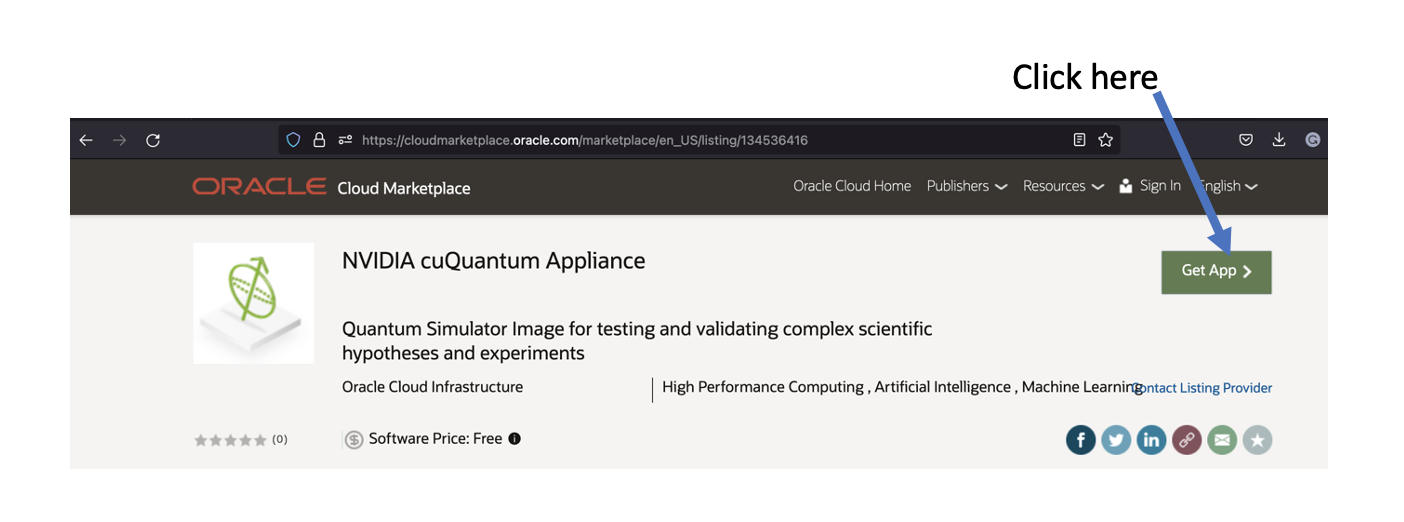

1. Directly from the Oracle Marketplace by clicking “Get App”

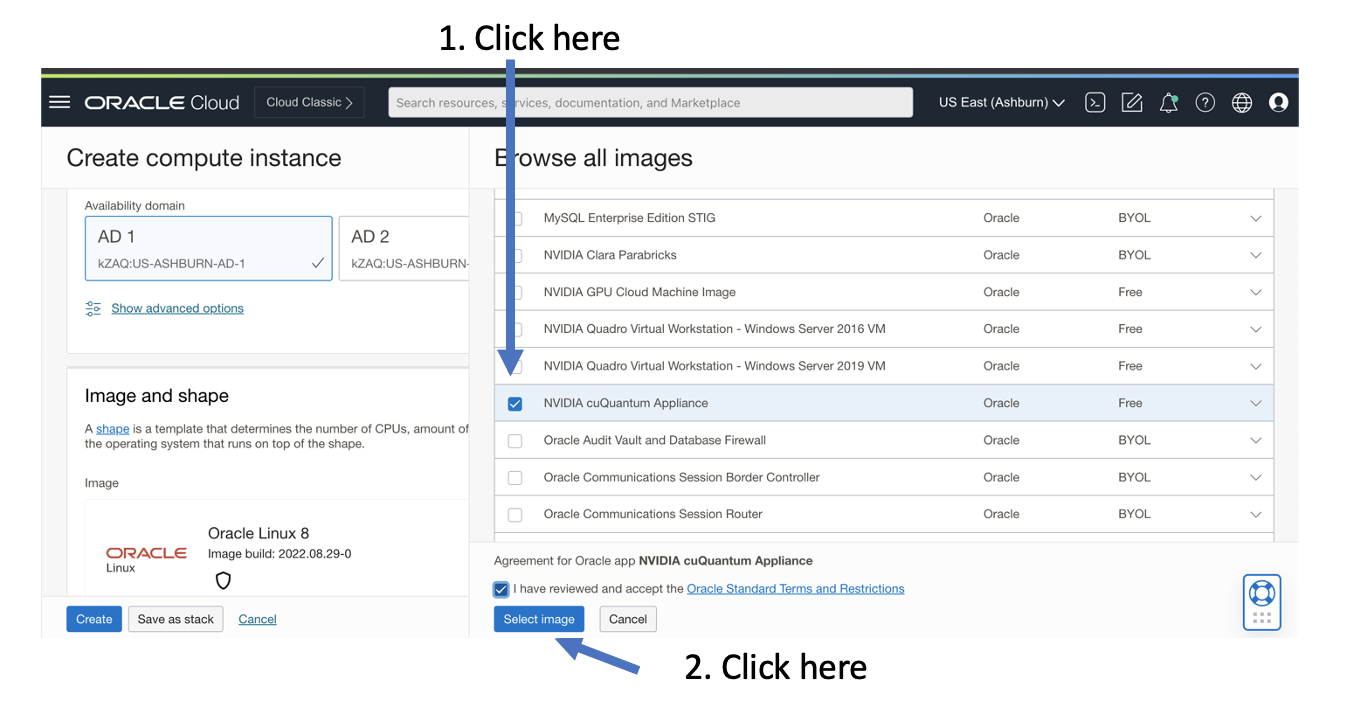

2. From the console, by selecting the image on the Oracle Platform Images tab

After the image is deployed, run cuqc.sh from the /home/opc directory to invoke the latest version of the NVIDIA cuQuantum Appliance. The cuQuantum Appliance should then be running.

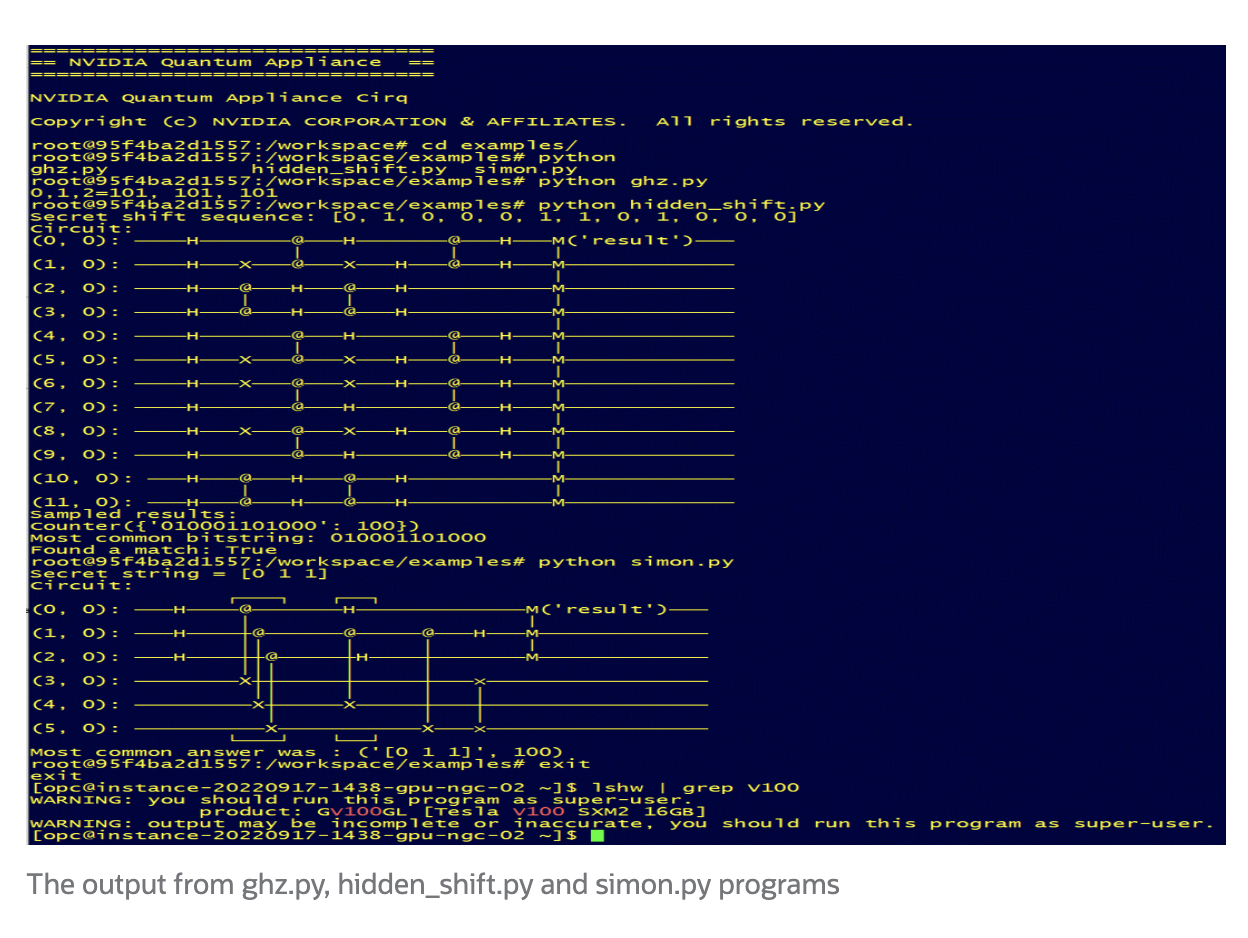

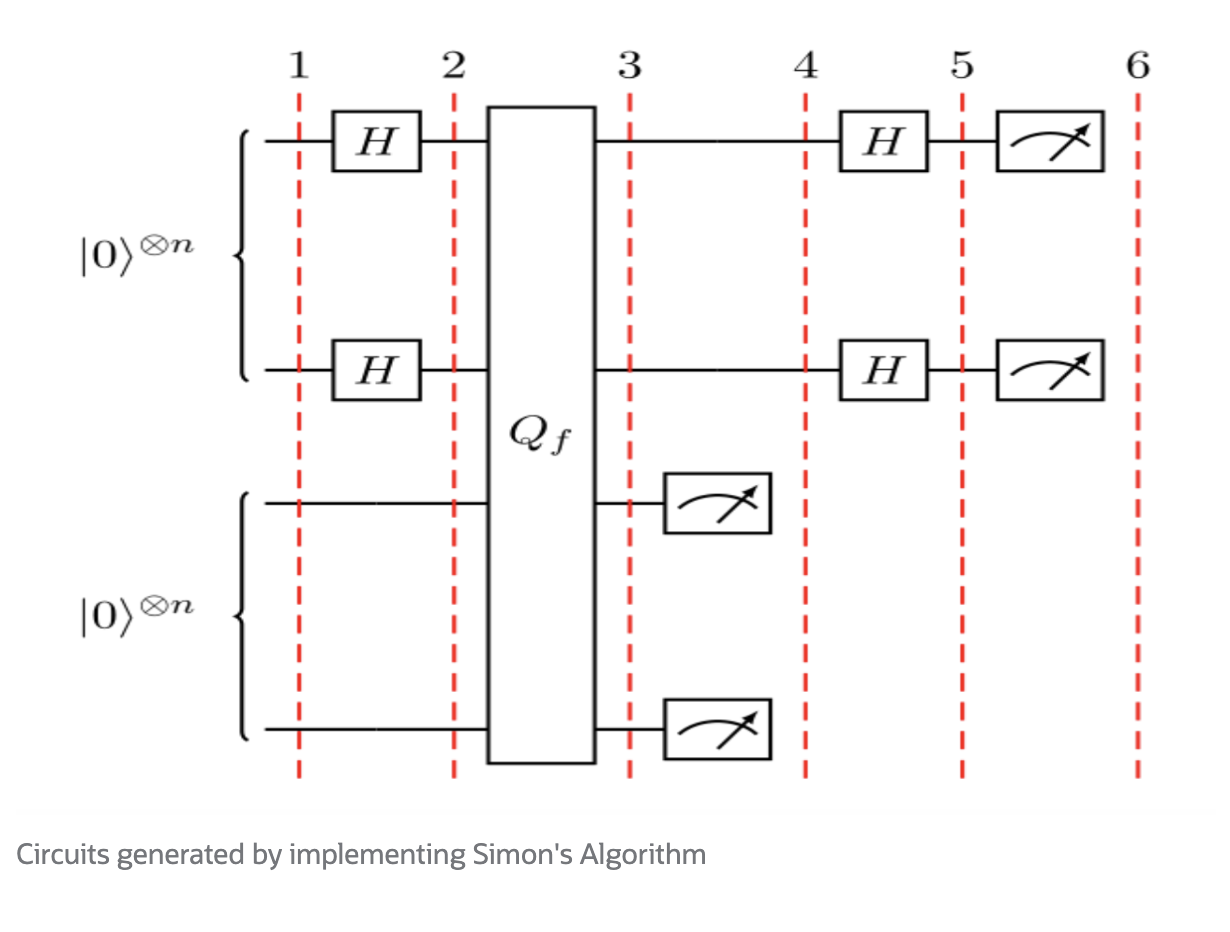

Three examples are included in the /examples directory: ghz.py, simon.py, and hidden_shift.py.

ghz.py generates a three-qubit Greenberger–Horne–Zeilinger (GHZ) state. simon.py builds and runs a quantum circuit that implements Simon’s algorithm. Lastly, hidden_shift.py generates a quantum circuit that solves the hidden shift problem for bent functions.
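The hidden shift problem provides oracles for a bent function f and for g(x) = f(x ⊕ s) and asks for the unknown shift s. Querying g once, then the dual of f once, with a layer of Hadamard gates before, between, and after, leaves the register exactly in |s⟩. The following pure-Python sketch simulates that circuit on four qubits. The choice f(x) = x0x1 ⊕ x2x3 (an inner-product function that is its own dual) and all function names are assumptions for illustration, not the contents of the appliance’s hidden_shift.py.

```python
import math

# Pure-Python state-vector sketch of the hidden shift algorithm for the bent
# function f(x) = x0*x1 XOR x2*x3 (hypothetical illustration).

N_QUBITS = 4


def bit(x, i):
    """Return bit i of integer x."""
    return (x >> i) & 1


def f(x):
    """Bent function on 4 bits; this inner-product form is self-dual."""
    return (bit(x, 0) & bit(x, 1)) ^ (bit(x, 2) & bit(x, 3))


def hadamard_all(state):
    """Apply H to every qubit (fast Walsh-Hadamard transform); returns a new list."""
    out = list(state)
    h = 1
    while h < len(out):
        for start in range(0, len(out), 2 * h):
            for i in range(start, start + h):
                a, b = out[i], out[i + h]
                out[i], out[i + h] = a + b, a - b
        h *= 2
    norm = 1 / math.sqrt(len(out))
    return [amp * norm for amp in out]


def phase_oracle(state, func):
    """Multiply the amplitude of basis state x by (-1)**func(x)."""
    return [-amp if func(x) else amp for x, amp in enumerate(state)]


def find_hidden_shift(shift):
    """Recover `shift` using phase oracles for g(x) = f(x ^ shift) and the dual of f."""

    def g(x):
        return f(x ^ shift)

    state = [0.0] * (1 << N_QUBITS)
    state[0] = 1.0                  # |0000>
    state = hadamard_all(state)     # uniform superposition
    state = phase_oracle(state, g)  # oracle for the shifted function
    state = hadamard_all(state)
    state = phase_oracle(state, f)  # f is self-dual, so it acts as the dual oracle
    state = hadamard_all(state)     # the register is now exactly |shift>
    probs = [amp * amp for amp in state]
    return max(range(len(probs)), key=probs.__getitem__)


if __name__ == "__main__":
    for s in (0b0000, 0b0101, 0b1110):
        print(f"shift {s:04b} -> measured {find_hidden_shift(s):04b}")
```

Every 4-bit shift is recovered with probability 1, which makes this circuit a convenient correctness check for a noise-free simulator.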

Following are the outputs:

Use cases

Quantum simulators are being used across a broad range of scientific research and industrial use cases, including the generation and distribution of quantum-secure random numbers for entropy-as-a-service and for quantum key distribution. This use case targets large telcos and CSPs. For more details, see Quantum Computing—How does entropy-as-a-service work?

More use cases include testing and validating post-quantum cryptographic algorithms for application and database security in highly regulated enterprise applications, and simulating molecules for drug discovery in the life sciences and pharmaceutical industries.

In the financial industry, this appliance is used for portfolio optimization and for faster risk and fraud analysis.

Quantum machine learning (QML) algorithms are expected to play a significant role in developing the next generation of AI systems and large language models, using GPU-based QML training.

Conclusion

The future of accelerated computing depends on underlying compute, network, and storage infrastructures. Oracle’s second-generation cloud infrastructure has taken a revolutionary architectural approach. Try out Oracle Cloud Infrastructure for yourself with a free tier account.

Acknowledgments

Special thanks to Adnan Umar for installing and validating the NVIDIA drivers and dependent libraries for the cuQuantum Appliance on Oracle Linux 8.