Kubernetes has become the standard for running modern applications in the cloud. However, the complexity of Kubernetes operations remains a major obstacle to its adoption, according to a recent Cloud Native Computing Foundation (CNCF) survey. The following factors make Kubernetes complex:

- Infrastructure right-sizing: Kubernetes is designed to run and scale applications across large fleets of machines. As a result, managing the underlying infrastructure resources, such as CPU and memory, can be complicated and resource-intensive.

- Upgrades and maintenance: Keeping a Kubernetes cluster up to date requires regular updates and maintenance. Upgrades can be complex and require careful planning and coordination to ensure minimal downtime. An upgrade commonly takes a full day of work and involves multiple teams in an organization.

- Infrastructure security: Kubernetes security has multiple aspects, including infrastructure hardening. If the infrastructure of a Kubernetes cluster is compromised, all applications running in the cluster are at risk. Infrastructure must be hardened, access must be restricted, and security patches must be applied promptly.

Our customers have requested the simplification of Kubernetes infrastructure management, so we’re excited to introduce virtual nodes in Oracle Container Engine for Kubernetes (OKE).

Introducing OKE virtual nodes

OKE virtual nodes deliver a complete serverless Kubernetes experience. With virtual nodes, you can ensure reliable operations of Kubernetes at scale without needing to manage any worker node infrastructure. This cluster option provides granular pod-level elasticity and pay-per-pod pricing, while eliminating the operational overhead of managing, scaling, upgrading, and troubleshooting worker nodes’ infrastructure. Kubernetes clusters become fully managed. Oracle manages clusters’ control plane and data plane for you. You’re responsible only for the applications deployed through the cluster’s Kubernetes API.

8×8 Inc.

“As we increasingly standardize on Kubernetes for our workloads, operations become more complex. We are looking forward to using OKE Virtual Nodes that will provide us with a “serverless” Kubernetes experience. It should help us offload the Kubernetes infrastructure management to OKE. It will save us time and effort – simplifying Kubernetes operations at scale, along with better scaling economics.”

– Mehdi Salour, Senior Vice President, Global Network and Development Operations at 8×8, Inc.

Nomura Research Institute (NRI)

“Embracing cloud-native enables us to accelerate the growth of our business, but challenges managing our environment prevented us from harnessing the full potential of Kubernetes. With the new features in Oracle Container Engine for Kubernetes, we’ll be able to simplify our operations and scale our applications without needing to add complex configurations or additional nodes. The greater level of choice and control provided by the new enhancements will help us focus on building new applications while reducing complexity and costs.”

– Shigekazu Ohmoto, Senior Corporate Managing Director, Nomura Research Institute (NRI).

Virtually unlimited capacity to Kubernetes clusters

OKE virtual nodes provide the abstraction of regular nodes to Kubernetes, delivering on-demand elasticity within Kubernetes clusters to run your pods. There is no constraint on CPU and memory resources at the node level. Pods are executed on right-sized compute based on the CPU and memory specified in the pod’s deployment spec. You can scale your deployments using the Kubernetes Horizontal Pod Autoscaler (HPA) without adding and removing worker nodes from your cluster or configuring the cluster autoscaler.

Virtual nodes seamlessly handle temporary bursts of your application load. They simplify the processing of scalable workloads, such as high-traffic web applications and data-processing jobs for which CPU and memory requirements fluctuate significantly over time. Virtual nodes allow you to improve operational efficiency and reduce total cost of ownership. You don’t pay for any server capacity overhead, while your applications can consume exactly the CPU and memory resources they need at any time.
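As a rough illustration of scaling at the pod level, the sketch below writes a Deployment with explicit CPU and memory requests, plus an HPA that targets it. Everything specific here is an assumption made for the example and does not come from the OKE documentation: the `web` name, the `nginx:1.25` image, the request sizes, and the 70% CPU target. The HPA also assumes that a metrics source such as the Kubernetes Metrics Server is available in the cluster.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Write a Deployment whose pods declare their CPU/memory needs, and an HPA
# that scales the Deployment on average CPU utilization.
# All names, sizes, and thresholds below are illustrative assumptions.
cat > web-hpa.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web
spec:
  replicas: 2
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      containers:
      - name: web
        image: nginx:1.25
        resources:
          requests:
            cpu: "500m"
            memory: 1Gi
          limits:
            cpu: "500m"
            memory: 1Gi
---
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: web
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: web
  minReplicas: 2
  maxReplicas: 20
  metrics:
  - type: Resource
    resource:
      name: cpu
      target:
        type: Utilization
        averageUtilization: 70
EOF

echo "Wrote web-hpa.yaml"
# With kubectl pointed at your cluster, you could then run:
#   kubectl apply -f web-hpa.yaml
#   kubectl get hpa web --watch
```

Note that the manifest contains no node pool sizing or cluster autoscaler configuration. Only the pod count changes as load varies.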

Seamless Kubernetes upgrades

OKE virtual nodes simplify the upgrade of your Kubernetes clusters. The cluster upgrade is a single-click operation that triggers the upgrade of all Kubernetes components in the cluster control plane and data plane. First, the cluster control plane and virtual nodes are upgraded without any impact on workloads. Then, kube-proxy running on each pod is upgraded. Pods restart asynchronously on the same virtual node, and the pod disruption budget (PDB) is respected to maintain the availability of your application.

This managed upgrade experience with OKE virtual nodes allows you to focus on your applications rather than upgrading the Kubernetes infrastructure and applying security patches.
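Because pod restarts during an upgrade respect the pod disruption budget, it can help to define one for each critical application. The following is a minimal sketch that uses the standard `policy/v1` API. The `web-pdb` name, the `app: web` label, and the `minAvailable` value are hypothetical and should be adapted to your own workload.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Write a PodDisruptionBudget that keeps at least one matching pod available
# during voluntary disruptions such as upgrade-driven restarts.
# The name, label, and threshold are illustrative assumptions.
cat > web-pdb.yaml <<'EOF'
apiVersion: policy/v1
kind: PodDisruptionBudget
metadata:
  name: web-pdb
spec:
  minAvailable: 1
  selector:
    matchLabels:
      app: web
EOF

echo "Wrote web-pdb.yaml"
# With kubectl pointed at your cluster, you could then run:
#   kubectl apply -f web-pdb.yaml
#   kubectl get pdb web-pdb
```

A budget only helps when the application runs more replicas than `minAvailable`. With a single replica and `minAvailable: 1`, no pod can be disrupted voluntarily.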

Critical workloads on OKE virtual nodes

Virtual nodes deliver a serverless experience while providing the necessary controls to satisfy your application’s requirements.

Some applications perform better with a particular processor architecture. Virtual nodes allow you to select a Compute shape to assign to your pods, allowing you to control the performance of your applications. Virtual nodes support the vertical scalability that your demanding workloads require. Pods can scale up to the maximum CPU and memory supported by the selected Compute shape. For example, with AMD E3 and E4 shapes, you can scale up to 64 cores (128 vCPUs) and 1 TB of memory, while competitors apply a much lower limit.

Virtual nodes support topology spread constraints, allowing you to control the placement of Kubernetes pods across OCI availability domains and fault domains. As a result, you can configure high availability and anti-affinity for your application’s Pods.
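The sketch below shows what such a placement policy might look like. It writes a Deployment that spreads its replicas across availability domains and fault domains. The topology keys are assumptions: `topology.kubernetes.io/zone` is the well-known Kubernetes zone label, and `oci.oraclecloud.com/fault-domain` is assumed to be the fault-domain label on OKE nodes. You can confirm the labels on your own cluster with `kubectl get nodes --show-labels`. The `web-spread` name, image, and sizes are hypothetical.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Write a Deployment whose pods are spread evenly across availability domains
# and fault domains. Label keys and all names are assumptions; verify them
# against the labels on your own nodes before relying on this.
cat > web-spread.yaml <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web-spread
spec:
  replicas: 6
  selector:
    matchLabels:
      app: web-spread
  template:
    metadata:
      labels:
        app: web-spread
    spec:
      topologySpreadConstraints:
      - maxSkew: 1
        topologyKey: topology.kubernetes.io/zone
        whenUnsatisfiable: ScheduleAnyway
        labelSelector:
          matchLabels:
            app: web-spread
      - maxSkew: 1
        topologyKey: oci.oraclecloud.com/fault-domain
        whenUnsatisfiable: ScheduleAnyway
        labelSelector:
          matchLabels:
            app: web-spread
      containers:
      - name: web
        image: nginx:1.25
        resources:
          requests:
            cpu: "250m"
            memory: 512Mi
EOF

echo "Wrote web-spread.yaml"
# With kubectl pointed at your cluster, you could then run:
#   kubectl apply -f web-spread.yaml
#   kubectl get pods -l app=web-spread -o wide
```

`ScheduleAnyway` makes the spread a preference rather than a hard requirement. Switching to `DoNotSchedule` would leave pods pending when the constraint can't be met.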

Enhanced Security

Virtual nodes improve security, enabling you to run multitenant applications and untrusted workloads and to handle sensitive data. First, virtual nodes provide strong virtual machine (VM)-type isolation to each Kubernetes pod. Pods don’t share any underlying kernel, memory, or CPU resources. Second, each Kubernetes pod natively connects to your virtual cloud network (VCN) through a dedicated virtual network interface card (VNIC). So, each pod benefits from the security features OCI VCN provides, including security rules, flow logs, and routing policies. With this enhanced security, virtual nodes enable multitenant software-as-a-service (SaaS) applications and enterprise workloads that require strict isolation in a Kubernetes cluster.

In addition, virtual nodes support Workload Identity for fine-grained pod-level identity and access management control. You can grant individual Kubernetes Pods policy-driven access to OCI resources, such as secrets in Vault or an Object Storage bucket, using OCI Identity and Access Management (IAM). For more details, read OKE Workload Identity – Greater control of access.

OKE virtual nodes allow you to Pay-per-Pod

Virtual nodes allow you to optimize the cost of running Kubernetes workloads. You pay for the exact compute resources consumed by each Kubernetes pod instead of paying for whole servers that might have unused capacity. As a result, you don’t need to right-size worker nodes to accommodate your workloads or configure the cluster autoscaler to increase or decrease the number of worker nodes. Virtual nodes free up your time so that you can focus on your applications.

Furthermore, the price of the CPU and memory of pods running on virtual nodes is the same as on OCI Compute. Consuming a core or a gigabyte of memory on virtual nodes costs the same as on a VM.

Getting started

If you don’t yet have an OCI account, request a free trial. Then use the following steps:

- Log in to your Oracle Cloud Infrastructure account.

- Set the required IAM policies to use OKE virtual nodes.

- Open the navigation menu. Under Developer Services, go to Kubernetes Clusters (OKE) and click Create Cluster.

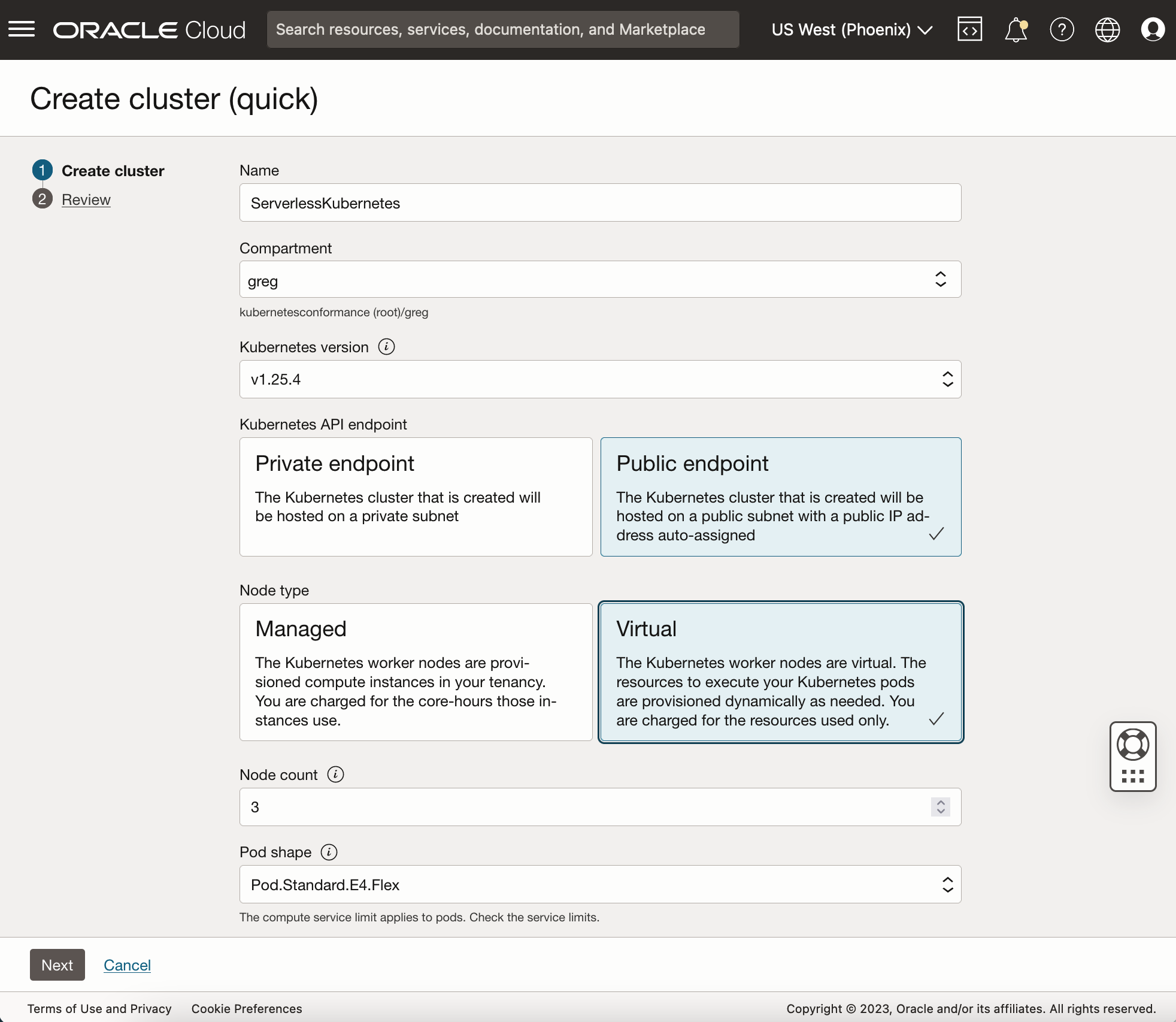

- In the Create Cluster dialog, choose Quick Create, and click Launch Workflow. For the node type, select Virtual, click Next, and click Create Cluster.

The Kubernetes cluster is created with a pool of three virtual nodes spread across availability domains and fault domains. The virtual nodes provide virtually unlimited capacity to execute pods.

- Select your cluster and click Access Cluster. Select Cloud Shell Access, click Launch Cloud Shell, and run the “oci ce cluster create-kubeconfig” command.

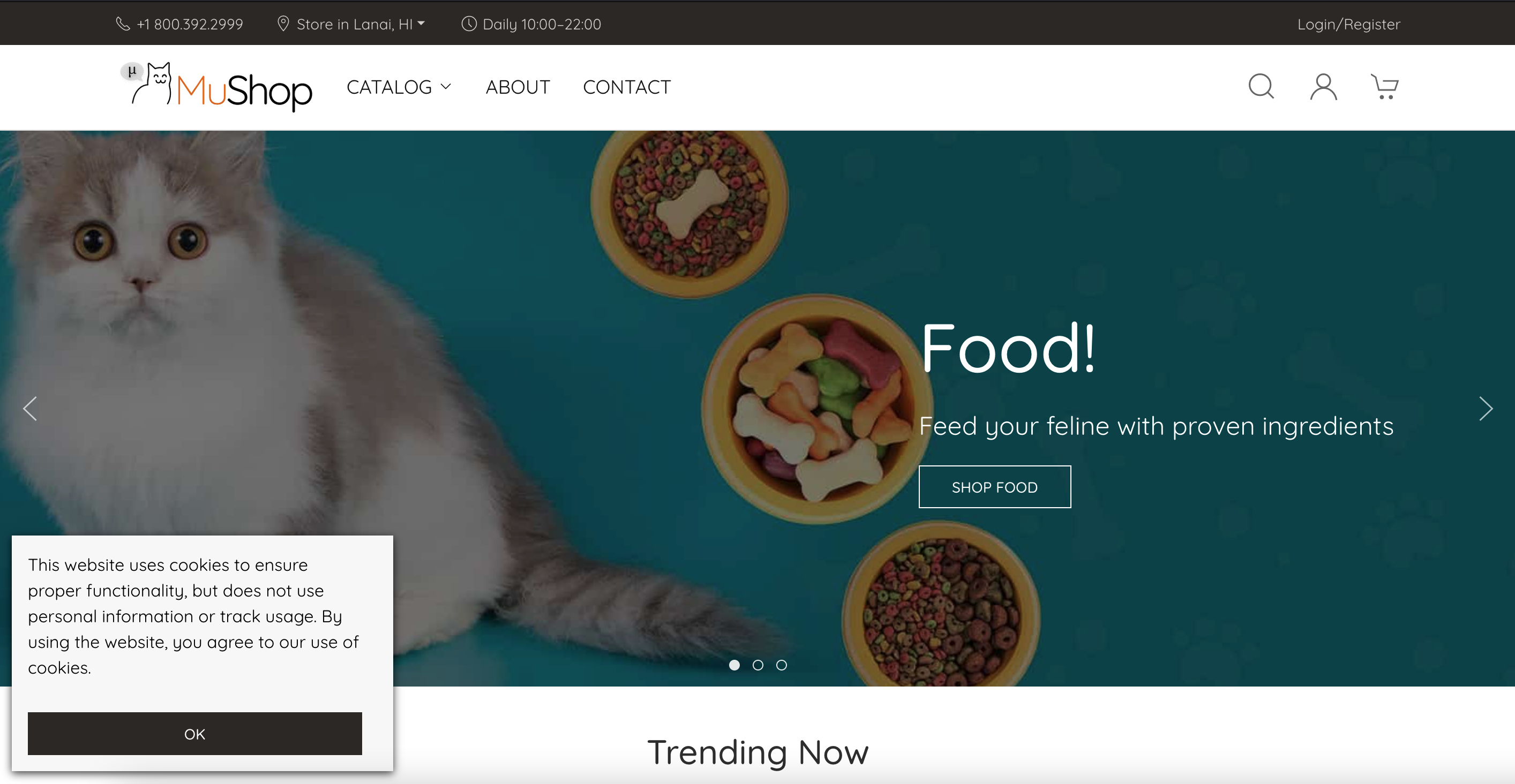

- Let’s now deploy Mushop, a sample application that implements an e-commerce platform built as a set of microservices.

- In Cloud Shell, download the deployment manifests to deploy Mushop on virtual nodes:

$ git clone https://github.com/oracle-quickstart/oci-cloudnative.git mushop

$ cd mushop

- Deploy Mushop:

$ helm install mushop ./deploy/complete/helm-chart/mushop -f ./deploy/complete/helm-chart/mushop/values-virtual-nodes.yaml

- Create security rules to allow HTTP traffic to the Mushop app. Refer to the documentation.

- Get the external IP for the Mushop app. After all Mushop microservices pods are ready, open your web browser at this address.

$ kubectl get svc edge

You’re now running all Mushop microservices on OKE virtual nodes. You don’t have to manage any worker nodes, and you pay only for the CPU and memory resources the pods consume.
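If you prefer to script the last step, the following sketch wraps the `kubectl get svc edge` lookup in a small helper that prints the storefront URL. It assumes that the `edge` service is exposed through a load balancer that reports an IP address, which depends on the chart values you used. The `mushop_url` function name is hypothetical.

```shell
# Print the Mushop URL based on the external IP of the "edge" service.
# Assumes the service is of type LoadBalancer and reports an IP address.
mushop_url() {
  mushop_ip="$(kubectl get svc edge -o jsonpath='{.status.loadBalancer.ingress[0].ip}')"
  if [ -z "$mushop_ip" ]; then
    # The load balancer may still be provisioning; try again later.
    echo "edge service has no external IP yet" >&2
    return 1
  fi
  echo "http://${mushop_ip}"
}
```

You can check that the pods are ready with `kubectl get pods`, and then call `mushop_url` to get the address to open in your browser.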

Learn more

We will soon publish other blog posts to go into the details of Kubernetes Horizontal Pod Autoscaler (HPA) with Virtual Nodes and Seamless Kubernetes Upgrade. Until then, you can learn more about OKE Virtual Nodes and get hands-on experience using the following resources:

- Access the OKE resource center

- Blog – Kubernetes at scale just got a lot easier with new Oracle Container Engine

- Register for the Webcast

- Get started with Oracle Cloud Infrastructure today with our Oracle Cloud Free Trial