Today, we’re introducing a major update to how Oracle Cloud Infrastructure (OCI) customers think about, use, and connect resources between their on-premises and cloud environments. We’re enabling new scenarios to address demands for global networks, network scalability, and remote connectivity using the dynamic routing gateway (DRG) and the Oracle backbone. Together, these DRG enhancements enable the scenarios that global enterprises and independent software vendors (ISVs) need to enhance and simplify connectivity.

We added these features to the existing DRG to enable the use cases below and simplify configuration and management. These features have full interoperability to supplement previous networking releases, so you can decide the best way to build your cloud network.

We encourage you to read more about this release in the documentation and release notes. These enhancements are now available in all commercial realm regions and will open to government realms later this year.

Use cases

Complex routing

The DRG allows you to specify a unique route table for each attached network. Communication between DRG attachments, including virtual cloud networks (VCNs), is controlled by these route tables and their associated import policies. By default, all VCNs can communicate with each other because they share the default route table for VCN attachments. You can change the associated route tables to isolate VCNs. This functionality supports customer topologies such as hub and spoke, in which you can use a network virtual appliance in a hub VCN to filter or inspect traffic between your on-premises network and a spoke VCN.
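To make the idea of per-attachment route tables concrete, here is a minimal sketch in plain Python. It does not call any OCI API; the attachment names, route table names, and CIDR blocks are all hypothetical, and the lookup is an ordinary longest-prefix match used only to illustrate how assigning a different table to an attachment can isolate it.

```python
import ipaddress

# Hypothetical model: a route table maps destination CIDRs to the
# attachment that traffic should be forwarded to.
ROUTE_TABLES = {
    # Knows every attached VCN, so full communication is possible.
    "default-vcn-rt": {
        "10.0.0.0/16": "vcn-a",
        "10.1.0.0/16": "vcn-b",
        "10.2.0.0/16": "vcn-hub",
    },
    # Only knows the hub, so spokes using it cannot reach each other.
    "spoke-rt": {
        "10.2.0.0/16": "vcn-hub",
    },
}

# Each attachment is associated with exactly one route table.
ATTACHMENT_ROUTE_TABLE = {
    "vcn-a": "spoke-rt",
    "vcn-b": "spoke-rt",
    "vcn-hub": "default-vcn-rt",
}


def next_hop(source_attachment, destination_ip):
    """Return the next-hop attachment for traffic entering the DRG, or None."""
    table = ROUTE_TABLES[ATTACHMENT_ROUTE_TABLE[source_attachment]]
    address = ipaddress.ip_address(destination_ip)
    matches = [
        (ipaddress.ip_network(cidr), hop)
        for cidr, hop in table.items()
        if address in ipaddress.ip_network(cidr)
    ]
    if not matches:
        return None  # no matching route, so the traffic has nowhere to go
    # Longest-prefix match: the most specific route wins.
    return max(matches, key=lambda match: match[0].prefixlen)[1]


print(next_hop("vcn-a", "10.2.0.5"))    # spoke to hub
print(next_hop("vcn-a", "10.1.0.5"))    # spoke to spoke
print(next_hop("vcn-hub", "10.1.0.5"))  # hub to spoke
```

In this toy model, switching `vcn-a` back to `default-vcn-rt` restores spoke-to-spoke reachability without touching any other attachment.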

High availability

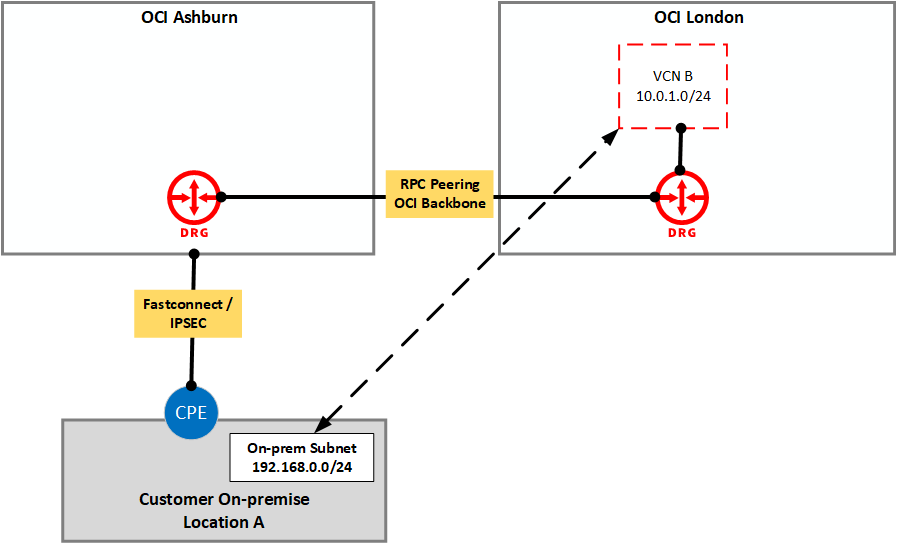

The DRG now allows you to use OCI FastConnect in one region to communicate with resources in a VCN in another region. Customers using FastConnect and VCNs in at least two different regions can continue to access all their resources if FastConnect connectivity to one region is lost.

Simplified configuration

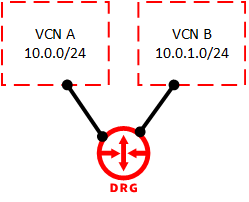

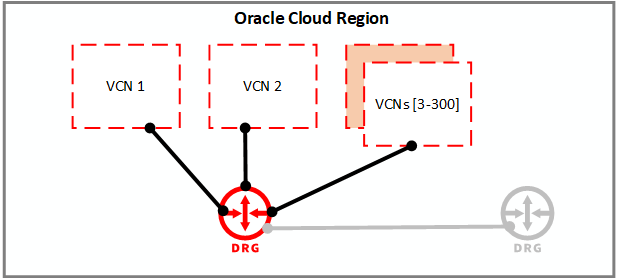

A single DRG can have multiple local VCNs attached to it. These VCNs can belong to the same or another tenancy. You no longer need to set up multiple local peering gateways to peer VCNs. This capability simplifies configuration and management of peering relationships and reduces operational expense.

Increased scale

A single DRG can have up to 300 local VCNs attached to it. VCN communications were previously restricted to peering with a maximum of 10 local peers. With our recent changes to the DRG, this number has increased significantly. This increase is useful for customers that need to peer a central VCN with multiple VCNs.

Getting started

You can start using these features immediately in your tenancy with the following steps:

- Upgrade your DRG: DRGs created before June 2021 need to be upgraded to take advantage of the new features. See Upgrading a DRG in the documentation. Upgraded DRGs are fully backward compatible by default.

- Identify your solution: Under Key scenarios, we list the most common use cases. You can find configuration instructions under Network Scenarios and Peering Scenarios in the documentation. Usually, a direct link to each scenario is provided.

- Configure your solution or create a custom solution: Following the instructions under Network Scenarios, modify your DRG configuration to achieve your desired solution. Alternatively, use the new features to develop a custom solution by following the DRG documentation.

Design or architecture support is available through your account team. If you don’t have an account team, you can open a service request and ask to be connected to the virtual networking product management team for solution architecture assistance. For help with configuration, use the normal product support channels.

Key features

One-to-Many DRG to VCN connections

You can now connect a single DRG to multiple VCNs. This option enables a single DRG to be a central hub of connectivity between your on-premises and cloud resources. VCN attachments can also now be cross-tenancy.

DRG connections can reach remote regions

Your on-premises network connected to a DRG in one region can access networks connected to a DRG in a different region using a remote peering connection (RPC), including the Oracle Services Network in a remote region.

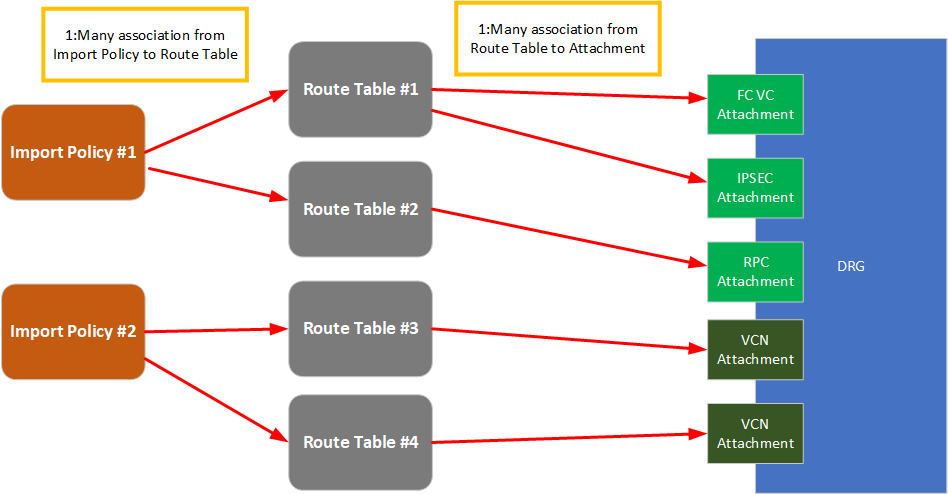

DRG routing engine enhancements and flexible routing policy control

You can now create route tables in the DRG to route traffic arriving from connected networks. You can use a flexible, policy-driven configuration to define static and dynamic routing policies according to your business objectives. Each resource communicating through a DRG, such as a VCN, IPSec connection, FastConnect virtual circuit, or RPC, is abstracted as an attachment, and the routing policy can be associated with the attachments.

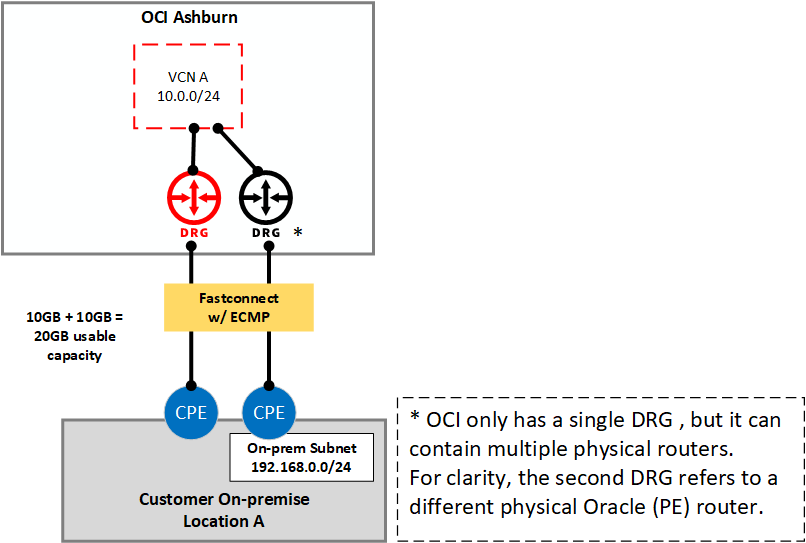

Equal cost multi-path (ECMP)

FastConnect and IPSec now support active-active load balancing through ECMP. This technology load balances traffic to your on-premises environment over multiple parallel paths of the same type, without requiring them to be on the same customer or provider routers.

Key scenarios

Remote on-ramp to OCI regions

You can now connect from your on-premises environment into one OCI region and reach any other OCI region around the globe. This traffic travels over the private OCI backbone, which typically has better latency and performance characteristics than commodity internet connectivity. You can also combine this traffic with existing transit routing features to gain access to the Oracle Services Network in the remote region.

You can apply this connectivity in many ways. You might find that telco carrier costs are lower when you connect to the OCI region closest to your on-premises location, or perhaps you want to deploy services in more OCI regions without extra fixed circuit costs. Certain markets, including international locations that weren’t previously orderable from your telecommunications provider, are now also available.

You can set up this scenario with the configuration guide.

If you have connectivity to multiple OCI regions, you can now use the remote region connectivity as a backup to your primary path direct to the region. By building out your network in this manner, you gain increased redundancy with decreased circuit costs.

Scaling and controlling VCN connectivity

You can now let the DRG manage communications between VCNs, providing greater scale and a simpler management experience. You no longer need to maintain point-to-point connectivity using local peering gateways and can instead communicate between VCNs through the DRG. This feature allows a scale of up to 300 VCNs on a single DRG. If this limit isn’t enough, you can connect an extra DRG through a remote peering connection (RPC).
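The management benefit can be illustrated with simple arithmetic. The sketch below compares the number of point-to-point peering relationships needed for every VCN to reach every other VCN directly with the number of attachments needed when all VCNs share one DRG. The function names are hypothetical, and the full-mesh count is purely illustrative: it ignores the per-VCN peering limit mentioned earlier, which would prevent large meshes in practice.

```python
def full_mesh_peerings(vcn_count):
    """Pairwise peering relationships needed to connect every VCN pair directly."""
    return vcn_count * (vcn_count - 1) // 2


def drg_attachments(vcn_count):
    """Attachments needed when every VCN connects to one shared DRG."""
    return vcn_count


for count in (5, 10, 300):
    print(count, full_mesh_peerings(count), drg_attachments(count))
```

The pairwise count grows quadratically with the number of VCNs, while the attachment count grows linearly, which is why a central DRG is easier to operate at scale.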

Connected VCNs and DRGs can be in the same or different tenancies within the same region. Connected DRGs can now be in the same or different regions. You can also control which DRGs can talk to each other and which can’t, allowing network isolation if you want.

You can set up this scenario with the configuration guide.

Enabling FastConnect redundancy with ECMP and enhancing IPSec performance

Previously, when you had redundant FastConnect virtual circuits, you had to choose between redundancy and capacity. You could have full redundancy with active-passive circuits terminated on different customer and provider routers, or you could have capacity with active-active circuits through link aggregation groups (LAGs). However, using a LAG required you to terminate all circuits on a single customer router (CPE) and a single Oracle DRG router (PE), creating a potential single point of failure.

Today, we’re introducing support for ECMP, which allows you to terminate circuits on different CPE and PE routers while OCI distributes traffic destined for your on-premises network across both connections. If your network supports it, you can also send traffic back to us over both paths.

Alternatively, you can use ECMP to enhance performance, even where redundancy isn’t important. You can increase the throughput of IPSec connections, which are usually limited to 1 Gbps each because of cryptographic processing overhead. Using ECMP, you can now combine up to 8 IPSec connections to achieve multi-gigabit throughput.
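As a rough illustration of how ECMP spreads load, the following sketch hashes each flow to one of several equal-cost paths. This is a generic per-flow hashing scheme, not a description of how OCI selects paths: the hash function, the choice of flow fields, and the tunnel names are all assumptions made for the example.

```python
import hashlib


def pick_path(flow, paths):
    """Map a flow tuple to one of the equal-cost paths, deterministically."""
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    index = int.from_bytes(digest[:8], "big") % len(paths)
    return paths[index]


# Eight hypothetical IPSec tunnels treated as equal-cost paths.
tunnels = [f"ipsec-tunnel-{i}" for i in range(1, 9)]

# Hypothetical flows: (source IP, destination IP, protocol, source port, destination port).
flows = [("10.0.0.5", "192.168.1.10", 6, 40000 + i, 443) for i in range(1000)]

usage = {tunnel: 0 for tunnel in tunnels}
for flow in flows:
    usage[pick_path(flow, tunnels)] += 1

for tunnel, count in usage.items():
    print(tunnel, count)
```

With per-flow hashing of this kind, packets of one flow always take the same path, so aggregate throughput scales with the number of paths only when traffic consists of many flows.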

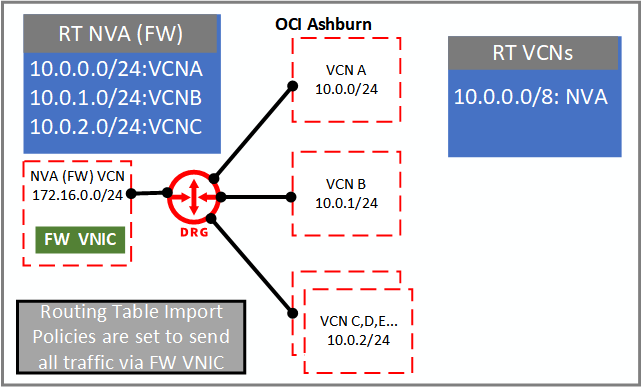

Network virtual appliance (firewall) VCN scaling and flexible bastion VCNs

The DRG now allows you to direct inter-VCN traffic through your self-managed network virtual appliance (NVA) or firewall in a scalable and robust manner. You can apply new DRG features to enforce that all traffic passes through an NVA by routing it to the private IP of a virtual network interface card (VNIC) as the next hop. Hundreds of VCNs can now use a single NVA with a single VNIC, improving scalability and manageability. Import policies now also allow you to specify which routes your VCNs learn and, in turn, which networks they can communicate with.

In this example, the route tables for the VCN attachments only contain routes to the firewall VCN, forcing all traffic through the firewall. The firewall VCN can communicate with all VCNs, allowing it to return the traffic after applying firewall rules. We still support existing topologies using previous VCN transit routing features to firewalls.
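The routing described above can be sketched as a small model. It is not OCI configuration and uses no OCI API: the attachment names and CIDR blocks are hypothetical, the spoke route table is modeled as a single static default route, and the firewall attachment's table is built by a simplified import policy that learns routes only from the listed attachments. The model also assumes the firewall forwards every packet it receives.

```python
import ipaddress

# Hypothetical CIDRs that each attachment advertises into the DRG.
ADVERTISED = {
    "firewall-vcn": ["10.0.0.0/16"],
    "spoke-a": ["10.1.0.0/16"],
    "spoke-b": ["10.2.0.0/16"],
}


def import_routes(allowed_attachments):
    """Build a route table by importing routes only from the allowed attachments."""
    return {
        cidr: attachment
        for attachment in allowed_attachments
        for cidr in ADVERTISED[attachment]
    }


# Spokes only know a route to the firewall VCN.
SPOKE_RT = {"0.0.0.0/0": "firewall-vcn"}
# The firewall attachment learns routes to every spoke.
FIREWALL_RT = import_routes(["spoke-a", "spoke-b"])

TABLE_FOR = {"spoke-a": SPOKE_RT, "spoke-b": SPOKE_RT, "firewall-vcn": FIREWALL_RT}


def lookup(table, destination_ip):
    """Longest-prefix match of destination_ip in one route table."""
    address = ipaddress.ip_address(destination_ip)
    candidates = [
        ipaddress.ip_network(cidr)
        for cidr in table
        if address in ipaddress.ip_network(cidr)
    ]
    if not candidates:
        return None
    best = max(candidates, key=lambda network: network.prefixlen)
    return table[str(best)]


def owns(attachment, destination_ip):
    """True if destination_ip falls inside a CIDR the attachment advertises."""
    address = ipaddress.ip_address(destination_ip)
    return any(address in ipaddress.ip_network(c) for c in ADVERTISED[attachment])


def trace(source, destination_ip):
    """List the attachments a packet visits on its way to destination_ip."""
    path = [source]
    current = source
    while not owns(current, destination_ip):
        current = lookup(TABLE_FOR[current], destination_ip)
        if current is None or current in path:
            break  # no route, or a routing loop
        path.append(current)
    return path


print(trace("spoke-a", "10.2.0.9"))
```

Because the spoke table contains nothing but the route to the firewall VCN, traffic between spokes has no path that bypasses the firewall in this model.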

If you want to try out this new technology, Oracle has partnered with several leading firewall vendors, including FortiGate, Cisco, Check Point, Palo Alto, and more. You can find a list of vendors with ready-to-deploy images in the OCI Marketplace.

You can set up this scenario with the configuration guide.

Usage as a bastion

Using the same topology, you can also deploy a bastion host, a purpose-built Compute instance that provides ingress access to your private network. You can replace the firewall host in the previous diagram with a bastion and use its VCN as a conduit to communicate with the rest of your network. This configuration allows you to enforce security policy at a single point for remote access into your network.

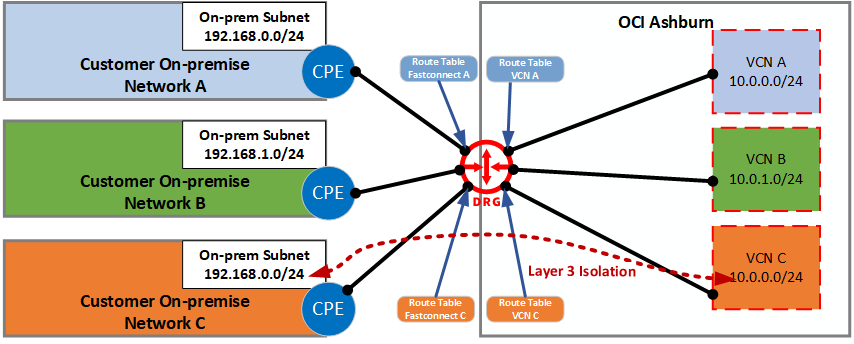

Layer 3 network isolation, ISV architectures, and supporting multi-tenancy scenarios

A single DRG now enables traffic isolation between your networks, customers, and associated on-premises resources. If you’re an ISV, you can now manage and isolate connectivity between your customers under a single pane of glass. In other cases, you might be an enterprise with separate connections and networks for different departments, subsidiaries, or functional roles, such as test and production. Connectivity between these networks can now use a single DRG through the new flexible routing policy controls. This configuration can also be implemented for communications between VCNs only.

Conclusion

We believe that these updates can enhance and simplify how you work with cloud networking and look forward to hearing about the way these features improve your network design and solution development.

If you want to share any feedback with the product team, we’d love to hear from you. Send an email to the Virtual Networking group or submit a note in the comments.