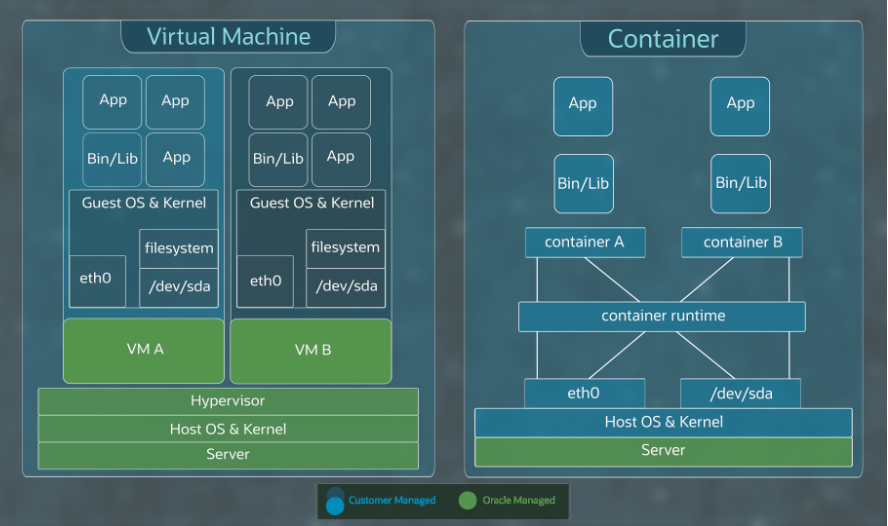

Cloud native applications commonly use virtual machines (VMs) and containers to implement their services and microservices. VMs use hardware virtualization to provide strong isolation and dedicated IO, and they give full flexibility and ownership of the underlying operating system and kernel.

Containers, on the other hand, provide three benefits. They offer fast application start times, which help applications take advantage of the elasticity of the cloud. They offer strong operating system (OS)-level isolation for microservices, each running in its own container. They also rely on a centrally managed base OS that reduces overhead for application owners. Customers often run containers, Kubernetes-managed or otherwise, inside VMs.

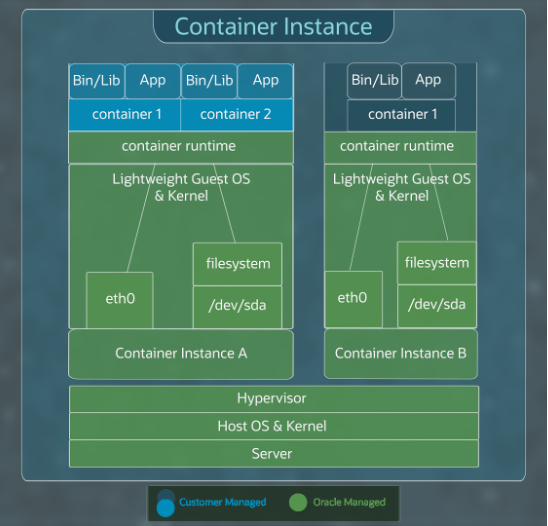

What if we had a product that combined the advantages of VMs and containers? OCI's new Container Instances does exactly that, bringing together the benefits of both VMs and containers.

Inside OCI Container Instances

Oracle Cloud Infrastructure (OCI) Container Instances use hardware virtualization, which provides strong isolation and dedicated IO, such as directly attached block storage devices. Each container instance runs its own fully isolated base OS and kernel and hosts one or more containers, typically belonging to a single pod. For the container instance base image, we use a stripped-down Oracle Linux image with several optimizations designed to significantly reduce container instance boot time. Finally, we manage the base OS inside each container instance through a VSOCK interface that is limited to OS update functionality. This restriction improves the overall security posture while removing OS management overhead for customers.
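To make the idea of a deliberately narrow management channel more concrete, here is a minimal sketch of a guest-side agent that listens on a VSOCK socket and accepts only OS-update requests. It is an illustration under stated assumptions, not OCI's actual agent: the port number (5000), the command names (`os-update-check`, `os-update-apply`), and the newline-terminated text protocol are all hypothetical. `socket.AF_VSOCK` is only available in Python on Linux.

```python
import socket

# Hypothetical allowlist: the only operations this illustrative agent exposes
# over VSOCK are OS-update operations. Anything else is rejected.
ALLOWED_COMMANDS = {"os-update-check", "os-update-apply"}


def handle_request(raw: bytes) -> bytes:
    """Check one newline-terminated request against the allowlist and build a reply."""
    command = raw.decode("utf-8", errors="replace").strip()
    if command in ALLOWED_COMMANDS:
        # A real agent would invoke the OS package manager here;
        # this sketch only acknowledges the request.
        return f"ACCEPTED {command}\n".encode()
    return f"REJECTED {command}\n".encode()


def serve(port: int = 5000) -> None:
    """Listen on VSOCK (Linux only) and answer each connection with handle_request.

    Assumes one short request per connection, so a single recv() suffices.
    """
    with socket.socket(socket.AF_VSOCK, socket.SOCK_STREAM) as server:
        # VMADDR_CID_ANY accepts connections addressed to any local context ID.
        server.bind((socket.VMADDR_CID_ANY, port))
        server.listen()
        while True:
            conn, _addr = server.accept()
            with conn:
                data = conn.recv(1024)
                conn.sendall(handle_request(data))
```

On a Linux guest, calling `serve()` starts the listener. Keeping the validation in `handle_request` separate from the transport means the allowlist can be reasoned about and tested without a VSOCK device. The design point this sketch illustrates is that the host-to-guest channel cannot be used as a general remote shell, because anything outside the allowlist is refused.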

Want to know more?

In this video blog, we dive into the internal engineering architecture of OCI Container Instances and our key engineering choices. We discuss the rationale for using an optimized, customized Oracle Linux with QEMU/KVM instead of alternatives such as Kata Containers. We also discuss the rationale behind making container instances a first-class OCI Compute resource that customers can use directly, as opposed to enabling container instances as worker nodes for Oracle Cloud Infrastructure Container Engine for Kubernetes.

The choices we made in developing Oracle Cloud Infrastructure are markedly different. The most demanding workloads and enterprise customers have pushed us to think differently about designing our cloud platform.

We have more of these engineering deep dives as part of this First Principles series, hosted by Pradeep Vincent and other experienced engineers at Oracle.

For more information, see the following resources: