Load balancing is a key component of your cloud infrastructure and plays a pivotal role in your users’ digital experience. As the gateway between users and your application, load balancers provide the availability, scalability, and agility that your business needs in today’s digitally transformed world.

Over the past few months, we’ve made significant enhancements to our Load Balancing service and introduced Oracle Cloud Infrastructure (OCI) flexible load balancing. OCI Flexible Load Balancer is a layer 4 (TCP) and layer 7 (HTTP) proxy that supports features such as SSL termination and advanced HTTP routing policies. It scales responsively up and down within limits you set: you choose a custom minimum bandwidth and an optional maximum bandwidth, each anywhere from 10 to 8,000 Mbps. The minimum bandwidth is always available, so your workloads have capacity ready from the start. As incoming traffic increases, the available bandwidth scales up from the minimum. If you need to control costs, the optional maximum caps bandwidth, even during unexpected peaks.
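The bandwidth behavior described above amounts to clamping demand between the two settings. The following Python sketch models it under stated assumptions: the function names are hypothetical, the maximum is assumed to be no lower than the minimum, and an unset maximum is assumed to let bandwidth grow to the 8,000 Mbps top of the range. It is a mental model only, not how OCI meters traffic.

```python
from typing import Optional

# Range quoted in the text for both settings, in Mbps.
SHAPE_FLOOR_MBPS = 10
SHAPE_CEILING_MBPS = 8000


def validate_shape(minimum_mbps: int, maximum_mbps: Optional[int] = None) -> None:
    """Reject bandwidth settings outside the 10-8,000 Mbps range."""
    if not SHAPE_FLOOR_MBPS <= minimum_mbps <= SHAPE_CEILING_MBPS:
        raise ValueError(f"minimum {minimum_mbps} Mbps is outside 10-8,000 Mbps")
    if maximum_mbps is not None:
        if not SHAPE_FLOOR_MBPS <= maximum_mbps <= SHAPE_CEILING_MBPS:
            raise ValueError(f"maximum {maximum_mbps} Mbps is outside 10-8,000 Mbps")
        # Assumption: the maximum may not be lower than the minimum.
        if maximum_mbps < minimum_mbps:
            raise ValueError("maximum must be greater than or equal to minimum")


def available_bandwidth(demand_mbps: float, minimum_mbps: int,
                        maximum_mbps: Optional[int] = None) -> float:
    """Bandwidth offered for a given demand (simplified, hypothetical model)."""
    validate_shape(minimum_mbps, maximum_mbps)
    # Assumption: with no maximum set, scaling tops out at the range ceiling.
    ceiling = maximum_mbps if maximum_mbps is not None else SHAPE_CEILING_MBPS
    # The minimum is always available, even when demand is lower;
    # above it, bandwidth follows demand until it hits the ceiling.
    return min(max(demand_mbps, minimum_mbps), ceiling)
```

With a 100 Mbps minimum and a 1,000 Mbps maximum, light traffic still sees 100 Mbps, moderate traffic gets what it asks for, and a spike to 5,000 Mbps is held at 1,000 Mbps.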

Introducing Flexible Network Load Balancer

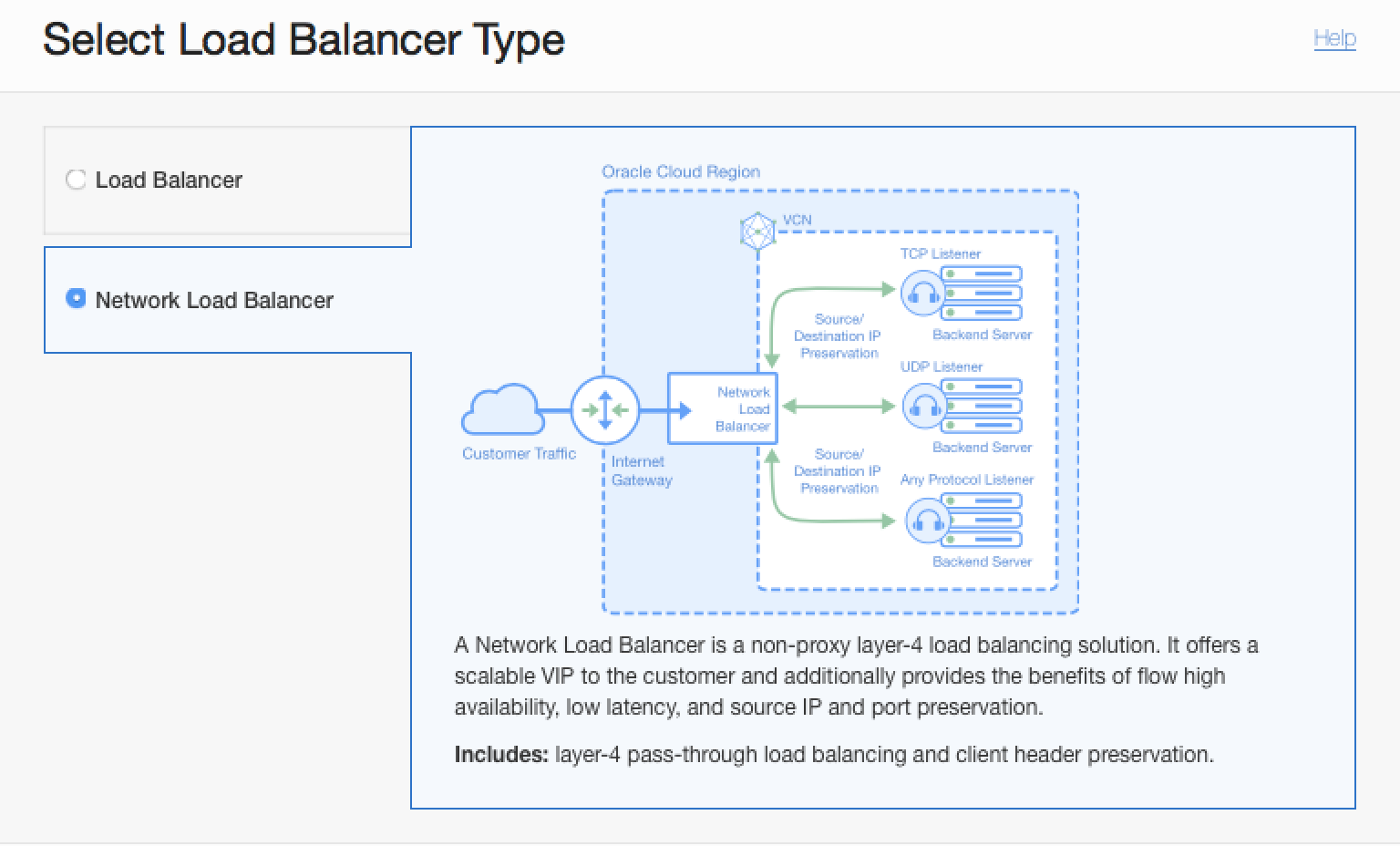

Today, we’re pleased to announce an exciting addition to the flexible load balancing portfolio: OCI Flexible Network Load Balancer (Network Load Balancer). The Network Load Balancer is a non-proxy solution that performs pass-through load balancing of layer 3 and layer 4 (TCP/UDP/ICMP) workloads. It provides a regional virtual IP (VIP) address and scales elastically up or down with client traffic, with no minimum or maximum bandwidth to configure.

It also provides flow high availability and preserves the source and destination IP addresses and ports. It’s designed to handle volatile traffic patterns and millions of flows, delivering high throughput while maintaining ultra-low latency. This makes it an ideal load balancing solution for latency-sensitive workloads such as real-time streaming, VoIP, Internet of Things (IoT), and trading platforms. The Network Load Balancer is also optimized for long-running connections lasting days or months, which makes it well suited for database and WebSocket applications.

The Network Load Balancer operates at the connection level and distributes incoming client connections across healthy backend servers based on IP protocol data. Its load balancing policy uses a hashing algorithm to distribute client flows. By default, the policy hashes a 5-tuple: source IP address, source port, destination IP address, destination port, and IP protocol. The 5-tuple policy provides session affinity within a given TCP or UDP session: packets in the same session are directed to the same backend server behind the Network Load Balancer. To keep affinity beyond the lifetime of a single session, you can use a 3-tuple (source IP, destination IP, and protocol) or 2-tuple (source and destination IP) policy instead.
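To make the difference between the policies concrete, here is a minimal Python sketch of tuple-based flow hashing. It is not OCI’s actual algorithm: the `Flow` type and `pick_backend` function are hypothetical, SHA-256 stands in for whatever hash the service uses, and backends are chosen with simple modulo hashing. It shows why the 5-tuple policy pins one session to one backend, while the 3-tuple and 2-tuple policies keep all of a client’s sessions on the same backend even as its source port changes.

```python
import hashlib
from typing import NamedTuple, Sequence


class Flow(NamedTuple):
    """Packet header fields a policy can hash on (illustrative)."""
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    protocol: str  # e.g. "TCP" or "UDP"


def flow_key(flow: Flow, policy: str) -> bytes:
    """Serialize only the fields that the chosen policy hashes on."""
    if policy == "5-tuple":
        fields = (flow.src_ip, flow.src_port, flow.dst_ip, flow.dst_port, flow.protocol)
    elif policy == "3-tuple":
        fields = (flow.src_ip, flow.dst_ip, flow.protocol)
    elif policy == "2-tuple":
        fields = (flow.src_ip, flow.dst_ip)
    else:
        raise ValueError(f"unknown policy: {policy!r}")
    return "|".join(str(f) for f in fields).encode("utf-8")


def pick_backend(flow: Flow, backends: Sequence[str], policy: str = "5-tuple") -> str:
    """Map a flow to a healthy backend by hashing its key (modulo hashing)."""
    if not backends:
        raise ValueError("no healthy backends")
    digest = hashlib.sha256(flow_key(flow, policy)).digest()
    # Same key -> same digest -> same backend, which is what gives affinity.
    return backends[int.from_bytes(digest[:8], "big") % len(backends)]
```

Python’s built-in `hash()` is avoided because string hashing is randomized per process, which would break the determinism the example relies on. Modulo hashing also remaps many flows whenever the backend list changes; production load balancers commonly use a more stable scheme, such as consistent hashing.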

Pass-through layer 4 (TCP/UDP) load balancing

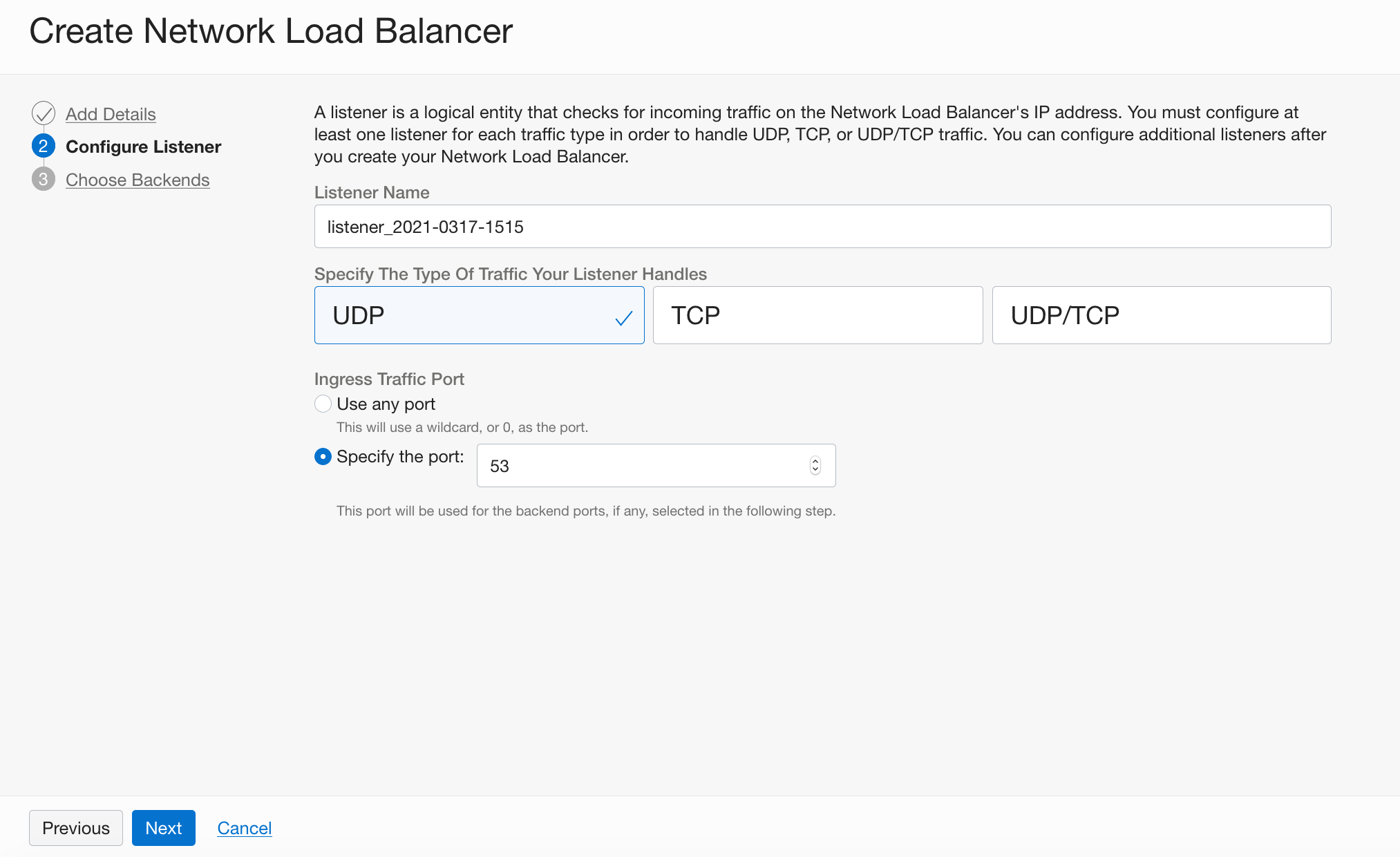

Say you’re an enterprise admin tasked with migrating your on-premises DNS service to OCI. With the Network Load Balancer, you can centralize your DNS service infrastructure to gain operational efficiencies, such as scaling, managing downtime and upgrades, and sharing one fixed IP address externally. You can have a Network Load Balancer ready to handle any surge in DNS traffic within a few minutes by following these steps:

- In the Console, under the Core Infrastructure group, go to Networking and click Load Balancers. Under Scope, choose a compartment that you have permission to work in, and then click Create Load Balancer.
- From the Select Load Balancer Type panel, choose Network Load Balancer.
- On the Add Details page, specify the attributes of the Network Load Balancer, including the name and the public or private visibility type.
- Configure the listener properties. In this example, we use a UDP listener on port 53 for DNS queries.
- As the final step, add the backend servers that host your DNS service and specify the health check policy.
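Once the Network Load Balancer is active, you can check the UDP port 53 listener end to end by sending a DNS query to its IP address. The following Python sketch uses only the standard library to build a minimal RFC 1035 query for an A record and send it over UDP. The function names are hypothetical, and it assumes the backends answer standard DNS queries.

```python
import socket
import struct
from typing import Tuple

QTYPE_A = 1    # IPv4 address record (RFC 1035)
QCLASS_IN = 1  # Internet class


def build_dns_query(name: str, query_id: int = 0x1234) -> bytes:
    """Build a minimal RFC 1035 query for the A record of `name`."""
    labels = name.rstrip(".").split(".")
    if any(not label or len(label) > 63 for label in labels):
        raise ValueError(f"invalid domain name: {name!r}")
    # Header: ID, flags (0x0100 = recursion desired), QDCOUNT=1, AN/NS/ARCOUNT=0.
    header = struct.pack("!HHHHHH", query_id, 0x0100, 1, 0, 0, 0)
    # QNAME: each label prefixed by its length, terminated by a zero byte.
    qname = b"".join(bytes([len(l)]) + l.encode("ascii") for l in labels) + b"\x00"
    return header + qname + struct.pack("!HH", QTYPE_A, QCLASS_IN)


def query_through_nlb(nlb_ip: str, name: str, port: int = 53,
                      timeout: float = 2.0) -> Tuple[int, int]:
    """Send one query to the listener; return (response code, answer count)."""
    query_id = 0x1234
    query = build_dns_query(name, query_id)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)  # raises a timeout error if nothing answers
        sock.sendto(query, (nlb_ip, port))
        response, _ = sock.recvfrom(512)  # classic DNS-over-UDP size limit
    resp_id, flags, _qdcount, ancount = struct.unpack("!HHHH", response[:8])
    if resp_id != query_id:
        raise RuntimeError("response ID does not match query ID")
    return flags & 0x000F, ancount
```

For example, `query_through_nlb("203.0.113.10", "example.com")` queries through the load balancer, where `203.0.113.10` is a documentation placeholder for your Network Load Balancer’s IP address. A response code of 0 (NOERROR) with a nonzero answer count means the query reached a backend and was answered.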

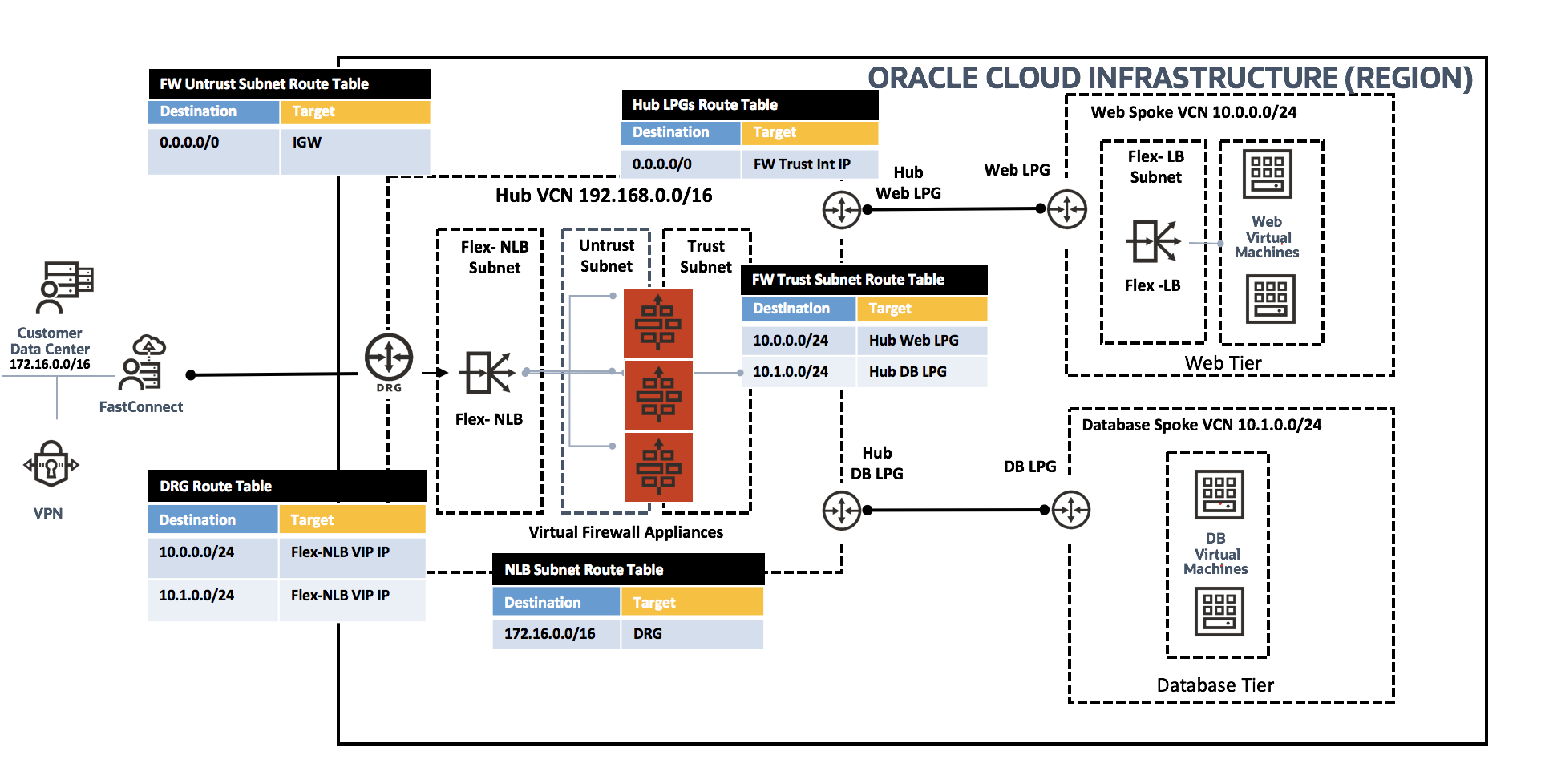

Scaling network virtual appliances and transparent layer-3 next hop

The OCI Flexible Network Load Balancer enables you to deploy and scale your network virtual appliances, such as firewalls and intrusion detection systems, behind a single regional IP address. You can also use a private Network Load Balancer as the next-hop route target to which packets are forwarded along the path to their final destination.

As shown in the following diagram, user traffic that would otherwise flow directly from source to destination is instead routed, using VCN route tables, to firewall instances hosted behind the Network Load Balancer. In this mode, the Network Load Balancer acts as a transparent, bump-in-the-wire layer 3 load balancer: it doesn’t modify the packets and preserves the original source and destination IP addresses in the header. This lets the firewall appliances inspect the original client packet and apply security policies before forwarding it to the application backend servers.
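The routing half of this design can be sketched as a longest-prefix match over route rules. In the following Python example, the route table, its CIDRs, and the `10.0.1.100` target are hypothetical; the sketch assumes, as in VCN route tables, that the most specific matching rule wins. Traffic headed for the application subnet resolves to the Network Load Balancer’s private IP rather than going straight to its destination.

```python
import ipaddress
from typing import List, Optional, Tuple

# Hypothetical route rules: (destination CIDR, route target).
# 10.0.2.0/24 stands in for the application subnet, and 10.0.1.100 for the
# private IP of a Network Load Balancer fronting the firewall instances.
ROUTE_RULES: List[Tuple[str, str]] = [
    ("0.0.0.0/0", "internet-gateway"),
    ("10.0.2.0/24", "10.0.1.100"),
]


def next_hop(dst_ip: str, rules: List[Tuple[str, str]]) -> str:
    """Return the target of the most specific rule that matches dst_ip."""
    addr = ipaddress.ip_address(dst_ip)
    best_net: Optional[ipaddress._BaseNetwork] = None
    best_target: Optional[str] = None
    for cidr, target in rules:
        net = ipaddress.ip_network(cidr)
        # A longer prefix is more specific, so it overrides broader matches.
        if addr in net and (best_net is None or net.prefixlen > best_net.prefixlen):
            best_net, best_target = net, target
    if best_target is None:
        raise LookupError(f"no route to {dst_ip}")
    return best_target
```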

Next steps

For more information, see OCI Network Load Balancer in the OCI documentation. We want you to experience these new features and all the enterprise-grade capabilities that Oracle Cloud Infrastructure offers. It’s easy to try them out with a US$300 free credit. As always, your feedback drives our feature roadmap and product development. If you have any feedback or virtual networking feature requests, contact us through your Oracle sales team.