We’re excited to announce the general availability of E4 DenseIO with AMD’s third-generation EPYC “Milan” processor and the latest generation of SSDs. With 128 cores, 2 TB of memory, and 54.4 TB of direct-attached non-volatile memory express (NVMe) storage, E4 DenseIO instances are designed to run the most demanding storage-optimized workloads at 50% better price-performance than the previous-generation DenseIO2 instances.

Database and analytics workloads benefit from direct-attached NVMe storage with low latency and high I/O operations per second (IOPS). Examples include relational databases like MySQL and PostgreSQL, NoSQL databases like Cassandra and MongoDB, online transaction processing (OLTP), data analytics, data warehouses, and distributed file systems. Local NVMe storage maximizes the performance of these workloads to meet end-user performance expectations.

Lower latency direct-attached storage and higher compute with the latest generation of AMD processors

E4 DenseIO supports both bare metal and virtual machine (VM) instances with the following configurations:

| Shape | OCPU | Memory | Attached Storage | Remote Storage | Network |
| --- | --- | --- | --- | --- | --- |
| BM.DenseIO.E4.128 | 128 cores | 2 TB | 54.4 TB | Up to 1 PB of remote block storage | 100 Gbps |
| Virtual machine: VM.DenseIO.E4.Flex | 8–32 cores | 128–512 GB | 6.8–27.2 TB | Up to 1 PB of remote block storage | Up to 32 Gbps |

E4 DenseIO bare metal provides two times the number of cores, 2.5 times the memory, and two times the networking, compared to the previous generation DenseIO2 storage-optimized instances. With the newer generation of NVMe SSDs on E4 DenseIO, bare metal customers get double the IOPS and 50% higher price-performance than DenseIO2 bare metal instances.

Customers using the previous generation DenseIO2 instances can now take advantage of the higher compute performance and higher performance attached storage, allowing them to process even larger and more complex workloads. Customers running legacy on-premises applications that require large memory can now take advantage of the 2 TB of memory on the E4 DenseIO bare metal instances.

E4 DenseIO Flex VM instances initially support three configurations: 8 OCPUs with 6.8 TB of NVMe, 16 OCPUs with 13.6 TB of NVMe, and 32 OCPUs with 27.2 TB of NVMe. Customers can benefit from the higher-frequency CPU and higher-performing NVMe solid-state drives (SSDs) available on E4 DenseIO VM instances.

E4 DenseIO provides over 50% better price-performance benefit at a lower per-core price

E4 DenseIO is priced to deliver industry-leading price-performance at $0.025 per OCPU-hour, $0.0015 per GB-hour of memory, and $0.0612 per TB-hour of NVMe storage. The total price for a shape is the sum of these three components.
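As a rough illustration of how the three components add up, here is a minimal sketch in Python. It uses only the rates quoted above and treats all of them as hourly. The `hourly_price` helper is hypothetical, as are the memory sizes paired with each VM size (the table gives only a 128–512 GB range) and the conversion of 2 TB to 2,048 GB.

```python
# Rates quoted in this post, in USD. Treating all three as hourly is an assumption.
OCPU_RATE = 0.025      # per OCPU-hour
MEMORY_RATE = 0.0015   # per GB-hour of memory
NVME_RATE = 0.0612     # per TB-hour of NVMe storage


def hourly_price(ocpus: int, memory_gb: float, nvme_tb: float) -> float:
    """Return the sum of the OCPU, memory, and NVMe components for one hour."""
    return ocpus * OCPU_RATE + memory_gb * MEMORY_RATE + nvme_tb * NVME_RATE


# Illustrative configurations: (OCPUs, memory in GB, NVMe in TB).
# The memory-per-VM pairings and 2 TB = 2048 GB are assumptions.
shapes = {
    "VM.DenseIO.E4.Flex, 8 OCPU": (8, 128, 6.8),
    "VM.DenseIO.E4.Flex, 16 OCPU": (16, 256, 13.6),
    "VM.DenseIO.E4.Flex, 32 OCPU": (32, 512, 27.2),
    "BM.DenseIO.E4.128": (128, 2048, 54.4),
}

for name, (ocpus, memory_gb, nvme_tb) in shapes.items():
    print(f"{name}: ${hourly_price(ocpus, memory_gb, nvme_tb):.4f} per hour")
```

Actual bills depend on region, currency, and any other services attached to the instance, so treat the output as an estimate only.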

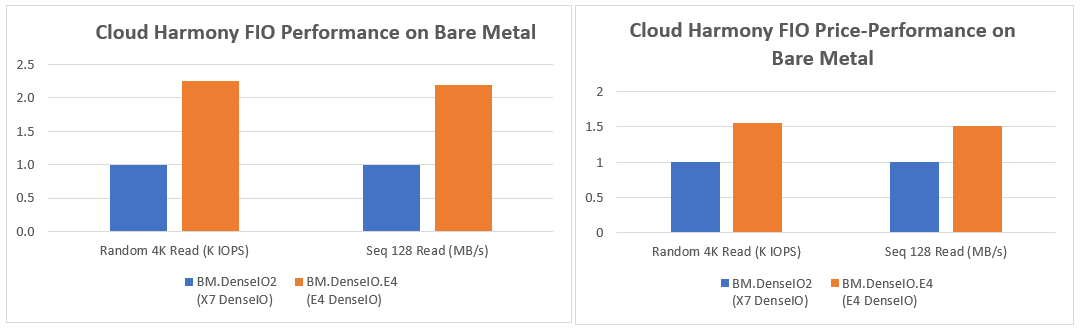

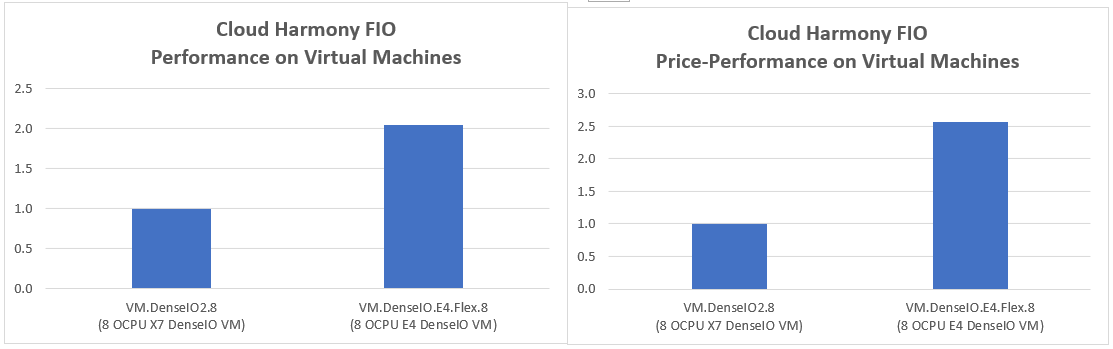

We ran industry-standard micro-benchmarks and application benchmarks to exercise the CPU, memory, and NVMe performance on E4 DenseIO bare metal and VM instances. Customers running relational databases, NoSQL databases, and OLTP workloads can benefit from the higher IOPS performance, while data warehouse and analytics workloads benefit from the higher sequential performance.

For bare metal instances, Gartner Cloud Harmony’s Flexible IO (FIO) benchmarks on E4 DenseIO show more than twice the random 4 KB read IOPS and sequential 128 KB read performance of DenseIO2. E4 DenseIO also provides a 50% price-performance benefit over DenseIO2.

Gartner Cloud Harmony’s FIO benchmarks on an 8-OCPU E4 DenseIO VM showed two-times higher performance than DenseIO2. With a 20% lower price, the VM’s price-performance benefit is over 2.5 times that of DenseIO2.
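The 2.5-times figure follows from dividing the performance ratio by the price ratio. A minimal sketch of that arithmetic, assuming price-performance means performance per dollar (the `price_performance_gain` helper is hypothetical and not part of any benchmark tool):

```python
def price_performance_gain(performance_ratio: float, price_ratio: float) -> float:
    """Return new-vs-old performance per dollar.

    performance_ratio: new performance divided by old performance.
    price_ratio: new price divided by old price.
    """
    if price_ratio <= 0:
        raise ValueError("price_ratio must be positive")
    return performance_ratio / price_ratio


# Twice the performance at a 20% lower price (price ratio of 0.8).
gain = price_performance_gain(performance_ratio=2.0, price_ratio=1 - 0.20)
print(f"Price-performance gain: {gain:.2f}x")
```

Any measured performance ratio above 2.0 pushes the result above 2.5, which is consistent with the "over 2.5 times" wording.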

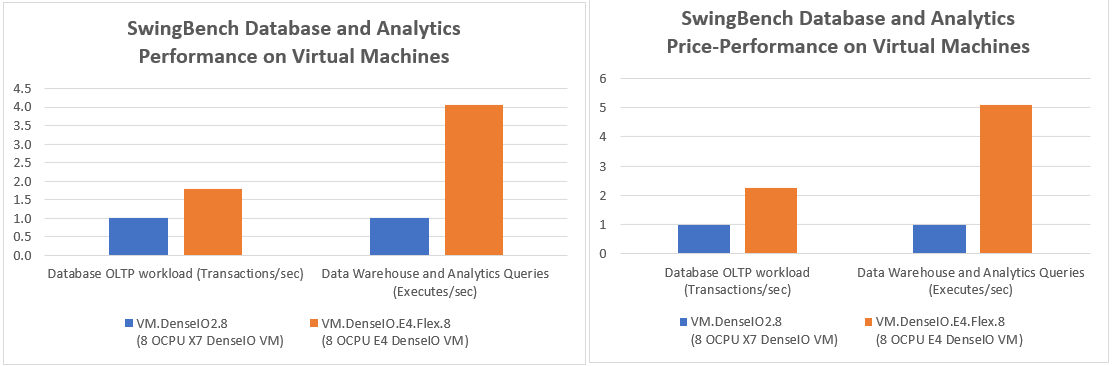

We also ran SwingBench application benchmarks on an 8-OCPU E4 DenseIO VM and 8-OCPU DenseIO2 VM.

SwingBench comprises six benchmarks, including OLTP benchmarks and TPC-DS-like benchmarks. With SwingBench, we saw up to 1.8 times the transactions per second of VM.DenseIO2 instances for OLTP workloads with varying user loads, and up to four times the runs per second for data warehouse and analytics queries. With the E4 DenseIO VMs priced 20% lower than similar DenseIO2 VMs, customers now get a much higher-performance platform at a lower price.

What customers and partners are saying

Weka solves the most demanding data management challenges of next-generation workloads. The Weka Data Platform delivers epic performance at scale across the entire data pipeline for those workloads. For example, workloads that require low-latency access to millions of small files, such as AI, ML, EDA, financial modeling, genomics and life sciences, media rendering, and big data analytics, can achieve drastic improvements compared to traditional data solutions.

“Weka’s resilient, scalable platform was architected from the ground up to avoid the common bottlenecks of traditional unified storage systems,” said Nilesh Patel, chief product officer at Weka. “Exceptional scale with greater DataOps efficiency is a fundamental requirement for our customers. The new E4 DenseIO instances in Oracle Cloud Infrastructure, which feature the latest AMD CPU and large 54 TB of low-latency NVMe storage, complement Weka’s cloud native data platform technology to provide exponentially fast business outcomes for customers’ AI and GPU workloads.”

Oracle Cloud VMware Solution launched its new service with the E4 DenseIO shape and offers customers the flexibility of running their workloads on various core counts tailored to their specific needs. “With the new E4 DenseIO shape based on the third-generation AMD EPYC processors, Oracle Cloud VMware Solution can now provide customers with industry-leading VM deployment options per SDDC host for high CPU or high memory use cases, with over 2.5 times the memory and CPUs per host of competing offerings,” said Stephen Cross, director of product management for Oracle Cloud VMware Solution.

“AMD EPYC processors continue to demonstrate strong performance across a variety of cloud workloads and environments,” said Lynn Comp, corporate vice president of the Cloud Business Unit at AMD. “AMD EPYC processor-based E4 DenseIO bare metal instances running Cassandra workloads saw, on average, a two-times performance uplift compared to the previous generation DenseIO2.”

Get started with E4 DenseIO instances

For more information on the E4 DenseIO instances, you can access our Compute Shapes documentation. E4 DenseIO instances are now available in 14 regions globally. If you’re new to Oracle Cloud Infrastructure, you can sign up for a trial account for free.

MLNC-081: Results as of April 20, 2022 based on AMD internal tests using the YCSB benchmark version 0.16.0 on OCI BM.DenseIO.E4.128 versus BM.DenseIO2.52 bare metal instances for 95 Read–5 Write, 50 Read–50 Write, and 5 Read–95 Write operations, each with 2 billion records. Performance results presented are based on the test date in the configuration and are in alignment with AMD internal bare metal testing factoring in cloud service provider server configuration differences. Results vary from changes to the underlying configuration and other conditions, such as resources and optimizations by the cloud service provider.