This post is the third in the four-part series on Autoscaling a load balanced web application. You can find links for the entire series at the end of this post.

In Part 1, we set up the scenario of TheSmithStore, a fictional online retailer who needs their web application to respond to variable levels of customer traffic. TheSmithStore has decided to harness the scalability of Oracle Cloud Infrastructure to ensure the availability of their web application, even during unexpected traffic spikes. In Part 2, we set up the load balancer.

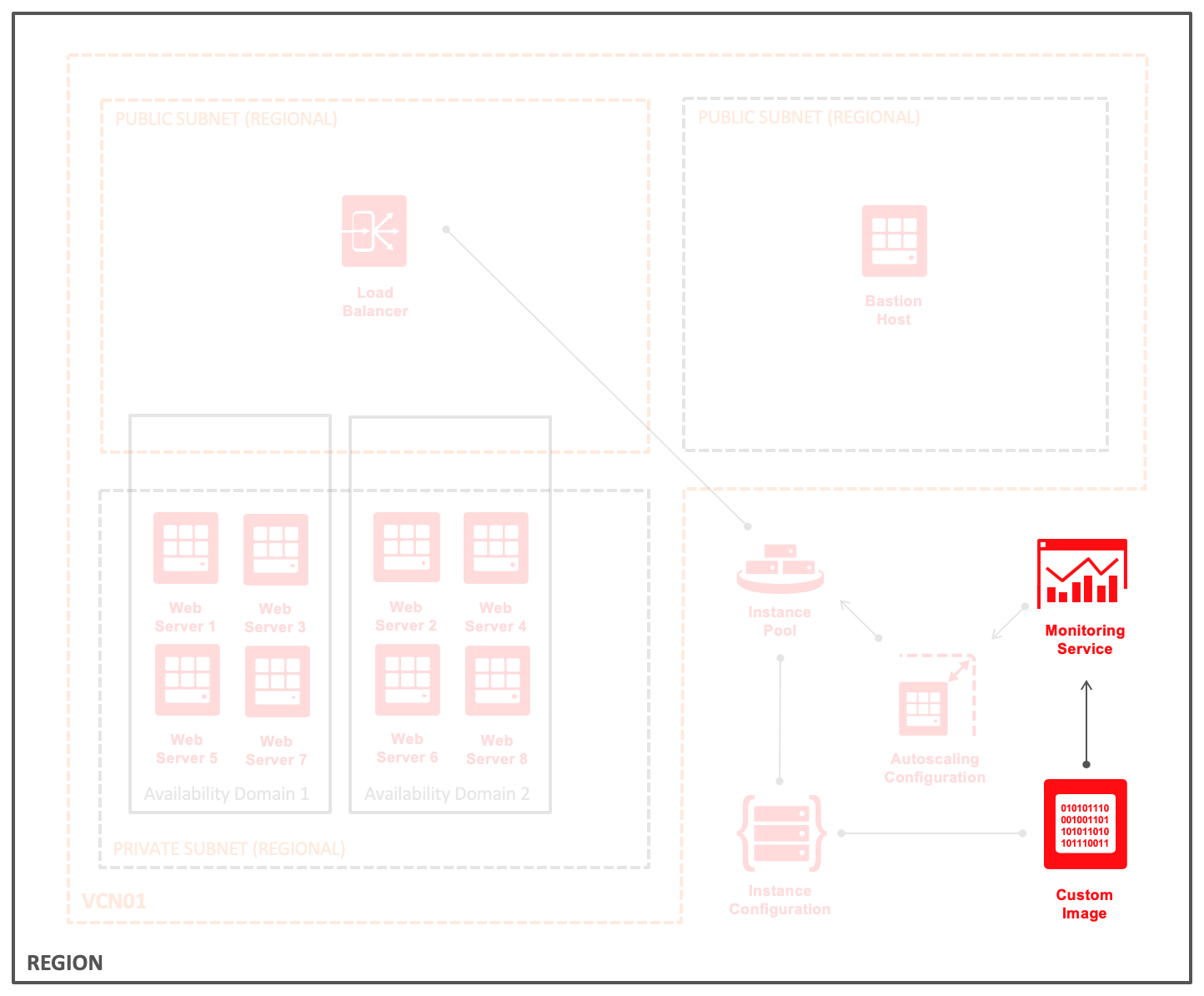

This part focuses on the custom Compute image and its interaction with the Monitoring service. The following diagram was introduced in Part 1, but here it highlights the image and the Monitoring service. The connection between them depicts a one-way push relationship: the instance emits raw data points to the service through the OracleCloudAgent.

Part 4 describes how metrics provided by the Monitoring service trigger autoscaling events. This post doesn’t cover details about the Monitoring service. For information, including how the Monitoring service extends beyond Compute instance resources, see the service documentation.

Scaling Custom Images

The core of Oracle Cloud Infrastructure Compute autoscaling is individual compute instances. Part 4 describes how individual instances are launched and terminated based on an autoscaling configuration. When a scale-out event occurs, new instances reference a custom image as part of the launch process. Custom images should be set up not only to run the service that you want but to do so in a way that interacts well with autoscaling. The key to autoscaling is that no human operator is involved in provisioning or de-provisioning individual instances.

Consider the following best practices when creating a custom image to be used with autoscaling.

Launch Immediately into Service

The instance should start and immediately begin to process work. It wouldn’t make sense for these servers to need someone to log in and run a command or create a file before they start to operate. That means there should be a service that runs on system startup.

Prepare at Image Build, not at Instance Launch

Most of the time, an instance pool expands or contracts based on load. When a new instance launches, it should be able to handle work as quickly as possible, which means that it shouldn’t go through a full system build and deploy. Instead, as much preparation as possible should happen when the image is created, such as installing packages, writing configuration, and possibly downloading or indexing data.

Enter the Load Balancer Pool When Fully Warmed Up

Some things are defined only after instance launch. Sometimes these are things that must be unique to a particular host. Sometimes, an instance might need to synchronize or otherwise warm up before it accepts production loads. When this happens, it might be necessary for the instance not to signal that it’s able to accept work until it’s truly available. For load-balanced services, the instance might come up with an “unhealthy” health check on purpose and only respond as “healthy” after it’s fully warmed up.
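One way to sketch this pattern in shell is a marker file that keeps the health check failing until warm-up finishes. The helper name `release_when_warm` and the marker-file convention are assumptions for illustration, not part of any Oracle tooling. The idea is only that the marker is removed when, and only when, the warm-up command succeeds.

```shell
#!/bin/sh
# Sketch only: release_when_warm is a hypothetical helper, not an OCI tool.
# Usage: release_when_warm MARKER_FILE WARMUP_COMMAND [ARGS...]
# Runs the warm-up command and removes the marker file only on success,
# so a failed warm-up leaves the instance reporting "unhealthy".
release_when_warm() {
    marker=$1
    shift
    if "$@"; then
        rm -f "$marker"
        return 0
    fi
    echo "warm-up failed; leaving $marker in place" >&2
    return 1
}
```

The web server would be configured separately to answer the health check with an error for as long as the marker file exists. Tying marker removal to the warm-up exit status means an instance that fails to warm up never receives traffic.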

Publish Metrics to the Monitoring Service

All infrastructure systems determine the appropriate resources based on usage. A human operator could check an instance to see if it’s overloaded. Autoscaling must be able to query an instance’s use through the Monitoring service. By default, Oracle-provided images emit raw data to the Monitoring service about memory, disk, CPU, and network. For Linux and Windows images not provided by Oracle, the OracleCloudAgent package is available for manual installation. If you need metrics beyond the standard, you can use custom utilization measurements by following our Publishing Custom Metrics guide.

Terminate Without Data Loss

Instances that are managed by an autoscaling configuration terminate without warning. Instances receive a power-off signal to shut down services gracefully, but if the system doesn’t stop in time, a hard stop occurs. To prevent data loss, your service should complete its work when it receives a terminate signal.
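For a service written as a shell loop, a minimal sketch of this behavior could trap the terminate signal and finish the current unit of work before exiting. The function name `run_worker` and its state file are hypothetical, and the one-second `sleep` stands in for real work.

```shell
#!/bin/sh
# Sketch only: run_worker and its state file are hypothetical.
# After SIGTERM arrives, the loop finishes its current unit of work,
# records that it drained cleanly, and then returns.
run_worker() {
    state_file=$1
    stop_requested=0
    trap 'stop_requested=1' TERM
    while [ "$stop_requested" -eq 0 ]; do
        echo "working" > "$state_file"   # stand-in for one unit of real work
        sleep 1
    done
    echo "drained" > "$state_file"
}
```

Because a hard stop follows if shutdown takes too long, each unit of work in a loop like this should be short enough to finish well within the shutdown window.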

TheSmithStore Implementation

The web server images for TheSmithStore are built on Oracle Linux 7, have the Apache httpd web server installed and configured, and are enabled to run as a service at launch. Apache httpd knows how to gracefully shut down, so no other action is needed on that point. The prelaunch deployment consists of a web server package and scripts required to warm up our service. Finally, we include configurations to ensure that the service launches with a 503 Service Unavailable error message until warmup is complete.

The manual build process for TheSmithStore follows. After you complete it, follow the documentation to create a custom image from the instance.

Note: The image OCID of the custom image is a required reference in Part 4 of this blog series.

First run these commands:

    yum install -y httpd
    firewall-cmd --zone=public --permanent --add-service=http
    systemctl enable httpd
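Before moving on, it can be worth confirming that these commands took effect. The following is a rough sketch, assuming the same `httpd` service name and `public` zone used above; `check_image_prereqs` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch only: check_image_prereqs is a hypothetical helper.
# Returns 0 when httpd is enabled at boot and the permanent firewall
# configuration for the public zone allows the http service.
check_image_prereqs() {
    if ! systemctl is-enabled --quiet httpd; then
        echo "httpd is not enabled to start at boot" >&2
        return 1
    fi
    if ! firewall-cmd --permanent --zone=public --query-service=http >/dev/null; then
        echo "http is not allowed in the permanent public zone" >&2
        return 1
    fi
    echo "image prerequisites look OK"
}
```

The check queries the permanent firewall configuration because that is what a new instance loads at boot.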

Then write these files:

/etc/httpd/conf.d/maintenance.conf

If you remember the health checks from Part 2, the load balancer is looking for a 200 response from the instance to determine its health. Here, the default 503 error response ensures that the load balancer doesn’t distribute incoming requests to new instances prematurely.

    RewriteEngine On
    ErrorDocument 503 /maintenance.html
    RewriteCond /var/www/html/maintenance.html -f
    RewriteRule !^/maintenance.html - [L,R=503]

/var/www/html/maintenance.html

503 Service Unavailable

/usr/local/bin/warmup

    #!/bin/sh
    # warmup: sync displayName data into our index and mark as healthy for load balancer
    curl -Ls http://169.254.169.254/opc/v1/instance/displayName > /var/www/html/index.html
    echo >> /var/www/html/index.html
    rm -f /var/www/html/maintenance.html

/etc/systemd/system/warmup.service

    [Unit]
    After=network.target

    [Service]
    ExecStart=/usr/local/bin/warmup

    [Install]
    WantedBy=default.target

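Finally, make the warmup script executable so that systemd can run it, and enable the service:

    chmod +x /usr/local/bin/warmup
    systemctl enable warmup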
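To check the 503-then-200 behavior on a test instance launched from the image, a polling loop like the following sketch could be used. Both helper names are hypothetical, and the probe assumes `curl` is installed and that the web server answers on the URL you pass in.

```shell
#!/bin/sh
# Sketch only: both helpers are hypothetical names.

# Print the HTTP status code returned by the URL in $1.
# With these options curl prints 000 when it cannot connect.
probe_http_status() {
    curl -s -o /dev/null -w '%{http_code}' "$1"
}

# Usage: wait_until_healthy MAX_TRIES DELAY_SECONDS PROBE_COMMAND [ARGS...]
# Re-runs the probe until it prints 200 or the tries run out.
wait_until_healthy() {
    max_tries=$1
    delay=$2
    shift 2
    try=0
    while [ "$try" -lt "$max_tries" ]; do
        status=$("$@" || true)
        if [ "$status" = "200" ]; then
            return 0
        fi
        try=$((try + 1))
        sleep "$delay"
    done
    return 1
}
```

For example, `wait_until_healthy 30 2 probe_http_status http://localhost/` would poll every two seconds for up to a minute. Passing the probe as a command keeps the loop reusable for other checks.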

Wrapping Up

With that, a custom image is ready to be used with autoscaling. Be sure to copy the image OCID for use in Part 4. In that final post of this series, the custom Compute image created here launches into an instance pool that is attached to the load balancer from Part 2.

As always, you can try all these features for yourself with a free trial. If you don’t already have an Oracle Cloud account, go to https://www.oracle.com/cloud/free/ to sign up today.

Blog Series Links

Part 1: Autoscaling a Load Balanced Web Application

Part 2: Preparing a Load Balancer for Instance Pools and Autoscaling

Part 3: You are here.

Part 4: Using Compute Autoscaling with the Load Balancing Service