We’re excited to announce the general availability of Streaming-as-a-source and Functions-as-a-task in Service Connector Hub to enable stream and log processing scenarios.

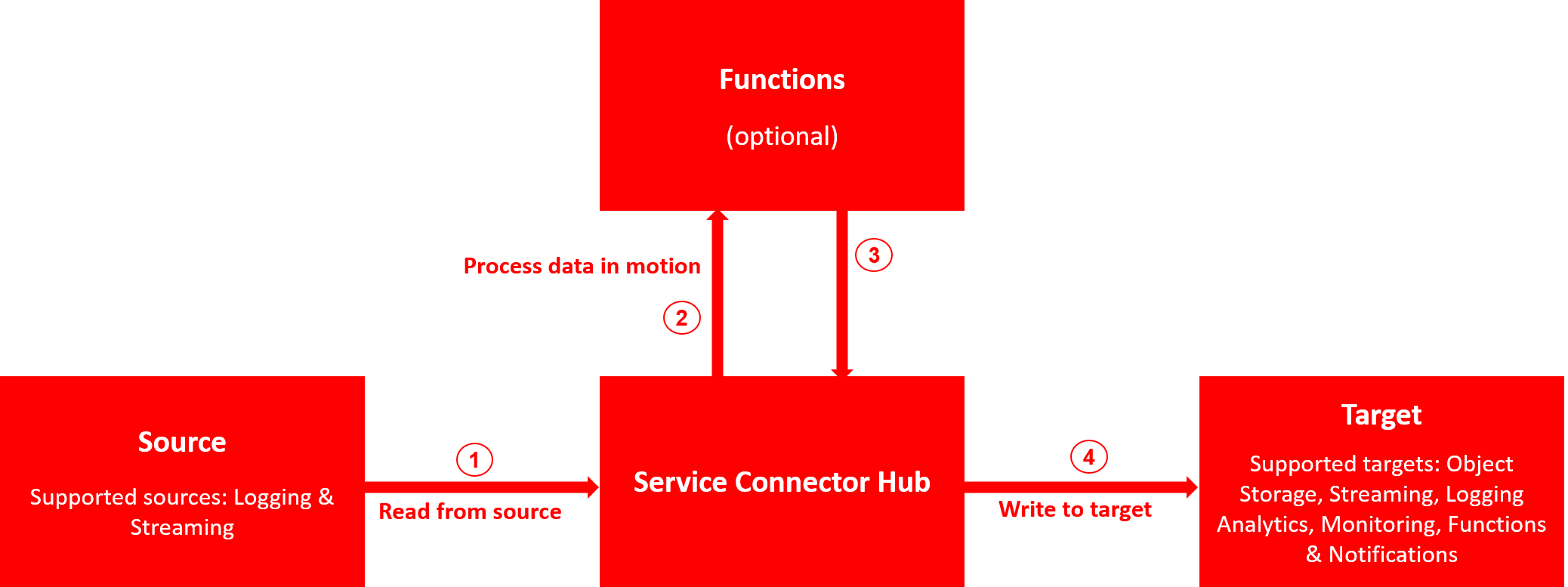

The Service Connector Hub helps you move data between Oracle Cloud Infrastructure (OCI) services and from OCI to third-party services, such as Splunk, Datadog, and QRadar. It currently supports moving logs from Logging to Object Storage, Streaming, Logging Analytics, and Monitoring. It can also trigger functions in Oracle Functions and send notifications through the Notifications service.

Today’s launch extends Service Connector Hub and enables moving data from Streaming to supported target services. You can now create connectors that move data from a stream to Object Storage or to a different stream in the Streaming service. You can also trigger a function or send notifications with data from the Streaming service.

Service Connector Hub now also supports triggering Functions as an optional task for processing data in motion between the source and target services. This capability enables you to use Functions for processing and enriching logs from Logging or data from Streaming before they’re delivered to target services. You can get started with sample Functions from our GitHub repo for converting JSON data from Logging and Streaming into CSV or Parquet formats before they are delivered to target services.
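The sample functions in the GitHub repo are the reference for real deployments. The sketch below is not taken from that repo. It is a minimal, hypothetical illustration of the kind of JSON-to-CSV transformation a function task could perform. It assumes the function receives a JSON array of records, such as log entries with a nested `data` object. The helper names `flatten` and `json_batch_to_csv` are our own, and the Fn handler wiring is omitted.

```python
import csv
import io
import json


def flatten(record: dict, prefix: str = "") -> dict:
    """Flatten nested dicts into dotted keys, e.g. {"data": {"a": 1}} -> {"data.a": 1}."""
    flat = {}
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{name}."))
        else:
            flat[name] = value
    return flat


def json_batch_to_csv(payload: str) -> str:
    """Convert a JSON array of records (assumed input shape) into CSV text with a header row."""
    records = [flatten(r) for r in json.loads(payload)]
    # Union of all keys in first-seen order, so rows with missing fields still line up.
    fieldnames = []
    for r in records:
        for k in r:
            if k not in fieldnames:
                fieldnames.append(k)
    out = io.StringIO()
    # Missing fields are written as empty cells (DictWriter's default restval is "").
    writer = csv.DictWriter(out, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return out.getvalue()


payload = '[{"id": "1", "data": {"level": "INFO", "msg": "ok"}}, {"id": "2", "data": {"level": "ERROR"}}]'
print(json_batch_to_csv(payload))
```

Flattening nested objects into dotted column names keeps the output a single flat table, which suits CSV. Nested fields are the norm in structured logs.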

Common use cases

Today’s launch enables the following scenarios:

- Persist event streams for compliance and long-term archival: Move event streams, such as processed financial transaction data, from Streaming to Object Storage for long-term, low-cost archival by configuring Streaming-as-a-source in Service Connector Hub.

- Event stream processing for data transformation and enrichment: Transform and enrich event streams and logs, such as converting JSON events to CSV, while they move from Streaming and Logging to target services, by configuring Functions-as-a-task.

- Filter and consolidate data across streams: Filter data from one stream using Functions-as-a-task and push the resulting data to a new stream by configuring Streaming-as-a-target.

- Send notifications on event streams: Process event streams using Functions-as-a-task and send notifications by configuring Notifications-as-a-target.
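For the filter-and-forward scenario, the core logic of a function task can be small. The sketch below assumes the function receives a JSON array of Streaming messages whose `value` field holds a base64-encoded JSON event. Confirm the actual payload shape in the Service Connector Hub documentation before relying on it. `filter_stream_batch` and the example predicate are hypothetical, and the Fn handler wiring is omitted.

```python
import base64
import json
from typing import Callable


def filter_stream_batch(payload: str, keep: Callable[[dict], bool]) -> str:
    """Decode each message, keep events matching `keep`, and return them as a JSON array."""
    kept = []
    for msg in json.loads(payload):
        # Assumption: each message carries a base64-encoded JSON body in "value".
        event = json.loads(base64.b64decode(msg["value"]))
        if keep(event):
            kept.append(event)
    return json.dumps(kept)


# Build a sample batch in the assumed shape, then keep only large payments.
events = [{"type": "payment", "amount": 120}, {"type": "refund", "amount": 5}]
payload = json.dumps(
    [{"key": None, "value": base64.b64encode(json.dumps(e).encode()).decode()} for e in events]
)
print(filter_stream_batch(payload, lambda e: e["amount"] > 100))
```

Passing the predicate in as a parameter keeps the decoding logic separate from the business rule. The same function body could then serve several connectors with different filters.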

Open standards-based

We understand that our customers have standardized on tools from different vendors, and we want to support them in using the tools they have invested in. All the services in today’s announcement align with open standards to avoid vendor lock-in:

- Streaming is Apache Kafka-compatible, which enables you to push data to over 100 other technologies and cloud service targets using Kafka Connect.

- The Logging schema adheres to the CNCF CloudEvents 1.0 specification, so it interoperates easily with the rest of the cloud native ecosystem and its tools.

- Logging uses Fluentd to provide a seamless way to deploy, configure, and manage agents across your entire fleet. You can apply hundreds of open source community plugins to ingest, parse, and enrich your logs.

- Oracle Functions is based on the open source, Apache 2.0-licensed Fn project. You can use the managed service or run self-managed Fn clusters on-premises or on any cloud.

- Metrics and logs from Monitoring and Logging work out of the box with partners like Grafana.

Pricing

Service Connector Hub itself incurs no charges. Standard pricing applies to the services used as the source, task, and target.

Get started today

Stream and log processing support in Service Connector Hub is now available in all commercial regions. Service Connector Hub is accessible through the Oracle Cloud Infrastructure Console (under the Data and AI section), SDK, CLI, REST API, and Terraform. To learn more about Streaming-as-a-source and Functions-as-a-task, see the Service Connector Hub documentation.

To try Service Connector Hub, Logging, and Streaming for yourself, sign up for the Oracle Cloud Free Tier or sign in to your account.