Written in collaboration with Leo Leung.

To mark the launch of our EU Germany Datacentre Region, we’d like to share a series of posts highlighting key considerations for selecting an enterprise cloud provider.

Three major trends are driving CIOs to rethink their IT strategy: the rise of information-driven business models, the adoption of more agile working approaches, and the acceleration of cloud-native services. With internet platform providers like Google, Facebook and Uber generating substantial revenue from vertically integrated services for digital ecosystems, companies across industries have started to transform their businesses and participate in these fast-growing markets.

But succeeding in online markets demands fast delivery of digital services. Enterprise IT departments can’t act like cost centres anymore. CIOs have to reshape IT service management (ITSM) processes so they become revenue generators; they need to shift their focus from process automation to user experience.

Public cloud providers have responded by delivering infrastructure on-demand, which stimulates the adoption of new delivery models, fosters integration with external partners, and helps companies better serve mobile users on the public internet. But while several public cloud providers have built impressive service portfolios, they are heavily geared towards cloud-native startups.

Today, most enterprise IT departments can hardly manage the frequent iterations of application code and the changing integration requirements needed to keep up with market changes and customer demand. They aren’t able to operate cloud-native services with existing ITSM processes and tools. And the vast majority lack a delivery platform that can access existing systems and facilitate the continuous deployment of new services. Instead, they risk building up a large development backlog and “shadow IT.”

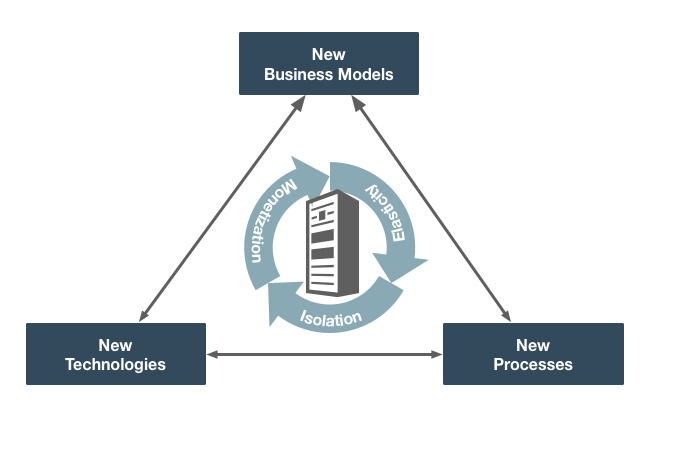

That is why Oracle offers a cloud service delivery platform that can access business-critical data and facilitate the continuous integration and deployment of new services, thereby allowing IT departments to compete with public service providers. Oracle’s offering is geared towards enterprises that want to develop new business models, new processes, and new technologies from their existing operations. This ability to enhance existing applications with cloud-native applications, not just replace them, is the foundation of a successful cloud migration.

The three tenets of a robust enterprise cloud

The evolution of software architecture has led to the development of diverse hosting environments, which we now refer to as the cloud. While there is an expectation that the cloud is equally suited to any type of application workload, today this is far from true. Infrastructure stacks that are designed for cloud-native workloads are profoundly different from hosting environments built for enterprise applications. The former target efficient operations processes through programmability, while the latter keep operations and software development completely separated, so total cost of ownership (TCO) is addressed during the run phase only.

Oracle’s cloud infrastructure was built to serve both needs, hosting them on a single platform. Our solution is based on three core tenets:

- Providing one versatile delivery platform to both run your organization and power your new ideas

- Delivering measurable savings over on-premises infrastructure and other clouds

- Offering companies control over their own cloud services powered by Oracle’s infrastructure

We’ll cover the first tenet in this post.

One versatile platform for both traditional and cloud-native

It is important to remember that a cloud infrastructure stack comprises more than physical hardware. It also includes the software that provisions new servers, manages connections between bare metal servers or virtual machines, schedules workloads and assigns resources.

Traditional and cloud-native applications do not usually run on the same infrastructure stack. The application code is different. Developers of traditional enterprise applications rely on mechanisms in the operating system (OS) to enable inter-process communication (IPC), while cloud-native developers build on network protocols to schedule workloads across hosts.

Enterprise application developers use the OS to implement synchronous communication patterns that execute processes sequentially. When spanning across multiple servers, operators implement middleware protocols. Correcting transmission errors and maintaining secure access to business-critical resources is kept separate from the application code. This allows developers to focus entirely on application functionality, but requires operators to build and maintain complex infrastructure stacks.

Cloud-native services are designed to run on the internet, but because the internet is considered an “unreliable” network, it does not facilitate continuous input-output (I/O). Application developers therefore turn to loosely coupled architectures with parallel processing and asynchronous communication. This leads to a very different approach to error and security handling: error correction is provided by the transport layer, and security is enabled by the OS. This pattern results in less administrative work and makes it much easier to split tasks between developers and operators.
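To make the loosely coupled, asynchronous pattern described above more concrete, here is a minimal sketch using Python's `asyncio`. The `worker` and `process` functions and the squaring "workload" are hypothetical stand-ins chosen for illustration; a real service would exchange messages over the network rather than an in-process queue.

```python
import asyncio


async def worker(inbox: asyncio.Queue, results: list) -> None:
    # Each worker pulls messages independently; it knows nothing about the producer.
    while True:
        item = await inbox.get()
        await asyncio.sleep(0)  # stands in for non-blocking network I/O
        results.append((item, item * item))
        inbox.task_done()


async def process(items: list, worker_count: int = 3) -> list:
    inbox: asyncio.Queue = asyncio.Queue()
    results: list = []
    workers = [asyncio.create_task(worker(inbox, results))
               for _ in range(worker_count)]
    for item in items:
        inbox.put_nowait(item)  # the producer only enqueues; it never waits on a reply
    await inbox.join()          # wait until every message has been handled
    for task in workers:
        task.cancel()           # workers loop forever, so stop them explicitly
    await asyncio.gather(*workers, return_exceptions=True)
    return sorted(results)      # completion order is not guaranteed, so sort


if __name__ == "__main__":
    print(asyncio.run(process([3, 1, 2])))
```

The producer and the workers share only the queue, so either side can be scaled or replaced without changing the other, which is the property that loose coupling is meant to provide.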

The layered network architecture becomes the core integration point and is key to serving both of these very different development and operating patterns.

However, in order to serve application-specific needs in the network, most cloud operators employ virtual communication endpoints in the hypervisor, creating overlay networks that enable process-to-process communication via sockets (a socket combines the IP address of a host with a port number). This approach gives web service developers flexibility and a certain degree of autonomy, but it also separates the hosting environments for enterprise and web applications into distinct network domains.
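As a small illustration of the socket concept mentioned above, the following sketch uses Python's standard `socket` module to open a TCP connection over the loopback interface and show that each endpoint is identified by an (IP address, port) pair. The function name `loopback_roundtrip` is made up for this example, and it says nothing about how any particular hypervisor implements its virtual endpoints.

```python
import socket


def loopback_roundtrip(payload: bytes = b"ping"):
    # Binding to port 0 lets the OS pick a free port; (IP, port) is the socket address.
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as server:
        server.bind(("127.0.0.1", 0))
        server.listen(1)
        server_addr = server.getsockname()

        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as client:
            client.connect(server_addr)
            client_addr = client.getsockname()
            conn, peer_addr = server.accept()
            with conn:
                client.sendall(payload)
                received = b""
                # TCP is a byte stream, so read until the full payload has arrived.
                while len(received) < len(payload):
                    chunk = conn.recv(len(payload) - len(received))
                    if not chunk:
                        break
                    received += chunk
    return server_addr, client_addr, peer_addr, received


if __name__ == "__main__":
    server_addr, client_addr, peer_addr, data = loopback_roundtrip()
    print("server socket:", server_addr)
    print("client socket:", client_addr)
    print("received:", data)
```

The address the server sees for its peer is exactly the client's own (IP, port) pair, which is what lets two processes on the same or different hosts address each other directly.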

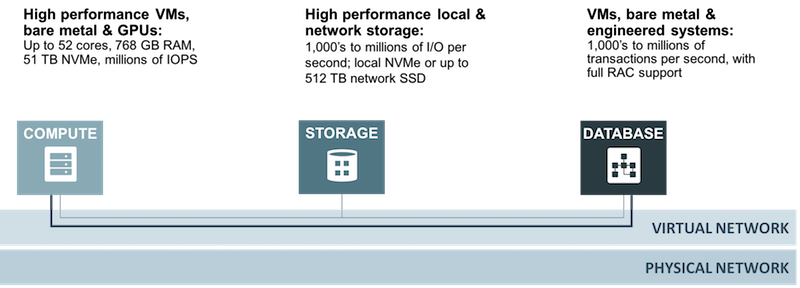

Hypervisor networks are a performance bottleneck and cause unpredictable stability issues for enterprise applications. This is why a main design criterion for Oracle was to allow both physical and virtual compute nodes to connect through one virtual network to a single, consolidated database cluster. By deviating from hypervisor networking and developing the concept of “off-box IO virtualization” we were able to build a network that behaves similarly to a transport network in telecommunications.

Using off-box virtualization we are able to connect bare metal servers, virtual machines (VMs) and engineered systems on a single overlay. We avoid the constraints of a layer 2 overlay that runs between hypervisors by establishing host-to-host communication with a layer 3 overlay that runs between the network interface cards (NICs). This provides users with multiple options for storing and persisting consistent or eventually consistent data.
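The general idea behind a layer 3 overlay can be sketched as packet encapsulation: a packet addressed within the overlay is wrapped in an outer packet addressed to the NIC that currently hosts the destination. The sketch below is a generic, simplified illustration and not a description of Oracle's actual implementation; the `Packet` type, the `placement` mapping and all addresses are assumptions made for the example.

```python
from dataclasses import dataclass
from ipaddress import IPv4Address


@dataclass(frozen=True)
class Packet:
    src: IPv4Address
    dst: IPv4Address
    payload: object  # bytes for an inner packet, a Packet when encapsulated


def encapsulate(inner: Packet, local_nic: IPv4Address, placement: dict) -> Packet:
    # Look up which physical NIC currently hosts the overlay destination.
    remote_nic = placement[inner.dst]
    # The outer header is routed by IP (layer 3); the inner packet rides along untouched.
    return Packet(src=local_nic, dst=remote_nic, payload=inner)


def decapsulate(outer: Packet) -> Packet:
    # The receiving endpoint strips the outer header and delivers the inner packet.
    return outer.payload


if __name__ == "__main__":
    # Hypothetical mapping from overlay addresses to physical NIC addresses.
    placement = {IPv4Address("10.0.1.20"): IPv4Address("192.0.2.7")}
    inner = Packet(IPv4Address("10.0.0.5"), IPv4Address("10.0.1.20"), b"SELECT 1")
    outer = encapsulate(inner, IPv4Address("192.0.2.3"), placement)
    print(outer.dst, decapsulate(outer) == inner)
```

Because the outer packet is ordinary routed IP traffic, the sender does not care whether the destination is a bare metal server or a VM; only the placement mapping needs to know where it lives.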

For databases, we offer a range of form factors from VMs to bare metal to Exadata. Customers can pick whatever suits their development, test, production or scaling needs without redesigning or redeploying their runtime environments. A broad selection of bare metal and VM shapes serves even very specific workloads like High Performance Computing (HPC) or fast HD media transcoding. We also offer a choice between direct-attached solid state disks (SSDs) with an NVMe interface for compute nodes and network-attached SSDs that communicate via iSCSI.

In order to provide maximum performance between our smart endpoints, we implemented a flat, non-blocking end-to-end network architecture that allows all compute nodes to reside on a single, high-performance network where they can be combined with all the aforementioned storage and database options. A single interface allows customers to provision both dedicated and virtually assigned resources and to configure the network services in between, including security rules, route tables and load balancing. All these features address both the traditional enterprise and cloud-native application patterns, and give customers a much higher level of automation.
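To give a feel for what route tables and security rules do conceptually, here is a minimal sketch built on Python's standard `ipaddress` module. It shows longest-prefix route selection and default-deny ingress filtering in general terms; the function names, rule format and target labels are hypothetical and do not reflect Oracle's API or configuration syntax.

```python
from ipaddress import ip_address, ip_network


def next_hop(route_table: dict, destination: str):
    # Longest-prefix match: among routes containing the destination, the most specific wins.
    addr = ip_address(destination)
    best = None
    for cidr, target in route_table.items():
        net = ip_network(cidr)
        if addr in net and (best is None or net.prefixlen > best[0]):
            best = (net.prefixlen, target)
    return best[1] if best else None


def is_allowed(ingress_rules: list, source: str, port: int) -> bool:
    # Default deny: traffic passes only if a rule matches both source range and port.
    addr = ip_address(source)
    return any(addr in ip_network(cidr) and port == allowed_port
               for cidr, allowed_port in ingress_rules)


if __name__ == "__main__":
    # Hypothetical route table and ingress rule set.
    routes = {
        "0.0.0.0/0": "internet-gateway",
        "10.0.0.0/16": "local",
        "10.0.1.0/24": "db-subnet",
    }
    rules = [("10.0.0.0/16", 1521)]
    print(next_hop(routes, "10.0.1.5"))
    print(is_allowed(rules, "10.0.3.4", 1521))
```

Expressing both as plain data that a single interface can create and update is what makes this kind of network configuration easy to automate.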

In our next post, we’ll cover how a robust enterprise cloud offers measurable savings over both on-premises infrastructure and older public clouds.