The data flow capability in Oracle Analytics Cloud (OAC) offers a variety of tools that allow end-users to ingest data, perform data preparation, and produce curated datasets in standardized workflows. Business analysts and end-users often want greater control when performing data preparation tasks. In these situations, the custom script feature within data flow gives you greater control and flexibility over specific data processing needs.

Custom Script Overview

A custom script is another term used to describe an Oracle Cloud Infrastructure (OCI) Function. An OCI Function is a fully managed Functions-as-a-Service (FaaS) platform that allows developers to write code in Java, Python, Node, Go, Ruby, and C#. OAC offers a way to leverage these functions in data flows to customize your data preparation workflows. There are many use cases where a custom script in a data flow could be very powerful. For example, you could call an API to add new information to an existing dataset, perform custom date formatting, or implement a custom data transformation.

The remainder of this blog highlights a custom script use case which involves imputing, or replacing, missing values with new values. Dealing with missing values is one of the most important steps in preparing data for machine learning and reporting. There are many ways to address the issue of missing values, but for the purposes of this blog, you’ll learn the high-level steps of using a missing-value imputation script that replaces the missing values in our data with values it infers from existing values.

Custom Script Use Case

To begin, create a FaaS script that contains the logic to fill in missing values with the column mean. There are a number of requirements to follow when writing the script to ensure that it’s compatible with OAC. For example, the script must use a variable called ‘funcMode.’ In short, Oracle Analytics sends a request to register the function when funcMode=describeFunction, and it sends a request to invoke the function when funcMode=executeFunction. For a more in-depth description of how to use the funcMode variable in your function code, refer to this link.

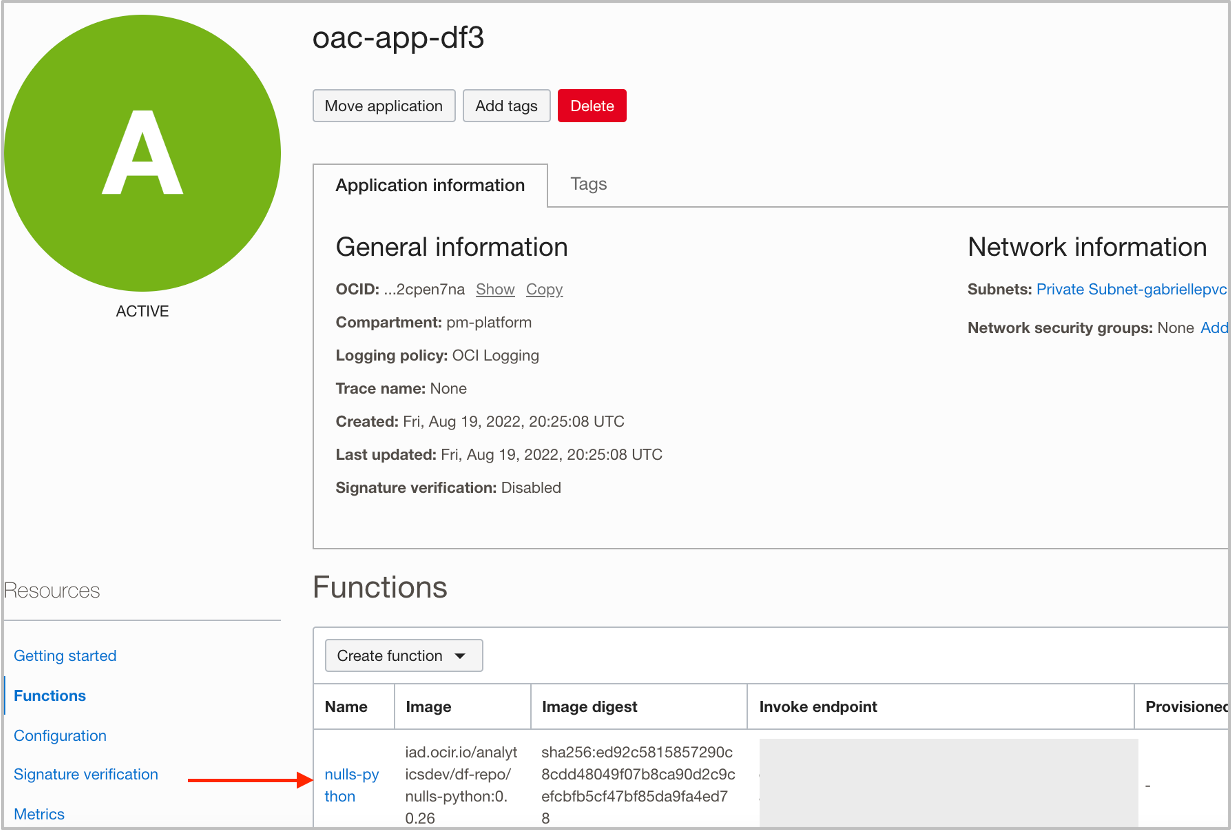

Once you’ve created the script, create an application within OCI Developer Services, and deploy the function within the application. The image below shows an example of what you should see once you have deployed the function within the application.

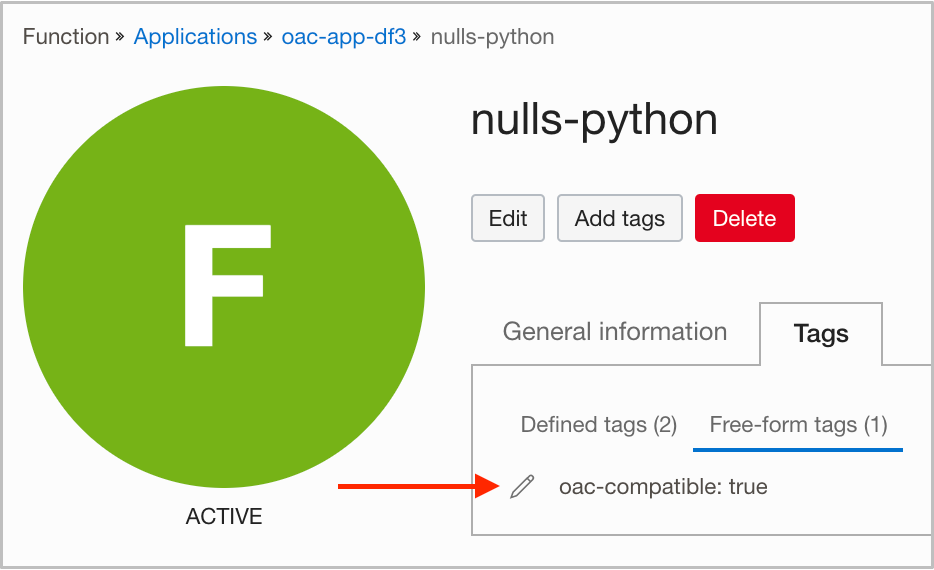

For an OCI function to be compatible with OAC, it must contain an oac-compatible tag. Add the tag to the function directly from the OCI console, as shown in the image below.

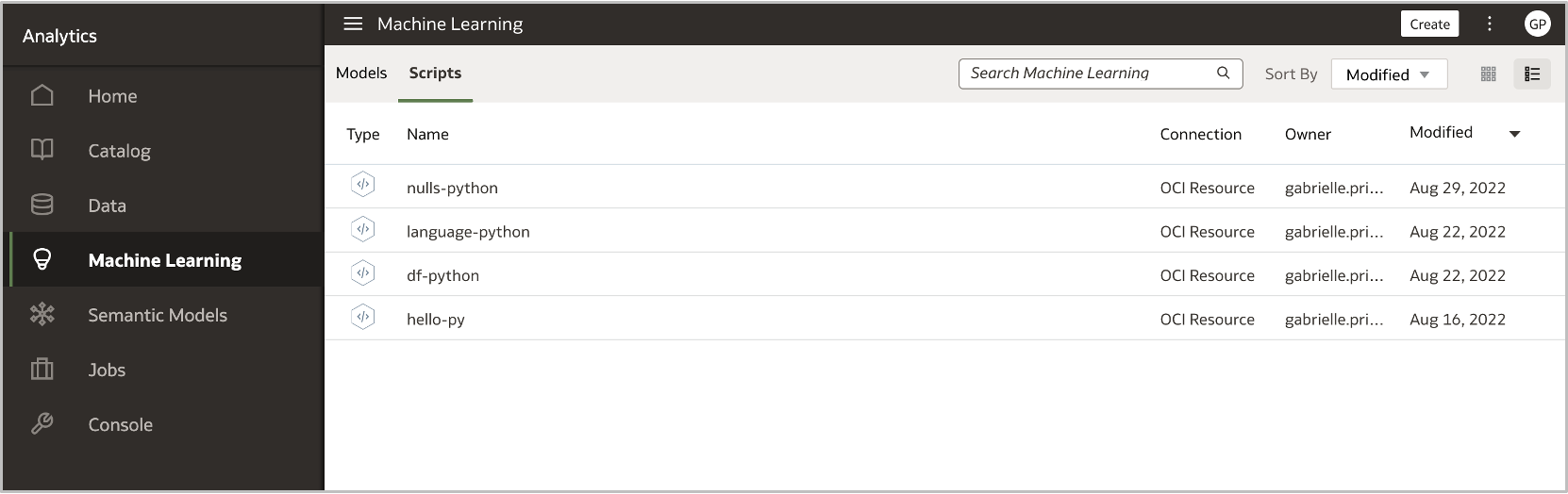

Once you’ve deployed the function within the application with the proper formatting requirements and have ensured it contains the oac-compatible tag, you must register the script in OAC to use it in a data preparation step in a data flow. Follow the steps in this link to first create a connection to your OCI Tenancy, and then to register the function in OAC. Verify that the function has successfully been registered in OAC by navigating to the Scripts tab within the Machine Learning section of OAC.

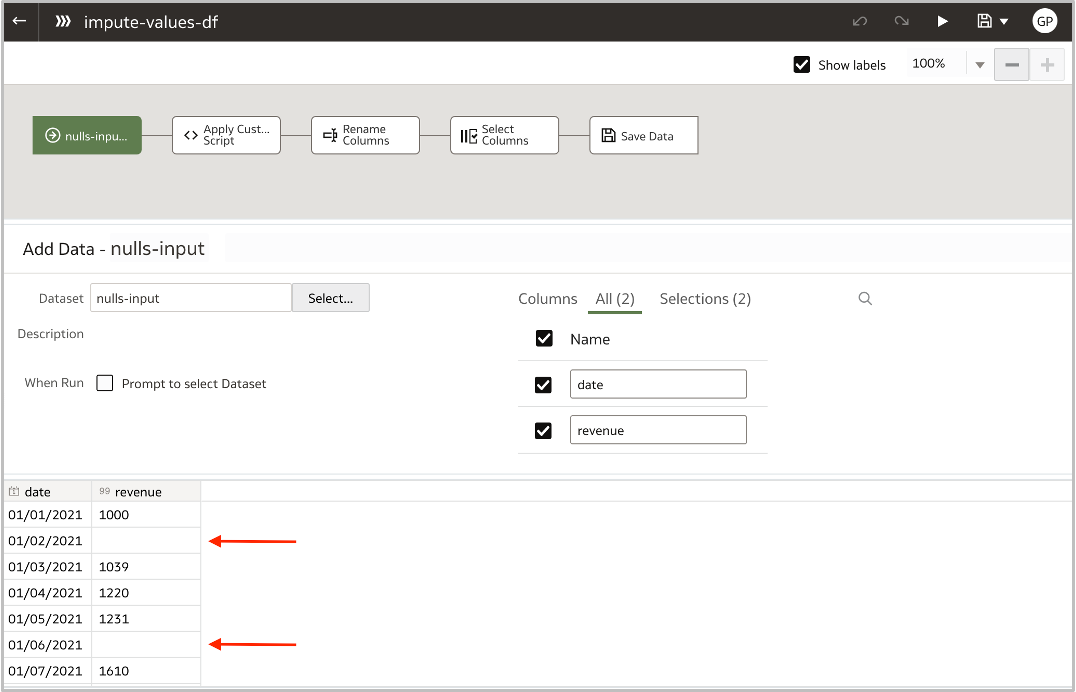

After successfully registering the function in OAC, invoke the function in a data flow. Create a data flow, supply input data that works with the parameters that you’ve specified in the function, optionally add more transformations, and save the output. This example shows a 2-column input dataset with a date column and a revenue column, with missing revenue values. The image below shows a sample of the input data and the missing records, as indicated by the red arrows. Note that not all of the records are visible in the image.

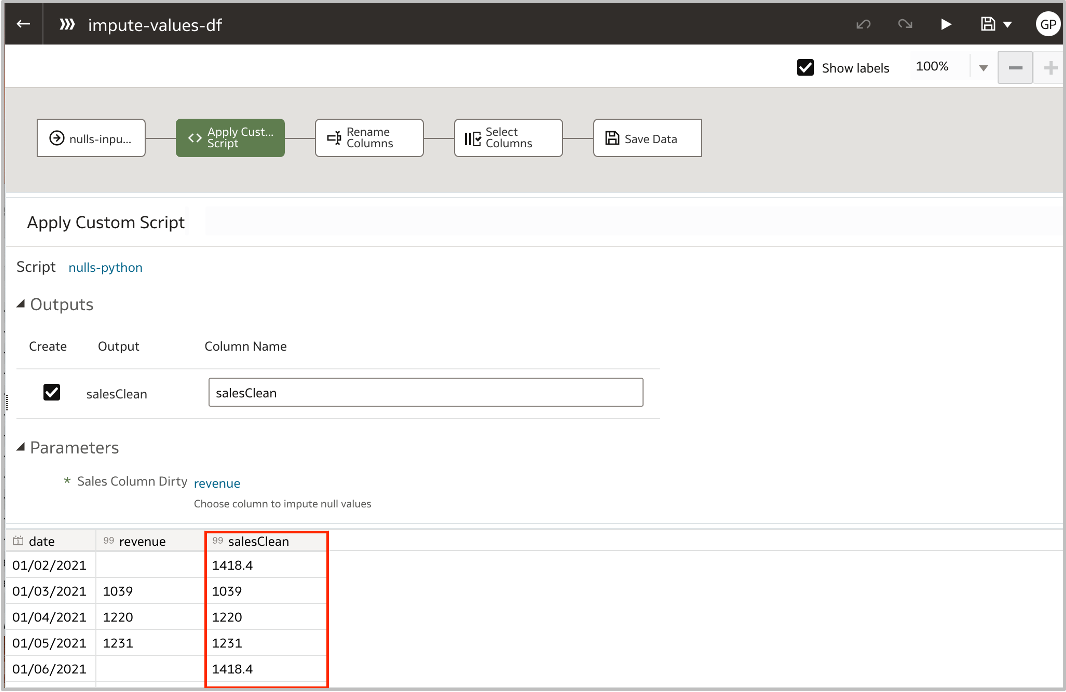

Next, add the Apply Custom Script step to the data flow, select the script that you registered in OAC, and specify the parameters that you want to send to the function. In this example, the revenue column was the column that contained missing values, so revenue was included as the parameter. Based on the function created, a new column was returned with the missing values filled in.

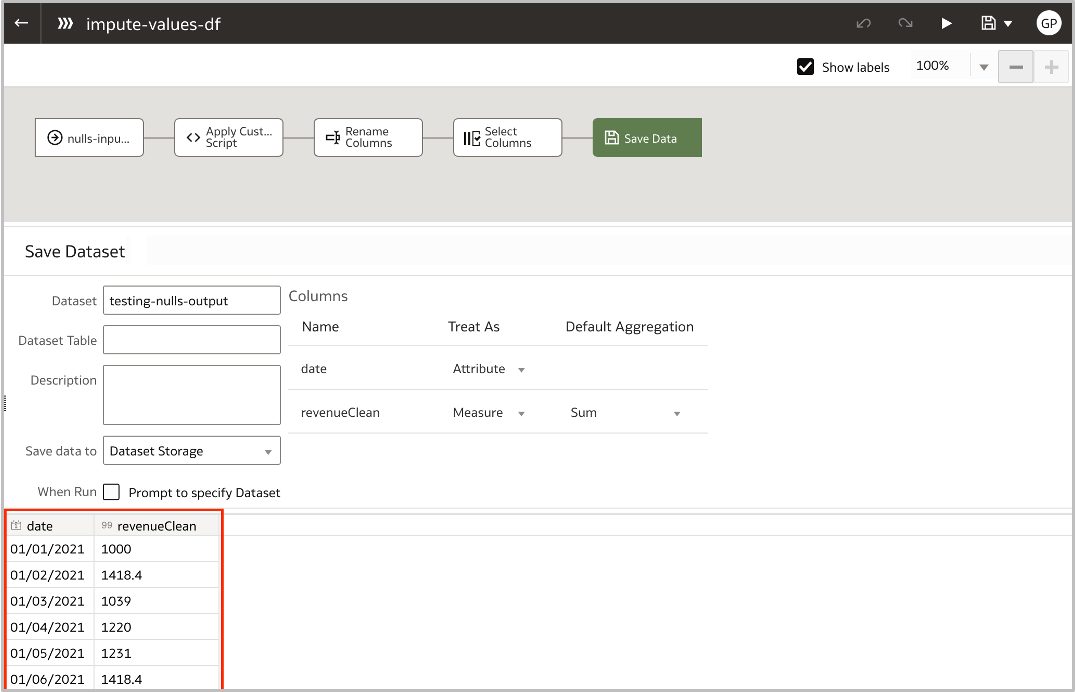

Optionally specify formatting changes or data transformations to fit your preparation requirements, and save the output dataset. Finally, save and run the data flow to create a clean dataset ready for machine learning and reporting!

Call to Action

This is one of many scenarios where implementing a custom script in a data flow would be beneficial. I encourage you to visit the resources linked in this blog and to create a custom script of your own to enrich your data!

For more information on how to make Oracle Functions OAC compatible, watch this video: