Background

Oracle Analytics Cloud (OAC) logs are now available via the Oracle Cloud Infrastructure (OCI) Logging service. The integration gives administrators valuable insight into the usage of OAC resources, including details on audit events and diagnostic logs for OAC environments. The OCI Logging service is a single pane of glass for managing logs from OCI resources within a tenancy.

Insights Provided by OAC Logs

Logs available via the Logging service provide important insights for administering OAC instances. Both audit and diagnostic logs are available.

Audit logs capture user-initiated actions within OAC resources in a tenancy. Audit activity is organized into categories that include catalog updates, export actions, security management, snapshot operations, and system setting configuration. Audit logs tell administrators the who, what, and when of auditable events. For example, if a user changes permissions for a connection, exports data from a Workbook, modifies an application role, or downloads a snapshot, that action is logged.

Diagnostic logs provide access to data query details that help administrators diagnose issues with source data platforms. Administrators can review response timings, the SQL issued, and error responses.

The Logging service provides capabilities to search, analyze, filter, and aggregate logs. Robust integrations allow for automated monitoring and notification as well as integration with Oracle and third-party systems for analysis and monitoring. Logs can be archived to OCI Object Storage.

Here’s how you can enable, access, and analyze OAC logs.

Enabling Logging

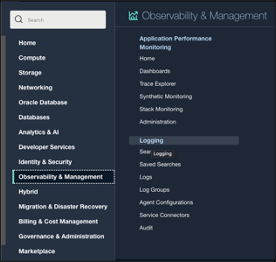

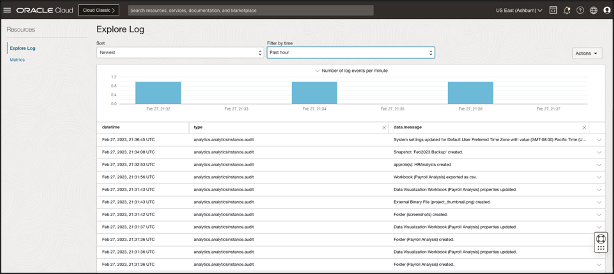

The Logging section of the OCI Console provides access to all capabilities for log management and access.

Logging can be enabled by a user in a group with the required policy. Please refer to the documentation link at the end of the blog for details.

The administrator first creates a log group. Log groups are logical containers for organizing logs. Logs must always be inside log groups.

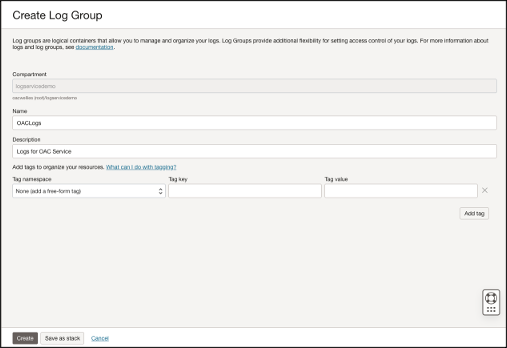

Next, the administrator enables the log for the specific OAC resource. The administrator chooses the type of log, e.g., Audit. The Advanced option can be used to specify log group, log retention period, and other configuration options.
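The same two steps can also be scripted. The sketch below does not call OCI; it only builds the two request bodies as plain dictionaries, shaped after the log group and service log creation bodies of the OCI Logging API. The helper names are hypothetical, the `service` and `category` values are placeholders to look up for OAC, and the 30-day retention steps are an assumption to verify against the Logging documentation.

```python
# Assumption: retention is configurable in 30-day steps up to 180 days.
ALLOWED_RETENTION_DAYS = (30, 60, 90, 120, 150, 180)


def build_log_group_details(compartment_id, display_name):
    """Request body for creating a log group (hypothetical helper)."""
    return {"compartmentId": compartment_id, "displayName": display_name}


def build_service_log_details(display_name, resource_id, service, category,
                              retention_days=30):
    """Request body for enabling a service log on one resource (hypothetical helper)."""
    if retention_days not in ALLOWED_RETENTION_DAYS:
        raise ValueError(f"retention_days must be one of {ALLOWED_RETENTION_DAYS}")
    return {
        "displayName": display_name,
        "logType": "SERVICE",
        "isEnabled": True,
        "retentionDuration": retention_days,
        "configuration": {
            "source": {
                "sourceType": "OCISERVICE",
                "service": service,        # placeholder: the OAC service name
                "resource": resource_id,   # OCID of the OAC instance
                "category": category,      # placeholder: e.g. the audit category
            }
        },
    }
```

The resulting dictionaries can be serialized with `json.dumps` and passed to whichever client you use to call the Logging API.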

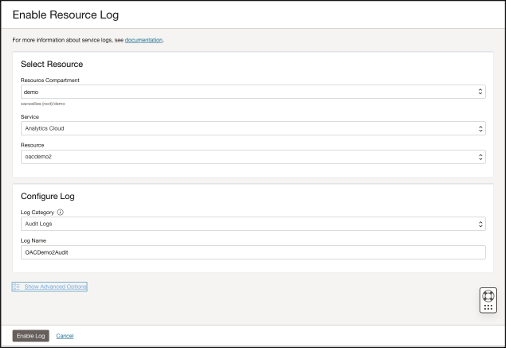

Accessing and Exploring Logs

Once logs are enabled, a user with the appropriate permissions can access log entries in the Log Explorer in the OCI Console. The Log Explorer includes a powerful search to quickly locate logs of specific interest.

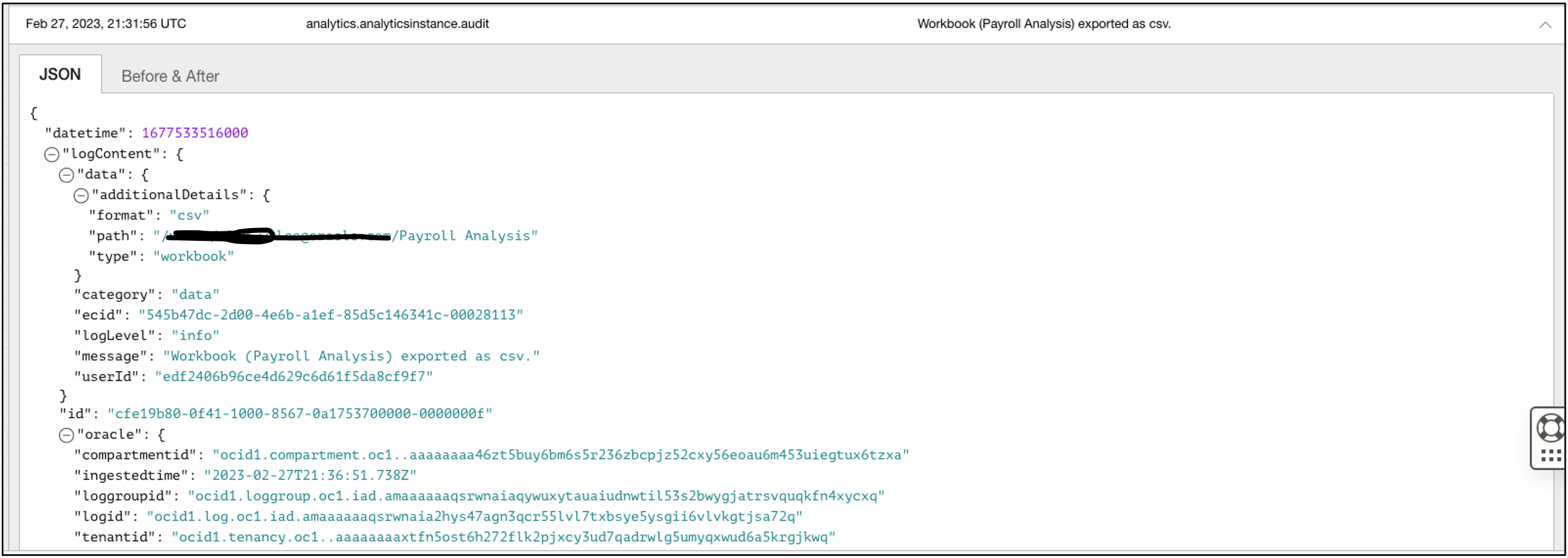

The contents of each log entry are in a JSON format. Below, you see an example of a log generated by a user exporting data from a Workbook. The JSON content provides a timestamp of the event, the Workbook that was downloaded, the download format, user, and other details.

Since entries are in a standard JSON format, they can be exported for further analysis.
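As a small illustration of working with exported entries, the sketch below pulls a few fields out of a single entry. The field paths (`time`, `data.category`, `data.message`, `oracle.logid`) are the ones used elsewhere in this post; the sample entry and its values are made up for illustration and are not real OAC output.

```python
import json


def get_path(entry, dotted_path, default=None):
    """Walk a nested dict using a dotted path such as 'data.category'."""
    current = entry
    for key in dotted_path.split("."):
        if not isinstance(current, dict) or key not in current:
            return default
        current = current[key]
    return current


def summarize_entry(entry):
    """Keep only the fields of interest from one log entry."""
    return {
        "time": get_path(entry, "time"),
        "category": get_path(entry, "data.category"),
        "message": get_path(entry, "data.message"),
        "logid": get_path(entry, "oracle.logid"),
    }


# Hypothetical entry, for illustration only
SAMPLE_ENTRY = json.loads("""
{
  "time": "2024-01-01T00:00:00Z",
  "data": {"category": "example-category", "message": "example message"},
  "oracle": {"logid": "example-log-ocid"}
}
""")
```

Missing fields come back as `None` rather than raising, which is convenient when entries from different log categories carry different attributes.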

Using a Service Connector and a Function to Copy Log Entries to an Autonomous Database

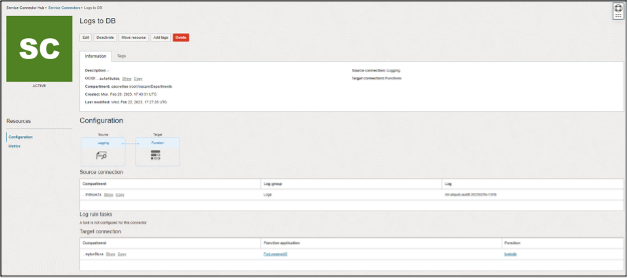

Log entries can easily be copied to an Oracle Autonomous JSON Database using an OCI Function and a Service Connector. The scenario is described in the documentation.

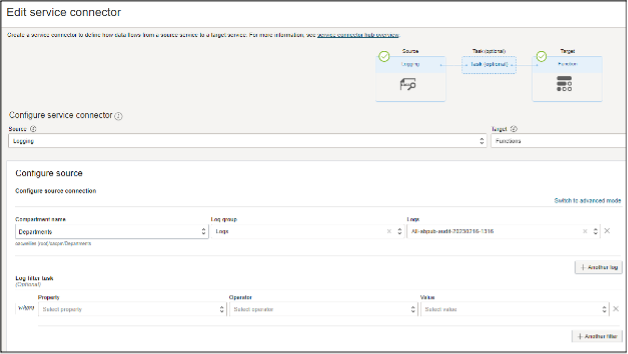

The source for the Service Connector is the log file:

The target in the Service Connector is a Function:

Here is some sample code for the Function:

    import io
    import json
    import logging

    import requests
    from fdk import response


    # soda_insert uses the Autonomous Database REST API to insert JSON documents
    def soda_insert(ordsbaseurl, dbschema, dbuser, dbpwd, collection, logentries):
        auth = (dbuser, dbpwd)
        sodaurl = ordsbaseurl + dbschema + '/soda/latest/'
        bulkinserturl = sodaurl + 'custom-actions/insert/' + collection + "/"
        logging.getLogger().info("bulkinserturl is:" + bulkinserturl)
        headers = {'Content-Type': 'application/json'}
        resp = requests.post(bulkinserturl, auth=auth, headers=headers, data=json.dumps(logentries))
        # Raise on HTTP errors so a failed insert is not reported as success
        resp.raise_for_status()
        return resp.json()


    def handler(ctx, data: io.BytesIO = None):
        logger = logging.getLogger()
        logger.info("function start")

        # Retrieving the Function configuration values
        try:
            cfg = dict(ctx.Config())
            ordsbaseurl = cfg["ordsbaseurl"]
            logger.info("ordsbaseurl is:" + ordsbaseurl)
            dbschema = cfg["dbschema"]
            logger.info("dbschema is:" + dbschema)
            dbuser = cfg["dbuser"]
            logger.info("dbuser is:" + dbuser)
            # The password is read but deliberately not written to the log
            dbpwd = cfg["dbpwd"]
            collection = cfg["collection"]
            logger.info("collection is:" + collection)
        except KeyError:
            logger.error('Missing configuration keys: ordsbaseurl, dbschema, dbuser, dbpwd and collection')
            raise

        # Retrieving the log entries from Service Connector Hub as part of the Function payload
        try:
            logentries = json.loads(data.getvalue())
            # The log entries are in a list of dictionaries. We can iterate over the list of entries and process them.
            # For example, we are going to put the Id of the log entries in the function execution log
            logger.info("Processing the following LogIds:")
            logger.info(json.dumps(logentries))
            for logentry in logentries:
                logger.info(logentry["oracle"]["logid"])
        except Exception:
            logger.error('Invalid payload')
            raise

        # Now, we are inserting the log entries in the JSON Database
        soda_insert(ordsbaseurl, dbschema, dbuser, dbpwd, collection, logentries)

        logger.info("Ending")
        return response.Response(
            ctx,
            response_data=json.dumps({"message": "Hello"}),
            headers={"Content-Type": "application/json"}
        )

Be sure to include ‘requests’ in the requirements.txt file:

    fdk>=0.1.51
    oci
    requests
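One way to make the configuration step fail fast is to check all keys up front and build the bulk-insert URL in one place. This is a minimal sketch, assuming the same five configuration keys and URL layout as the sample Function above; `missing_keys` and `build_bulk_insert_url` are hypothetical helper names, not part of the Functions SDK.

```python
REQUIRED_KEYS = ("ordsbaseurl", "dbschema", "dbuser", "dbpwd", "collection")


def missing_keys(cfg):
    """Return the required configuration keys that are absent or empty."""
    return [key for key in REQUIRED_KEYS if not cfg.get(key)]


def build_bulk_insert_url(ordsbaseurl, dbschema, collection):
    """Join the URL parts with exactly one slash between them."""
    parts = [
        ordsbaseurl.rstrip("/"),
        dbschema.strip("/"),
        "soda/latest/custom-actions/insert",
        collection.strip("/"),
    ]
    return "/".join(parts) + "/"
```

Normalizing the slashes means the Function behaves the same whether or not the configured `ordsbaseurl` ends with a trailing slash.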

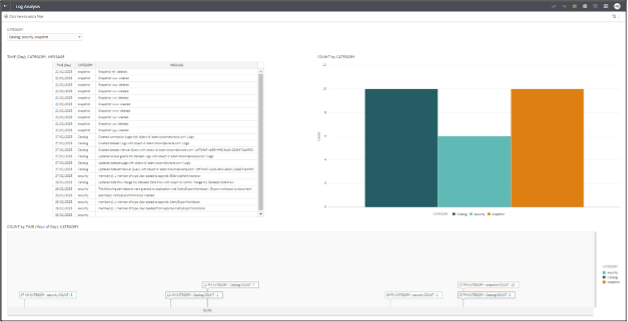

Analyzing Log Entries using Oracle Analytics Cloud

You can connect from OAC to the Autonomous JSON Database where your log data is available and perform analysis on your log entries. Connecting to an Autonomous JSON Database is the same as connecting to Oracle Autonomous Data Warehouse.

A good way to build a Dataset on your log data is by using SQL with JSON syntax. There are various ways to write the SQL. Here is one example:

    select M.*
    from logs l,
         JSON_TABLE(
             l.JSON_DOCUMENT, '$'
             columns
                 Category VARCHAR2(30 CHAR) path '$.data.category',
                 ecid     VARCHAR2(30 CHAR) path '$.data.ecid',
                 Message  VARCHAR2(30 CHAR) path '$.data.message',
                 Time     VARCHAR2(30 CHAR) path '$.time'
         ) M;

You can use this dataset to create visualizations on your log events.
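Before building visualizations, it can help to sanity-check the data with a quick aggregate. The sketch below counts events per category in Python; the rows are made up and simply mirror the Category and Time columns of the SQL above, and `count_by_category` is a hypothetical helper.

```python
from collections import Counter


def count_by_category(rows):
    """Count rows per category; rows without a category go under 'unknown'."""
    return Counter(row.get("category") or "unknown" for row in rows)


# Hypothetical rows, for illustration only
SAMPLE_ROWS = [
    {"category": "category-a", "time": "2024-01-01T00:00:00Z"},
    {"category": "category-b", "time": "2024-01-01T00:05:00Z"},
    {"category": "category-a", "time": "2024-01-01T00:10:00Z"},
    {"time": "2024-01-01T00:15:00Z"},
]

counts = count_by_category(SAMPLE_ROWS)
```

If the counts look plausible, the same grouping can be built as a visualization on the dataset.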

Summary

You can easily access insights on usage of OAC instances through logs via the OCI Logging service. Log data can be piped to Oracle Autonomous Database and analyzed further using the powerful visualization capabilities of OAC.

For complete details on enabling and accessing OAC logs using the OCI Logging service, please see: Monitor Oracle Analytics Cloud Logs.